- The paper introduces a data-driven finite element method that replaces traditional material models with experimental data while still enforcing conservation laws.

- It contrasts classical strong and novel weaker formulations to improve robustness against noisy or incomplete datasets.

- Adaptive hp∗ refinement and Markov chain Monte Carlo techniques are employed to quantify uncertainty in complex heat transport simulations.

Conservative Data-Driven Finite Element Framework

Introduction

The paper introduces a data-driven finite element framework tailored for engineering simulations. It leverages experimental data directly in numerical simulations while adhering to conservation laws and boundary conditions using the finite element method, eschewing traditional material models. In particular, the paper proposes a "weaker" mixed finite element formulation, which relaxes regularity requirements on the approximation space for the primary field but ensures continuity of the normal flux component across inner boundaries, thus maintaining the conservation law in the strong sense. This approach offers advantages in handling imperfections in datasets, such as missing or noisy data, making solution uncertainty quantifiable using methods like Markov chain Monte Carlo.

Data-Driven Approach for Heat Transport Problems

To solve heat transport problems with the proposed method, conservation of energy and the boundary conditions must be satisfied. Instead of a phenomenological constitutive equation such as Fourier's law, an experimental dataset defines the relationship between key variables such as heat flux and temperature gradient. Each measurement is stored as a point in a multidimensional space.

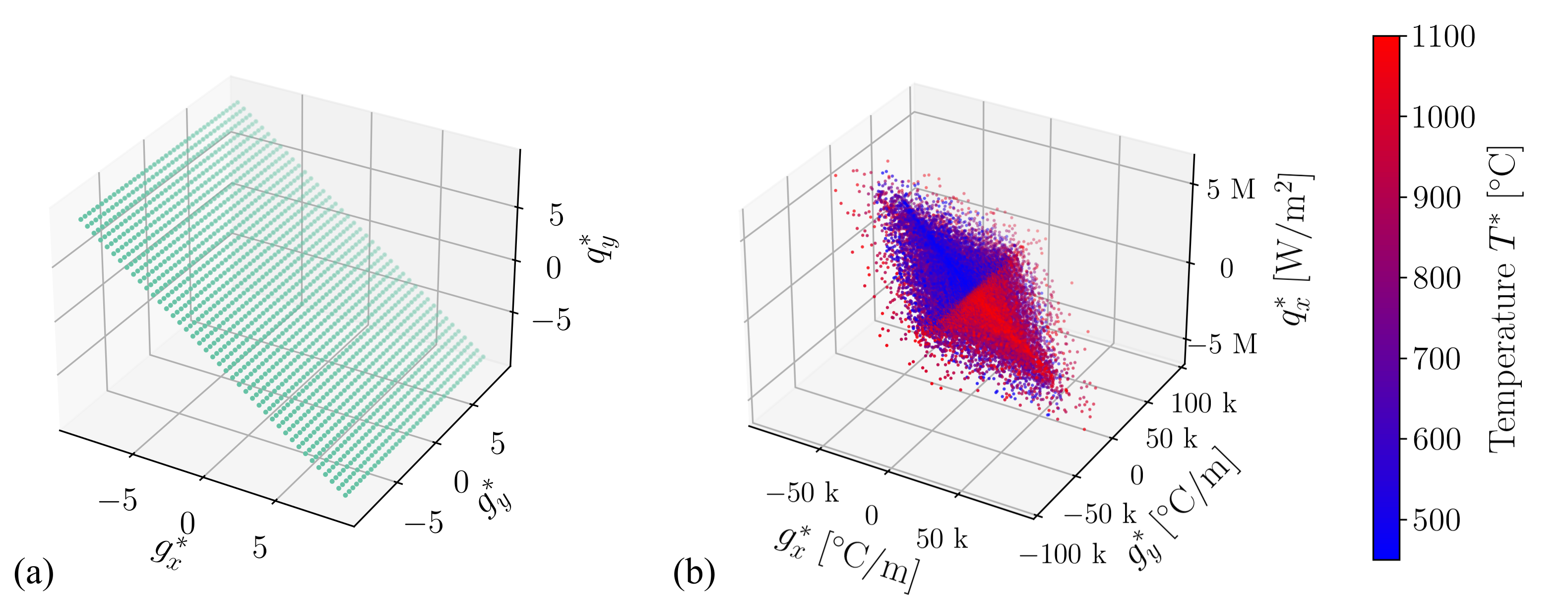

Figure 1: Example material datasets (a) D4D and (b) D5D showing grid-like and artificially generated structures, respectively.

At every step of the iterative process, the solver searches the dataset for the material points closest to the current state, while the finite element formulation enforces the conservation law and the prescribed boundary conditions.
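The closest-point search can be illustrated with a minimal sketch. It assumes a four-column dataset (g_x, g_y, q_x, q_y) generated synthetically from Fourier's law with unit conductivity, and a plain unscaled Euclidean distance; the paper's actual datasets and distance metric may differ, and the function name `closest_material_points` is hypothetical.

```python
import numpy as np

def closest_material_points(dataset, states):
    """For each state row, find the nearest dataset row.

    dataset: (N, d) array of material points
    states:  (M, d) array of current states (e.g. at integration points)
    Returns the indices, the matched points, and the distances.
    """
    dataset = np.asarray(dataset, dtype=float)
    states = np.asarray(states, dtype=float)
    diff = states[:, None, :] - dataset[None, :, :]   # shape (M, N, d)
    dist2 = np.sum(diff * diff, axis=2)               # squared distances, (M, N)
    idx = np.argmin(dist2, axis=1)
    dist = np.sqrt(dist2[np.arange(states.shape[0]), idx])
    return idx, dataset[idx], dist

# Synthetic dataset (assumption): q = -k * g sampled on a regular grid, k = 1.
k = 1.0
grid = np.linspace(-1.0, 1.0, 21)
gx, gy = np.meshgrid(grid, grid, indexing="ij")
g = np.column_stack([gx.ravel(), gy.ravel()])
dataset = np.hstack([g, -k * g])

# One trial state that does not lie exactly on a dataset point.
states = np.array([[0.12, 0.18, -0.10, -0.20]])
idx, points, dist = closest_material_points(dataset, states)
print(points[0], dist[0])
```

The brute-force search costs O(M·N) per iteration. For large datasets a spatial index such as `scipy.spatial.cKDTree` would be the usual replacement.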

The paper contrasts two data-driven mixed finite element formulations: the classical ("stronger") one and the newly introduced ("weaker") one.

- Stronger Formulation: Uses the Sobolev space H1 for temperature and the Lebesgue space L2 for flux, similar to classical FEM setups (Figure 2).

Figure 2: Essential and natural boundary conditions for (a) Stronger DD and (b) Weaker DD formulations.

- Weaker Formulation: Uses the discontinuous L2 space for temperature, so the temperature may jump across element boundaries, while the flux is approximated in H(div), which keeps its normal component continuous. This setting is better suited to datasets in which the material response varies.
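The two choices of spaces can be written out as follows. This is the standard textbook form of the two weak statements, not necessarily the paper's exact notation. It assumes steady-state conduction on a domain $\Omega$ with source $s$, the sign convention $\nabla\cdot\mathbf{q} = s$, prescribed temperature on $\Gamma_T$ and prescribed normal flux $\bar{q}$ on $\Gamma_q$.

$$
\begin{aligned}
\text{Stronger:}\quad & T \in H^1(\Omega), \quad \mathbf{q} \in [L^2(\Omega)]^d, \\
& \int_\Omega \nabla \delta T \cdot \mathbf{q}\, d\Omega = \int_{\Gamma_q} \delta T\, \bar{q}\, d\Gamma - \int_\Omega \delta T\, s\, d\Omega \quad \forall\, \delta T \in H^1(\Omega),\ \delta T = 0 \text{ on } \Gamma_T, \\[4pt]
\text{Weaker:}\quad & T \in L^2(\Omega), \quad \mathbf{q} \in H(\mathrm{div};\Omega) = \{\mathbf{q} \in [L^2(\Omega)]^d : \nabla\cdot\mathbf{q} \in L^2(\Omega)\}, \\
& \int_\Omega \delta T\, \nabla\cdot\mathbf{q}\, d\Omega = \int_\Omega \delta T\, s\, d\Omega \quad \forall\, \delta T \in L^2(\Omega), \quad \mathbf{q}\cdot\mathbf{n} = \bar{q} \text{ on } \Gamma_q .
\end{aligned}
$$

In the stronger form the temperature condition is essential and the flux condition is natural. In the weaker form the roles swap. Because the test functions $\delta T$ in the weaker form may be discontinuous, the conservation law holds element by element.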

Both formulations can work with imperfect datasets. Uncertainty is quantified through adaptive refinement driven by a posteriori error indicators and estimators.

Verification and Implementation

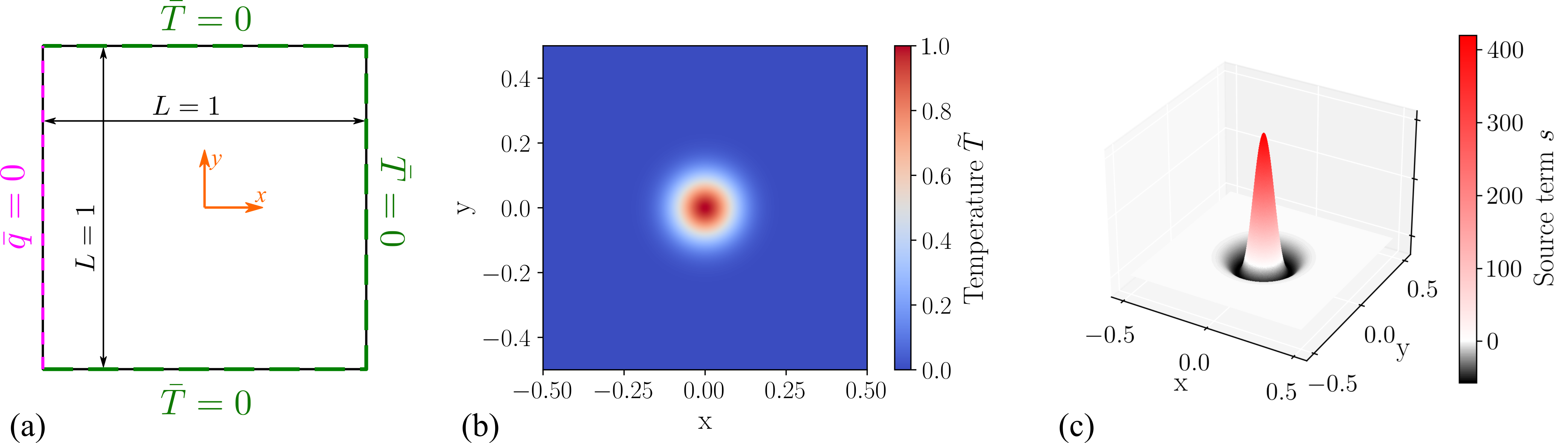

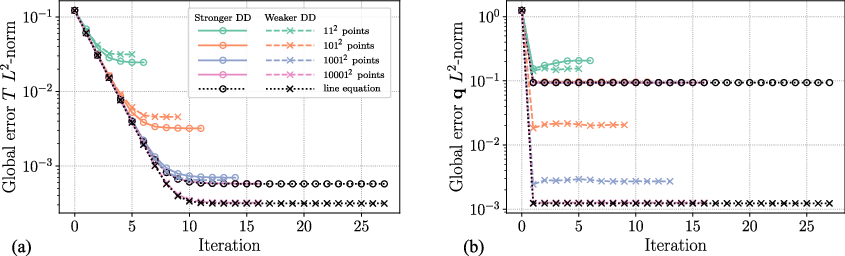

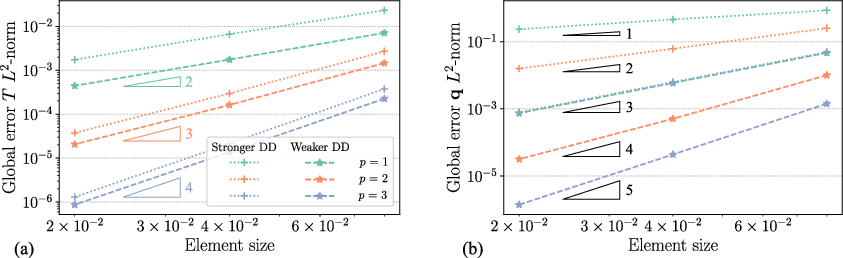

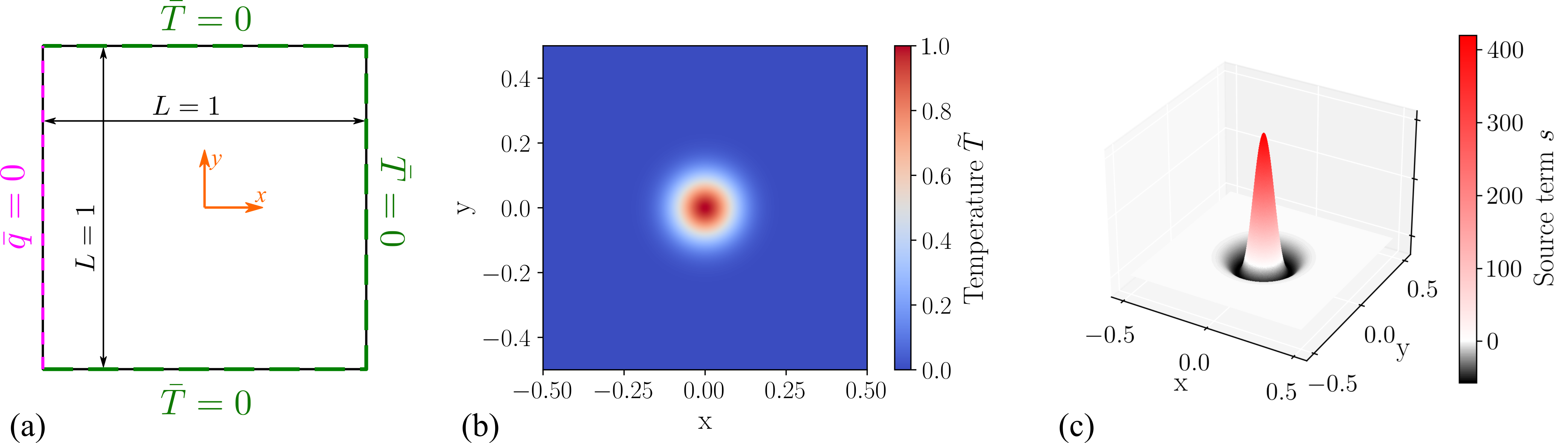

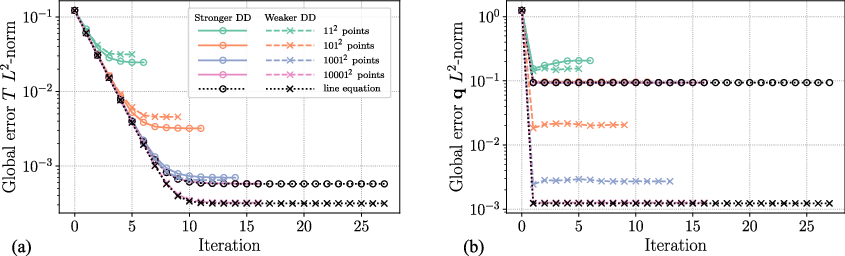

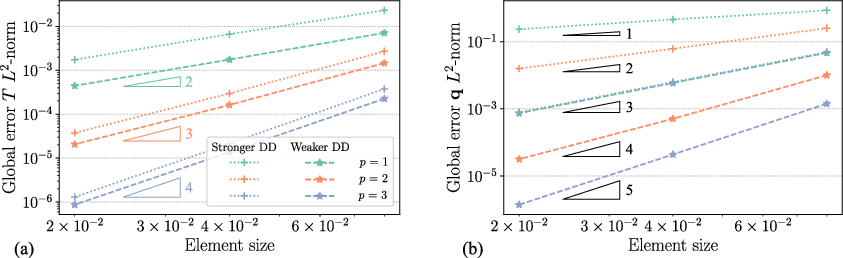

The framework is verified on problems with known analytical solutions, where convergence is observed as the material datasets are enriched (Figure 3).

Figure 3: Example Exp-Hat setup showing analysis corresponding to exact solution.

The stronger and weaker formulations converge differently, most visibly with "saturated" datasets, so their relative computational efficiency depends on dataset density and approximation order.

Adaptive Refinement and Error Estimation

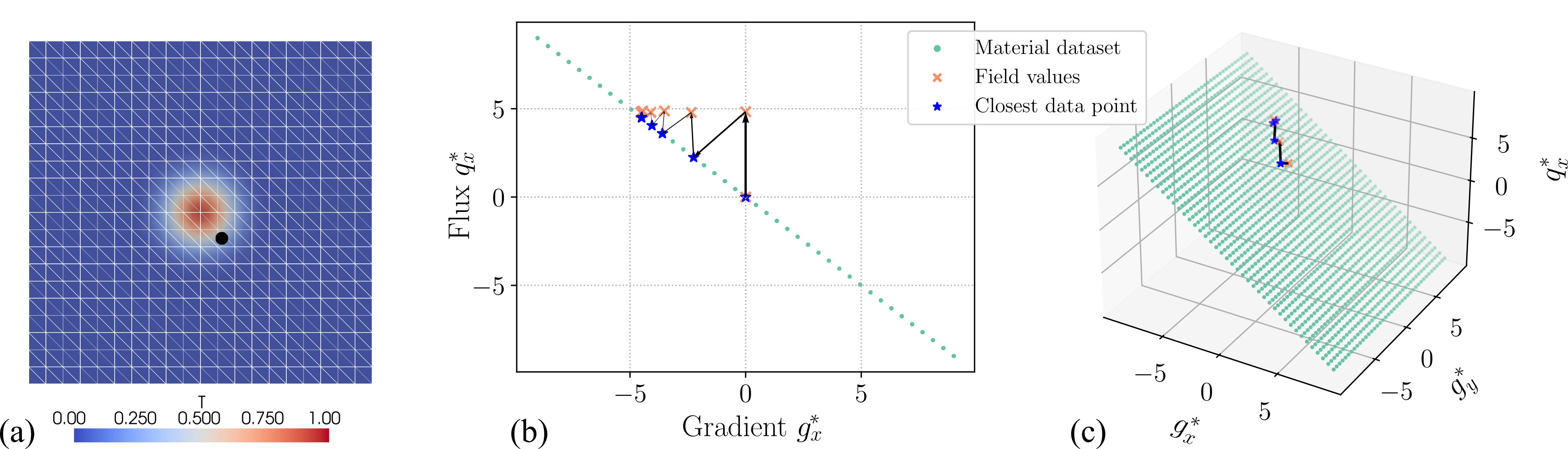

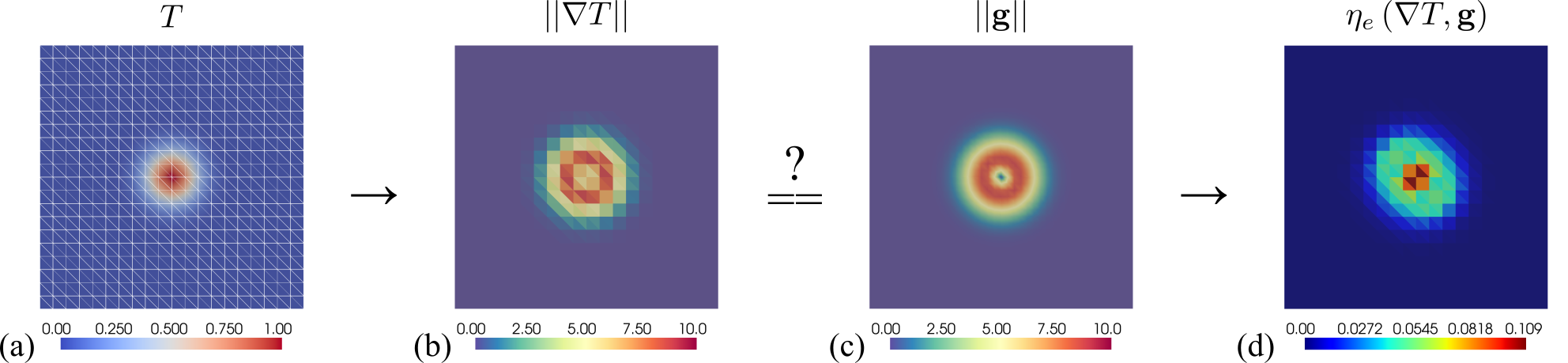

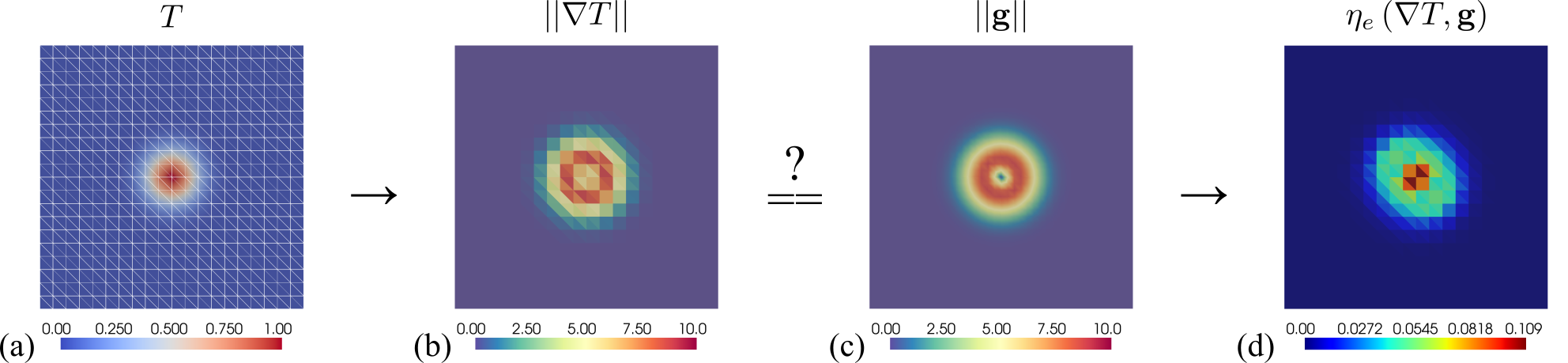

The framework uses hp∗-refinement algorithms driven by error indicators tailored to the weak formulations (Figure 4).

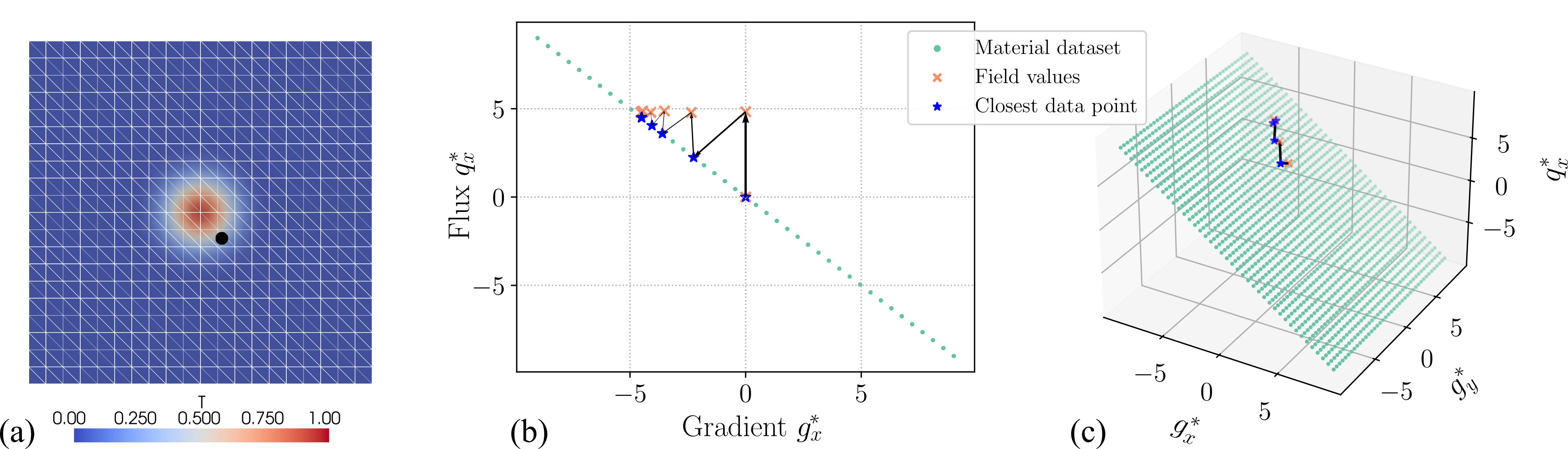

Figure 4: Exp-Hat example showing paths of an observed data point, together with the closest data point and the field values.

Adaptive refinement reduces computational effort while maintaining accuracy and confidence in the predictions. The method distinguishes between finite element approximation errors and uncertainties caused by imperfections in the material dataset.
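The marking step that typically drives such a refinement loop can be sketched as below. This is a generic maximum-strategy marking, not the paper's hp∗ algorithm: the function name `mark_for_refinement`, the `fraction` parameter and the indicator values are all assumptions made for illustration.

```python
import numpy as np

def mark_for_refinement(indicators, fraction=0.5):
    """Return indices of elements whose indicator is at least
    `fraction` times the largest indicator (maximum strategy)."""
    indicators = np.asarray(indicators, dtype=float)
    if indicators.size == 0:
        return np.array([], dtype=int)
    threshold = fraction * indicators.max()
    return np.flatnonzero(indicators >= threshold)

# Made-up per-element error indicators for five elements.
eta = [0.02, 0.40, 0.05, 0.90, 0.50]
marked = mark_for_refinement(eta, fraction=0.5)
print(marked)
```

Only the marked elements would be refined in the next pass, which concentrates effort where the indicators are largest. How the paper chooses between mesh refinement and order increase is not reproduced here.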

Application in Nuclear Graphite

The approach is illustrated on heat transfer in a nuclear graphite brick. The problem uses deliberately flawed datasets, with missing information and inherent noise, to emulate realistic conditions, and these flaws guide the adaptive refinement (Figure 5).

Figure 5: Graphite brick model showing setup conditions and resulting fields after adaptive hp∗ refinement.

Non-Uniqueness Quantification

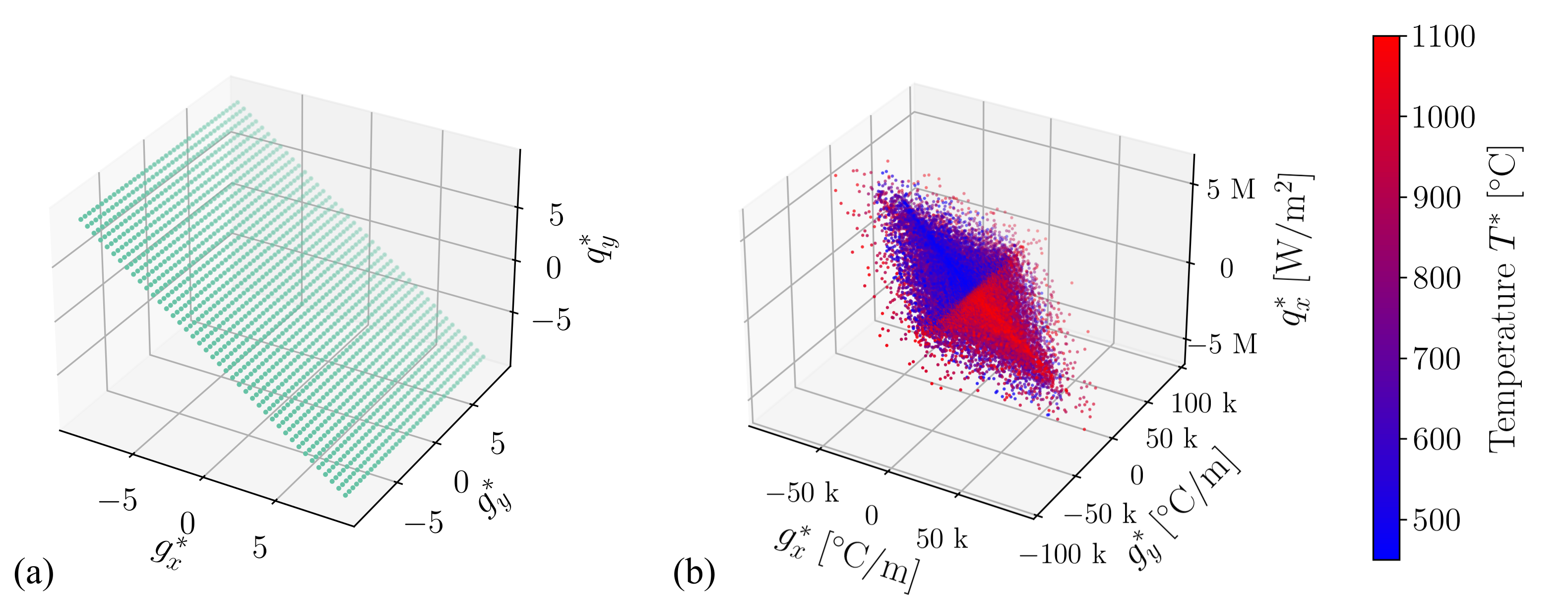

Because dataset-related uncertainties lead to non-unique solutions, Markov chain Monte Carlo simulations are used to provide a probabilistic measure of how much the solution can vary within the framework (Figures 6 and 7).
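The origin of this non-uniqueness can be seen in a toy example. The sketch below is a simplified stand-in, not the paper's Markov chain Monte Carlo procedure: it runs a plain Monte Carlo loop over random starting points for a single-point problem in which the flux is prescribed (q = q_bar) and the gradient g is unknown. The dataset, the noise level and all names are assumptions.

```python
import numpy as np

def data_driven_fixed_point(dataset, q_bar, start, max_iter=None):
    """Alternate between projecting the current data point onto the
    constraint set {q = q_bar} and searching for the nearest data point.
    Returns the index of the data point at which the iteration stops."""
    if max_iter is None:
        max_iter = len(dataset)
    i = int(start)
    for _ in range(max_iter):
        # Closest admissible state to data point i: keep g, set q = q_bar.
        target = np.array([dataset[i, 0], q_bar])
        j = int(np.argmin(np.sum((dataset - target) ** 2, axis=1)))
        if j == i:          # fixed point reached
            break
        i = j
    return i

# Noisy synthetic dataset (assumption): q = -k * g plus Gaussian noise.
rng = np.random.default_rng(0)
k = 2.0
g = rng.uniform(-1.0, 1.0, size=400)
q = -k * g + rng.normal(0.0, 0.05, size=400)
dataset = np.column_stack([g, q])

# Repeat the solve from random starting points and collect the results.
q_bar = 0.5
starts = rng.integers(0, len(dataset), size=200)
finals = np.array([data_driven_fixed_point(dataset, q_bar, s) for s in starts])
g_samples = dataset[finals, 0]
g_mean, g_std = g_samples.mean(), g_samples.std()
print(f"distinct solutions: {len(set(finals.tolist()))}, "
      f"mean g = {g_mean:.3f}, std g = {g_std:.3f}")
```

Different starting points can stop at different data points, and the spread of `g_samples` gives a simple measure of how strongly the noisy dataset affects the result.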

Figure 6: Standard deviation of flux fields, showing the impact of added noise on predictions.

Figure 7: High standard deviation points on material dataset projections indicating dataset noise orientation.

This makes it possible to examine how the solution varies when the dataset is noisy, so the approach stays robust while still showing where the data limit confidence in the result.

Conclusion

The paper presents a novel mixed finite element framework that relies on data rather than material models, adding flexibility in handling the variability and uncertainty inherent in engineering datasets. The framework is a step toward versatile numerical solutions for thermally driven problems, including digital twins of complex systems.