- The paper introduces an LLM-driven framework that iteratively refines GPU kernels through stages of code selection, experiment design, and autonomous implementation.

- It demonstrates significant performance gains, reducing execution time from ~860 μs to ~450 μs on the AMD MI300 compared to baseline methods.

- The methodology addresses limited documentation and profiling constraints, bridging human expertise gaps with AI-driven optimization strategies.

GPU Kernel Scientist: An LLM-Driven Framework for Iterative Kernel Optimization

The paper "GPU Kernel Scientist: An LLM-Driven Framework for Iterative Kernel Optimization" (2506.20807) introduces an automated methodology for optimizing GPU kernels using LLMs. This approach is crucial for refining GPU kernels, especially on architectures like the AMD MI300, where documentation is limited, and traditional profiling tools are insufficient. The "GPU Kernel Scientist" is positioned to iterate and evolve kernel code through a multi-stage process, aiming to bridge gaps in human expertise with LLM-derived insights. Below, the core components and findings of the methodology are discussed in detail.

Methodology

Multi-Stage Evolutionary Process

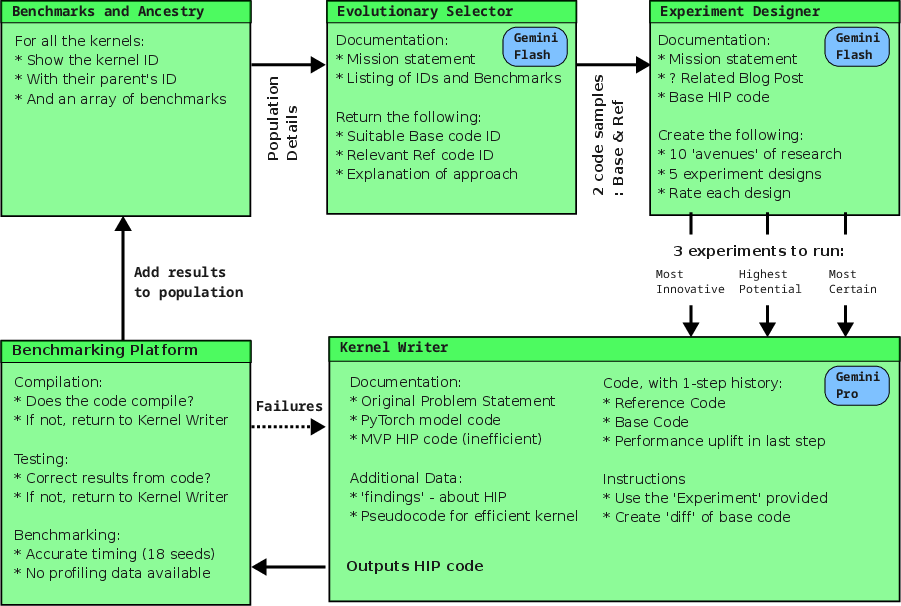

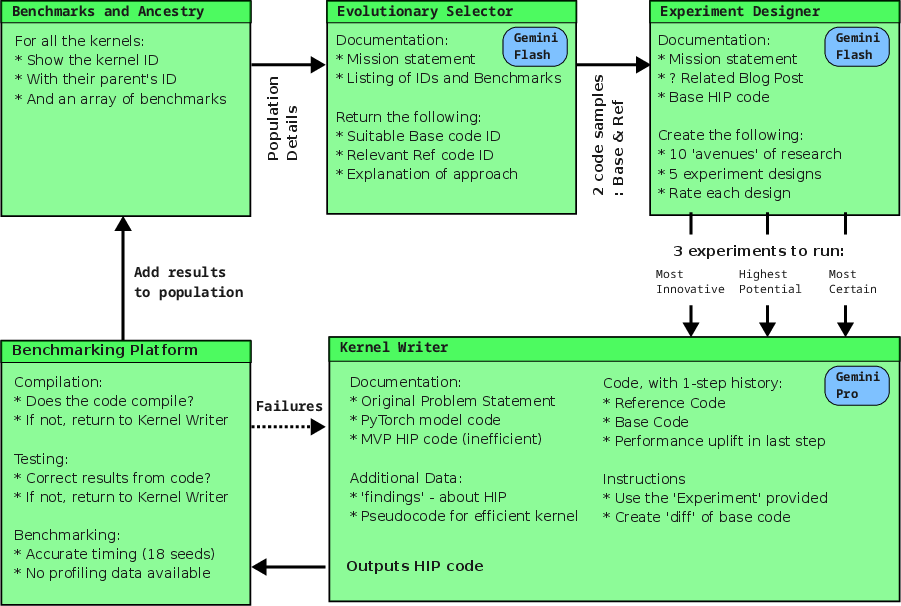

The proposed framework operates through three primary LLM-driven stages: code selection, experiment generation, and autonomous implementation. This iterative approach allows the system to refine kernels for performance without extensive human intervention.

- LLM Evolutionary Selector: This stage involves selecting promising code versions based on performance metrics, leveraging the LLM to make sophisticated decisions informed by multi-objective optimization.

- LLM Experiment Designer: Here, the LLM crafts potential optimization experiments by incorporating latent knowledge and summarizing external sources. Ten potential avenues are generated, from which five are selected based on innovative potential and estimated performance improvements.

- LLM Kernel Writer: The LLM synthesizes new kernel code by implementing the experimental strategies designed in the previous stage. This process is achieved autonomously, using HIP syntax for the target hardware.

Figure 1: GPU Kernel Scientist Process

Implementation Constraints and Considerations

Target Hardware Documentation

The system targets the AMD MI300 GPU, a platform with sparse documentation compared to CUDA ecosystems. LLMs generalize optimization practices from well-documented platforms (e.g., CUDA) to infer applicable strategies for AMD architectures.

Limited Profiling and Feedback

With the only source of feedback being end-to-end timing results from an external evaluation system, the methodology relies on LLMs to correlate code modifications with performance outcomes. This constraint necessitates a focus on developing robust benchmarks to infer detailed performance characteristics indirectly.

Experimental Results

The framework's competitive entry in the AMD Developer Challenge 2025 demonstrates its capability to produce significant performance gains in kernel optimization, achieving execution times closer to those achieved by human experts with direct hardware access.

- Initial PyTorch Baseline: ~860 μs

- Naïve HIP Conversion: ~5000 μs

- LLM-Optimized Kernel: ~450 μs

- Top Human Entry: ~105 μs (with hardware access)

The LLM-driven framework achieves considerable improvement over the naïve HIP implementation, illustrating its effective navigation of limited feedback environments.

Implications and Future Work

Practical Implications

This framework provides an approach that can democratize high-performance GPU programming by reducing dependence on traditional expertise and expansive documentation. It addresses the need for accelerated kernel development, particularly on emergent hardware platforms lacking comprehensive supporting materials.

Theoretical Implications

The paper demonstrates the potential of LLMs in autonomous decision-making for code optimization. By integrating evolutionary algorithms with LLMs, the research opens avenues for further exploration into AI-driven programming tools that adapt dynamically to new hardware constraints and opportunities.

Future Directions

Future work could focus on expanding the adaptability of the framework to other computational platforms and refining the integration with profiling tools for more precise performance feedback. Exploring the application of this framework to other domains, such as CPU optimization and other parallel computing environments, could also offer new opportunities for enhancing computational efficiency.

Conclusion

The "GPU Kernel Scientist" showcases the role of LLM-driven methods in evolving GPU kernel optimization, highlighting its potential for significant performance gains even without robust initial documentation or human expertise. This research underscores the transformative capabilities of AI in automating and enhancing computational practices, pointing towards a future where machine learning and AI significantly impact software development and optimization tasks.