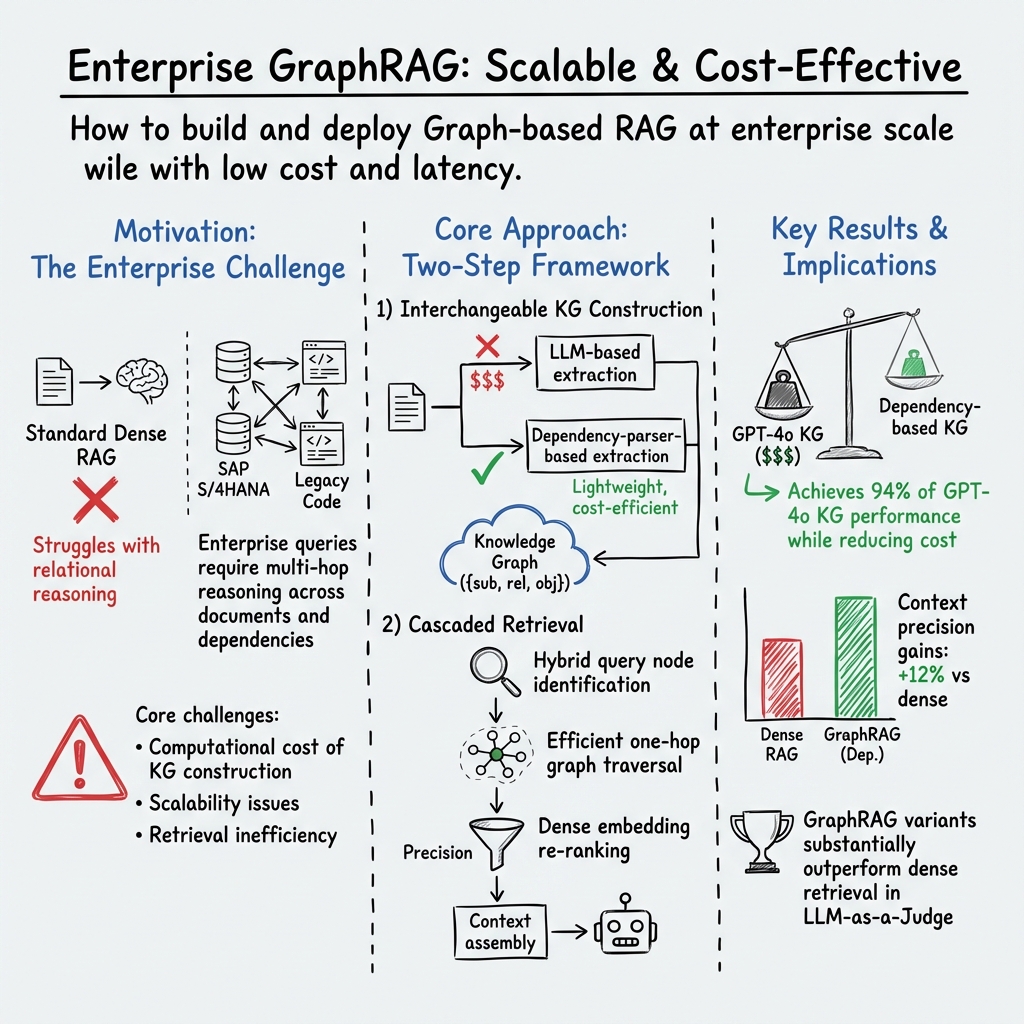

- The paper introduces a dependency-based knowledge graph construction method that achieves 94% of the performance of LLM-generated graphs while significantly reducing computational costs.

- It proposes a hybrid graph retrieval strategy combining dense vector re-ranking and one-hop traversal to improve context precision by up to 15% compared to traditional methods.

- The system shows practical benefits in enterprise applications by reducing hallucinations and enhancing performance in tasks like CCM Chat and legacy code migration.

Efficient Knowledge Graph Construction and Retrieval from Unstructured Text for Large-Scale RAG Systems

Introduction

The paper "Towards Practical GraphRAG: Efficient Knowledge Graph Construction and Hybrid Retrieval at Scale" (2507.03226) introduces a framework for deploying Graph-based Retrieval-Augmented Generation (GraphRAG) in enterprise environments. GraphRAG systems have shown promise for multi-hop reasoning and structured retrieval, essential for complex enterprise tasks such as legacy code migration and policy evaluation. However, their adoption has faced significant barriers due to computational costs associated with constructing knowledge graphs using LLMs and the inefficiencies in graph retrieval.

To tackle these challenges, the authors propose two innovations: a dependency-based knowledge graph construction pipeline and a lightweight graph retrieval strategy. Both aim to reduce reliance on LLMs, cutting cost while maintaining high recall and low latency.

Methodology

The framework comprises two primary components: knowledge graph construction and graph retrieval. The construction pipeline uses dependency parsing instead of LLMs, relying on industrial-grade NLP libraries to extract entities and relations from unstructured text efficiently. The authors report that graphs built this way achieve 94% of the performance of LLM-generated graphs at significantly lower cost.
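
To illustrate the idea of reading relations off a dependency parse, here is a minimal sketch. It is not the authors' pipeline: the `Token` class, the label sets, and the hand-written parse are all assumptions made for this example, and in practice the parse would come from an NLP library rather than being typed in by hand. The sketch only handles the simplest pattern, a head word that governs both a subject and a direct object.

```python
from dataclasses import dataclass

# Dependency labels treated as subjects and objects (an illustrative choice).
SUBJECT_LABELS = {"nsubj", "nsubjpass"}
OBJECT_LABELS = {"dobj", "obj"}


@dataclass(frozen=True)
class Token:
    text: str
    dep: str   # dependency label assigned by a parser
    head: int  # index of this token's syntactic head in the sentence


def extract_triples(tokens):
    """Return (subject, relation, object) triples for each head word
    that governs both a subject and an object."""
    triples = []
    for i, head in enumerate(tokens):
        subjects = [t.text for t in tokens if t.head == i and t.dep in SUBJECT_LABELS]
        objects = [t.text for t in tokens if t.head == i and t.dep in OBJECT_LABELS]
        for s in subjects:
            for o in objects:
                triples.append((s, head.text, o))
    return triples


# Hypothetical, hand-written parse of "The module calls the function."
sentence = [
    Token("The", "det", 1),
    Token("module", "nsubj", 2),
    Token("calls", "ROOT", 2),
    Token("the", "det", 4),
    Token("function", "dobj", 2),
]

triples = extract_triples(sentence)
print(triples)  # [('module', 'calls', 'function')]
```

Each triple becomes one edge in the knowledge graph, with the subject and object as nodes. No LLM call is involved, which is where the cost saving described above comes from.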

For graph retrieval, the authors combine hybrid query node identification with efficient one-hop traversal to extract semantically relevant subgraphs, an approach the paper reports as substantially outperforming traditional RAG systems. The retrieved candidates are then re-ranked with dense vectors, using OpenAI embeddings, to refine the extracted subgraph.
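
The retrieval flow described above can be sketched in three steps: pick seed nodes for the query, collect the edges one hop away from those seeds, and re-rank the edges by vector similarity. This is a sketch under stated assumptions, not the paper's implementation: the function names are hypothetical, seed identification is reduced to a simple keyword match, and the two-dimensional vectors are made-up stand-ins for real embeddings.

```python
import math
import re


def find_seeds(query, nodes):
    """Pick graph nodes whose names appear as words in the query
    (a keyword-only stand-in for hybrid query node identification)."""
    words = set(re.findall(r"\w+", query.lower()))
    return {n for n in nodes if n.lower() in words}


def one_hop(edges, seeds):
    """Return every (source, relation, target) edge that touches a seed node."""
    return [e for e in edges if e[0] in seeds or e[2] in seeds]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rerank(candidates, edge_vectors, query_vector, top_k):
    """Order candidate edges by cosine similarity to the query vector."""
    ranked = sorted(
        candidates,
        key=lambda e: cosine(edge_vectors[e], query_vector),
        reverse=True,
    )
    return ranked[:top_k]


# Toy graph and made-up vectors (real vectors would come from an embedding model).
edges = [
    ("invoice", "posted_to", "ledger"),
    ("invoice", "created_by", "user"),
    ("ledger", "owned_by", "finance"),
]
edge_vectors = {
    edges[0]: [1.0, 0.0],
    edges[1]: [0.0, 1.0],
    edges[2]: [0.7, 0.7],
}
query_vector = [0.9, 0.1]

nodes = {n for s, _, t in edges for n in (s, t)}
seeds = find_seeds("Where is the invoice posted?", nodes)
candidates = one_hop(edges, seeds)
best = rerank(candidates, edge_vectors, query_vector, top_k=1)
print(best)  # [('invoice', 'posted_to', 'ledger')]
```

Limiting traversal to one hop keeps the candidate set small, so the re-ranking step only has to score edges adjacent to the seeds rather than the whole graph.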

Results and Analysis

Empirical evaluation on two SAP datasets demonstrates marked improvements in both qualitative and quantitative metrics. The proposed system showed significant gains in context precision and retrieval accuracy compared to dense vector retrieval methods. Specifically, it recorded improvements over traditional RAG baselines of up to 15% on LLM-as-Judge metrics and 4.35% on RAGAS metrics.

The dependency-based graph model retained 94% of the performance of its LLM counterpart on context precision measures. In practical applications such as CCM Chat and Code Proposal, the system exhibited a lower incidence of hallucinations and faulty function definitions, both common issues with dense vector retrieval.

Implications and Future Directions

The study matters most for enterprise-scale applications that require complex multi-hop reasoning. By decoupling LLMs from knowledge graph construction, enterprises can achieve scalable, cost-effective GraphRAG deployments. The dependency-based approach not only lowers computational expenses but is also domain-agnostic, so it can be adapted to different kinds of text.

Future work could extend these techniques to domains beyond SAP-specific applications and validate their generalizability on broader benchmarks such as HotpotQA. Additionally, investigating methods to capture implicit relations not directly expressed in syntax would further enhance the robustness and versatility of GraphRAG systems.

Conclusion

The research presents a scalable and efficient framework for enterprise-grade GraphRAG systems, addressing key bottlenecks in computational cost and retrieval efficiency. By introducing dependency parsing and hybrid retrieval mechanisms, the paper demonstrates the feasibility of deploying GraphRAG in complex enterprise scenarios. These findings pave the way for practical, explainable, and adaptable retrieval-augmented reasoning in real-world applications. Future work will focus on increasing domain applicability and refining implicit relation extraction techniques, aiming for broader and more versatile applications of GraphRAG systems.