- The paper introduces the Jack unit, a multiply-accumulate (MAC) unit with up to 2.01× smaller area and up to 1.84× lower power than baseline MAC units.

- It combines a precision-scalable carry-save multiplier (CSM), significand adjustment inside the CSM, and 2D sub-word parallelism to support multiple data formats, including integer (INT), floating-point (FP), and microscaling (MX).

- The design shows strong potential for AI accelerators, improving energy efficiency by 1.32× to 5.41× across AI benchmarks.

Introduction

The Jack unit paper proposes a Multiply-Accumulate (MAC) unit designed for area and energy efficiency while supporting a variety of data formats. It targets AI workloads that mix precisions and formats, such as INT, FP, and MX. The Jack unit uses bit-level flexibility to share hardware across these formats, which makes it a candidate building block for AI accelerators.

Architectural Design

The Jack unit is built on three core design strategies to achieve its goals:

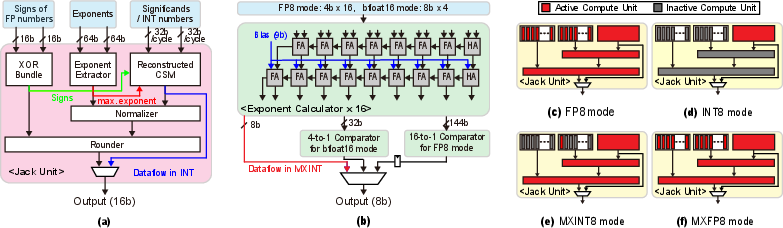

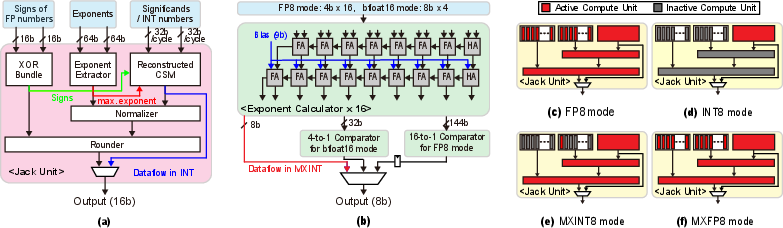

- Precision-Scalable CSM: Replacing the conventional carry-save multiplier (CSM) with a precision-scalable variant lets a single multiplier handle multiple data formats, so no separate multiplier has to be dedicated to each format.

- Significand Adjustment within CSM: Significands are adjusted according to their exponent differences inside the CSM. Accumulation can then use an INT adder instead of a costlier FP adder.

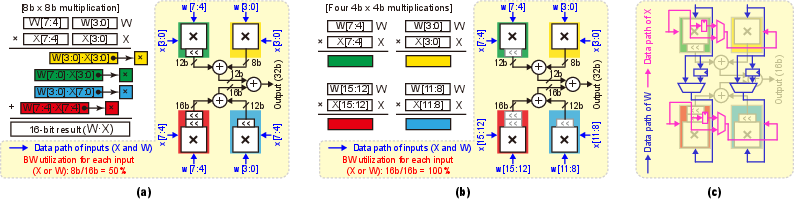

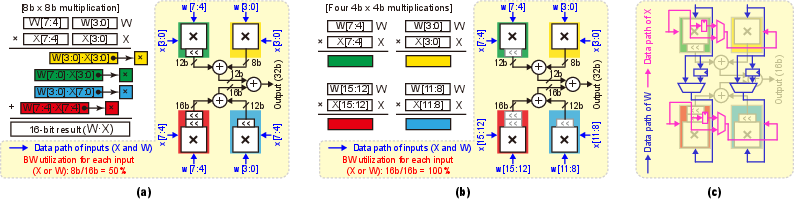

- 2D Sub-word Parallelism: The Jack unit shares barrel shifters across sub-multipliers, which reduces hardware overhead and, in turn, area and power.
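The significand adjustment idea can be illustrated in software. The sketch below is a simplified model, not the paper's circuit: it assumes each product is already available as a signed integer significand with an integer exponent, and it performs the alignment as a separate step, whereas the Jack unit folds the adjustment into the CSM. The function name `align_and_accumulate` and the sample values are hypothetical.

```python
def align_and_accumulate(products):
    """Sum terms of the form sig * 2**exp using only integer shifts and adds.

    `products` is a list of (sig, exp) pairs: a signed integer significand
    and an integer exponent. Returns (acc, max_exp), meaning acc * 2**max_exp.
    """
    max_exp = max(exp for _, exp in products)
    acc = 0
    for sig, exp in products:
        # Shift each significand right by its exponent gap to the largest
        # exponent. Bits shifted out are dropped (Python's >> floors).
        acc += sig >> (max_exp - exp)
    return acc, max_exp


# Hypothetical terms: 12*2**3 + 8*2**1 + (-4)*2**2 = 96 + 16 - 16 = 96
terms = [(12, 3), (8, 1), (-4, 2)]
acc, exp = align_and_accumulate(terms)
print(acc, exp, acc * 2**exp)  # prints: 12 3 96
```

Once every significand is expressed relative to the same exponent, the sum is a plain integer addition, which is why an INT adder is enough. In this sketch the right shift discards low-order bits when the exponent gap is large; how the actual hardware handles those bits is not described here.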

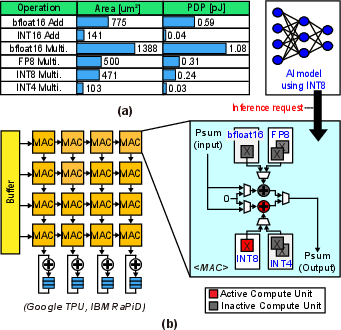

Figure 1: Hardware costs of compute units for various data formats.

Figure 2: Operation of the precision-scalable CSM on different bit-width multiplication.
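The general idea behind precision scaling can be sketched as follows: a wide multiplication is composed from narrow sub-multiplier results combined with shifts, and the same sub-multipliers can instead produce several independent narrow products. This is a generic, unsigned-only illustration under assumed 4-bit and 8-bit widths, not the paper's exact CSM design. The names `mul4`, `mul8_from_mul4`, and `four_mul4` are hypothetical.

```python
def mul4(a: int, b: int) -> int:
    """Stand-in for one unsigned 4-bit x 4-bit sub-multiplier."""
    assert 0 <= a < 16 and 0 <= b < 16
    return a * b


def mul8_from_mul4(a: int, b: int) -> int:
    """Unsigned 8-bit x 8-bit product built from four 4-bit sub-products."""
    a_hi, a_lo = (a >> 4) & 0xF, a & 0xF
    b_hi, b_lo = (b >> 4) & 0xF, b & 0xF
    # Each sub-product is shifted according to the weight of its operands.
    return ((mul4(a_hi, b_hi) << 8)
            + (mul4(a_hi, b_lo) << 4)
            + (mul4(a_lo, b_hi) << 4)
            + mul4(a_lo, b_lo))


def four_mul4(pairs):
    """Low-precision mode: the four sub-multipliers work independently."""
    assert len(pairs) == 4
    return [mul4(a, b) for a, b in pairs]


print(mul8_from_mul4(200, 123))                        # prints: 24600
print(four_mul4([(3, 5), (15, 15), (0, 9), (7, 2)]))   # prints: [15, 225, 0, 14]
```

In the wide mode the four sub-products contribute to one result, while in the narrow mode they yield four results per cycle, which is the throughput benefit of sub-word parallelism at low precision.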

Evaluation Results

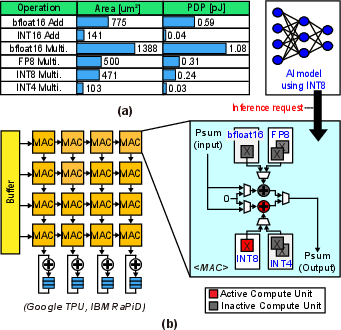

Compared with baseline MAC units used in commercial accelerators, the Jack unit achieves a 1.17× to 2.01× area reduction and a 1.05× to 1.84× power reduction. Architectural evaluations of a Jack unit-based AI accelerator support the practical viability of these improvements.
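To put the ratios in more familiar terms, an N× reduction can be converted to a percentage saved. The short calculation below assumes each reported factor is the baseline value divided by the Jack unit's value; the helper name `fraction_saved` is hypothetical.

```python
def fraction_saved(ratio: float) -> float:
    """Fraction of the baseline removed when the baseline is `ratio` times larger."""
    return 1.0 - 1.0 / ratio


for label, ratio in [("area, low end", 1.17), ("area, high end", 2.01),
                     ("power, low end", 1.05), ("power, high end", 1.84)]:
    print(f"{label}: {ratio}x -> {fraction_saved(ratio):.1%} saved")
```

Under that assumption, the reported ranges correspond to roughly 14.5% to 50.2% less area and 4.8% to 45.7% less power than the baselines.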

Figure 3: Computational flow and reconstruction mechanism of the Jack unit.

Figure 4: Structural enhancement and sub-module activation in Jack unit.

Implications and Future Directions

The Jack unit is most relevant where workloads mix data types. Its energy efficiency improvement of 1.32× to 5.41× across AI benchmarks suggests strong potential for cloud and edge deployments that require dynamic precision scaling. As AI workloads grow more complex and rely more on low-precision formats such as INT4 and on mixed-precision tasks, the Jack unit stands out as a cost-effective option.

Moving forward, adoption of Jack units could help standardize precision scalability in AI hardware, influencing both processor design and AI model architecture. Further research may explore integrating Jack units into more heterogeneous computing environments and extending support to novel data formats beyond those currently standardized.

Conclusion

The Jack unit represents a significant advance in designing MAC units for AI accelerators, optimizing for both area and energy efficiency while supporting diverse data formats. Its scalable approach to precision handling addresses key limitations in conventional MAC designs. As AI demands continue to evolve, the flexible design of the Jack unit offers a promising framework for future innovation in AI processor technology.