- The paper introduces a modular multi-agent simulation platform that integrates high-fidelity VR interfaces with multimodal human sensing.

- The methodology employs dedicated hardware and wearable sensors to capture physiological, neurological, and behavioral responses in real time.

- Use cases include examining multimodal travel transitions and human-automated vehicle interactions to enhance urban transportation design.

Simulation for All: A Step-by-Step Cookbook for Developing Human-Centered Multi-Agent Transportation Simulators

Introduction

The paper "Simulation for All: A Step-by-Step Cookbook for Developing Human-Centered Multi-Agent Transportation Simulators" (2507.09367) addresses the pressing need for more capable simulation tools for multimodal transportation systems. Conventional simulators typically populate scenes with agents whose behaviors are predefined, and they rarely let public transit users participate actively. This research presents a novel multi-agent simulation platform designed for real-time, immersive interaction among diverse road users, including drivers, pedestrians, cyclists, automated vehicles, and public transit users.

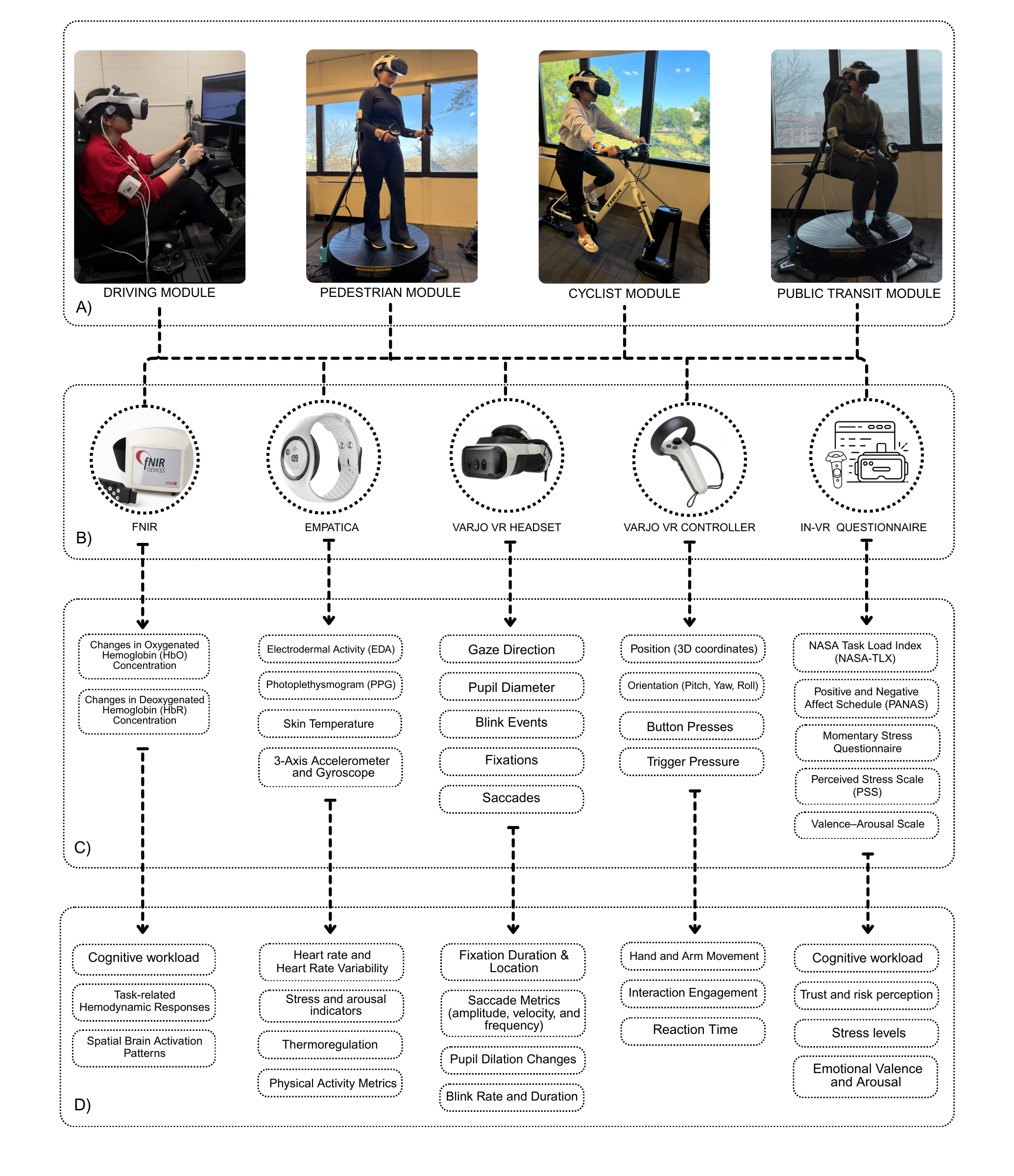

Figure 1: System architecture outlining the components and connections for each agent in the simulation—driver, pedestrian, cyclist, and public transit user. Each agent is equipped with a dedicated computing engine and integrated with its corresponding physical interface.

Methodology

System Architecture

The multi-agent platform has a modular, extensible architecture, as depicted in Figure 1. Each agent (driver, cyclist, pedestrian, or public transit user) operates through a dedicated hardware interface, which keeps interaction within the simulation environment flexible and scalable. The system combines high-fidelity VR environments with hardware-specific modules such as omnidirectional treadmills, smart trainers, and actuated cockpits. This setup is designed to balance realism with accessibility and to support data collection across physiological, neurological, and behavioral dimensions.
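The sketch below is a rough illustration of the modular design. It models each agent as a self-contained station with its own physical interface, compute engine, and sensor list, and registers each station in a session. All class names, fields, and host addresses are hypothetical, and the pairings of interfaces with roles are illustrative only. The paper's actual software structure may differ.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    DRIVER = "driver"
    PEDESTRIAN = "pedestrian"
    CYCLIST = "cyclist"
    TRANSIT_USER = "transit_user"


@dataclass
class AgentStation:
    """One human-in-the-loop station: a role, its physical interface, and its own compute engine."""
    role: Role
    interface: str                      # illustrative label, e.g. "actuated cockpit"
    engine_host: str                    # hypothetical address of the station's dedicated engine
    sensors: list[str] = field(default_factory=list)


class Session:
    """Hypothetical registry: each station is added or removed independently of the others."""

    def __init__(self) -> None:
        self._stations: dict[str, AgentStation] = {}

    def add(self, station_id: str, station: AgentStation) -> None:
        if station_id in self._stations:
            raise ValueError(f"duplicate station id: {station_id}")
        self._stations[station_id] = station

    def remove(self, station_id: str) -> None:
        del self._stations[station_id]

    def roles(self) -> set[Role]:
        return {s.role for s in self._stations.values()}

    def sensor_inventory(self) -> dict[str, list[str]]:
        return {sid: list(s.sensors) for sid, s in self._stations.items()}


# Illustrative session with three of the four agent types.
session = Session()
session.add("drv1", AgentStation(Role.DRIVER, "actuated cockpit", "10.0.0.11", ["eye_tracker"]))
session.add("cyc1", AgentStation(Role.CYCLIST, "smart trainer", "10.0.0.12", ["wrist_biosensor"]))
session.add("ped1", AgentStation(Role.PEDESTRIAN, "omnidirectional treadmill", "10.0.0.13",
                                 ["fnirs", "eye_tracker"]))
print(sorted(r.value for r in session.roles()))  # ['cyclist', 'driver', 'pedestrian']
```

The session keys stations by ID and never shares state between them. This loosely mirrors the one-engine-per-agent layout described in the Figure 1 caption, so adding a transit-user station would not require changes to the others.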

Human Sensing Integration

Integrating human sensing is critical for a nuanced understanding of road user behavior. The platform supports multimodal data acquisition through functional near-infrared spectroscopy (fNIRS), eye tracking, and wrist-based biosensors. Together, these technologies provide real-time insight into cognitive load, emotional response, and behavioral choices across scenarios. This supports detailed study of how users interact in transportation contexts, for both theoretical investigations and practical applications.

Figure 2: Human sensing module: (A) modules, (B) devices, (C) data collection, (D) feature extraction.
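fNIRS, eye-tracking, and wrist-biosensor devices sample at different rates. Their streams therefore need a shared timeline before they can be compared or passed to feature extraction. The sketch below shows one common approach: linear interpolation onto a fixed-rate grid. It assumes each stream already carries timestamps from a shared clock. The function names, stream names, and sample values are hypothetical, and the paper's own synchronization method may differ.

```python
from bisect import bisect_right


def resample_linear(times, values, grid):
    """Linearly interpolate one stream onto grid; hold the edge value outside its range.

    Assumes `times` is strictly increasing and shares a clock with the other streams.
    """
    if not times or len(times) != len(values):
        raise ValueError("times and values must be non-empty and of equal length")
    out = []
    for t in grid:
        if t <= times[0]:
            out.append(values[0])
        elif t >= times[-1]:
            out.append(values[-1])
        else:
            i = bisect_right(times, t)          # times[i - 1] <= t < times[i]
            t0, t1 = times[i - 1], times[i]
            v0, v1 = values[i - 1], values[i]
            out.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return out


def align_streams(streams, start_s, end_s, rate_hz):
    """Resample every stream (name -> (times_s, values)) onto one fixed-rate grid."""
    n = int(round((end_s - start_s) * rate_hz)) + 1
    grid = [start_s + k / rate_hz for k in range(n)]
    return grid, {name: resample_linear(t, v, grid) for name, (t, v) in streams.items()}


# Made-up samples: pupil diameter at 2 Hz, electrodermal activity at 4 Hz.
streams = {
    "pupil_mm": ([0.0, 0.5, 1.0], [3.0, 3.4, 3.2]),
    "eda_uS": ([0.0, 0.25, 0.5, 0.75, 1.0], [1.0, 1.1, 1.2, 1.3, 1.4]),
}
grid, aligned = align_streams(streams, 0.0, 1.0, rate_hz=4)
```

Linear interpolation suits slowly varying signals. Event-like streams, such as discrete fixations, are usually better served by nearest-sample or windowed aggregation.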

Use Cases

Multimodal Travel Transitions

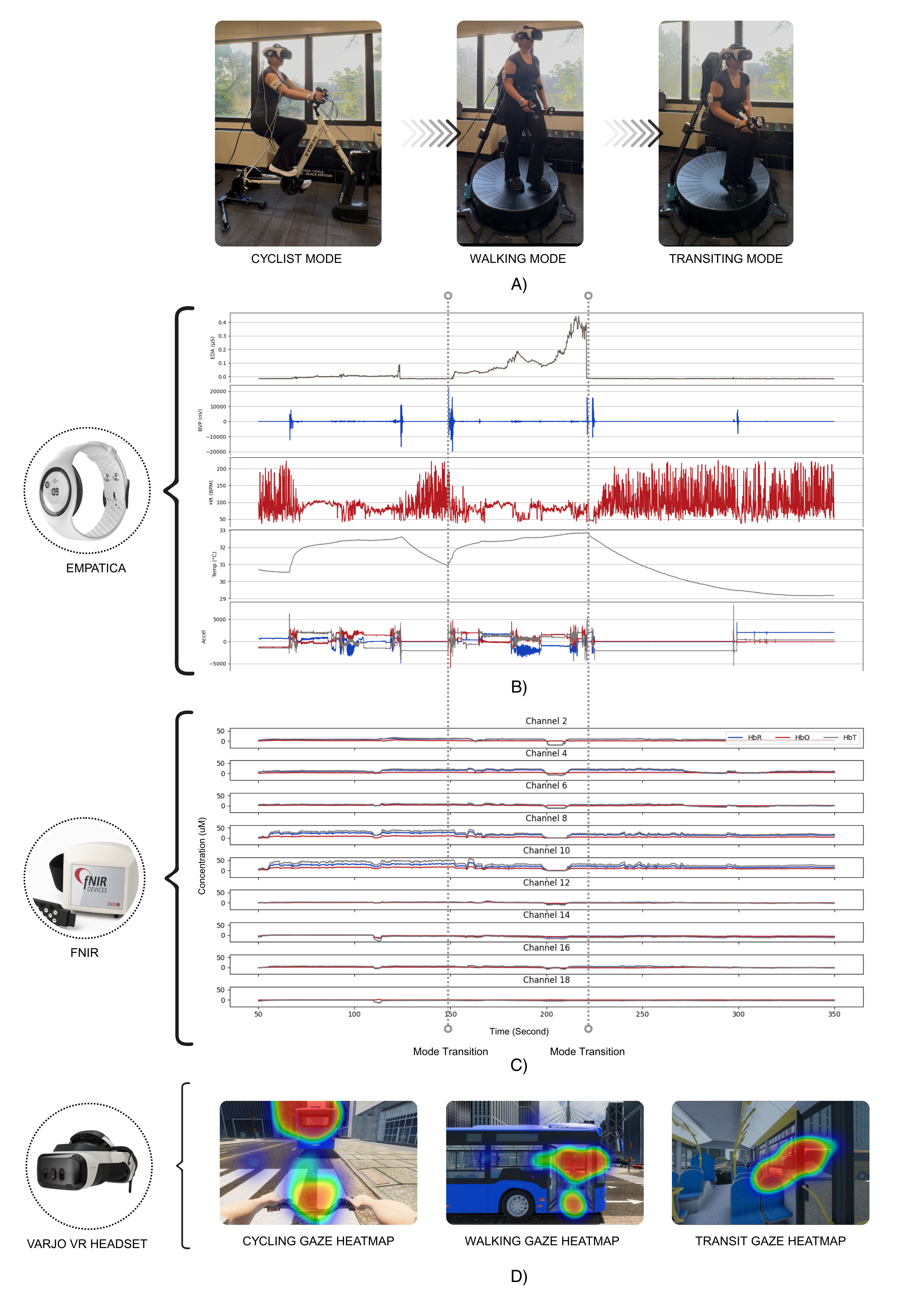

The platform facilitates research on multimodal travel transitions—examining how users switch between cycling, walking, and public transit within a single journey. This configuration allows detailed examination of accessibility, usability, safety, and traveler well-being under realistic conditions. Integrated sensing streams enable researchers to capture physiological and affective responses during these transitions, offering valuable insights for urban design and transportation policy.

Figure 3: Multimodal Travel Transitions: Sample Data Streams from a Single Participant During Cycling, Walking, and Riding Public Transit.
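To compare a participant's responses across the legs of a trip, a continuous signal can be split by the travel mode in use at each moment. The sketch below averages a signal per mode. It assumes the simulator logs mode boundaries as timestamps on the same clock as the sensors. The function name, heart-rate values, and boundaries are made up for illustration and are not data from the paper.

```python
from statistics import mean


def per_mode_means(times, values, segments):
    """Average a signal within each travel mode.

    segments: list of (mode, start_s, end_s) intervals, treated as half-open [start, end).
    If a mode occurs more than once in a trip (walk, transit, walk), its samples are pooled.
    """
    pooled = {}
    for mode, start_s, end_s in segments:
        window = [v for t, v in zip(times, values) if start_s <= t < end_s]
        if window:
            pooled.setdefault(mode, []).extend(window)
    return {mode: mean(vs) for mode, vs in pooled.items()}


# Made-up heart-rate samples (1 Hz) and mode boundaries for a single trip.
times = [0, 1, 2, 3, 4, 5, 6, 7, 8]
heart_rate = [110, 115, 120, 95, 92, 80, 78, 79, 81]
segments = [("cycling", 0, 3), ("walking", 3, 5), ("transit", 5, 9)]
print(per_mode_means(times, heart_rate, segments))
```

Half-open intervals ensure that a sample falling exactly on a transition boundary is counted once, in the mode that begins there.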

Human-Automated Vehicle Interactions

A significant portion of the research focuses on human interactions with automated vehicles. This includes simulated scenarios with external human-machine interfaces (eHMIs) that communicate between autonomous systems and vulnerable road users (VRUs). Such studies are essential for developing socially aware autonomous systems capable of negotiating complex urban interactions in real time. The platform's sensing capabilities are particularly useful for evaluating trust, perceived safety, and behavioral compliance.

Figure 4: Implemented Multimodal External Human-Machine Interfaces (eHMIs) for AV–VRU Communication in Simulation.
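The description above does not specify how behavioral compliance is scored. The sketch below shows one hypothetical way to operationalize it. A pedestrian trial counts as compliant if the participant crosses after a "yield" message or waits after a "no_yield" message. For yield trials, the sketch also reports the median time from eHMI onset to crossing. The `Trial` fields, the two-message coding, and the scoring rule are all assumptions, not the paper's eHMI designs or metrics.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Trial:
    ehmi_message: str                # hypothetical coding: "yield" or "no_yield"
    ehmi_onset_s: float              # when the eHMI message appeared
    cross_start_s: Optional[float]   # when the pedestrian started crossing; None if they waited


def summarize(trials):
    """Hypothetical compliance metrics: crossing matches the eHMI message, plus decision latency."""
    if not trials:
        raise ValueError("no trials")
    complied = 0
    latencies = []
    for tr in trials:
        crossed = tr.cross_start_s is not None
        # Compliant if the pedestrian crossed on "yield" or waited on "no_yield".
        if crossed == (tr.ehmi_message == "yield"):
            complied += 1
        if crossed and tr.ehmi_message == "yield":
            latencies.append(tr.cross_start_s - tr.ehmi_onset_s)
    return {
        "compliance_rate": complied / len(trials),
        "median_latency_s": median(latencies) if latencies else None,
    }


trials = [
    Trial("yield", 10.0, 11.5),
    Trial("yield", 20.0, 21.0),
    Trial("no_yield", 30.0, None),
    Trial("no_yield", 40.0, 40.8),   # crossed despite "no_yield": non-compliant
]
print(summarize(trials))  # {'compliance_rate': 0.75, 'median_latency_s': 1.25}
```

A negative latency would mean the participant started crossing before the eHMI message appeared. Such trials may warrant separate treatment in a real analysis.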

Discussion

The current research presents an innovative approach to transportation simulation that emphasizes human-centered design. By integrating multiple real-time sensing modalities, the platform provides comprehensive insight into road user behavior and interaction dynamics. However, challenges remain in scalability and user comfort, particularly during extended VR sessions. Future work may focus on refining sensor integration, expanding system accessibility, and enhancing environmental realism within simulated scenarios.

Conclusion

The detailed architecture and methodology outlined in the paper offer a promising foundation for advancing transportation simulation research. By democratizing access to high-fidelity simulation tools, the platform empowers researchers to undertake complex studies of multimodal interactions and road user behaviors in increasingly sophisticated urban environments. Ultimately, this work contributes to the broader goal of improving transportation systems through informed design and inclusive experimentation.