- The paper introduces a brain-inspired framework that integrates spatial, temporal, episodic, and semantic memories to support long-term planning in robots.

- It employs a Planner-Critic closed-loop planning module that dynamically adjusts multi-step action sequences, mitigating outdated plans and infinite loops.

- Experimental evaluations on EB-ALFRED and EB-Habitat benchmarks demonstrate significant improvements in task success rates and real-world performance.

RoboMemory: A Brain-inspired Multi-memory Agentic Framework for Interactive Environmental Learning in Physical Embodied Systems

Introduction

The paper "RoboMemory: A Brain-inspired Multi-memory Agentic Framework for Lifelong Learning in Physical Embodied Systems" presents a novel framework designed to enhance the learning and interaction capabilities of embodied systems, specifically robots. This framework draws inspiration from cognitive neuroscience, implementing a multi-memory system that facilitates long-term planning and learning. The architecture integrates four core modules: Information Preprocessor, Lifelong Embodied Memory System, Closed-Loop Planning Module, and Low-Level Executor. These modules collectively address challenges such as continuous learning, memory latency, task correlation, and infinite loops in closed-loop planning. Evaluated on EmbodiedBench, RoboMemory improves success rates significantly compared to open-source and closed-source baselines.

Figure 1: RoboMemory architecture with working pipeline and memory mechanisms.

RoboMemory Architecture

The RoboMemory framework is structured around a unified memory paradigm which efficiently updates and retrieves information across its four submodules: Spatial, Temporal, Episodic, and Semantic. This architecture mitigates latency issues common in complex memory frameworks by enabling parallel updates.
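The parallel-update idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `MemoryModule` class, its `update` and `retrieve` methods, and the keyword-match retrieval are hypothetical stand-ins, not the paper's implementation. The sketch only shows how one step summary might be written to all four submodules concurrently instead of one after another.

```python
from concurrent.futures import ThreadPoolExecutor


class MemoryModule:
    """Hypothetical stand-in for one memory submodule."""

    def __init__(self, name):
        self.name = name
        self.entries = []

    def update(self, summary):
        # A real submodule would do its own processing here
        # (e.g. graph or index updates); this one just stores the text.
        self.entries.append(summary)
        return self.name

    def retrieve(self, query):
        # Naive case-insensitive keyword match, for illustration only.
        return [e for e in self.entries if query.lower() in e.lower()]


def update_all(modules, summary):
    """Send the same step summary to every submodule in parallel."""
    with ThreadPoolExecutor(max_workers=len(modules)) as pool:
        # pool.map returns results in the order of the input modules.
        return list(pool.map(lambda m: m.update(summary), modules))


modules = [MemoryModule(n) for n in ("spatial", "temporal", "episodic", "semantic")]
updated = update_all(modules, "The mug is on the kitchen table.")
```

With parallel dispatch, the time per update is bounded by the slowest submodule rather than the sum of all four, which is the kind of latency saving the unified paradigm targets.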

The Information Preprocessor uses dual modules, the Step Summarizer and the Query Generator, to transform visual observations into textual data that interfaces with RoboMemory's retrieval system. It allows fast indexing and querying, providing an agile mechanism for memory operations during each action cycle.
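The data flow through the preprocessor can be sketched as two small functions. This is a toy sketch under stated assumptions: the `Observation` fields and the string templates are hypothetical, and the two functions stand in for components that, in a system of this kind, would be backed by a vision-language model rather than string formatting.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    """Hypothetical, already-parsed view of one action step."""
    step: int
    action: str
    visible_objects: List[str]


def step_summarizer(obs: Observation) -> str:
    """Turn one observation into a textual summary that memory can store."""
    objects = ", ".join(obs.visible_objects) or "nothing notable"
    return f"Step {obs.step}: after '{obs.action}', the robot sees {objects}."


def query_generator(task: str, summary: str) -> str:
    """Build the text query used to retrieve relevant memories."""
    return f"Task: {task} | Latest: {summary}"


obs = Observation(step=3, action="open drawer", visible_objects=["mug", "spoon"])
summary = step_summarizer(obs)
query = query_generator("bring the mug to the table", summary)
```

The summary is what gets written to memory, while the query is what gets used to read from it, so both can be produced once per action cycle from the same observation.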

Lifelong Embodied Memory System

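At the core of the RoboMemory architecture is the Lifelong Embodied Memory System, which integrates the Spatial, Temporal, Episodic, and Semantic memory submodules under the unified update-and-retrieval paradigm described above.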

Closed-Loop Planning

RoboMemory uses a Planner-Critic mechanism to interact with dynamic environments. The planner generates multi-step action sequences, and the critic checks them against the latest environmental data and adjusts them when they are no longer suitable. This iterative evaluation prevents the execution of outdated plans and helps avoid infinite loops.
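A closed loop of this kind can be sketched as follows. The sketch rests on assumptions that are not taken from the paper: the function names, the toy drawer-and-mug world, the critic acting as a simple accept/reject check that triggers replanning, and the `max_replans` cap used here to bound the loop are all hypothetical.

```python
def run_closed_loop(plan_fn, critic_fn, execute_fn, observe_fn, max_replans=3):
    """Execute a multi-step plan, re-checking each step against fresh observations."""
    executed, replans = [], 0
    plan = list(plan_fn(observe_fn()))
    while plan:
        obs = observe_fn()              # always judge against the latest state
        step = plan[0]
        if critic_fn(step, obs):
            execute_fn(step)
            executed.append(step)
            plan.pop(0)
        elif replans < max_replans:
            replans += 1
            plan = list(plan_fn(obs))   # plan is outdated: replan from current state
        else:
            break                       # assumed safeguard against endless replanning
    return executed, replans


class ToyWorld:
    """Hypothetical environment: the drawer closes right after the first plan is made."""

    def __init__(self):
        self.drawer_open, self.holding_mug, self.ticks = True, False, 0

    def observe(self):
        self.ticks += 1
        if self.ticks == 2:
            self.drawer_open = False    # disturbance that invalidates the first plan
        return {"drawer_open": self.drawer_open}

    def execute(self, step):
        if step == "open drawer":
            self.drawer_open = True
        elif step == "pick mug":
            self.holding_mug = True


def planner(obs):
    return ["pick mug"] if obs["drawer_open"] else ["open drawer", "pick mug"]


def critic(step, obs):
    # "pick mug" needs an open drawer; "open drawer" only makes sense if it is closed.
    return obs["drawer_open"] if step == "pick mug" else not obs["drawer_open"]


world = ToyWorld()
executed, replans = run_closed_loop(planner, critic, world.execute, world.observe)
```

In this toy run the first plan (`pick mug`) is rejected because the drawer has closed since planning, one replan produces `open drawer` followed by `pick mug`, and both steps then pass the critic and execute.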

Experimental Evaluation

RoboMemory was evaluated on the EB-ALFRED and EB-Habitat benchmarks, where it achieved higher task success rates than both VLM-based baselines and agent frameworks. Ablation studies validated the contribution of each module and showed that long-term memory and the critic module are important for task adaptability.

Figure 3: Comparison of Success Rates (SR) and Goal Condition Success Rates (GC) across difficulty levels between RoboMemory and baseline methods on EB-Habitat.

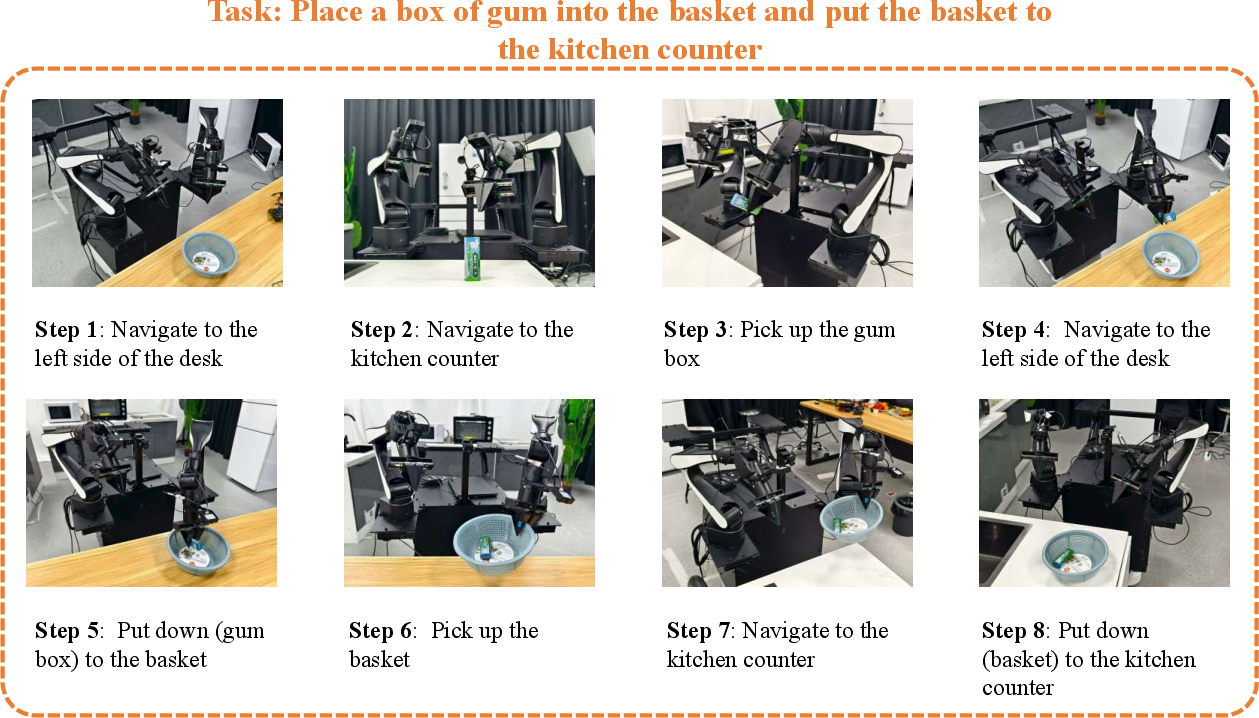

Real-world Deployment

In real-world environments, RoboMemory successfully demonstrated lifelong learning capabilities with significant performance improvements in sequential task execution. The setup mirrored benchmark conditions, confirming the framework's scalability and robustness in practical scenarios.

Figure 4: Visualization of the experimental environment.

Conclusion

RoboMemory establishes a new standard for embodied systems with its brain-inspired architectural design, facilitating lifelong learning and efficient long-term planning. While the framework excels in dynamic environments, future developments could refine reasoning, integrate more robust action execution strategies, and enhance VLA-agent interaction to maximize adaptability and performance beyond simulated setups.

Figure 5: Example of a successful task.