- The paper proposes a moral agency framework to guide AI integration while ensuring clear human accountability in bureaucratic systems.

- The paper demonstrates that treating AI as an augmentation rather than an autonomous moral agent preserves transparency and democratic oversight.

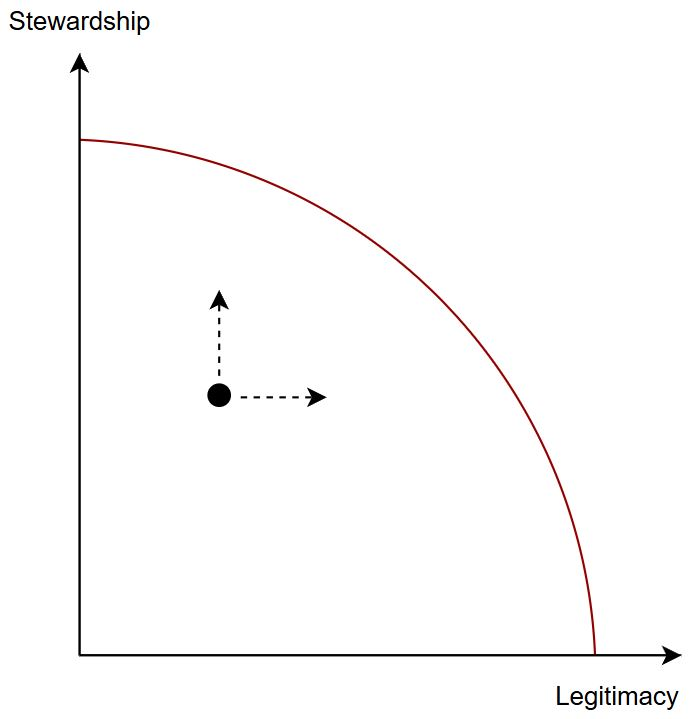

- The paper emphasizes maintaining the dual aims of legitimate legislative implementation and long-term stewardship through structured human oversight.

A Moral Agency Framework for the Integration of AI in Bureaucracies

Introduction

The paper "A Moral Agency Framework for Legitimate Integration of AI in Bureaucracies" examines the introduction of AI systems into public sector organizations (PSOs) and the attendant concerns about accountability and transparency. While AI promises efficiency and personalization, it also raises ethical concerns, notably the potential creation of "ethics sinks": structures in which responsibility may dissipate once AI systems are embedded in bureaucratic frameworks. The authors propose a moral agency framework to guide AI integration while enhancing organizational transparency and legitimacy.

Dual Purposes of Bureaucracy

Bureaucracies serve two primary functions: the legitimate implementation of legislation and stewardship. These functions rely heavily on the moral agency of human bureaucrats.

Legitimate Implementation of Legislation: Bureaucracies are designed to ensure that legislative goals set by elected representatives are faithfully executed, thereby maintaining public trust in democratic systems. This requires bureaucrats to work like cogs in a machine, faithfully implementing orders with minimal personal discretion. Such design enables transparency and accountability, ensuring the organization functions towards specified legislative goals.

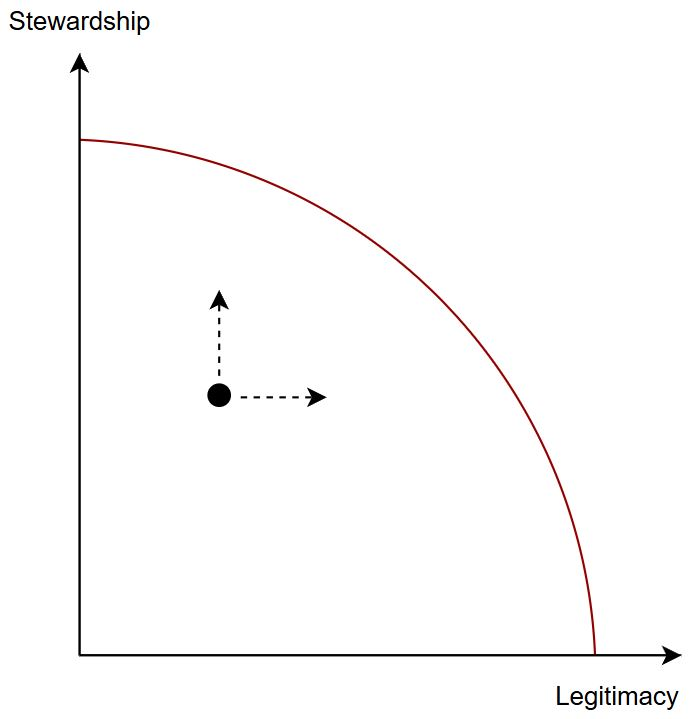

Figure 1: Illustration of the "Pareto Frontier" between bureaucracies' dual aims of stewardship and legitimacy. PSOs not at the frontier can improve in one dimension without necessarily jeopardizing the other.

Stewardship: Beyond execution, bureaucracies maintain state stability over the long term, providing the necessary environment for sustained governance and safeguarding against impulsive legislative changes. The resilience and consistency of bureaucracies derive from the plurality of bureaucrats' moral dispositions, which collectively provide checks and balances to ensure stability.
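The "Pareto Frontier" in Figure 1 can be made concrete with a small sketch. The example below is only an illustration: the `Design` type, the numeric scores for legitimacy and stewardship, and the four sample organizational designs are hypothetical and do not come from the paper, which does not quantify either aim. It shows the standard dominance test: a design is off the frontier when another design is at least as good on both aims and strictly better on one.

```python
from typing import List, NamedTuple


class Design(NamedTuple):
    """A hypothetical PSO design scored on the two aims (higher is better)."""
    name: str
    legitimacy: float
    stewardship: float


def dominates(a: Design, b: Design) -> bool:
    """True if `a` is at least as good as `b` on both aims and better on one."""
    at_least_as_good = (a.legitimacy >= b.legitimacy
                        and a.stewardship >= b.stewardship)
    strictly_better = (a.legitimacy > b.legitimacy
                       or a.stewardship > b.stewardship)
    return at_least_as_good and strictly_better


def pareto_frontier(designs: List[Design]) -> List[Design]:
    """Keep only the designs that no other design dominates."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Made-up scores, for illustration only.
designs = [
    Design("A", 0.9, 0.4),
    Design("B", 0.6, 0.7),
    Design("C", 0.3, 0.9),
    Design("D", 0.5, 0.5),  # B is better on both aims, so D is off the frontier
]

frontier = pareto_frontier(designs)
print([d.name for d in frontier])  # ['A', 'B', 'C']
```

In this toy data, design D can move toward B and gain on both aims at once, which is the situation the caption describes for PSOs that are not at the frontier. Designs A, B and C can only trade one aim against the other.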

Situating AI in Bureaucracy

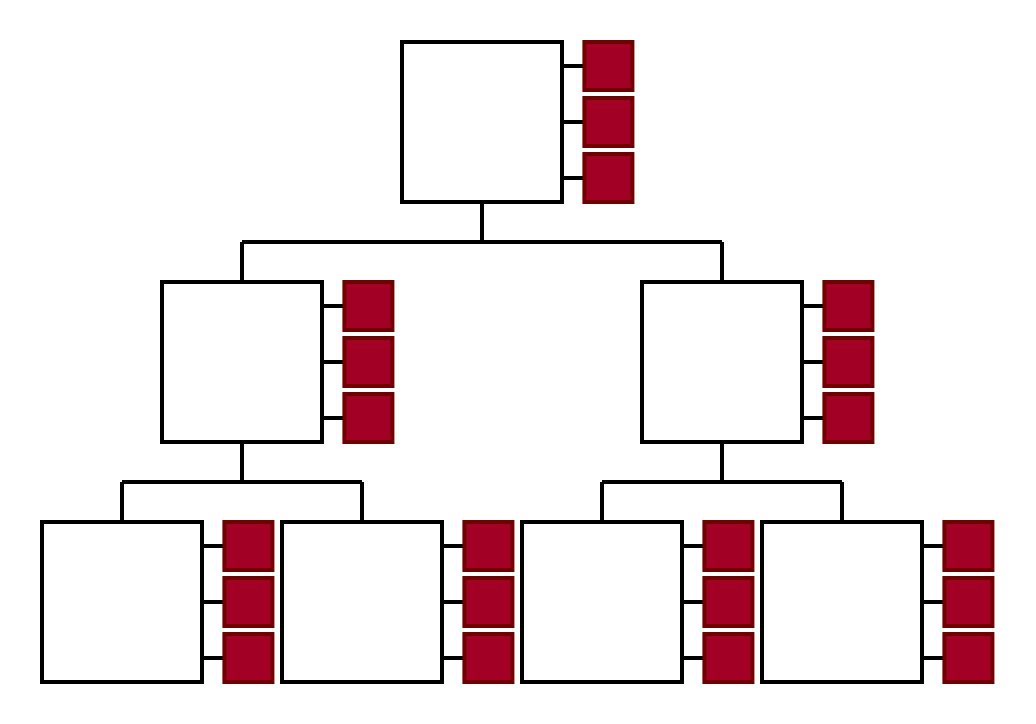

AI Systems as Tools: The paper discusses the "tool conception" of AI, which traditionally views AI systems as continuations of ICT-driven digitalization processes. While practical implementations align with this conception, the increasing generality and autonomy of modern AI systems challenge it. AI systems today can be seen less as single-purpose tools and more as complex, interactive systems integral to organizational functioning.

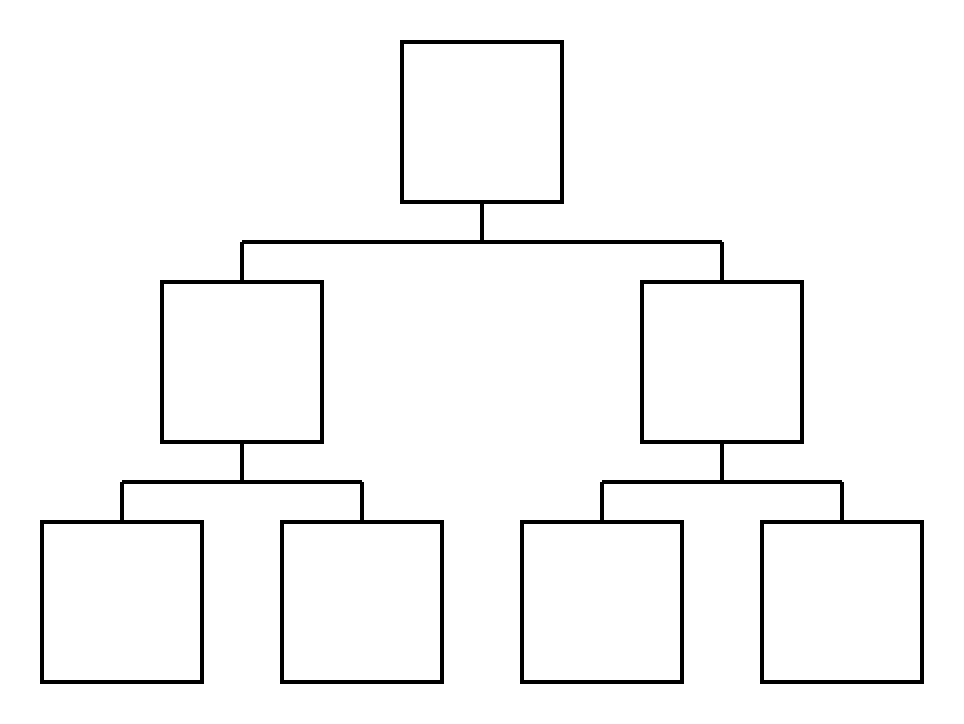

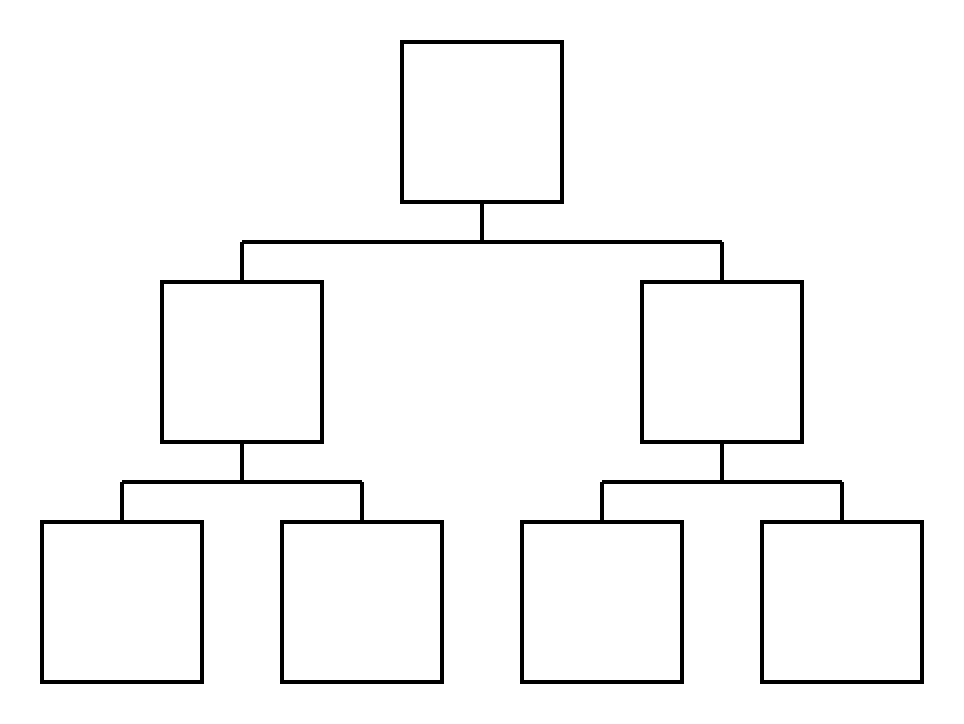

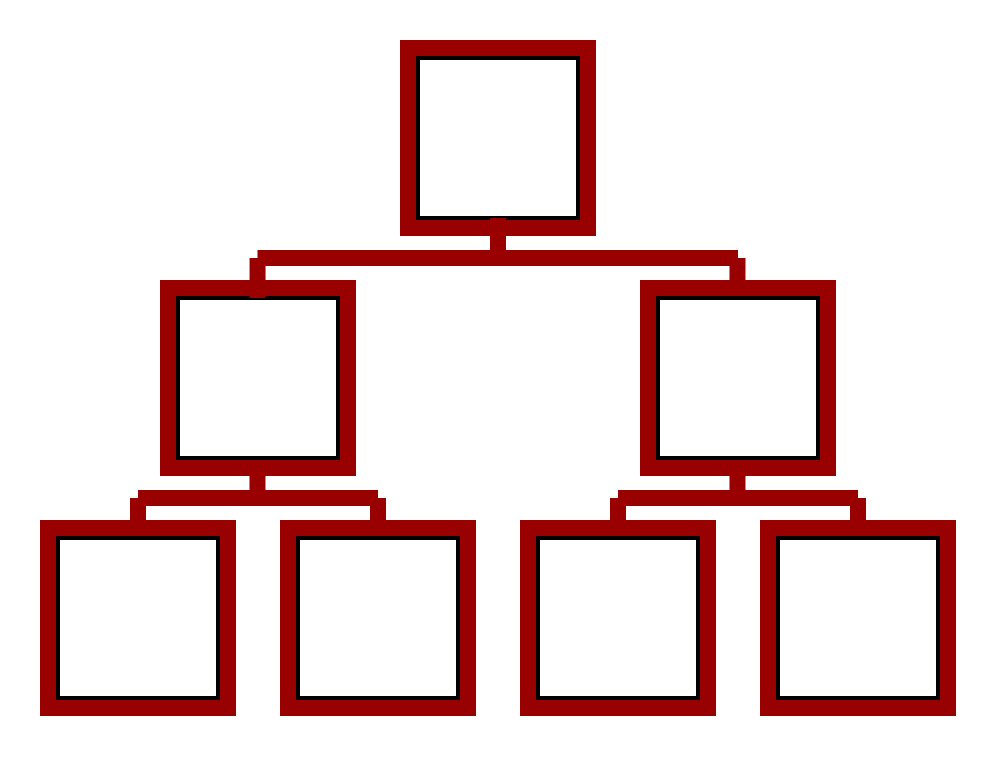

Figure 2: The ideal-type Weberian bureaucracy is a system of responsibility attribution between legitimate moral agents. The grounding of that legitimacy in democracies lies in rooting the chains of responsibility in elected representatives.

AI Systems as Moral Subjects: Whether AI systems can be moral agents is debated. The paper rejects this possibility because AI lacks fundamental attributes such as consciousness and stable self-motivation. The authors caution against attributing moral agency to AI and advocate instead for viewing AI as part of the bureaucratic infrastructure rather than as autonomous entities with moral status.

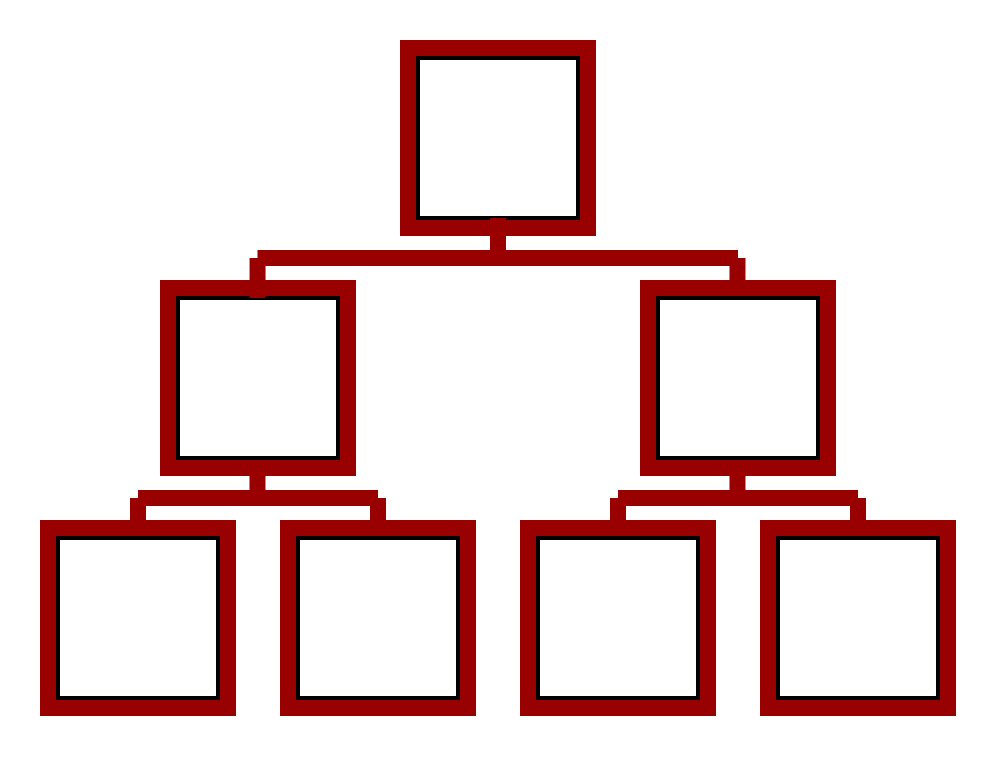

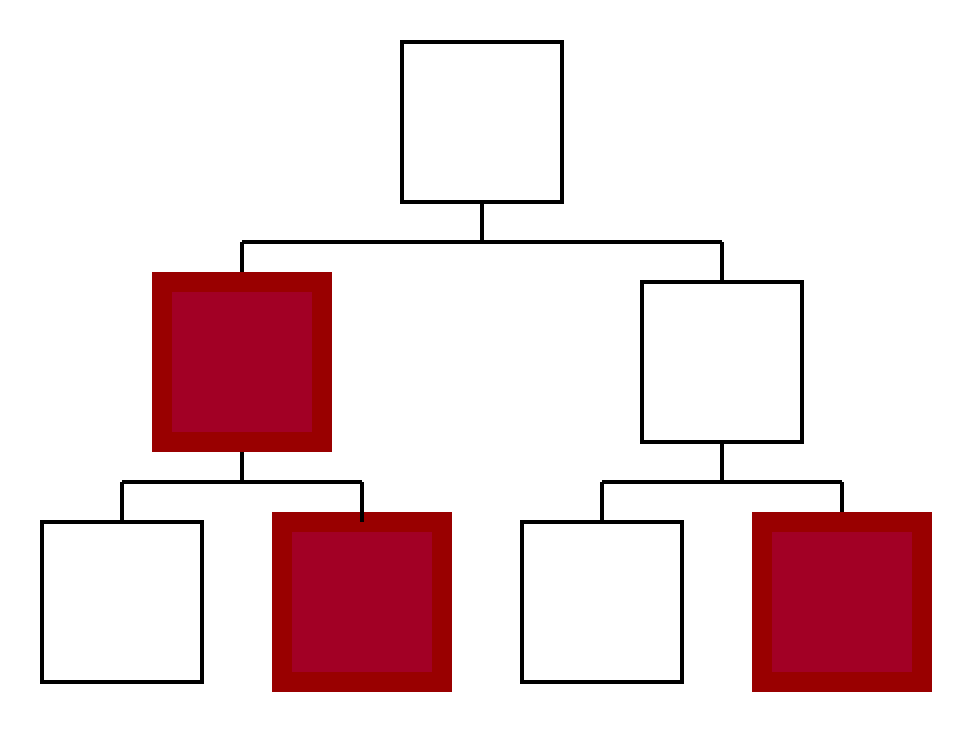

Figure 3: Illustration of AI integration in bureaucracy under the "tool conception". Humans (in white) use AI systems (in red) in purpose-bound ways, thereby retaining moral agency.

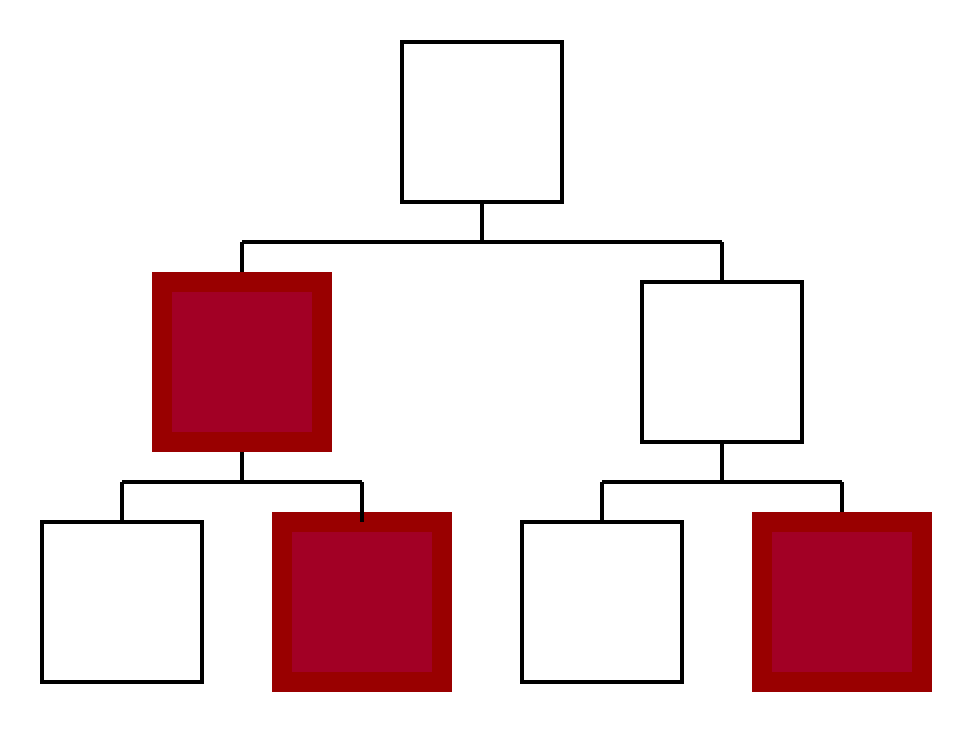

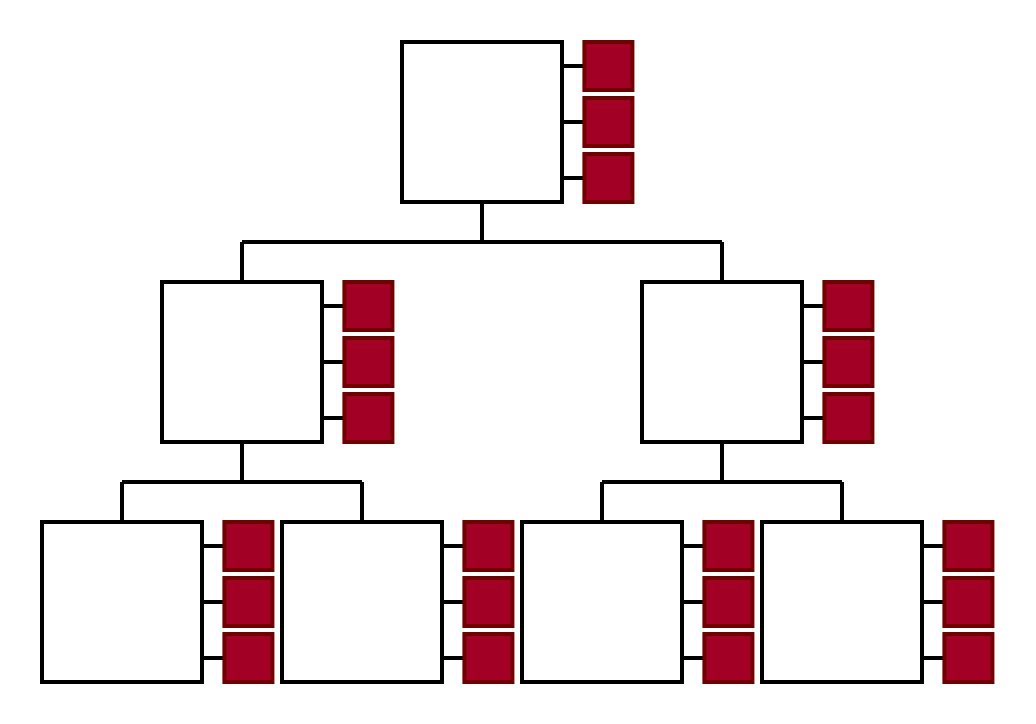

Figure 4: Illustration of AI integration in bureaucracy under the assumption that AI systems can be moral subjects. Here, AI systems can be directly integrated in structures of responsibility attribution.

AI as Augmenting Infrastructure: AI systems should be seen as augmentations to bureaucratic structures, enhancing the capabilities of human moral agents without usurping their role in responsibility allocation. AI can serve as the "muscles, sinews, and ligaments" of an organization, amplifying human agency while preserving the legitimate distribution of responsibility among human agents.

Figure 5: Our conception of AI systems as "muscles, sinews and ligaments" of a bureaucracy. AI systems increase the agency of the organization as a whole by amplifying the capacities of human moral agents — but do not negate the distribution of responsibility exclusively among the legitimate moral agents.

A Moral Agency Framework

To ensure the ethical integration of AI in bureaucracies, the authors propose a three-point framework:

- Maintain clear and just human lines of accountability: Each decision influenced by AI must be traceable to the human agents responsible for oversight and evaluation.

- Ensure humans can verify correct functioning of AI systems: Humans need to be able to validate the outputs of AI systems to maintain transparency and accountability.

- Introduce AI without jeopardizing the dual aims of legitimacy and stewardship: AI can bolster one aim without undermining the other, capturing efficiency gains while preserving democratic and stewardship principles.
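The first two points of the framework lend themselves to a simple record-keeping sketch. The code below is a minimal illustration under stated assumptions, not something the paper specifies: the `Official` and `AIAssistedDecision` types, their fields, and the example roles are all hypothetical. It records which human answers for an AI-influenced decision, walks the chain of responsibility upward, and flags decisions that have no accountable human or whose AI output no human has verified. The third point concerns the organization as a whole and is not captured by a per-decision check like this one.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Official:
    """A human moral agent; `supervisor` is the next link up the chain."""
    role: str
    supervisor: Optional["Official"] = None


@dataclass
class AIAssistedDecision:
    """Hypothetical record of one decision influenced by an AI system."""
    case_id: str
    ai_output: str
    responsible: Optional[Official] = None  # human who answers for the decision
    verified: bool = False                  # has a human checked the AI output?


def accountability_chain(decision: AIAssistedDecision) -> List[str]:
    """Roles from the responsible official up to the top of the hierarchy."""
    chain = []
    official = decision.responsible
    while official is not None:
        chain.append(official.role)
        official = official.supervisor
    return chain


def framework_violations(decision: AIAssistedDecision) -> List[str]:
    """Check points 1 and 2 of the framework for a single decision."""
    problems = []
    if decision.responsible is None:
        problems.append("no accountable human")
    if not decision.verified:
        problems.append("AI output not verified by a human")
    return problems


# Illustrative hierarchy, rooted in an elected representative.
minister = Official("minister")
head = Official("department head", supervisor=minister)
caseworker = Official("caseworker", supervisor=head)

ok = AIAssistedDecision("case-001", "approve", responsible=caseworker, verified=True)
sink = AIAssistedDecision("case-002", "deny")  # nobody answers for this one

print(accountability_chain(ok))    # ['caseworker', 'department head', 'minister']
print(framework_violations(ok))    # []
print(framework_violations(sink))  # both checks fail
```

The second record is a toy version of an "ethics sink": an AI output that produced a decision with no human attached to it. A real system would also need to guard against cycles in the supervisor links, which this sketch assumes do not occur.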

Conclusion

While AI integration poses risks of undermining bureaucratic functionality, these risks are not insurmountable. By focusing on design decisions that prioritize transparency and accountability, the responsible integration of AI can not only preserve but enhance the legitimacy and capacity of PSOs. The moral agency framework proposed in the paper serves as a guide, ensuring AI systems augment rather than erode bureaucratic structures, thus upholding both the efficiency and ethical integrity of government operations.