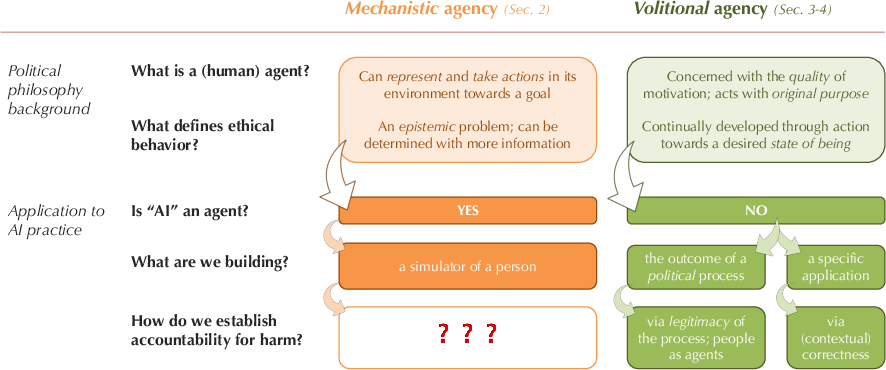

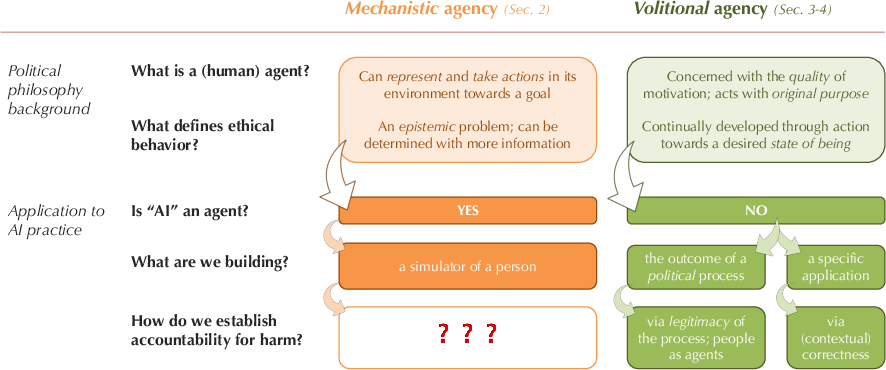

- The paper critiques the anthropomorphic analysis of AI by contrasting mechanistic agency with volitional agency in ethical considerations.

- It demonstrates that mechanistic models, despite their functional metrics, fall short in assigning genuine moral accountability to AI systems.

- The discussion advocates for alternative frameworks that emphasize human oversight and political governance to manage AI's influence responsibly.

Exploring AI Agency and Accountability: Mechanistic vs. Volitional Perspectives

Introduction

Jessica Dai's paper "Beyond Personhood: Agency, Accountability, and the Limits of Anthropomorphic Ethical Analysis" (2404.13861) examines the conceptual debates surrounding AI agency and ethical accountability. Drawing on political science and philosophy, the paper critiques the prevalent assumption that AI systems can be treated as human-like agents for the purposes of ethical analysis. Dai distinguishes two views of agency, the mechanistic and the volitional, and traces their implications for the ethical standards and accountability mechanisms that can be applied to AI systems.

Mechanistic Agency in AI Research

In AI research, the mechanistic view of agency is the default. AI systems are typically described as "agents" on the basis of their functional capabilities, as in reinforcement learning. The mechanistic view defines agency primarily as the capacity to act, informed by representational states. On this view, AI agents act on pre-defined criteria and are evaluated quantitatively on metrics such as planning ability or performance on standardized tests [bubeck2023sparks]. The approach is internally coherent, but it runs into ethical difficulties when systems are tasked with simulating moral agency using datasets that emulate human dilemmas [nie2023moca]. Such paradigms replicate human moral reasoning and thereby converge on treating AI systems as simulators of ethically ideal humans, a notion that is problematic because the systems lack genuinely human traits such as intrinsic motivation.

Figure 1: A summary of the core arguments made in this paper. There are two core views of human agency; the mechanistic view is commonly assumed by AI research, yet leads mostly to a dead-end for establishing accountability.

Ethical Implications and Mechanistic Limitations

Despite its prevalence, the mechanistic view struggles to support moral accountability. To assess whether AI systems can be held responsible, Dai draws on a philosophical account in which a responsible agent must satisfy three conditions: normative significance, judgmental capacity, and relevant control [list2011group]. Even if AI systems can simulate ethical judgment, their capacity to exert control or bear responsibility is limited. AI systems understood mechanistically therefore cannot credibly be deemed moral agents. The mechanistic approach also implies an ideal of modeling perfect ethical behavior, a pursuit that is philosophically inadequate because it reduces ethics to epistemic problem-solving rather than moral reasoning.

Volitional Agency: An Alternative Framework

The volitional view treats agency as arising from intrinsic desires and motivations to act in accordance with self-defined moral beliefs [taylor1985philosophical]. On this view, AI systems do not qualify as volitional agents because they lack internal motivations and an original purpose of their own [bai2022constitutional]. Unlike the mechanistic view, the volitional view treats ethics as an active practice: a continuous choice to align one's actions with self-ascribed morals, which lies beyond the functional capacities of AI systems. Under this view, AI systems cannot independently justify ethical actions or shoulder moral responsibility.

Alternatives to AI as Moral Agents

To address the limitations of treating AI systems as moral agents, Dai proposes alternative framings. One is specificity about the application context, which allows clear standards of correctness to be set without attributing moral agency to AI [antoniak2023designing]. Another is to view AI systems as outcomes of political processes, which shifts accountability to the creators and users of AI systems rather than the systems themselves. This political lens emphasizes participatory governance and consensus in AI development, with continuous engagement and legitimacy deriving from collective human values [delgado2023participatory].

Conclusion

Jessica Dai's "Beyond Personhood" reframes the conventional discourse on AI ethics by examining the philosophical underpinnings of agency. Treating AI systems as non-agents within moral frameworks allows for more practical governance models that prioritize human agency and decision-making. The paper encourages a reevaluation of current AI practices, urging stakeholders to regard AI systems as politically shaped artifacts rather than imperfect human simulators, thereby redefining accountability in AI ethics.