- The paper introduces ArtiPoint, which uses deep point tracking and factor graph optimization to estimate articulated object models in dynamic environments.

- The methodology leverages ego-centric RGB-D video and a novel Arti4D dataset to overcome limitations of fixed-camera setups in practical, cluttered scenes.

- Experimental results show lower angular and translational errors than existing approaches for both revolute and prismatic joint estimation.

Articulated Object Estimation in the Wild

The paper "Articulated Object Estimation in the Wild" introduces a novel framework, ArtiPoint, capable of estimating articulated object models in dynamic environments from ego-centric RGB-D inputs under conditions of partial observability. This method addresses the limitations of previous approaches that are constrained to fixed camera positions and isolated objects, often failing in real-world, cluttered scenes.

Introduction

Robotic manipulation involves moving objects to achieve desired goals, and the task becomes considerably harder when the objects are articulated. Previous solutions have predominantly assumed static scenes observed by fixed cameras, which limits their applicability in practical settings such as homes or workplaces, where conditions are dynamic. ArtiPoint addresses this by combining deep point tracking with factor graph optimization. This allows robust estimation from raw video input without relying on learned articulation models that may overfit.

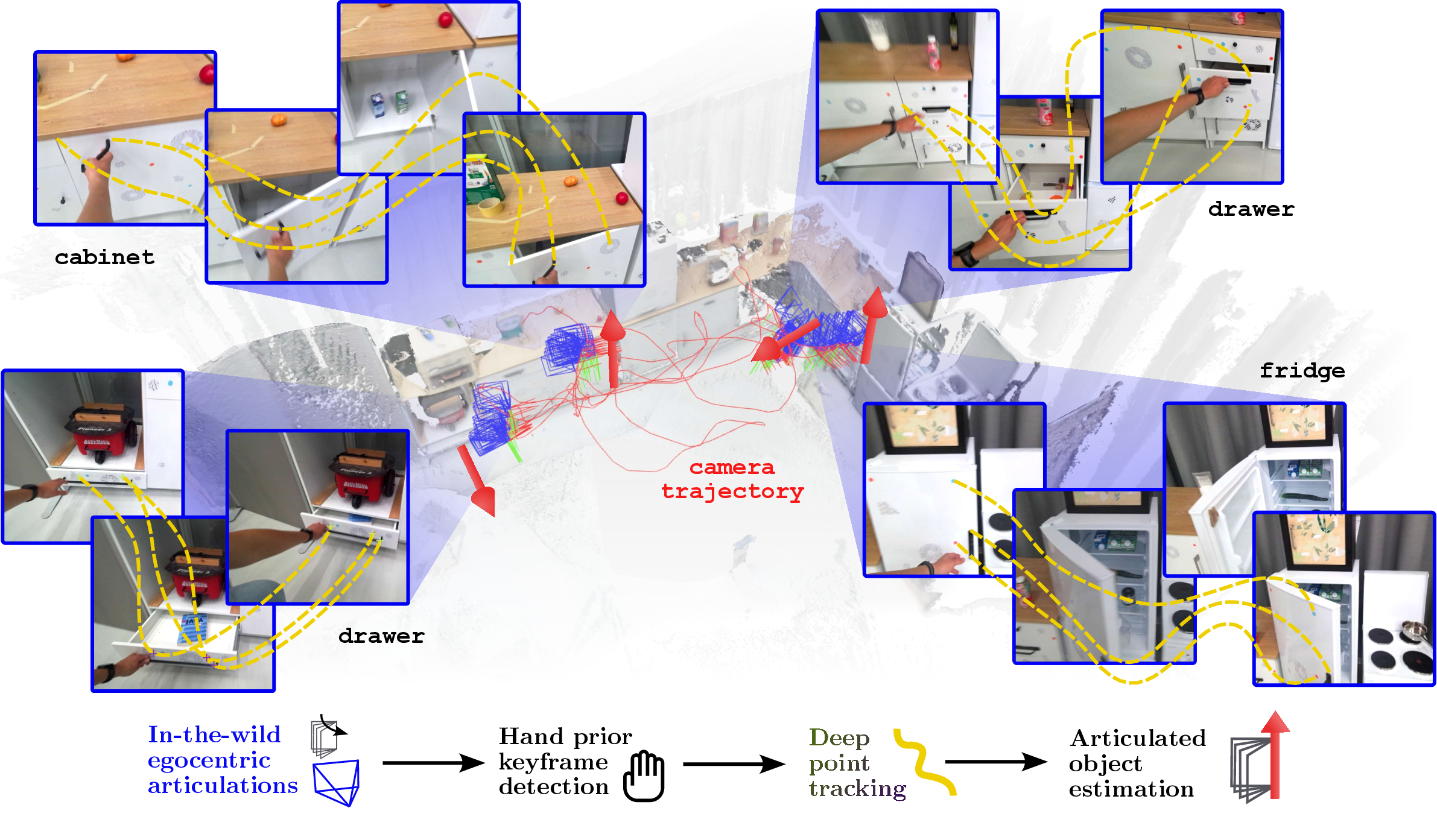

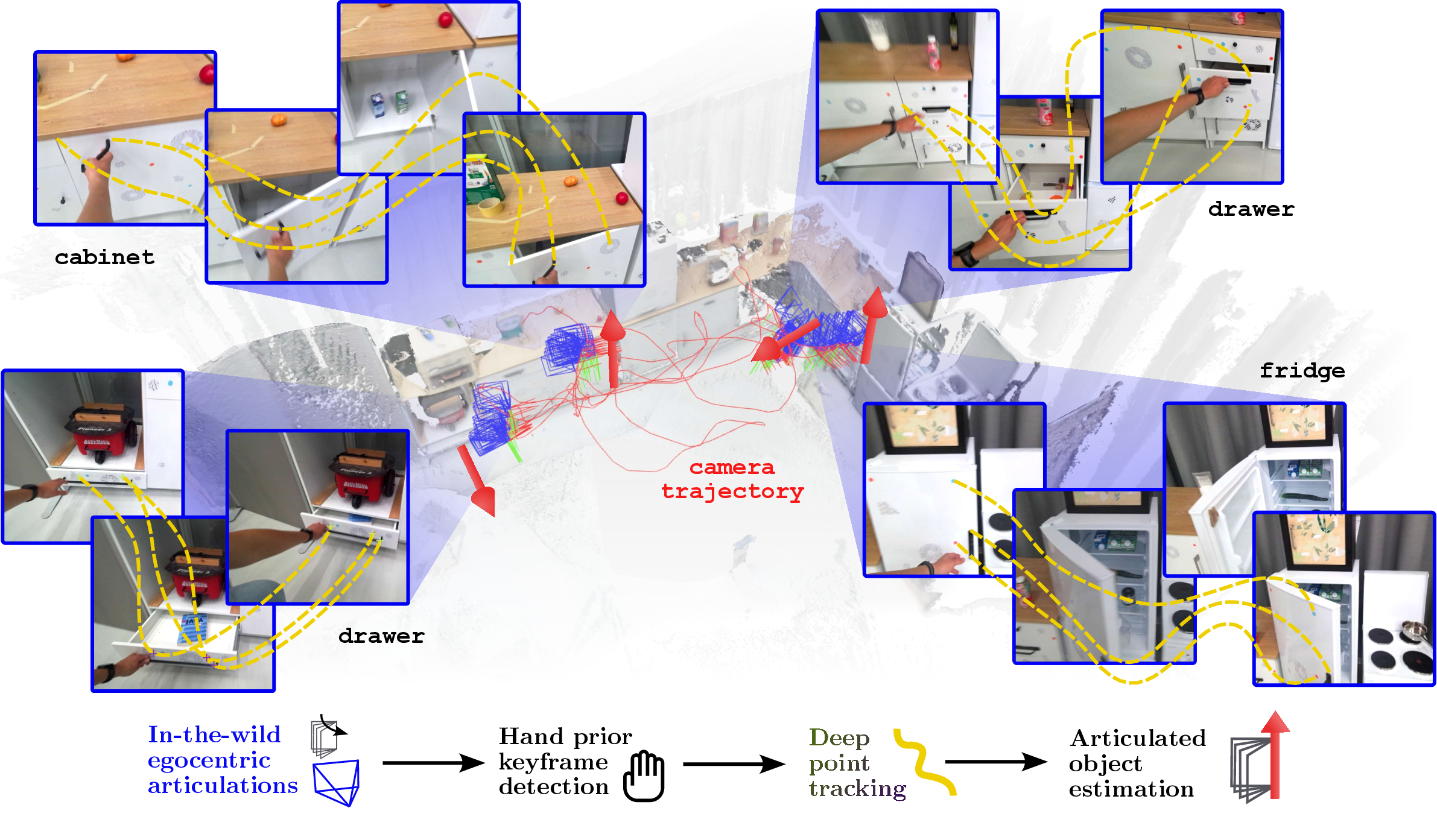

Figure 1: We present ArtiPoint, our novel approach to articulated object estimation in the wild. ArtiPoint makes use of deep point tracking and factor graph optimization.

Methodology

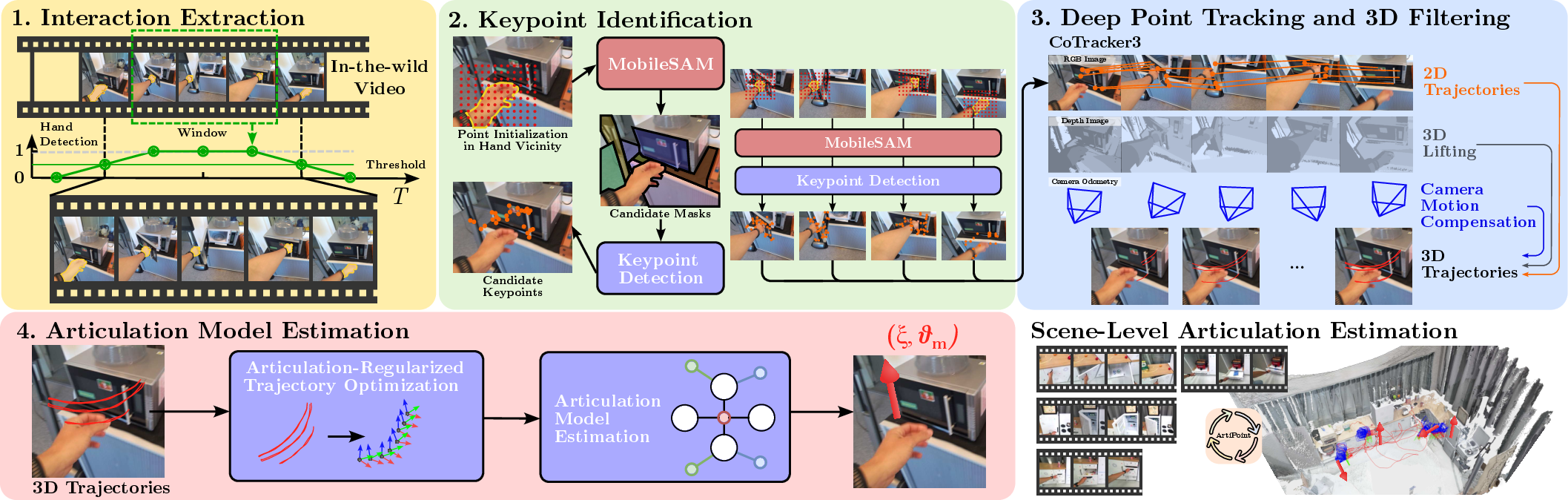

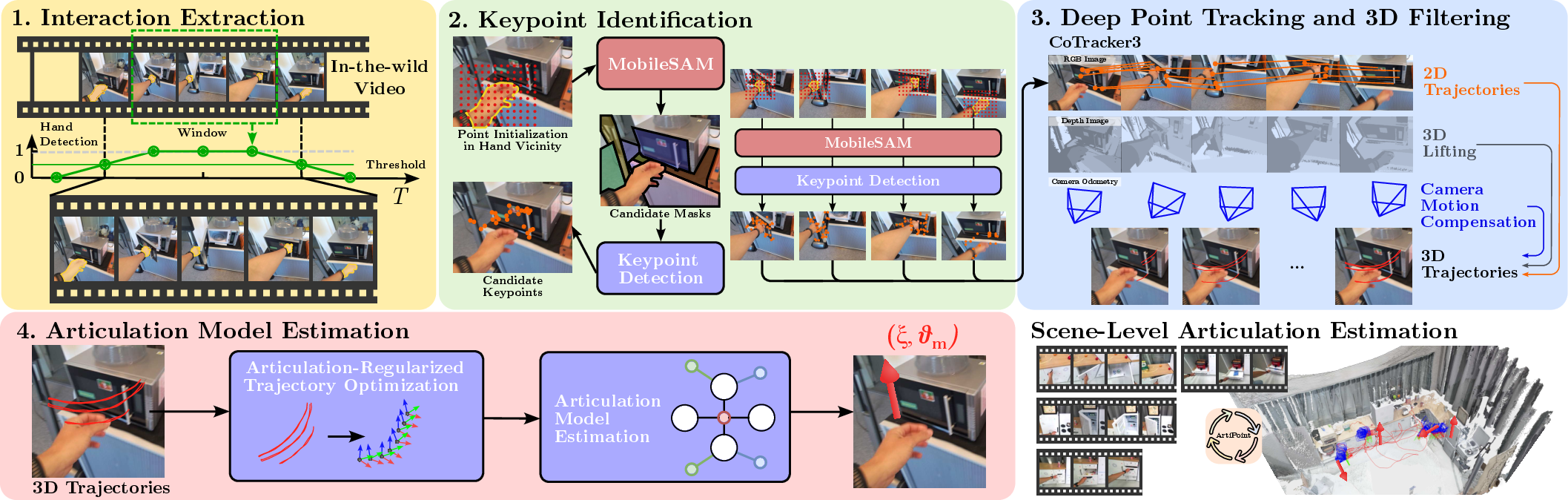

ArtiPoint's methodology is centered around leveraging human interactions with objects as cues for estimating articulated motions. This is divided into four stages: interaction detection, point tracking, 3D trajectory lifting and filtering, and articulation model estimation.

- Interaction Interval Extraction: Hand masks are extracted from the ego-centric video feed to identify segments of interaction. A moving average smooths the per-frame hand visibility signal, yielding time intervals that correspond to object interactions.

- Point Tracking: CoTracker3, a deep point tracker, tracks points around the detected hands, capturing the object's motion trajectory during each interaction.

- 3D Trajectory Estimation and Filtering: The extracted 2D trajectories are lifted to 3D using depth readings, and further local transformations are applied to compensate for camera motion. Filtering removes static points and occluded tracks, improving the accuracy of motion estimation.

- Articulation Model Estimation: Factor graph formulations are applied to the trajectory data, flexibly modeling complex joint types, such as revolute and prismatic joints, in 6D space by leveraging SE(3) transformations.
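
The first and third stages above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names, the window size, the visibility threshold, the pinhole intrinsics `K`, the camera-to-world pose `T_world_cam`, and the 5 cm motion threshold are all assumptions made for illustration. The point tracker itself (CoTracker3) is left out, so the sketch assumes 2D tracks are already available.

```python
import numpy as np

def interaction_intervals(hand_visible, window=5, threshold=0.5):
    """Return [start, end) frame ranges where smoothed hand visibility is high."""
    v = np.asarray(hand_visible, dtype=float)
    # A moving average suppresses single-frame detector flicker.
    smoothed = np.convolve(v, np.ones(window) / window, mode="same")
    active = smoothed > threshold
    # Pad with False so every active run has a rising and a falling edge.
    padded = np.concatenate(([False], active, [False]))
    edges = np.flatnonzero(padded[1:] != padded[:-1])
    return [(int(s), int(e)) for s, e in zip(edges[::2], edges[1::2])]

def lift_to_world(uv, depth, K, T_world_cam):
    """Back-project pixels (N, 2) with depths (N,) and map them to the world frame."""
    uv = np.asarray(uv, dtype=float)
    depth = np.asarray(depth, dtype=float)
    fx, fy, cx, cy = K[0, 0], K[1, 1], K[0, 2], K[1, 2]
    # Pinhole model: pixel offsets from the principal point scale with depth.
    x = (uv[:, 0] - cx) / fx * depth
    y = (uv[:, 1] - cy) / fy * depth
    pts_cam = np.stack([x, y, depth], axis=1)
    # Applying the (assumed known) camera pose removes the ego-motion.
    return pts_cam @ T_world_cam[:3, :3].T + T_world_cam[:3, 3]

def moving_mask(tracks_world, min_extent=0.05):
    """tracks_world: (N, T, 3). True for tracks whose spatial extent exceeds min_extent."""
    span = tracks_world.max(axis=1) - tracks_world.min(axis=1)
    return np.linalg.norm(span, axis=1) > min_extent
```

Expressing the tracks in a common world frame before filtering is what makes the static-point test meaningful: in the camera frame, every point appears to move whenever the camera does.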

Figure 2: Overview of the method: Interaction segments are identified and point trajectories are extracted and processed to estimate articulation models.
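
To give a feel for the final stage, the sketch below estimates a joint from two snapshots of the same tracked 3D points. It deliberately swaps the factor graph optimization described in the summary for a much simpler closed-form stand-in: a Kabsch rigid alignment followed by reading the rotation axis off the resulting SE(3) transform. The function names and the 5 degree threshold separating prismatic from revolute motion are assumptions, and the sketch presumes the points actually moved and that the rotation is well below 180 degrees.

```python
import numpy as np

def rigid_transform(P, Q):
    """Least-squares R, t such that Q ~= P @ R.T + t (Kabsch algorithm)."""
    cp, cq = P.mean(axis=0), Q.mean(axis=0)
    H = (P - cp).T @ (Q - cq)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, cq - R @ cp

def estimate_joint(P, Q, min_angle_deg=5.0):
    """Classify the motion from P to Q and return (type, axis direction, point on axis)."""
    R, t = rigid_transform(P, Q)
    angle = np.arccos(np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0))
    if np.degrees(angle) < min_angle_deg:
        # Negligible rotation: treat as sliding along the translation direction.
        return "prismatic", t / np.linalg.norm(t), None
    # The rotation axis comes from the skew-symmetric part of R.
    axis = np.array([R[2, 1] - R[1, 2],
                     R[0, 2] - R[2, 0],
                     R[1, 0] - R[0, 1]]) / (2.0 * np.sin(angle))
    # A point c on the axis satisfies (I - R) c = t. The system is rank 2,
    # so take the minimum-norm solution (the axis point closest to the origin).
    point = np.linalg.lstsq(np.eye(3) - R, t, rcond=1e-6)[0]
    return "revolute", axis, point
```

A two-frame estimate like this is sensitive to tracking noise and to short motion arcs, which is one reason to prefer an optimization over the full trajectory, as the method described above does.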

Dataset: Arti4D

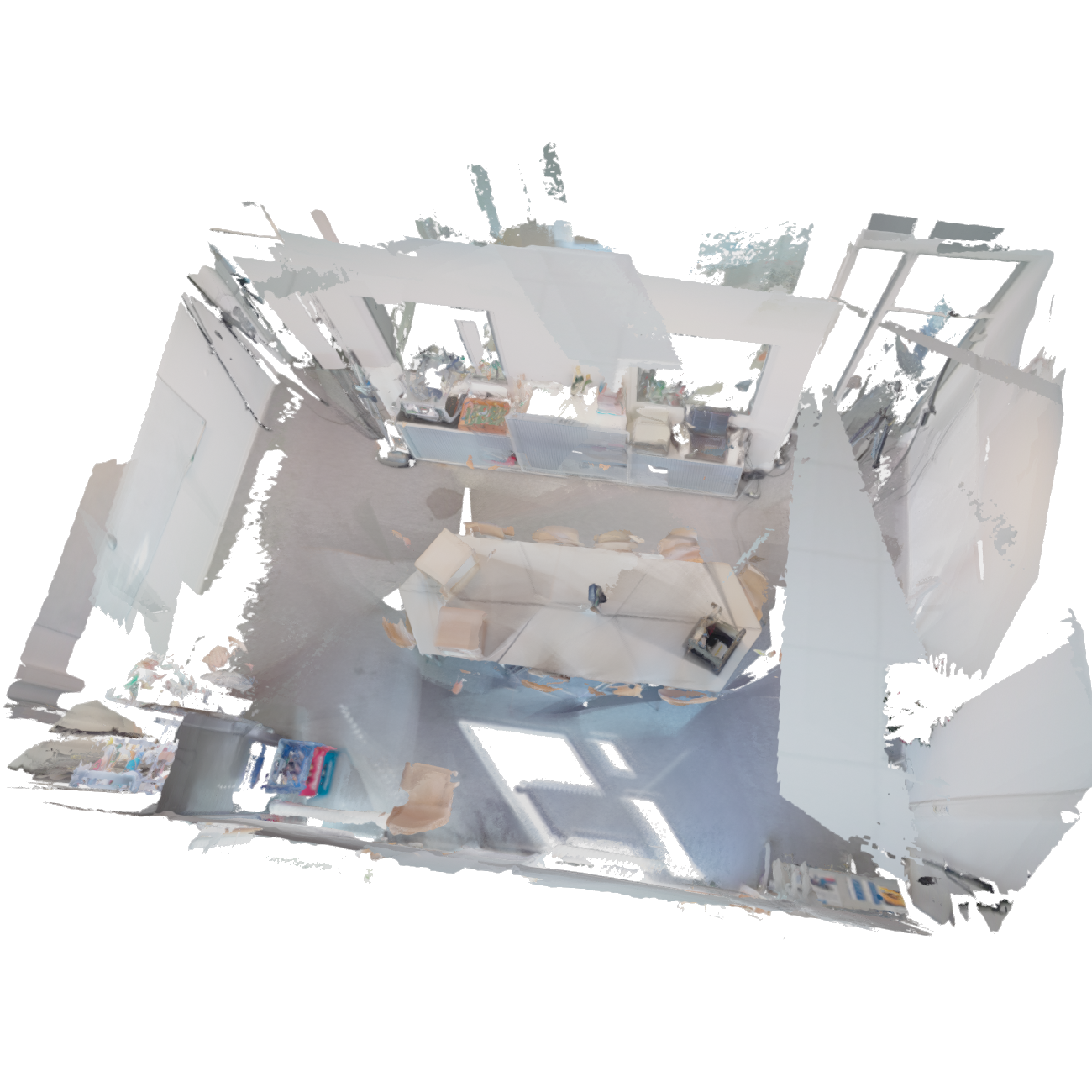

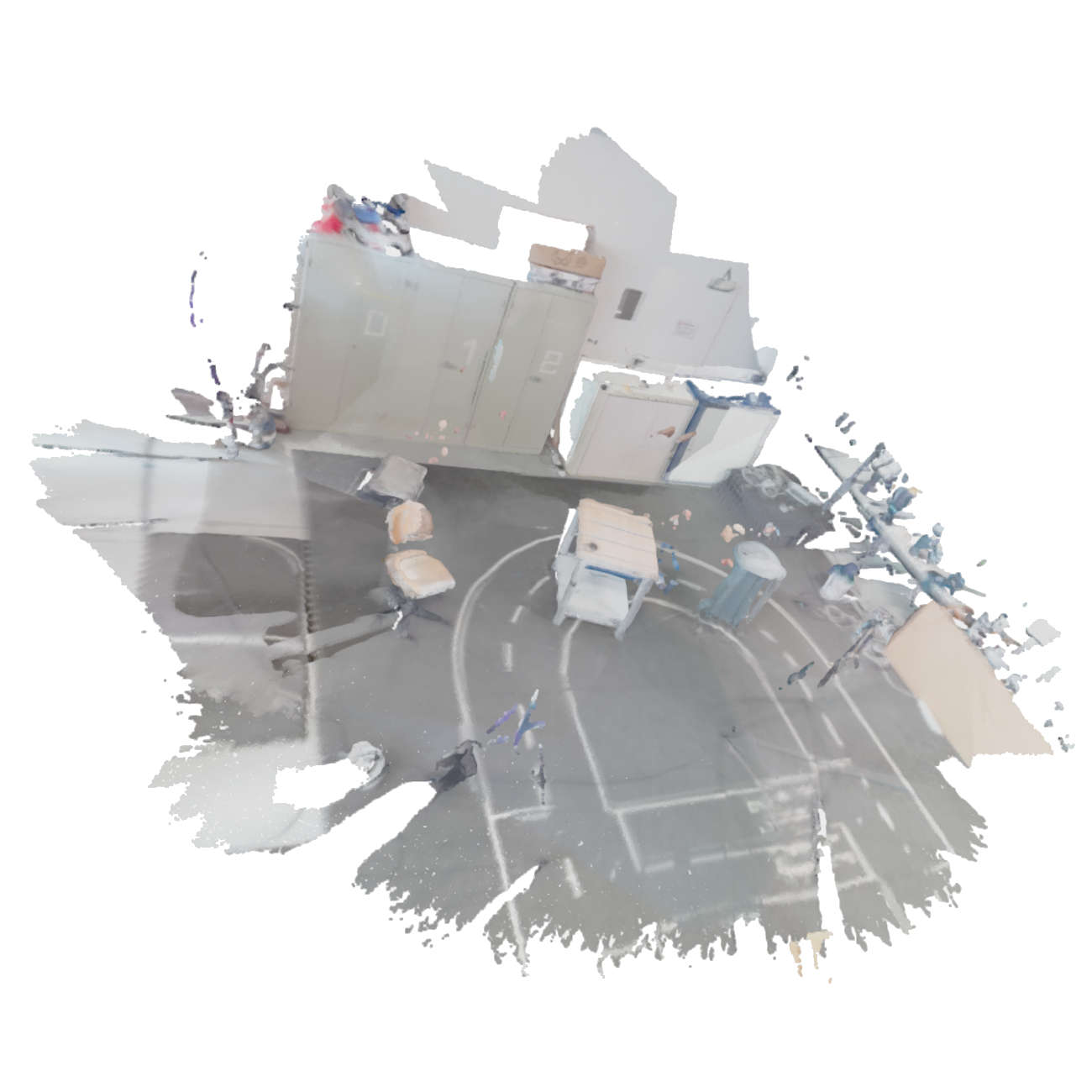

Arti4D, a dataset recorded with an ego-centric camera, is presented as the first real-world, scene-level dataset of annotated interactions with articulated objects. It includes interaction windows, axis labels, and ground-truth camera poses, offering a comprehensive benchmark for future research. Arti4D covers a range of environments, from kitchen scenes to robotics labs, and captures diverse and complex interactions under dynamic camera movement and real-world scene clutter.

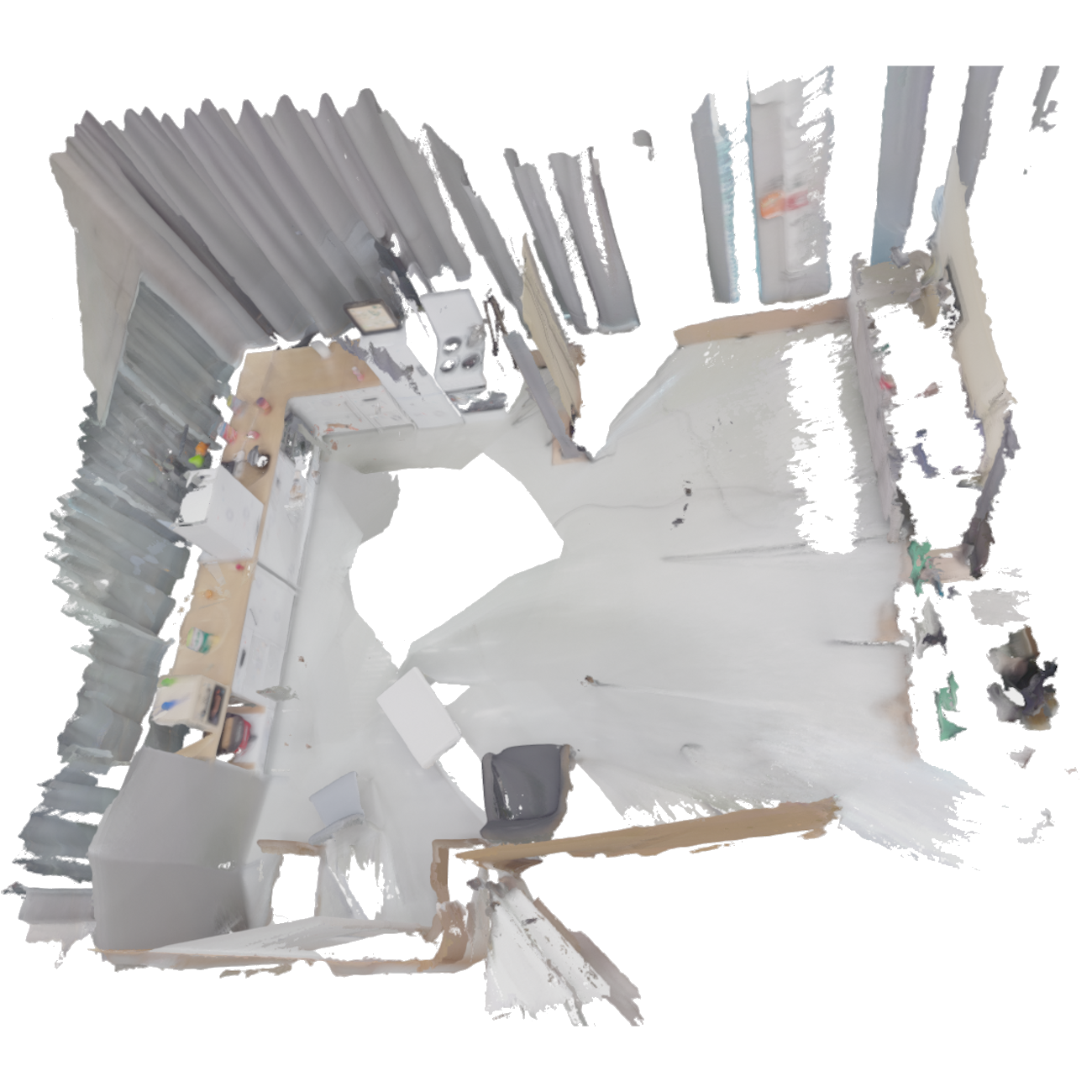

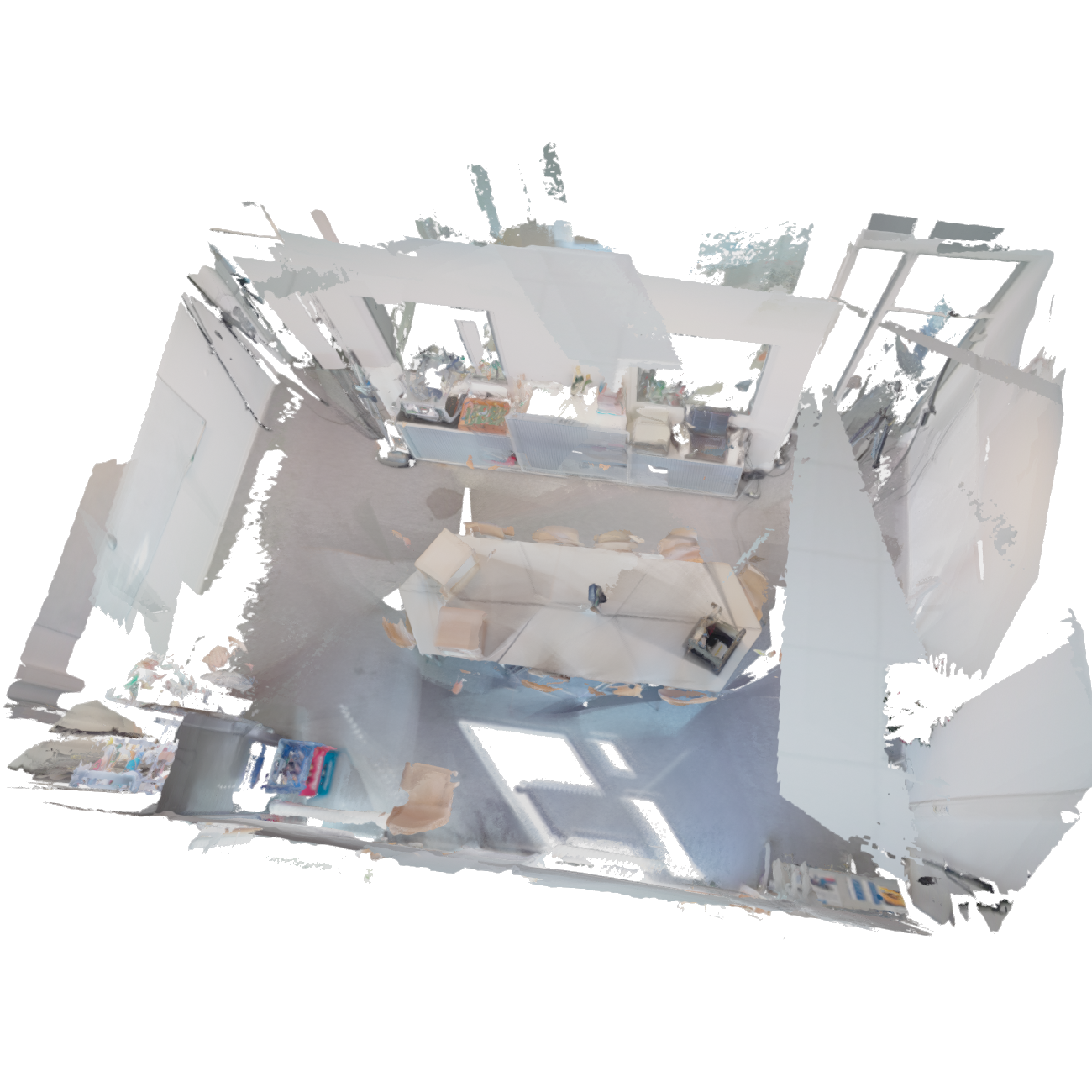

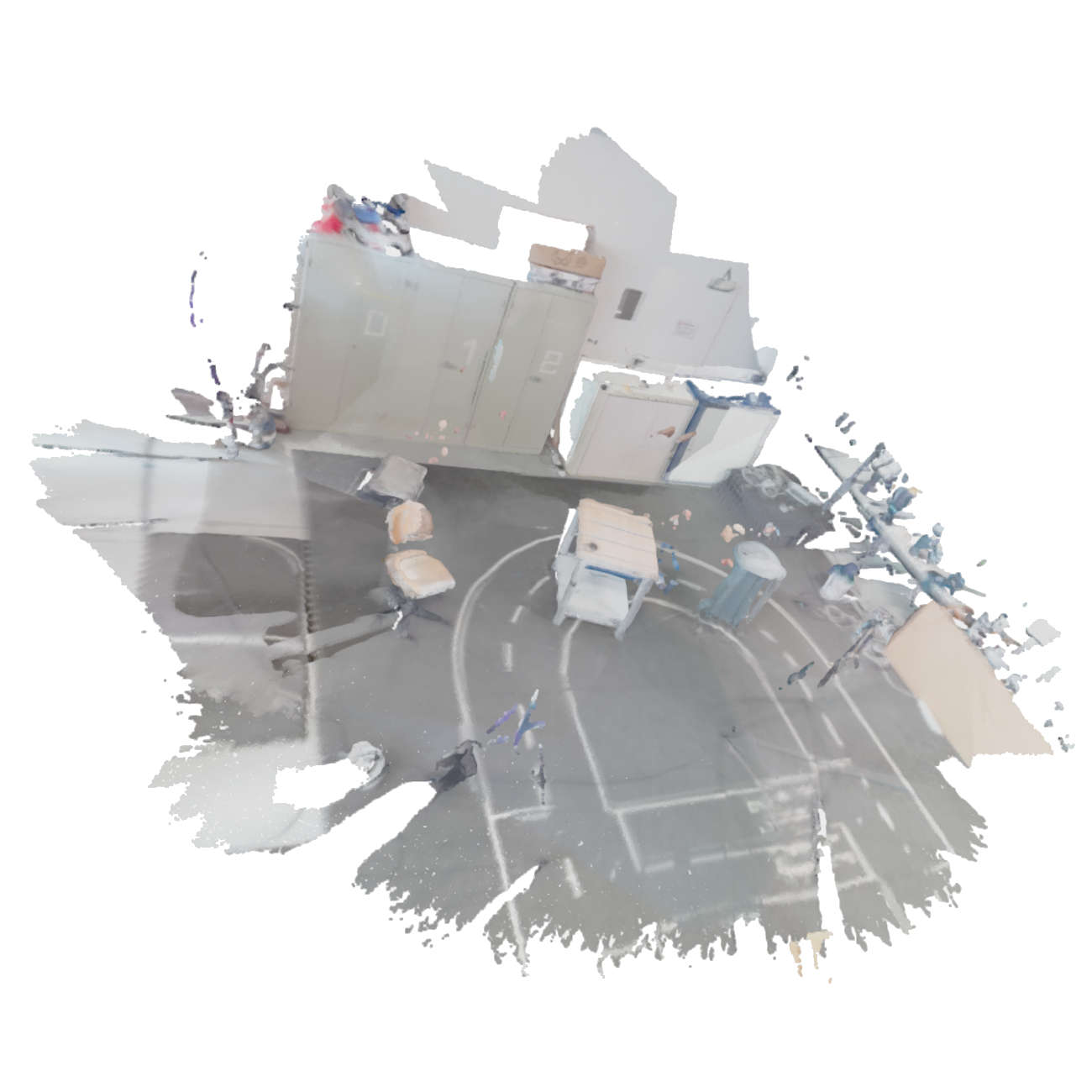

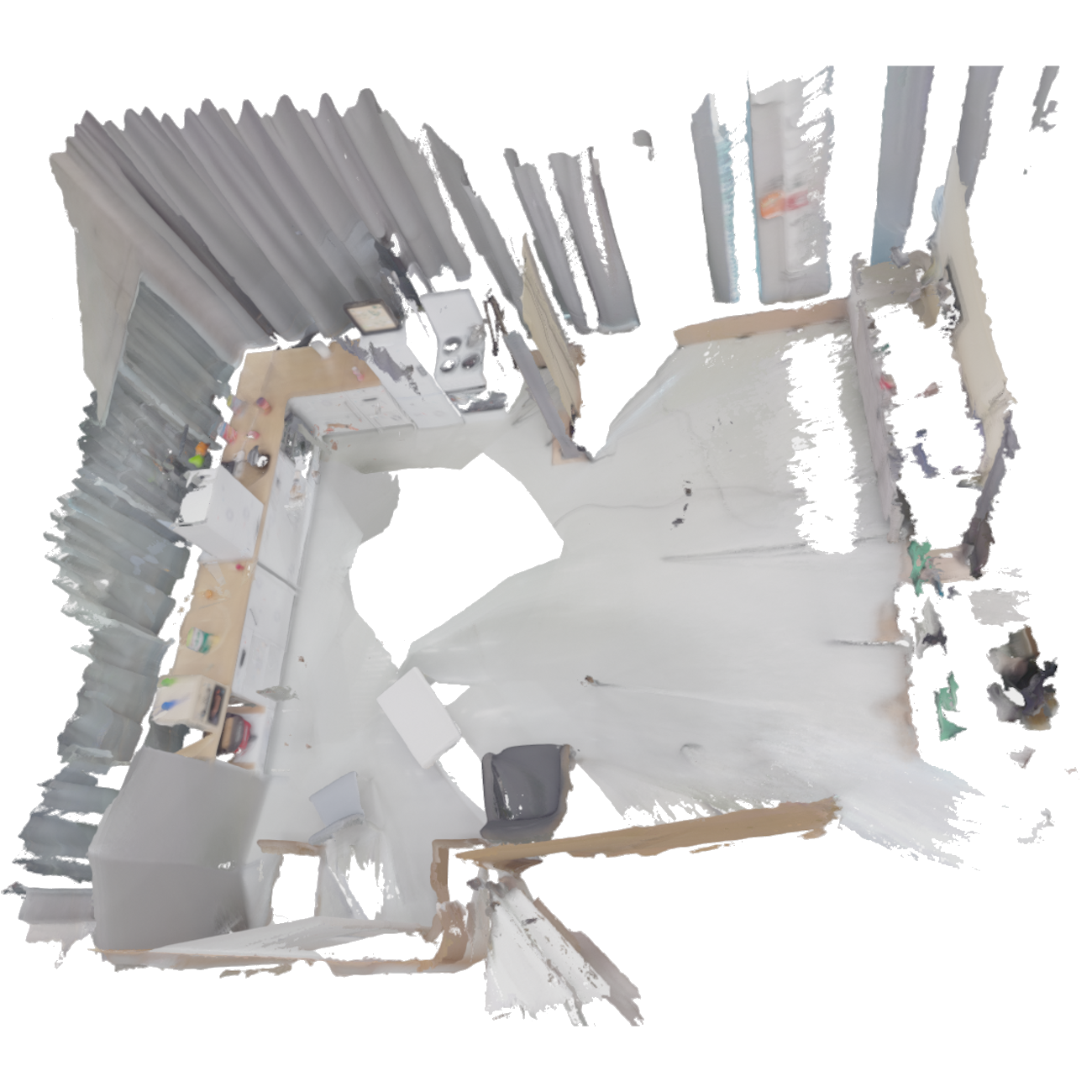

Figure 3: Reconstructed scenes of the four Arti4D environments.

Experimental Results

The experimental evaluation demonstrates that ArtiPoint outperforms existing methods, including both classical factor graph solutions and contemporary deep learning models. Compared to alternatives like Ditto and ArtGS, ArtiPoint shows lower angular and translational errors across both revolute and prismatic joints.

Quantitatively, ArtiPoint achieves the lowest errors and the highest joint-type prediction accuracy across prismatic and revolute joints. Running ArtiPoint with realistic camera odometry further indicates that it remains stable in unpredictable environments.
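
For readers unfamiliar with these metrics, the sketch below shows one common way to compute angular and translational axis errors. The exact definitions used in the paper may differ; the function names and the choice of line-to-line distance as the translational error are assumptions made for illustration.

```python
import numpy as np

def angular_error_deg(a, b):
    """Angle in degrees between two axis directions, ignoring their sign."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    # abs() makes the error invariant to flipping either axis.
    return float(np.degrees(np.arccos(np.clip(abs(a @ b), 0.0, 1.0))))

def axis_distance(p1, a, p2, b, eps=1e-8):
    """Shortest distance between the lines p1 + s*a and p2 + u*b."""
    p1, p2 = np.asarray(p1, dtype=float), np.asarray(p2, dtype=float)
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    n = np.cross(a, b)
    d = p2 - p1
    if np.linalg.norm(n) < eps:
        # Parallel lines: perpendicular offset of d from the shared direction.
        return float(np.linalg.norm(np.cross(d, a)))
    # Skew lines: project the offset onto the common normal.
    return float(abs(d @ n) / np.linalg.norm(n))
```

The translational error only applies to revolute joints, where the position of the axis matters; for a prismatic joint, only the direction of motion is defined, so the angular error alone is meaningful.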

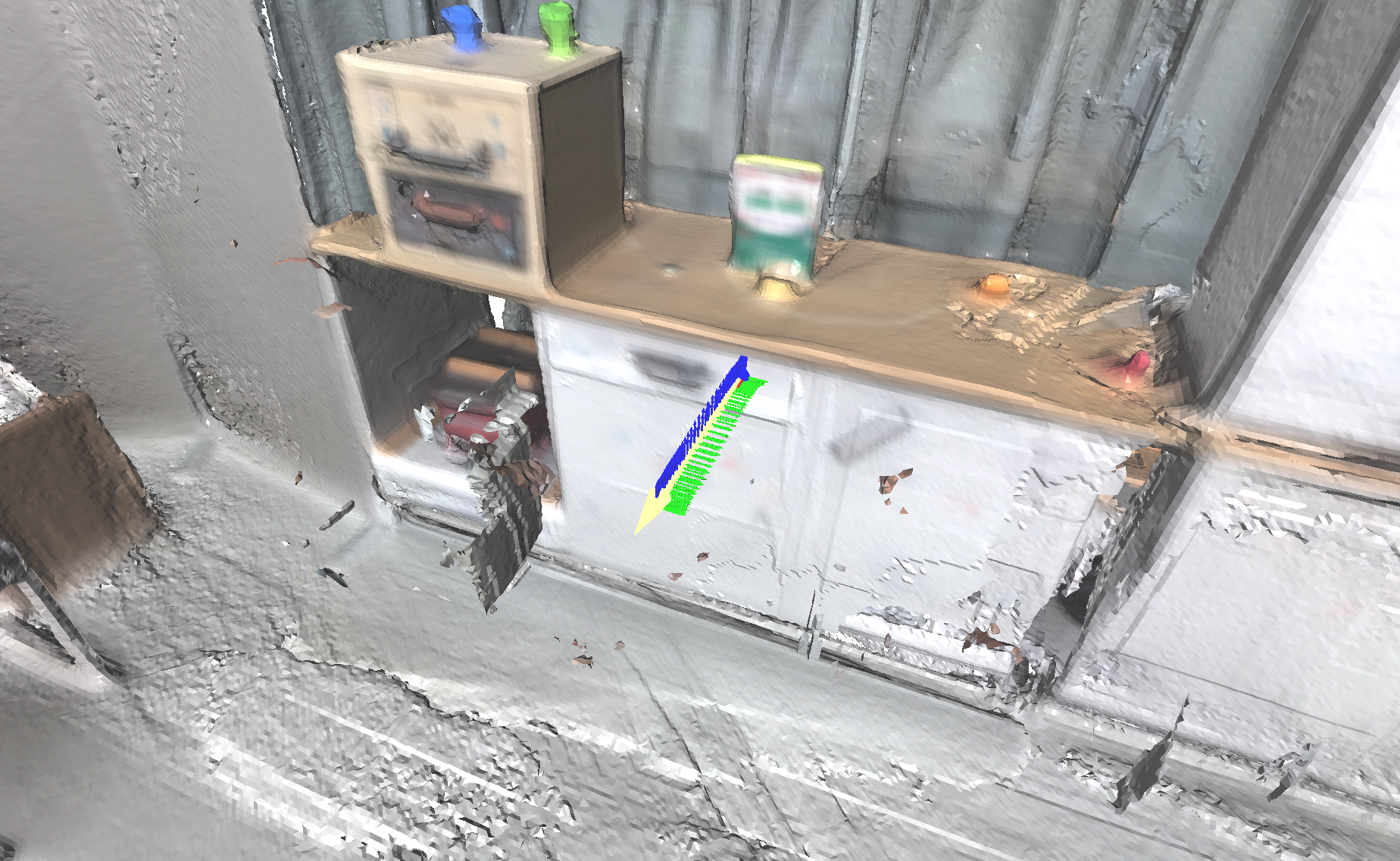

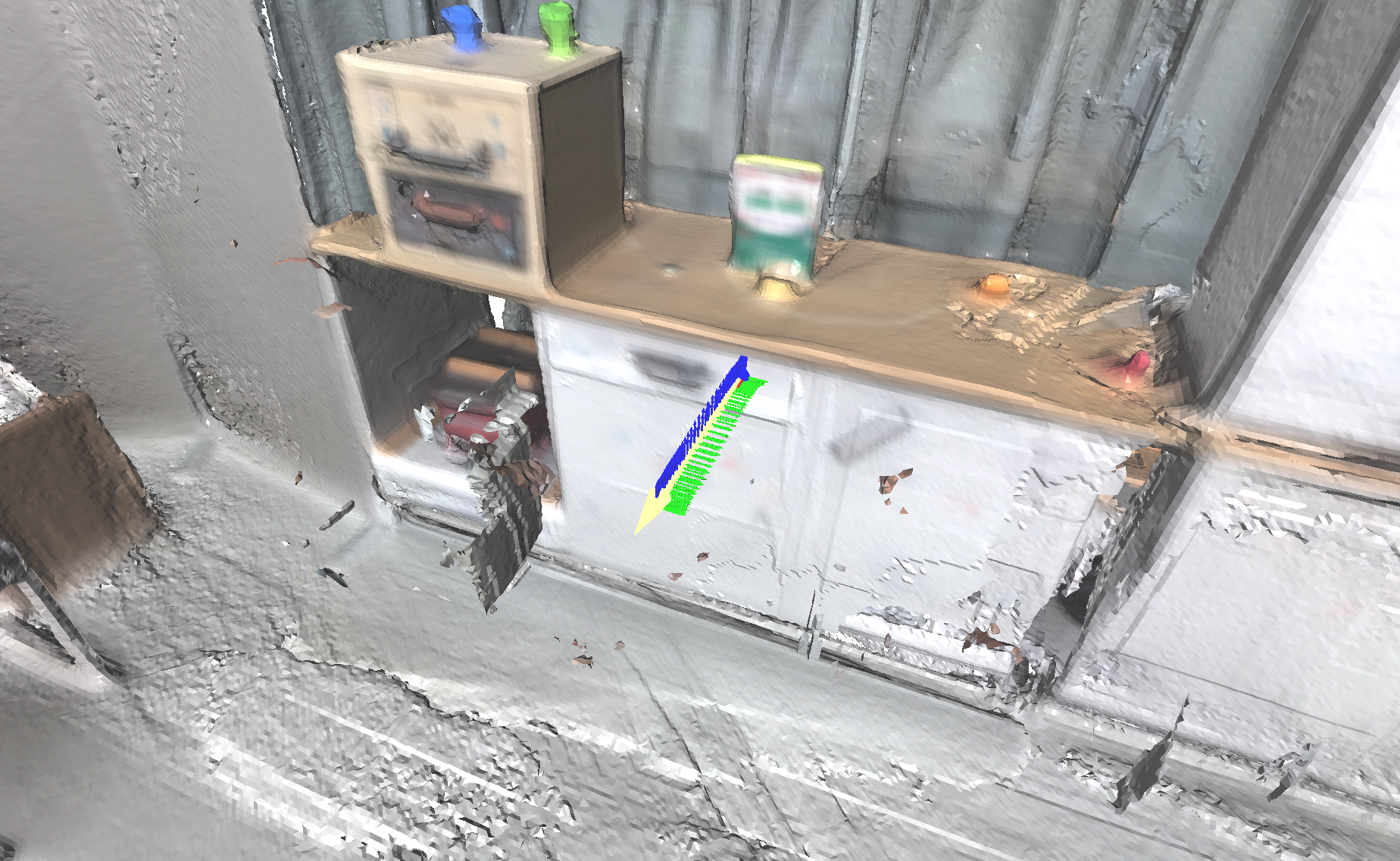

Figure 4: Qualitative results on Arti4D: Estimated joint axis and pose trajectory demonstrate ArtiPoint’s effectiveness in complex scenes.

Conclusion

ArtiPoint advances articulated object estimation by enabling systems to infer articulation from human interactions in dynamic settings. The work contributes both a method and a challenging dataset to drive future research. Such methods matter for robots that must handle articulated objects in real-world applications, and future work could refine these techniques to make autonomous systems more adaptive and resilient in diverse environments.

Figure 5: Scene-level prediction results showcasing the comprehensive output of the ArtiPoint method.

In summary, ArtiPoint offers an approach to articulated object estimation that works from ego-centric RGB-D video in cluttered, real-world scenes. Integrating it into broader robotic platforms is a promising direction for applications in everyday environments.