Meta-Policy Reflexion: Reusable Reflective Memory and Rule Admissibility for Resource-Efficient LLM Agent

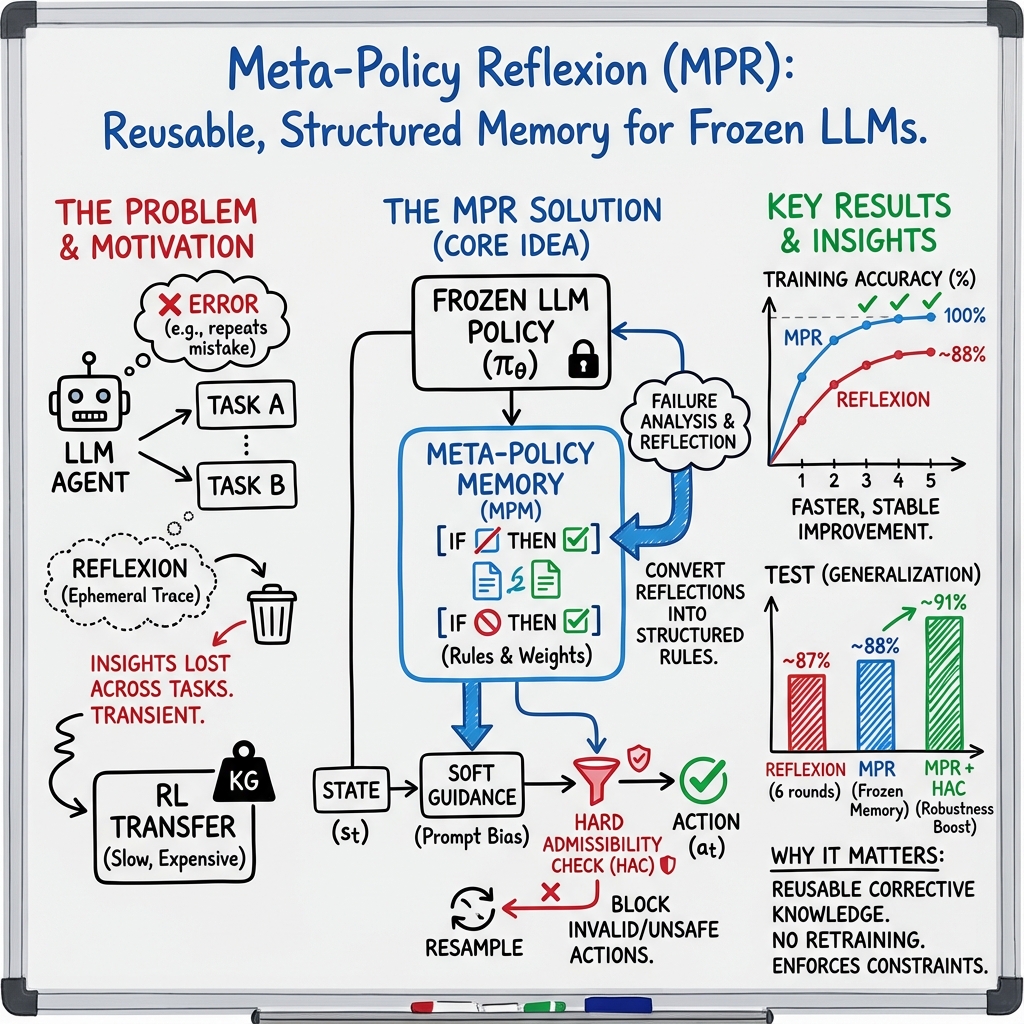

Abstract: LLM agents achieve impressive single-task performance but commonly exhibit repeated failures, inefficient exploration, and limited cross-task adaptability. Existing reflective strategies (e.g., Reflexion, ReAct) improve per-episode behavior but typically produce ephemeral, task-specific traces that are not reused across tasks. Reinforcement-learning-based alternatives can produce transferable policies but require substantial parameter updates and compute. In this work we introduce Meta-Policy Reflexion (MPR): a hybrid framework that consolidates LLM-generated reflections into a structured, predicate-like Meta-Policy Memory (MPM) and applies that memory at inference time through two complementary mechanisms: soft memory-guided decoding and hard rule admissibility checks (HAC). MPR (i) externalizes reusable corrective knowledge without model weight updates, (ii) enforces domain constraints to reduce unsafe or invalid actions, and (iii) retains the adaptability of language-based reflection. We formalize the MPM representation, present algorithms for memory update and decoding, and validate the approach in a text-based agent environment (ALFWorld), following the experimental protocol of the accompanying implementation. The reported empirical results indicate consistent gains in execution accuracy and robustness over Reflexion baselines, and rule admissibility further improves stability. We analyze the mechanisms behind these gains, discuss scalability and failure modes, and outline future directions for multimodal and multi-agent extensions.
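
To make the description of the Meta-Policy Memory more concrete, here is a minimal Python sketch, under our own assumptions, of predicate-like rules with confidence weights, a consolidation step that reinforces a lesson each time a reflection repeats it, and soft guidance that injects the top matching rules into the prompt. The rule schema (`condition`, `action`, `polarity`, `confidence`), the update rate `lr`, the prior of 0.5, and the class names are hypothetical illustrations; the paper's actual MPM format and update algorithm are not reproduced here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Rule:
    """Hypothetical predicate-like entry: IF condition THEN prefer/avoid action."""
    condition: str            # e.g. "holding(knife)" (illustrative predicate syntax)
    action: str               # e.g. "slice(apple)"
    polarity: str             # "prefer" or "avoid"
    confidence: float = 0.5   # assumed prior for a newly extracted rule


@dataclass
class MetaPolicyMemory:
    rules: Dict[Tuple[str, str, str], Rule] = field(default_factory=dict)

    def update(self, condition: str, action: str, polarity: str, lr: float = 0.2) -> Rule:
        """Consolidate one reflection: add a new rule or reinforce an existing one."""
        key = (condition, action, polarity)
        rule = self.rules.get(key)
        if rule is None:
            rule = Rule(condition, action, polarity)
            self.rules[key] = rule
        else:
            # Each repetition of the same lesson moves confidence toward 1.0.
            rule.confidence += lr * (1.0 - rule.confidence)
        return rule

    def retrieve(self, state_facts: Set[str], k: int = 3) -> List[Rule]:
        """Return the k most confident rules whose condition holds in the current state."""
        matching = [r for r in self.rules.values() if r.condition in state_facts]
        return sorted(matching, key=lambda r: r.confidence, reverse=True)[:k]

    def render_prompt(self, state_facts: Set[str], k: int = 3) -> str:
        """Soft guidance: format retrieved rules as text to prepend to the agent prompt."""
        lines = [
            f"- If {r.condition}, {r.polarity} {r.action} (confidence {r.confidence:.2f})"
            for r in self.retrieve(state_facts, k)
        ]
        return "Lessons from past episodes:\n" + "\n".join(lines) if lines else ""


mpm = MetaPolicyMemory()
mpm.update("holding(knife)", "slice(apple)", "prefer")
mpm.update("holding(knife)", "slice(apple)", "prefer")  # reinforced: 0.5 -> 0.6
mpm.update("at(fridge)", "open(fridge)", "prefer")
print(mpm.render_prompt({"holding(knife)"}))
# Lessons from past episodes:
# - If holding(knife), prefer slice(apple) (confidence 0.60)
```

Conditions are matched here by exact string membership in the current set of state facts; a fuller implementation would need variable binding and unification over predicates.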

Practical Applications

Immediate Applications

The following applications can be deployed with current LLM agent stacks by adding a Meta-Policy Memory (MPM) layer and Hard Admissibility Checks (HAC) around a frozen base model. Each item lists the sector, the use case, candidate tools/products/workflows, and the assumptions or dependencies that affect feasibility.
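
The layering described above (a frozen model proposes, HAC filters) can be illustrated with a small Python sketch. It assumes that the frozen base model has already produced a ranked list of candidate actions (possibly after memory-guided prompting) and that each hard admissibility check is a plain function returning a rejection reason or `None`. The check functions, the `state` dictionary keys, and `choose_action` are hypothetical names chosen for illustration, not an API from the paper.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical check signature: return None if the action is admissible,
# otherwise a short reason why it was rejected.
Check = Callable[[str, dict], Optional[str]]


def in_action_space(action: str, state: dict) -> Optional[str]:
    """Reject actions the environment does not currently offer."""
    if action not in state.get("valid_actions", set()):
        return "not in current action space"
    return None


def needs_confirmation(action: str, state: dict) -> Optional[str]:
    """Reject irreversible actions (here: anything starting with 'send') without confirmation."""
    if action.startswith("send") and not state.get("confirmed", False):
        return "irreversible action without confirmation"
    return None


def choose_action(
    candidates: List[str], state: dict, checks: List[Check]
) -> Tuple[Optional[str], Dict[str, List[str]]]:
    """Return the first admissible candidate and a log of rejected ones.

    `candidates` is assumed to be ranked already by the frozen model;
    this layer only filters and never changes the model's ordering.
    """
    rejected: Dict[str, List[str]] = {}
    for action in candidates:
        reasons = [r for r in (check(action, state) for check in checks) if r is not None]
        if not reasons:
            return action, rejected
        rejected[action] = reasons
    return None, rejected  # caller decides: re-prompt, escalate to a human, or abort


state = {"valid_actions": {"open drawer 1", "send email"}, "confirmed": False}
action, log = choose_action(
    ["fly to kitchen", "send email", "open drawer 1"],
    state,
    [in_action_space, needs_confirmation],
)
print(action)  # open drawer 1
```

Returning the rejection log alongside the chosen action keeps each HAC decision auditable, which several of the applications list as a dependency.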

- Software operations (DevOps/SRE)
  - Sector: Software
  - Use case: Safer command-execution agents for CI/CD, infrastructure changes, and incident response. MPM captures reusable “do/don’t” rules distilled from past postmortems; HAC enforces environment and policy constraints (e.g., blocking destructive commands in production without a change ticket).
  - Tools/workflows: A shell/CLI wrapper with HAC (policy-as-code via JSON/XML schemas); an “MPM Rule Store” that retrieves relevant rules by context (service, environment, time window); prompt-level memory injection.
  - Assumptions/dependencies: Accurate constraint specification (e.g., allowed commands per environment), reliable state detection (prod vs. staging), deterministic decoding or low-temperature sampling, audit logging.

- Robotic Process Automation (RPA) for back-office workflows
  - Sector: Finance, Insurance, Retail, HR
  - Use case: LLM agents that update CRMs, billing, and procurement systems with fewer repeated errors. MPM consolidates corrective patterns (e.g., field-mapping fixes, step ordering); HAC validates forms against schemas and business rules before submission.
  - Tools/workflows: Form schema validators, API contract checkers, a “Meta-Policy Memory Manager” for rule authoring and confidence weighting, a LangChain/LlamaIndex plugin for memory retrieval.
  - Assumptions/dependencies: Stable APIs and schemas; codified business constraints; access to logs of failed episodes for rule extraction.

- Data engineering and ETL governance
  - Sector: Software, Data Platforms
  - Use case: Agents that transform and load data with guardrails. MPM stores rules from historical data quality incidents; HAC enforces schema compatibility, PII handling, and lineage constraints before writes.
  - Tools/workflows: Schema registry integration, DLP (data loss prevention) checks, pre-commit validation hooks for agent actions, “Rule Guardrails SDK.”
  - Assumptions/dependencies: Metadata/catalog access; clear compliance policies (e.g., PII tagging); deterministic task flows.

- Customer support assistants with policy-compliant responses
  - Sector: Customer Service
  - Use case: Conversation agents that reuse corrective rules (e.g., refund eligibility logic, escalation criteria) and avoid repeated misinformation. HAC filters responses against compliance policies and tone/style constraints.
  - Tools/workflows: Policy memory store (predicate-like rules with confidence), content filters and response validators, turn-level memory injection.
  - Assumptions/dependencies: Up-to-date policy codification; consistent retrieval of relevant rules; human-in-the-loop for sensitive cases.

- Administrative healthcare workflows (non-clinical)
  - Sector: Healthcare (operations)
  - Use case: Scheduling, prior authorizations, and billing agents with HAC enforcing HIPAA-compliant data access and payer rules; MPM captures recurring insurer-specific correction patterns.
  - Tools/workflows: EHR API wrappers with admissibility checks, payer rule packs in MPM, audit trails.
  - Assumptions/dependencies: Strong privacy controls; domain-expert rule authoring; human approval for high-impact actions.

- Financial back-office: reconciliation and reporting
  - Sector: Finance (accounting, compliance)
  - Use case: Agents preparing reports and reconciliations that reuse corrective rules (e.g., period close procedures) and apply HAC to enforce accounting standards (e.g., double-entry consistency, threshold approvals).
  - Tools/workflows: Ledger validators, approval workflows, “Policy Studio” for codifying financial rules.
  - Assumptions/dependencies: Well-defined accounting policies; separation of read/write privileges; audit logging and versioned memory.

- Browser/UI automation for everyday tasks
  - Sector: Daily life, Productivity
  - Use case: Personal agents that fill forms, schedule meetings, and manage emails. MPM encodes user preferences and recurring fixes; HAC blocks risky actions (e.g., sending emails without confirmation).
  - Tools/workflows: UI action validators (DOM constraints, confirmation prompts), preference memory, task templates.
  - Assumptions/dependencies: Stable UI selectors; consent and confirmation workflows; privacy of personal data stored in memory.

- Academic and benchmarking use
  - Sector: Academia
  - Use case: Reproducible agent evaluations using frozen base models augmented by MPM and HAC; sharing rule sets to enable cross-task transfer studies without fine-tuning.
  - Tools/workflows: Open-source MPM library, standardized datasets and failure logs, evaluation harnesses.
  - Assumptions/dependencies: Access to agent logs; consistent task protocols; well-scoped constraint definitions.

- Enterprise compliance and data governance agents
  - Sector: Enterprise IT, Legal
  - Use case: Document routing, retention, and redaction with HAC enforcing regulatory policies (e.g., GDPR/CCPA); MPM reuses corrections from past policy breaches.
  - Tools/workflows: DLP scanners, policy rule packs, redaction validators, “Compliance Guard” modules.
  - Assumptions/dependencies: Accurate policy translation to machine-checkable constraints; privacy-aware memory storage; periodic rule audits.
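
As an illustration of the DevOps/SRE item, the following Python sketch shows one way a HAC layer could block destructive shell commands in production unless a change ticket is present, with rules written as JSON policy-as-code and selected by environment. The policy fields (`environment`, `deny_pattern`, `unless`), the regular expression, the ticket id, and the function names are hypothetical; a real deployment would need a far more complete command classifier than a single regex.

```python
import json
import re
from typing import Optional

# Hypothetical policy-as-code document; the field names are illustrative only.
POLICY_JSON = r"""
{"rules": [
  {"id": "no-destructive-prod",
   "environment": "production",
   "deny_pattern": "\\b(rm\\s+-rf|drop\\s+table|terraform\\s+destroy|kubectl\\s+delete)\\b",
   "unless": "change_ticket"}
]}
"""


def load_rules(text: str) -> list:
    """Parse the policy document into a list of rule dicts."""
    return json.loads(text)["rules"]


def check_command(command: str, context: dict, rules: list) -> Optional[str]:
    """Return the id of the first violated rule, or None if the command is admissible."""
    for rule in rules:
        # Context-based retrieval: only rules scoped to this environment apply.
        if rule["environment"] != context.get("environment"):
            continue
        destructive = re.search(rule["deny_pattern"], command, re.IGNORECASE)
        if destructive and not context.get(rule["unless"]):
            return rule["id"]
    return None


rules = load_rules(POLICY_JSON)
print(check_command("rm -rf /var/data", {"environment": "production"}, rules))
# no-destructive-prod
print(check_command("rm -rf /var/data",
                    {"environment": "production", "change_ticket": "CHG-123"}, rules))
# None  (hypothetical ticket id satisfies the "unless" clause)
```

Keeping the policy in a data file rather than in code lets operators review and version the constraints separately from the agent, in the spirit of the audit-logging dependency listed for this item.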

Long-Term Applications

The following applications require further research, scaling, multimodal extensions, or formal verification to be reliable and safe. They build on MPR’s predicate-style memory, soft guidance, and HAC concepts.

- Clinical decision support and care pathway planning
  - Sector: Healthcare (clinical)
  - Use case: Agents suggesting diagnostics/treatments aligned with guidelines; MPM stores reusable clinical heuristics; HAC enforces formal medical constraints and contraindications.
  - Tools/workflows: Guideline-to-rule compilers, medical knowledge bases, human-in-the-loop validation, post-deployment monitoring.
  - Assumptions/dependencies: High-quality, formally verifiable rules; liability and ethics frameworks; multimodal inputs (labs/imaging).

- Embodied robotics and autonomous systems
  - Sector: Robotics, Manufacturing, Logistics
  - Use case: Robots that reuse corrective task rules (grasping sequences, safety zones) and apply HAC for physical constraints (collision avoidance, torque limits).
  - Tools/workflows: Sensor-to-constraint mappers, motion planners integrated with HAC, graph-based MPM for task hierarchies.
  - Assumptions/dependencies: Robust state estimation; precise environment models; multimodal memory and real-time validation.

- Financial trading and risk-aware decision agents
  - Sector: Finance (front-office)
  - Use case: Strategy agents with HAC enforcing regulatory and risk limits (position caps, market halts) and MPM encoding reusable patterns from past failures.
  - Tools/workflows: Formal limit-check engines, market-state-aware rule retrieval, audit-grade traceability.
  - Assumptions/dependencies: Regulator-approved guardrails; latency-sensitive pipelines; adversarial robustness.

- Government digital services and policy enforcement
  - Sector: Public sector
  - Use case: Policy-aware service agents that apply HAC to ensure eligibility, privacy, and fairness constraints; MPM captures cross-agency rules and updates.
  - Tools/workflows: Policy-to-constraint compilers; federated rule sharing; oversight dashboards.
  - Assumptions/dependencies: Standardized policy formats; data sharing agreements; bias and fairness audits.

- Multi-agent systems with shared meta-policy memory
  - Sector: Software, Robotics, Education
  - Use case: Teams of agents negotiating and sharing rules; conflict resolution via rule confidence weighting and provenance.
  - Tools/workflows: Distributed MPM (graph/KB), rule reconciliation protocols, versioning and trust scores.
  - Assumptions/dependencies: Secure memory synchronization; provenance tracking; consensus mechanisms.

- Multimodal MPM/HAC for vision, audio, and structured data
  - Sector: Robotics, Healthcare, Industrial IoT
  - Use case: Agents that ground rules in visual scenes (e.g., “do not place object on hot surface”) and validate actions against sensor-derived constraints.
  - Tools/workflows: Multimodal retrieval and grounding, perception-to-predicate pipelines, real-time HAC.
  - Assumptions/dependencies: Reliable perception; robust grounding from raw signals to predicates; latency budgets.

- Automated rule lifecycle management and verification
  - Sector: Software tooling
  - Use case: End-to-end systems that extract, deduplicate, rank, and formally verify rules; continuous calibration of confidence weights and admissibility thresholds.
  - Tools/workflows: “RuleOps” platform, static/dynamic analyzers, model checking for high-impact rules, drift detection.
  - Assumptions/dependencies: Formal semantics for rules; scalable verification methods; human review for irrevocable constraints.

- Cross-organization rule marketplaces and compliance libraries
  - Sector: Enterprise, Standards
  - Use case: Sharing validated rule packs (e.g., ISO, SOC2, HIPAA) that agents can import; HAC aligns actions with standardized controls.
  - Tools/workflows: Rule registries, licensing and update mechanisms, compatibility layers for different agent frameworks.
  - Assumptions/dependencies: Standardization of rule formats; legal agreements; version control and provenance.

- Safety-critical industrial process control
  - Sector: Energy, Manufacturing, Chemical plants
  - Use case: Supervisory agents that suggest control actions with HAC enforcing interlock logic and safety envelopes; MPM captures incident-derived corrective rules.
  - Tools/workflows: Digital twins integrated with HAC, alarm rationalization rules, operator-in-the-loop approval.
  - Assumptions/dependencies: High-fidelity plant models; certified verification; strict change management.

- Auditable AI governance for high-stakes decision-making
  - Sector: Cross-sector (Finance, Healthcare, Public)
  - Use case: Using MPM as an interpretable audit trail linking decisions to predicate rules, with HAC checking and logging constraint adherence.
  - Tools/workflows: Explainability dashboards, policy provenance tracking, compliance reporting.
  - Assumptions/dependencies: Traceability requirements; accepted audit standards; tamper-resistant rule storage.
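
The conflict-resolution idea in the multi-agent item (rule confidence weighting plus provenance) can be sketched in Python as follows. The merge policy here, which keeps the maximum confidence for agreeing rules, lets the more confident side win a contradiction, and logs every contradiction for review, is an assumption chosen for simplicity rather than a protocol described in the paper; `SharedRule`, `reconcile`, and the predicate strings are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# (condition, action, polarity, confidence) as contributed by one agent.
RuleTuple = Tuple[str, str, str, float]


@dataclass
class SharedRule:
    condition: str
    action: str
    polarity: str                                      # "prefer" or "avoid"
    confidence: float
    sources: List[str] = field(default_factory=list)   # provenance: contributing agents


def reconcile(rule_sets: Dict[str, List[RuleTuple]]):
    """Merge per-agent rules into one shared memory.

    - Agreeing rules from several agents: keep the max confidence, union provenance.
    - Contradictions (same condition and action, opposite polarity): keep the more
      confident rule and record the (condition, action) key for human review.
    """
    merged: Dict[Tuple[str, str], SharedRule] = {}
    conflicts: List[Tuple[str, str]] = []
    for agent, rules in rule_sets.items():
        for condition, action, polarity, conf in rules:
            key = (condition, action)
            current = merged.get(key)
            if current is None:
                merged[key] = SharedRule(condition, action, polarity, conf, [agent])
            elif current.polarity == polarity:
                current.confidence = max(current.confidence, conf)
                current.sources.append(agent)
            else:
                conflicts.append(key)
                if conf > current.confidence:
                    merged[key] = SharedRule(condition, action, polarity, conf, [agent])
    return list(merged.values()), conflicts


shared, conflicts = reconcile({
    "agent_a": [("at(stove)", "place(plate)", "avoid", 0.9)],
    "agent_b": [("at(stove)", "place(plate)", "avoid", 0.7),
                ("at(sink)", "wash(cup)", "prefer", 0.6)],
    "agent_c": [("at(sink)", "wash(cup)", "avoid", 0.8)],
})
print(conflicts)  # [('at(sink)', 'wash(cup)')]
```

A deliberately simple policy like this discards the losing side's provenance; a production reconciliation protocol would likely keep both versions with trust scores, as the tools listed for that item suggest.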

Notes on Feasibility

- Base model quality matters: MPR assumes a reasonably competent LLM; memory and HAC augment but do not replace core capabilities.

- Constraint availability: HAC depends on well-specified, machine-checkable constraints (schemas, policies, environment models).

- Domain regularities: The approach benefits from recurring task structure; highly heterogeneous domains may need richer representations and longer adaptation phases.

- Privacy and security: Storing reflective memory can expose sensitive information; implement access controls, redaction, and differential privacy where needed.

- Human-in-the-loop: For safety-critical or regulated domains, keep human approval for rules that become hard constraints and for high-impact actions.

- Operational tooling: Effective deployment requires rule authoring, retrieval, confidence calibration, logging, and drift detection (e.g., an “AgentOps” stack around MPM/HAC).
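
The human-in-the-loop and operational-tooling notes can be combined into a small promotion gate, sketched here in Python under our own assumptions: a soft rule becomes a hard constraint only when its confidence and support exceed thresholds and a named reviewer has approved it, and a hard rule is demoted back to soft guidance (with its approval cleared) when its recent success rate drifts below a floor. The thresholds, the 20-outcome window, the reviewer string, and the `ManagedRule` fields are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Optional


@dataclass
class ManagedRule:
    rule_id: str
    confidence: float
    support: int = 0                     # episodes in which the rule was confirmed
    approved_by: Optional[str] = None    # human reviewer; None until approved
    hard: bool = False                   # False = soft guidance, True = HAC constraint
    recent: Deque[bool] = field(default_factory=lambda: deque(maxlen=20))


def try_promote(rule: ManagedRule, min_conf: float = 0.9, min_support: int = 5) -> bool:
    """Promote a soft rule to a hard constraint only with evidence AND human sign-off."""
    if (rule.confidence >= min_conf and rule.support >= min_support
            and rule.approved_by is not None):
        rule.hard = True
    return rule.hard


def record_outcome(rule: ManagedRule, helped: bool, drift_floor: float = 0.6) -> bool:
    """Log whether following the rule helped; return True if it needs re-review."""
    rule.recent.append(helped)
    if len(rule.recent) < rule.recent.maxlen:
        return False  # not enough recent evidence yet
    drifted = sum(rule.recent) / len(rule.recent) < drift_floor
    if drifted and rule.hard:
        rule.hard = False        # demote to soft guidance pending review
        rule.approved_by = None  # a fresh human approval is required to re-promote
    return drifted


rule = ManagedRule("no-send-without-confirm", confidence=0.95, support=6)
print(try_promote(rule))                    # False: no reviewer yet
rule.approved_by = "reviewer@example.com"   # hypothetical reviewer id
print(try_promote(rule))                    # True
```

Clearing the approval on demotion means a drifted rule cannot silently return as a hard constraint, which matches the note that rules becoming hard constraints should stay under human approval.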
