- The paper introduces LUIVITON, a system that automates garment fitting by predicting clothing-to-SMPL and body-to-SMPL correspondences.

- It leverages a modified DiffusionNet and DINOv2-based features to achieve robust and precise garment simulations with improved Chamfer Distance and interpenetration ratios.

- LUIVITON extends virtual try-on technology to applications in animation and AR, offering scalable and realistic digital garment simulation.

LUIVITON: Learned Universal Interoperable VIrtual Try-ON

The paper introduces LUIVITON, a system designed for automated virtual try-on applications capable of handling complex garments on diverse humanoid characters. LUIVITON integrates geometric and diffusion-based approaches to handle the intricacies of clothing-to-body correspondence, leveraging the SMPL model as an intermediary representation.

Introduction

Rendering avatars in digital contexts often requires detailed, realistic garment simulation, which has traditionally been labor-intensive. LUIVITON addresses this by automating the garment fitting process for humanoid 3D models irrespective of shape, pose, or style. It does so by predicting two sets of correspondences: clothing-to-SMPL and body-to-SMPL.

Methodology

System Overview

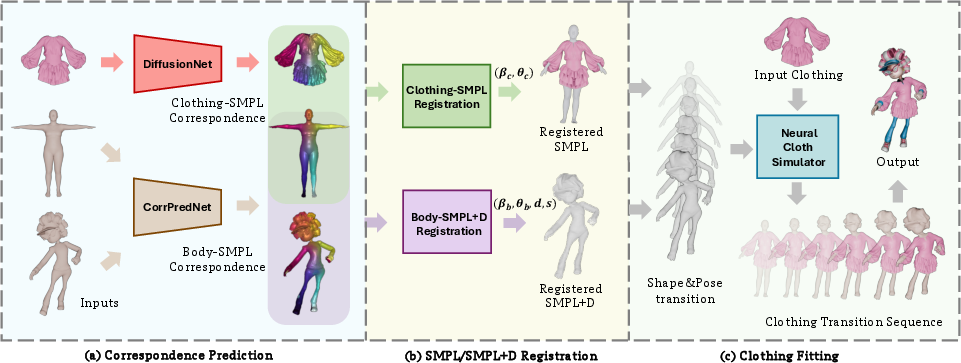

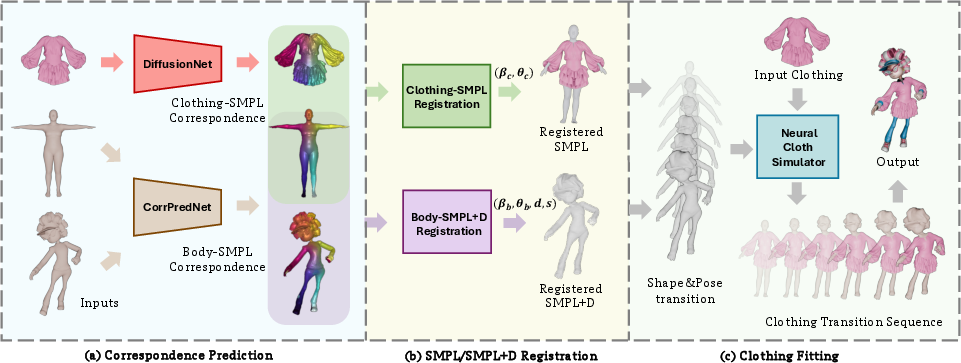

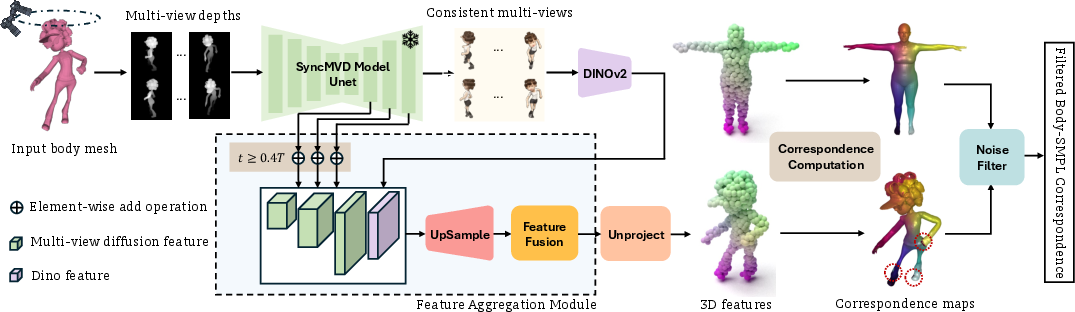

LUIVITON's framework is structured into three primary stages:

- Correspondence Prediction: This involves establishing clothing-to-SMPL and body-to-SMPL correspondences.

- Registration: This aligns the SMPL model to the clothing and adapts it to various body types using SMPL+D.

- Simulation: A neural cloth simulator performs the final garment fitting.

Figure 1: The overview of our LUIVITON system.
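
The Registration stage relies on SMPL+D, which is commonly understood as the SMPL mesh plus a per-vertex displacement field D. The snippet below is a minimal sketch of that idea only, under simplifying assumptions: displacements are solved directly from given target positions, and vertices without a target keep a zero offset. The function names `fit_displacements` and `apply_displacements` are hypothetical, and the actual system's optimization and regularization are not described in this summary.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def fit_displacements(smpl_vertices: List[Vec3],
                      targets: Dict[int, Vec3]) -> List[Vec3]:
    """Return per-vertex offsets D so that vertex i + D[i] equals targets[i].

    Vertices with no target (no correspondence) get a zero offset.
    This is an illustrative closed-form fit, not the paper's procedure.
    """
    offsets: List[Vec3] = []
    for i, v in enumerate(smpl_vertices):
        if i in targets:
            t = targets[i]
            offsets.append((t[0] - v[0], t[1] - v[1], t[2] - v[2]))
        else:
            offsets.append((0.0, 0.0, 0.0))
    return offsets


def apply_displacements(smpl_vertices: List[Vec3],
                        offsets: List[Vec3]) -> List[Vec3]:
    """SMPL+D in its simplest form: add the offset to each vertex."""
    return [(v[0] + d[0], v[1] + d[1], v[2] + d[2])
            for v, d in zip(smpl_vertices, offsets)]


# Toy mesh with three vertices; only vertex 1 has a body correspondence.
smpl = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
body_targets = {1: (1.5, 0.0, 0.25)}

D = fit_displacements(smpl, body_targets)
deformed = apply_displacements(smpl, D)
print(deformed[1])  # (1.5, 0.0, 0.25)
```

In practice the displacements would usually be regularized (for example, kept smooth across neighboring vertices) so that sparse or noisy correspondences do not produce spikes; that part is omitted here.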

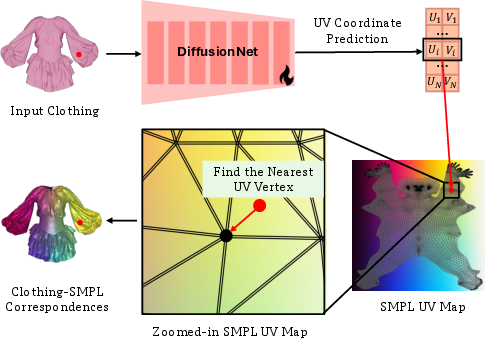

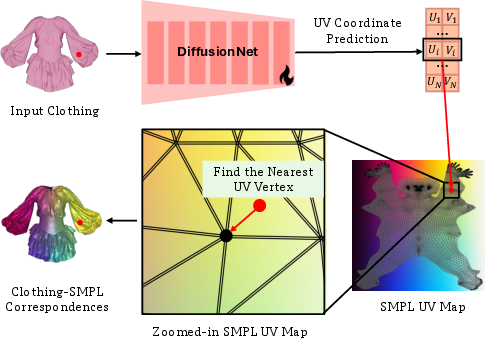

Clothing-SMPL Correspondence

A modified DiffusionNet predicts UV coordinates for clothing vertices, facilitating robust partial-to-complete mappings.

Figure 2: The clothing-SMPL correspondence module utilizes an adapted DiffusionNet.
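
To illustrate how per-vertex UV predictions could be turned into a partial-to-complete mapping, here is a minimal sketch. It assumes each SMPL vertex has a single UV coordinate and that a predicted UV is matched to the nearest SMPL vertex in UV space; the helper names and the `max_dist` threshold are hypothetical and not taken from the paper. The network that produces the UVs is not modeled.

```python
from typing import List, Optional, Tuple

UV = Tuple[float, float]


def nearest_smpl_vertex(uv: UV, smpl_uvs: List[UV],
                        max_dist: float = 0.05) -> Optional[int]:
    """Index of the SMPL vertex closest to `uv` in UV space.

    Returns None when nothing lies within `max_dist` (assumed threshold).
    """
    best_idx: Optional[int] = None
    best_d2 = max_dist * max_dist
    for idx, (u, v) in enumerate(smpl_uvs):
        d2 = (u - uv[0]) ** 2 + (v - uv[1]) ** 2
        if d2 <= best_d2:
            best_idx, best_d2 = idx, d2
    return best_idx


def clothing_to_smpl(pred_uvs: List[UV], smpl_uvs: List[UV],
                     max_dist: float = 0.05) -> List[Optional[int]]:
    """Map every clothing vertex to an SMPL vertex index, or None."""
    return [nearest_smpl_vertex(uv, smpl_uvs, max_dist) for uv in pred_uvs]


# Toy data: three SMPL vertices and three predicted clothing UVs.
smpl_uvs = [(0.1, 0.1), (0.5, 0.5), (0.9, 0.9)]
pred_uvs = [(0.52, 0.49), (0.11, 0.1), (0.3, 0.8)]

print(clothing_to_smpl(pred_uvs, smpl_uvs))  # [1, 0, None]
```

The mapping is partial on the SMPL side: a garment covers only part of the body, so many SMPL vertices receive no clothing vertex at all. A real UV layout also has seams, which this sketch ignores.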

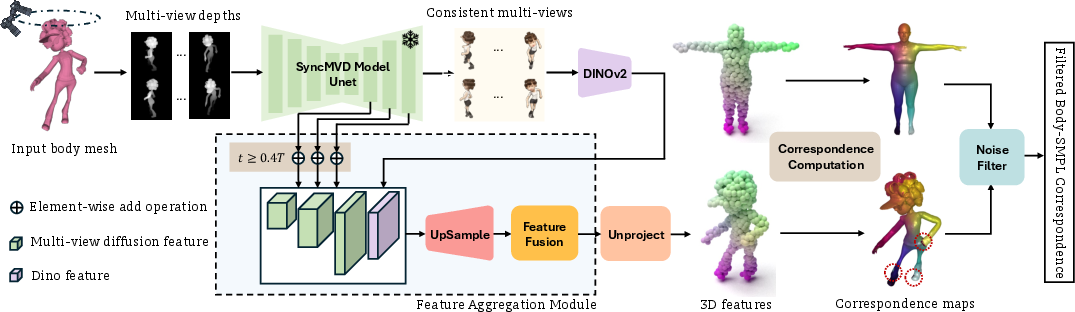

Body-SMPL Correspondence

The use of diffusion models, combined with pre-trained semantic features from DINOv2, allows for precise body-SMPL correspondence, overcoming challenges associated with extreme body proportions and diverse poses.

Figure 3: Our system computes correspondence using a feature aggregation module combined with noise filtering.
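
The idea of matching by aggregated features and then filtering noisy matches can be sketched as follows. This is an assumption-laden illustration, not the paper's module: per-vertex features are taken as given (the diffusion and DINOv2 feature extraction is not modeled), matching uses cosine similarity, and the filter is a standard mutual nearest-neighbor check with a hypothetical `min_score` threshold swapped in for whatever filtering the authors use.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na > 0 and nb > 0 else 0.0


def best_match(query: Sequence[float],
               candidates: List[Sequence[float]]) -> Tuple[int, float]:
    """Index and score of the candidate most similar to `query`."""
    scores = [cosine(query, c) for c in candidates]
    idx = max(range(len(scores)), key=scores.__getitem__)
    return idx, scores[idx]


def mutual_matches(body_feats: List[Sequence[float]],
                   smpl_feats: List[Sequence[float]],
                   min_score: float = 0.8) -> List[Tuple[int, int]]:
    """Keep (body, smpl) pairs that are each other's best match
    and whose similarity reaches `min_score`."""
    matches = []
    for i, feat in enumerate(body_feats):
        j, score = best_match(feat, smpl_feats)
        back, _ = best_match(smpl_feats[j], body_feats)
        if back == i and score >= min_score:
            matches.append((i, j))
    return matches


# Toy 2-D features: body vertex 2 is ambiguous and should be filtered out.
body_feats = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
smpl_feats = [(0.9, 0.1), (0.1, 0.9)]

print(mutual_matches(body_feats, smpl_feats))  # [(0, 0), (1, 1)]
```

Discarding ambiguous matches leaves a sparser but cleaner set of correspondences, which is typically easier for a later registration step to fit than a dense set containing outliers.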

Experiments and Results

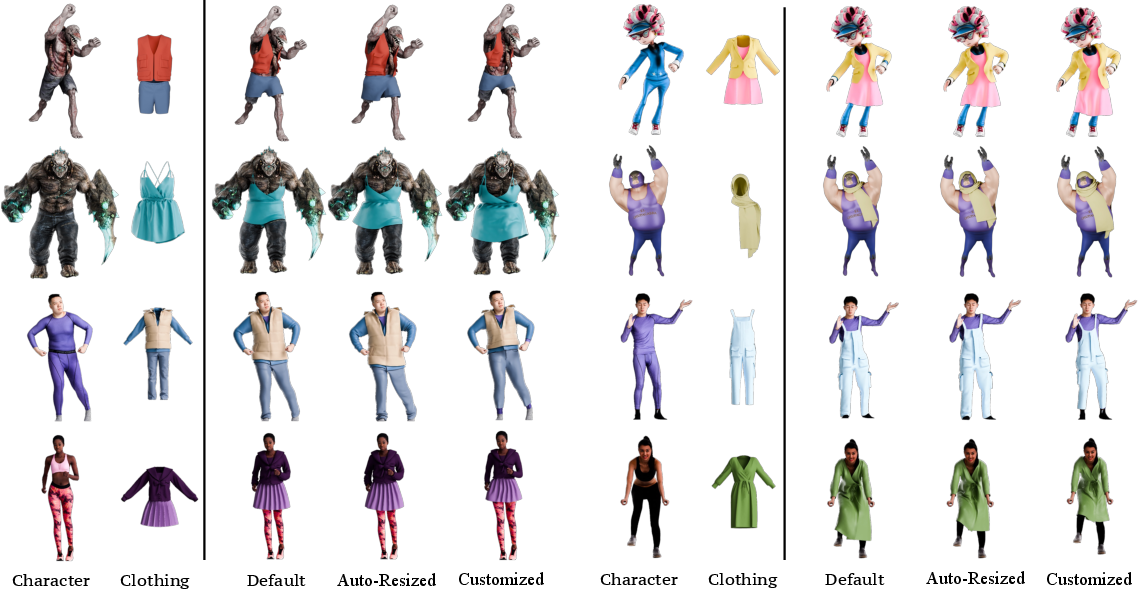

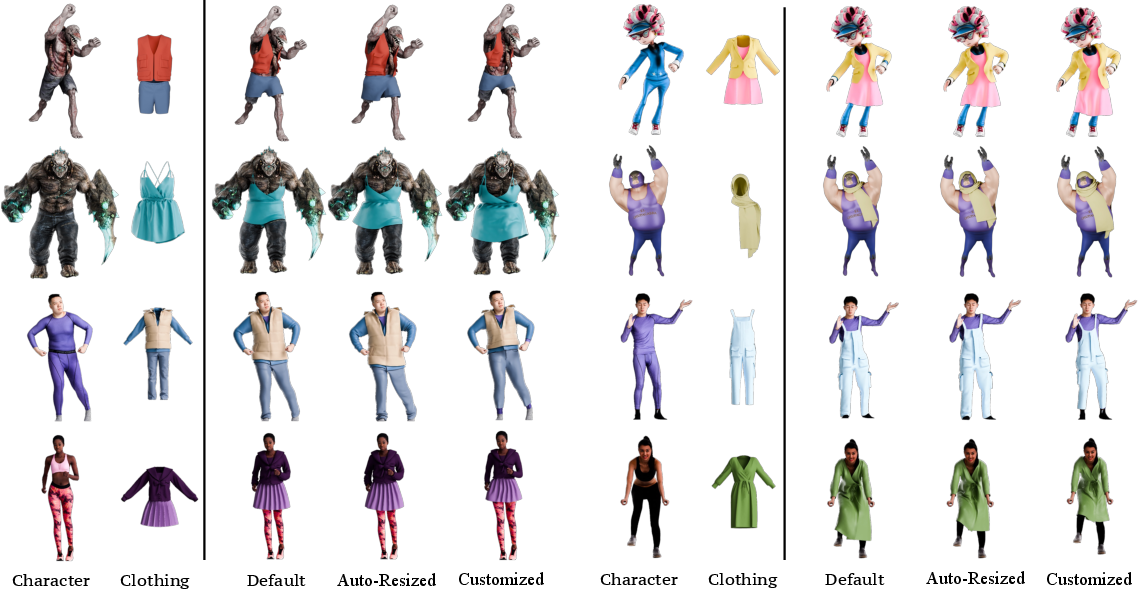

Qualitative assessments on a range of garments and humanoid bodies demonstrate LUIVITON's ability to deliver plausible results across various configurations.

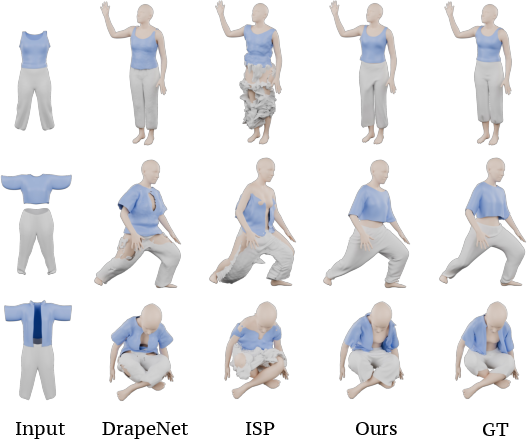

Figure 4: Garments are accurately draped on different humanoid bodies in diverse poses.

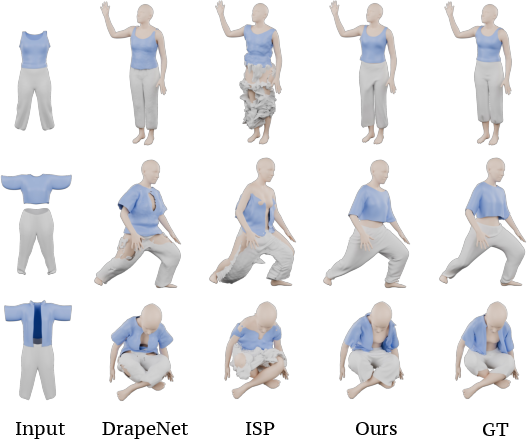

Comparative Analysis

LUIVITON achieves better Chamfer Distance and interpenetration ratio scores than existing methods such as DrapeNet and ISP, indicating that it handles complex garment simulations more reliably and without manual intervention.

Figure 5: Qualitative comparisons with DrapeNet and ISP.
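
For readers unfamiliar with the two metrics, the sketch below shows one common way to compute them. Definitions vary between papers, so treat the specifics as assumptions: Chamfer Distance is written here as the sum of the two mean nearest-neighbor distances (unsquared), and the interpenetration ratio as the fraction of garment vertices with a negative signed distance to the body surface. The signed distances are supplied as input, since computing them requires a mesh library.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def _mean_nn_dist(src: List[Point], dst: List[Point]) -> float:
    """Mean distance from each point in `src` to its nearest point in `dst`."""
    return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)


def chamfer_distance(a: List[Point], b: List[Point]) -> float:
    """Symmetric Chamfer Distance between two point sets (lower is better)."""
    return _mean_nn_dist(a, b) + _mean_nn_dist(b, a)


def interpenetration_ratio(signed_distances: List[float]) -> float:
    """Fraction of garment vertices inside the body.

    Assumes negative signed distance means the vertex is inside the body.
    """
    inside = sum(1 for d in signed_distances if d < 0)
    return inside / len(signed_distances)


# Toy point sets that differ in one point.
pred = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
print(chamfer_distance(pred, ref))  # 1.0

# One of four garment vertices lies inside the body.
print(interpenetration_ratio([0.02, -0.01, 0.05, 0.0]))  # 0.25
```

Lower values are better for both metrics. The brute-force nearest-neighbor search is quadratic in the number of points, which is fine for a toy example but would normally be replaced by a spatial index on real meshes.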

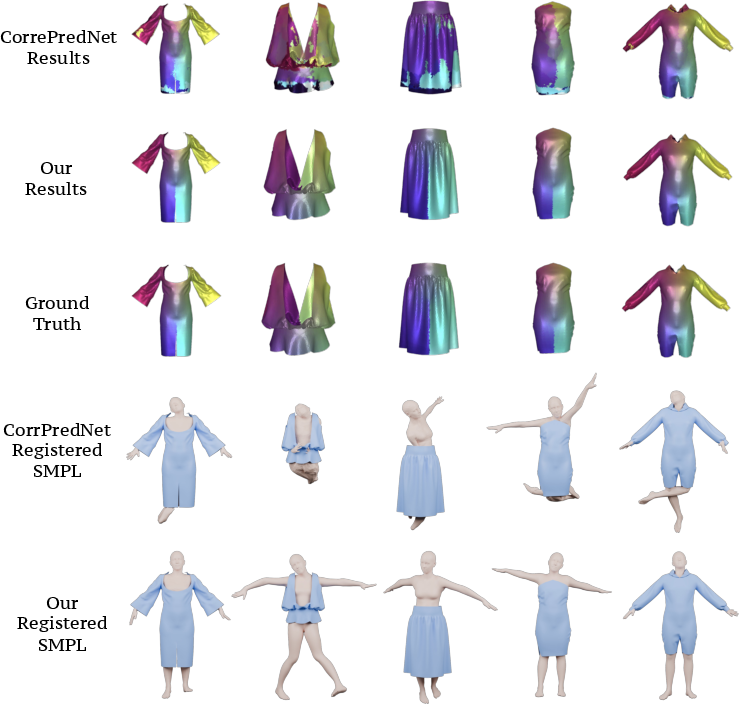

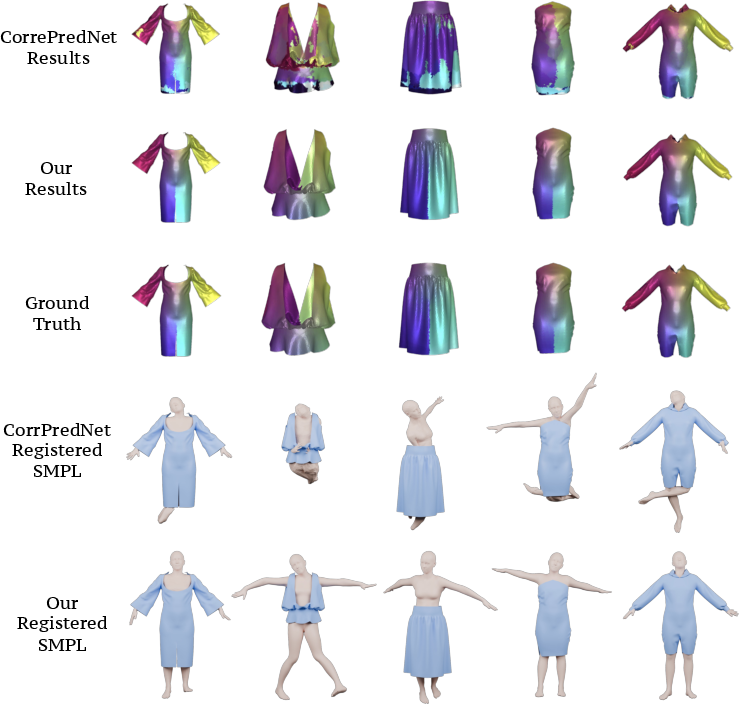

Correspondence and Registration Evaluation

LUIVITON shows a substantial improvement over baseline models for both clothing-SMPL and body-SMPL correspondence outcomes, resulting in lower errors and higher precision in fitting.

Figure 6: Comparison showing the accuracy of clothing-SMPL correspondences.

Applications and Limitations

LUIVITON is applicable to settings such as animation and AR, and it supports full customization of garment sizes. However, the current implementation is limited when dealing with non-humanoid shapes and garments made of rigid materials.

Figure 7: The system is applicable to animation tasks, fitting clothing for characters seamlessly.

Conclusion

LUIVITON advances virtual try-on technology by automating steps of garment fitting that traditionally required manual work. It generalizes robustly across a wide range of avatars and garment types. The system promises to streamline virtual garment fitting and opens the way to handling more intricate and varied garment types than current methods allow. Future work could focus on direct clothing-to-body mappings that bypass intermediate models like SMPL, which may improve the efficiency and scalability of the approach.