- The paper identifies overreliance on LLMs as a critical risk in human decision-making and outlines methods to measure and mitigate it.

- It details system-level mitigations such as mixed-initiative interfaces and cognitive forcing functions to recalibrate user trust.

- The study underscores the need for refined metrics and enhanced AI literacy to preserve human decision-making capabilities.

Measuring and Mitigating Overreliance on LLMs

The paper "Measuring and mitigating overreliance is necessary for building human-compatible AI" examines how people may come to overrely on LLMs when making decisions across a range of domains. It explores the characteristics that exacerbate overreliance, outlines the challenges of measuring it, and proposes mitigation strategies. The discussion centers on ensuring that LLMs serve as effective thought partners without undermining human capabilities.

Introduction

Recent advances in AI have enabled LLMs to perform sophisticated cognitive tasks, turning them into collaborative 'thought partners.' These models excel at tasks such as code generation and language reasoning. However, their widespread deployment in healthcare, personal advice, and other fields creates a risk of overreliance. Relying on LLMs beyond their capabilities can lead to errors, governance challenges, and cognitive deskilling. Measuring and mitigating overreliance is therefore essential to keep these models from eroding human capacities.

Historical Context

Overreliance has long been studied in human-computer interaction, cognitive science, and psychology. It is defined as adopting a system's outputs when they are incorrect or undesirable. It is primarily a behavioral phenomenon driven by excessive trust in automation. LLMs often use confident language, behave sycophantically, and create an illusion of competence. All of these can lead users to treat the models' outputs as authoritative.

Risks of Overreliance

The paper categorizes risks at the individual and societal levels. For individuals, short-term consequences include high-stakes errors; in healthcare, for example, overreliance on inaccurate AI outputs can result in misdiagnosis. Long-term effects include dependency and cognitive deskilling, in which users gradually lose the motivation for independent problem-solving. At the societal level, risks include governance challenges and shifts in social norms. These arise because LLMs can produce homogenized outputs and thereby influence cultural practices.

Factors Influencing Overreliance

Overreliance is shaped by model characteristics, system design, and user cognition. LLMs’ anthropomorphic qualities, fluency, and apparent certainty can invite undue trust. Interface shortcomings, such as missing uncertainty indicators or the absence of explanations, also influence reliance decisions. Finally, users’ cognitive biases and limited cognitive resources can inadvertently encourage overreliance.

Measurement Challenges

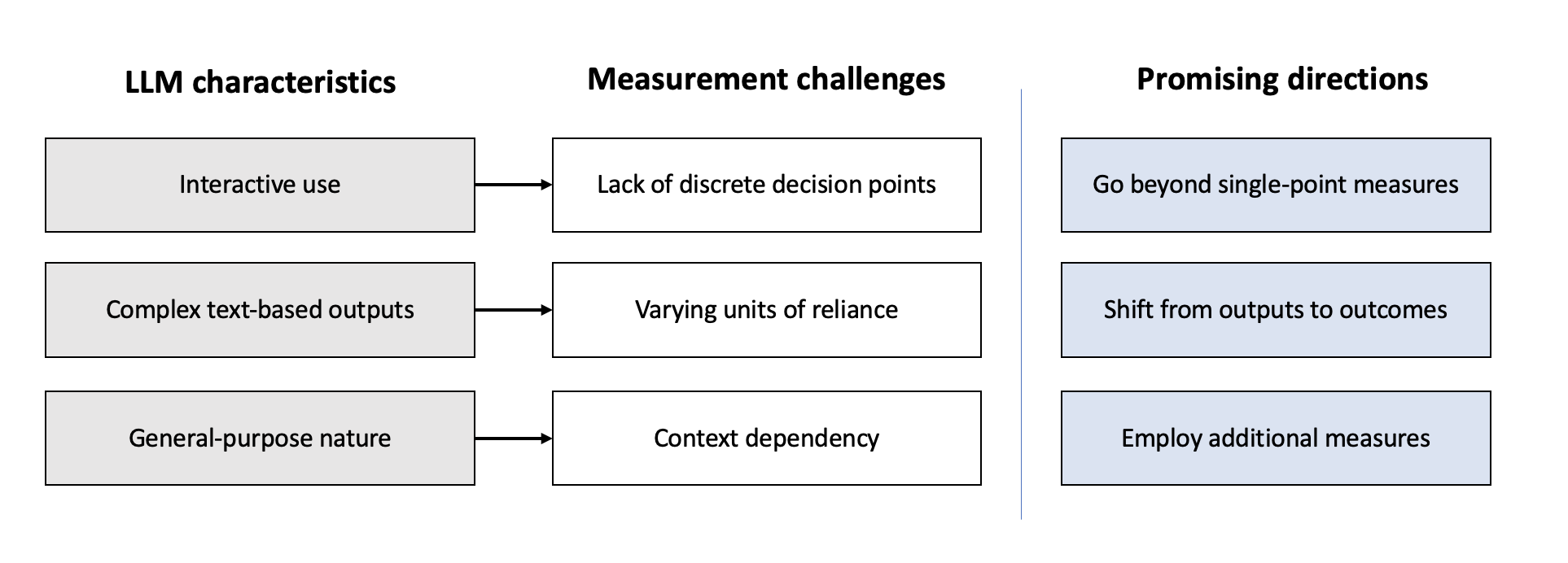

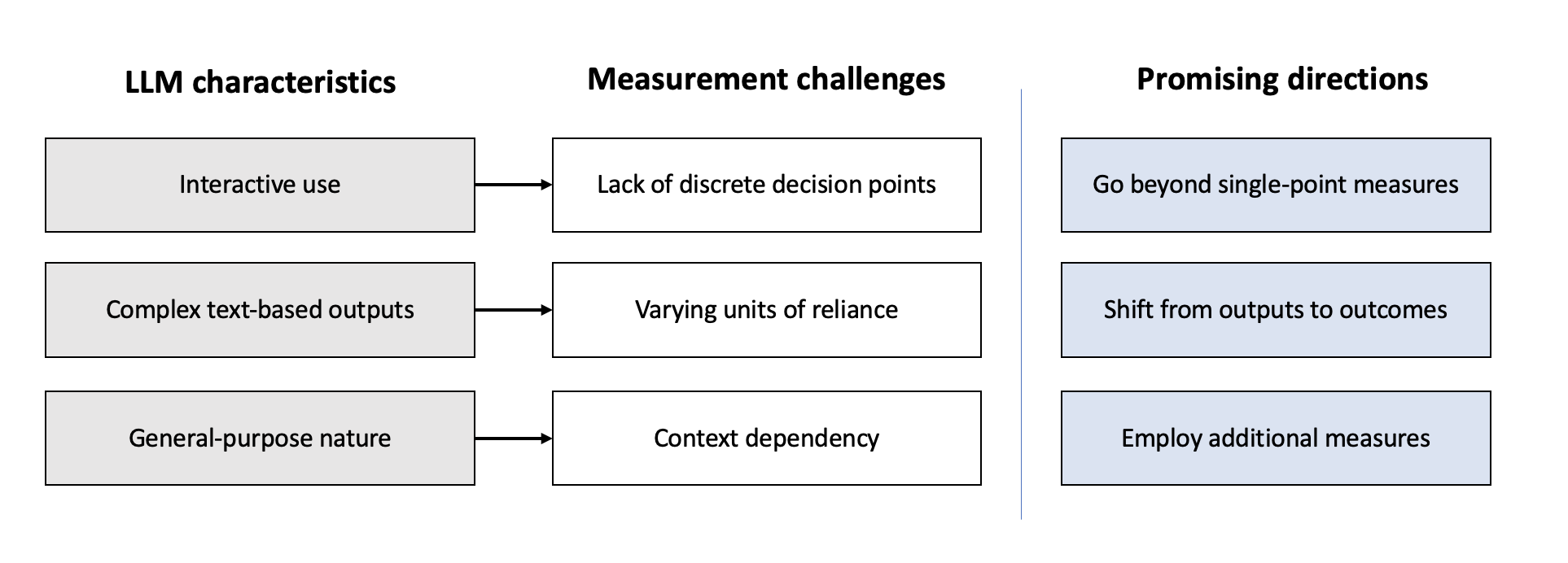

Existing methods for measuring reliance fail to capture the dynamic interactions typical of LLM use. Traditional metrics such as agreement fraction and switch fraction were built for settings in which an AI gives a single, discrete recommendation. As a result, they struggle with LLMs’ interactive and complex text-based outputs. The paper calls for new methods that go beyond single-point measures and favor outcome-based assessments over measures of individual outputs.

Figure 1: Summary of LLM characteristics, measurement challenges, and promising directions for improving measurement of overreliance on LLMs.
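To make the traditional metrics concrete, here is a minimal sketch of how they are commonly computed in human-AI decision studies, together with an overreliance rate that follows the definition given earlier (adopting advice when it is incorrect). It assumes each trial records one discrete AI recommendation plus the human's answers before and after seeing it. The `Trial` record and all function names are hypothetical illustrations, not code from the paper.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Trial:
    """One decision trial with a single discrete AI recommendation (hypothetical schema)."""
    initial: str  # human's answer before seeing the AI's advice
    advice: str   # the AI's recommendation
    final: str    # human's answer after seeing the advice
    truth: str    # correct answer


def _fraction(hits: int, total: int) -> float:
    # Return NaN rather than dividing by zero when a subset is empty.
    return hits / total if total else float("nan")


def agreement_fraction(trials: Sequence[Trial]) -> float:
    """Share of all trials whose final decision matches the AI's advice."""
    return _fraction(sum(t.final == t.advice for t in trials), len(trials))


def switch_fraction(trials: Sequence[Trial]) -> float:
    """Among trials where the human initially disagreed, share that switched to the advice."""
    disagreed = [t for t in trials if t.initial != t.advice]
    return _fraction(sum(t.final == t.advice for t in disagreed), len(disagreed))


def overreliance_rate(trials: Sequence[Trial]) -> float:
    """Among trials where the AI was wrong, share where the human adopted its advice."""
    ai_wrong = [t for t in trials if t.advice != t.truth]
    return _fraction(sum(t.final == t.advice for t in ai_wrong), len(ai_wrong))


if __name__ == "__main__":
    trials = [
        Trial(initial="A", advice="A", final="A", truth="A"),
        Trial(initial="B", advice="A", final="A", truth="A"),
        Trial(initial="B", advice="A", final="A", truth="B"),  # adopted wrong advice
        Trial(initial="B", advice="A", final="B", truth="B"),  # correctly rejected it
    ]
    print(agreement_fraction(trials))  # 0.75
    print(switch_fraction(trials))     # 2 of 3 initial disagreements switched
    print(overreliance_rate(trials))   # 0.5
```

Every metric here presumes one recommendation and one final decision per trial. That is exactly the assumption the paper argues breaks down when users hold open-ended, free-text conversations with an LLM.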

Mitigation Strategies

The paper suggests several strategies to mitigate overreliance:

Model-Level Mitigations

Refining training processes to address linguistic biases, such as confident phrasing that outstrips a model's actual accuracy, can help reduce overreliance. Changes to reward functions and human-feedback protocols can keep models from systematically favoring expressions of certainty over accuracy.
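The paper does not specify a reward function. One standard way to stop a reward from favoring certainty over accuracy is a proper scoring rule, and the sketch below swaps in the Brier score as an illustration. It assumes the model reports a numeric confidence in [0, 1] alongside each answer, and the function names are hypothetical. Under this reward, the expected score is highest when the stated confidence equals the model's true accuracy.

```python
def brier_reward(confidence: float, correct: bool) -> float:
    """Negative Brier score: 0 is best; -1 is a fully confident wrong answer."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    outcome = 1.0 if correct else 0.0
    return -((confidence - outcome) ** 2)


def expected_reward(confidence: float, accuracy: float) -> float:
    """Expected reward when the answer is actually correct with probability `accuracy`."""
    return (accuracy * brier_reward(confidence, True)
            + (1 - accuracy) * brier_reward(confidence, False))


if __name__ == "__main__":
    accuracy = 0.6  # assumed true accuracy on some class of questions
    grid = [i / 100 for i in range(101)]
    best = max(grid, key=lambda p: expected_reward(p, accuracy))
    print(best)  # 0.6: reporting the true accuracy maximizes expected reward
    # Always claiming certainty scores worse than honest confidence.
    print(expected_reward(1.0, accuracy) < expected_reward(0.6, accuracy))  # True
```

The design choice is the scoring rule's "properness." A model that is right 60% of the time earns more, on average, by saying so than by always sounding certain. Integrating such a term into an actual RLHF pipeline would require further assumptions not covered here.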

System-Level Mitigations

Interface designs that incorporate cognitive forcing functions (for example, asking users to commit to an answer before seeing the model's) and mixed-initiative controls can help calibrate user reliance. Friction mechanisms and visual cues can slow users down at critical decisions and reduce uncritical acceptance of AI outputs.
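A minimal sketch of how a cognitive forcing function, a visual cue, and a friction step might be combined in one decision flow follows. It does not model mixed-initiative controls. Everything here is hypothetical: the names `Advice`, `Decision`, and `forced_decision`, the 0.7 warning threshold, and the policy of reverting a switch that comes with an empty justification. The flow also assumes the model exposes a confidence value. User interaction is passed in as callables so the flow can be tested without a real interface.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Advice:
    answer: str
    confidence: float  # assumed model-reported confidence in [0, 1]


@dataclass
class Decision:
    initial: str
    final: str
    justification: Optional[str]
    shown_warning: bool


def forced_decision(
    ask_initial: Callable[[], str],
    ask_final: Callable[[Advice, bool], str],
    ask_justification: Callable[[], str],
    advice: Advice,
    warn_below: float = 0.7,  # hypothetical threshold for the uncertainty cue
) -> Decision:
    # 1. Cognitive forcing: collect the user's own answer before revealing advice.
    initial = ask_initial()
    # 2. Visual cue: flag low-confidence advice when it is revealed.
    warn = advice.confidence < warn_below
    final = ask_final(advice, warn)
    # 3. Friction: switching to the AI's answer requires a written justification.
    justification = None
    if final != initial and final == advice.answer:
        justification = ask_justification()
        if not justification.strip():
            # Hypothetical policy: an empty justification cancels the switch.
            final = initial
    return Decision(initial, final, justification, warn)


if __name__ == "__main__":
    d = forced_decision(
        ask_initial=lambda: "benign",
        ask_final=lambda adv, warn: adv.answer,  # user accepts the advice
        ask_justification=lambda: "",            # but gives no reason
        advice=Advice("malignant", 0.55),
    )
    print(d.final, d.shown_warning)  # benign True
```

Reverting on an empty justification is only one possible friction policy. A real interface might instead re-prompt the user or simply log the switch for later review.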

User-Level Mitigations

Educating users about the capabilities and limitations of AI can support more informed interaction. AI literacy initiatives, possibly using simplified summaries of a model's expected performance, can help users calibrate their trust appropriately.

Conclusion

Measuring and mitigating overreliance on LLMs is crucial for integrating them successfully into human processes. A proactive approach that combines advances in measurement with effective mitigation strategies can help ensure that LLMs enhance rather than diminish human capabilities. Research in this area can make AI more human-compatible and help it realize its potential as a beneficial technological partner.