Embodied Navigation Foundation Model

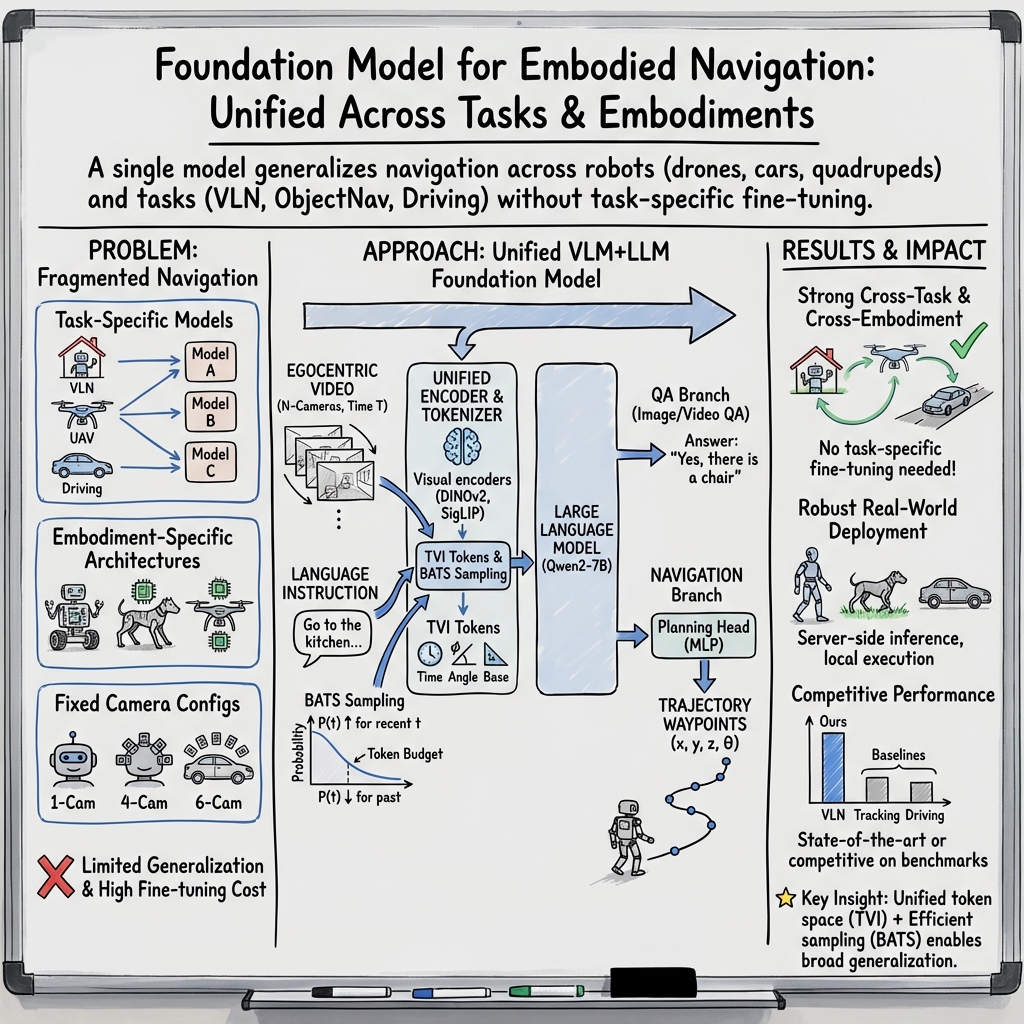

Abstract: Navigation is a fundamental capability in embodied AI, representing the intelligence required to perceive and interact within physical environments following language instructions. Despite significant progress in large Vision-Language Models (VLMs), which exhibit remarkable zero-shot performance on general vision-language tasks, their generalization ability in embodied navigation remains largely confined to narrow task settings and embodiment-specific architectures. In this work, we introduce a cross-embodiment and cross-task Navigation Foundation Model (NavFoM), trained on eight million navigation samples that encompass quadrupeds, drones, wheeled robots, and vehicles and span diverse tasks such as vision-and-language navigation, object searching, target tracking, and autonomous driving. NavFoM employs a unified architecture that processes multimodal navigation inputs from varying camera configurations and navigation horizons. To accommodate diverse camera setups and temporal horizons, NavFoM incorporates identifier tokens that embed camera view information of embodiments and the temporal context of tasks. Furthermore, to meet the demands of real-world deployment, NavFoM controls all observation tokens using a dynamically adjusted sampling strategy under a limited token length budget. Extensive evaluations on public benchmarks demonstrate that our model achieves state-of-the-art or highly competitive performance across multiple navigation tasks and embodiments without requiring task-specific fine-tuning. Additional real-world experiments further confirm the strong generalization capability and practical applicability of our approach.
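
To make the idea of identifier tokens more concrete, the following is a minimal sketch of how a camera's viewing angle and a frame's time step could be turned into a small feature vector. It is an illustration under stated assumptions, not NavFoM's actual token design: the function name `identifier_features`, the `dim` and `max_period` parameters, and the cos/sin plus sinusoidal layout are all hypothetical choices made for this example.

```python
import math


def identifier_features(azimuth_rad, time_index, dim=8, max_period=10000.0):
    """Return a toy identifier vector for one (camera view, time step) pair.

    Hypothetical layout (an assumption, not the paper's formulation):
    [cos(azimuth), sin(azimuth)] followed by sinusoidal features of the
    time index at geometrically spaced frequencies.
    """
    if dim < 4 or dim % 2 != 0:
        raise ValueError("dim must be an even number >= 4")

    # Encoding the angle as (cos, sin) makes 0 and 2*pi map to the same view.
    view_part = [math.cos(azimuth_rad), math.sin(azimuth_rad)]

    # The remaining dimensions describe the time step.
    n_freq = (dim - 2) // 2
    time_part = []
    for k in range(n_freq):
        freq = 1.0 / (max_period ** (k / n_freq))
        time_part.append(math.sin(time_index * freq))
        time_part.append(math.cos(time_index * freq))

    return view_part + time_part


# Example: a front camera (azimuth 0) and a rear camera (azimuth pi) at step 3.
front = identifier_features(0.0, 3)
rear = identifier_features(math.pi, 3)
```

In this sketch the two cameras share the same time features and differ only in the first two entries, which is the property that lets one model tell views and time steps apart regardless of how many cameras a robot carries.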

Practical Applications of the Embodied Navigation Foundation Model (NavFoM)

Below are concrete applications derived from the paper’s findings and methods: temporal-viewpoint indicator (TVI) tokens, budget-aware temporal sampling (BATS), unified trajectory prediction, and cross-embodiment/data co-tuning. Each item lists the target sectors, what the application enables, candidate tools or workflows, and the dependencies or assumptions that affect feasibility.

Immediate Applications

- Cross-embodiment waypoint planner for robots (ROS2 plugin)

- Sectors: robotics, logistics/warehousing, healthcare (hospitals), hospitality, security

- What it enables: Drop-in “trajectory head” that consumes RGB video + language and outputs 2D/3D waypoints; plugs into existing local planners (DWA, TEB, model predictive control) via ROS2

- Tools/products: NavFoM ROS2 planner node; “Trajectory Planning-as-a-Service” inference microservice; SDK for wheeled, quadruped, humanoid platforms

- Dependencies/assumptions: GPU at the edge or low-latency cloud inference (about 0.5 s per trajectory under a ~1.6k-token budget), calibrated cameras (single- or multi-view), a reliable local planner for safety, network QoS if inference is remote, site safety procedures

- Language-to-navigation interface for AMRs

- Sectors: warehousing, manufacturing, retail backrooms

- What it enables: Voice/text commands (“go to aisle 12, bay B”) without task-specific fine-tuning; faster deployment in new layouts

- Tools/workflows: Speech-to-text front-end, waypoint executor, fleet tasking UI

- Dependencies/assumptions: Basic topology knowledge (or geo-fenced zones), obstacle detection stack, operations guardrails

- Human-following and crowd navigation for service robots

- Sectors: hospitals, malls, airports, events

- What it enables: Target-following from natural descriptions (“follow the nurse in blue”) leveraging the active tracking capability

- Tools/workflows: Person-selection UI, privacy-aware logging, proximity control via local planner

- Dependencies/assumptions: Person-identification policy compliance, safety sensors (e-stop/lidar), crowd-density limits

- Multiview perception upgrade using TVI tokens

- Sectors: robotics, security/surveillance analytics, smart facilities

- What it enables: Robust multi-camera fusion in VLM systems; consistent tokenization of arbitrary camera rigs with azimuth/time awareness

- Tools/products: TVI tokenizer library; camera-angle metadata generator; synchronization utility

- Dependencies/assumptions: Known/estimated camera orientations (azimuth) and time-sync; integration with existing vision backbones

- Predictable low-latency video-LM inference with BATS

- Sectors: edge AI, robotics, streaming analytics, mobile apps

- What it enables: Budget-aware temporal sampling to keep token counts, latency, and memory bounded while retaining recent context

- Tools/products: BATS module for popular VLM toolkits; streaming inference wrappers; telemetry to auto-tune token budgets

- Dependencies/assumptions: Target latency/SLA specified; token budget selection; compatibility with chosen LLM/VLM

- Drone “follow-me” and scripted filming in controlled areas

- Sectors: media/entertainment, sports training, R&D labs

- What it enables: Language-driven aerial tracking and waypointing (“orbit runner, then follow to finish line”)

- Tools/workflows: Offboard planner + PX4/ArduPilot bridge; geo-fence and failsafe configuration

- Dependencies/assumptions: Local regulations, GPS/visual odometry reliability, clear VLOS operations

- Telepresence and delivery robot autopilot in buildings/campuses

- Sectors: enterprise IT/facilities, universities, hotels

- What it enables: Semi-autonomous waypointing from chat instructions; operator “co-pilot” reduces manual driving

- Tools/workflows: Operator console with language prompt, building-zone constraints, fallback teleop

- Dependencies/assumptions: Reliable connectivity, elevator/door integration policies, human-in-the-loop for safety

- QA-augmented navigation logs for ops transparency

- Sectors: robotics operations, customer support

- What it enables: Use the model’s QA branch to answer “what did the robot see?” during incidents; generate language summaries of navigation episodes

- Tools/workflows: Post-hoc analysis dashboard; explainability reports

- Dependencies/assumptions: Data retention and privacy guardrails; timestamped logs and video

- Training acceleration and data pipelines for labs

- Sectors: academia, industrial research

- What it enables: Feature caching + multi-task co-tuning to scale navigation experiments; expand datasets with web-video traces

- Tools/products: Visual cache DB, dataset ingestion scripts, simulator bridges (Habitat, EVT-Bench, OpenUAV)

- Dependencies/assumptions: Dataset licenses, H100/A100-class compute for large-scale fine-tuning

- Fleet cross-embodiment transfer in one facility

- Sectors: third-party logistics, robotics solution integrators

- What it enables: One navigation model serving multiple robot types (AMRs, quadrupeds) with consistent instruction interface

- Tools/workflows: Unified fleet skill library; per-robot low-level controller adapters

- Dependencies/assumptions: Standardized waypoint interface; safety recertification across embodiments
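
The budget-aware temporal sampling item above can be illustrated with a short sketch: given a fixed token budget, decide which past time steps to keep so that the total stays bounded while recent frames are sampled more densely. This is a deterministic stand-in written for illustration, not the paper's BATS formula. The function name `sample_frames`, the `tokens_per_step` parameter (assumed to already include all cameras), and the `recency_power` spacing rule are hypothetical.

```python
def sample_frames(num_frames, tokens_per_step, token_budget, recency_power=2.0):
    """Pick frame indices whose total token cost fits within token_budget.

    Assumption: each kept time step costs tokens_per_step tokens. Spacing
    follows f(u) = 1 - (1 - u) ** recency_power, which packs samples more
    densely near the most recent frame when recency_power > 1.
    """
    if num_frames <= 0:
        return []
    max_frames = token_budget // tokens_per_step
    if max_frames <= 0:
        raise ValueError("token_budget is too small to hold a single frame")

    # Short histories fit entirely, so nothing is dropped.
    if num_frames <= max_frames:
        return list(range(num_frames))

    last = num_frames - 1
    if max_frames == 1:
        return [last]  # always keep the newest observation

    picked = set()
    for i in range(max_frames):
        u = i / (max_frames - 1)
        picked.add(round(last * (1.0 - (1.0 - u) ** recency_power)))

    # Rounding may merge neighbours; the result can only be under budget.
    return sorted(picked)


# Example: 100 time steps, 64 tokens per step, 640-token budget.
kept = sample_frames(100, 64, 640)
```

With these example numbers at most 10 of the 100 steps are kept, the newest step is always among them, and the gaps between kept steps shrink toward the present. Because the output size depends only on the budget and not on the episode length, latency and memory stay bounded as the navigation horizon grows.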

Long-Term Applications

- Language-native autonomous driving and shuttle operations

- Sectors: mobility/transport, campus shuttles, industrial sites

- What it enables: “Go to dock C via safe route” with unified planning from RGB; natural-language route negotiation

- Tools/products: Driver-assist copilot for low-speed domains, integration with HD-maps or mapless ops

- Dependencies/assumptions: Regulatory approval, redundant sensors (LiDAR/radar), rigorous safety cases, closed-course validation; the current driving results are open-loop or pseudo-simulation only

- Search-and-rescue with heterogeneous fleets

- Sectors: public safety, disaster response

- What it enables: Drones + UGVs follow language cues to search for targets; object search and target tracking outdoors/indoors

- Tools/workflows: Incident command UI with language prompts; multi-robot task allocation

- Dependencies/assumptions: Robust out-of-distribution generalization, comms in degraded networks, formal safety envelopes

- Household generalist navigation for assistive robots

- Sectors: consumer robotics, eldercare

- What it enables: “Bring me the red mug from the kitchen” combining VLN, object search, and person-following

- Tools/products: Home assistant stack with manipulation planner, multilingual instruction front-end

- Dependencies/assumptions: Reliable grasping/manipulation stack, privacy by design, adaptation to cluttered, dynamic homes

- Industrial and energy infrastructure inspection by instruction

- Sectors: energy/utilities, construction, mining

- What it enables: Language-driven routes for drones/UGVs to inspect turbines, substations, conveyors; anomaly-spotting prompts (“inspect for oil leaks along the line”)

- Tools/workflows: CMMS integration, visual report generation, digital twin overlays

- Dependencies/assumptions: Domain-specific fine-tuning, safety and EMI robustness, operating permits

- Retail floor automation via natural instructions

- Sectors: retail

- What it enables: Inventory rover navigates aisles on request; person-follow to assist customers; after-hours audits

- Tools/workflows: Store map integration or mapless constraints, SKU recognition linking

- Dependencies/assumptions: Customer privacy, dynamic obstacle policies, SKU/planogram perception stack

- Mapless or rapid “day-zero” deployment in new facilities

- Sectors: logistics, field services

- What it enables: Good-enough navigation without prior mapping; language constraints (“stay in receiving area”)

- Tools/workflows: Quickstart deployment playbooks, safety rails via virtual geofences

- Dependencies/assumptions: Conservative speed limits, strong obstacle avoidance, human oversight

- Human wayfinding assist using phone AR

- Sectors: accessibility, tourism, campuses

- What it enables: Phone camera + language interface suggests turn-by-turn trajectories in unfamiliar indoor spaces

- Tools/products: On-device VLM-lite with BATS; AR arrow overlays; haptic guidance

- Dependencies/assumptions: Robust localization (visual-inertial), accessibility/safety constraints, edge acceleration

- Corporate “navigation foundation” for multi-robot product lines

- Sectors: robotics OEMs, platform providers

- What it enables: One backbone model distilled to fit diverse SKUs (AMRs, drones, forklifts) with shared data flywheel

- Tools/workflows: Distillation/quantization pipeline, guardrail policies, continuous evaluation harness

- Dependencies/assumptions: IP-secure data pipeline, model governance, rigorous ODD definitions

- Standards and policy for embodied foundation navigation

- Sectors: policy, certification, insurance

- What it enables: Cross-embodiment benchmark suites, compute/token-budget disclosures, multi-camera privacy practices, incident reporting schemas

- Tools/workflows: Conformance testkits; scenario libraries; procurement checklists

- Dependencies/assumptions: Industry consortia participation, regulator engagement, shared datasets

- Continual learning from web-scale egocentric video

- Sectors: AI platforms, robotics vendors

- What it enables: Automated curation (Sekai-style) to expand coverage; self-improving navigation

- Tools/workflows: Data quality filters, drift detection, replay buffers

- Dependencies/assumptions: Label noise control, safety for updating deployed models, data licensing compliance

- Interactive shared autonomy with clarification dialogs

- Sectors: all robot deployments

- What it enables: Robot asks “Do you mean the red door on the left?”; combines QA and VLN for error recovery

- Tools/workflows: Conversation UIs, fallback behaviors, user-accessible logs

- Dependencies/assumptions: Dialogue reliability, UX design, safety overrides

- TVI-token standardization across vehicles/sensors

- Sectors: automotive, robotics, surveillance OEMs

- What it enables: Interoperable multi-camera metadata (angles/timestamps) for VLMs; plug-and-play camera rigs

- Tools/workflows: Camera registry formats, calibration-to-token exporters

- Dependencies/assumptions: Agreement on schemas, field calibration procedures

- Certified compute/resource safety cases using BATS

- Sectors: safety-critical robotics, automotive

- What it enables: Deterministic compute budgets under worst-case horizons; certifiable timing guarantees for perception-planning stacks

- Tools/workflows: Real-time inference profiling, token-budget certificates

- Dependencies/assumptions: End-to-end timing analysis, RTOS/accelerators, independent verification
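
Several items above rely on virtual geofences as a safety rail around model-predicted waypoints. A minimal sketch of that guard, assuming 2D waypoints in a shared map frame and a zone given as a simple polygon, is shown below. The helper names `point_in_polygon` and `truncate_at_geofence` are hypothetical and not part of any NavFoM release; the containment test is the standard ray-casting method.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies strictly inside the polygon.

    polygon is a list of (x, y) vertices in order. Points exactly on an
    edge may be classified either way, so keep a margin in practice.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be hit.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def truncate_at_geofence(waypoints, polygon):
    """Keep waypoints up to, but not including, the first one outside the zone."""
    kept = []
    for x, y in waypoints:
        if not point_in_polygon(x, y, polygon):
            break
        kept.append((x, y))
    return kept


# Example: a 10 m x 10 m receiving area and a trajectory that leaves it.
zone = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
path = [(1.0, 1.0), (5.0, 5.0), (12.0, 5.0), (6.0, 6.0)]
safe_path = truncate_at_geofence(path, zone)  # [(1.0, 1.0), (5.0, 5.0)]
```

Truncating at the first violation, rather than dropping only the offending point, avoids handing the local planner a path that jumps across a forbidden region.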

Notes on Cross-Cutting Assumptions and Risks

- Environmental diversity and domain shift: Some targets (agriculture, heavy industry, public roads) will need additional domain data and safety validation.

- Sensing stack: While the model uses RGB only, many deployments will require depth/LiDAR and redundant sensing for safety certification.

- Latency and compute: Meeting sub-200 ms loop times on embedded hardware may require model distillation, quantization, and aggressive BATS settings.

- Instruction interfaces: Robust speech/NLU and multilingual support may be necessary; ambiguity handling benefits from interactive clarification.

- Privacy and compliance: Multi-camera and person-tracking use cases need consent, retention limits, and on-device processing where possible.

- Safety and governance: Human-in-the-loop oversight, geofencing, and conservative speed/clearance policies are essential until thorough certification is achieved.
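
The interaction between latency and conservative speed policies noted above can be made concrete with basic stopping-distance kinematics. The sketch below is generic physics rather than anything taken from the paper: it assumes the robot travels at constant speed for the whole planning latency and then brakes at a constant deceleration. The function name `max_safe_speed` and the example clearance and deceleration values are hypothetical.

```python
import math


def max_safe_speed(clearance_m, latency_s, decel_mps2):
    """Largest speed v such that v * latency + v**2 / (2 * decel) <= clearance.

    Solving the quadratic for v gives
        v = decel * (-latency + sqrt(latency**2 + 2 * clearance / decel)).
    """
    if clearance_m < 0 or latency_s < 0 or decel_mps2 <= 0:
        raise ValueError("clearance and latency must be >= 0, decel must be > 0")
    return decel_mps2 * (
        -latency_s + math.sqrt(latency_s ** 2 + 2.0 * clearance_m / decel_mps2)
    )


# Example with illustrative numbers: 1 m clearance, 1 m/s^2 braking.
v_slow_loop = max_safe_speed(1.0, 0.5, 1.0)  # 0.5 s latency -> 1.0 m/s
v_fast_loop = max_safe_speed(1.0, 0.2, 1.0)  # 0.2 s latency allows a higher speed
```

Under these assumptions, cutting the loop time from 0.5 s to 0.2 s raises the permissible speed for the same clearance, which is one way to quantify the value of distillation, quantization, or a tighter token budget.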
