- The paper introduces GP-CA, a framework that quantifies and explains uncertainty in point cloud registration using concept attribution.

- GP-CA uses a DGCNN backbone and a multi-class Gaussian Process Classifier to map latent embeddings to uncertainty concepts, achieving over 93% attribution accuracy.

- The paper demonstrates strong label efficiency and actionable recovery strategies in robotic perception through active learning and targeted mitigation policies.

Human-Interpretable Uncertainty Explanations for Point Cloud Registration

Introduction and Motivation

Point cloud registration is a foundational problem in robotic perception, underpinning tasks such as SLAM, 3D reconstruction, and 6-DoF object pose estimation. The Iterative Closest Point (ICP) algorithm and its variants are widely adopted for this purpose, but their reliability is compromised under conditions of sensor noise, poor initialization, and partial overlap due to occlusion. While probabilistic methods have advanced the quantification of registration uncertainty, they typically lack mechanisms to attribute uncertainty to specific, actionable causes. This paper introduces Gaussian Process Concept Attribution (GP-CA), a framework that not only quantifies uncertainty in point cloud registration but also provides human-interpretable explanations by attributing uncertainty to semantic concepts such as noise, pose error, and overlap. The approach integrates active learning to adaptively discover new sources of uncertainty, enabling robust and explainable perception in real-world robotic systems.

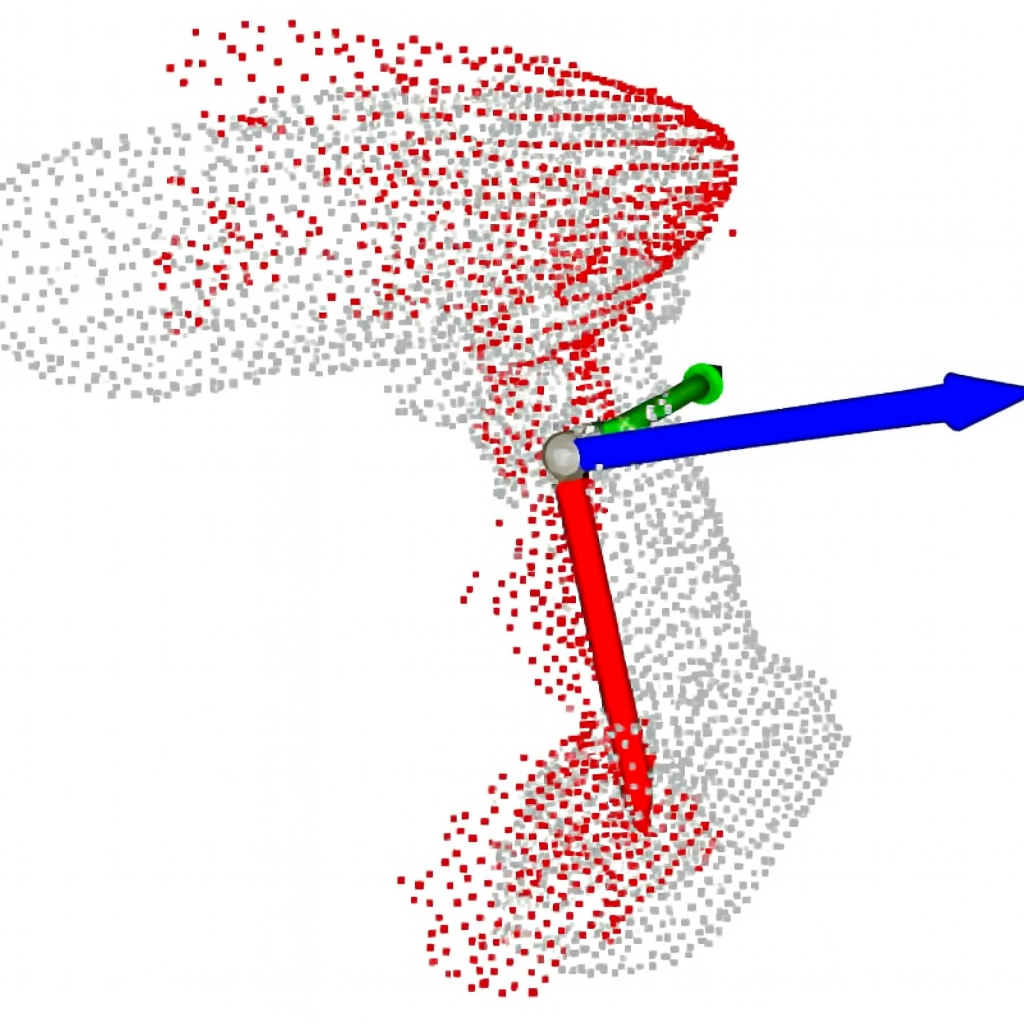

Figure 1: Pose estimation via point-cloud registration. (a) The target object (mustard bottle) is occluded, so ICP-based 6-DoF pose estimation fails. (b) GP-CA attributes the failure primarily to occlusion. (c) The induced recovery policy is to change the viewpoint. (d) The new view reveals the bottle, ICP succeeds, and the bottle pose is estimated.

GP-CA Architecture and Methodology

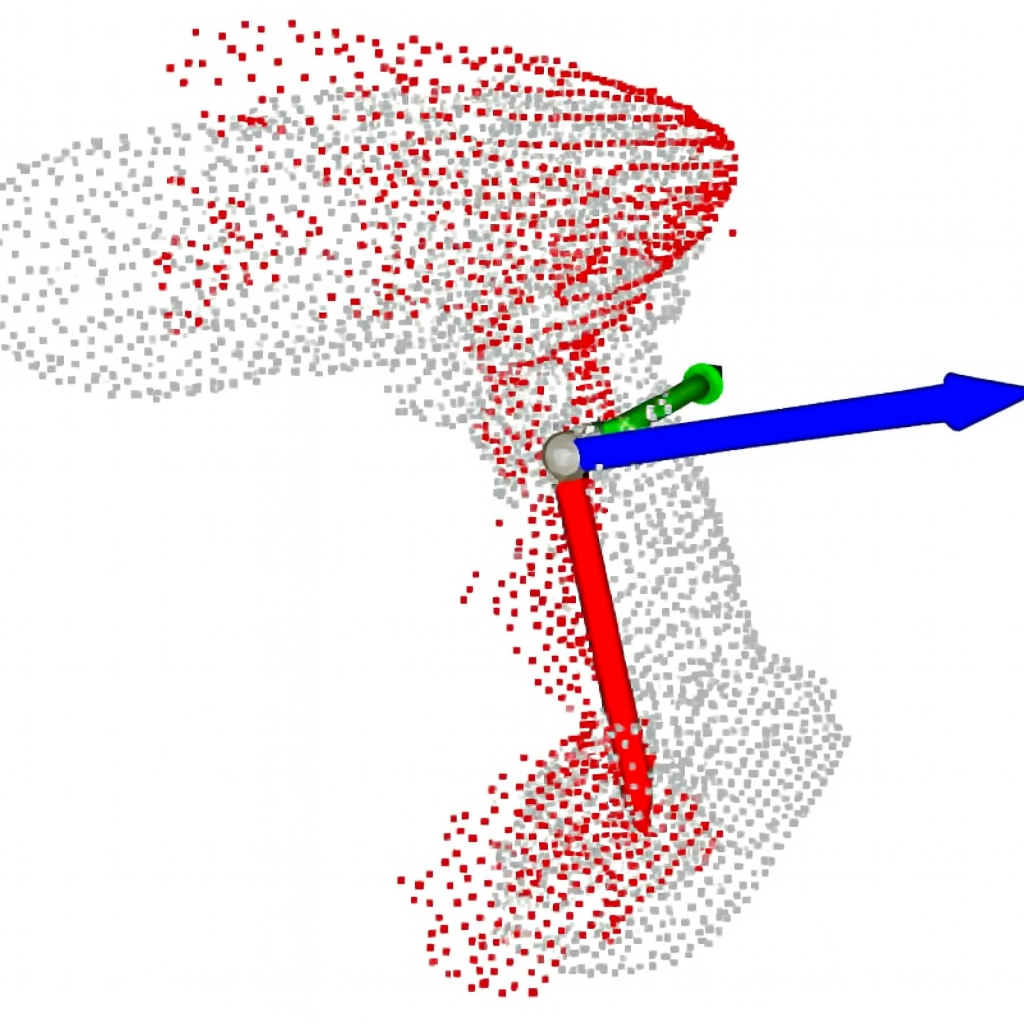

GP-CA operates by first refining the pose estimate using ICP, then encoding the aligned point cloud with a Dynamic Graph CNN (DGCNN) to obtain a latent representation. A multi-class Gaussian Process Classifier (GPC) maps this embedding to probabilities over predefined uncertainty concepts, with associated epistemic variances. The system incorporates an active learning loop, leveraging Bayesian Active Learning by Disagreement (BALD) to query labels for instances with high uncertainty, thereby enabling rapid adaptation to novel failure modes.

Figure 2: GP-CA Overview: ICP refines the pose, DGCNN encodes the point cloud into a latent vector. A Gaussian process classifier outputs concept probabilities with associated uncertainty. An active learning loop queries labels when uncertainty is high and updates the classifier.
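To make the last stage of this pipeline concrete, the following is a minimal sketch of how concept probabilities and a per-concept variance could be obtained from a GP posterior over latent functions. It assumes a softmax link and independent Gaussian latents per concept, neither of which is stated in the summary above; the function name `concept_probabilities` and its parameters are hypothetical.

```python
import numpy as np

def concept_probabilities(latent_mean, latent_var, n_samples=200, seed=0):
    """Monte Carlo estimate of concept probabilities and their spread.

    latent_mean, latent_var: shape (C,), the GP posterior mean and variance
    of the latent function for each of C concepts at one test embedding.
    Assumption: softmax link and independent latents per concept.
    """
    rng = np.random.default_rng(seed)
    mean = np.asarray(latent_mean, dtype=float)
    std = np.sqrt(np.asarray(latent_var, dtype=float))
    # Draw latent values f ~ N(mean, var), independently per concept.
    f = mean + std * rng.standard_normal((n_samples, mean.shape[0]))
    # Numerically stable softmax applied to every sample.
    f -= f.max(axis=1, keepdims=True)
    p = np.exp(f)
    p /= p.sum(axis=1, keepdims=True)
    # Mean over samples: predictive probability per concept.
    # Variance over samples: spread caused by posterior (epistemic) uncertainty.
    return p.mean(axis=0), p.var(axis=0), p

probs, variances, samples = concept_probabilities(
    latent_mean=[2.0, 0.0, -1.0],   # e.g. noise, pose error, overlap
    latent_var=[0.1, 0.1, 0.1],
)
```

A wider latent posterior (larger `latent_var`) produces more disagreement among the sampled probability vectors, which is the signal an acquisition function such as BALD relies on.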

The DGCNN backbone is pretrained for concept classification and then frozen, ensuring efficient inference. The GPC is trained using variational inference with inducing points, enabling scalable posterior sampling and uncertainty quantification. The active learning loop selects informative and diverse samples for annotation, using BALD scores and k-means clustering to maximize label efficiency.
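The selection step described above can be sketched as follows. BALD is computed in its standard form (entropy of the mean prediction minus the mean entropy of sampled predictions); the way it is combined with k-means here (cluster the top-scoring candidates, then take the best one per cluster) is an illustrative assumption, as are the names `select_batch` and `pool_factor`. A small NumPy k-means is used so the sketch has no extra dependencies.

```python
import numpy as np

def bald_scores(prob_samples, eps=1e-12):
    """prob_samples: (S, N, C) class probabilities from S posterior samples."""
    p = np.asarray(prob_samples, dtype=float)
    mean_p = p.mean(axis=0)                                        # (N, C)
    predictive_entropy = -(mean_p * np.log(mean_p + eps)).sum(axis=1)
    expected_entropy = -(p * np.log(p + eps)).sum(axis=2).mean(axis=0)
    return predictive_entropy - expected_entropy                   # (N,)

def kmeans_labels(x, k, n_iter=20, seed=0):
    """Plain Lloyd's algorithm; returns a cluster index for each row of x."""
    x = np.asarray(x, dtype=float)
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(n_iter):
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = x[labels == j].mean(axis=0)
    return labels

def select_batch(embeddings, prob_samples, batch_size, pool_factor=5):
    """Pick informative (high BALD) and diverse (one per cluster) samples.

    May return fewer than batch_size indices if a cluster ends up empty.
    """
    embeddings = np.asarray(embeddings, dtype=float)
    scores = bald_scores(prob_samples)
    # Candidate pool: the highest-scoring samples, best first.
    pool = np.argsort(scores)[::-1][: batch_size * pool_factor]
    labels = kmeans_labels(embeddings[pool], k=min(batch_size, len(pool)))
    chosen = []
    for j in np.unique(labels):
        members = pool[labels == j]
        chosen.append(int(members[np.argmax(scores[members])]))
    return chosen
```

Clustering only the high-scoring pool keeps the batch from filling up with near-duplicate embeddings that all share the same kind of uncertainty.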

Figure 3: Pipeline with online inference (top) and active learning (bottom). Online inference computes concept scores and variances; active learning selects and annotates high-uncertainty samples for GPC retraining.

Experimental Evaluation

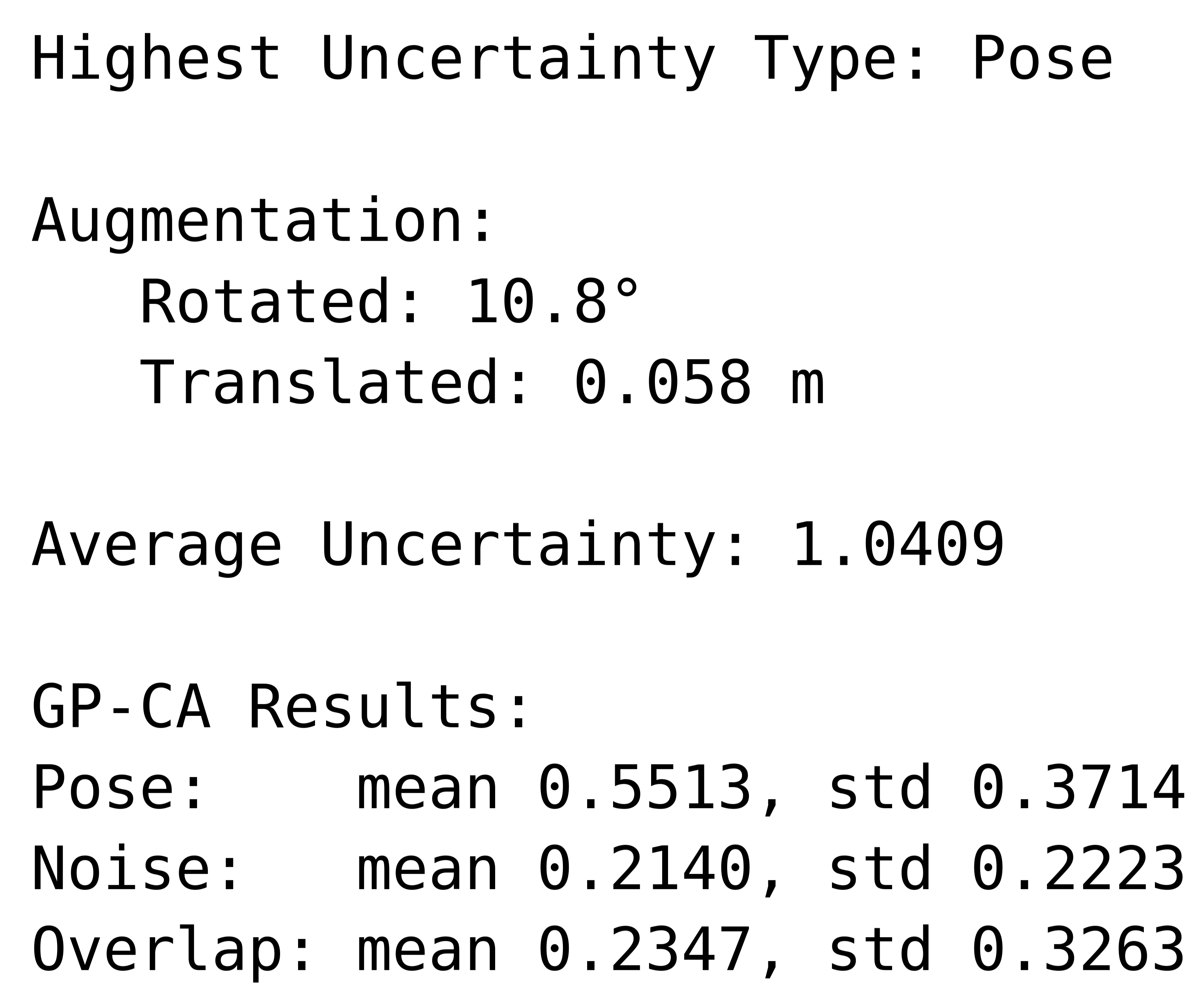

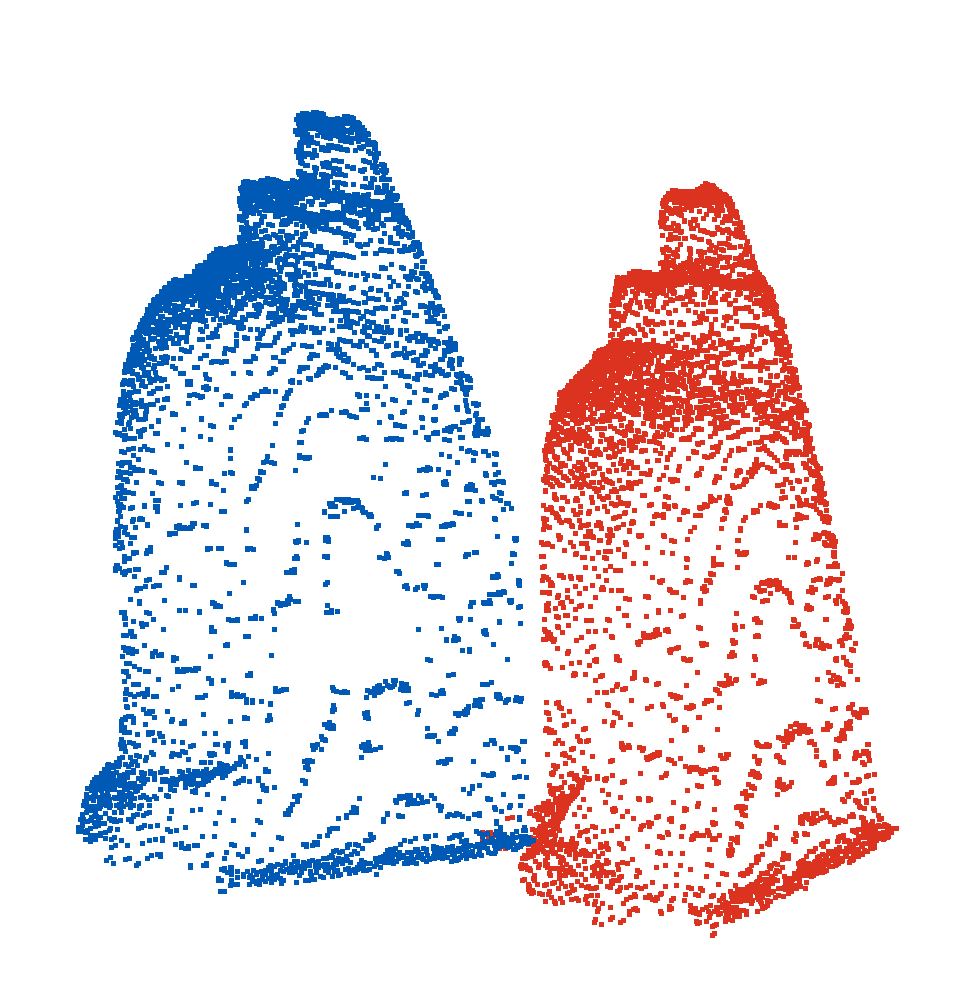

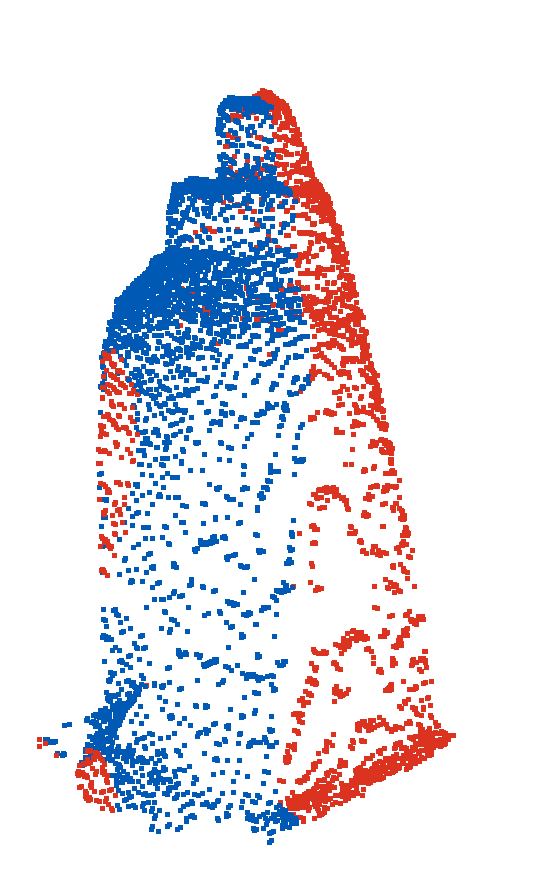

GP-CA is validated on three public datasets (Coffee Cup, LINEMOD, YCB) and a custom real-world RGB-D dataset. Controlled perturbations are applied to generate point cloud pairs exhibiting isolated uncertainty sources: Gaussian noise, random pose transformations, and partial overlap. GP-CA achieves near-perfect attribution accuracy across datasets, with per-concept accuracies consistently above 93%. In contrast, feature attribution baselines such as SHAP and Sensitivity Analysis (SA) yield substantially lower accuracy (7.9–38.3%) and incur orders-of-magnitude higher computational cost per sample.
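As an illustration of how such isolated perturbations might be generated, here is a minimal NumPy sketch. The magnitudes, the half-space cropping rule for partial overlap, and all function names are assumptions for illustration; the summary does not specify the actual perturbation protocol.

```python
import numpy as np

def add_gaussian_noise(points, sigma, rng):
    """Noise concept: jitter every point with isotropic Gaussian noise."""
    return points + rng.normal(scale=sigma, size=points.shape)

def random_rigid_transform(points, max_angle_rad, max_shift, rng):
    """Pose-error concept: apply a random rotation and translation."""
    axis = rng.standard_normal(3)
    axis /= np.linalg.norm(axis)
    angle = rng.uniform(-max_angle_rad, max_angle_rad)
    # Rodrigues' formula: rotation matrix from a unit axis and an angle.
    k = np.array([[0.0, -axis[2], axis[1]],
                  [axis[2], 0.0, -axis[0]],
                  [-axis[1], axis[0], 0.0]])
    rotation = np.eye(3) + np.sin(angle) * k + (1.0 - np.cos(angle)) * (k @ k)
    shift = rng.uniform(-max_shift, max_shift, size=3)
    return points @ rotation.T + shift, rotation, shift

def crop_partial_overlap(points, keep_fraction, rng):
    """Overlap concept: keep only the points on one side of a random plane."""
    direction = rng.standard_normal(3)
    direction /= np.linalg.norm(direction)
    depth = points @ direction
    n_keep = max(1, int(round(keep_fraction * len(points))))
    return points[np.argsort(depth)[:n_keep]]

rng = np.random.default_rng(0)
cloud = rng.uniform(-1.0, 1.0, size=(500, 3))      # stand-in for a real scan
noisy = add_gaussian_noise(cloud, sigma=0.01, rng=rng)
moved, R, t = random_rigid_transform(cloud, np.pi / 6, 0.1, rng)
partial = crop_partial_overlap(cloud, keep_fraction=0.6, rng=rng)
```

Applying exactly one perturbation per pair gives each sample a single ground-truth concept label, which is what makes per-concept attribution accuracy measurable.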

Active learning experiments demonstrate that GP-CA, using BALD-based selection, requires only 12–20 labeled samples to reach 90% attribution accuracy when integrating a new concept, compared to 350–550 samples for random selection. This strong label efficiency is maintained in real-world settings, where GP-CA achieves 85.2% overall accuracy despite increased scene complexity and concept co-occurrence.

Ablation studies confirm the superiority of the DGCNN backbone over PointNet, PointNet++, and PointNeXt, with learned embeddings yielding higher separability and calibration. The multi-class GPC provides well-calibrated uncertainty estimates that track distributional shift, outperforming Random Forest and SVM baselines in uncertainty diagnostics (Spearman correlation, coverage-risk AUC, ECE).
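Of the diagnostics listed above, expected calibration error (ECE) is the simplest to compute. The sketch below uses the common equal-width binning definition; the bin count and the function name are illustrative choices, not values taken from the paper.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: sample-weighted mean gap between accuracy and confidence per bin.

    confidences: top-class probability of each prediction, in [0, 1].
    correct: 1 if the prediction was right, else 0.
    """
    conf = np.asarray(confidences, dtype=float)
    hit = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Bin b covers (edges[b], edges[b + 1]]; a confidence of 0 falls in bin 0.
    bin_ids = np.clip(np.digitize(conf, edges[1:-1], right=True), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(hit[mask].mean() - conf[mask].mean())
            ece += mask.mean() * gap      # weight by the share of samples in the bin
    return float(ece)

# Four predictions at 0.95 confidence, only two of them correct:
# accuracy 0.5 against confidence 0.95 gives a gap of about 0.45.
overconfident = expected_calibration_error([0.95] * 4, [1, 1, 0, 0])
```

A well-calibrated classifier drives this value toward zero, because within every bin the fraction of correct predictions matches the stated confidence.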

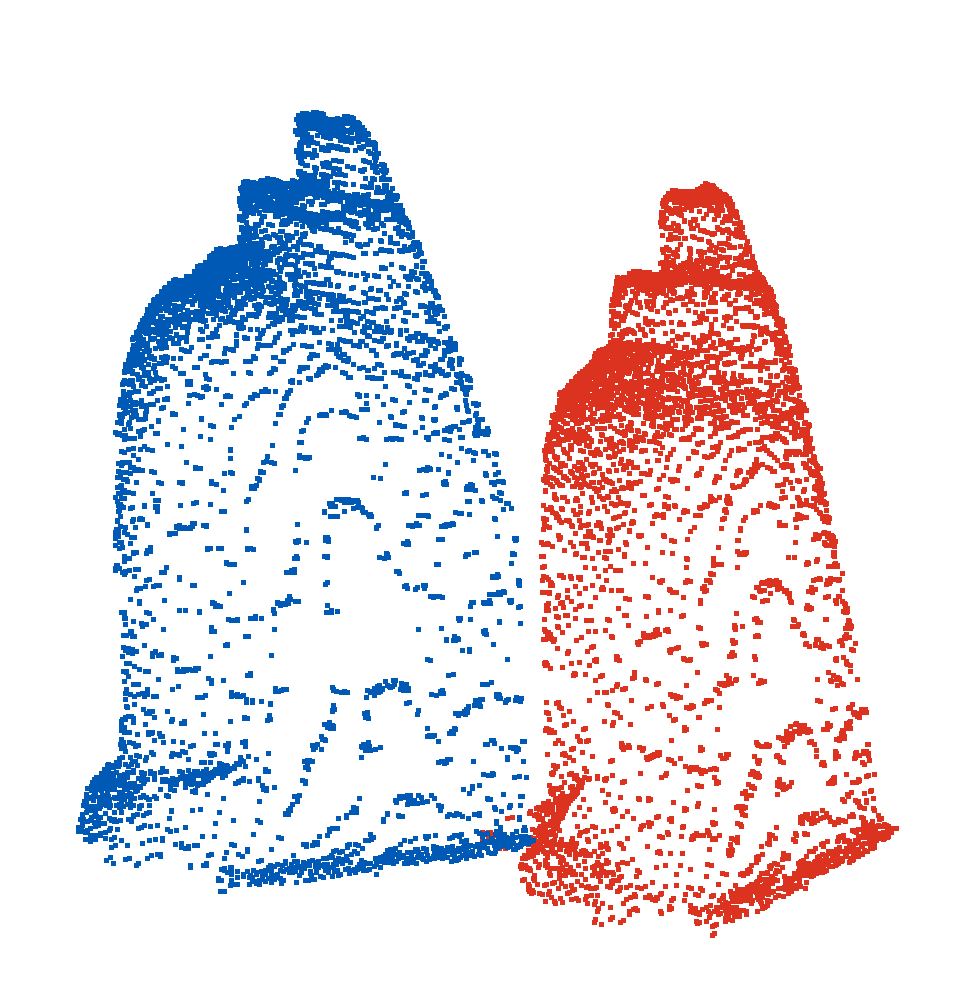

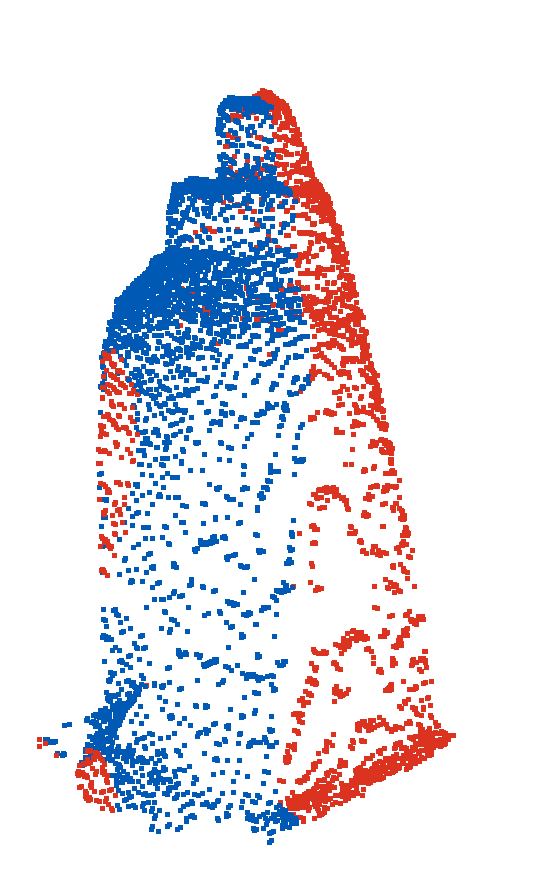

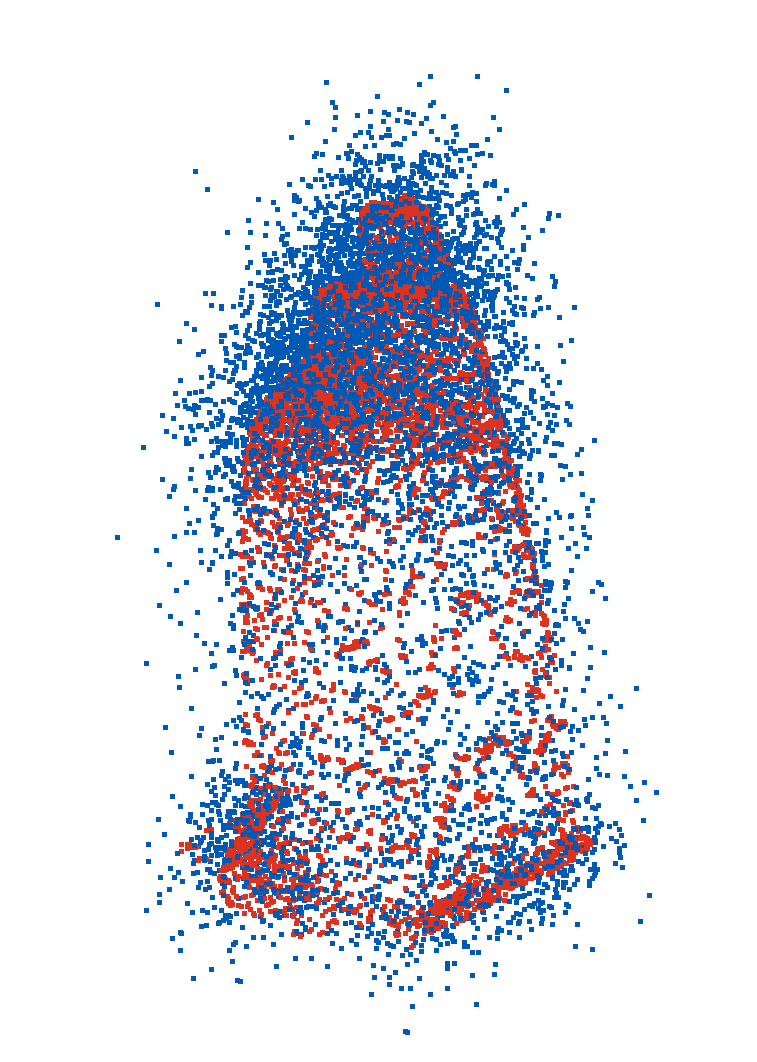

Figure 4: Visualization of noise-induced perturbation in point cloud registration.

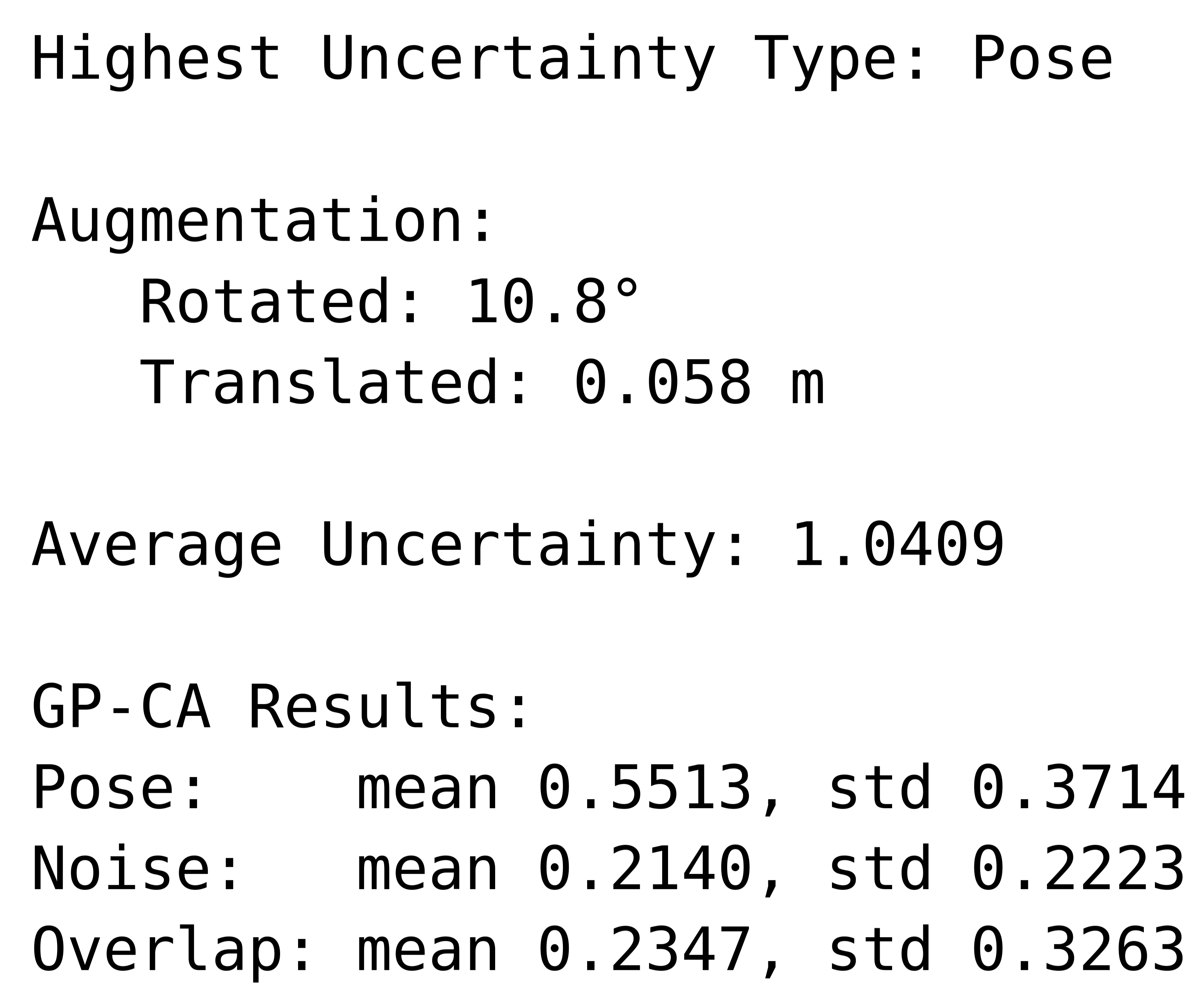

Figure 5: Real-robot validation example: (a) Input and ROI selection, (b) initial pose estimate, (c) ICP alignment with axes, (d) concept attribution with ground truth augmentation and GP-CA concept scores.

Runtime analysis shows that the end-to-end pipeline is dominated by ICP and DGCNN inference (4.2 s per registration), with concept attribution and uncertainty scoring adding negligible overhead (~25 ms). GPC retraining is lightweight and can be performed asynchronously.

Actionable Uncertainty Explanations and Robotic Recovery

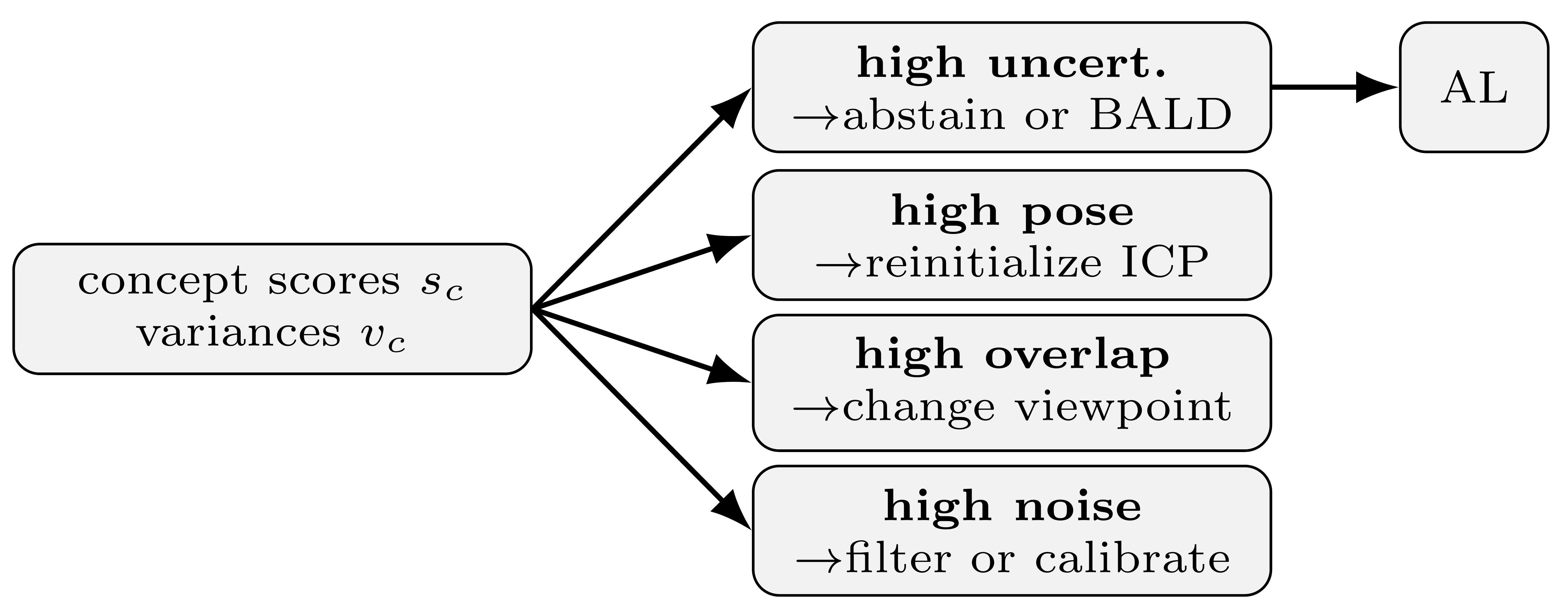

GP-CA enables targeted recovery actions in robotic perception by mapping concept scores and variances to mitigation strategies. For example, a dominant pose-error concept prompts ICP reinitialization, a dominant overlap concept (insufficient overlap, for example under occlusion) triggers a viewpoint change, and a dominant noise concept leads to sensor filtering or calibration. When uncertainty remains unresolved, the system abstains and queues the instance for active learning. This closed-loop integration of explainable uncertainty and action selection enhances robustness in dynamic and unstructured environments.

Figure 6: Mitigation mapping from concept outputs to actions. From the concept scores and variances, the system selects one of four branches: abstain/BALD, reinitialize ICP, change viewpoint, or filter/calibrate.
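The four-branch mapping described above could be expressed as a small rule-based policy. The sketch below is one possible reading: the thresholds `var_threshold` and `min_score`, the concept keys, and the action names are all hypothetical, since the summary does not give the actual decision rule.

```python
# Hypothetical concept-to-action table following the four branches above.
ACTIONS = {
    "pose_error": "reinitialize_icp",
    "overlap": "change_viewpoint",
    "noise": "filter_or_calibrate",
}
ABSTAIN = "abstain_and_queue_for_labeling"

def select_action(scores, variances, var_threshold=0.05, min_score=0.5):
    """Map concept scores and variances to one mitigation action.

    scores, variances: dicts keyed by concept name.
    Thresholds are illustrative placeholders, not values from the paper.
    """
    top = max(scores, key=scores.get)
    # Abstain when the classifier is unsure about the dominant concept,
    # or when no concept clearly dominates.
    if variances[top] > var_threshold or scores[top] < min_score:
        return ABSTAIN
    # Unknown concepts also fall back to abstaining.
    return ACTIONS.get(top, ABSTAIN)

action = select_action(
    scores={"noise": 0.08, "pose_error": 0.12, "overlap": 0.80},
    variances={"noise": 0.01, "pose_error": 0.01, "overlap": 0.02},
)
```

With the example inputs, the overlap concept dominates with low variance, so the policy returns the viewpoint-change action, mirroring the occluded mustard-bottle scenario in Figure 1.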

Implications and Future Directions

GP-CA advances the state of explainable AI in geometric computer vision by providing actionable, human-interpretable uncertainty explanations for point cloud registration. The framework's sample efficiency, runtime performance, and adaptability to new concepts make it suitable for deployment in real-world robotic systems. However, the approach assumes a predefined concept set and single-label attribution per instance, which may limit expressiveness when several causes of uncertainty occur together. Future work could extend GP-CA to multi-label attribution, incorporate aleatoric uncertainty modeling, and explore integration with upstream and downstream perception modules.

Theoretically, GP-CA demonstrates the utility of concept-based explanations in bridging the gap between uncertainty quantification and decision-making in robotics. Practically, it enables robust perception and failure recovery, facilitating safer and more reliable autonomous systems. The methodology is extensible to other domains where interpretable uncertainty attribution is critical, such as medical imaging, autonomous driving, and industrial inspection.

Conclusion

GP-CA provides a principled framework for human-interpretable uncertainty explanations in point cloud registration, combining learned geometric representations, probabilistic classification, and active learning. The approach achieves high attribution accuracy, strong label efficiency, and practical runtime performance, outperforming representative baselines. By enabling targeted recovery actions and adaptive concept integration, GP-CA enhances the robustness and explainability of robotic perception systems. Future research should address multi-cause attribution, richer uncertainty modeling, and broader integration with autonomous decision-making pipelines.