- The paper introduces a novel spectral method that accurately decomposes predictive uncertainty into aleatoric and epistemic components.

- It leverages Von Neumann entropy and functional Bregman information through a two-stage sampling process to capture subtle semantic nuances.

- Empirical evaluations report that it outperforms state-of-the-art baselines in ambiguity detection and correctness prediction, supporting safer, more interpretable LLM deployments.

Fine-Grained Uncertainty Decomposition in LLMs: A Spectral Approach

Introduction

LLMs are increasingly applied across domains such as scientific research, politics, and medicine. As they are integrated into critical applications, it becomes crucial to quantify the uncertainty in their predictions accurately. This paper introduces a novel method called Spectral Uncertainty, which not only measures the magnitude of uncertainty but also decomposes it into aleatoric (stemming from input ambiguity) and epistemic (due to model limitations) components.

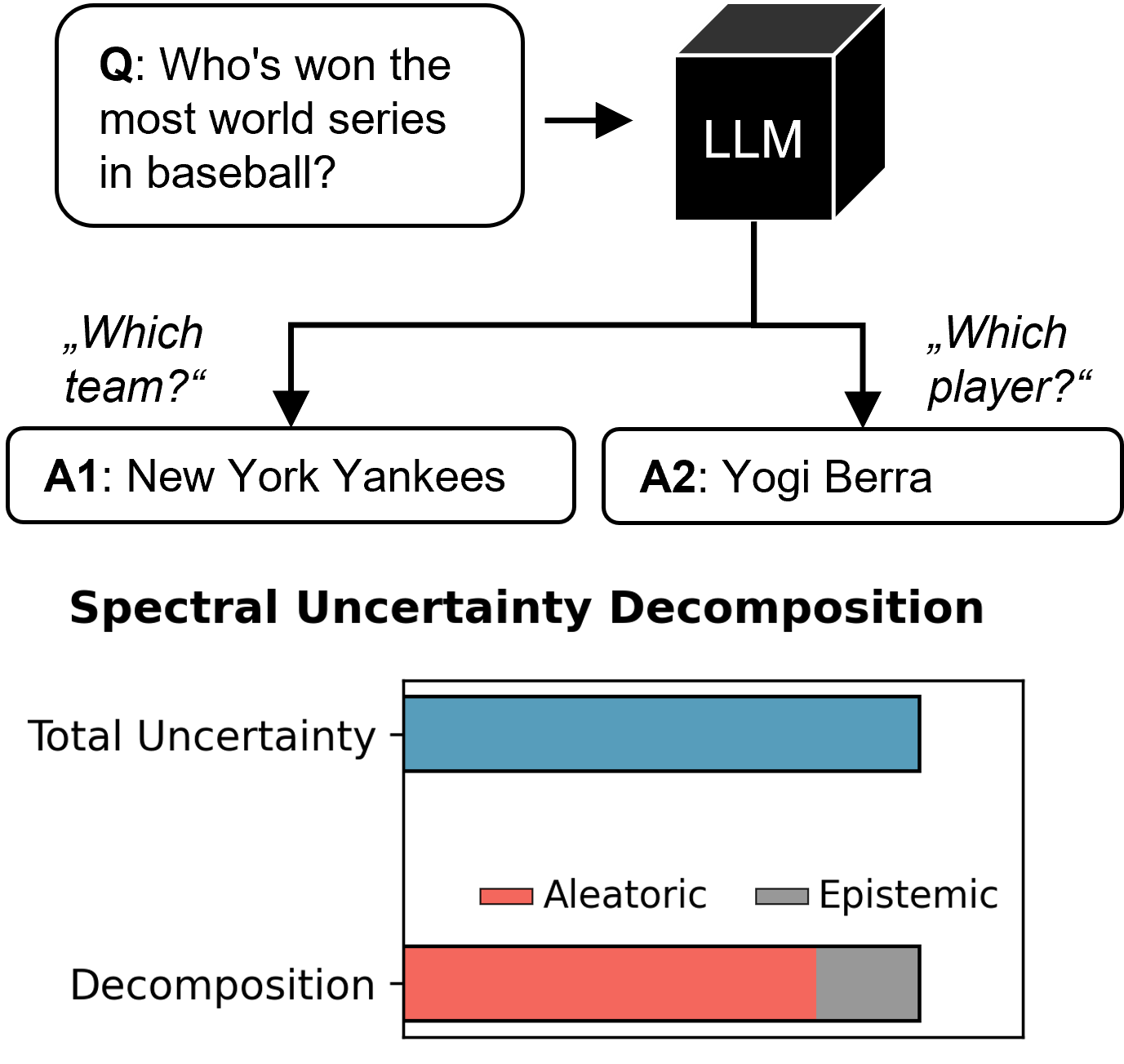

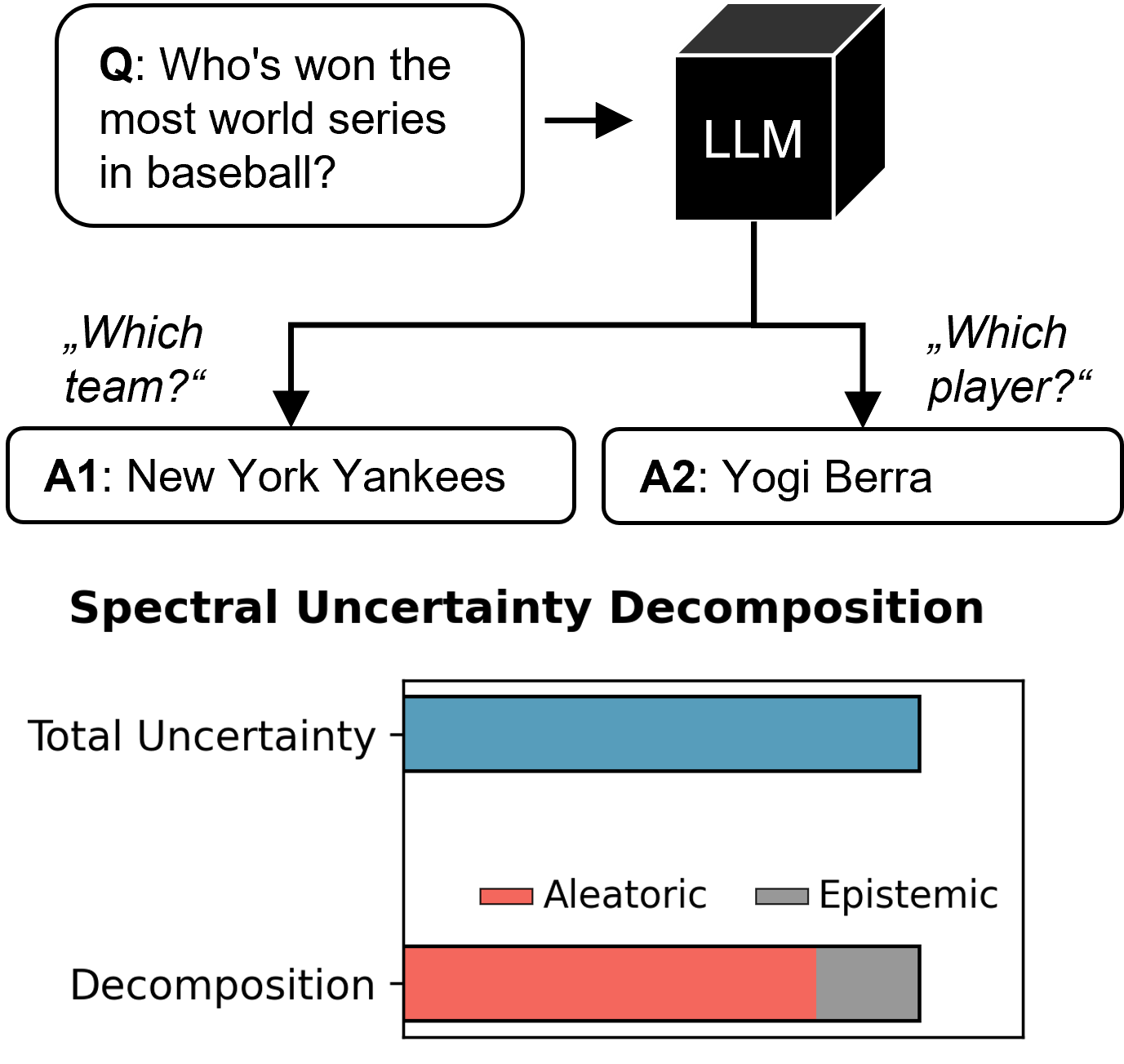

Figure 1: Illustration of our Spectral Uncertainty Decomposition. Given an ambiguous query like “Who’s won the most World Series in baseball?”, an LLM may interpret it in multiple valid ways (e.g., by team or by player), leading to high predictive uncertainty. Unlike existing methods, our spectral decomposition quantifies not just the magnitude but also the source of uncertainty, revealing in this case a dominant aleatoric component rooted in semantic ambiguity.

Methodology

Spectral Uncertainty decomposes the total predictive uncertainty into its epistemic and aleatoric components by leveraging Von Neumann entropy and functional Bregman information. The framework's novelty lies in grounding the decomposition in these two quantities, which lets uncertainty be separated into fine-grained components and improves both interpretability and practical utility.
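
For reference, the first line below is the standard definition of Von Neumann entropy for a positive semidefinite operator $\rho$ with unit trace and eigenvalues $\lambda_i$. The second line is an assumed form of the decomposition and is not taken from this summary: it reads the aleatoric term as the Bregman information (the Jensen gap of the entropy) across per-clarification operators $\rho_c$, and the epistemic term as the average entropy that remains once a clarification $c$ is fixed. The symbols $\rho$, $\rho_c$, and $\bar\rho$ are notation introduced here for illustration only.

```latex
\begin{align}
S(\rho) &= -\operatorname{Tr}(\rho \log \rho) = -\sum_i \lambda_i \log \lambda_i, \\
\underbrace{S(\bar\rho)}_{\text{total}}
  &= \underbrace{S(\bar\rho) - \mathbb{E}_c\, S(\rho_c)}_{\text{aleatoric (assumed)}}
   + \underbrace{\mathbb{E}_c\, S(\rho_c)}_{\text{epistemic (assumed)}},
  \qquad \bar\rho = \mathbb{E}_c\, \rho_c .
\end{align}
```

Because $S$ is concave, the aleatoric term is nonnegative under this reading.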

To operationalize this, the method employs a two-stage sampling process: first, multiple clarifications of the input question are generated; second, responses are sampled for each clarification. These responses are embedded into a continuous semantic space, and uncertainty is then decomposed through spectral analysis using a kernel function. This approach is particularly adept at capturing subtle semantic distinctions that traditional token-based techniques overlook.
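
The pipeline described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's implementation: it assumes the responses have already been sampled and embedded into an array of shape `(n_clarifications, n_responses, dim)`, it uses an RBF kernel (the summary does not say which kernel is used), and it assumes that the aleatoric part is the gap between the entropy of the pooled kernel matrix and the average per-clarification entropy. The function names `rbf_gram`, `von_neumann_entropy`, and `spectral_decomposition` and the `gamma` parameter are hypothetical.

```python
import numpy as np


def rbf_gram(x, gamma=1.0):
    """Gram matrix of an RBF kernel (an assumed kernel choice) for rows of x."""
    sq = np.sum(x ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (x @ x.T)
    # Clip tiny negative distances caused by floating-point round-off.
    return np.exp(-gamma * np.maximum(d2, 0.0))


def von_neumann_entropy(gram):
    """Entropy of the spectrum of a trace-normalized Gram matrix."""
    rho = gram / np.trace(gram)
    eig = np.linalg.eigvalsh(rho)
    eig = eig[eig > 1e-12]  # drop numerically zero eigenvalues (0 log 0 = 0)
    return float(-np.sum(eig * np.log(eig)))


def spectral_decomposition(emb, gamma=1.0):
    """Assumed decomposition: total = epistemic + aleatoric.

    emb has shape (n_clarifications, n_responses, dim): one row of response
    embeddings per clarification, i.e. the output of the two sampling stages.
    """
    n_clar, n_resp, dim = emb.shape
    # Total: entropy of the kernel matrix over all responses pooled together.
    total = von_neumann_entropy(rbf_gram(emb.reshape(-1, dim), gamma))
    # Epistemic (assumed): average entropy within a single clarification.
    epistemic = float(
        np.mean([von_neumann_entropy(rbf_gram(e, gamma)) for e in emb])
    )
    # Aleatoric (assumed): what remains, i.e. spread across clarifications.
    return {
        "total": total,
        "epistemic": epistemic,
        "aleatoric": total - epistemic,
    }
```

As a sanity check, consider two clarifications whose responses each collapse to a single point, with the two points far apart in embedding space. The sketch then attributes essentially all uncertainty to the aleatoric term (about log 2) and almost none to the epistemic term, which matches the intuition that the model is confident once the question is disambiguated.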

Empirical Evaluation

The empirical evaluation reports that Spectral Uncertainty outperforms state-of-the-art methods at measuring both aleatoric and total uncertainty across various models and benchmark datasets, specifically in ambiguity detection and correctness prediction tasks. The reported results indicate improved reliability and interpretability over existing semantic and decomposition-based baselines.

Implications and Future Work

The implications of this work are both theoretical and practical. Decomposing predictive uncertainty promises improved model interpretability, which is critical for deploying LLMs in risk-sensitive environments. Because Spectral Uncertainty identifies the source of uncertainty, practitioners can target their improvements, focusing on either input and data quality (aleatoric) or model capacity (epistemic).

Future work could reduce the computational overhead of generating multiple clarifications and responses, potentially through adaptive sampling strategies. Further validation in real-world applications remains open, as does integration with ensemble methods for richer uncertainty modeling.

Conclusion

Spectral Uncertainty presents a theoretically grounded framework for disentangling predictive uncertainty in LLMs. By distinguishing between aleatoric and epistemic sources, it supports more reliable and interpretable predictions. Its granular approach to uncertainty quantification could aid the safe deployment of AI technologies across various sectors.