Optimal Control Meets Flow Matching: A Principled Route to Multi-Subject Fidelity

Abstract: Text-to-image (T2I) models excel on single-entity prompts but struggle with multi-subject descriptions, often showing attribute leakage, identity entanglement, and subject omissions. We introduce the first theoretical framework with a principled, optimizable objective for steering sampling dynamics toward multi-subject fidelity. Viewing flow matching (FM) through stochastic optimal control (SOC), we formulate subject disentanglement as control over a trained FM sampler. This yields two architecture-agnostic algorithms: (i) a training-free test-time controller that perturbs the base velocity with a single-pass update, and (ii) Adjoint Matching, a lightweight fine-tuning rule that regresses a control network to a backward adjoint signal while preserving base-model capabilities. The same formulation unifies prior attention heuristics, extends to diffusion models via a flow-diffusion correspondence, and provides the first fine-tuning route explicitly designed for multi-subject fidelity. Empirically, on Stable Diffusion 3.5, FLUX, and Stable Diffusion XL, both algorithms consistently improve multi-subject alignment while maintaining base-model style. Test-time control runs efficiently on commodity GPUs, and fine-tuned controllers trained on limited prompts generalize to unseen ones. We further highlight FOCUS (Flow Optimal Control for Unentangled Subjects), which achieves state-of-the-art multi-subject fidelity across models.

Explain it Like I'm 14

Explaining “Optimal Control Meets Flow Matching: A Principled Route to Multi-Subject Fidelity”

What is this paper about? (Big picture)

This paper is about making text-to-image AI models better at following prompts that mention several things at once, like “a cat, a dog, and a red ball on a couch.” Today, these models often mess up by mixing subjects together, giving the wrong attributes to the wrong thing (like the dog getting the cat’s stripes), or forgetting a subject entirely. The authors propose a clear, math-based way to guide these models so that each subject shows up, looks right, and stays separate from the others—without ruining the model’s overall style.

What problems are they trying to solve?

The paper focuses on three common mistakes AI image generators make with multi-subject prompts:

- Attribute leakage: a trait meant for one subject “leaks” onto another (e.g., the “red hat” ends up on the wrong person).

- Identity entanglement: subjects get merged into a strange hybrid (e.g., a “cat-dog”).

- Subject omission: one or more of the requested subjects doesn’t appear.

Their goals are to:

- Keep each subject accurate and distinct.

- Do this in a principled, predictable way, not with guesswork.

- Make it work across different modern image models.

- Offer two practical options: a quick, training-free “steering” method at test time, and a small, efficient fine-tuning method.

How do they approach it? (Methods, in simple terms)

Step 1: See generation as moving through time

Modern text-to-image models can be viewed as moving a point in “image space” from random noise to a final image over time, guided by a learned “flow” (like a wind field that pushes the point toward a good image). This viewpoint is called flow matching. Older diffusion models fit into this picture too.
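Here is a minimal sketch of this "moving through time" view. It assumes a toy rectified-flow setup in which the whole data distribution is a single point. The names `euler_sample` and `point_mass_velocity` are hypothetical, not from the paper. The sampler integrates dx/dt = v(x, t) with forward Euler steps, from t = 0 (noise) to t = 1 (image).

```python
from typing import Callable, List

Vector = List[float]
VelocityField = Callable[[Vector, float], Vector]


def euler_sample(velocity: VelocityField, x0: Vector, num_steps: int) -> Vector:
    """Integrate dx/dt = velocity(x, t) from t = 0 to t = 1 with forward Euler."""
    x = list(x0)
    h = 1.0 / num_steps
    for k in range(num_steps):
        t = k * h  # left endpoint of the step, so t stays below 1
        v = velocity(x, t)
        x = [xi + h * vi for xi, vi in zip(x, v)]
    return x


def point_mass_velocity(target: Vector) -> VelocityField:
    """Toy rectified-flow velocity when all data sits at `target`.

    With X_t = t * X_1 + (1 - t) * X_0 and X_1 = target, the velocity
    X_1 - X_0 can be rewritten as (target - x) / (1 - t).
    """
    def velocity(x: Vector, t: float) -> Vector:
        return [(ti - xi) / (1.0 - t) for ti, xi in zip(target, x)]
    return velocity


noise = [0.3, -1.2]
image = euler_sample(point_mass_velocity([1.0, 2.0]), noise, num_steps=10)
```

A real sampler replaces the toy velocity with the trained network evaluated on the prompt. The integration loop itself stays the same.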

Step 2: Add gentle steering using optimal control

Think of the model’s normal behavior as a car on cruise control following a route. The authors add a small steering input—tiny nudges—to keep subjects separate and correct. In control theory, this is called an optimal control problem: you balance two things at once:

- Stay close to the original model’s style (don’t oversteer).

- Reduce entanglement mistakes (steer when necessary).
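Written out, this balance is a stochastic optimal control problem. The sketch below is reconstructed from the Hamiltonian quoted in the glossary, with several assumptions for illustration: the Brownian-motion symbol $W_t$, the horizon $[0,1]$, a zero terminal cost, and any weighting (such as the λ discussed later) absorbed into the cost $f$. The last line shows how freezing $\nabla_x b \approx 0$ and taking a left-Riemann estimate gives the single-pass adjoint $a(t) \approx (1-t)\nabla_x f$ listed among the paper's open questions.

```latex
\begin{align}
dX_t &= \big(b(X_t,t) + \sigma(t)\,u(X_t,t)\big)\,dt + \sigma(t)\,dW_t, \\
&\min_u\; \mathbb{E}\Big[\int_0^1 \tfrac{1}{2}\|u(X_t,t)\|_2^2 + f(X_t,t)\,dt\Big], \\
\mathcal{H}(x,u,a,t) &= \tfrac{1}{2}\|u\|_2^2 + f(x,t) + a^\top\big(b(x,t) + \sigma(t)\,u\big), \\
\partial_u \mathcal{H} = 0 \;&\Rightarrow\; u^*(t) = -\sigma(t)\,a(t), \\
\dot a(t) &= -\nabla_x f(X_t,t) - \nabla_x b(X_t,t)^\top a(t), \qquad a(1) = 0, \\
\nabla_x b \approx 0 \;&\Rightarrow\; a(t) = \int_t^1 \nabla_x f(X_s,s)\,ds \;\approx\; (1-t)\,\nabla_x f(X_t,t).
\end{align}
```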

They derive two practical tools from this idea:

- A training-free test-time controller: it computes quick, one-step “nudges” at each time step while the image is forming. It’s fast, simple, and works with existing models.

- Fine-tuning via Adjoint Matching: they train a small helper network that learns when and how to nudge, using a smart backward signal (like learning from future consequences) computed efficiently. This keeps the base model’s style intact and, once trained, removes the extra per-step cost of the test-time controller.
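The "backward signal" can be made concrete with a one-dimensional sketch. The sketch makes three assumptions. First, a lean-adjoint recursion da/dt = −(∂b/∂x)·a − ∂f/∂x, integrated backward from a(1) = 0 along a frozen forward trajectory. Second, the control's own Jacobian terms are dropped. Third, the control is fit with a squared-error regression toward −σ(t)·a(t). The function names, the zero terminal condition and the toy numbers are illustrative assumptions, not the authors' code. A real implementation would use autograd for the Jacobian terms and a LoRA-parameterized control network.

```python
from typing import Callable, List

Scalar1D = Callable[[float, float], float]


def lean_adjoint(traj: List[float], times: List[float],
                 grad_f: Scalar1D, jac_b: Scalar1D,
                 terminal: float = 0.0) -> List[float]:
    """Integrate da/dt = -jac_b(x, t) * a - grad_f(x, t) backward in time.

    `traj` is a frozen forward trajectory; nothing is differentiated through
    it, and control-dependent Jacobians are omitted (the "lean" part).
    """
    a = [0.0] * len(times)
    a[-1] = terminal
    for k in range(len(times) - 1, 0, -1):
        h = times[k] - times[k - 1]
        x, t = traj[k], times[k]
        da_dt = -jac_b(x, t) * a[k] - grad_f(x, t)
        a[k - 1] = a[k] - h * da_dt  # explicit Euler step taken backward in time
    return a


def adjoint_matching_loss(u_theta: Scalar1D, traj: List[float],
                          times: List[float], adj: List[float],
                          sigma: Callable[[float], float]) -> float:
    """Mean squared error between u_theta and the regression target -sigma(t) * a(t)."""
    errors = [(u_theta(x, t) + sigma(t) * a) ** 2
              for x, t, a in zip(traj, times, adj)]
    return sum(errors) / len(errors)


times = [0.0, 0.5, 1.0]
traj = [0.2, 0.1, 0.0]  # hypothetical frozen samples X_t
adj = lean_adjoint(traj, times, grad_f=lambda x, t: 1.0, jac_b=lambda x, t: 0.0)
loss = adjoint_matching_loss(lambda x, t: -(1.0 - t), traj, times, adj,
                             sigma=lambda t: 1.0)
```

With a constant cost gradient and a frozen drift Jacobian of zero, the recovered adjoint is a(t) = 1 − t. That is exactly the (1 − t) factor used by the training-free controller. A control equal to −(1 − t) therefore has zero loss.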

Step 3: Measure “who goes where” using attention as probabilities

Inside these models, “cross-attention” maps show which parts of the image pay attention to which words. You can think of them as heatmaps: where does the model think “dog” should go, and where does it think “cat” should go?

Many past methods treated these maps like raw scores. The authors instead treat them as probability distributions over image locations. That allows them to use a principled measure called the Jensen–Shannon divergence to do two things at once:

- Within-subject agreement: each subject’s maps should agree and be focused (the “dog” heatmaps all point to the same area).

- Between-subject separation: different subjects’ maps should not overlap much (the “dog” area shouldn’t collide with the “cat” area).
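The probability view can be sketched as follows. The sketch assumes an attention matrix of shape (image locations × text tokens) whose rows sum to one over tokens. Each subject token's column is renormalized over locations to give a distribution. Within-subject agreement is scored with a generalized Jensen–Shannon divergence (entropy of the mixture minus mean entropy) across one subject's maps. Between-subject separation uses the same divergence across the per-subject mean maps. The two terms are weighted equally. Gaussian smoothing and aggregation across blocks are omitted, and every name is hypothetical. This is a FOCUS-style illustration, not the authors' implementation.

```python
import math
from typing import Dict, List

Dist = List[float]


def token_distribution(attn: List[List[float]], token: int) -> Dist:
    """Column `token` of a (locations x tokens) attention matrix, renormalized over locations."""
    column = [row[token] for row in attn]
    total = sum(column)
    return [c / total for c in column]


def entropy(p: Dist) -> float:
    return -sum(pi * math.log(pi) for pi in p if pi > 0.0)


def jsd(dists: List[Dist]) -> float:
    """Generalized JSD with uniform weights: H(mixture) - mean_i H(p_i)."""
    n = len(dists)
    mixture = [sum(col) / n for col in zip(*dists)]
    return entropy(mixture) - sum(entropy(p) for p in dists) / n


def focus_style_cost(subject_dists: Dict[str, List[Dist]]) -> float:
    """Lower is better: maps of one subject agree, maps of different subjects separate."""
    within = 0.0
    means = []
    for dists in subject_dists.values():
        within += jsd(dists)  # 0 when all maps of this subject coincide
        means.append([sum(col) / len(dists) for col in zip(*dists)])
    between = jsd(means)  # at most log(#subjects), reached when subjects do not overlap
    return within / len(subject_dists) - between


# Two image locations; token 0 = "dog", token 1 = "cat".
attn_separated = [[0.9, 0.1], [0.1, 0.9]]
attn_overlapping = [[0.5, 0.5], [0.5, 0.5]]
cost_sep = focus_style_cost({"dog": [token_distribution(attn_separated, 0)],
                             "cat": [token_distribution(attn_separated, 1)]})
cost_ovl = focus_style_cost({"dog": [token_distribution(attn_overlapping, 0)],
                             "cat": [token_distribution(attn_overlapping, 1)]})
```

Because the JSD is bounded, the separation term cannot grow without limit, unlike raw score differences. This is one reason a probabilistic treatment can give more stable guidance.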

They call their attention-based cost FOCUS (Flow Optimal Control for Unentangled Subjects). During generation, the controller nudges the model in the direction that improves this FOCUS score.
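A single controller step can be sketched as below under three stated assumptions. The adjoint is approximated as a(t) ≈ (1 − t)∇ₓf with the drift Jacobian frozen at zero. The control takes the form u = −σ(t)a(t), which follows from the quadratic control cost. The control is applied as a velocity shift v + σ(t)u/2. That shift is derived here, not taken from the paper: under the memoryless drift–velocity identity b = 2v − (α̇/α)x, it is the velocity change that reproduces the controlled drift b + σu. In practice ∇ₓf would come from autograd through the attention-based cost. Here it is a plain callable, and the function name is hypothetical.

```python
from typing import Callable, List

Vector = List[float]
Field = Callable[[Vector, float], Vector]


def controlled_velocity(v_base: Field, grad_f: Field,
                        sigma: Callable[[float], float],
                        x: Vector, t: float) -> Vector:
    """Base velocity plus a single-pass control correction (no inner optimization loop)."""
    s = sigma(t)
    adjoint = [(1.0 - t) * g for g in grad_f(x, t)]  # left-Riemann estimate of a(t)
    control = [-s * a for a in adjoint]              # u(t) = -sigma(t) * a(t)
    # A velocity shift of sigma*u/2 adds sigma*u to the drift 2v - (alpha_dot/alpha) x.
    return [vb + 0.5 * s * u for vb, u in zip(v_base(x, t), control)]


# Toy check: zero base velocity and f(x) = 0.5 * ||x||^2, so grad_f(x) = x.
v = controlled_velocity(lambda x, t: [0.0 for _ in x],
                        lambda x, t: list(x),
                        lambda t: 1.0, x=[2.0], t=0.5)
```

The correction points down the cost gradient and shrinks as t approaches 1. Late steps are therefore nudged less than early ones.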

Step 4: Make it work across models

Because their method is built on the general “flow” view, it works for modern flow-matching models like Stable Diffusion 3.5 and FLUX, and can also be adapted to older diffusion models like Stable Diffusion XL.
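One common way to put a diffusion model such as SDXL into the flow picture is to convert its noise prediction ε̂ into a velocity. This sketch assumes an interpolant x_t = α_t·x₁ + β_t·ε, with data at t = 1 and noise at t = 0. It estimates x̂₁ = (x_t − β_t ε̂)/α_t and then differentiates the interpolant: v = α̇_t x̂₁ + β̇_t ε̂. The paper gives its own correspondence in an appendix. This conversion and the function name are illustrative assumptions, not the paper's procedure.

```python
from typing import List

Vector = List[float]


def eps_to_velocity(x_t: Vector, eps_hat: Vector, alpha: float, beta: float,
                    alpha_dot: float, beta_dot: float) -> Vector:
    """Convert a noise prediction into a flow-matching velocity.

    Assumes x_t = alpha * x_1 + beta * eps: recover x_1 from eps_hat, then
    return the time derivative of the interpolant.
    """
    x1_hat = [(x - beta * e) / alpha for x, e in zip(x_t, eps_hat)]
    return [alpha_dot * x1 + beta_dot * e for x1, e in zip(x1_hat, eps_hat)]


# Rectified flow (alpha = t, beta = 1 - t) at t = 0.5 with x_1 = 2.0 and eps = 0.5.
t = 0.5
x_t = [t * 2.0 + (1.0 - t) * 0.5]
v = eps_to_velocity(x_t, [0.5], alpha=t, beta=1.0 - t, alpha_dot=1.0, beta_dot=-1.0)
```

For rectified flow the result reduces to x₁ − ε, the familiar flow-matching target. A variance-preserving diffusion schedule would instead pass its own α_t, β_t and their time derivatives.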

What did they find? (Main results)

- The training-free controller improves multi-subject prompts consistently across several popular models, without retraining the base model.

- The fine-tuned controller improves things even more and generalizes well—even when trained on very few prompts (sometimes just one). After fine-tuning, you can sample at normal speed.

- Their FOCUS cost (using attention as probabilities) achieves strong, stable improvements, often beating previous heuristics in tests and human preference studies.

- Importantly, they do this while preserving the model’s style and overall image quality.

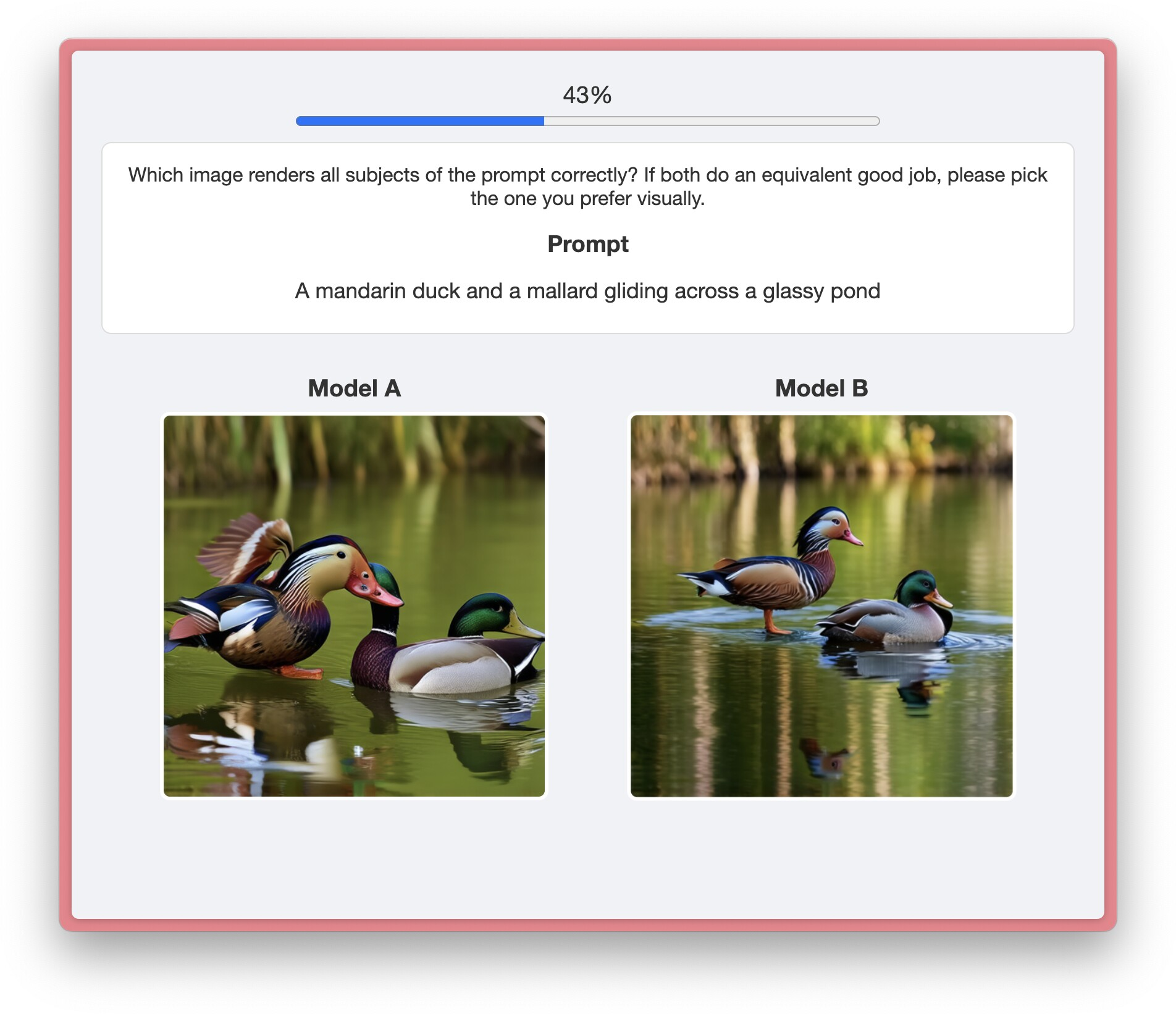

In human studies with 50 participants comparing pairs of images against a prompt, their approach was preferred more often than baselines.

Why is this important?

- Better multi-subject reliability means more trustworthy images for storybooks, comics, educational graphics, scientific diagrams, and any scene with multiple characters or objects.

- It replaces trial-and-error “hacks” with a clear, optimizable objective. That makes it easier to understand, improve, and transfer across models.

- It offers two practical modes: a plug-in test-time controller for quick use, and a small fine-tuning step for longer-term gains with no extra cost at inference time.

What could this lead to? (Implications and impact)

- Creators and developers can get more faithful multi-character scenes with fewer weird mistakes.

- Researchers now have a unifying framework to guide image models in a principled way, which could be extended to other tricky tasks (like binding specific attributes to specific subjects or coordinating actions among subjects).

- The idea of treating attention maps as probability distributions could help design better, more stable guidance signals in other AI systems.

- Future work could automate identifying “subjects” in a prompt and explore deeper reasons why current models entangle attention, leading to even more robust tools.

In short: the paper shows a clear, math-based way to “steer” text-to-image models so that multiple subjects appear correctly, stay separate, and keep their attributes—making the results more reliable and useful in the real world.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, aimed to guide future research.

- Theoretical guarantees for the single-pass test-time controller: provide error bounds for the local adjoint approximation (freezing ∇ₓb≈0 and using a left-Riemann estimate a(t)≈(1−t)∇ₓf), characterize when this approximation reduces entanglement, and quantify its dependence on scheduler, step size, and model smoothness (e.g., conditions on ∥∇ₓb∥, Lipschitz constants).

- Stability and convergence of controlled FM dynamics: analyze whether adding u(t) per the SOC objective preserves existence/uniqueness, avoids limit cycles or stiff behavior, and yields bounded deviations from the base generator, especially under large λ or aggressive f.

- Formal “closeness to base model” criterion: replace the informal notion of staying close to the base with a principled metric (e.g., path-space KL, control energy integrated over time, or Wasserstein distance) and prove bounds on distributional divergence induced by u.

- Memoryless schedule implications: assess how training controllers under the memoryless diffusion schedule (X₀ ⟂ X₁) affects semantic coherence, sample diversity, and alignment when deploying with standard ODE samplers at inference; provide sensitivity analyses and ablations across schedules.

- Drift–velocity reparameterization validity and scope: rigorously derive and test the identity b = 2v − (α̇/α)X under different FM schedulers; quantify residual error when schedules deviate from memoryless assumptions or when applied to discrete integrators.

- Automatic λ selection: develop closed-loop or prompt-adaptive strategies to choose λ based on online entanglement signals (e.g., attention overlap metrics), avoiding manual sweeps and reducing sensitivity to hyperparameters.

- Cross-attention as a spatial probability proxy: systematically quantify how well token-wise cross-attention predicts spatial placement across models, layers, timesteps, and prompts, and identify regimes where this proxy fails (e.g., highly abstract prompts, style tokens, negations).

- FOCUS loss design choices: evaluate the impact of Gaussian smoothing, map aggregation across blocks, and equal weighting of intra- vs inter-subject terms; test alternative spatially aware divergences (e.g., Sinkhorn/Wasserstein distances) to balance separation with coverage and avoid artifacts.

- Collapse prevention without side effects: investigate regularizers or alternative objectives that discourage over-concentrated attention without pushing mass away from subjects (e.g., variance constraints, spatial entropy with locality-aware kernels, multi-peak penalties).

- Subject tokenization dependency: replace manual subject token annotations with automated, robust extraction (NER + dependency parsing + tokenizer alignment) that generalizes across languages, encoders (CLIP/T5), multi-word subjects, synonyms, and subword splits.

- Attribute-level binding beyond spatial separation: augment attention-based losses with attribute-aware objectives (color, texture, pose) using localized image-text matching or concept classifiers to address leakage that is not purely spatial.

- Scalability to complex compositions: evaluate performance on prompts with >4 subjects, occlusions, explicit inter-subject relations (verbs, prepositions), fine-grained similarities (e.g., breeds), and nested structures (subjects with multiple attributes), including failure mode taxonomies.

- Effects on single-subject and non-entangled prompts: measure potential regressions on single-entity inputs or already well-behaved multi-subject prompts using aesthetic scores, FID-like metrics, and style/identity preservation benchmarks.

- Interaction with sampler choices and CFG: provide guidelines or theory for how the controller interacts with classifier-free guidance, sampler types (ODE vs SDE), step counts, and noise schedules, including recommended defaults and trade-offs.

- Generalization and sample efficiency of fine-tuning: perform scaling studies on training set size, diversity, and prompt complexity; analyze whether controllers trained on few prompts avoid overfitting, memorize layouts, or degrade diversity; derive sample complexity guarantees for Adjoint Matching.

- AM target bias and optimality: characterize the bias introduced by the lean adjoint (dropping u-dependent Jacobians), provide conditions under which uθ trained via AM approaches the optimal control u*, and quantify approximation gaps.

- LoRA placement and rank ablations: systematically test where to insert LoRA (self-/cross-attention, MLPs), rank r, and training duration; measure catastrophic forgetting, support preservation, and style drift with objective metrics (e.g., distributional similarity, variability indices).

- Cross-model portability and breadth: beyond SD 3.5 and FLUX.1, run comprehensive quantitative evaluations on SDXL and other diffusion/FM backbones (including closed-source and transformer-U-Net hybrids), verifying the FM–diffusion correspondence empirically and characterizing portability limits.

- Benchmarking and metrics for entanglement: establish standardized, open benchmarks with per-subject ground truth (masks, attributes, identities) and robust multi-subject fidelity metrics that capture leakage, omissions, and identity mixing beyond global CLIP/SigLIP or caption similarities.

- Human evaluation depth: increase participant numbers and diversify prompts; incorporate attribute-specific checklists, identity verification, and layout fidelity assessments to disentangle visual preference from correctness; report inter-rater reliability and bias analyses.

- Safety and fairness considerations: study whether attention manipulation disproportionately affects depictions of protected attributes or stereotypes, and design safeguards to prevent amplifying harmful biases when steering attention or attributes.

- Runtime and memory optimization: quantify overhead across GPUs, investigate low-rank or sparse attention updates, caching, or step-skipping strategies to reduce the ~2× latency at test time while maintaining fidelity gains.

- Combining with layout/region methods: evaluate synergies with MultiDiffusion, GLIGEN, and Be Decisive—e.g., using FOCUS to refine within-region binding while external methods set coarse layouts—and characterize conflicts or complementarities.

- Temporal/sequence consistency: extend the controller to video or multi-panel generation to maintain subject identity and attributes over time or across related images, with metrics for temporal entanglement and drift.

- Robustness to language phenomena: test prompts with negations, counting (“three dogs, two cats”), coreference, long-range dependencies, and multilingual inputs; adapt the controller to LLMs with different tokenization schemes.

- Theoretical trade-offs in the SOC objective: analyze Pareto fronts between control energy and disentanglement cost, derive optimal schedules for u(t) over time (not just the 1−t factor), and explore alternative cost structures (e.g., constraints instead of penalties) for stronger guarantees.
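The drift–velocity identity b = 2v − (α̇/α)X mentioned in the list above can be checked numerically for rectified flow. This sketch makes two assumptions. It uses the convention x_t = α_t·x₁ + β_t·x₀. It also uses the memoryless noise level σ(t)² = 2β_t(α̇_t β_t/α_t − β̇_t) from the Adjoint Matching line of work. For α_t = t and β_t = 1 − t this gives σ(t)² = 2(1 − t)/t, which diverges as t → 0. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]


def rectified_flow(t: float) -> Tuple[float, float, float, float]:
    """(alpha_t, beta_t, alpha_dot, beta_dot) for x_t = t * x_1 + (1 - t) * x_0."""
    return t, 1.0 - t, 1.0, -1.0


def memoryless_sigma(t: float) -> float:
    """Noise level that makes X_0 and X_1 independent (assumed formula, see lead-in)."""
    alpha, beta, alpha_dot, beta_dot = rectified_flow(t)
    eta = beta * (alpha_dot / alpha * beta - beta_dot)
    return math.sqrt(2.0 * eta)


def memoryless_drift(v: Vector, x: Vector, t: float) -> Vector:
    """b = 2 v - (alpha_dot / alpha) x, valid only under the memoryless schedule."""
    alpha, _, alpha_dot, _ = rectified_flow(t)
    return [2.0 * vi - (alpha_dot / alpha) * xi for vi, xi in zip(v, x)]
```

Checking σ(t) over a grid of t for other schedulers is a cheap first step toward the sensitivity analyses listed above.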

Practical Applications

Practical Applications Derived from the Paper

The paper introduces a control-theoretic framework (FOCUS) for steering flow matching and diffusion-based text-to-image models to produce faithful, disentangled multi-subject images. It offers two deployable mechanisms: a single-pass, training-free test-time controller and a lightweight fine-tuning procedure via Adjoint Matching, plus a probabilistic attention loss (FOCUS) that treats attention maps as distributions. Below are practical, real-world applications grouped by readiness.

Immediate Applications

The following applications can be implemented now by integrating the test-time controller or the lightweight fine-tuned controller into existing T2I pipelines (e.g., Stable Diffusion 3.5, FLUX, SDXL), with modest engineering and compute overhead.

- Reliable multi-subject image generation in creative workflows (Advertising/Marketing, Media/Entertainment, Design)

- Description: Generate scenes with multiple products, characters, or props where attributes remain bound to the correct subject (reduced leakage and omissions), preserving the model’s original style.

- Tools/Workflows: “FOCUS mode” in T2I products; an API parameter for λ controlling the FOCUS cost; single-pass controller integrated in inference; LoRA-based fine-tuning packs per brand/style.

- Assumptions/Dependencies: Access to cross-attention maps and subject token indices; commodity GPU for ~2× inference time (test-time control) or short fine-tune jobs; support for FM/diffusion backbones.

- Story illustration and comics with consistent multi-character scenes (Publishing, Education, Media)

- Description: Storybooks, comics, and visual narratives where each character’s identity and attributes stay consistent across panels without manual bounding-box layout.

- Tools/Workflows: Fine-tuned controller trained on small curated prompts; batch generation with human-in-the-loop review; attention-derived diagnostics to flag entanglement.

- Assumptions/Dependencies: Availability of consistent subject tokens; minimal dataset for fine-tuning; use of models exposing text–image cross-attention.

- Lifestyle imagery for e-commerce featuring multiple items (Retail/E-commerce)

- Description: Generate product group shots (e.g., bundles, room settings) that correctly bind colors, materials, sizes, and positions to the right items to reduce post-production edits.

- Tools/Workflows: Integration into listing-image pipelines; “multi-product fidelity” preset with FOCUS; attribute-binding prompt templates; QA via entanglement scores.

- Assumptions/Dependencies: Accurate mapping from product attributes to tokens; cross-attention access; governance for synthetic images.

- Concept art and environment exploration with many entities (Gaming, Film/TV)

- Description: Early-phase ideation with multiple characters/objects in complex scenes without attribute cross-contamination, maintaining a studio’s signature style.

- Tools/Workflows: Studio-specific LoRA fine-tunes; per-shot λ sweeps for best composition; FOCUS-based score to rank outputs.

- Assumptions/Dependencies: Stable prompts; cross-attention accessible in the backbone; small fine-tuning data per style.

- Synthetic data generation for multi-object detection and segmentation (Software/CV/Robotics)

- Description: Generate training datasets with multiple labeled objects where attribute leakage is minimized, reducing label noise for detectors/segmenters.

- Tools/Workflows: Use attention maps as probabilistic masks to derive pseudo-annotations; FOCUS-controlled sampling for diverse multi-object scenes.

- Assumptions/Dependencies: Attention-to-mask reliability; domain gap management; validation pipeline for downstream model performance.

- Scientific figures and educational diagrams with multiple labeled components (Education, Scientific Communication)

- Description: Compose clear multi-component visuals (e.g., biological structures, instruments) with reduced identity entanglement and clearer spatial separation.

- Tools/Workflows: Template prompts with subject tokens; test-time controller for on-demand fidelity; FOCUS score thresholds in internal QA.

- Assumptions/Dependencies: Tokenization of technical subjects; oversight to verify correctness; base model visual style suitability.

- Consumer photo apps for multi-person portraits and collages (Consumer Software)

- Description: Generate or stylize multi-person images where clothing, accessories, and roles remain bound to the correct person (e.g., event cards).

- Tools/Workflows: A “disentangled multi-subject” toggle; fine-tuned controllers for platform styles; simple λ presets per device class.

- Assumptions/Dependencies: Identity rights and privacy; device-side performance constraints; access to attention signals (may require on-device models).

- Automated entanglement auditing and quality control (Trust & Safety, Policy/Compliance)

- Description: Use FOCUS loss as an internal metric to flag outputs with high multi-subject entanglement before publication.

- Tools/Workflows: Batch scoring pipeline; threshold-based gating; dashboards that track entanglement over time/versions.

- Assumptions/Dependencies: Cross-attention availability; agreed-upon thresholds; complementary human review for edge cases.

- Prompt engineering assistants for multi-subject scenes (Software Tools)

- Description: Suggest prompt rewrites or token selections to improve subject separation and attribute binding, guided by FOCUS signals during sampling.

- Tools/Workflows: IDE-like prompt editors; λ recommendations; real-time feedback on attention overlap.

- Assumptions/Dependencies: Attention introspection APIs; rapid inference paths; consistent tokenizer behavior across models.

- Benchmarking and evaluation of multi-subject fidelity (Academia/Research)

- Description: Standardize multi-subject evaluation using FOCUS scores and human preferences; compare models and heuristics under a unified SOC objective.

- Tools/Workflows: Public benchmarks with per-subject annotations; composite score reporting; reproducible λ sweeps.

- Assumptions/Dependencies: Access to attention maps; community acceptance of metrics; availability of curated prompt sets.

Long-Term Applications

These applications are promising but require additional research, scaling, or ecosystem support (e.g., automated tokenization, video/3D extensions, mobile optimization).

- Multi-subject text-to-video with identity and attribute coherence across time (Media/Entertainment)

- Description: Extend SOC-based control and probabilistic attention losses to video, preserving subject separation and stable attributes across frames.

- Tools/Workflows: Temporal controllers; frame-wise and sequence-level attention divergences; video LoRA fine-tunes.

- Assumptions/Dependencies: Robust temporal attention signals; scalable training/inference; memory-efficient controllers.

- 3D/scene generation with disentangled multi-object composition (AR/VR, Robotics, Architecture)

- Description: Apply FOCUS-like objectives to 3D generative pipelines (NeRFs, diffusion-based 3D models) to place multiple entities with consistent attributes.

- Tools/Workflows: 3D attention or feature-space distributions; SOC controllers for spatial consistency; integration with CAD/scene graphs.

- Assumptions/Dependencies: Well-defined 3D attention analogs; geometry-aware divergences; cross-modal consistency.

- Automated subject and attribute tokenization (Software/ML Tooling)

- Description: Remove manual subject annotation via models that discover and tag subjects/attributes from prompts, enabling broader use and lower friction.

- Tools/Workflows: NER-like tokenizers for prompts; attribute parsers; validation tools that map tokens to attention columns reliably.

- Assumptions/Dependencies: High-precision tokenization; robustness across languages; alignment with backbone tokenizers.

- Interactive layout-aware design assistants (Design, Interior/Industrial Design)

- Description: Combine SOC controllers with optional layout guidance to place multiple objects precisely without heavy user effort.

- Tools/Workflows: Mixed “layout-free” and “layout-aware” modes; constraints fused with FOCUS; GUI widgets for regional hints.

- Assumptions/Dependencies: Stable fusion of spatial constraints and attention-based control; avoidance of conflicts with model priors.

- Fairness and bias audits for multi-subject generation (Policy/Compliance/Ethics)

- Description: Use disentanglement metrics to detect and mitigate attribute leakage across demographic tokens, reducing stereotype propagation.

- Tools/Workflows: FOCUS-based fairness dashboards; counterfactual prompt tests; risk scoring pipelines integrated with content review.

- Assumptions/Dependencies: Sensitive attribute handling; representative test suites; governance frameworks.

- Edge and real-time deployment of multi-subject controllers (Mobile/AR)

- Description: Optimize controllers for on-device generation (e.g., AR filters with multiple entities), balancing latency and fidelity.

- Tools/Workflows: Distilled controllers; schedule-invariant updates; partial attention introspection on-device.

- Assumptions/Dependencies: Efficient backbones; hardware acceleration; privacy-compliant local processing.

- Cross-modal control (Audio, TTS, Multimodal Documents)

- Description: Generalize SOC + attention-divergence notions to audio/TTS for multi-speaker scenes or to multimodal doc assembly (text + images + layout).

- Tools/Workflows: Attention distributions over time-frequency bins; SOC controllers adapted to audio; multimodal composition engines.

- Assumptions/Dependencies: Suitable attention representations; differentiable costs per modality; user studies for quality.

- Semi-automatic dataset annotation using attention distributions (CV/Data Ops)

- Description: Turn attention maps into probabilistic masks to bootstrap segmentation and detection labels for multi-object scenes.

- Tools/Workflows: Mask extraction + human refinement; active learning loops; uncertainty-aware labelers.

- Assumptions/Dependencies: Reliable attention–pixel correspondence; domain-specific validation; scalable curation tools.

- Regulatory standards for synthetic images with multi-subject fidelity guarantees (Policy/Regulation)

- Description: Codify minimum fidelity thresholds (e.g., FOCUS score bands) for synthetic ads or disclosures, reducing deceptive layouts/attributes.

- Tools/Workflows: Compliance scorecards; audit trails tied to controller settings; certification for generative vendors.

- Assumptions/Dependencies: Policy consensus; verifiable and interpretable metrics; industry adoption.

- Enterprise-grade controlled generation at scale (Cloud/Enterprise Software)

- Description: Governance-aware pipelines that combine SOC controllers, human review, and telemetry to ensure consistent multi-subject outputs across brands and campaigns.

- Tools/Workflows: Controller registries; λ management per project; composite scoring; rollback/versioning of fine-tuned controllers.

- Assumptions/Dependencies: MLOps maturity; access controls; reproducibility and monitoring infrastructure.

Notes on Feasibility

- The test-time controller adds roughly 2× inference time but requires no retraining; the fine-tuned controller uses LoRA with <0.1% trainable parameters and short training runs, and can match base inference speed thereafter.

- Both methods rely on access to cross-attention maps and subject token indices; automated tokenization is a key dependency for broad deployment.

- The approach is architecture-agnostic across modern flow matching and diffusion backbones; portability depends on attention introspection and the flow–diffusion correspondence.

- FOCUS treats attention maps as probability distributions (with Jensen–Shannon divergence), which assumes that attention correlates with spatial placement; while empirically supported, some domains may require additional validation.
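The "<0.1% trainable parameters" figure is easy to sanity-check. A rank-r LoRA adapter on a d_in × d_out weight adds r·(d_in + d_out) parameters. The layer widths, layer count, rank and backbone size below are hypothetical placeholders, not the actual SD 3.5 or FLUX configuration.

```python
from typing import List, Tuple


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def trainable_fraction(adapted: List[Tuple[int, int]], rank: int, base_params: int) -> float:
    """Share of parameters that train when only the LoRA factors are updated."""
    return sum(lora_params(d_in, d_out, rank) for d_in, d_out in adapted) / base_params


# Hypothetical: 4 square projections of width 3072 in each of 24 self-attention blocks,
# rank 4, on a hypothetical 8-billion-parameter backbone.
layers = [(3072, 3072)] * (4 * 24)
fraction = trainable_fraction(layers, rank=4, base_params=8_000_000_000)
```

Under these placeholder numbers the adapters hold about 2.4 million parameters, roughly 0.03% of the backbone.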

Glossary

- Adjoint: In optimal control, the co-state variable that evolves backward in time and captures sensitivity of the objective with respect to the state. "where $a(t)$ is the co-state (adjoint)."

- Adjoint Matching: A training method that regresses a control network to an adjoint signal computed along frozen trajectories to approximate optimal control. "Fine-tuning via Adjoint Matching. A stable, low-cost update rule based on Adjoint Matching \citep{domingo-enrich_adjoint_2025} that regresses a control network to a backward adjoint signal while preserving base-model capabilities."

- Brownian motion: A continuous-time stochastic process with independent Gaussian increments used to model noise in SDEs. "where is standard Brownian motion in ."

- Co-state: The adjoint variable in the Hamiltonian formulation of optimal control representing the Lagrange multipliers for state dynamics. "where $a(t)$ is the co-state (adjoint)."

- Conditional Flow Matching (CFM): A training loss that regresses a learned velocity field towards the conditional expectation of a reference path’s velocity. "FM is trained with the conditional flow matching loss \citep{lipman_flow_2023}"

- Control-affine dynamics: Systems whose dynamics are linear in the control input, enabling quadratic optimal control formulations. "For control-affine dynamics with , the Hamiltonian of the SOC is"

- Cross-attention: Transformer mechanism computing attention from image-space queries to text tokens for conditioning during generation. "At each sampling step, T2I backbones compute cross-attention from image-space queries to text tokens."

- Denoising diffusion: Generative modeling framework where data are recovered from noise via iterative denoising, often formulated in continuous time. "Classical denoising diffusion models arise as special cases of FM when their discrete procedures are lifted to continuous time; refer to \Cref{app:denoising} for details."

- Diffusion coefficient: The scalar function controlling noise magnitude in an SDE’s stochastic term. "with diffusion coefficient $\sigma(t)$:"

- Diffusion Transformer: A transformer-based architecture for diffusion or flow-matching models that processes tokens to perform generation. "Modern T2I backbones follow Diffusion Transformer designs \citep{peebles_scalable_2023}."

- Drift: The deterministic part of an SDE that governs the mean direction of motion in state space. "We will refer to $b$ as the (base) drift."

- Flow Matching (FM): A generative modeling framework that learns a time-dependent vector field transporting a base distribution to the data distribution. "Flow Matching (FM) trains a time-dependent vector field $v_\theta:\mathbb{R}^d\times[0,1]\to\mathbb{R}^d$ that transports a base distribution (e.g., [a standard Gaussian]) to a target distribution (e.g., [the data distribution]), without simulating a forward noising process during training."

- Flow–diffusion correspondence: The theoretical relationship connecting flow matching and diffusion formulations, enabling transfer of methods. "The same formulation unifies prior attention heuristics, extends to diffusion models via a flow–diffusion correspondence"

- FOCUS (Flow Optimal Control for Unentangled Subjects): A probabilistic attention-based loss and controller to reduce multi-subject entanglement by encouraging separation and consistency of subject attention maps. "We introduce FOCUS (Flow Optimal Control for Unentangled Subjects), which achieves state-of-the-art multi-subject fidelity across models."

- Hamiltonian: A function combining running cost and dynamics used in optimal control to derive optimality conditions via adjoint variables. "the Hamiltonian of the SOC is $\mathcal{H}(x,u,a,t) = \frac{1}{2}\|u\|_2^2 + f(x,t) + a^\top\left(b(x,t) + \sigma(t)\,u\right)$"

- InfoNCE-style objective: A contrastive learning objective that separates classes by pulling matched pairs together and mismatched pairs apart. "CONFORM formulates a contrastive, InfoNCE-style objective that separates different subjects while pulling subject–attribute pairs together \citep{meral_conform_2023}."

- Jensen–Shannon divergence: A symmetric, bounded measure of similarity between probability distributions derived from KL divergence. "We promote separation of subjects by maximizing a Jensen--Shannon divergence (JSD) defined over attention distributions."

- Kullback–Leibler divergence: An asymmetric measure of discrepancy between two probability distributions. "with $D_{\mathrm{KL}}(p \,\|\, q) = \sum_{i=1}^d p_i \log \frac{p_i}{q_i}$ being the Kullback–Leibler divergence."

- Lean adjoint: An approximate adjoint computed along frozen trajectories that omits certain Jacobian terms for efficiency during training. "regressing to a cheaper lean adjoint computed along frozen forward trajectories while dropping $u$-dependent Jacobian terms:"

- LoRA (Low-Rank Adaptation): A parameter-efficient fine-tuning technique that inserts low-rank adapters into attention layers. "We insert LoRA layers \citep{hu_lora_2021} into self-attention blocks and freeze all base parameters."

- Memoryless diffusion schedule: A noise schedule that makes interpolant endpoints independent and yields a simple relationship between drift and velocity. "We adopt the memoryless diffusion schedule, which makes the stochastic interpolant endpoints independent ($X_0 \perp X_1$) and yields a simple drift–velocity identity:"

- MultiDiffusion: A technique that fuses multiple diffusion trajectories under spatial constraints to compose multi-object scenes. "MultiDiffusion fuses multiple diffusion trajectories under shared spatial constraints (e.g., boxes or masks), enabling faithful multi-subject placement without retraining \citep{bar-tal_multidiffusion_2023}."

- ODE (Ordinary differential equation): A deterministic continuous-time equation describing the evolution of a system without stochastic noise. "Many off-the-shelf T2I models are optimized for ODE sampling ($\sigma(t) = 0$)."

- Probability simplex: The set of all probability vectors over d discrete outcomes (nonnegative entries summing to one). "let be the probability simplex."

- Rectified Flow (RF): A specific FM scheduler using linear interpolation between start and end states. "A widely used instance is rectified flow (RF) with $\alpha_t = t$ and $\beta_t = 1 - t$ \citep{liu_flow_2022}."

- SDE (Stochastic differential equation): A differential equation with stochastic terms modeling systems with randomness, typically driven by Brownian motion. "which can be passed to any SDE solver without modifying the integrator."

- Stochastic optimal control (SOC): The optimization of controls for systems with stochastic dynamics to minimize expected cumulative costs. "Viewing flow matching (FM) through stochastic optimal control (SOC), we formulate subject disentanglement as control over a trained FM sampler."

- Vector field: A function assigning a velocity vector to each point in state space, guiding trajectories over time. "Flow Matching (FM) trains a time-dependent vector field $v_\theta:\mathbb{R}^d\times[0,1]\to\mathbb{R}^d$"

- Velocity reparameterization: Reformulating the effects of control on the drift as an equivalent shift in the model’s velocity for SDE solvers. "Velocity reparameterization (SDE). Let $v_{\text{base}}$ denote the base FM velocity."
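The conditional flow matching entry can be illustrated with the rectified-flow scheduler. This sketch assumes the convention X_t = t·X₁ + (1 − t)·X₀, with X₀ Gaussian noise and X₁ a data sample. The regression target is then X₁ − X₀, and a single-sample loss is the squared error between the model's velocity at (X_t, t) and that target. It is a generic sketch in which the model is any callable, not the paper's training code.

```python
import random
from typing import Callable, List

Vector = List[float]


def rf_cfm_loss(model: Callable[[Vector, float], Vector],
                x1: Vector, x0: Vector, t: float) -> float:
    """Single-sample conditional flow matching loss for rectified flow."""
    x_t = [t * a + (1.0 - t) * b for a, b in zip(x1, x0)]  # point on the straight path
    target = [a - b for a, b in zip(x1, x0)]               # constant path velocity
    pred = model(x_t, t)
    return sum((p - y) ** 2 for p, y in zip(pred, target))


def zero_model(x: Vector, t: float) -> Vector:
    """Untrained placeholder that always predicts zero velocity."""
    return [0.0 for _ in x]


rng = random.Random(0)
x1 = [1.0, -2.0]                         # a "data" sample
x0 = [rng.gauss(0.0, 1.0) for _ in x1]   # Gaussian noise sample
t = rng.random()                         # uniform time in [0, 1)
loss = rf_cfm_loss(zero_model, x1, x0, t)
```

Training averages this loss over data samples, noise draws and times. In expectation, the learned field then matches the conditional mean of X₁ − X₀ given X_t.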
