- The paper presents a survey analysis showing how developers use GenAI tools to boost productivity across coding, debugging, and documentation tasks.

- It uses a mixed-methods analysis to show that developers prefer iterative prompting and feedback loops over single comprehensive prompts.

- Insights reveal reliability challenges in complex code generation, highlighting the need for refined prompt techniques and guidance.

The integration of Generative AI (GenAI) tools into software engineering, exemplified by platforms such as GitHub Copilot and ChatGPT, is reshaping the development landscape. Central to this transformation is prompt engineering: crafting effective natural language instructions that guide GenAI systems toward useful output. Through a large-scale survey, this paper systematically investigates how software developers incorporate GenAI tools into their workflows, offering insights into usage patterns, prompting strategies, and reliability perceptions across diverse software engineering tasks.

Study Methodology

The study was designed to explore how GenAI tools are integrated into development workflows across software engineering (SE) tasks such as code generation, debugging, and documentation.

Figure 1: Study Methodology.

Participant Recruitment and Survey Design

The survey targeted developers experienced with GenAI and gathered insights on three core areas: GenAI usage patterns, prompting and conversation strategies, and reliability perceptions. It was distributed via professional networks and social media, reaching developers in varied roles and domains. Participants shared their experiences of using GenAI across six major SE tasks. The responses were analyzed with a mixed-methods approach: quantitative analysis of response distributions and qualitative coding of open-ended answers.
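As a rough illustration of the quantitative side of such an analysis, the sketch below tallies answers to one frequency question into a percentage distribution. The five-point scale, the question wording, and the sample answers are all hypothetical and are not taken from the survey instrument.

```python
from collections import Counter

# Assumed five-point frequency scale; the survey's actual scale may differ.
LIKERT_SCALE = ["Never", "Rarely", "Sometimes", "Often", "Always"]


def response_distribution(responses, scale=LIKERT_SCALE):
    """Return the percentage of responses at each scale point, in scale order."""
    counts = Counter(responses)
    # Only count answers that fall on the scale; anything else is ignored.
    total = sum(counts[level] for level in scale)
    if total == 0:
        return {level: 0.0 for level in scale}
    return {level: 100.0 * counts[level] / total for level in scale}


# Hypothetical answers to "How often do you use GenAI for debugging?"
answers = ["Often", "Sometimes", "Often", "Always", "Rarely", "Often", "Sometimes", "Never"]
for level, share in response_distribution(answers).items():
    print(f"{level:<10} {share:5.1f}%")
```

Keeping the scale as an ordered list means every distribution is reported in the same order, which makes per-task comparisons easier to read.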

Results

GenAI Usage Patterns

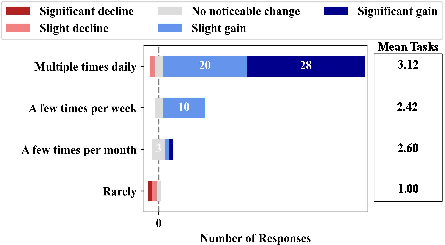

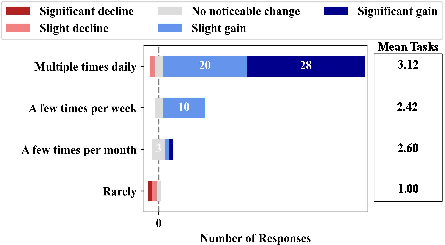

Developers primarily use GenAI for code generation. Higher self-reported proficiency, however, correlates with use across a wider range of tasks, including debugging and documentation. Developers who use GenAI frequently also report higher productivity, and they tend to apply it to more tasks.

Figure 2: Productivity and task breadth by usage frequency.
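One common way to quantify an association like the one between proficiency and task breadth is a rank correlation. The sketch below computes Spearman's coefficient in plain Python. The choice of Spearman is an assumption made for illustration (the summary does not say which statistic the authors used), and the ratings and task counts are hypothetical.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the rank vectors.

    Raises ZeroDivisionError if either input is constant.
    """
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical data: self-rated proficiency (1-5) and number of SE tasks used.
proficiency = [2, 3, 3, 4, 5, 1, 4]
task_breadth = [1, 2, 3, 4, 6, 1, 3]
print(f"Spearman rho = {spearman(proficiency, task_breadth):.2f}")
```

A rank-based statistic suits ordinal survey ratings because it does not assume equal spacing between scale points.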

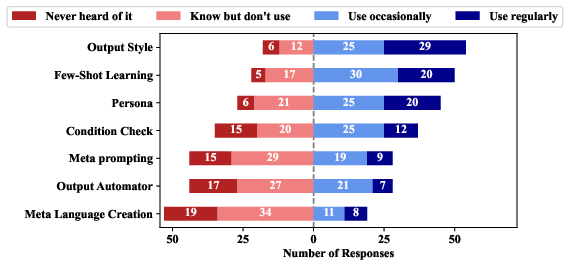

Prompting Techniques and Conversation Strategies

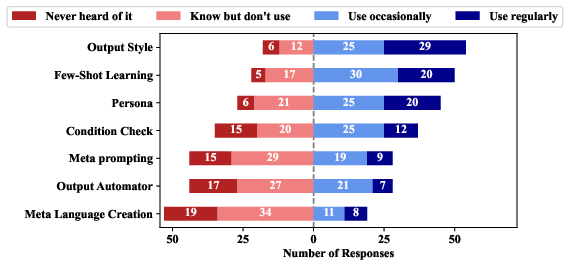

Survey results show that familiarity with prompting techniques is widespread, but actual adoption lags behind. Few-Shot Learning and Output Style are the most widely recognized techniques, while Meta Language Creation is the least familiar.

Figure 3: Prompting technique familiarity distribution.
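To make two of the techniques named above concrete, the sketch below assembles a prompt that combines Few-Shot Learning (worked input/output examples) with an Output Style instruction. The function name, the prompt layout, and the example task are illustrative assumptions, not a format prescribed by the paper or by any particular GenAI tool.

```python
def build_few_shot_prompt(task, examples, output_style, query):
    """Assemble a prompt from an instruction, worked examples, and a style rule."""
    parts = [f"Task: {task}", f"Output style: {output_style}", ""]
    for example_input, example_output in examples:
        # Each worked example shows the model the expected input/output mapping.
        parts.append(f"Input: {example_input}")
        parts.append(f"Output: {example_output}")
        parts.append("")
    # The final, unanswered input is the one the model should complete.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


# Hypothetical task: turning function signatures into one-line docstrings.
prompt = build_few_shot_prompt(
    task="Write a one-line docstring for the given Python function signature.",
    examples=[
        ("def area(radius):", "Return the area of a circle with the given radius."),
        ("def is_even(n):", "Return True if n is even."),
    ],
    output_style="A single imperative sentence ending with a period.",
    query="def parse_date(text):",
)
print(prompt)
```

The resulting string can be sent to whichever GenAI tool is in use. The examples carry the pattern, while the style line constrains the form of the answer.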

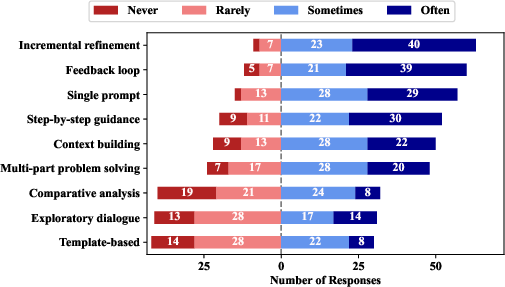

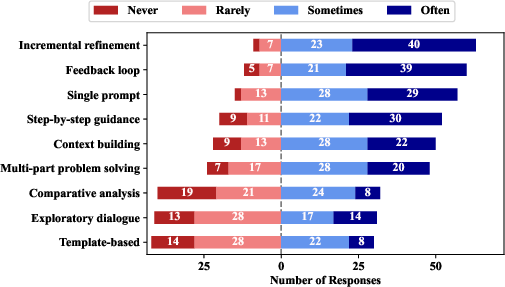

For conversation strategies, developers favor iterative refinement and feedback loops over single comprehensive prompts, indicating a preference for multi-turn interactions.

Figure 4: Conversation structure usage frequency.
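The multi-turn pattern described above can be sketched as a loop that sends a prompt, checks the reply, and feeds corrective feedback back into the same conversation. Everything here is a hypothetical stand-in: `ask_model` represents whatever GenAI client is in use, `stub_model` fakes its behavior so the example runs offline, and the message format is only an assumed convention.

```python
from typing import Callable, Dict, List, Optional, Tuple

Message = Dict[str, str]


def refine_iteratively(
    ask_model: Callable[[List[Message]], str],
    initial_prompt: str,
    review: Callable[[str], Optional[str]],
    max_turns: int = 3,
) -> Tuple[str, List[Message]]:
    """Run a multi-turn conversation until `review` accepts the reply.

    `review` returns None when satisfied, otherwise feedback for the next turn.
    """
    messages: List[Message] = [{"role": "user", "content": initial_prompt}]
    reply = ""
    for _ in range(max_turns):
        reply = ask_model(messages)
        messages.append({"role": "assistant", "content": reply})
        feedback = review(reply)
        if feedback is None:
            break
        # Keep the full history so the model sees earlier attempts and feedback.
        messages.append({"role": "user", "content": feedback})
    return reply, messages


def stub_model(messages: List[Message]) -> str:
    # Stand-in for a real GenAI call: adds a docstring only once asked to.
    asked = any("docstring" in m["content"] for m in messages if m["role"] == "user")
    if asked:
        return 'def add(a, b):\n    """Return a + b."""\n    return a + b'
    return "def add(a, b):\n    return a + b"


def needs_docstring(reply: str) -> Optional[str]:
    return None if '"""' in reply else "Please add a docstring."


final_reply, history = refine_iteratively(
    stub_model, "Write a Python function add(a, b).", needs_docstring
)
print(final_reply)
```

In practice the `review` step is often the developer reading the output. Encoding it as a function simply makes the feedback loop explicit and bounded by `max_turns`.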

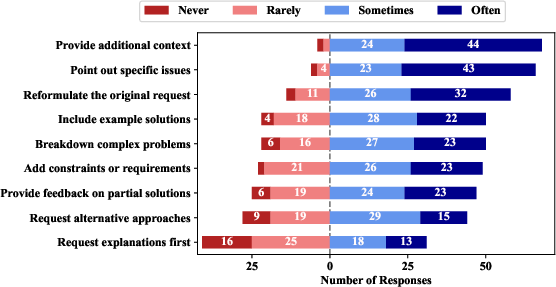

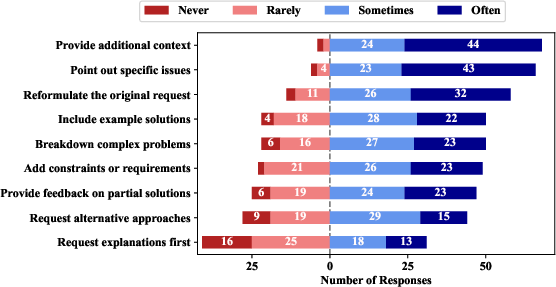

When GenAI output falls short, developers most often respond by supplying additional context and feedback, which underscores an interactive approach to handling errors.

Figure 5: Error handling strategy usage frequency.

Reliability and Issues

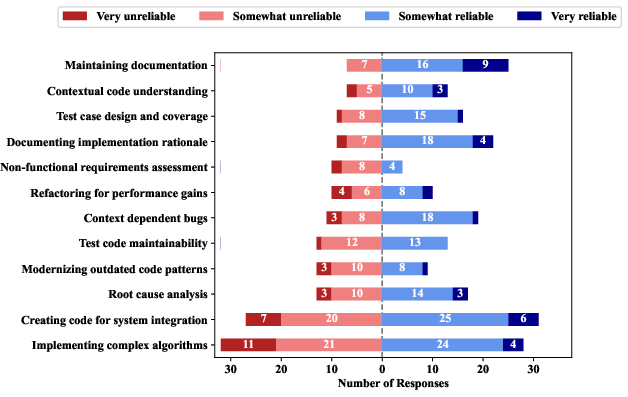

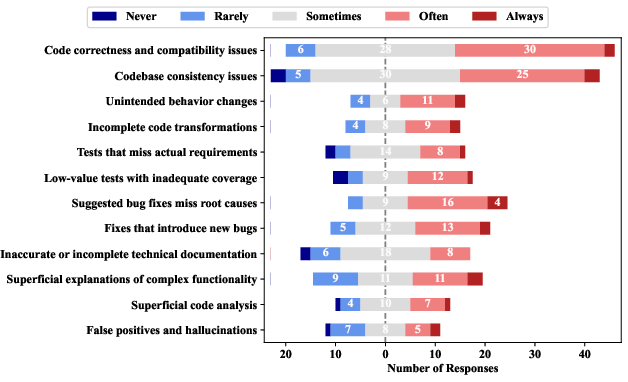

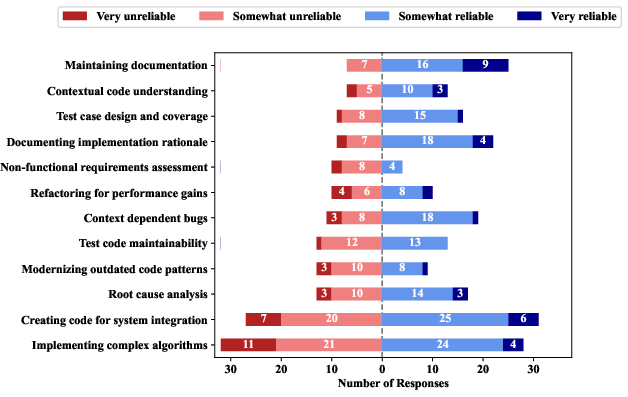

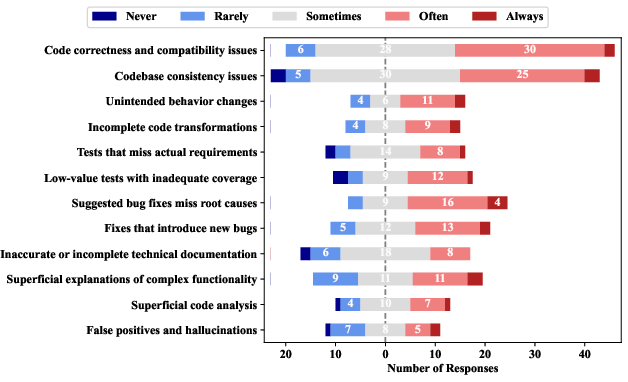

Documentation tasks are perceived as the most reliable, whereas complex code generation is seen as challenging. Senior developers report reliability concerns predominantly with debugging, where a frequent issue is bug fixes that miss the root cause.

Figure 6: Reliability perceptions of GenAI across tasks.

Figure 7: GenAI issue frequency distribution.

Discussion

Developing GenAI Proficiency

The paper identifies a mutual reinforcement between perceived GenAI proficiency and the breadth of tasks for which GenAI is used. Iterative interaction aligns with effective GenAI use and is associated with the productivity gains developers report in their workflows.

Practical Implications

Despite high awareness, prompting techniques see only modest adoption, which points to potential usability or applicability challenges. This gap presents an opportunity for deeper investigation into guidance on, and taxonomies of, prompting strategies.

Future Work

Future research on GenAI interaction methods could refine tools that support iterative conversation and develop comprehensive taxonomies covering the full range of GenAI interaction types.

Conclusion

The study provides a detailed characterization of GenAI integration in software development, emphasizing iterative interaction and highlighting reliability challenges in complex code tasks. It lays a foundation for future improvements in GenAI tool reliability and developer interaction strategies.