- The paper presents a novel robotic pollination framework that fuses 3D plant skeletonization with elastic rod vibration modeling, achieving a 92.5% main-stem grasping success rate.

- It pairs a 7-DoF manipulator with text-prompted detection and segmentation (Grounding DINO and SAM2) to plan collision-free grasps in complex plant architectures.

- The study reports strong simulation-experiment correlations (r > 0.96) for vibration transfer and shows that the pipeline generalizes across morphologically diverse plants, supporting greenhouse deployment.

Vision-Guided Targeted Grasping and Vibration for Robotic Pollination in Controlled Environments

Introduction and Motivation

This paper presents a comprehensive robotic pollination framework for controlled environment agriculture (CEA), addressing the limitations of manual and bumblebee-assisted pollination in greenhouses and indoor farms. The system integrates vision-based 3D plant reconstruction, targeted grasp planning, and physics-based vibration modeling to enable precise, safe, and efficient pollination. The motivation stems from the need to automate labor-intensive pollination tasks, reduce operational costs, and ensure reliable pollination in environments where natural wind and commercial pollinators are unavailable or restricted.

System Architecture and Methodology

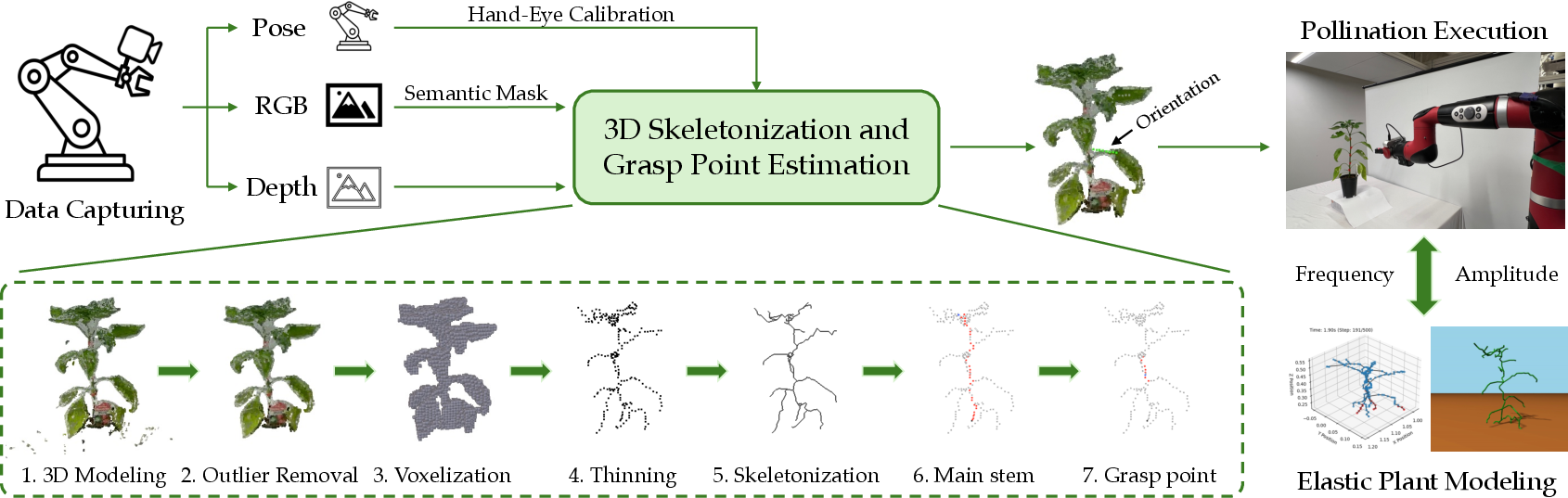

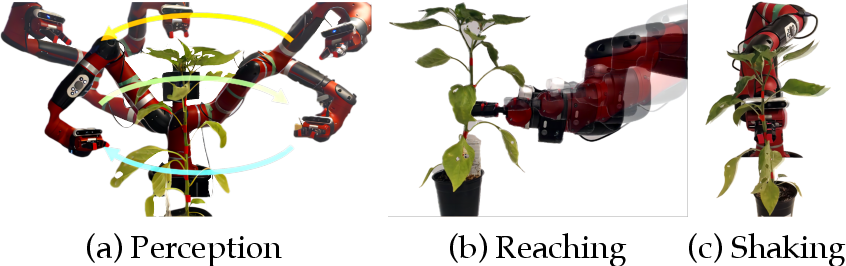

The proposed system consists of a 7-DoF robotic manipulator equipped with an RGB-D sensor and soft grippers. The workflow is divided into two main stages: (i) vision-guided 3D skeletonization and grasp planning, and (ii) elastic rod-based plant dynamics modeling for vibration optimization.

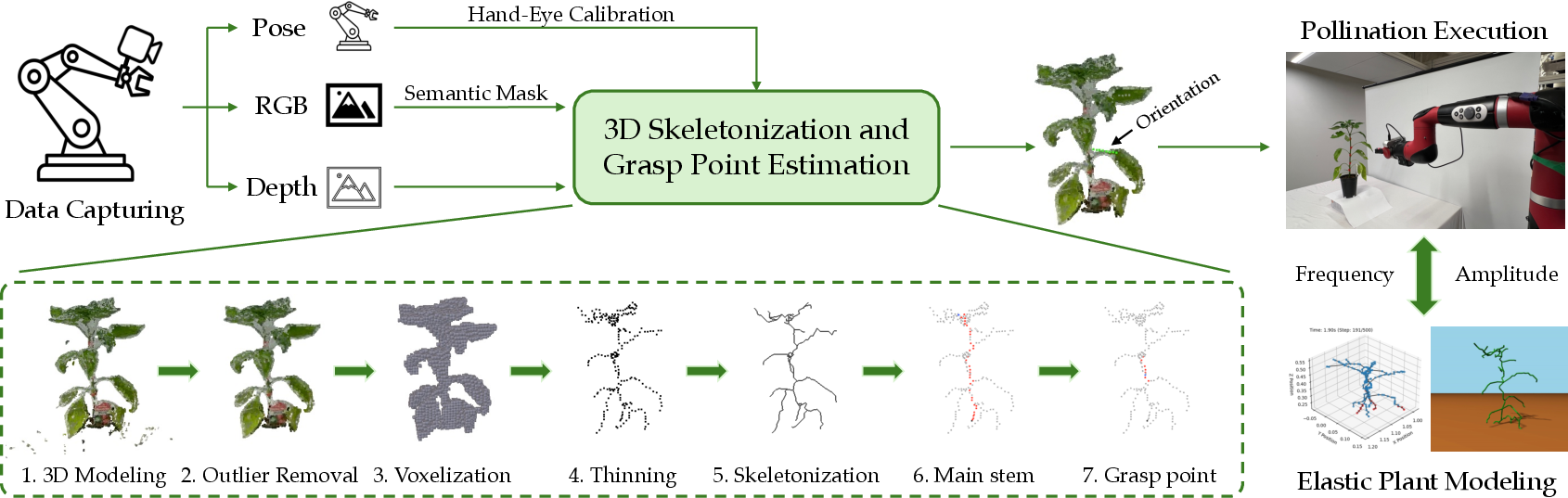

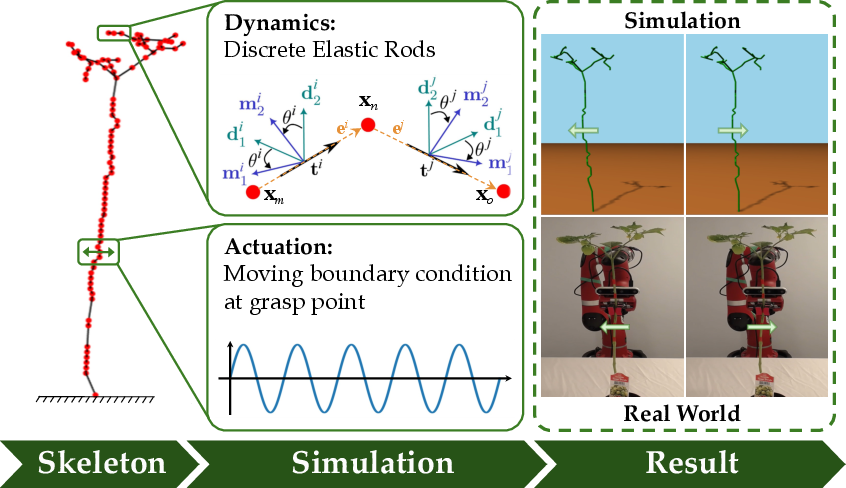

Figure 1: Overview of the robotic pollination system, illustrating the integration of multi-view perception, skeletonization, grasp planning, and vibration modeling.

3D Plant Skeletonization and Grasp Planning

The perception pipeline utilizes multi-view RGB-D images to reconstruct a high-fidelity 3D point cloud of the plant. Semantic segmentation is performed using Grounding DINO and SAM2 to isolate plant structures from background noise. The fused point cloud is processed via voxel grid downsampling and DBSCAN clustering to remove artifacts, followed by conversion to a binary voxel grid.
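A minimal NumPy sketch of the point-cloud clean-up described above follows. It assumes the segmented, fused cloud is already an (N, 3) array in metres. The voxel size, the DBSCAN `eps`/`min_samples` values, the brute-force O(N²) DBSCAN, and all function names are illustrative assumptions, not the paper's code. A real pipeline would more likely call a library implementation.

```python
import numpy as np

def voxel_downsample(points, voxel):
    """Replace all points falling in the same voxel by their centroid."""
    keys = np.floor(points / voxel).astype(np.int64)
    _, inverse = np.unique(keys, axis=0, return_inverse=True)
    inverse = inverse.ravel()                 # one voxel id per input point
    n_vox = inverse.max() + 1
    sums = np.zeros((n_vox, 3))
    np.add.at(sums, inverse, points)          # accumulate coordinates per voxel
    counts = np.bincount(inverse, minlength=n_vox)
    return sums / counts[:, None]

def dbscan_labels(points, eps, min_samples):
    """Brute-force DBSCAN (illustrative); label -1 marks noise."""
    d2 = ((points[:, None, :] - points[None, :, :]) ** 2).sum(-1)
    neighbors = [np.flatnonzero(row <= eps * eps) for row in d2]  # includes self
    core = np.array([len(nb) >= min_samples for nb in neighbors])
    labels = np.full(len(points), -1)
    cluster = 0
    for i in range(len(points)):
        if labels[i] != -1 or not core[i]:
            continue
        labels[i] = cluster
        stack = [i]
        while stack:
            j = stack.pop()
            if not core[j]:
                continue                      # border points join but do not expand
            for k in neighbors[j]:
                if labels[k] == -1:
                    labels[k] = cluster
                    stack.append(k)
        cluster += 1
    return labels

def keep_largest_cluster(points, eps, min_samples):
    """Drop noise and every cluster except the largest (assumed to be the plant)."""
    labels = dbscan_labels(points, eps, min_samples)
    valid = labels[labels >= 0]
    if valid.size == 0:
        return points[:0]
    return points[labels == np.bincount(valid).argmax()]

def to_binary_grid(points, voxel):
    """Occupancy grid: True where at least one point falls in the voxel."""
    origin = points.min(axis=0)
    idx = np.floor((points - origin) / voxel).astype(np.int64)
    grid = np.zeros(tuple(int(s) for s in idx.max(axis=0) + 1), dtype=bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid, origin

# Synthetic example: a thin vertical "stem" plus two stray points.
rng = np.random.default_rng(0)
stem = np.column_stack([rng.normal(0, 0.002, 500), rng.normal(0, 0.002, 500),
                        rng.uniform(0, 0.3, 500)])
cloud = np.vstack([stem, [[0.2, 0.2, 0.1], [0.25, -0.2, 0.05]]])
down = voxel_downsample(cloud, voxel=0.005)
clean = keep_largest_cluster(down, eps=0.015, min_samples=3)
grid, origin = to_binary_grid(clean, voxel=0.005)
```

The binary grid produced here is the kind of input a 3D thinning step would consume. The thinning itself is not sketched.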

A 3D thinning algorithm extracts a one-voxel-thick skeleton, which is then simplified using a weighted KNN graph and a minimum spanning tree to identify the main stem. The grasp point is the midpoint of the longest edge on the main stem. A collision-free approach vector is chosen to minimize obstruction from nearby branches. The resulting grasp pose (3D position and orientation) is expressed in the robot's coordinate frame so that the 7-DoF manipulator can reach it precisely.
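The stem-extraction and grasp-selection steps can be sketched as follows, assuming the skeleton is an array of voxel-centre coordinates with z pointing up. Several rules here are hypothetical stand-ins for details the summary does not give:

- the root is the lowest skeleton point;
- the main stem is the MST path from the root to the node farthest along the tree;
- the approach direction comes from an angular-clearance heuristic. Among directions perpendicular to the stem, it picks the one that points most directly away from nearby non-stem points.

```python
import heapq
import numpy as np

def knn_graph(points, k):
    """Symmetric k-NN graph: adj[i][j] = Euclidean distance between i and j."""
    d = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    adj = {i: {} for i in range(len(points))}
    for i in range(len(points)):
        for j in np.argsort(d[i])[1:k + 1]:     # index 0 is the point itself
            adj[i][int(j)] = adj[int(j)][i] = float(d[i, j])
    return adj

def prim_mst(adj, root):
    """Prim's algorithm; returns {node: parent} in the order nodes join the tree."""
    parent = {root: None}
    heap = [(w, root, j) for j, w in adj[root].items()]
    heapq.heapify(heap)
    while heap:
        _, i, j = heapq.heappop(heap)
        if j in parent:
            continue
        parent[j] = i
        for nxt, w in adj[j].items():
            if nxt not in parent:
                heapq.heappush(heap, (w, j, nxt))
    return parent

def main_stem_path(points, parent):
    """Hypothetical rule: main stem = tree path from root to the farthest node."""
    dist = {}
    for node, par in parent.items():            # parents always precede children
        step = 0.0 if par is None else float(np.linalg.norm(points[node] - points[par]))
        dist[node] = step if par is None else dist[par] + step
    path = [max(dist, key=dist.get)]
    while parent[path[-1]] is not None:
        path.append(parent[path[-1]])
    return path[::-1]                           # root -> tip

def grasp_point(points, path):
    """Midpoint of the longest main-stem edge, plus that edge's direction."""
    _, a, b = max((float(np.linalg.norm(points[b] - points[a])), a, b)
                  for a, b in zip(path, path[1:]))
    return 0.5 * (points[a] + points[b]), points[b] - points[a]

def approach_vector(points, path, center, stem_dir, reach=0.15, n_candidates=16):
    """Hypothetical heuristic: among directions perpendicular to the stem, minimise
    the largest cosine between the direction and any nearby non-stem point."""
    s = stem_dir / np.linalg.norm(stem_dir)
    helper = np.array([1.0, 0.0, 0.0]) if abs(s[0]) < 0.9 else np.array([0.0, 1.0, 0.0])
    u = np.cross(s, helper)
    u /= np.linalg.norm(u)
    v = np.cross(s, u)                          # u, v span the plane normal to the stem
    rel = np.delete(points, path, axis=0) - center
    dist = np.linalg.norm(rel, axis=1)
    rel, dist = rel[dist < reach], dist[dist < reach]
    best, best_score = None, np.inf
    for theta in np.linspace(0.0, 2.0 * np.pi, n_candidates, endpoint=False):
        a = np.cos(theta) * u + np.sin(theta) * v
        score = np.max(rel @ a / dist) if len(rel) else -1.0
        if score < best_score:
            best, best_score = a, score
    return best, best_score

def grasp_pose(center, stem_dir, approach):
    """4x4 pose: gripper z toward the stem, gripper x along the stem."""
    z = -approach
    x = stem_dir / np.linalg.norm(stem_dir)
    T = np.eye(4)
    T[:3, :3] = np.column_stack([x, np.cross(z, x), z])
    T[:3, 3] = center
    return T

# Synthetic skeleton: a stem with one wider gap, plus a side branch toward +x.
stem = np.array([[0.0, 0.0, z] for z in (0.0, 0.03, 0.06, 0.09, 0.15,
                                          0.18, 0.21, 0.24, 0.27, 0.30)])
branch = np.array([[0.03, 0.0, 0.19], [0.06, 0.0, 0.20], [0.09, 0.0, 0.21]])
skeleton = np.vstack([stem, branch])
root = int(np.argmin(skeleton[:, 2]))
path = main_stem_path(skeleton, prim_mst(knn_graph(skeleton, k=4), root))
center, stem_dir = grasp_point(skeleton, path)
approach, score = approach_vector(skeleton, path, center, stem_dir)
T = grasp_pose(center, stem_dir, approach)
```

In this toy case the grasp lands in the stem's widest gap at z = 0.12. The chosen approach points to −x, away from the branch.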

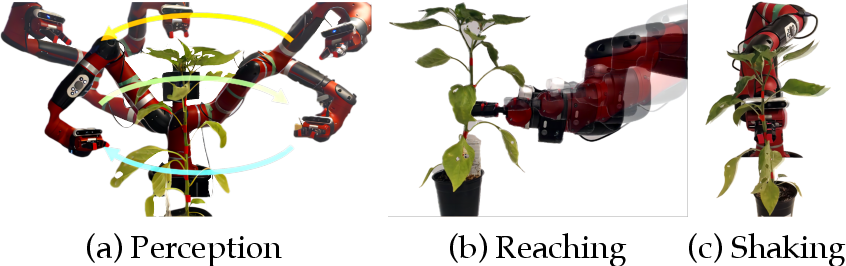

Figure 2: The robotic pollination process, showing Perception, Reaching, and Shaking stages for end-to-end execution.

Elastic Rod-Based Plant Dynamics Modeling

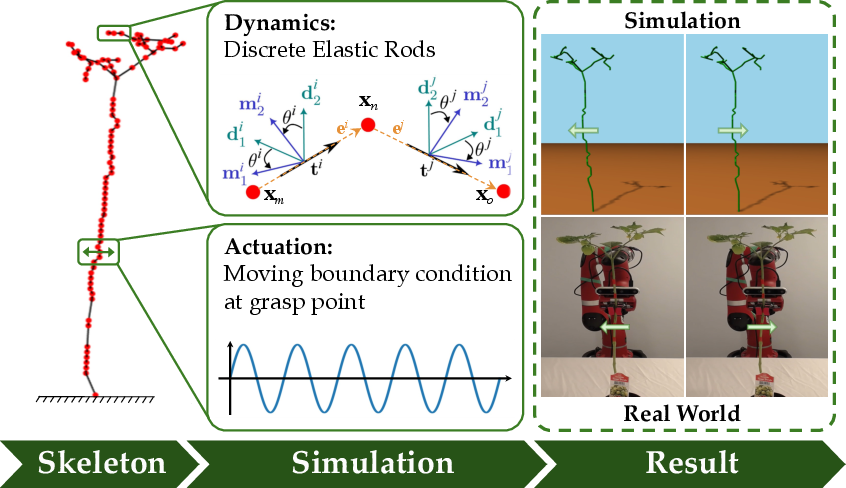

The plant is modeled as a network of one-dimensional elastic rods using the Discrete Elastic Rod (DER) framework, extended via PyDiSMech to handle branched structures and compliant joints. The model simulates stretching and bending deformations, with material parameters (density, Young’s modulus) estimated from physical measurements and vibration experiments.
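To make the stretching and bending terms concrete, here is a sketch of the discrete energies for a single unbranched rod. It follows the widely used DER discretization: axial strain per edge for stretching, and the discrete curvature binormal at interior vertices for bending.

It omits twist, branching, and the compliant joints that PyDiSMech adds. It does not use PyDiSMech's API. `EA` and `EI` are placeholder stiffnesses, not the paper's measured parameters.

```python
import numpy as np

def stretching_energy(x, x_rest, EA):
    """0.5 * EA * sum(strain^2 * rest_length) over edges."""
    l = np.linalg.norm(np.diff(x, axis=0), axis=1)
    l0 = np.linalg.norm(np.diff(x_rest, axis=0), axis=1)
    return 0.5 * EA * np.sum((l / l0 - 1.0) ** 2 * l0)

def curvature_binormals(x):
    """Discrete curvature binormal 2 e_prev x e_next / (|e_prev||e_next| + e_prev.e_next)."""
    e = np.diff(x, axis=0)
    e_prev, e_next = e[:-1], e[1:]
    den = (np.linalg.norm(e_prev, axis=1) * np.linalg.norm(e_next, axis=1)
           + np.sum(e_prev * e_next, axis=1))
    return 2.0 * np.cross(e_prev, e_next) / den[:, None]

def bending_energy(x, x_rest, EI):
    """0.5 * EI * sum(|kb - kb_rest|^2 / voronoi_length) over interior vertices."""
    kb, kb0 = curvature_binormals(x), curvature_binormals(x_rest)
    l0 = np.linalg.norm(np.diff(x_rest, axis=0), axis=1)
    voronoi = 0.5 * (l0[:-1] + l0[1:])
    return 0.5 * EI * np.sum(np.sum((kb - kb0) ** 2, axis=1) / voronoi)

# Straight 0.3 m rest shape with 11 nodes; unit stiffnesses for illustration.
rest = np.column_stack([np.zeros(11), np.zeros(11), np.linspace(0.0, 0.3, 11)])
stretched = rest * 1.01                          # uniform 1% axial strain
R = 0.5                                          # bend into a circular arc of radius R
s = np.linspace(0.0, 0.3, 11)
arc = np.column_stack([R * (1 - np.cos(s / R)), np.zeros(11), R * np.sin(s / R)])
E_stretch = stretching_energy(stretched, rest, EA=1.0)
E_bend = bending_energy(arc, rest, EI=1.0)
```

As a sanity check, the arc's bending energy approaches the continuum value 0.5·EI·L/R² over the interior vertices' Voronoi length.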

The vibration actuation is emulated by prescribing time-varying boundary conditions at the grasp node. The governing equations of motion are solved implicitly, capturing the dynamic response of the plant and enabling optimization of vibration parameters to maximize flower motion while minimizing the risk of damage.
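The effect of a prescribed, time-varying boundary condition at the grasp node can be illustrated with a deliberately simplified stand-in for the DER model. The stand-in is a planar, linearized rod (small transverse deflections, bending only) integrated with backward Euler.

With M·a + C·v + K·w = 0, one implicit step solves (M + dt·C + dt²·K)·v₁ = M·v₀ − dt·K·w₀. Rows for prescribed nodes are replaced by the known velocities. Every parameter value below (stiffness, mass per length, damping, frequency, grasp index) is hypothetical and chosen only so the sketch runs. This is not the paper's solver.

```python
import numpy as np

def simulate_tip_amplitude(n_nodes=31, length=0.3, EI=0.05, rho_A=0.05,
                           damping=2.0, grasp_idx=10, amp=0.005, freq=5.0,
                           dt=1e-3, t_end=2.0):
    """Return the late-time peak tip deflection of a base-clamped rod whose
    grasp node is driven sinusoidally (all parameters hypothetical)."""
    h = length / (n_nodes - 1)
    B = np.zeros((n_nodes - 2, n_nodes))         # curvature operator
    for i in range(n_nodes - 2):
        B[i, i:i + 3] = np.array([1.0, -2.0, 1.0]) / h ** 2
    K = EI * h * B.T @ B                         # from energy 0.5*EI*sum(kappa^2)*h
    M = rho_A * h * np.eye(n_nodes)              # lumped mass
    C = damping * M                              # mass-proportional damping
    A = M + dt * C + dt ** 2 * K                 # backward-Euler system matrix
    presc = np.array([0, 1, grasp_idx])          # clamped base (2 nodes) + grasp node
    free = np.setdiff1d(np.arange(n_nodes), presc)
    A_ff, A_fp = A[np.ix_(free, free)], A[np.ix_(free, presc)]
    w, v, tip = np.zeros(n_nodes), np.zeros(n_nodes), []
    for step in range(1, int(round(t_end / dt)) + 1):
        w_p = np.array([0.0, 0.0, amp * np.sin(2 * np.pi * freq * step * dt)])
        v_p = (w_p - w[presc]) / dt              # velocity that hits the prescribed target
        rhs = M @ v - dt * (K @ w)
        v = np.empty(n_nodes)
        v[presc] = v_p
        v[free] = np.linalg.solve(A_ff, rhs[free] - A_fp @ v_p)
        w = w + dt * v
        tip.append(w[-1])
    tip = np.array(tip)
    return float(np.max(np.abs(tip[len(tip) // 2:])))

for g in (5, 10, 20, 25):
    print(g, simulate_tip_amplitude(grasp_idx=g))
```

Because the sketch is linear and starts at rest, doubling `amp` doubles the computed tip amplitude (up to rounding). This is a limiting case of the amplitude correlation reported in the experiments. Sweeping `grasp_idx` gives a qualitative analogue of the grasp-location study, but this toy model is not calibrated to reproduce the reported trend.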

Figure 3: Simulation workflow using PyDiSMech, demonstrating the modeling of plant skeletons and vibration actuation.

Experimental Validation

Skeletonization and Generalizability

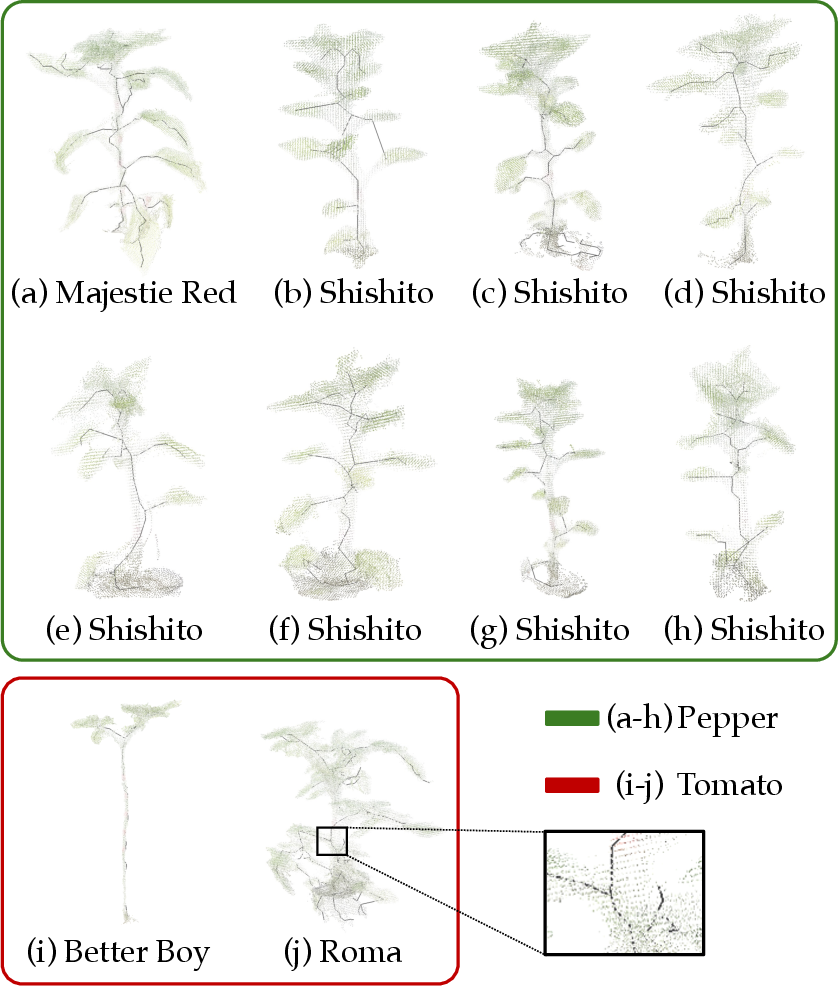

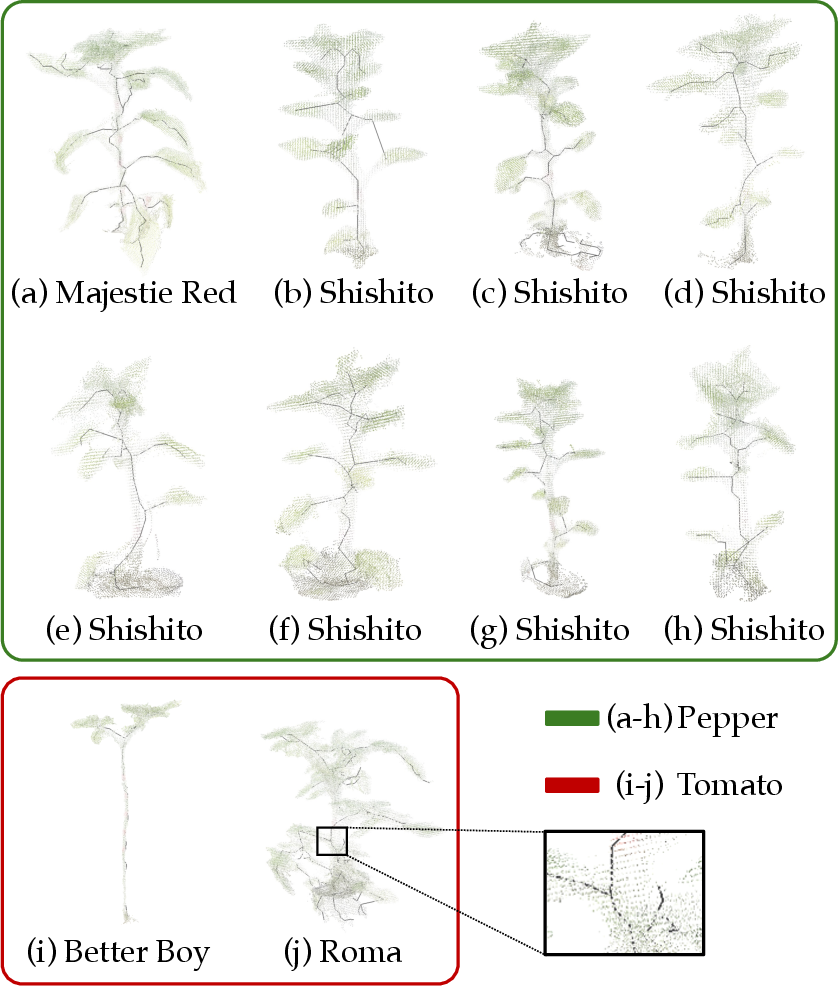

The skeletonization algorithm was evaluated on 10 morphologically diverse plants, consistently producing well-aligned skeletons using a single parameter set. Minor segmentation bleed and depth sensing inaccuracies were noted, but these did not significantly impact main stem identification in typical CEA scenarios.

Figure 4: Generalizability of the skeletonization algorithm across 10 diverse plant specimens.

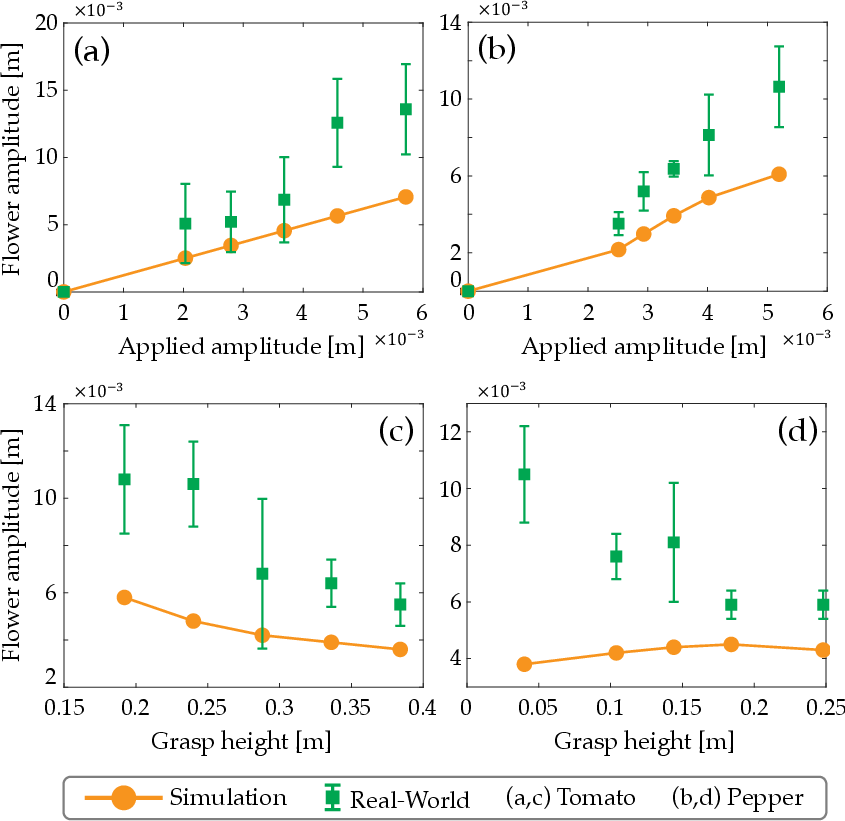

Vibration Transfer and Sim-to-Real Analysis

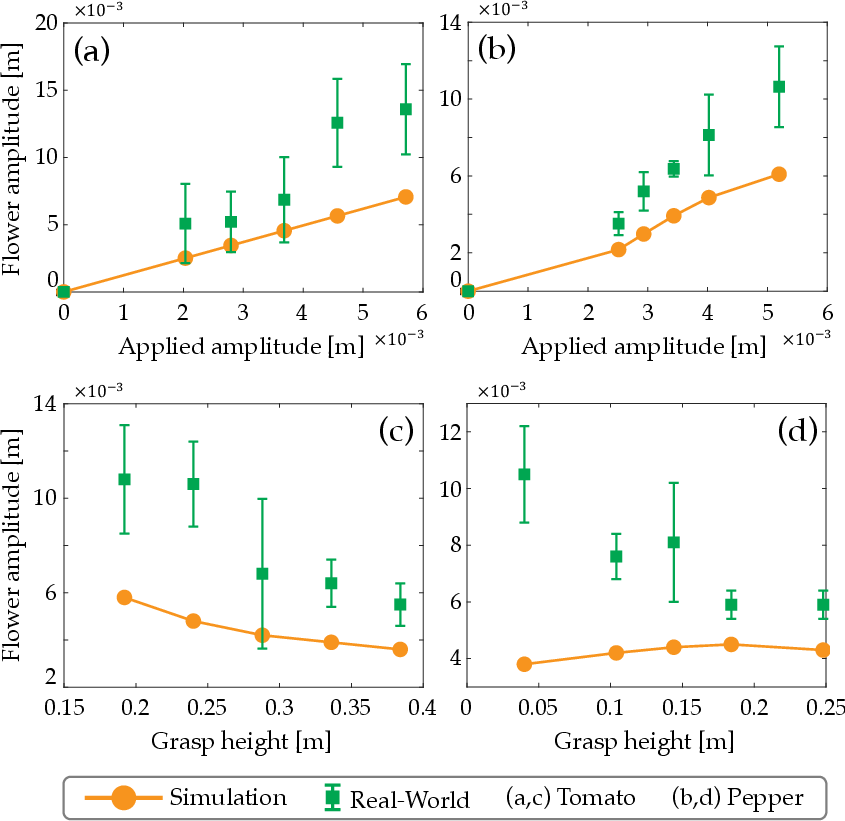

Controlled vibration experiments on tomato and pepper plants showed a strong positive correlation (r > 0.96) between applied vibration amplitude and flower oscillation amplitude. The DER-based simulations reproduced the experimental trends but underpredicted flower amplitude by 45% on average. The authors attribute this gap to model simplifications such as neglecting stem tapering and local flexibility.
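The two statistics quoted above can be computed as follows. The Pearson coefficient is standard. The summary does not say how the 45% underprediction was defined, so `mean_underprediction` assumes the mean relative shortfall 1 - sim/exp over matched measurements. The arrays are made-up placeholders, not data from the study.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length samples."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    xc, yc = x - x.mean(), y - y.mean()
    return float(xc @ yc / np.sqrt((xc @ xc) * (yc @ yc)))

def mean_underprediction(sim, exp):
    """Assumed definition: average of 1 - sim/exp over matched measurements."""
    sim, exp = np.asarray(sim, float), np.asarray(exp, float)
    return float(np.mean(1.0 - sim / exp))

applied = np.array([1.0, 2.0, 3.0, 4.0])      # hypothetical applied amplitudes
measured = np.array([0.9, 2.1, 2.8, 4.1])     # hypothetical flower amplitudes
simulated = 0.55 * measured                   # hypothetical simulation output
print(pearson_r(applied, measured), mean_underprediction(simulated, measured))
```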

The dependence of flower amplitude on grasping location was also validated, with amplitude decreasing as the grasp point moved closer to the flower. Quantitative agreement was observed for tomato (r≈0.92), while pepper exhibited larger deviations due to complex branching.

Figure 5: Comparison of experimental and simulated flower motion, showing amplitude correlations and grasp location effects.

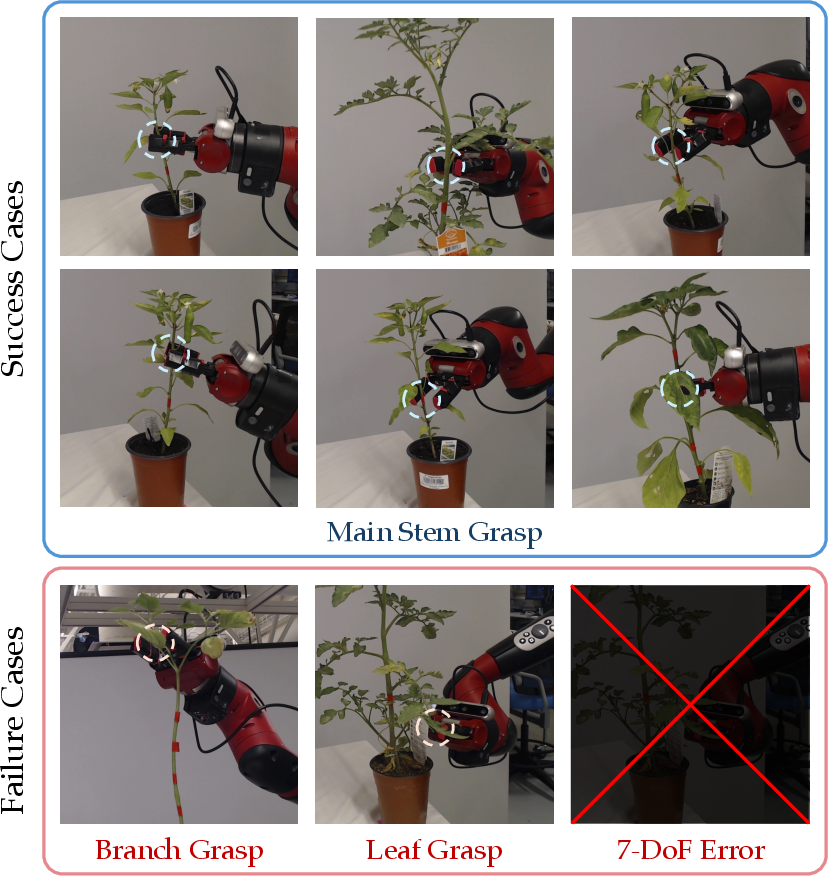

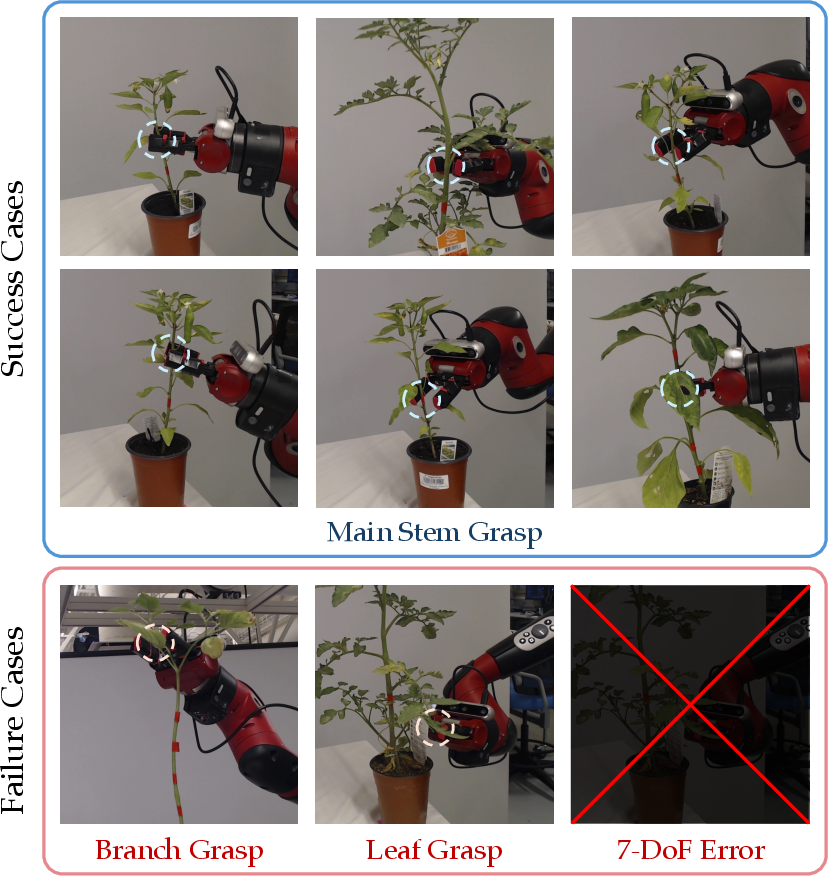

End-to-End Robotic Pollination Trials

Forty trials on 10 plants yielded a 92.5% main-stem grasping success rate. Failures were primarily due to grasping a branch or leaf instead of the main stem, or to manipulator pose errors. The system generalized across plant morphologies and approach angles.

Figure 6: Qualitative results of real-world experiments, highlighting grasping success and failure cases.

- Grasping Success Rate: 92.5% across 40 trials (96.9% for pepper, 75% for tomato).

- Simulation-Experiment Correlation: r>0.96 for vibration amplitude transfer; amplitude underprediction of 40–55%.

- Computational Requirements: Perception and planning ran on an Intel i9-9900KF CPU and an RTX 2080 Ti GPU; skeletonization and grasp planning completed within 75 seconds per trial.

- Scalability: The framework is modular and can be adapted to other crops with similar stem/branch structures; upgrading depth sensors (e.g., RealSense D405) is recommended for improved near-field accuracy.
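The per-crop grasping rates can be cross-checked against the aggregate. The brute-force search below looks for trial and success counts whose rates match the reported 92.5%, 96.9%, and 75% to within 0.05 percentage points. The only consistent split is 32 pepper trials (31 successes) and 8 tomato trials (6 successes). This is inferred arithmetic, not a breakdown stated in the summary, and the function name is hypothetical.

```python
def consistent_splits(total_trials, total_rate, rate_a, rate_b, tol=0.0005):
    """Enumerate (n_a, s_a, n_b, s_b) whose success rates match the reported ones."""
    out = []
    for n_a in range(1, total_trials):
        n_b = total_trials - n_a
        for s_a in range(n_a + 1):
            for s_b in range(n_b + 1):
                if (abs(s_a / n_a - rate_a) <= tol
                        and abs(s_b / n_b - rate_b) <= tol
                        and abs((s_a + s_b) / total_trials - total_rate) <= tol):
                    out.append((n_a, s_a, n_b, s_b))
    return out

splits = consistent_splits(40, 0.925, 0.969, 0.75)   # pepper = a, tomato = b
print(splits)
```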

Implications and Future Directions

Integrating vision-guided grasp planning with physics-based vibration modeling advances autonomous pollination for CEA. The simulations reproduce experimental trends, though not absolute amplitudes. They can therefore support data-driven optimization of pollination strategies, reducing reliance on manual labor and the risk of flower damage. The framework's generalization across plant morphologies and its high grasping success rate suggest potential for large-scale greenhouse deployment.

Future work should focus on:

- Refining vibration parameters based on fruit-set rates and long-term yield outcomes.

- Enhancing skeletonization accuracy with improved depth sensing and multi-modal fusion.

- Extending the system to handle more complex plant architectures and additional crop species.

- Investigating closed-loop feedback for adaptive vibration control and real-time pollination monitoring.

Conclusion

This paper introduces a vision-guided robotic pollination system that leverages 3D skeletonization and elastic rod modeling for targeted grasping and vibration in controlled environments. The approach achieves high grasping accuracy (92.5% main-stem success) and effective vibration transfer, validated through real-world and simulation experiments. The framework offers a scalable route to automated pollination, with clear pathways for further optimization and deployment in sustainable agriculture.