- The paper introduces a continuous learning framework that integrates real-time agent feedback to reduce retraining cycles and enhance response accuracy.

- It employs a Unified Knowledge Base and an Agent Annotation Interface to centralize resources and capture diverse feedback for iterative model updates.

- Experimental results demonstrate significant improvements in retrieval accuracy and agent adoption, validating the framework's practical impact on customer support.

Agent-in-the-Loop: A Data Flywheel for Continuous Improvement in LLM-based Customer Support

Introduction

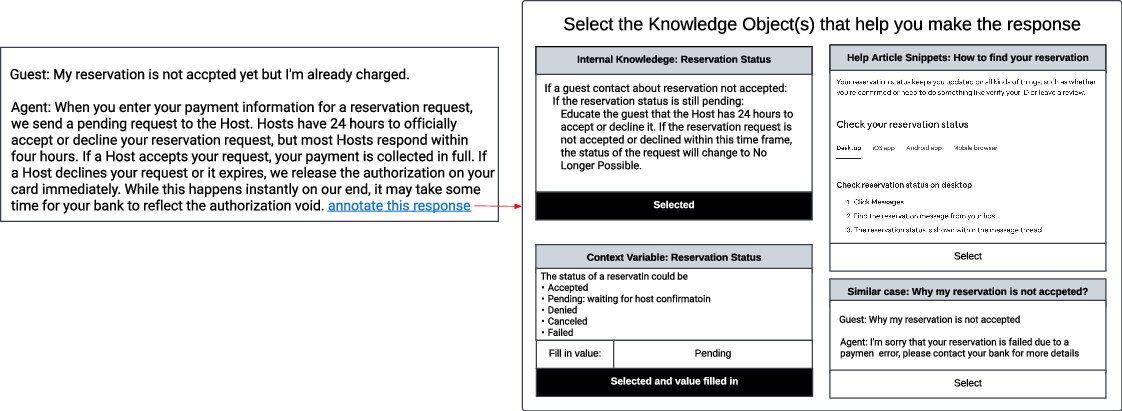

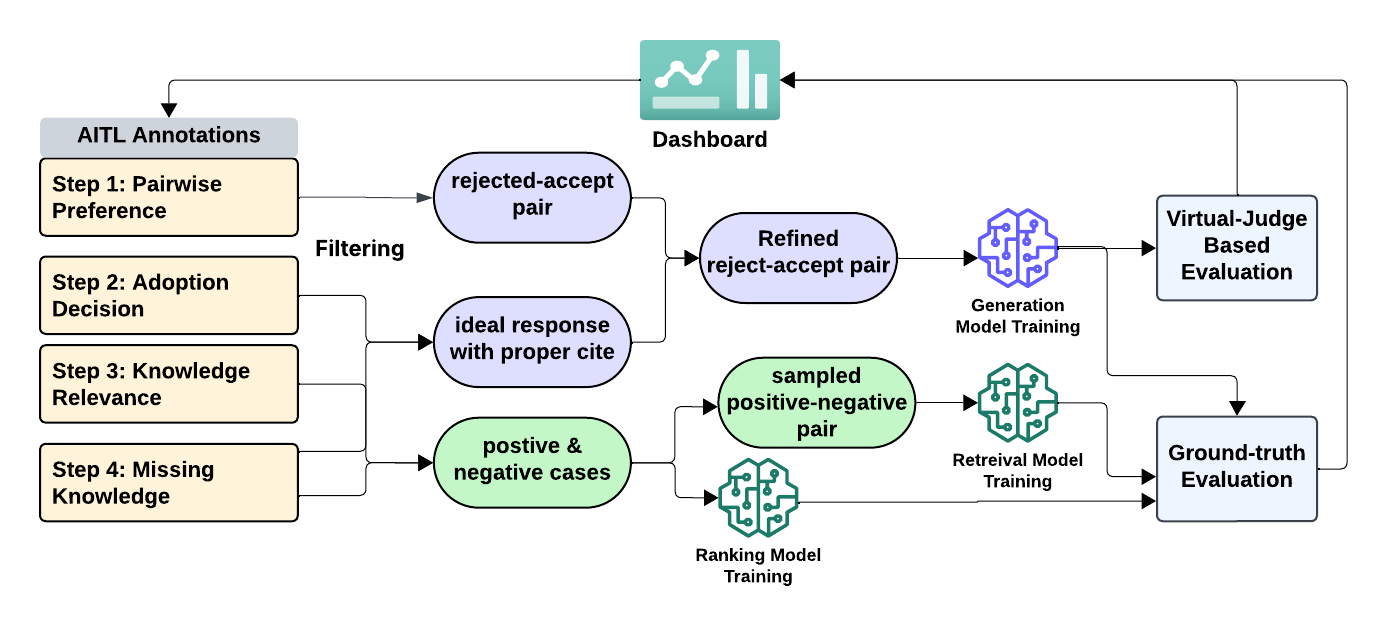

The paper introduces an Agent-in-the-Loop (AITL) framework designed to enhance LLM-based customer support systems through a continuous data flywheel. This approach directly integrates live feedback from customer support operations, focusing on four annotation types: pairwise response preferences, agent adoption decisions and rationales, knowledge relevance checks, and identification of missing knowledge. These annotations feed into an iterative model update cycle, reducing retraining times from months to weeks and improving performance across several key metrics.
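To make the four annotation types concrete, here is a minimal sketch of how one agent's feedback on a single case might be recorded. The class name, field names, and the "A"/"B" labelling are illustrative assumptions; the summary does not describe the paper's actual schema.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class AgentAnnotation:
    """Hypothetical record of one agent's feedback on one support case."""

    case_id: str
    # 1. Pairwise response preference: which of two candidate replies is better.
    preferred_response: str  # assumed to be "A" or "B"
    # 2. Adoption decision and the agent's rationale for it.
    adopted: bool
    adoption_rationale: str
    # 3. Knowledge relevance checks: article id -> was it relevant?
    knowledge_relevance: Dict[str, bool] = field(default_factory=dict)
    # 4. Missing knowledge: free-text note, or None if nothing was missing.
    missing_knowledge: Optional[str] = None

    def validate(self) -> None:
        """Raise ValueError if the preference label is not one of the two candidates."""
        if self.preferred_response not in ("A", "B"):
            raise ValueError(f"unexpected preference: {self.preferred_response!r}")
```

Keeping all four signals in one record per case is only one possible design; separate tables per annotation type would work equally well.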

System Architecture

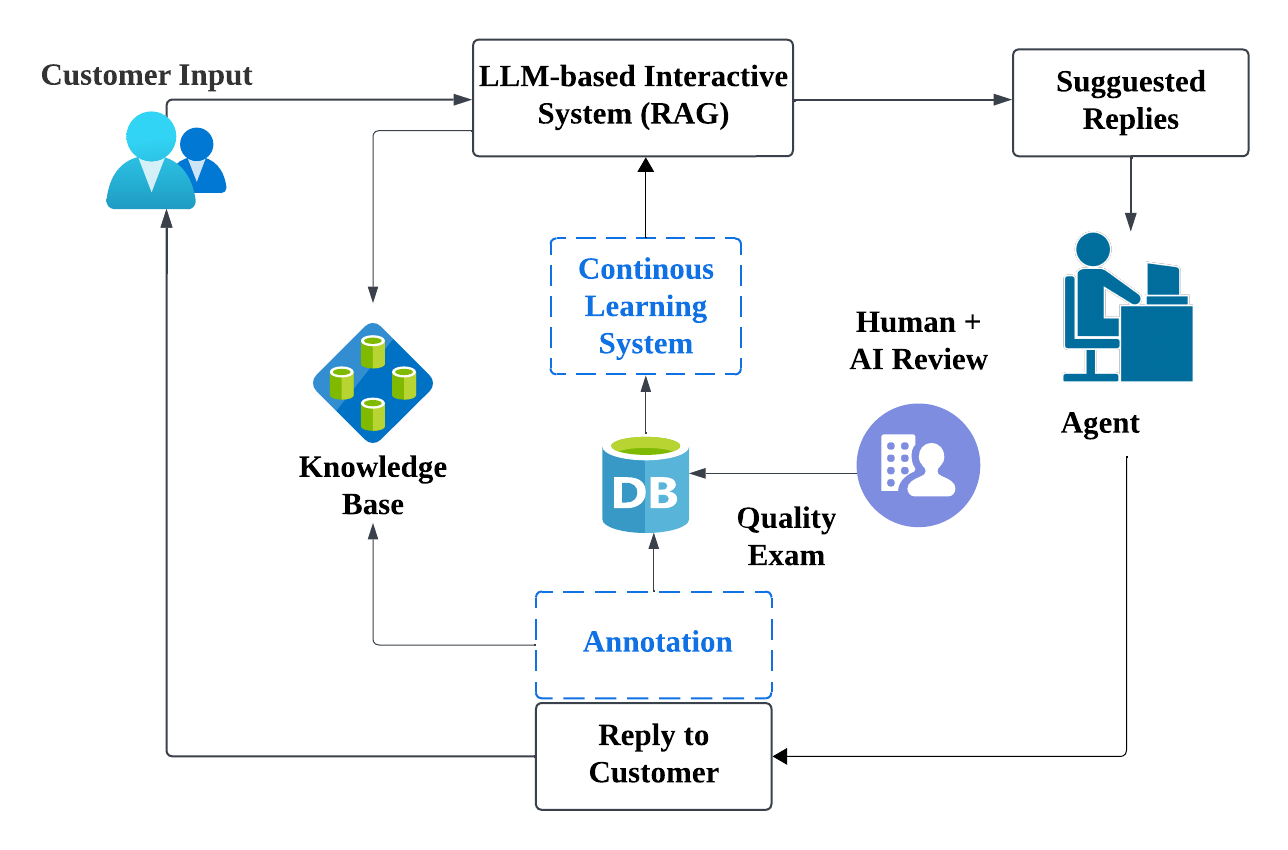

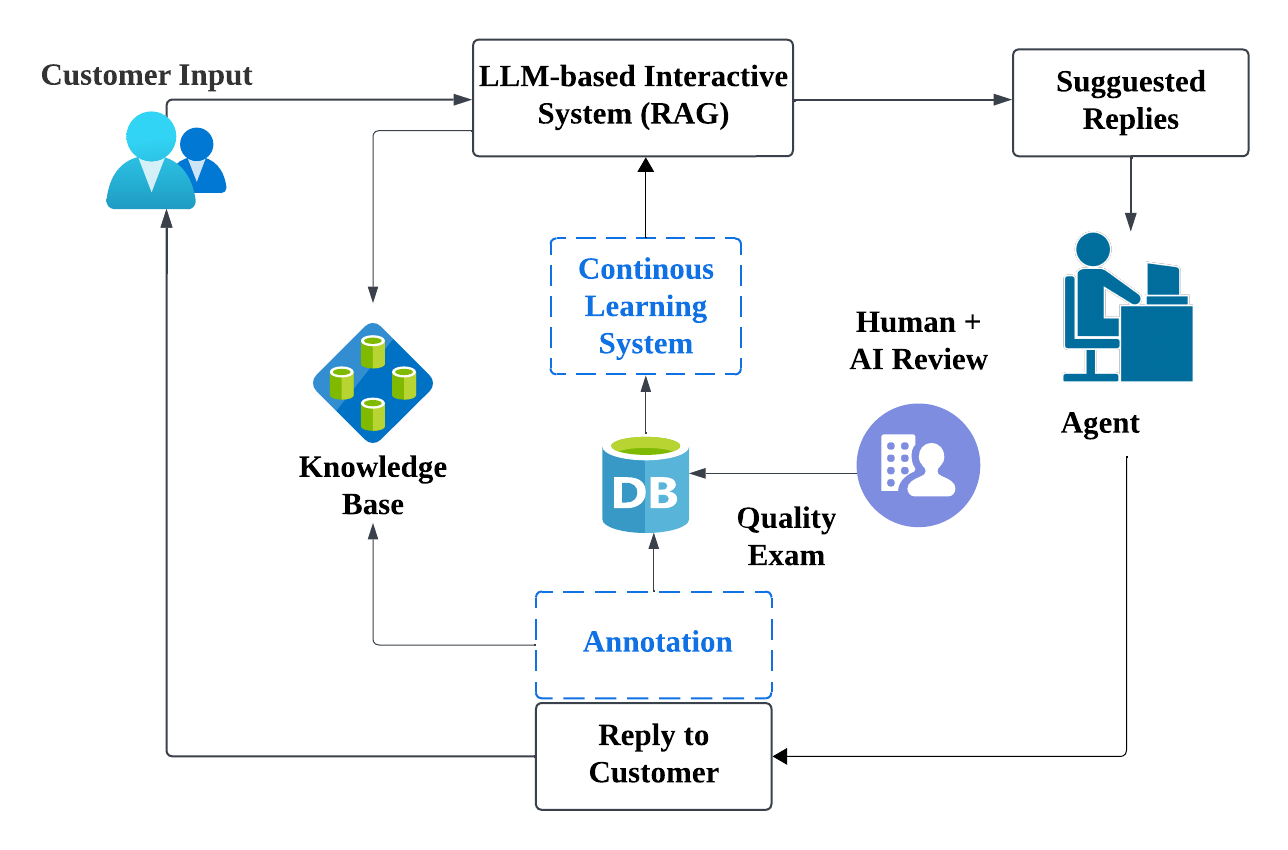

Figure 1: Overview of the agent-in-the-loop architecture.

The AITL framework involves a structured workflow that begins with customer interactions and integrates human annotations into the model training pipeline. This system is supported by a Unified Knowledge Base that combines diverse resources, facilitating effective response generation.

Methodology

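The AITL system captures real-time feedback from agents during customer interactions. Its key components are the Unified Knowledge Base, which centralizes support resources, and the Agent Annotation Interface, which captures the four annotation types: pairwise response preferences, agent adoption decisions and rationales, knowledge relevance checks, and identification of missing knowledge.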
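As an illustration of how captured feedback could flow into an iterative update cycle, the sketch below splits raw annotation records into three datasets: preference pairs, retrieval relevance labels, and a list of reported knowledge gaps. The record keys (`query`, `responses`, `preferred`, `relevance`, `missing_knowledge`) and the function name are hypothetical, and the routing itself is an assumption rather than the paper's documented pipeline.

```python
from typing import Dict, List


def build_training_sets(annotations: List[dict]) -> Dict[str, list]:
    """Split raw annotation dicts into per-purpose datasets (illustrative only)."""
    sets: Dict[str, list] = {
        "preference_pairs": [],
        "retrieval_labels": [],
        "knowledge_gaps": [],
    }
    for ann in annotations:
        responses = ann["responses"]  # assumed shape: {"A": text, "B": text}
        winner = ann["preferred"]
        loser = "B" if winner == "A" else "A"
        # Pairwise preference -> (chosen, rejected) pair for the same query.
        sets["preference_pairs"].append(
            {
                "query": ann["query"],
                "chosen": responses[winner],
                "rejected": responses[loser],
            }
        )
        # Relevance checks -> (query, document id, 0/1 label) triples.
        for doc_id, relevant in ann.get("relevance", {}).items():
            sets["retrieval_labels"].append((ann["query"], doc_id, int(relevant)))
        # Missing-knowledge notes -> backlog for knowledge base maintainers.
        if ann.get("missing_knowledge"):
            sets["knowledge_gaps"].append(ann["missing_knowledge"])
    return sets
```

Each output list can then be consumed on its own schedule, which is one way a feedback loop like this could shorten the gap between collecting annotations and retraining.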

Experimental Results

The AITL system was evaluated in a US-based pilot and showed significant improvements over baseline systems in three areas:

- Retrieval Accuracy: Recall@75 improved by 11.7% and Precision@8 by 14.8%, indicating that real-time agent feedback improves the quality of retrieved knowledge.

- Generation Quality: Notable increases in response helpfulness and citation correctness highlight the impact of immediate human feedback on generation models.

- Agent Adoption Rates: Real-time annotation led to a 4.5% increase in adoption rates, confirming the system’s efficacy in aligning model outputs with agent needs.
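For readers unfamiliar with the retrieval metrics cited above, the following sketch shows the standard definitions of Precision@k and Recall@k for a single query. The function names and the example document ids are illustrative; how the paper aggregates these metrics across queries is not stated in this summary.

```python
from typing import Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0  # convention chosen here: no relevant documents, recall is 0
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)


# Example: 4 retrieved documents, 3 relevant documents overall.
ranked_docs = ["d1", "d2", "d3", "d4"]
relevant_docs = {"d1", "d4", "d9"}
p_at_2 = precision_at_k(ranked_docs, relevant_docs, 2)  # 1 hit in top 2
r_at_4 = recall_at_k(ranked_docs, relevant_docs, 4)     # 2 of 3 relevant found
```

A small k such as 8 emphasizes how clean the top of the ranking is, while a large k such as 75 emphasizes whether relevant knowledge is found at all.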

Annotation Timing and Quality

An ablation study on annotation timing showed that annotating immediately improved the identification of missing knowledge while leaving preference judgments unaffected. This suggests that different annotation strategies may help manage annotation workload across customer support channels.

Conclusions and Future Work

AITL represents a significant step forward in integrating human-in-the-loop feedback within LLM-driven systems. Future work could focus on scaling the annotation framework, integrating product-embedded AITL for efficiency gains, and exploring automation in dataset curation. Continued adaptation to multilingual support contexts and the long-term sustainability of annotation workflows remain areas of interest.