- The paper introduces a 'super-optimizer' for streaming queries that invoke multimodal LLMs (MLLMs), achieving up to 10× speedup over naive execution.

- The paper details a three-phase semantic optimization procedure (world-knowledge extraction, operator selection, and plan update) that uses an LLM to rewrite query plans in multimodal streaming systems.

- The paper validates its approach with a prototype built on Apache Flink, demonstrating significant throughput improvements while maintaining high result accuracy.

Towards a Multimodal Stream Processing System

Introduction

The paper "Towards a Multimodal Stream Processing System" (2510.14631) explores real-time query processing across multiple modalities by embedding multimodal LLMs (MLLMs) into stream processing systems as first-class operators. Unlike traditional database systems, streaming systems must meet stringent latency and throughput requirements, which expensive MLLM inference makes hard to satisfy. A prototype named 'Sa' shows that a combination of optimizations yields substantial throughput improvements, and it grounds the paper's vision for scalable, efficient multimodal stream processing systems.

Vision for Multimodal Stream Processing

The paper proposes incorporating MLLMs into stream processing systems so that a single system can query varied data forms such as text, images, and audio.

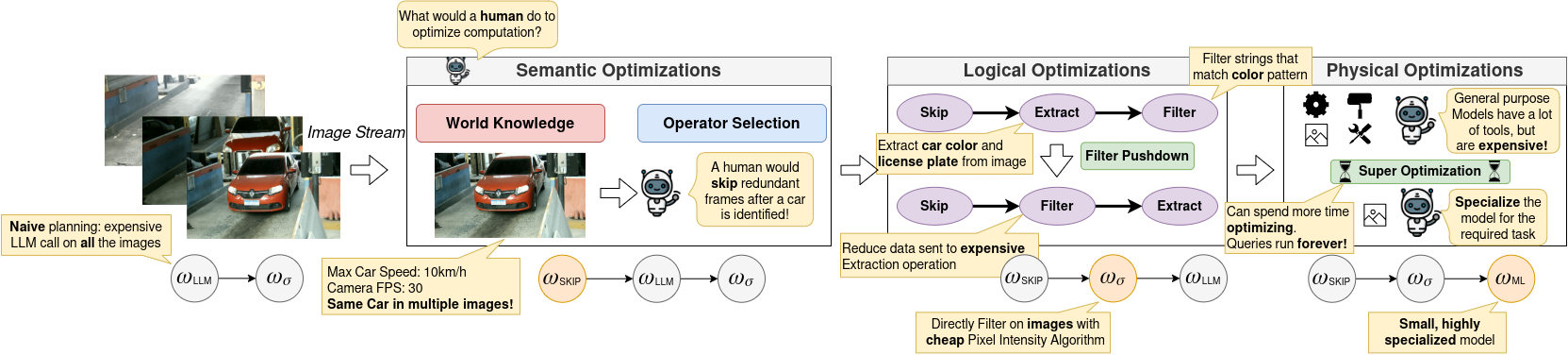

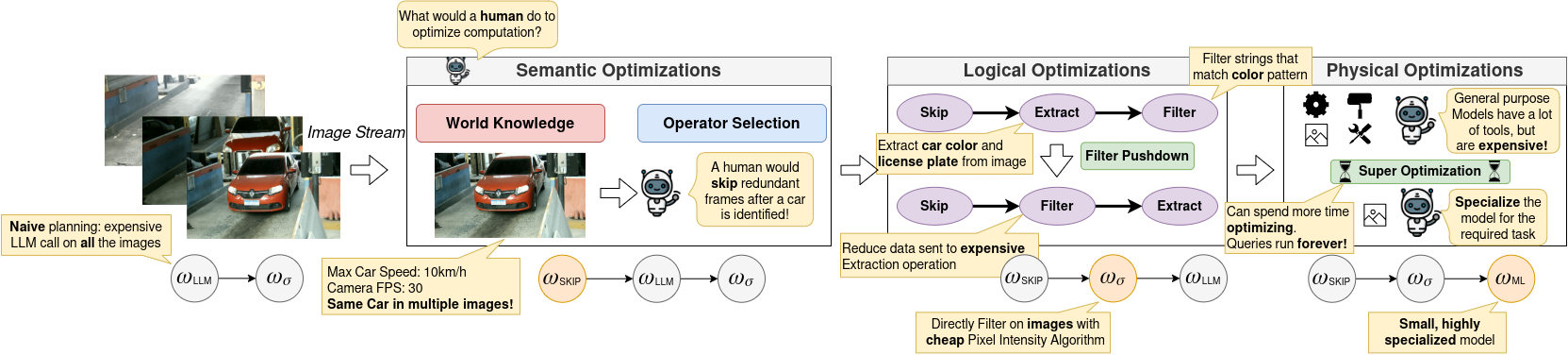

Figure 1: Overview of Sa, a super-optimizer to enable multimodal streaming systems through semantic, logical, and physical optimizations.

To make MLLMs practical in streaming environments, the paper introduces the concept of a 'super-optimizer'. Sa aggressively rewrites query plans over continuous data streams: drawing on an LLM's semantic understanding, it applies systematic transformations similar to those a human expert would make by hand, removing redundant computation so that real-time processing becomes viable. Sa combines the traditional logical and physical optimization phases with a newly introduced semantic optimization phase, and together they raise throughput, which is essential in latency-critical scenarios.

Super-Optimizer Design

Sa uses a structured semantic optimization procedure that relies on an LLM for knowledge extraction and operator selection.

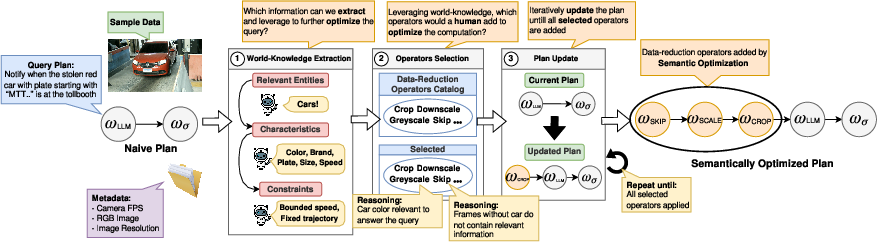

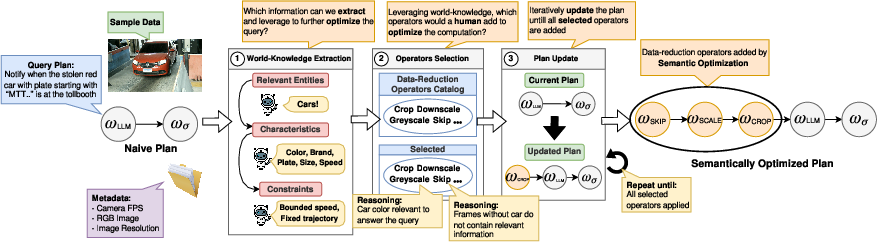

Figure 2: Semantic optimization for tollbooth queries using LLM-guided reasoning for efficient execution.

The optimization procedure consists of three phases:

- World-Knowledge Extraction: Extract relevant semantic attributes using LLM-based reasoning.

- Operator Selection: Identify cost-effective operators that reduce work while retaining accuracy.

- Plan Update: Integrate the selected operators into the existing query plan.
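The three phases above can be sketched as a small Python pipeline. This is a minimal illustration under stated assumptions, not Sa's implementation: the plan is a hypothetical list of operator names, the LLM call is replaced by a stub, and the fact-to-operator table (`RULES`) and every function name are invented for illustration. A real operator-selection phase would presumably weigh cost and accuracy instead of consulting a fixed table.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical plan representation: an ordered list of operator names.
@dataclass
class QueryPlan:
    operators: list[str] = field(default_factory=list)


# Phase 1 (hypothetical): ask an LLM for facts that could make the query cheaper.
def extract_world_knowledge(query: str, llm: Callable[[str], list[str]]) -> list[str]:
    return llm(f"List facts that could make this query cheaper: {query}")


# Phase 2 (hypothetical): map each known fact to a cheaper operator; unknown facts are ignored.
RULES = {
    "vehicles stay visible for several consecutive frames": "sample_every_k_frames",
}


def select_operators(facts: list[str]) -> list[str]:
    return [RULES[f] for f in facts if f in RULES]


# Phase 3 (hypothetical): insert the chosen operators just before the expensive MLLM call.
def update_plan(plan: QueryPlan, new_ops: list[str], expensive_op: str = "mllm_extract") -> QueryPlan:
    ops = list(plan.operators)
    idx = ops.index(expensive_op)
    return QueryPlan(ops[:idx] + new_ops + ops[idx:])


# Usage with a stub standing in for a real LLM.
def fake_llm(prompt: str) -> list[str]:
    return ["vehicles stay visible for several consecutive frames", "tolls are paid in cash"]


plan = QueryPlan(["source", "mllm_extract", "filter", "sink"])
facts = extract_world_knowledge("count vehicles passing the tollbooth", fake_llm)
optimized = update_plan(plan, select_operators(facts))
print(optimized.operators)
# ['source', 'sample_every_k_frames', 'mllm_extract', 'filter', 'sink']
```

Placing new operators ahead of the MLLM call is the point of the rewrite: anything that discards input before inference saves the most expensive work.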

Through semantic optimization, Sa skips redundant frames in the stream and reduces computational load without compromising result fidelity. This extends traditional query optimization with semantic knowledge about the data.
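To make frame skipping concrete, the sketch below forwards a frame to the expensive MLLM operator only when it differs enough from the last frame that was forwarded. This is a swapped-in, generic heuristic (mean absolute pixel difference against a threshold), not the LLM-guided mechanism the paper describes; the frame representation (flattened grayscale pixel lists), the function names, and the threshold are all assumptions for illustration.

```python
from typing import Iterable, Iterator, Sequence

Frame = Sequence[int]  # assumption: a frame is a flattened list of grayscale pixels


def mean_abs_diff(a: Frame, b: Frame) -> float:
    # Average per-pixel change between two equally sized frames.
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def skip_redundant(frames: Iterable[Frame], threshold: float) -> Iterator[tuple[int, Frame]]:
    """Yield (index, frame) only when the frame differs enough from the last one kept."""
    last_kept = None
    for i, frame in enumerate(frames):
        if last_kept is None or mean_abs_diff(frame, last_kept) > threshold:
            last_kept = frame
            yield i, frame


# Frames 1 and 3 are near-duplicates of their predecessors and are skipped.
stream = [[0, 0, 0, 0], [0, 0, 1, 0], [50, 60, 40, 55], [51, 60, 40, 55], [0, 0, 0, 0]]
kept = [i for i, _ in skip_redundant(stream, threshold=5.0)]
print(kept)  # [0, 2, 4]
```

Comparing against the last *kept* frame, rather than the immediately preceding one, prevents a slow drift from going unnoticed indefinitely.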

Logical and Physical Optimizations

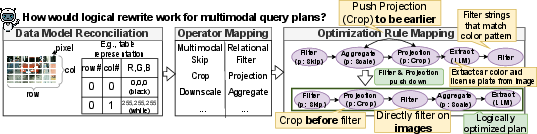

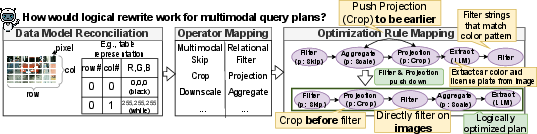

The logical optimization component of Sa maps multimodal attributes to relational structures to apply efficient rewrite rules within query plans.

Figure 3: Logical optimization mapping multimodal operators to relational counterparts.
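Once a multimodal condition is expressed as a relational selection, classic rewrite rules apply. One well-known example, shown here as an illustration rather than as the rule Sa necessarily uses, is ordering commuting filters by rank, (selectivity − 1) / cost, which minimizes expected per-tuple cost when predicates are independent. The predicate names, costs, and selectivities below are hypothetical placeholders, not measurements from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Predicate:
    name: str
    cost: float         # relative per-tuple cost (hypothetical units, must be > 0)
    selectivity: float  # fraction of tuples that pass, in [0, 1]


def rank(p: Predicate) -> float:
    # Classic rank for independent, commuting filters; apply in ascending order.
    return (p.selectivity - 1.0) / p.cost


def expected_cost(order: list[Predicate]) -> float:
    # Each predicate only sees the tuples that survived all earlier predicates.
    total, surviving = 0.0, 1.0
    for p in order:
        total += surviving * p.cost
        surviving *= p.selectivity
    return total


def reorder(preds: list[Predicate]) -> list[Predicate]:
    return sorted(preds, key=rank)


# Hypothetical plan: an expensive MLLM predicate written first, two cheap relational ones after.
preds = [
    Predicate("mllm_is_truck(frame)", cost=100.0, selectivity=0.2),
    Predicate("hour BETWEEN 7 AND 9", cost=1.0, selectivity=0.5),
    Predicate("lane = 3", cost=1.0, selectivity=0.9),
]
best = reorder(preds)
print([p.name for p in best])
# ['hour BETWEEN 7 AND 9', 'lane = 3', 'mllm_is_truck(frame)']
print(round(expected_cost(preds), 2), round(expected_cost(best), 2))  # 100.3 46.5
```

The rewrite only becomes possible because the MLLM call is treated as an ordinary selection with a cost and a selectivity, which is what mapping multimodal operators to relational counterparts provides.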

Physical optimization selects the best-suited implementation for each operator. For MLLM operators, this includes techniques such as pruning or quantization, adapted to the evolving nature of streaming data. Model specialization further increases throughput, yielding significant performance gains while keeping computational resource usage under control.
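A simple way to frame this choice is cost-based selection among model variants: pick the fastest variant whose accuracy stays above a target. The sketch below assumes each variant has been profiled offline; the variant names, throughput figures, and accuracy values are illustrative placeholders, not results from the paper, and `pick_variant` is a hypothetical helper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVariant:
    name: str
    throughput_fps: float  # profiled frames per second (placeholder values below)
    accuracy: float        # agreement with the full model on a validation sample


def pick_variant(variants: list[ModelVariant], min_accuracy: float) -> Optional[ModelVariant]:
    """Return the fastest variant meeting the accuracy bound, or None if none qualify."""
    eligible = [v for v in variants if v.accuracy >= min_accuracy]
    return max(eligible, key=lambda v: v.throughput_fps, default=None)


# Hypothetical profiles for a full model and three cheaper physical alternatives.
variants = [
    ModelVariant("full_mllm", throughput_fps=4.0, accuracy=1.00),
    ModelVariant("int8_quantized", throughput_fps=9.0, accuracy=0.97),
    ModelVariant("pruned_50pct", throughput_fps=12.0, accuracy=0.91),
    ModelVariant("specialized_small", throughput_fps=40.0, accuracy=0.88),
]

print(pick_variant(variants, min_accuracy=0.95).name)  # int8_quantized
print(pick_variant(variants, min_accuracy=0.85).name)  # specialized_small
```

Because stream contents drift over time, a system following this approach would likely need to re-profile accuracy periodically rather than trust a one-time measurement.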

Initial Results

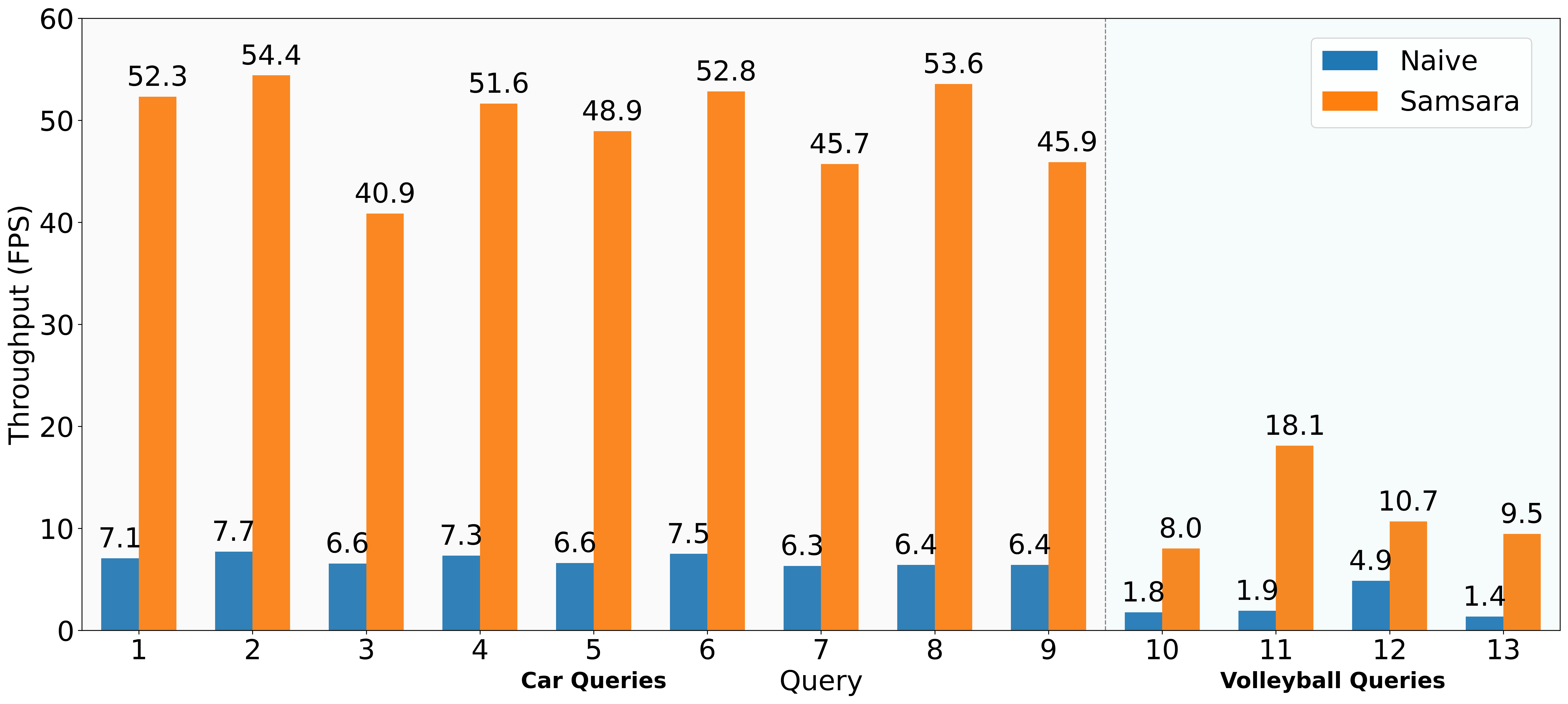

Sa's prototype, implemented on Apache Flink, shows significant improvements. A series of experiments shows how each optimization phase affects throughput across queries of varying complexity.

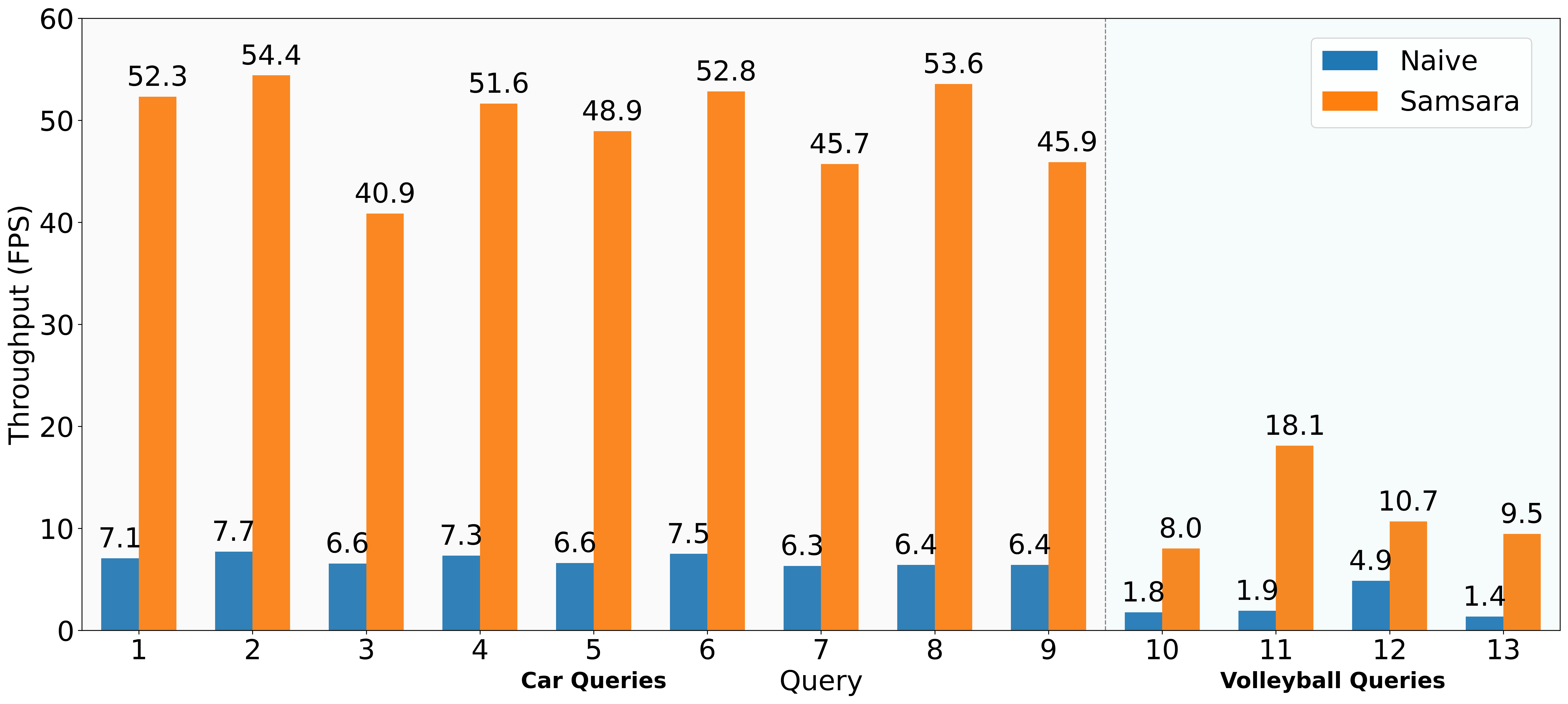

Figure 4: End-to-End gains for all queries, showing up to 10× speedup compared to naive execution.

Sa's optimizations yield substantial acceleration, up to 10× in some cases, without a notable loss in accuracy, showing that they can turn otherwise inefficient pipelines into feasible streaming jobs.
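A back-of-the-envelope bound helps interpret speedups of this size. Assuming per-tuple time splits into MLLM inference (fraction $p$ of the naive pipeline's time) and everything else, and that the optimizations cut the MLLM work by a factor $s$, Amdahl's law gives the end-to-end speedup $S$. This is general reasoning, not an analysis from the paper, and the numbers in the example are illustrative.

$$
S = \frac{1}{(1 - p) + \dfrac{p}{s}}, \qquad \lim_{s \to \infty} S = \frac{1}{1 - p}
$$

Reaching $S = 10$ therefore requires $p \ge 0.9$: MLLM inference must account for at least 90% of the naive pipeline's time. For example, with $p = 0.95$ and $s = 20$, $S = 1 / (0.05 + 0.0475) \approx 10.3$.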

Conclusion and Future Directions

This paper charts a path for deploying MLLMs within stream processing frameworks and opens several research directions: scaling super-optimization, establishing formal semantics for query transformations, and extending multimodal operators beyond images. As streaming data becomes more prevalent, such techniques will be important for making real-time multimodal analysis efficient.

This paper is a foundational step toward streaming systems with multimodal processing capabilities, and it outlines the theoretical and practical challenges that lie ahead.