- The paper introduces LAFA, which integrates LLM-driven natural language processing with federated analytics to enable privacy-preserving data queries over decentralized sources.

- The methodology employs a hierarchical multi-agent system that decomposes complex queries into optimized DAG workflows, significantly reducing operation redundancy.

- Results demonstrate near-perfect completion ratios and reduced operational overhead compared to baseline prompting techniques, ensuring efficient and scalable analytics.

LAFA: Agentic LLM-Driven Federated Analytics over Decentralized Data Sources

The paper introduces LAFA, a system that combines LLMs with federated analytics (FA) to enable privacy-preserving data analytics over decentralized data sources. By integrating LLM-driven natural language interfacing with FA, LAFA aims to resolve complex queries while maintaining stringent privacy protections, a task that neither traditional LLM systems nor existing FA frameworks can adequately accomplish on their own.

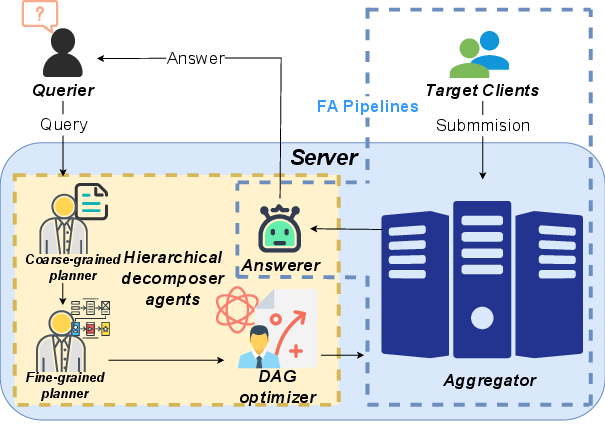

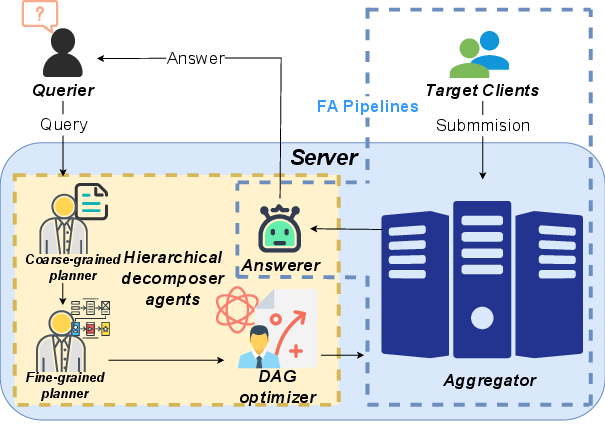

System Overview

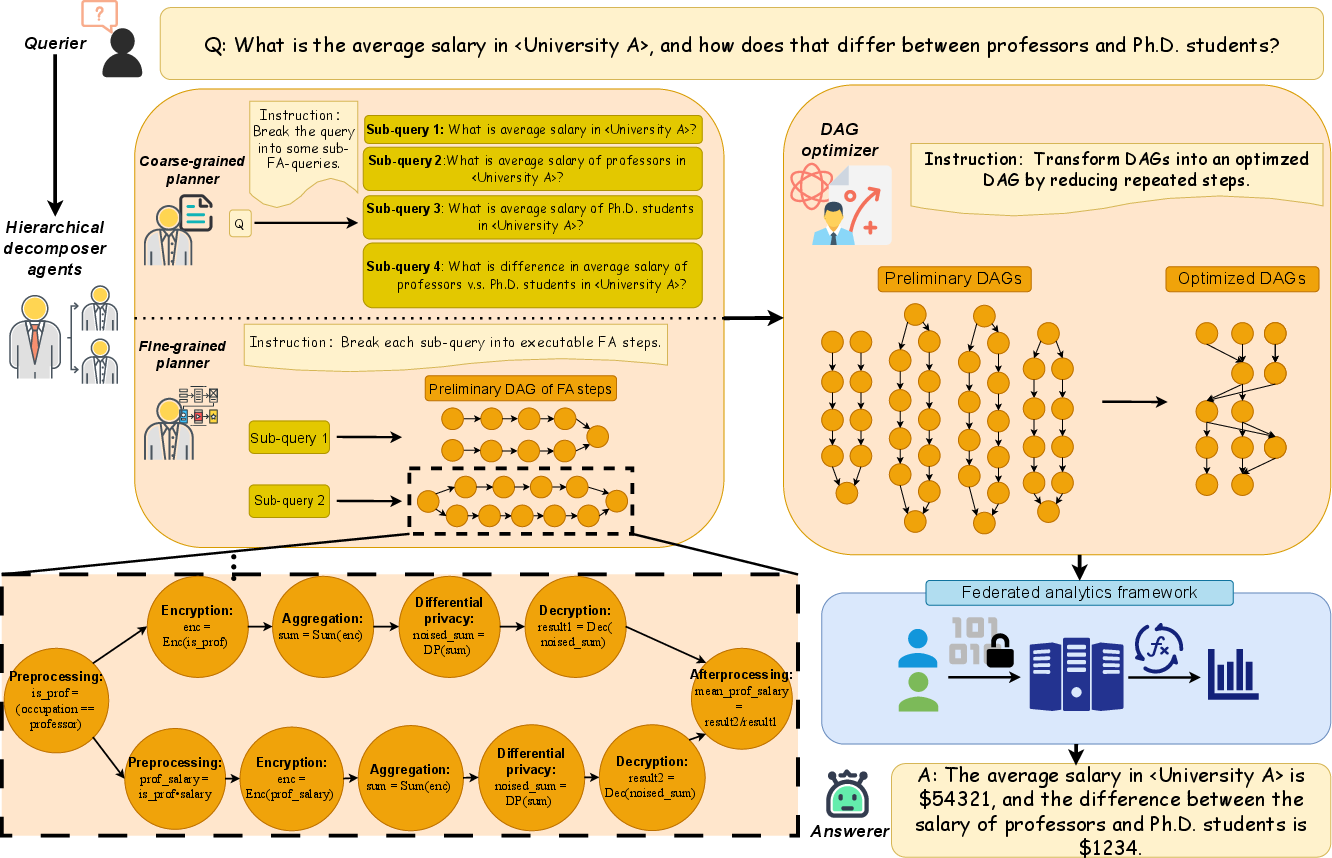

LAFA features a hierarchical multi-agent system designed to accept natural language queries and transform them into optimized FA workflows. The system comprises several key components:

- Queriers: Users who submit natural language analytics queries.

- Clients with Devices: Distributed devices where data is stored and processed locally to preserve privacy.

- Server: Hosts the LLM-driven agents and acts as a central coordinator for aggregating results without accessing raw data.
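To make the division of labor between clients and server concrete, here is a minimal sketch of a federated mean. It is an illustration under stated assumptions, not LAFA's implementation: the `Client` class, `local_summary`, and `server_mean` are hypothetical names, and a real FA deployment would typically add protections such as secure aggregation or differential privacy, which this sketch omits.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Client:
    """Hypothetical device holding private records; raw values stay local."""
    values: List[float]

    def local_summary(self) -> Tuple[float, int]:
        # Only the (sum, count) pair is shared with the server.
        return (sum(self.values), len(self.values))


def server_mean(summaries: List[Tuple[float, int]]) -> float:
    """Combine client summaries into a global mean without seeing raw data."""
    total = sum(s for s, _ in summaries)
    count = sum(n for _, n in summaries)
    if count == 0:
        raise ValueError("no data reported by any client")
    return total / count


# Toy data for illustration only.
clients = [Client([1.0, 2.0, 3.0]), Client([4.0, 5.0]), Client([6.0])]
global_mean = server_mean([c.local_summary() for c in clients])
```

The server only ever handles per-client aggregates, which is the property the component list above describes.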

The hierarchical agent framework works in three phases:

- Hierarchical Decomposition: The coarse-grained planner segments complex queries into single-intent sub-queries, while the fine-grained planner maps each sub-query into a Directed Acyclic Graph (DAG) of FA operations.

- DAG Optimization: The optimizer agent rewrites and merges multiple DAGs to minimize redundant operations.

- FA Execution: The optimized DAG is executed across distributed clients, preserving data privacy while keeping computation efficient.
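The DAG optimization phase can be pictured with a small sketch. In LAFA the rewriting is done by an LLM-driven optimizer agent; the code below swaps in a plain deterministic technique, structural deduplication (common-subexpression elimination), purely to show what "merging multiple DAGs to remove redundant operations" means. The node layout, the operation names (`filter`, `local_sum`, and so on), and `merge_dags` are all assumptions made for illustration.

```python
from typing import Dict, List, Tuple

# A node is (operation, argument, parent node ids). All names are hypothetical.
Node = Tuple[str, str, Tuple[str, ...]]
Dag = Dict[str, Node]


def merge_dags(dags: List[Dag]) -> Dag:
    """Merge DAGs, keeping one copy of each structurally identical node."""
    merged: Dag = {}
    canonical: Dict[Node, str] = {}  # rewritten node -> id kept in merged DAG

    for dag in dags:
        rename: Dict[str, str] = {}  # id in this DAG -> id in merged DAG
        # Assumes each DAG lists parents before children (dict insertion order).
        for node_id, (op, arg, parents) in dag.items():
            key: Node = (op, arg, tuple(rename[p] for p in parents))
            if key not in canonical:
                new_id = f"n{len(merged)}"
                canonical[key] = new_id
                merged[new_id] = key
            rename[node_id] = canonical[key]
    return merged


# Two sub-queries that share the same filter and local count.
dag_mean: Dag = {
    "a": ("filter", "age>30", ()),
    "b": ("local_sum", "income", ("a",)),
    "c": ("local_count", "", ("a",)),
    "d": ("aggregate_mean", "", ("b", "c")),
}
dag_count: Dag = {
    "x": ("filter", "age>30", ()),
    "y": ("local_count", "", ("x",)),
    "z": ("aggregate_sum", "", ("y",)),
}

merged = merge_dags([dag_mean, dag_count])
ops_before = len(dag_mean) + len(dag_count)
ops_after = len(merged)
```

In this toy case the shared filter and count are computed once, so the merged workflow has fewer operations than the two DAGs run separately.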

Figure 1: The system overview of LAFA.

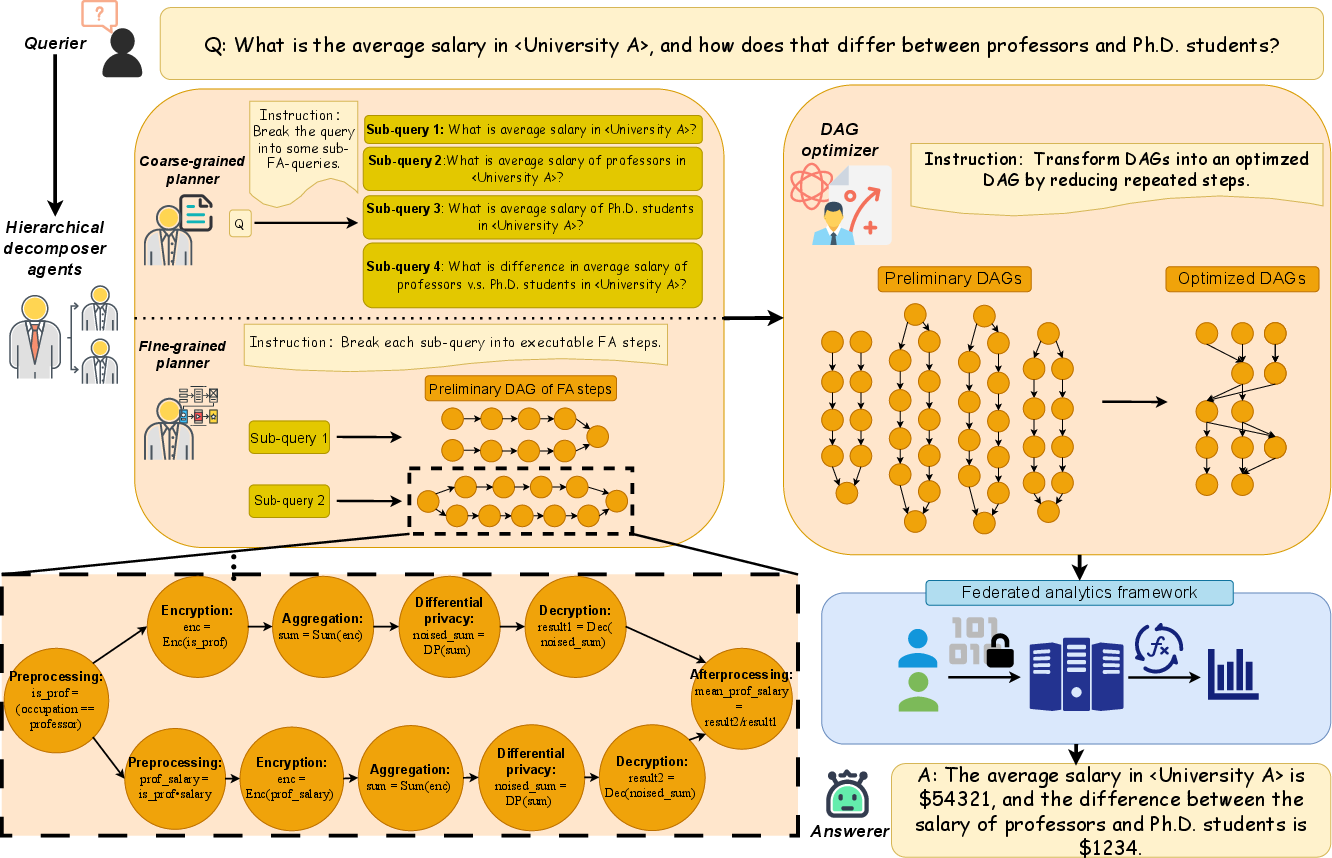

Workflow and Execution

Execution in LAFA starts when a querier submits a query in natural language; the agents then process it to construct an executable FA workflow. The agents keep the operations in a logically valid order and eliminate redundant ones, reducing operational overhead without sacrificing analytical accuracy.

Figure 2: The workflow of LAFA using a query as an example.
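Keeping operations in a valid order amounts to running each DAG node only after its parents have finished. The sketch below shows one way to do that with Python's standard `graphlib.TopologicalSorter`. The `Workflow` layout, `run_workflow`, and the toy operations are assumptions for illustration and are not taken from the paper.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Tuple

# node id -> (function, parent ids); a hypothetical workflow layout.
Workflow = Dict[str, Tuple[Callable[..., object], Tuple[str, ...]]]


def run_workflow(workflow: Workflow) -> Dict[str, object]:
    """Run every node after its parents, passing parent results as arguments."""
    graph = {nid: set(parents) for nid, (_, parents) in workflow.items()}
    results: Dict[str, object] = {}
    # static_order() yields parents before children; it raises CycleError
    # if the graph is not acyclic.
    for nid in TopologicalSorter(graph).static_order():
        fn, parents = workflow[nid]
        results[nid] = fn(*(results[p] for p in parents))
    return results


# Toy per-client data; in a real deployment each list would stay on its device.
client_data = [[20, 35, 50], [41, 18]]

workflow: Workflow = {
    "filter": (lambda: [[v for v in d if v > 30] for d in client_data], ()),
    "local_sum": (lambda f: [sum(d) for d in f], ("filter",)),
    "local_count": (lambda f: [len(d) for d in f], ("filter",)),
    "mean": (lambda s, c: sum(s) / sum(c), ("local_sum", "local_count")),
}

results = run_workflow(workflow)
```

Here the filter runs once and feeds both local operations, and the final aggregate only sees per-client sums and counts.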

Key Challenges Addressed

LAFA addresses two principal challenges in LLM and FA integration:

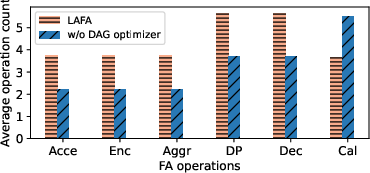

Evaluation and Results

LAFA demonstrates significant improvements over baseline prompting techniques in both completion ratio and operational efficiency:

- Completion Ratio: LAFA achieves near-perfect completion ratios across diverse query sets, significantly outperforming zero-shot and one-shot prompting strategies.

- Operation Count: Redundant operations are markedly reduced in LAFA, showcasing better resource utilization and efficiency. The optimized execution plan reduces computational and communication overheads.

These results indicate that LAFA can answer complex federated analytics queries more reliably, and with fewer operations, than the baseline prompting strategies, while keeping raw data on client devices.
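For readers who want to reproduce this kind of comparison, the two metrics can be computed as below. The definitions are assumptions, since the summary does not spell them out: completion ratio is taken here as the fraction of queries for which a complete workflow was produced, and operation reduction as the relative drop in operation count after optimization. The inputs are toy values, not results from the paper.

```python
from typing import List


def completion_ratio(completed: List[bool]) -> float:
    """Fraction of queries whose workflow was completed (assumed definition)."""
    if not completed:
        raise ValueError("no queries evaluated")
    return sum(completed) / len(completed)


def operation_reduction(ops_before: int, ops_after: int) -> float:
    """Relative reduction in operation count after optimization."""
    if ops_before <= 0:
        raise ValueError("ops_before must be positive")
    return (ops_before - ops_after) / ops_before


# Toy inputs for illustration only.
ratio = completion_ratio([True, True, True, False])
reduction = operation_reduction(ops_before=7, ops_after=5)
```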

Conclusion

LAFA combines the natural language capabilities of LLMs with privacy-preserving federated computation, giving queriers a scalable way to run complex analytics across decentralized data sources without exposing raw data. Future developments in LAFA might include expanded support for diverse data types and enhanced optimization algorithms, further broadening the system's applicability and efficiency in federated environments.