- The paper presents a globally optimized caching framework that minimizes worst-case error peaks through lexicographic minimax path selection.

- It leverages a directed acyclic graph constructed from diverse prompt sampling to balance acceleration and content fidelity without model retraining.

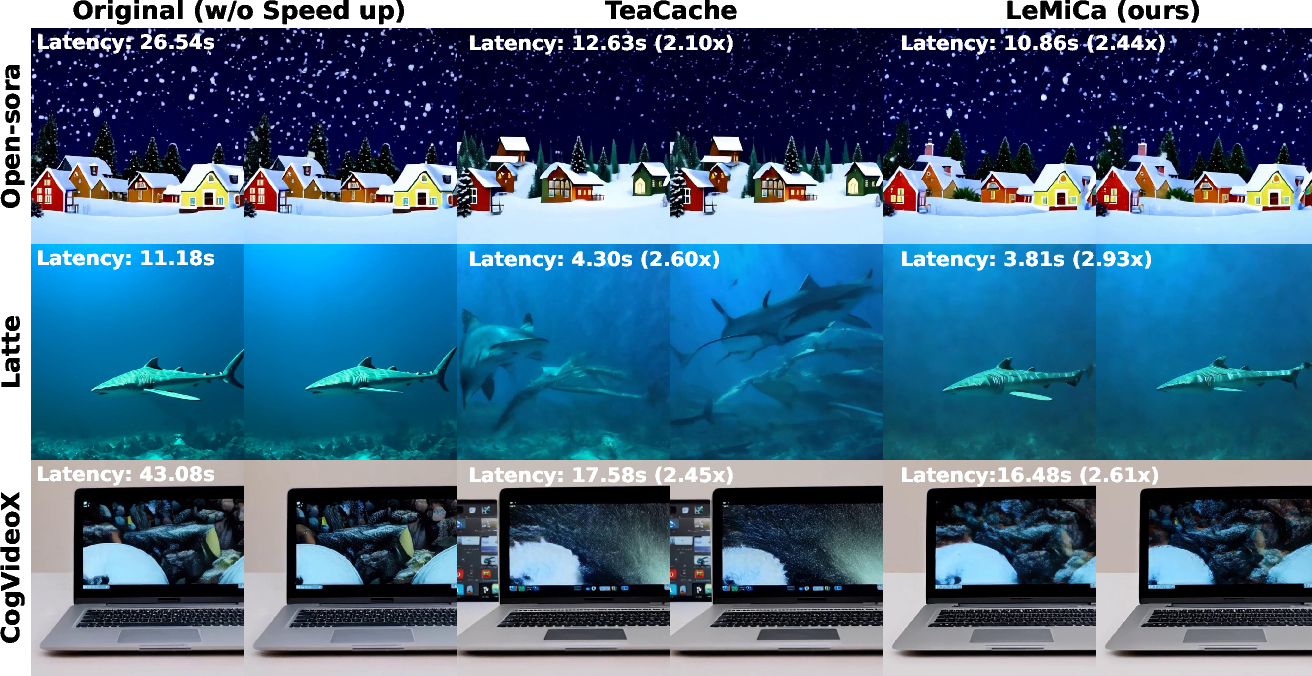

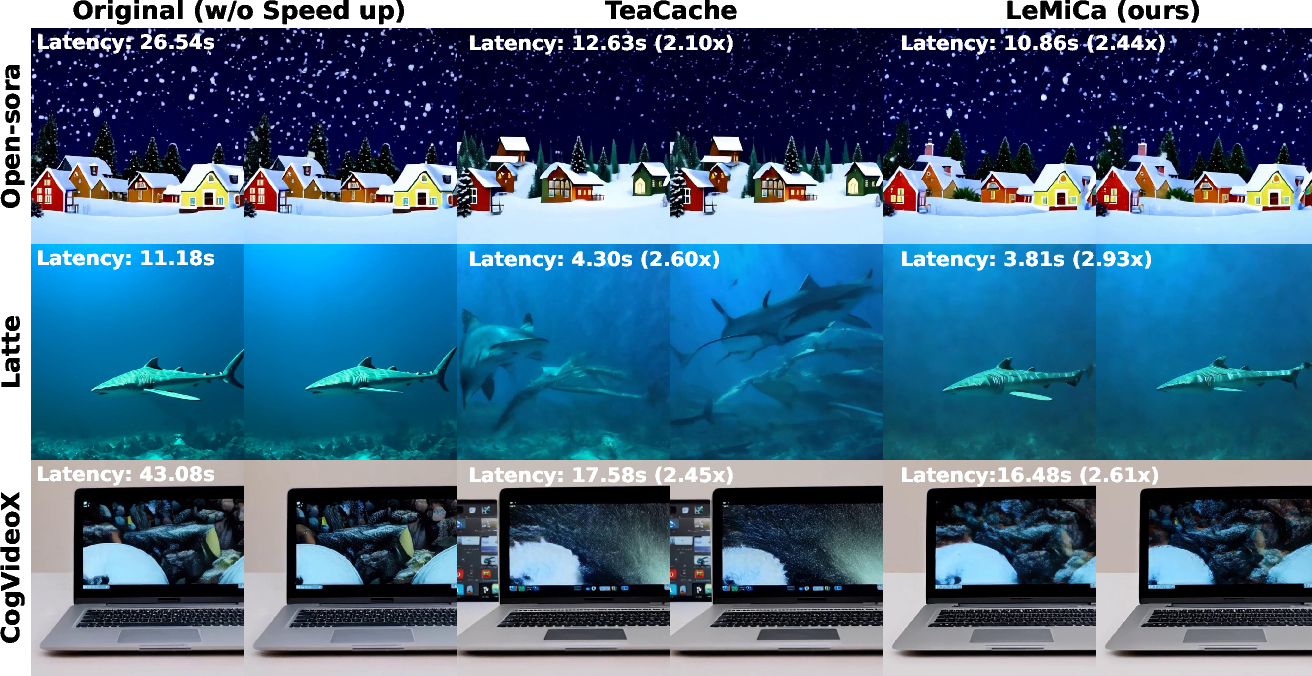

- Experiments against state-of-the-art caching baselines report speedups of 2.44× to 2.93× together with improved perceptual metrics across several diffusion-based video models.

Lexicographic Minimax Path Caching for Efficient Diffusion-Based Video Generation

Introduction

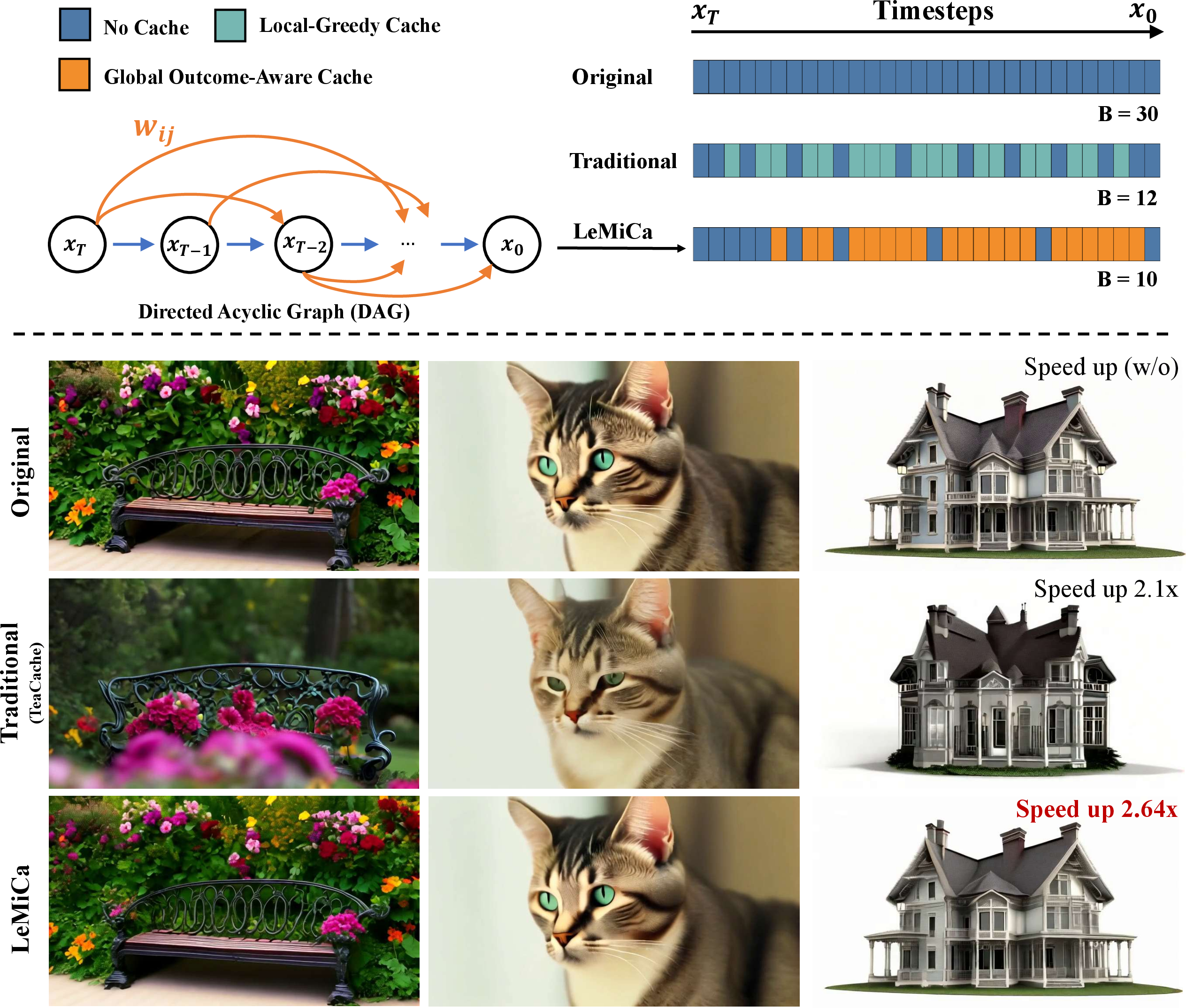

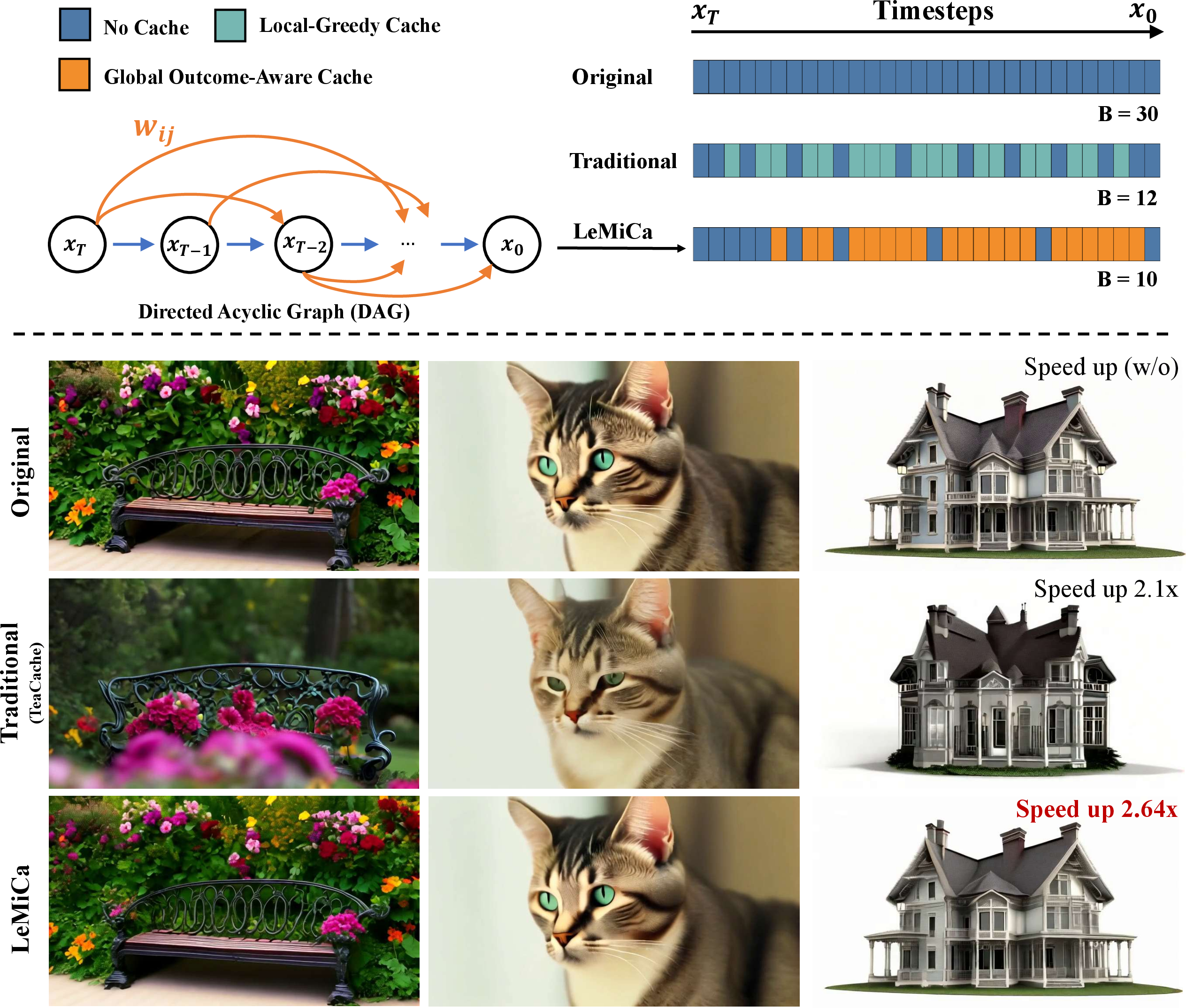

The paper "LeMiCa: Lexicographic Minimax Path Caching for Efficient Diffusion-Based Video Generation" (2511.00090) presents LeMiCa, a training-free framework for accelerating video generation with diffusion models, which addresses the critical trade-off between inference efficiency and video content fidelity. The authors critique conventional caching strategies such as Local-Greedy approaches that minimize pointwise error heuristically, ignoring the global accumulation and propagation of errors throughout the denoising trajectory. LeMiCa introduces a globally optimized, outcome-aware cache mechanism based on a Lexicographic Minimax Path algorithm over a Directed Acyclic Graph (DAG), substantially reducing worst-case cache errors and maintaining superior content consistency across frames.

Figure 1: LeMiCa establishes a globally controlled cache mechanism, optimizing cache scheduling via lexicographic minimax search over a DAG, yielding superior temporal consistency and error control compared to traditional local greedy methods.

Diffusion-based video generation models, particularly DiT architectures, have set benchmarks in sample quality but remain computationally prohibitive. Common acceleration techniques (distillation, pruning, quantization) require model retraining and extensive engineering, whereas caching accelerates inference without retraining by reusing model outputs at selected timesteps.

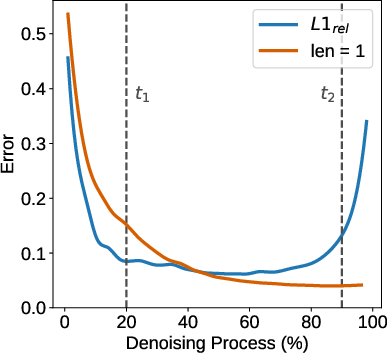

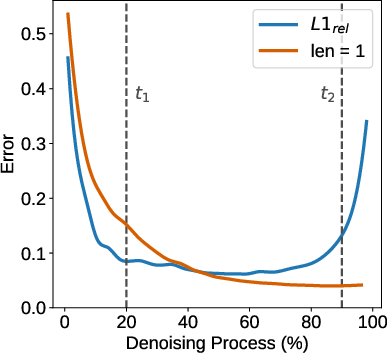

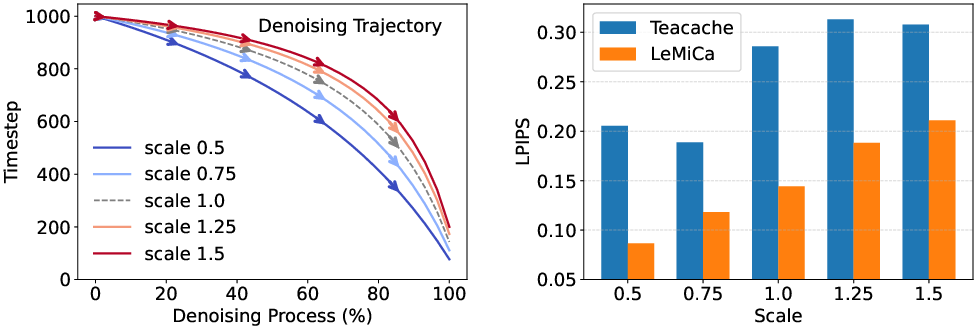

Conventional caching, exemplified by TeaCache and DeepCache, typically adopts locally greedy error heuristics, using relative differences (L1rel) between adjacent denoising steps to trigger cache reuse. This local perspective neglects two properties of diffusion dynamics: timesteps are not equally important, and errors compound as they propagate through the denoising trajectory. The authors empirically demonstrate that cache decisions made early in the denoising schedule cascade into pronounced global perceptual degradation, an effect that uniform thresholding schemes ignore.
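To make the local-greedy idea concrete, the following is a minimal sketch of a threshold rule driven by the relative L1 change between adjacent steps. It is an illustration only, not the actual implementation of TeaCache or DeepCache: the function names, the accumulate-and-reset rule, and the use of a pre-recorded list of step outputs are all assumptions made for this example.

```python
import numpy as np

def l1_rel(prev: np.ndarray, curr: np.ndarray, eps: float = 1e-8) -> float:
    """Relative L1 difference between two consecutive step outputs."""
    return float(np.abs(curr - prev).sum() / (np.abs(prev).sum() + eps))

def local_greedy_schedule(outputs, threshold):
    """Decide per step whether to run the model (True) or reuse the cache (False).

    Hypothetical rule: accumulate the relative L1 change since the last full
    computation and recompute once it reaches `threshold`. Each decision uses
    only adjacent-step information, never the effect on the final output.
    """
    decisions = [True]  # the first step is always computed
    accumulated = 0.0
    for prev, curr in zip(outputs, outputs[1:]):
        accumulated += l1_rel(prev, curr)
        if accumulated >= threshold:
            decisions.append(True)
            accumulated = 0.0  # reset after a full computation
        else:
            decisions.append(False)
    return decisions

# Toy trajectory: two small changes, one large jump, then no change.
toy_outputs = [np.full(4, v) for v in (1.0, 1.01, 1.02, 2.0, 2.0)]
print(local_greedy_schedule(toy_outputs, threshold=0.1))
# prints [True, False, False, True, False]
```

Note that the single threshold treats every timestep identically, which is the uniformity the authors criticize.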

Figure 2: Local-Greedy caching only minimizes local error, while Global Outcome-Aware error estimation captures segmentwise impact on final output fidelity.

LeMiCa Framework

Global Outcome-Aware Error and Graph Construction

LeMiCa’s central innovation is the explicit modeling of cache scheduling as a graph traversal problem. Each edge represents a cache segment and is weighted by that segment's global impact on the quality of the final reconstruction (L1glob). The DAG is constructed offline by sampling edge-wise errors over a diverse set of prompts and noise seeds, so the resulting caching policy does not depend on architectural specifics of the model.
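The offline graph construction can be sketched as follows, under stated assumptions. Nodes are denoising step indices `0..num_steps`; an edge `(i, j)` means "compute fully at step `i`, reuse the cache for steps `i+1..j-1`, compute again at `j`". The callable `run_sampler`, the `max_skip` limit, and the use of a mean absolute difference as a stand-in for L1glob are hypothetical choices for illustration; the paper's exact error definition and normalization may differ.

```python
from itertools import product
from typing import Callable, Dict, Optional, Sequence, Tuple

import numpy as np

Edge = Tuple[int, int]
# Hypothetical sampler: (prompt, seed, cached_segment or None) -> final output.
Sampler = Callable[[str, int, Optional[Edge]], np.ndarray]

def build_error_dag(
    run_sampler: Sampler,
    prompts: Sequence[str],
    seeds: Sequence[int],
    num_steps: int,
    max_skip: int,
) -> Dict[Edge, float]:
    """Average, over prompts and seeds, the final-output error of each segment."""
    sums: Dict[Edge, float] = {}
    runs = 0
    for prompt, seed in product(prompts, seeds):
        reference = run_sampler(prompt, seed, None)  # full computation, no caching
        runs += 1
        for i in range(num_steps):
            last = min(i + max_skip + 1, num_steps)
            for j in range(i + 1, last + 1):
                if j == i + 1:
                    err = 0.0  # adjacent nodes: nothing is cached
                else:
                    cached = run_sampler(prompt, seed, (i, j))
                    # Error is measured on the FINAL output, not between steps.
                    err = float(np.abs(cached - reference).mean())
                sums[(i, j)] = sums.get((i, j), 0.0) + err
    return {edge: total / runs for edge, total in sums.items()}
```

The key difference from a local heuristic is that every edge weight is measured on the terminal output, so an early segment that looks harmless step-to-step can still receive a large weight.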

Lexicographic Minimax Path Optimization

To mitigate the risk of cache-induced degradation at sensitive timesteps, LeMiCa searches, among all paths that are feasible under an inference step budget B, for the one whose error vector is optimal in a lexicographic minimax sense: the largest edge error along the path is minimized first, then the second largest, and so on. This differs fundamentally from additive shortest-path minimization, which reduces aggregate error, because it prioritizes the suppression of catastrophic error peaks. The resulting worst-case error control is presented as essential for high-fidelity video synthesis.
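A minimal sketch of such a search is given below. It is a layered dynamic program written for this summary rather than the authors' implementation. It assumes the budget B means "exactly B edges on the path" (one full computation per edge) and that edge weights are supplied as a plain dictionary; both are illustrative assumptions. Each state keeps the path's errors sorted in descending order, and Python's tuple comparison then provides the lexicographic ordering.

```python
from typing import Dict, List, Optional, Tuple

Edge = Tuple[int, int]

def lexicographic_minimax_path(
    weights: Dict[Edge, float], source: int, target: int, budget: int
) -> Optional[Tuple[List[int], Tuple[float, ...]]]:
    """Find a source->target path with exactly `budget` edges whose
    descending-sorted error vector is lexicographically smallest."""
    # best[v] = (errors sorted descending, path) using k edges so far
    best = {source: ((), [source])}
    for _ in range(budget):
        nxt = {}
        for (u, v), w in weights.items():
            if u not in best:
                continue
            errs, path = best[u]
            cand = tuple(sorted(errs + (w,), reverse=True))
            # Equal-length tuples compare lexicographically: largest error first.
            if v not in nxt or cand < nxt[v][0]:
                nxt[v] = (cand, path + [v])
        best = nxt
    if target not in best:
        return None  # no path with exactly `budget` edges
    errs, path = best[target]
    return path, errs

# Toy DAG over steps 0..4 with made-up edge errors.
toy_weights = {
    (0, 1): 0.0, (1, 2): 0.0, (2, 3): 0.0, (3, 4): 0.0,
    (0, 2): 0.3, (2, 4): 0.3, (0, 3): 0.5, (1, 4): 0.4,
}
print(lexicographic_minimax_path(toy_weights, 0, 4, budget=2))
# prints ([0, 2, 4], (0.3, 0.3))
```

In this toy graph an additive shortest-path criterion would prefer `0 → 1 → 4` (total error 0.4, peak 0.4), whereas the minimax criterion picks `0 → 2 → 4` (total 0.6, peak 0.3). Keeping only the best sorted vector per node is safe because appending the same edge to two candidate prefixes of equal length preserves their lexicographic order.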

Experimental Evaluation

State-of-the-Art Comparisons

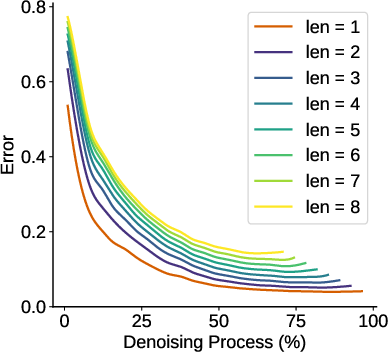

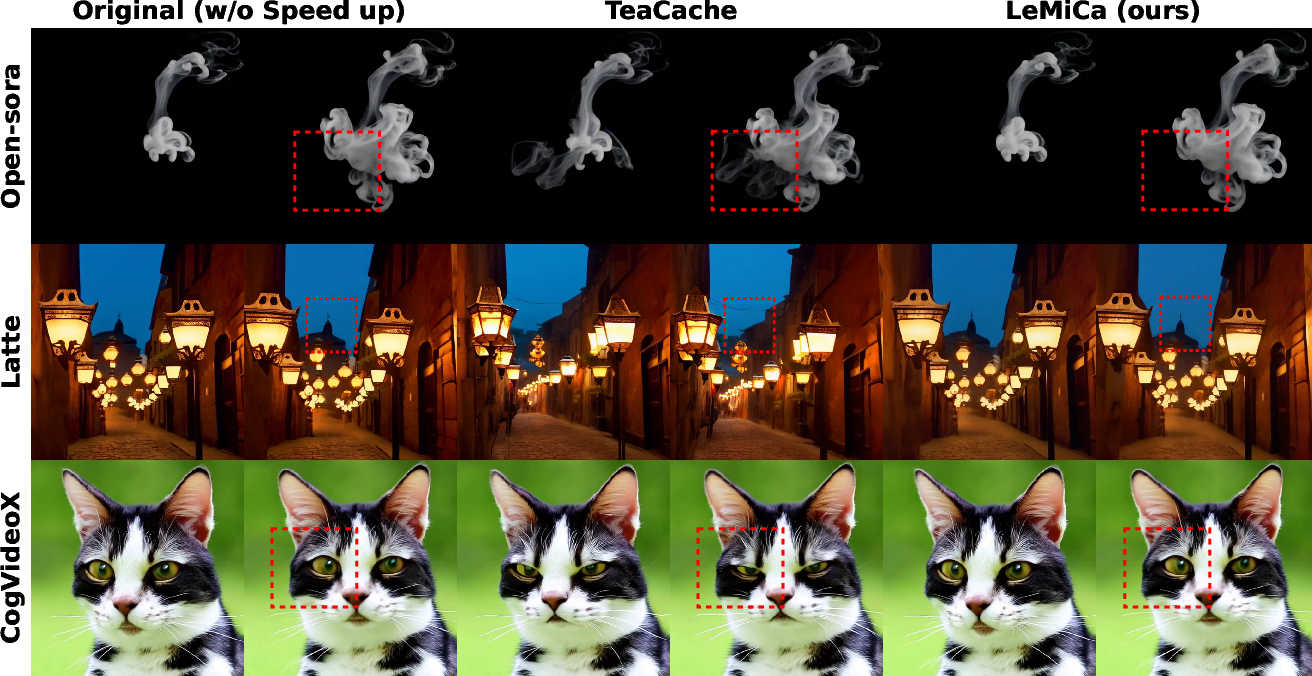

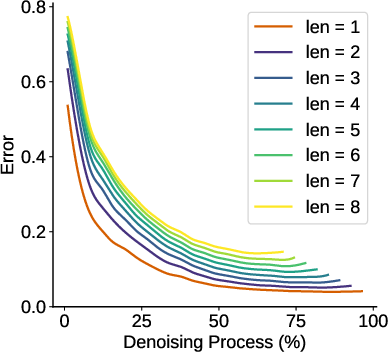

LeMiCa is evaluated on leading diffusion-based video models (Open-Sora, Latte, CogVideoX) and benchmarked against TeaCache, PAB, T-GATE, and Δ-DiT under both slow (high-fidelity) and fast (high-speed) regimes. Across all configurations, LeMiCa improves both efficiency and perceptual quality:

- On Open-Sora: 2.44× speedup, with LPIPS reduced from 0.134 (TeaCache-slow) to 0.050 (LeMiCa-slow) and comparable consistency (VBench scores above 79%).

- On Latte: 2.93× speedup with superior SSIM and PSNR, maintaining visual coherence and object integrity.

- On CogVideoX: Over 2.6× acceleration and marked reduction in distortion artifacts.

Figure 3: LeMiCa-slow preserves critical content details versus TeaCache-slow, as shown in highlighted regions across diverse models.

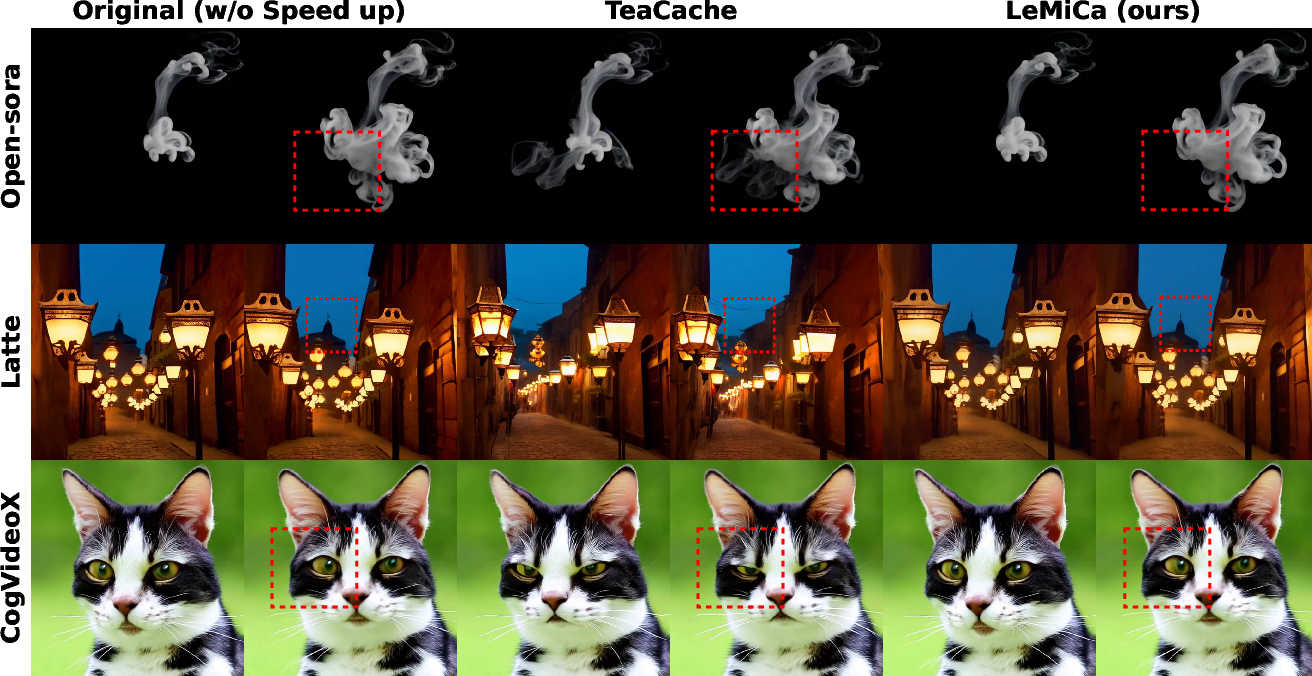

Figure 4: Under aggressive acceleration, LeMiCa-fast maintains content consistency and mitigates distortion compared to TeaCache-fast.

Ablations and Robustness

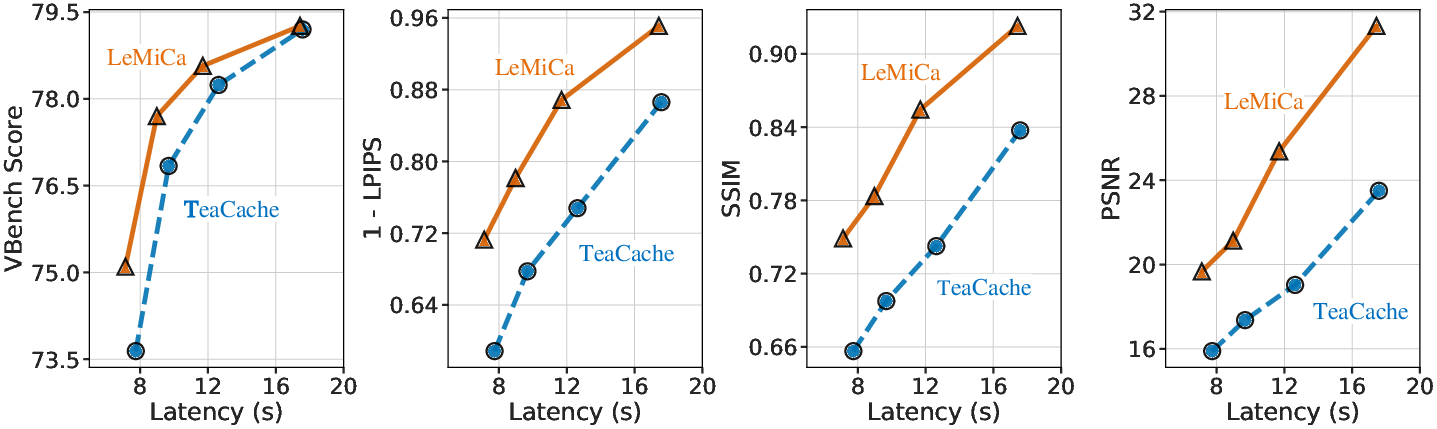

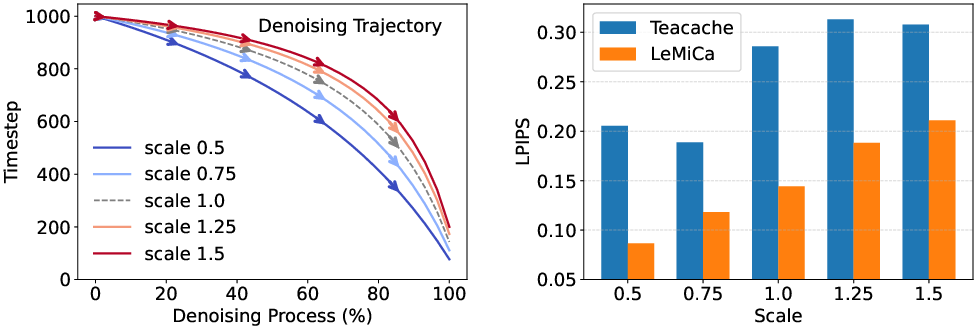

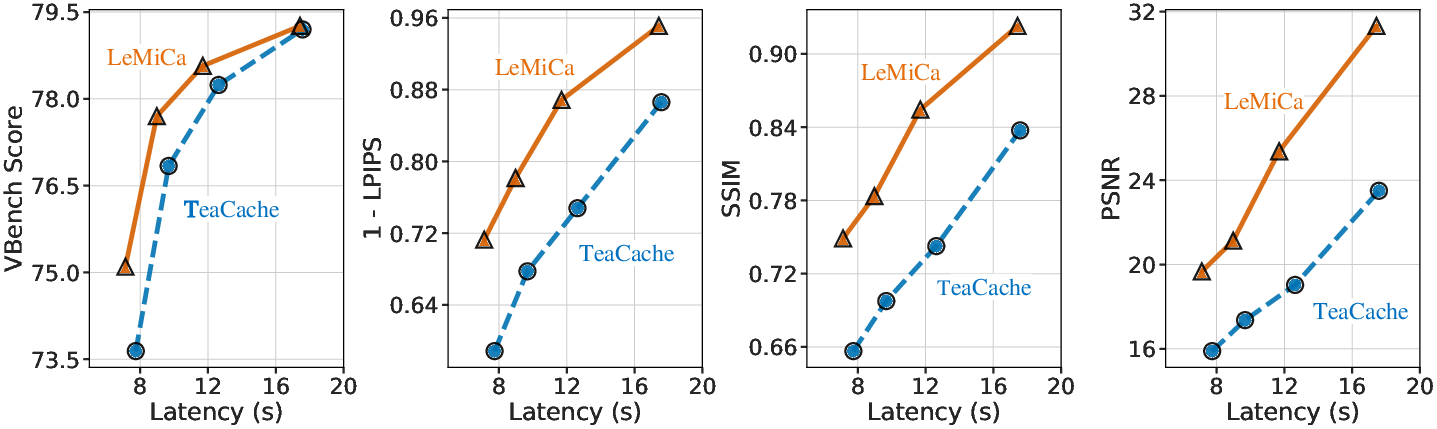

The authors assess LeMiCa under varying cache budgets, sample sizes for graph construction, and denoising trajectory scaling. Crucially, LeMiCa exhibits high sample efficiency: the static caching graph saturates in performance with as few as 20 prompts. Dynamic trajectory variance experiments confirm that LeMiCa outperforms TeaCache across altered diffusion schedules, validating robustness to scheduler changes.

Figure 5: LeMiCa consistently achieves superior quality-latency trade-off compared to TeaCache across computational budgets.

Figure 6: LeMiCa maintains lower LPIPS across denoising trajectory variants, indicating stable cache performance despite schedule scaling.

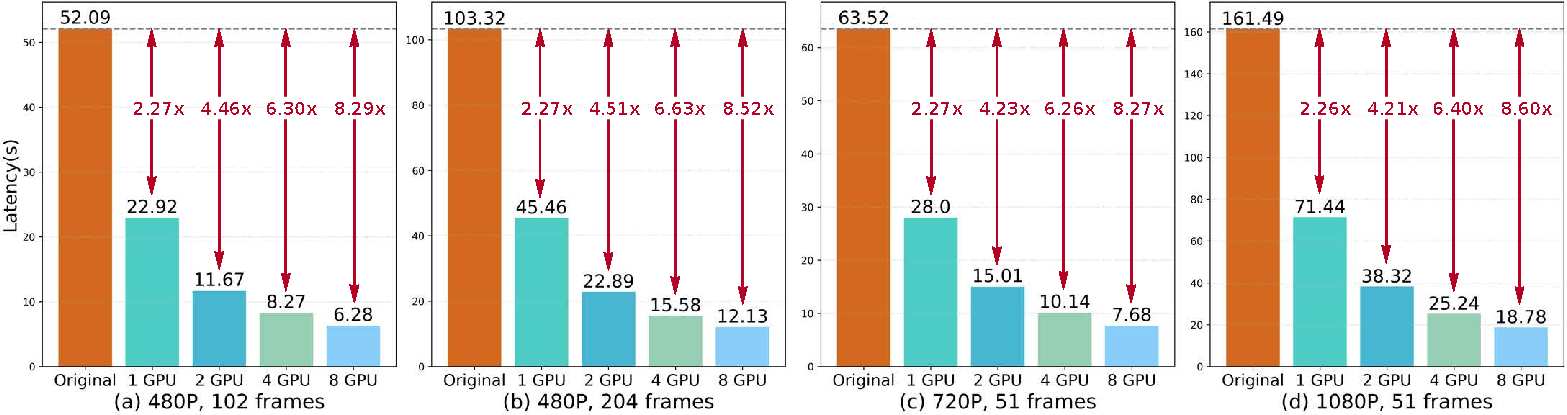

Scalability

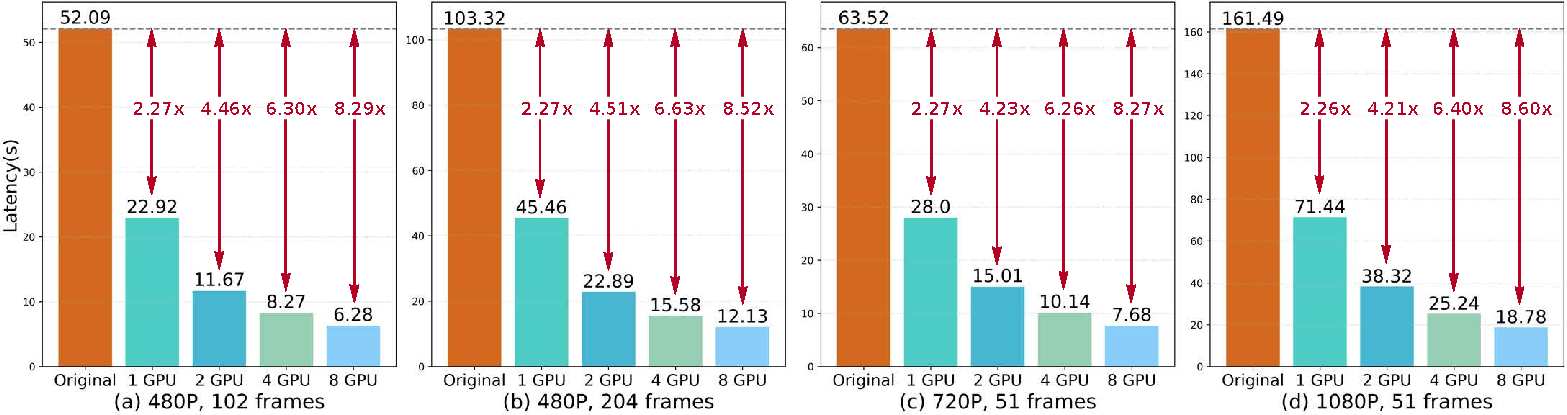

Leveraging DSP (Dynamic Sequence Parallelism), LeMiCa is adapted to multi-GPU video synthesis, showing stable acceleration for increasing video frame numbers and resolutions—a necessary condition for production-scale video generation.

Figure 7: LeMiCa delivers robust inference efficiency across varying video resolutions and durations, scaling effectively with workload size.

Extension and OOD Generalization

LeMiCa’s architecture-agnostic design is validated on Qwen-Image for text-to-image acceleration, matching or exceeding Cache-DiT in both latency and quality metrics. Further, evaluations on OOD datasets (IP-VBench) demonstrate strong generalization, with LeMiCa sharply outperforming TeaCache on challenging semantic domains in LPIPS, SSIM, and PSNR.

Implications and Future Directions

LeMiCa formally addresses global error control in diffusion caching, shifting the paradigm from localized heuristics to robust, trajectory-aware optimization. Practically, the approach enables efficient, high-quality video synthesis suitable for real-time and resource-constrained deployments, with minimal offline policy cost and strong robustness to unseen prompt or scheduler variations. The theoretical foundation—lexicographic minimax path selection—suggests broad applicability to caching acceleration in other sequential generation domains, extending to 3D, multi-view, or multi-modal settings.

Potential future research may explore adaptive online error modeling, tighter integration with scheduler learning, or cache-aware hybrid distillation frameworks. Moreover, leveraging LeMiCa’s explicit sensitivity modeling can directly inform curriculum scheduler design and selective refinement in generative pipelines.

Conclusion

LeMiCa introduces a principled, training-free framework for efficient diffusion-based video generation, balancing inference speed and perceptual fidelity through lexicographic minimax path optimization. The global outcome-aware cache policy yields substantial improvement over existing local greedy approaches, maintaining strong visual consistency even under high acceleration. The framework’s scalability, robustness, and generalization suggest considerable promise for broader application in generative modeling acceleration research.