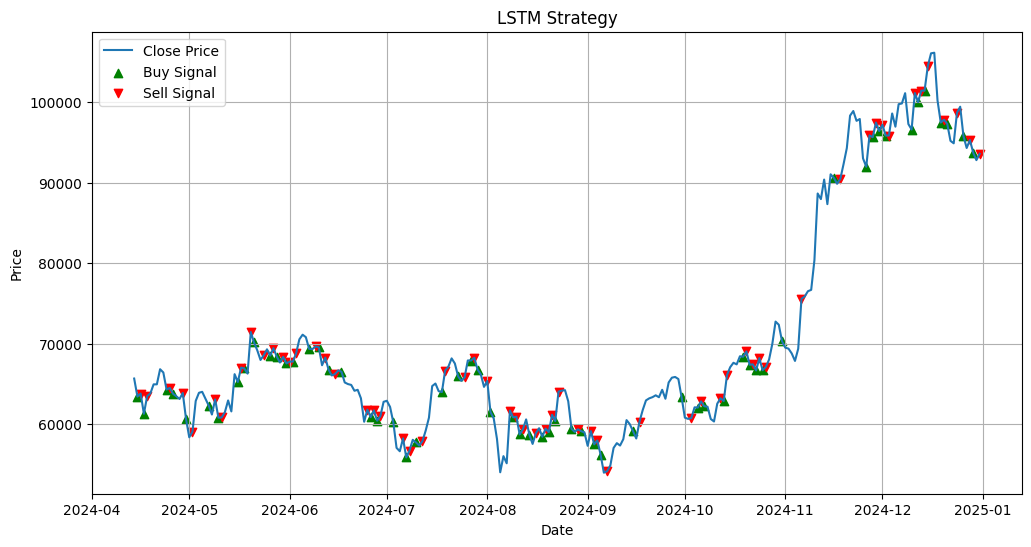

- The paper demonstrates that the LSTM model delivers the highest cumulative returns, outperforming both technical analysis strategies and buy-and-hold benchmarks.

- It employs rigorous out-of-sample validation using rolling input windows to capture short-term price movements and test models against market structural changes.

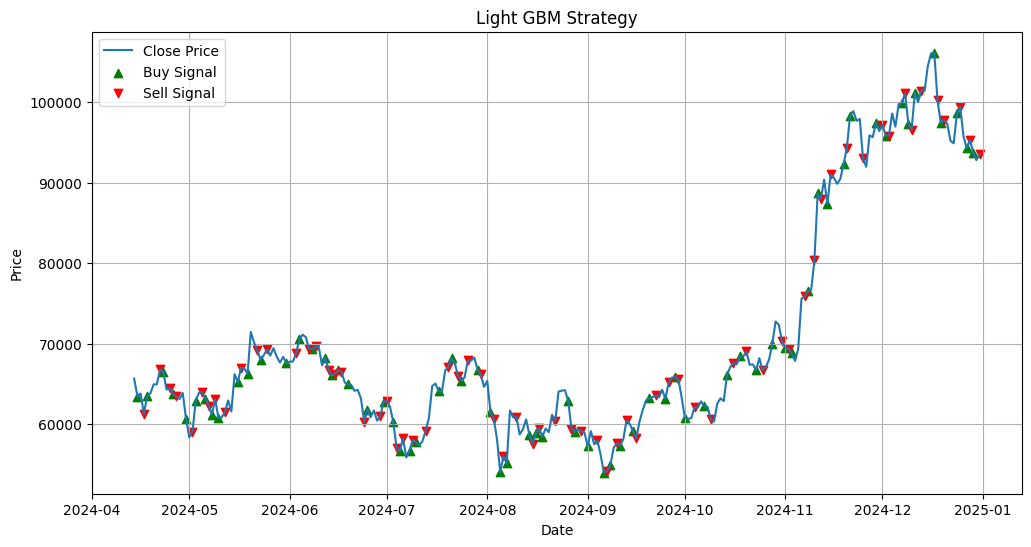

- The study highlights that, once transaction costs are included, excessive trading frequency (as seen in LightGBM) can significantly erode trading performance.

Introduction

This work presents a systematic evaluation of Bitcoin trading performance by juxtaposing two machine learning approaches—Long Short-Term Memory (LSTM) neural networks and Light Gradient Boosting Machine (LightGBM)—against two technical analysis (TA) strategies: Exponential Moving Average (EMA) crossover and a combined Moving Average Convergence/Divergence with Average Directional Index (MACD+ADX). The empirical study is situated around the period following the landmark regulatory event—the approval of the first spot Bitcoin exchange-traded funds (ETFs) by the U.S. Securities and Exchange Commission on January 10, 2024—providing a unique testbed to assess model robustness against structural changes in market dynamics. Performance is quantified by cumulative returns on out-of-sample test data, with consideration for transaction costs to reflect practical implementation viability.

Methodology

Data and Setup

The dataset comprises daily Bitcoin closing prices from January 10, 2021 to December 31, 2024. Model training uses data preceding the ETF approval (2021-01-10 to 2024-01-09), and testing spans the post-ETF period (2024-01-10 to 2024-12-31). For the ML models, a supervised learning setup is adopted with rolling 95-day input windows to predict the next day’s price direction (binary: up/down), so the first 95 days of the test period serve as a warm-up. In contrast, TA strategies require no warm-up period for making predictions. All cumulative returns are calculated from April 14, 2024 (post warm-up) through December 31, 2024.
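The windowing described above can be sketched as follows. This is a minimal illustration, not the paper's code: the function name `make_windows` and the plain-list representation of prices are assumptions. The last lines check that a 95-day warm-up counted from the approval date lands on the April 14, 2024 start date quoted in the text.

```python
from datetime import date, timedelta

WINDOW = 95  # rolling input length stated in the text


def make_windows(closes, window=WINDOW):
    """Turn a price series into (window, label) pairs (hypothetical helper).

    Each sample holds `window` consecutive closes; the label is 1 if the
    next day's close is higher than the last close in the window, else 0.
    """
    samples = []
    for end in range(window, len(closes)):
        x = closes[end - window:end]                  # days end-window .. end-1
        y = 1 if closes[end] > closes[end - 1] else 0  # next-day direction
        samples.append((x, y))
    return samples


# A 95-day warm-up counted from the first test day (the ETF approval date).
TEST_START = date(2024, 1, 10)
first_scored_day = TEST_START + timedelta(days=WINDOW)
print(first_scored_day)  # 2024-04-14
```

Because every label uses only the close that follows its own window, no future information enters a sample's inputs.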

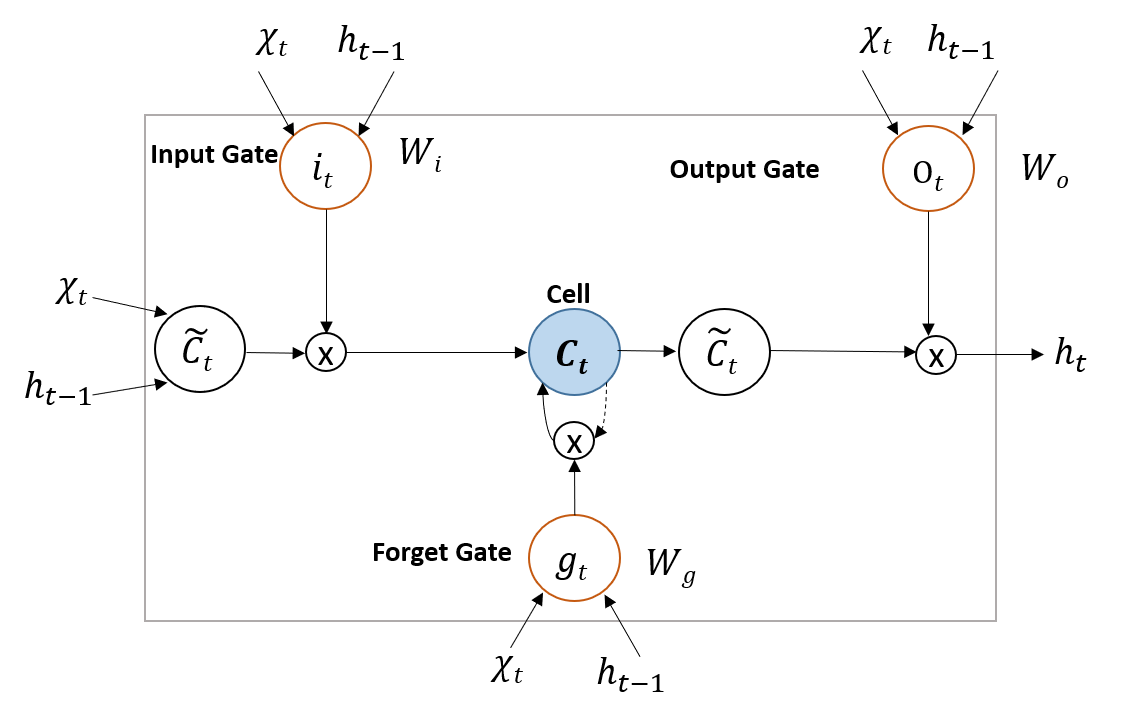

LSTM Model Architecture

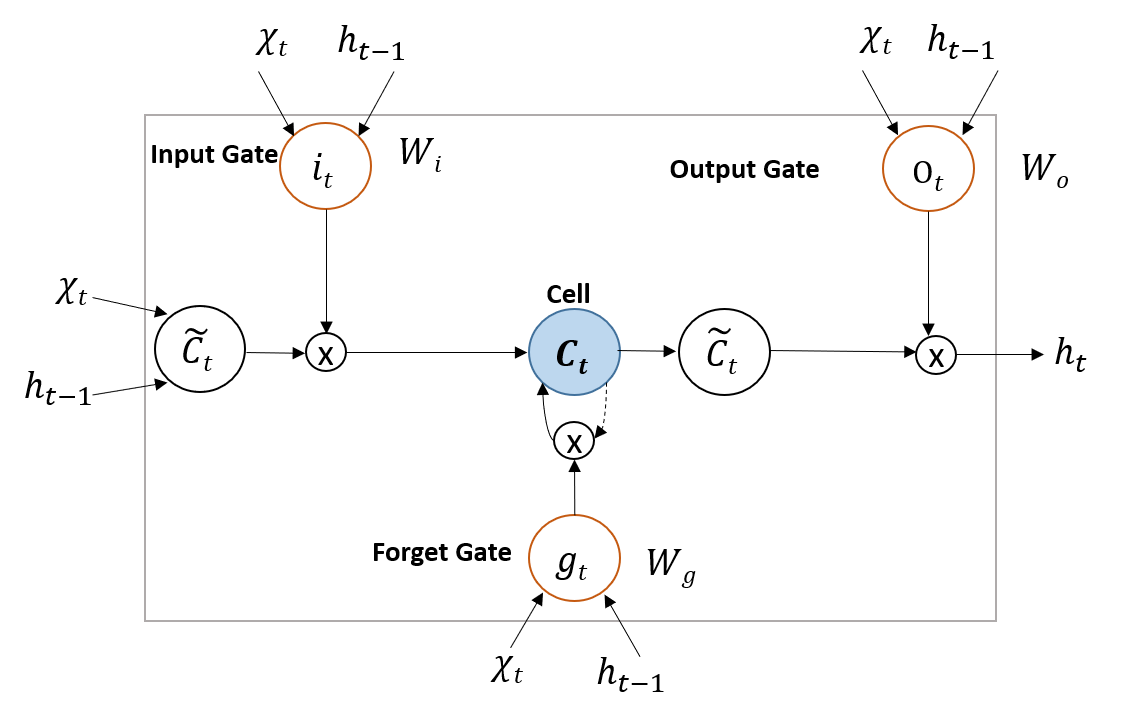

The LSTM network, designed for sequence modeling and long-term dependency learning, utilizes a memory cell architecture with adaptive input, forget, and output gates. This enables effective learning from the highly non-stationary and autocorrelated structure of financial time series, mitigating vanishing gradient problems prevalent in classical RNNs.
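For reference, the gate mechanics can be written in the standard textbook form below. The notation is an assumption and is not taken from the paper: $x_t$ is the input at step $t$, $h_t$ the hidden state, $c_t$ the cell state, $\sigma$ the logistic sigmoid, and $\odot$ the element-wise product.

$$
\begin{aligned}
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) \\
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$

The cell-state update is additive: $c_{t-1}$ reaches $c_t$ scaled only by the forget gate $f_t$, without passing through a squashing nonlinearity. This is the path that lets gradients survive over many time steps.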

Figure 1: General structure of an LSTM unit, showing gate flow and cell state updates as applied to time-series forecasting.

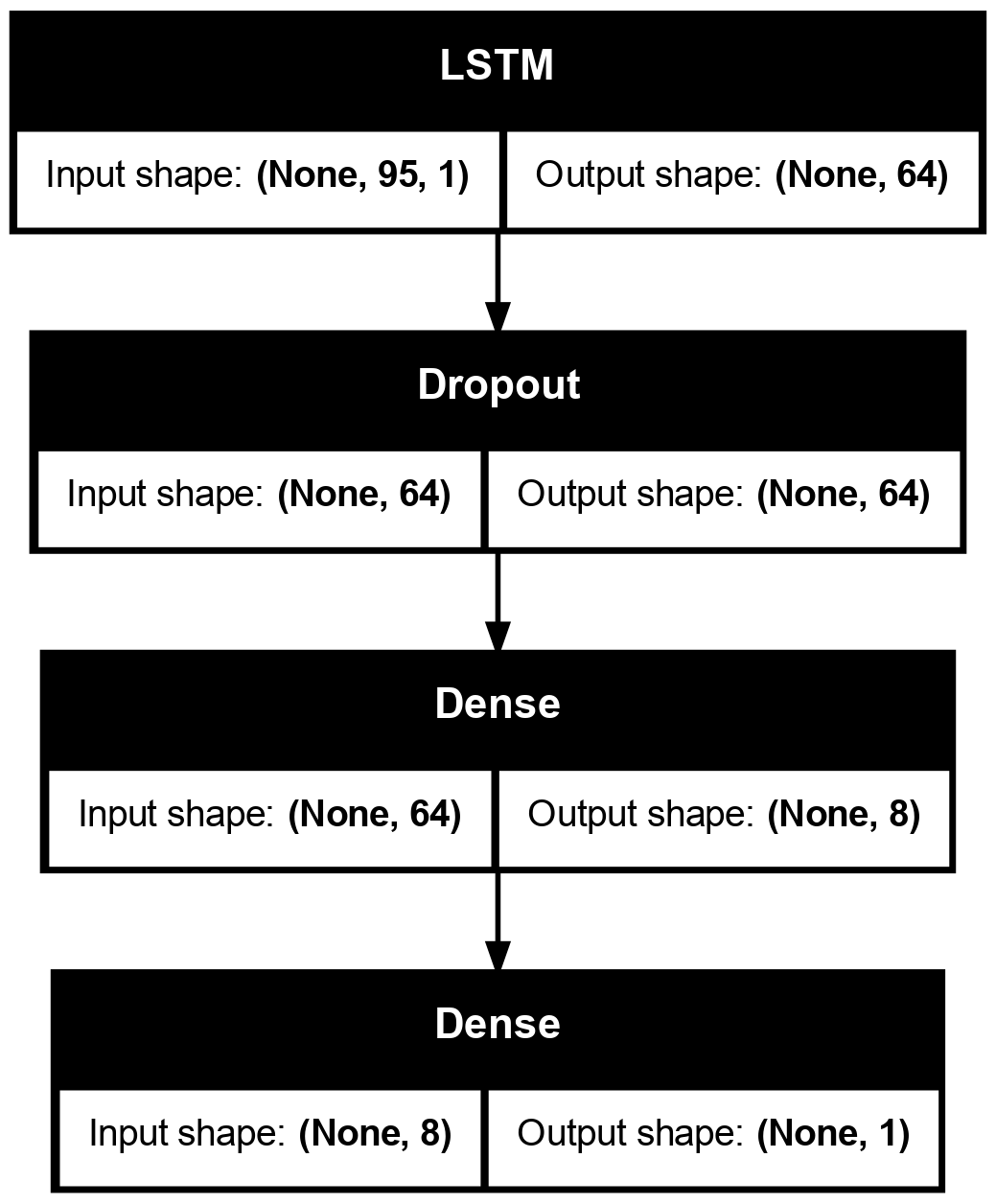

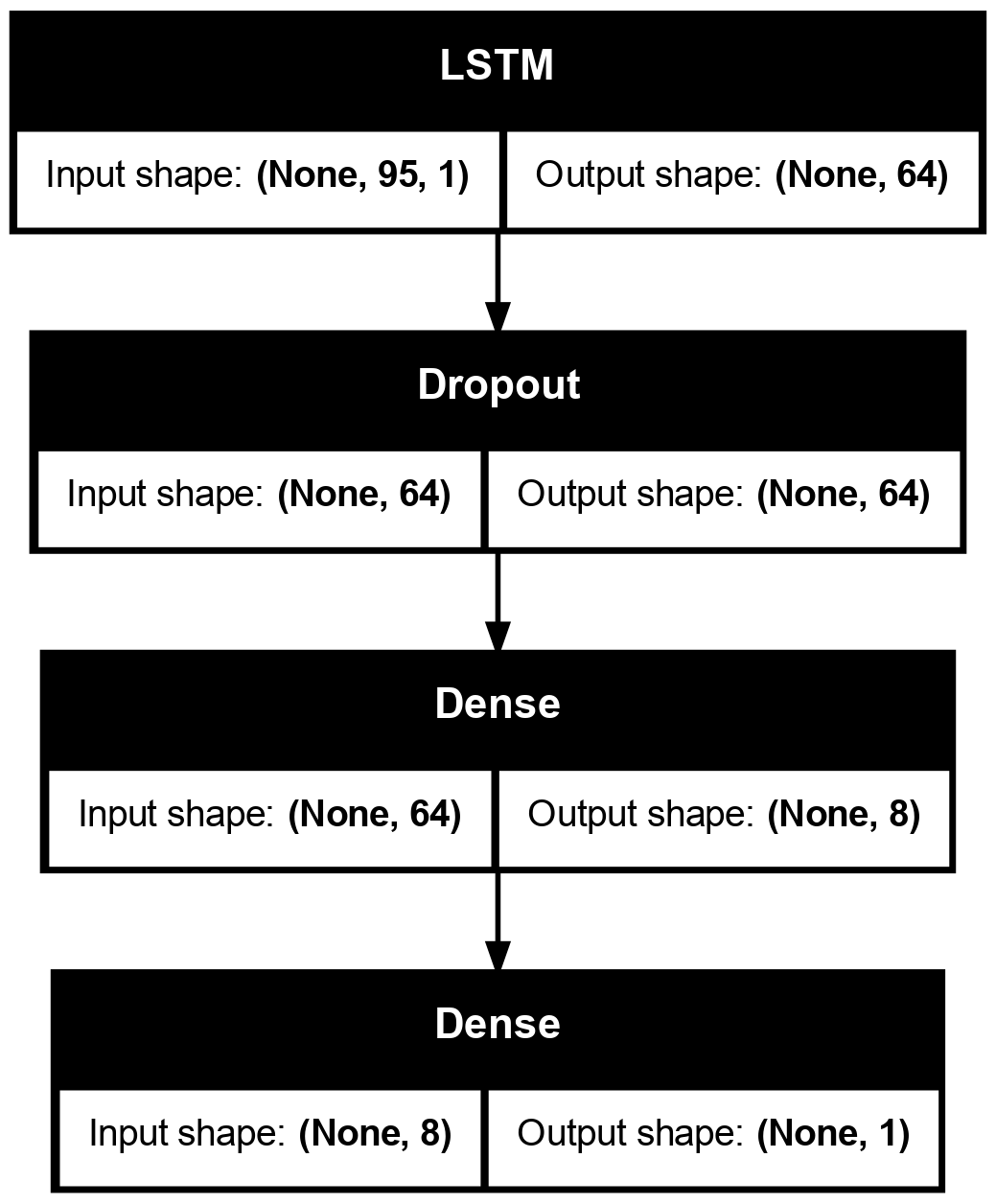

Key hyperparameters, including batch size, learning rate, and dropout rate, are tuned by grid search, and training is regularized via early stopping. The output layer uses a sigmoid activation suited to binary classification. An Adam optimizer variant that retains long-term memory of past gradients (a description consistent with AMSGrad) is applied to enhance convergence stability.
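Early stopping can be illustrated with a small, framework-free sketch. The function name, the `patience` and `min_delta` parameters, and the loss values are all assumptions for illustration; the paper's actual stopping criterion is not specified here.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the index of the epoch whose weights would be kept.

    Training is treated as stopped once `patience` consecutive epochs fail
    to improve the best validation loss by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break  # stop training; keep the weights from best_epoch
    return best_epoch


# Made-up validation losses: the late improvement at epoch 6 is never reached.
losses = [0.70, 0.68, 0.67, 0.69, 0.68, 0.70, 0.66]
print(early_stopping_epoch(losses, patience=3))  # 2
```

A larger `patience` trades extra training time for the chance to find later improvements. With `patience=5`, the same loss curve would run to epoch 6.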

Figure 2: Model architecture of LSTM showing the stacking of LSTM layers, dropout regularization, and the final dense output layer.

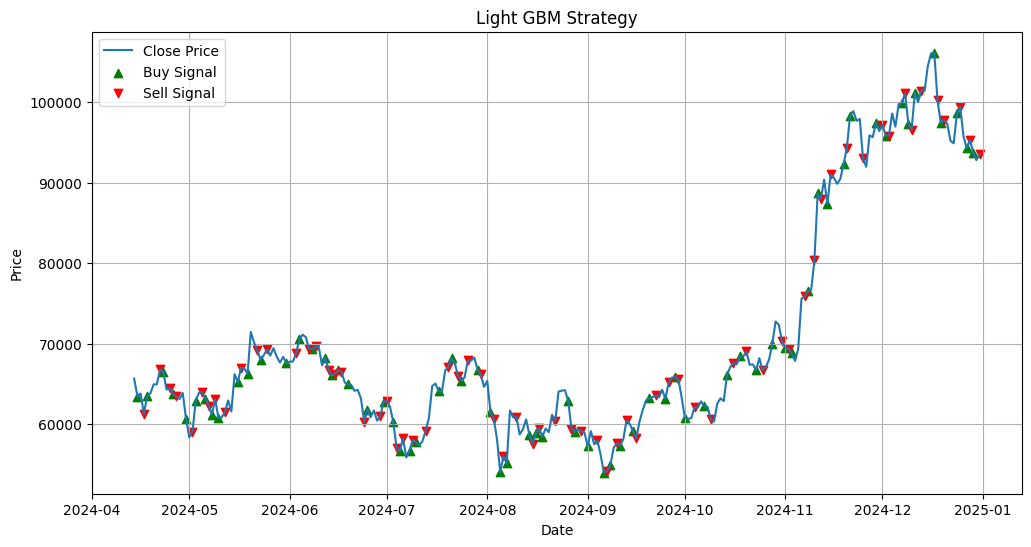

LightGBM Model

The LightGBM model leverages gradient-boosted decision trees with two optimizations, Gradient-based One-Side Sampling (GOSS) and Exclusive Feature Bundling (EFB), to enhance both computational efficiency and predictive accuracy. Parameters such as number of leaves, learning rate, and bagging fraction are optimized via grid search. Training maximizes the area under the ROC curve (AUC), in keeping with the binary classification framework.
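AUC is a ranking metric for which higher is better: it equals the probability that a randomly chosen "up" day receives a higher score than a randomly chosen "down" day. The pure-Python sketch below computes it by direct pair counting. It is an illustration of the metric only, not LightGBM's implementation, and the sample labels and scores are made up.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    A tie between a positive and a negative score counts as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one sample of each class")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


y_true = [1, 0, 1, 0, 1]            # illustrative up/down labels
y_prob = [0.9, 0.4, 0.6, 0.7, 0.8]  # illustrative predicted probabilities
print(round(roc_auc(y_true, y_prob), 4))  # 0.8333
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect separation.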

Figure 3: LightGBM performance plot illustrating frequency and magnitude of executed trades on the test period.

Technical Analysis Strategies

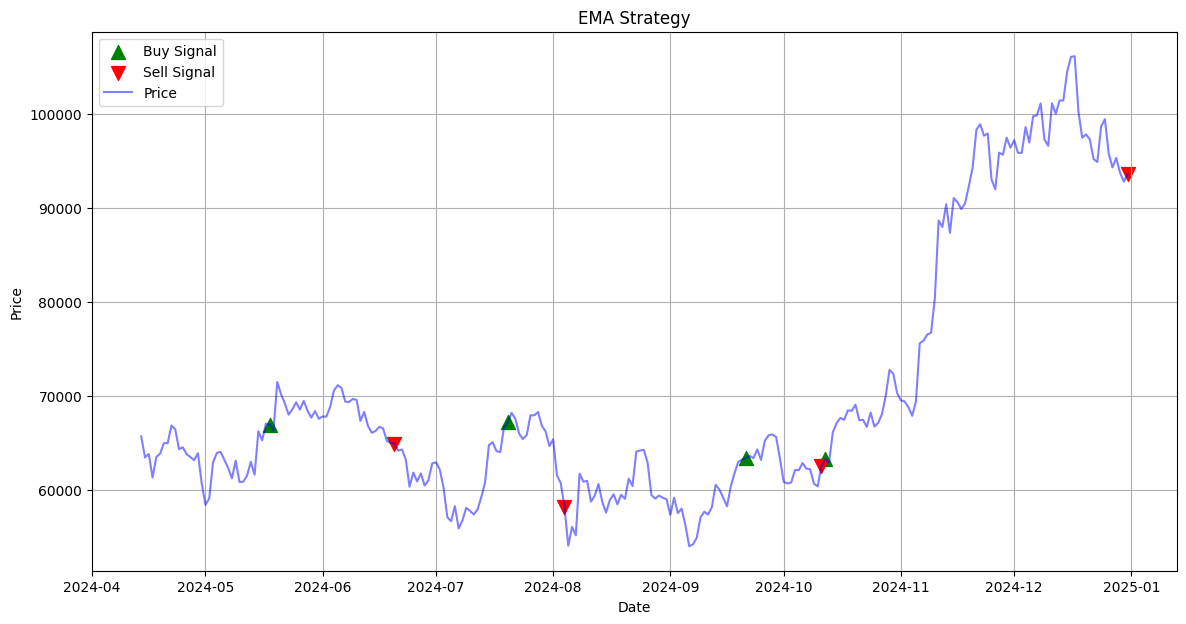

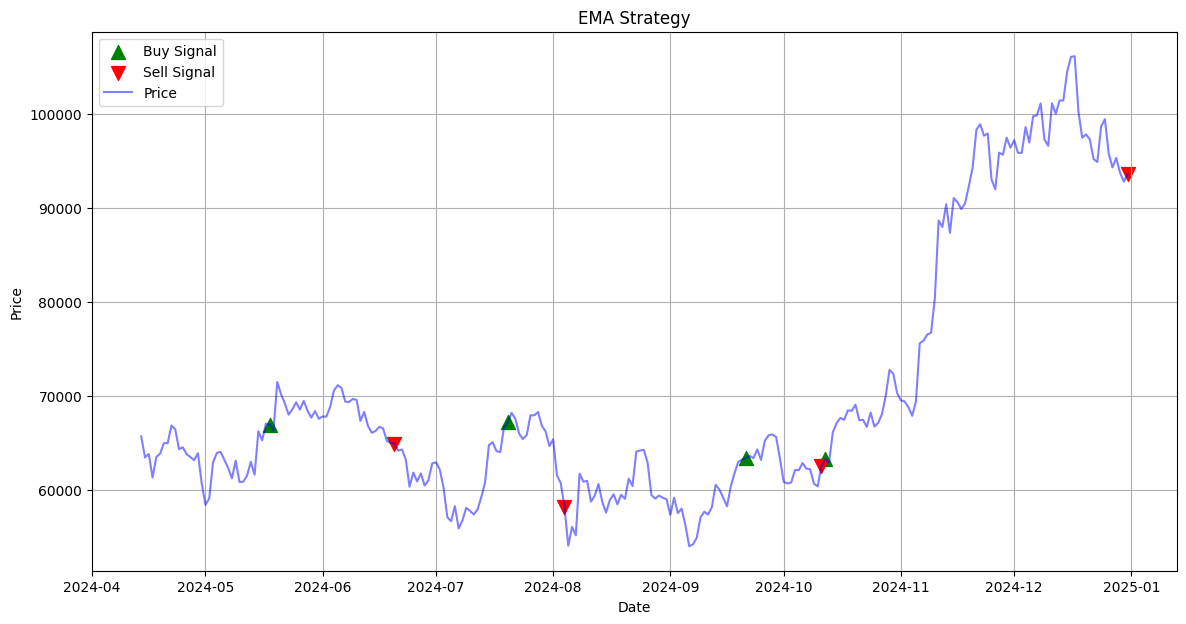

EMA Crossover

The EMA crossover strategy generates buy/sell signals based on the intersection of short-term and long-term exponential moving averages, optimized via grid search for window lengths to maximize historical returns.
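A crossover rule of this kind can be sketched as below. The spans (2 and 5) and the toy price series are assumptions chosen to keep the example short; they are not the grid-searched window lengths from the study. The EMA uses the common smoothing factor 2 / (span + 1).

```python
def ema(values, span):
    """Exponential moving average with smoothing factor 2 / (span + 1)."""
    alpha = 2.0 / (span + 1)
    out = [values[0]]                      # seed with the first observation
    for v in values[1:]:
        out.append(out[-1] + alpha * (v - out[-1]))
    return out


def crossover_signals(closes, short_span, long_span):
    """Return +1 on a bullish cross, -1 on a bearish cross, 0 otherwise."""
    short = ema(closes, short_span)
    long_ = ema(closes, long_span)
    signals = [0]                          # no signal on the first day
    for t in range(1, len(closes)):
        prev_diff = short[t - 1] - long_[t - 1]
        diff = short[t] - long_[t]
        if prev_diff <= 0 < diff:
            signals.append(1)              # short EMA moves above long EMA
        elif prev_diff >= 0 > diff:
            signals.append(-1)             # short EMA moves below long EMA
        else:
            signals.append(0)
    return signals


prices = [10, 10, 10, 12, 14, 16, 13, 10, 7, 5]  # toy data
print(crossover_signals(prices, short_span=2, long_span=5))
# [0, 0, 0, 1, 0, 0, 0, -1, 0, 0]
```

Only two signals fire over ten days, which is the low-frequency behavior expected of a crossover rule.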

Figure 4: EMA strategy trade execution, emphasizing low frequency but high-impact position changes.

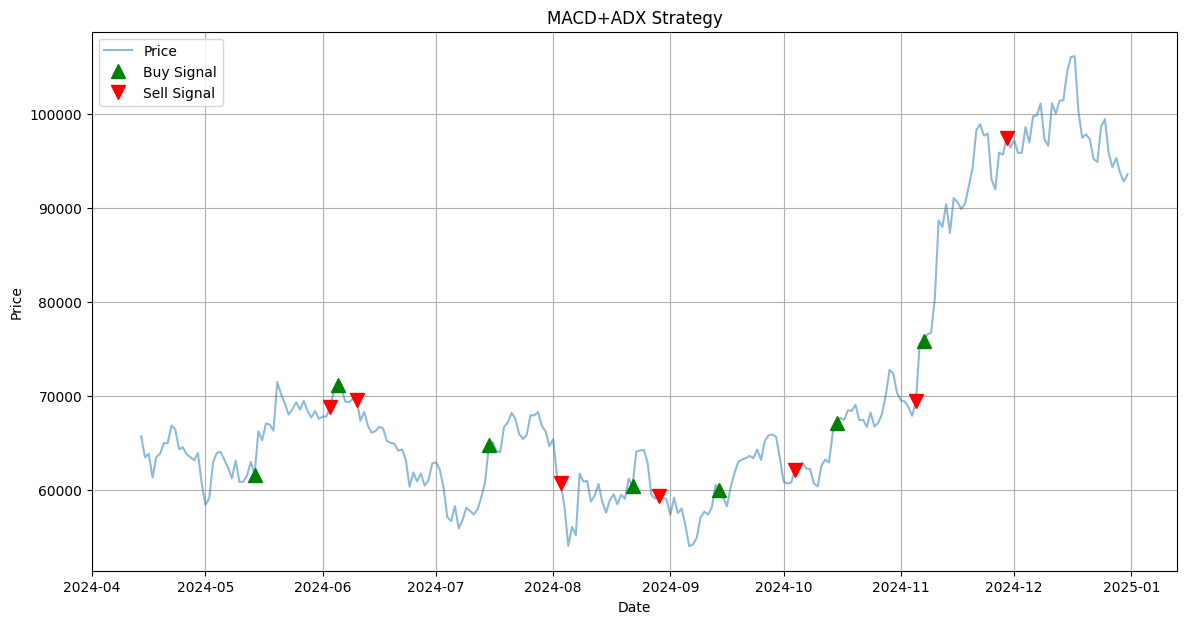

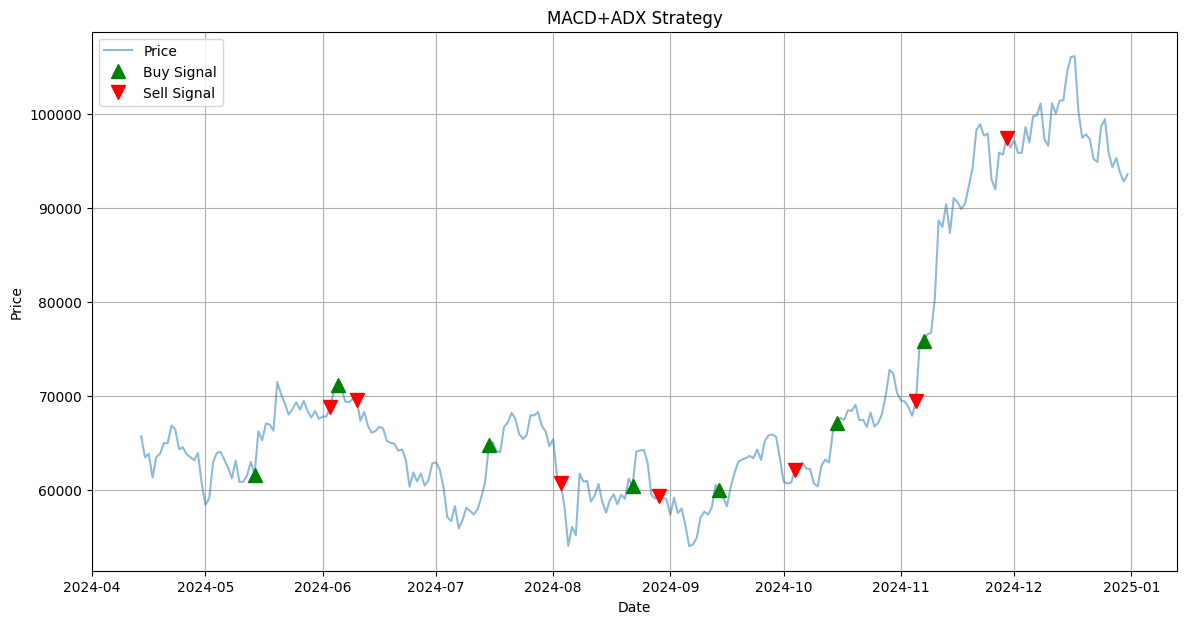

MACD+ADX

This hybrid combines MACD’s momentum detection with ADX’s trend strength filtering to improve signal reliability. Optimal parameter values—MACD short/long window, signal EMA, ADX period—are grid-searched.
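One way to combine the two indicators is sketched below, under several assumptions: the 12/26/9 MACD spans and the ADX threshold of 25 are conventional defaults, not the grid-searched values from the study. The ADX series is passed in precomputed because ADX requires high and low prices, which are not part of the closing-price series shown here, and the long/flat position rule is a simplification.

```python
def ema(values, span):
    """Exponential moving average with smoothing factor 2 / (span + 1)."""
    alpha = 2.0 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(out[-1] + alpha * (v - out[-1]))
    return out


def macd_adx_positions(closes, adx, fast=12, slow=26, signal=9, adx_min=25.0):
    """Hold a long position (1) only when MACD is above its signal line
    and ADX indicates a sufficiently strong trend; otherwise stay flat (0)."""
    macd_line = [f - s for f, s in zip(ema(closes, fast), ema(closes, slow))]
    signal_line = ema(macd_line, signal)
    return [
        1 if m > s and a >= adx_min else 0
        for m, s, a in zip(macd_line, signal_line, adx)
    ]


closes = [100.0 + t for t in range(40)]      # toy steady uptrend
strong = macd_adx_positions(closes, [30.0] * 40)
weak = macd_adx_positions(closes, [10.0] * 40)
print(sum(strong), sum(weak))  # 39 0
```

The same bullish MACD reading produces trades only when the ADX filter confirms trend strength, which is the filtering role described above.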

Figure 5: MACD+ADX trade execution, illustrating targeted entry/exit in strong trending regimes.

Results

Cumulative returns over the out-of-sample period rank the strategies as follows:

- LSTM: 65.23%

- LightGBM: 53.38%

- Buy-and-Hold: 42.51%

- MACD+ADX: 35.45%

- EMA: 26.07%

The LSTM approach delivers the highest realized return, outperforming both TA strategies and the standard buy-and-hold benchmark. Notably, after accounting for a realistic 0.1% commission fee per trade, only the LSTM maintains its edge over buy-and-hold, achieving a net return of 53.23%.
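For the LSTM, the gap between gross (65.23%) and net (53.23%) returns is 12 percentage points. The sketch below shows how a per-trade commission compounds into such a drag. It assumes a long/flat strategy, a fee charged as a fraction of equity on every position change, and no fee for closing the final position; the paper's exact fee mechanics may differ, and the prices are toy values.

```python
def cumulative_return(closes, positions, fee=0.0):
    """Compound a long/flat strategy, charging `fee` on each position change.

    positions[t] is the exposure (0 or 1) held from day t to day t + 1.
    """
    equity = 1.0
    prev_pos = 0
    for t in range(len(closes) - 1):
        pos = positions[t]
        if pos != prev_pos:
            equity *= 1.0 - fee            # commission on each buy or sell
        equity *= 1.0 + pos * (closes[t + 1] / closes[t] - 1.0)
        prev_pos = pos
    return equity - 1.0


closes = [100.0, 110.0, 99.0, 108.9]       # toy prices
timed = [1, 0, 1]                          # long, flat, long: three trades
hold = [1, 1, 1]                           # buy-and-hold: one trade

gross = cumulative_return(closes, timed)
net = cumulative_return(closes, timed, fee=0.001)
print(f"gross {gross:.4f}, net {net:.4f}, "
      f"hold {cumulative_return(closes, hold, fee=0.001):.4f}")
```

Each position change multiplies equity by (1 - fee), so the cost grows with the number of trades rather than with the holding period.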

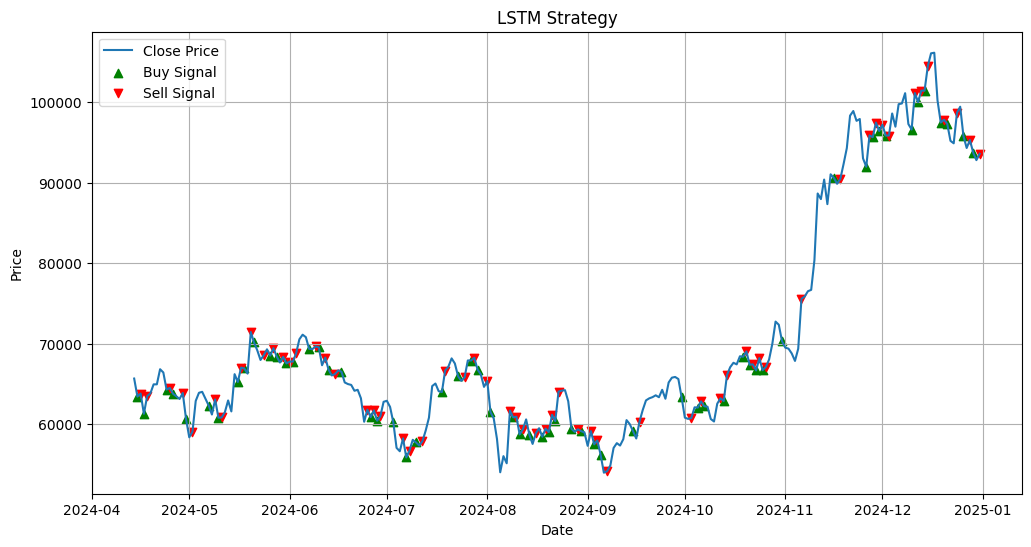

Figure 6: LSTM trade execution plot, highlighting adaptability to high-frequency short-term fluctuations.

Classification metrics reinforce these results: LSTM achieves an accuracy of 0.5611 and F1 score of 0.5306, both exceeding the random 0.5 baseline. LightGBM, while marginally higher in accuracy (0.5840), does not translate this edge into superior trading performance post transaction costs, likely due to excessive trading frequency and noise sensitivity.
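Accuracy and F1 can both be derived from the confusion counts, as in the sketch below. The labels and predictions are made up for illustration and do not reproduce the reported figures. Note that while 0.5 is the usual chance level for accuracy on balanced classes, the chance level for F1 depends on the class balance and on how often the positive class is predicted.

```python
def accuracy_and_f1(y_true, y_pred):
    """Accuracy and F1 for the positive ("up") class from confusion counts."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return accuracy, 0.0
    return accuracy, 2 * precision * recall / (precision + recall)


y_true = [1, 0, 1, 1, 0, 0, 1, 0]   # illustrative actual directions
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]   # illustrative predicted directions
acc, f1 = accuracy_and_f1(y_true, y_pred)
print(acc, f1)
```

Neither metric weights a prediction by the size of the price move it captures, which is one reason a model with higher accuracy can still earn a lower return.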

Signal Dynamics

ML strategies, particularly LSTM, exhibit high trade frequencies aiming to capture short-lived price micro-movements. In contrast, TA strategies deploy far fewer trades, with MACD+ADX and EMA focusing on regime shifts and momentum phases rather than mean-reversion or microstructure arbitrage.

Discussion

The analysis corroborates several points of academic and industry relevance:

- Data-driven ML, when appropriately regularized and calibrated, offers substantive alpha over both TA and buy-and-hold (for both ML models before costs, and for LSTM after costs) and remains resilient to market regime shifts (the post-ETF structural break).

- Excess trading frequency (as in LightGBM) attenuates edge after realistic transaction fees, underscoring the criticality of operational cost modeling in strategy design.

- The integration of a trend-strength measure (ADX) with a traditional momentum strategy such as MACD produces the stronger of the two TA results (35.45% versus 26.07% for EMA crossover).

- Results substantiate the capacity of LSTM architectures to learn superior representations of often non-stationary and auto-correlated financial time series. The gate dynamics in LSTM facilitate extraction of actionable trading signals not captured by static TA rules or shallow ensemble methods.

Implications and Future Directions

From a practical standpoint, the findings recommend LSTM as a strong candidate for automated Bitcoin trading, particularly in periods of market turbulence or structural change. However, mitigating operational frictions (slippage, commissions, latency) and overtrading risks remains crucial. The results suggest that hybrid paradigms, which combine deep learning with TA-derived features (e.g., MACD, EMA states), may improve predictive power further while constraining overtrading.

Theoretically, this work demonstrates the importance of out-of-sample validation across structural breaks, a design principle with direct relevance for both financial ML and econometric forecasting research.

Conclusion

This study presents a meticulous comparison of machine learning and technical analysis approaches for Bitcoin trading, using a unified statistical learning framework that controls for data leakage and market regime changes. The LSTM-based strategy performs best both before and after practical trading costs (65.23% gross, 53.23% net of a 0.1% commission per trade), affirming the value of deep learning architectures in financial time-series modeling. Technical analysis strategies, while respectable in their returns, do not match the adaptive capacity of modern ML models under real market conditions. The research invites extensions into hybrid modeling regimes and further robustness testing across alternative digital assets and evolving market structures.