Learning When to Quit in Sales Conversations

Abstract: Salespeople frequently face the dynamic screening decision of whether to persist in a conversation or abandon it to pursue the next lead. Yet, little is known about how these decisions are made, whether they are efficient, or how to improve them. We study these decisions in the context of high-volume outbound sales where leads are ample, but time is scarce and failure is common. We formalize the dynamic screening decision as an optimal stopping problem and develop a generative LLM-based sequential decision agent - a stopping agent - that learns whether and when to quit conversations by imitating a retrospectively-inferred optimal stopping policy. Our approach handles high-dimensional textual states, scales to LLMs, and works with both open-source and proprietary LLMs. When applied to calls from a large European telecommunications firm, our stopping agent reduces the time spent on failed calls by 54% while preserving nearly all sales; reallocating the time saved increases expected sales by up to 37%. Upon examining the linguistic cues that drive salespeople's quitting decisions, we find that they tend to overweight a few salient expressions of consumer disinterest and mispredict call failure risk, suggesting cognitive bounds on their ability to make real-time conversational decisions. Our findings highlight the potential of artificial intelligence algorithms to correct cognitively-bounded human decisions and improve salesforce efficiency.

Explain it Like I'm 14

What this paper is about

This paper is about helping salespeople decide the right moment to end a phone call that probably won’t lead to a sale, so they can use that time to call someone else. The authors build an AI “stopping agent” that listens (silently) to a live sales conversation and decides whether to keep going or quit, aiming to save time without losing sales.

The big questions

- How should a salesperson decide, in the middle of a call, whether to keep talking or move on?

- Can an AI learn to make this “when to quit” decision better than humans do in real time?

- If so, how much time can it save, and does that time translate into more sales?

- What patterns do salespeople use when deciding to quit, and are those patterns efficient?

How they studied it

The core idea: “optimal stopping”

Think of fishing at a lake. Do you keep waiting for a fish here, or move to a new spot? Waiting might pay off (you might catch a fish), but it also costs time you could spend somewhere else. That is the “optimal stopping” problem: deciding the best moment to stop waiting and switch.

In this paper, the “fish” is a possible sale, and the “cost” is the salesperson’s limited time. The goal is to stop calls that will likely fail early, and keep calls that still have a good chance to succeed.
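
One hedged way to write this trade-off down is sketched below. The symbols are illustrative assumptions, not notation taken from the paper: T is the full length of the call, y is 1 if the call ends in a sale and 0 otherwise, v is the value of a sale, c is the opportunity cost of one second of the salesperson's time, and τ is the moment you quit (τ ≥ T means you stay to the end).

```latex
U(\tau) = v \, y \, \mathbf{1}[\tau \ge T] \;-\; c \, \min(\tau, T),
\qquad
\tau^{*} = \arg\max_{\tau} U(\tau)
```

Under this sketch, a call that will fail (y = 0) only costs time, so quitting earlier is always better. A call that will succeed is worth finishing whenever v exceeds cT, because quitting before T gives up the sale and still costs time.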

Building a “stopping agent” using LLMs

The authors create an AI that reads the live transcript of a call and outputs one of two actions at certain moments: “wait” (keep going) or “quit.”

Instead of using a complicated trial-and-error training method, they use a simpler “learn by copying” approach (called imitation learning):

- Step 1: Look at lots of past calls (with full transcripts and whether they ended in a sale).

- Step 2: For each past call, pretend you could have quit at every possible time, and calculate which quitting time would have been best. “Best” means balancing the value of a sale against the time spent on the call (time has an opportunity cost).

- Step 3: Mark the “best” action at each moment in that past call (wait, wait, wait… then quit).

- Step 4: Train an LLM to read a partial transcript and output the action (wait or quit) that the “best” timeline would have taken. In other words, the model learns to imitate the best quitting pattern discovered from past calls.
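
Steps 2 and 3 can be sketched roughly as follows. This is a simplified illustration, not the authors' code: the function names, the fixed list of decision times, and the `sale_value` and `cost_per_second` parameters are all assumptions made for the example.

```python
def best_quit_time(call_length, sale_made, decision_times, sale_value, cost_per_second):
    """Return the hindsight-best quit time in seconds, or None if staying was best."""
    # Option A: stay for the whole call (and collect the sale, if there was one).
    best_time = None
    best_payoff = (sale_value if sale_made else 0.0) - cost_per_second * call_length
    # Option B: quit at an allowed decision time before the call ends.
    for t in sorted(decision_times):
        if t < call_length:
            payoff = -cost_per_second * t  # quitting early forfeits any sale
            if payoff > best_payoff:
                best_time, best_payoff = t, payoff
    return best_time


def action_labels(call_length, sale_made, decision_times, sale_value, cost_per_second):
    """Label each decision time reached in a past call as 'wait' or 'quit'."""
    quit_at = best_quit_time(call_length, sale_made, decision_times,
                             sale_value, cost_per_second)
    labels = []
    for t in sorted(decision_times):
        if t >= call_length:
            break  # the call was already over at this decision time
        if quit_at is not None and t == quit_at:
            labels.append((t, "quit"))
            break  # nothing to label after quitting
        labels.append((t, "wait"))
    return labels


# Hypothetical numbers: a sale is worth 100 units, each second costs 0.1 units.
failed = action_labels(169, False, [30, 60, 90], 100.0, 0.1)
sold = action_labels(400, True, [30, 60, 90], 100.0, 0.1)
```

Each (partial transcript up to time t, label) pair would then become one training example for Step 4. Under these simplified assumptions a failed call is always labeled "quit" at the first decision time; the labeling used in the paper may be richer than this.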

This is like watching replays of a sports game, figuring out when a player should have passed or shot to maximize the chance of winning, and then training a rookie to copy those choices in similar situations.

The AI doesn’t talk to the customer or change what the salesperson says. It only observes the text and decides when to stop (or advises the salesperson to stop).
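
As a rough sketch of how such an observer could be wired up, the loop below checks the transcript at a few fixed decision times and asks a policy whether to wait or quit. Everything here is hypothetical: `run_stopping_agent`, the `segments` format, and `toy_policy` are illustrative names, and the keyword rule is only a stand-in for the fine-tuned LLM.

```python
def run_stopping_agent(segments, decision_times, policy):
    """Return the time (seconds) at which the agent quits, or None if it never does.

    segments: list of (end_time_seconds, text) pairs in chronological order.
    policy: any function mapping a partial transcript string to "wait" or "quit".
    """
    for t in sorted(decision_times):
        # If the call ended before this decision time, there is nothing to decide.
        if not segments or segments[-1][0] < t:
            break
        # Only text spoken up to time t is visible at this decision point.
        heard = " ".join(text for end, text in segments if end <= t)
        if policy(heard) == "quit":
            return t
    return None


def toy_policy(partial_transcript):
    # Placeholder for the trained model: a trivial keyword rule, for illustration only.
    return "quit" if "wrong number" in partial_transcript.lower() else "wait"


call = [(10, "Hello, is this Ana?"), (25, "No, wrong number."), (70, "Sorry to bother you.")]
quit_time = run_stopping_agent(call, [30, 60, 90], toy_policy)
```

The policy only ever sees the text up to the current decision time, which mirrors the idea that the agent observes the call without speaking or changing what the salesperson says.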

Why not standard reinforcement learning?

Traditional “trial-and-error” AI methods can be unstable, hard to tune, and very expensive to run—especially with giant LLMs and long text. The authors avoid these problems by using imitation learning (supervised fine-tuning), which is simpler, more stable, and cheaper.

The data

- 11,627 outbound sales calls from a European telecom company, over one month.

- Goal: cross-sell electricity contracts to existing mobile customers.

- Only 5.5% of calls ended in a sale.

- Failed calls still took time: about 2.8 minutes each on average (169 seconds).

- Successful calls were longer, since closing a deal takes extra time.

- Transcripts were created with automated speech-to-text (Whisper). The language of the calls is Spanish.

Before building the stopping agent, the authors checked whether the early part of a call predicts failure. Using an LLM on just the first 60 seconds, they could separate calls that would eventually fail from calls that would succeed very well (AUC ≈ 94%, where 100% is perfect and 50% is a coin flip). That means there are strong early signals of failure, yet salespeople often keep talking on those calls much longer than needed.
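
AUC can be read as the chance that the model gives a randomly chosen failed call a higher risk score than a randomly chosen successful call. A minimal sketch of that calculation is below; the scores and labels are made-up toy values, not data from the paper.

```python
def auc(scores, labels):
    """Chance that a failed call (label 1) outscores a successful one (label 0).

    Ties count as half a win.
    """
    failed = [s for s, y in zip(scores, labels) if y == 1]
    succeeded = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for f in failed:
        for g in succeeded:
            if f > g:
                wins += 1.0
            elif f == g:
                wins += 0.5
    return wins / (len(failed) * len(succeeded))


# Toy example: predicted failure risk after 60 seconds, and what actually happened.
toy_scores = [0.9, 0.2, 0.6, 0.4]
toy_labels = [1, 1, 0, 0]
toy_auc = auc(toy_scores, toy_labels)
```

This pairwise version is easy to read but slow on large datasets; libraries usually compute the same quantity from sorted ranks.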

What they found

- Big time savings without losing sales:

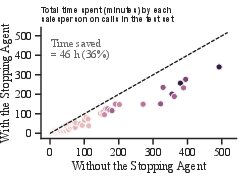

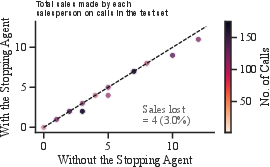

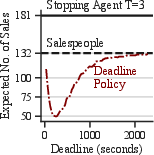

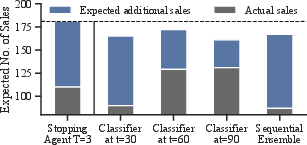

- A cautious version of the stopping agent (allowed to quit at 60 or 90 seconds) kept almost all the sales (130 out of 132) but cut total calling time by about 36%.

- If you use that saved time to make more calls, expected sales could increase by about 33%.

- A more aggressive version (also allowed to quit at 30 seconds) cut the time spent on failed calls by about 54%, raising expected sales by up to 37%.

- Training and running the model cost about $150—very low compared to the potential gains.

- Salespeople underreact to early warning signs:

- The LLM can spot high risk of failure after 60 seconds, but salespeople often keep going for several minutes.

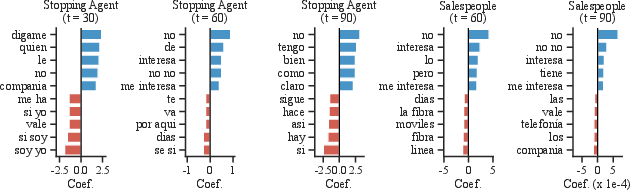

- Salespeople appear to wait for very obvious phrases like “no me interesa” (“I’m not interested”) before quitting, even though subtle cues showed the call probably wouldn’t succeed. That wastes time.

- The AI uses a smarter, evolving strategy:

- Early in the call, it focuses on “Are we talking to the right person?”

- Next, “Is this person actually interested?”

- Later, “Do they already have a good alternative?”

- This flexible focus helps it quit earlier on calls that are going nowhere.

- End-of-shift behavior shows limits in human prediction:

- Calls near the end of a shift are shorter (time feels more valuable then).

- But salespeople don’t shorten only the riskiest calls—they shorten across the board, suggesting they struggle to tell which calls will fail in real time.
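
The link between saved time and extra sales in the findings above comes down to sales per unit of time. The sketch below shows that arithmetic under a strong assumption: the freed-up time is refilled with new calls that behave like the old ones. The function name and the example numbers are hypothetical, and the paper's own accounting may include other components, so this is not meant to reproduce the reported percentages.

```python
def sales_multiplier(time_before, time_after, sales_before, sales_after):
    """How expected sales scale if the original time budget is refilled with calls."""
    # Sales per unit of time without and with the stopping agent.
    rate_before = sales_before / time_before
    rate_after = sales_after / time_after
    # With the same total time budget, sales scale with the rate.
    return rate_after / rate_before


# Hypothetical numbers: 1000 hours and 100 sales before; with the agent the same
# calls take 800 hours and 98 of the sales are kept.
gain = sales_multiplier(1000.0, 800.0, 100.0, 98.0)  # roughly 1.225
```

In this made-up case, losing 2% of sales while saving 20% of the time still raises expected sales by about 22.5% once the saved time is spent on new calls.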

Why it matters

- Time is the most valuable resource in high-volume sales. Stopping hopeless calls early lets salespeople talk to more people who might buy.

- The AI is a low-cost, practical assistant: it doesn’t replace the salesperson or talk to the customer; it quietly recommends when to move on.

- It can correct human habits like waiting for obvious signals and missing subtle ones, reducing wasted time and boosting results.

- Managers can deploy this with existing call transcripts and off-the-shelf LLMs—even through standard APIs.

Simple takeaways and impact

- Many sales calls show early signs they won’t succeed; humans notice some of these, but not reliably.

- An AI “stopping agent” can learn when to quit by studying past calls and copying the best quitting moments.

- In tests, it cut time on failed calls by up to 54% while keeping nearly all sales, leading to gains in expected sales of up to 37% when the saved time is reallocated.

- The approach is stable, scalable, and inexpensive to run.

- Beyond this case, the same idea could help in other conversation-heavy settings where time is scarce and many interactions won’t succeed (for example, some types of customer outreach or support triage), as long as transcripts are available and decisions are time-sensitive.
