- The paper presents an agent-based framework that transforms static UI-to-Code generation into an interactive, verifiable process using exploration and automated validation.

- Its exploration agent achieves state-of-the-art results on UIExplore-Bench, with 93.1% completeness and 97.7% correctness, demonstrating robust multi-step UI state traversal and interaction synthesis.

- The framework’s modular design decouples exploration, code generation, and validation, paving the way for scalable benchmarks and practical web automation deployment.

WebVIA: An Agentic Framework for Interactive and Verifiable UI-to-Code Generation

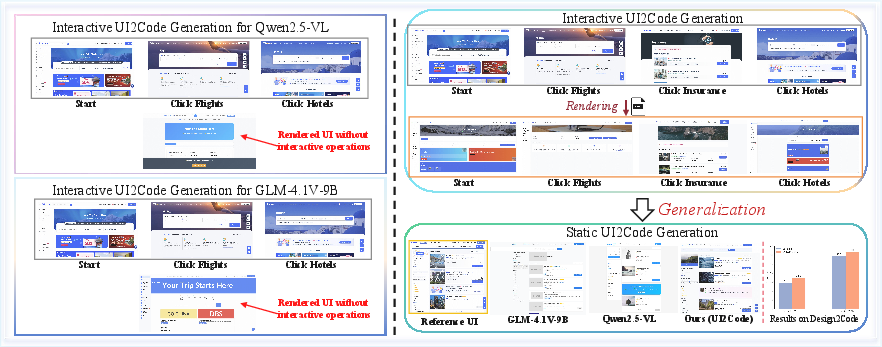

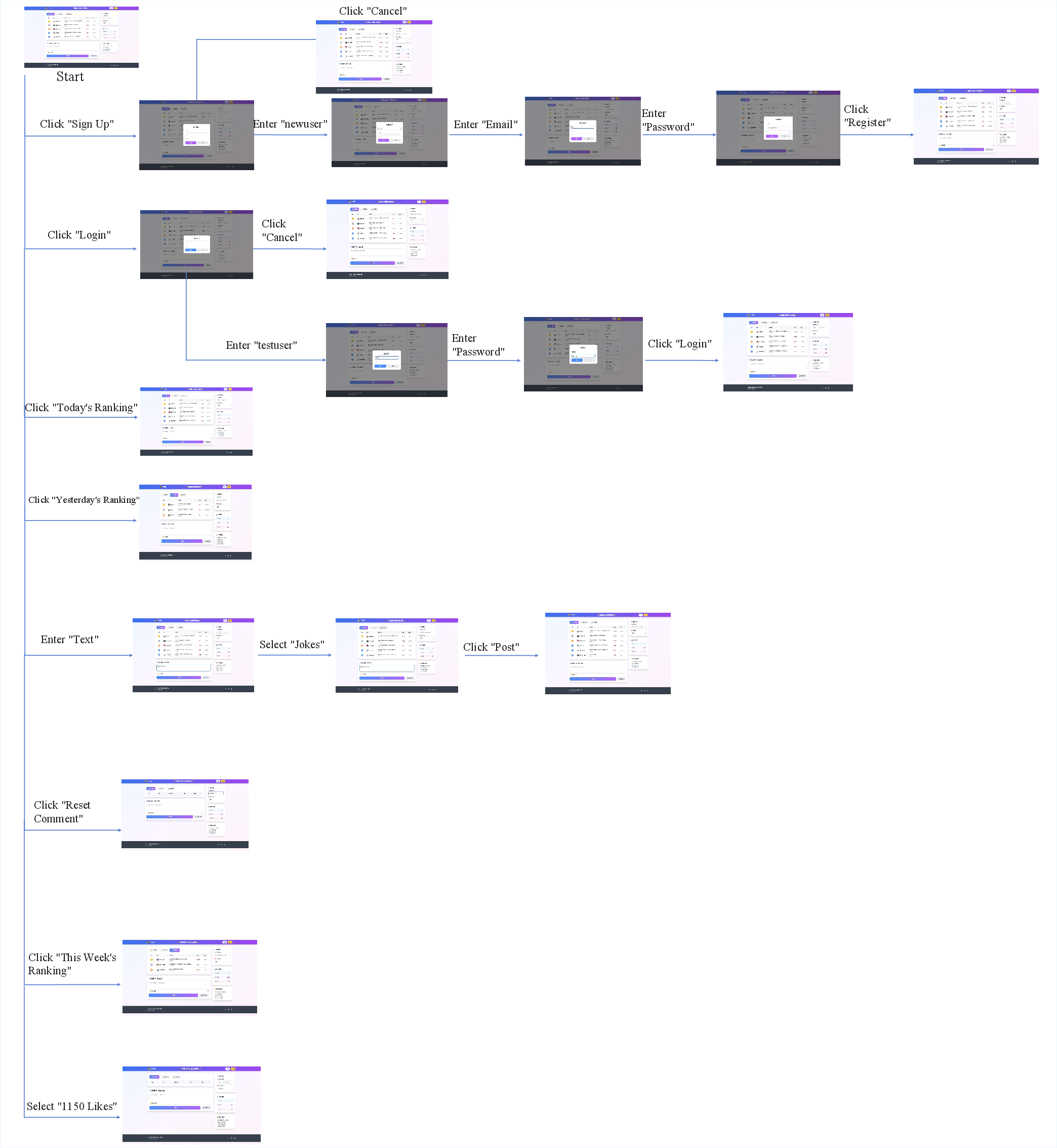

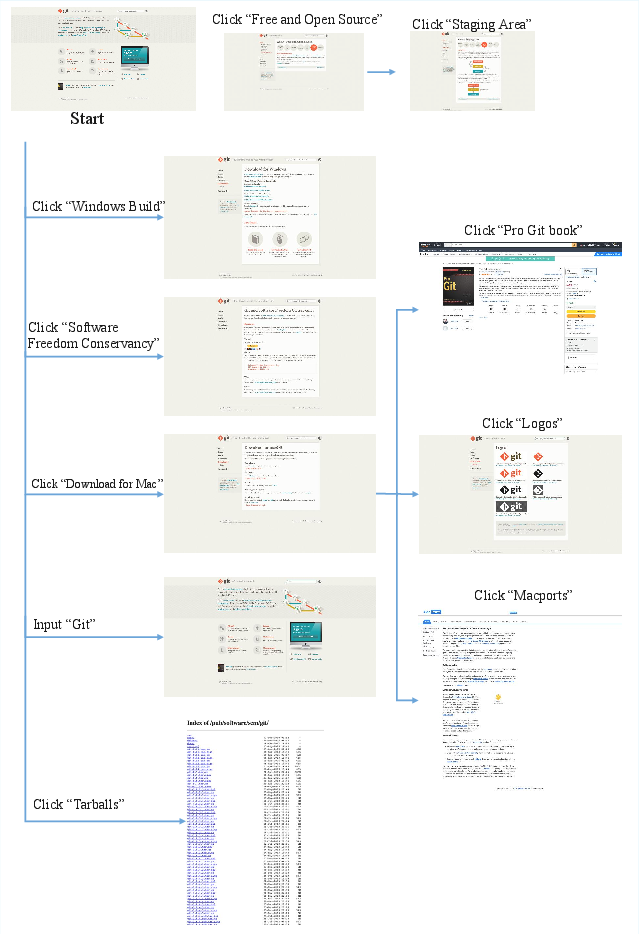

Current automated UI-to-code (UI2Code) pipelines cannot generate interactive interfaces: despite recent progress in VLM-powered translation from visual mockups to code, model outputs remain static HTML/CSS/JavaScript. As the authors highlight, this restriction impedes the integration of UI2Code systems into production web development workflows, because generated code typically ignores GUI interactivity, input handling, and other dynamic behaviors essential for practical deployment. The paper formalizes interactive UI-to-code generation as a sequential decision-making problem over a structured environment, in which the agent must explore, capture, and translate multiple user-driven interface states.

Figure 1: A motivating example illustrating the fundamental gap between static code generation and interactive, executable UI synthesis.

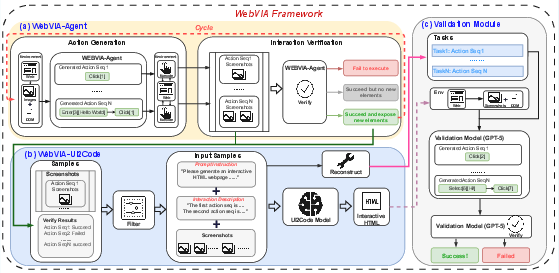

WebVIA Framework and System Architecture

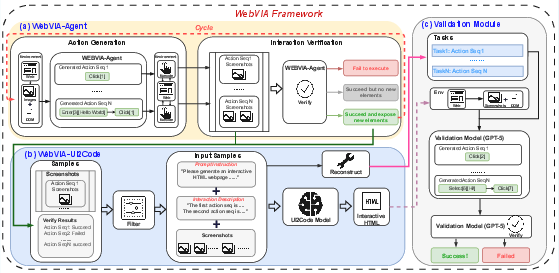

WebVIA introduces an agent-driven pipeline addressing the deficiencies of prior art by (i) systematically exploring interactive webpage states, (ii) translating multi-state observations into executable, interaction-preserving code, and (iii) verifying behavioral fidelity through automated validation. The framework is compositional, featuring three primary components:

- An exploration agent tasked with efficient state space traversal and actionable UI element discovery;

- A multimodal UI2Code model that accepts interaction graphs—composed of multiple screenshots and DOM traces—and emits executable HTML/CSS/JavaScript approximating both visual and interactive fidelity;

- A validation module ensuring that synthesized code supports the intended transition graph, mimicking real-world user workflows.

Figure 2: Overview of the WebVIA framework and its exploration–generation–validation pipeline.

This design fundamentally extends the expressivity of UI2Code systems, enabling the transition from static rendering to interaction-aware synthesis and functional validation.
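The interaction graph that links the three components can be pictured as a small data structure. The sketch below is only an illustration under stated assumptions: the class names `UIState`, `Transition`, and `InteractionGraph`, and every field name, are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class UIState:
    """One observed interface state (hypothetical schema)."""
    state_id: str
    screenshot_path: str  # path to the captured screenshot
    dom: str              # serialized DOM snapshot


@dataclass(frozen=True)
class Transition:
    """A user action that moves the UI from one state to another."""
    source: str           # state_id before the action
    action: str           # e.g. "Click", "Enter", "Select"
    target_element: str   # selector or label of the acted-on element
    dest: str             # state_id after the action


@dataclass
class InteractionGraph:
    states: dict[str, UIState] = field(default_factory=dict)
    transitions: list[Transition] = field(default_factory=list)

    def add_state(self, state: UIState) -> None:
        self.states[state.state_id] = state

    def add_transition(self, t: Transition) -> None:
        # Both endpoints must already be registered states.
        if t.source not in self.states or t.dest not in self.states:
            raise KeyError("transition references an unknown state")
        self.transitions.append(t)

    def successors(self, state_id: str) -> list[str]:
        """State ids reachable from `state_id` in one action."""
        return [t.dest for t in self.transitions if t.source == state_id]
```

In this picture, the exploration agent would populate the graph, the UI2Code model would consume it, and the validator would replay its transitions against the generated page.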

Exploration Agent: Design and Benchmarking

The exploration agent, WebVIA-Agent, is trained to maximize coverage of possible interface transitions while minimizing redundant exploration. The authors use a hybrid exploration policy that combines breadth-first and depth-first rollouts in a perception–action–verification loop. Action candidates are generated by a GLM-4.1V-9B model trained with supervised finetuning (SFT) on large-scale synthetic data, which covers two branches: action generation and state verification. The dataset consists of paired UI screenshots, DOM trees, and exhaustive ground-truth interaction traces.
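A perception–action–verification loop with a mixed breadth-first and depth-first frontier can be sketched on a toy state graph. This is a minimal illustration, not the paper's algorithm: the `TOY_UI` environment is invented, and alternating between the oldest and newest frontier state is an assumed stand-in for whatever hybrid rule the authors actually use.

```python
from collections import deque

# Toy environment (hypothetical): state -> {action: next_state}.
TOY_UI = {
    "home": {"click:menu": "menu", "click:login": "login"},
    "menu": {"click:close": "home", "click:settings": "settings"},
    "login": {"enter:username": "login_filled"},
    "login_filled": {},
    "settings": {"click:back": "menu"},
}


def explore(env, start, max_steps=100):
    """Collect (state, action, next_state) transitions reachable from `start`.

    Alternates between expanding the oldest frontier state (breadth-first)
    and the newest one (depth-first). The alternation is an assumption made
    for illustration only.
    """
    frontier = deque([start])
    visited = {start}
    transitions = []
    steps = 0
    take_oldest = True
    while frontier and steps < max_steps:
        state = frontier.popleft() if take_oldest else frontier.pop()
        take_oldest = not take_oldest
        # Perception: enumerate candidate actions in the current state.
        for action, next_state in env[state].items():
            steps += 1
            # Action: record the observed transition.
            transitions.append((state, action, next_state))
            # Verification: enqueue only states not seen before, which
            # avoids redundant re-exploration.
            if next_state not in visited:
                visited.add(next_state)
                frontier.append(next_state)
    return visited, transitions
```

Calling `explore(TOY_UI, "home")` visits every state in the toy graph and records each transition once, because each state is expanded a single time.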

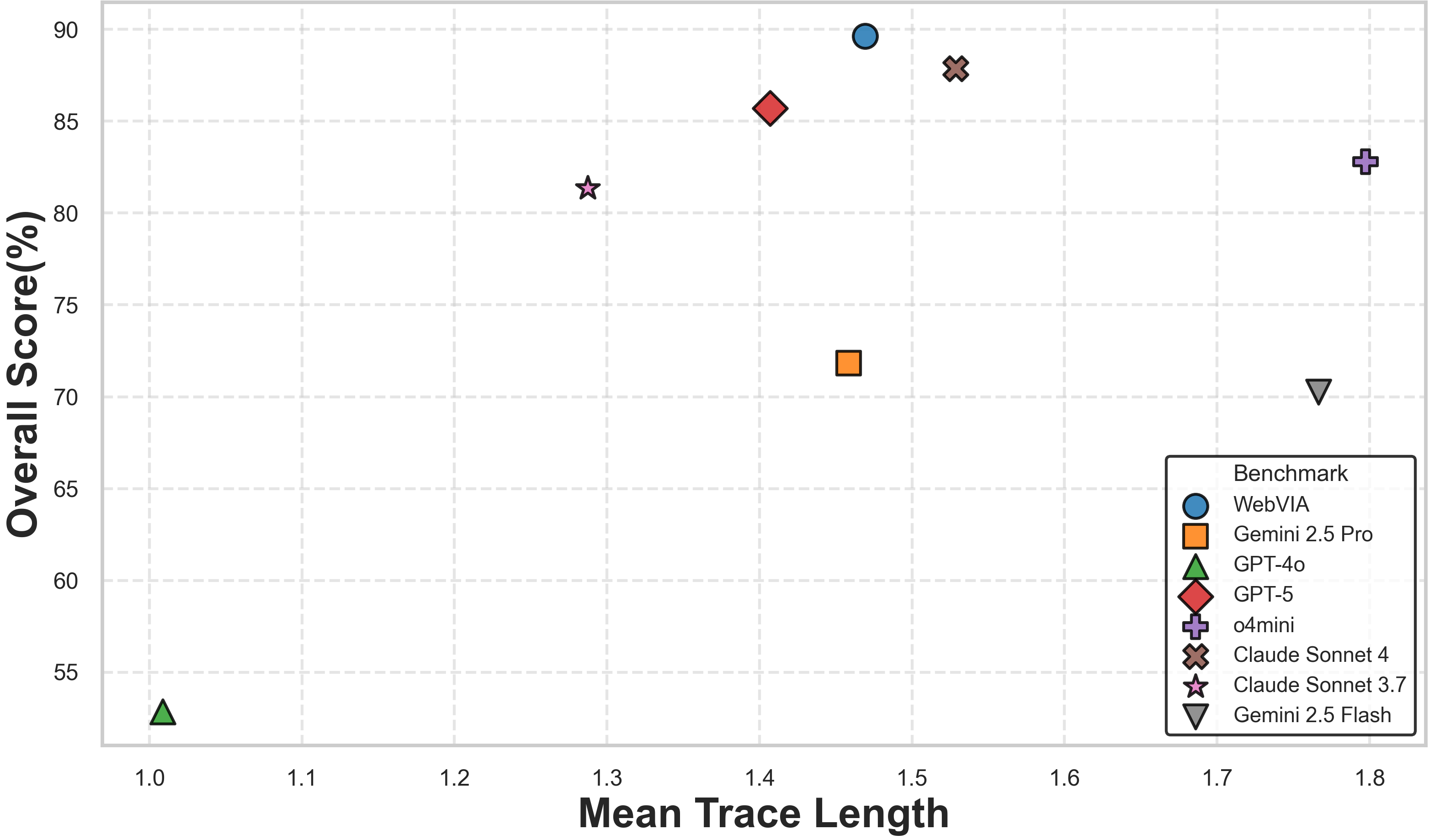

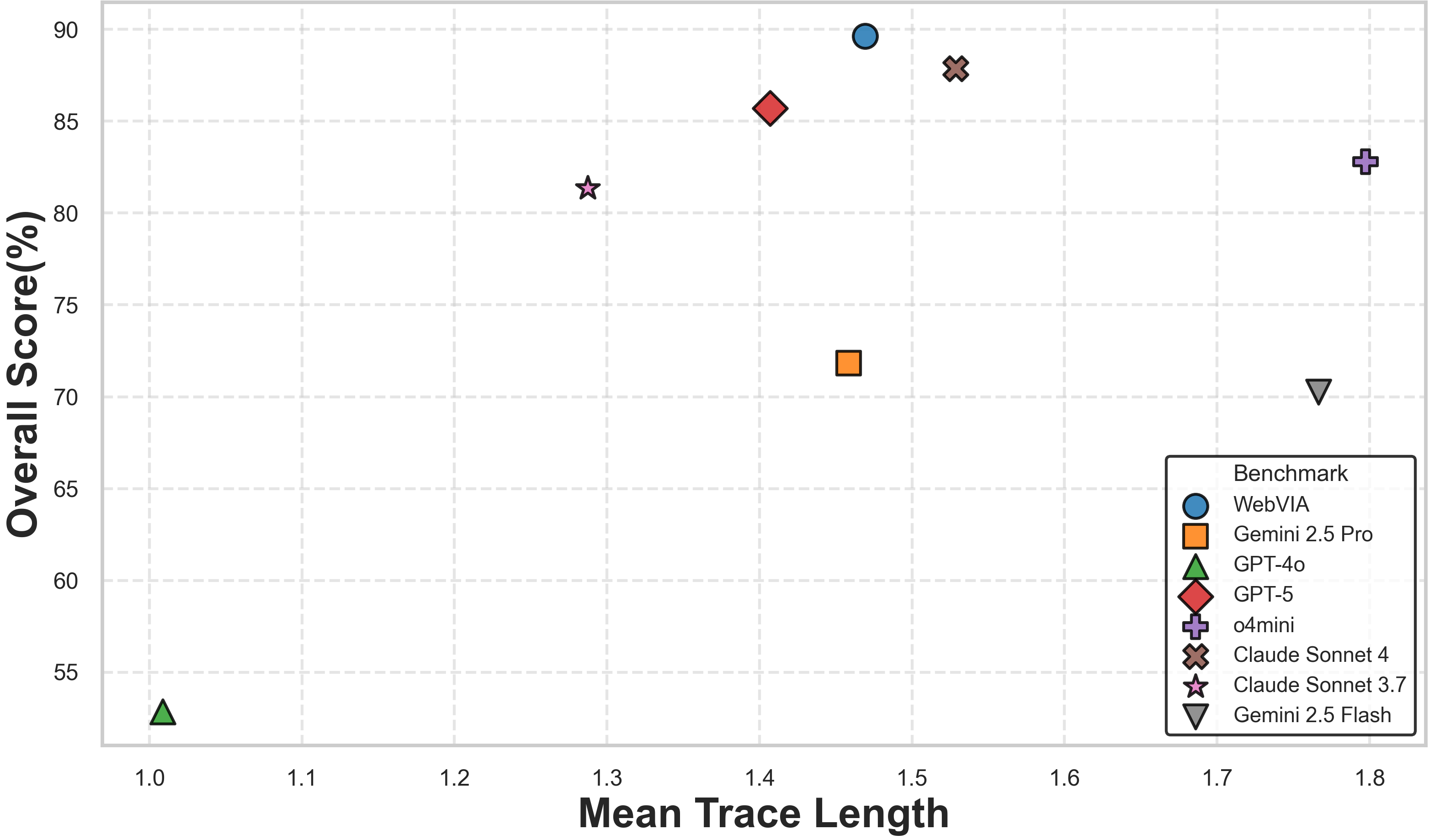

Empirical results show WebVIA-Agent achieving state-of-the-art completeness (93.1%) and correctness (97.7%) on UIExplore-Bench relative to Gemini-2.5-Pro, Claude-Sonnet-4, and other VLMs, while reducing action redundancy. The relationship between mean interaction trace length and exploration performance further suggests improved planning efficiency and exploration quality.
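The summary does not define the two scores, so the following sketch rests on an assumption: completeness is read as the share of ground-truth transitions the agent found, and correctness as the share of reported transitions that are valid. The function name and the example transitions are hypothetical, and the benchmark's actual definitions may differ.

```python
def completeness_and_correctness(predicted, ground_truth):
    """Assumed definitions, for illustration only.

    completeness = share of ground-truth transitions that were found
    correctness  = share of reported transitions that are valid
    """
    predicted, ground_truth = set(predicted), set(ground_truth)
    hits = predicted & ground_truth
    completeness = len(hits) / len(ground_truth) if ground_truth else 1.0
    correctness = len(hits) / len(predicted) if predicted else 1.0
    return completeness, correctness


# Hypothetical example: the agent finds 3 of 4 true transitions and
# reports nothing spurious.
truth = [("home", "click:menu"), ("home", "click:login"),
         ("menu", "click:close"), ("menu", "click:settings")]
found = truth[:3]
scores = completeness_and_correctness(found, truth)
```

Under these assumed definitions, an agent can trade one score against the other: reporting fewer transitions tends to raise correctness and lower completeness.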

Figure 3: Correlation between mean interaction trace length and overall exploration performance across models, with WebVIA-Agent achieving the best tradeoff.

The exploration module is robust in both synthetic and real-world settings, demonstrating zero-shot generalization to unseen interface layouts and behaviors.

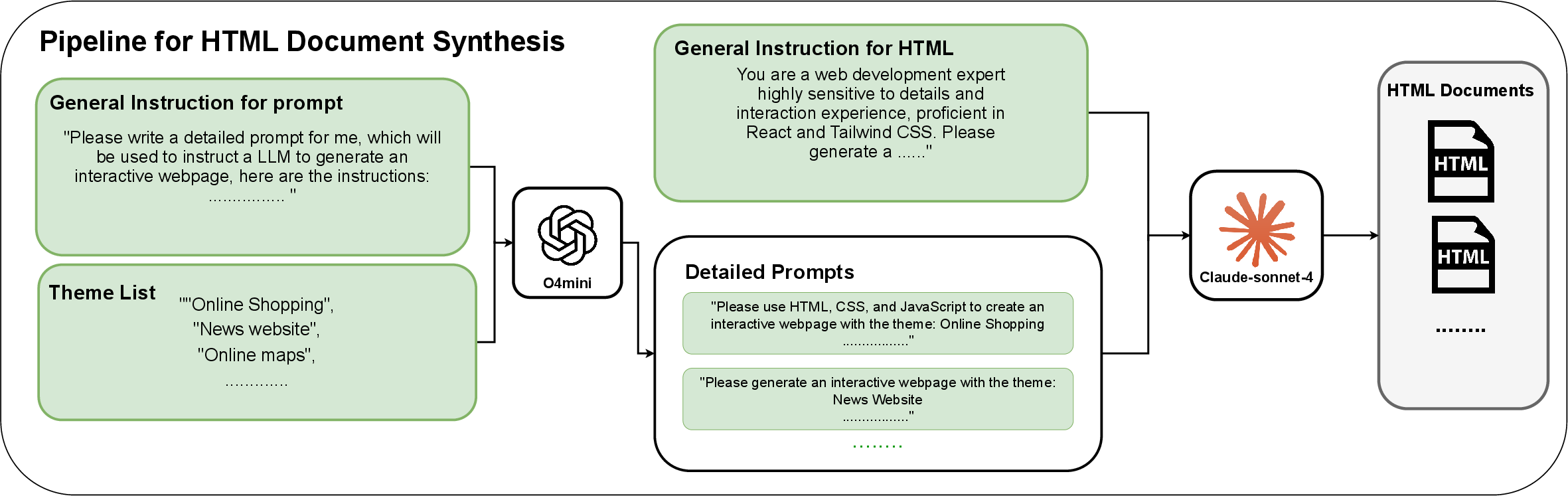

Synthetic Environment and Dataset Construction

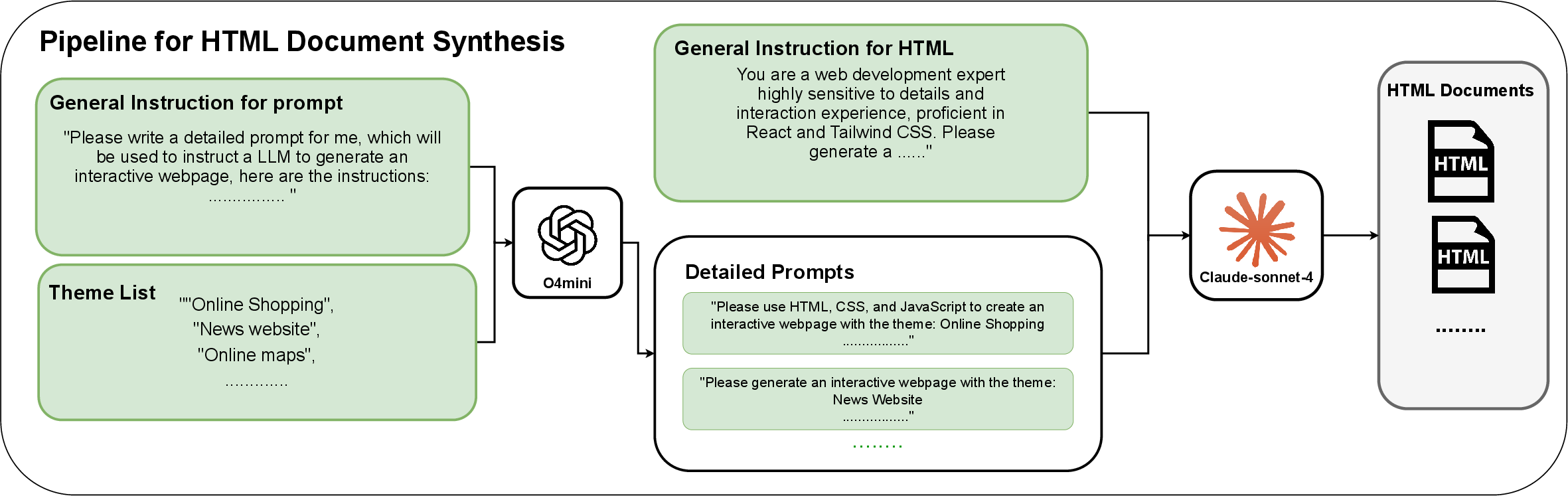

To scale training and evaluation, the authors construct a synthetic HTML environment—WebEnv—using templated task prompts to generate interactive React/Tailwind HTML/CSS/JavaScript documents via o4-mini and Claude-Sonnet-4. This synthetic corpus is used for both agent exploration and the construction of the WebView dataset, which aligns interaction traces (multi-state screenshots and DOM) with programmatically generated ground-truth UIs.
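Templated task prompts of this kind can be generated by filling slots in a fixed string. The sketch below is illustrative only: the template wording, the slot names, and the `DOMAINS` and `WIDGETS` values are hypothetical, and the paper's actual prompts are not reproduced here.

```python
from itertools import product
from string import Template

# Hypothetical template and slot values, invented for this example.
PROMPT = Template(
    "Generate a single-file React/Tailwind page for a $domain app "
    "that includes a working $widget."
)
DOMAINS = ["todo list", "shopping cart"]
WIDGETS = ["modal dialog", "tabbed panel", "dropdown filter"]


def build_prompts(domains=DOMAINS, widgets=WIDGETS):
    """Return one task prompt per (domain, widget) combination."""
    return [
        PROMPT.substitute(domain=d, widget=w)
        for d, w in product(domains, widgets)
    ]
```

Each resulting prompt would then be sent to a code-generating model; crossing slot values is one simple way to control which interactive patterns the corpus covers.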

Figure 4: Overview of the webpage synthesis process in the WebVIA framework.

The dataset enables controlled coverage over diverse interactive patterns, event bindings, and layout hierarchies, providing structured supervision for both agent and UI2Code model training.

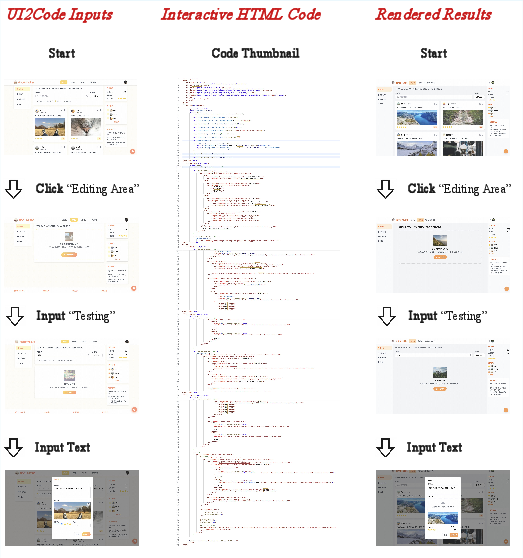

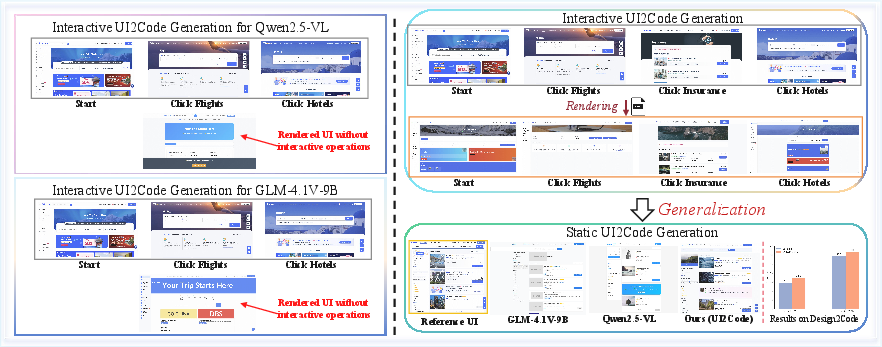

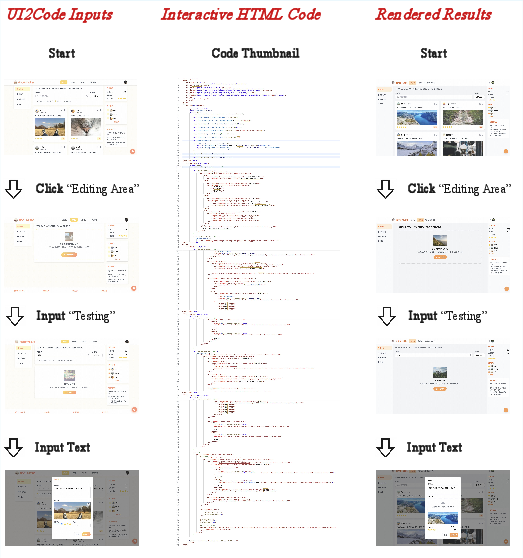

UI2Code Model: Interactive Program Synthesis

WebVIA-UI2Code uses supervised finetuning on WebView data to train two models, Qwen-2.5-VL-7B and GLM-4.1V-9B, that translate multiple UI states and state transitions into executable code. Unlike classical single-screenshot approaches, these models leverage interaction graphs for richer grounding of both static and dynamic interface properties. The input representation consists of multi-state screenshots and event logs, and the output is wrapped in a structured <answer></answer> format, which is intended to promote interpretable reasoning and stable generation.
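One practical benefit of a tagged output format is that the generated code can be pulled out of the model response mechanically. The helper below is a minimal sketch; the function name `extract_answer` and the choice to return `None` on a missing tag pair are assumptions, not part of the paper.

```python
import re

# Non-greedy match so that only the first tag pair is captured;
# DOTALL lets the payload span multiple lines.
ANSWER_RE = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)


def extract_answer(model_output: str):
    """Return the text inside the first <answer>...</answer> pair, or None."""
    match = ANSWER_RE.search(model_output)
    if match is None:
        return None
    return match.group(1).strip()
```

A response without a complete tag pair yields `None`, which a pipeline could treat as a failed generation instead of passing malformed text to the browser.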

Empirical results show that models finetuned on interactive multi-state data reach UIFlow2Code scores (passing ratios) of 75.9 for Qwen and 84.9 for GLM, closely matching large closed-source models such as Claude-Sonnet-4 under behavioral and visual correctness criteria. Notably, standard (non-interactive) UI2Code models trained on static screenshots failed to produce any valid interactive outputs, underscoring the need for multi-state input conditioning.

Functional Validation and End-to-End Assessment

WebVIA includes an automated validation module, which executes synthesized UI code in a headless browser (Playwright) and programmatically verifies the presence and functionality of required transitions in the interaction graph. The assessment pipeline employs action and state verification, with human-in-the-loop sampling to ensure dataset reliability.
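The replay-and-check idea behind such a validator can be sketched independently of any browser. In the code below, `validate`, `FakeDriver`, and the `reset`/`act`/`is_visible` interface are all hypothetical; in a real setup the driver might wrap Playwright calls such as `page.click(selector)` and `page.locator(selector).is_visible()`, but that wiring is left out so the example runs without a browser.

```python
def validate(driver, checks):
    """Replay each action path and check the expected element appears.

    `checks` is a list of (steps, expected_selector) pairs, where `steps`
    is a list of (action, target) tuples replayed from the initial state.
    Returns the per-check results and the fraction that passed.
    """
    results = []
    for steps, expected_selector in checks:
        driver.reset()  # start every check from the initial page state
        try:
            for action, target in steps:
                driver.act(action, target)
            passed = driver.is_visible(expected_selector)
        except Exception:
            # A missing element or failed action counts as a failed check.
            passed = False
        results.append(passed)
    ratio = sum(results) / len(results) if results else 0.0
    return results, ratio


class FakeDriver:
    """In-memory stand-in for a headless browser (demonstration only)."""

    def __init__(self):
        self.visible = set()

    def reset(self):
        self.visible = {"#open"}

    def act(self, action, target):
        if target not in self.visible:
            raise LookupError(f"{target} is not on the page")
        if (action, target) == ("click", "#open"):
            self.visible = {"#modal", "#close"}
        elif (action, target) == ("click", "#close"):
            self.visible = {"#open"}

    def is_visible(self, selector):
        return selector in self.visible
```

Resetting before each check keeps the checks independent, so one broken transition does not mask the results of the others.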

Qualitative Demonstrations

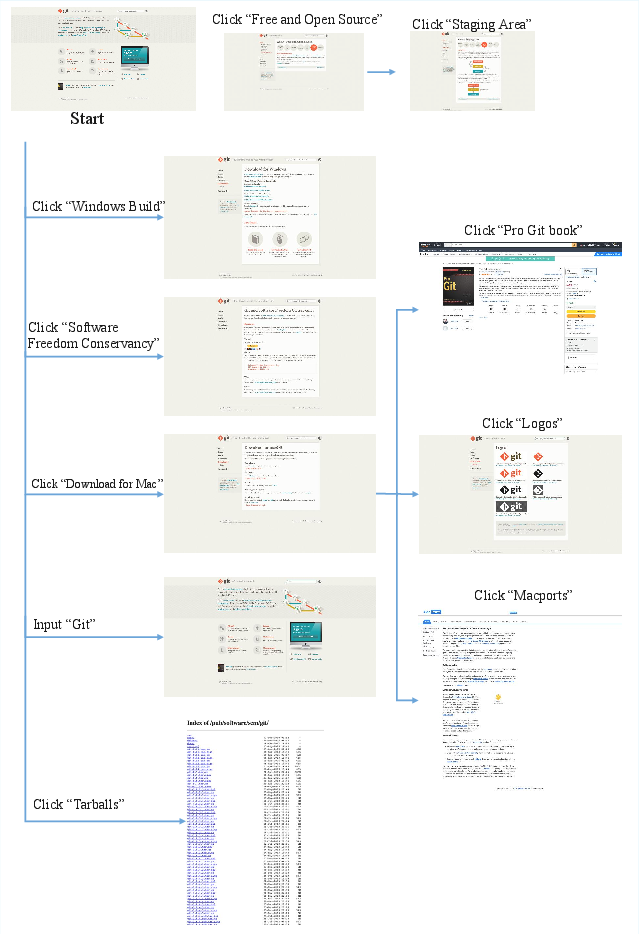

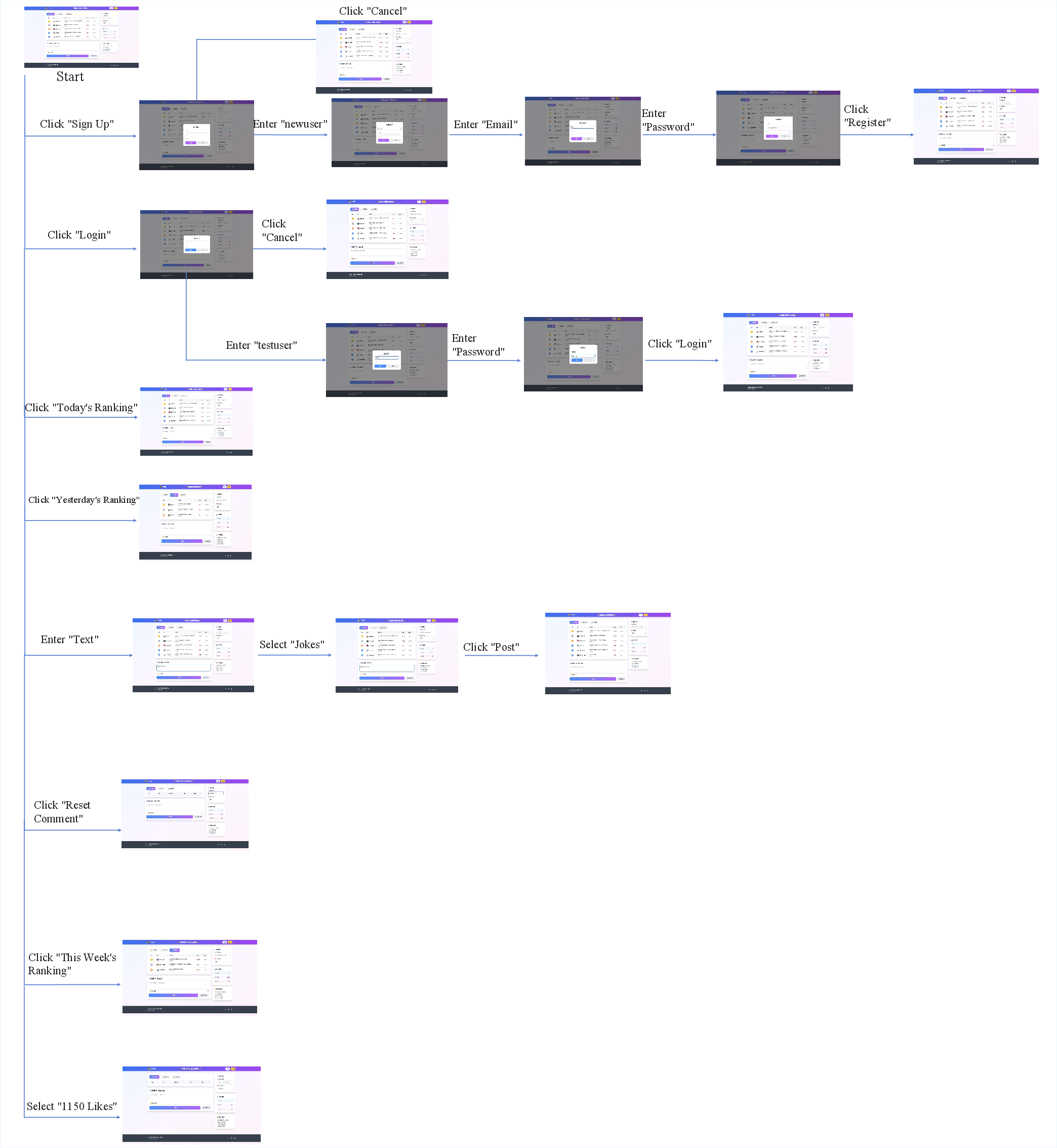

Exploration results are illustrated on a range of synthetic and real-world tasks, showing the agent’s ability to discover and evaluate complex behaviors that it was not explicitly exposed to during training.

Figure 5: Exploration results of WebVIA-Agent on synthesized web environments, showcasing multi-step state transitions.

Figure 6: Exploration results of WebVIA-Agent on real-world web environments, demonstrating generalization beyond procedural data.

In the code generation stage, rendered outputs from WebVIA-UI2Code-GLM consistently reproduce intended interactive functionality and visual detail across diverse scenarios.

Figure 7: Rendered UI2Code demo for WebVIA-UI2Code-GLM demonstrating structurally complete and interactive HTML synthesis.

Limitations and Discussion

Despite substantial improvements, the paper identifies two principal limitations: (1) the action space for exploration is restricted to Click, Enter, and Select, with complex gestures (Drag, Draw) and pixel-level specificity deferred to future work; (2) agent and model training relies primarily on synthetic data, which generalizes well overall but falls short in specialized domains such as calculator UIs or custom function plotting, implying a need for broader data coverage and domain adaptation.

Theoretical and Practical Implications

WebVIA applies the agentic paradigm to interactive UI2Code, organizing generation as a multi-stage pipeline that mirrors realistic web engineering workflows and moving evaluation toward functional correctness rather than pure pixel-level fidelity. By decoupling exploration, code generation, and validation, the framework supports modular benchmarking and can be extended with alternative VLMs or RL-based agents.

Practically, WebVIA supports the synthesis of production-ready, verifiable user interfaces, which is critical for bridging automated prototyping and full-stack deployment pipelines. It also enables scalable creation of benchmarks for future vision-language models tasked with web automation, code synthesis, and multimodal reasoning.

Future Directions

The demonstrated benefits of multi-state reasoning and validation-centric training suggest several directions for subsequent research: (1) extension to additional action types and richer event modeling; (2) domain adaptation to ensure robustness in highly specialized or adversarial UI distributions; (3) application to accessibility verification, automated testing, and interactive documentation generation; (4) leveraging interaction-centric annotation strategies for dataset expansion; and (5) integration with RL or active learning loops for online, data-efficient exploration and synthesis.

Conclusion

WebVIA addresses a long-standing gap in UI-to-Code pipelines by integrating agent-based exploration, behavior-preserving code generation, and systematic functional validation. The framework demonstrates strong empirical gains in both static and interactive UI synthesis, and establishes a scalable paradigm for future work in goal-directed code generation, web automation, and agentic multimodal reasoning. By shifting the evaluation focus from visual correspondence alone to interaction fidelity and executable correctness, WebVIA offers a new basis for benchmarking and paves the way for practical deployment of VLM-based agents in web engineering workflows.