- The paper proposes SCOPE, a distributionally robust framework that jointly estimates covariance and precision matrices using a convex spectral divergence.

- It employs Stein-type nonlinear shrinkage to systematically correct spectral biases and improve estimation accuracy in high-dimensional settings.

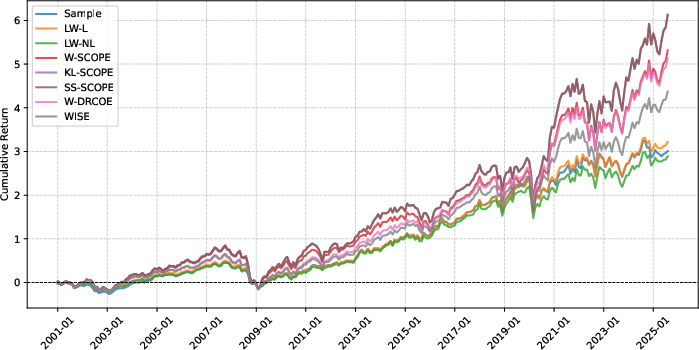

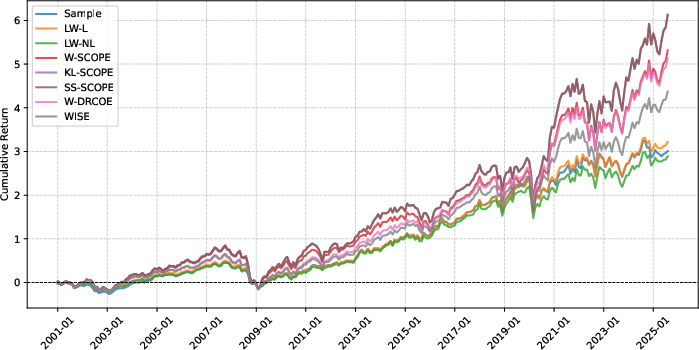

- Experimental results highlight SCOPE's superior performance in applications such as portfolio optimization and hyperspectral image anomaly detection.

SCOPE: Spectral Concentration by Distributionally Robust Joint Covariance-Precision Estimation

Introduction

The paper introduces SCOPE, a distributionally robust framework for jointly estimating covariance and precision matrices. The formulation minimizes the worst-case weighted sum of the Frobenius loss of the covariance estimator and Stein's loss of the precision matrix estimator, where the worst case is taken over all distributions in an ambiguity set centered at the nominal distribution. The size of this ambiguity set is measured by a specially selected family of divergences, called convex spectral divergences.
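To make the two loss terms concrete, here is a minimal NumPy sketch of the Frobenius loss, Stein's loss, and their weighted sum for a fixed reference covariance. The function names, the weight `tau`, the use of the squared Frobenius norm, and the placement of the weight on the Stein term are assumptions made for illustration; the paper's exact objective (including the worst case over the ambiguity set) is not reproduced here.

```python
import numpy as np

def frobenius_loss(sigma_hat, sigma):
    """Squared Frobenius distance between a covariance estimate and a reference."""
    return np.linalg.norm(sigma_hat - sigma, ord="fro") ** 2

def stein_loss(x_hat, sigma):
    """Stein's loss of a precision estimate x_hat against a reference covariance.

    Computes tr(x_hat @ sigma) - log det(x_hat @ sigma) - p, which is zero
    exactly when x_hat equals the inverse of sigma.
    """
    p = sigma.shape[0]
    m = x_hat @ sigma
    _, logdet = np.linalg.slogdet(m)  # numerically stable log-determinant
    return np.trace(m) - logdet - p

def joint_loss(sigma_hat, x_hat, sigma, tau=1.0):
    """Weighted sum of the two losses; tau is a hypothetical weight."""
    return frobenius_loss(sigma_hat, sigma) + tau * stein_loss(x_hat, sigma)

sigma = np.array([[2.0, 0.5], [0.5, 1.0]])
print(joint_loss(sigma, np.linalg.inv(sigma), sigma))  # perfect estimates
print(joint_loss(np.eye(2), np.eye(2), sigma))         # identity as a crude guess
```

Both terms vanish when the estimates are exact and grow as the estimates move away from the reference, which is the behavior the worst-case formulation builds on.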

Estimating covariance and precision matrices is central to many data-driven applications, particularly in high-dimensional settings. Classical estimators such as the sample covariance matrix can be singular and have biased spectra, issues that SCOPE is designed to mitigate. By shrinking the eigenvalues towards a predefined target, SCOPE improves the condition number of the estimated matrices, which keeps downstream tasks that rely on these estimates tractable.
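The singularity and spectral bias of the sample covariance matrix can be seen in a small simulation. The sketch below draws fewer observations than variables from a standard normal distribution, whose true covariance is the identity; the dimensions and seed are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
p, n = 50, 20                      # more variables than observations
X = rng.standard_normal((n, p))    # rows are i.i.d. samples, true covariance = I_p

S = np.cov(X, rowvar=False)        # p x p sample covariance (mean-centered)
eigvals = np.linalg.eigvalsh(S)    # eigenvalues in ascending order

rank = np.linalg.matrix_rank(S)
print("rank of S:", rank, "out of", p)        # rank cannot exceed n - 1
print("smallest eigenvalue:", eigvals[0])     # numerically zero, so S is singular
print("largest eigenvalue:", eigvals[-1])     # overshoots the true value of 1
```

Every true eigenvalue equals 1 here, yet the sample spectrum is spread out: the small eigenvalues collapse to zero and the large ones are inflated. This is the kind of spectral bias that eigenvalue shrinkage aims to correct.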

Theoretical Framework and Methodology

Convex Spectral Divergence

The paper defines a divergence based not only on matrix discrepancies but also on the spectral properties of the matrices, namely their eigenvalues. A convex spectral divergence is characterized by orthogonal equivariance and by its reliance on generator functions that define the divergence over positive semi-definite matrices.
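As an illustration of a divergence driven by a scalar generator and by eigenvalues only, the sketch below uses the familiar generator phi(t) = t - log(t) - 1 applied to the eigenvalues of B^{-1}A, which recovers the Gaussian Kullback-Leibler-type divergence up to a constant factor. This is one well-known example and is not claimed to be the paper's definition; the function names are hypothetical, and the example assumes strictly positive definite inputs.

```python
import numpy as np

def generator_kl(t):
    """phi(t) = t - log(t) - 1: convex on t > 0, with minimum 0 at t = 1."""
    return t - np.log(t) - 1.0

def spectral_divergence(a, b, generator=generator_kl):
    """Sum of generator values over the eigenvalues of b^{-1} a.

    Assumes a and b are symmetric positive definite (illustrative sketch).
    """
    chol = np.linalg.cholesky(b)                 # b = chol @ chol.T
    half = np.linalg.solve(chol, a)              # chol^{-1} a
    whitened = np.linalg.solve(chol, half.T).T   # chol^{-1} a chol^{-T}
    eigvals = np.linalg.eigvalsh((whitened + whitened.T) / 2)
    return float(np.sum(generator(eigvals)))

rng = np.random.default_rng(1)
g, h = rng.standard_normal((4, 4)), rng.standard_normal((4, 4))
a = g @ g.T + np.eye(4)                          # random positive definite matrices
b = h @ h.T + np.eye(4)
q, _ = np.linalg.qr(rng.standard_normal((4, 4))) # random orthogonal matrix

d_original = spectral_divergence(a, b)
d_rotated = spectral_divergence(q @ a @ q.T, q @ b @ q.T)
print(d_original, d_rotated)                     # agree up to round-off
```

Rotating both arguments by the same orthogonal matrix leaves the value unchanged, because only the eigenvalues enter the computation. Swapping in a different convex generator changes the divergence without changing this structure.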

Under stated regularity conditions, the SCOPE model is shown to reduce to a convex optimization problem despite the presence of bilinear constraints. The key is to choose spectral divergence functions that admit tractable reformulations. Under these spectral assumptions, the solution of the optimization problem is quasi-analytical, which enables efficient computation in practice.

Nonlinear Shrinkage

The estimators derived in the SCOPE framework belong to the class of Stein-type nonlinear shrinkage estimators. Such estimators apply nonlinear mappings to the eigenvalues of the sample covariance matrix, systematically correcting spectral bias. Critically, both large and small eigenvalues are shrunk towards a central value, which addresses both estimation bias and numerical stability.
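The general recipe behind eigenvalue shrinkage can be sketched as follows: eigendecompose the sample covariance, apply a nonlinear map to the eigenvalues, and rebuild the matrix with the original eigenvectors. The map `toy_map` below, along with its `target` and `alpha` parameters, is a hypothetical stand-in chosen only because it pulls every eigenvalue towards the target; it is not the SCOPE shrinkage formula. Taking the precision estimate to be the inverse of the covariance estimate is also an assumption of this sketch.

```python
import numpy as np

def toy_map(eigvals, target=1.0, alpha=0.5):
    """Hypothetical nonlinear map: moves each eigenvalue towards `target`."""
    eigvals = np.clip(eigvals, 0.0, None)   # remove tiny negative round-off
    return np.sqrt((1 - alpha) * eigvals**2 + alpha * target**2)

def shrink_eigenvalues(sample_cov, shrink_map):
    """Apply shrink_map to the spectrum while keeping the sample eigenvectors."""
    eigvals, eigvecs = np.linalg.eigh(sample_cov)
    new_vals = shrink_map(eigvals)
    cov_hat = (eigvecs * new_vals) @ eigvecs.T    # V diag(new_vals) V^T
    prec_hat = (eigvecs / new_vals) @ eigvecs.T   # V diag(1 / new_vals) V^T
    return cov_hat, prec_hat

rng = np.random.default_rng(0)
X = rng.standard_normal((20, 50))                 # n = 20 samples, p = 50 variables
S = np.cov(X, rowvar=False)                       # singular sample covariance

cov_hat, prec_hat = shrink_eigenvalues(S, toy_map)
print("condition number before:", np.linalg.cond(S))
print("condition number after: ", np.linalg.cond(cov_hat))
```

Because the map lifts zero eigenvalues to a positive value and damps large ones, the shrunk estimate is invertible and far better conditioned than the raw sample covariance.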

The paper details conditions and parameter-tuning schemes for choosing the shrinkage target and intensity, supported by both theoretical guarantees and empirical validation. In particular, the proposed tuning method predicts that the optimal radius decreases with the square of the sample size, which supports robust estimation even in data-scarce scenarios.

Results and Numerical Experiments

SCOPE's theoretical results are supported by extensive experiments on high-dimensional statistical tasks. The numerical results show improvements in spectral bias correction, estimation error, and computational feasibility over existing estimators such as the Ledoit-Wolf linear and nonlinear shrinkage estimators.

Notably, in practical applications such as hyperspectral image anomaly detection and minimum-variance portfolio selection, SCOPE achieves higher sensitivity and specificity and better portfolio returns, underscoring its value in real-world use.

Figure 1: Cumulative returns of the portfolios induced by different estimators.
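To show where a precision matrix estimate enters the portfolio application, here is a minimal sketch of the textbook global minimum-variance portfolio, whose weights are proportional to the precision matrix times a vector of ones. It assumes a fully invested portfolio with no other constraints, which may differ from the paper's experimental setup; the function name is hypothetical.

```python
import numpy as np

def min_variance_weights(precision):
    """Global minimum-variance weights: precision @ 1, normalized to sum to 1."""
    ones = np.ones(precision.shape[0])
    raw = precision @ ones
    return raw / (ones @ raw)

# Two uncorrelated assets with variances 1 and 4.
covariance = np.diag([1.0, 4.0])
weights = min_variance_weights(np.linalg.inv(covariance))
print(weights)  # → [0.8 0.2]
```

The weights depend on the data only through the precision matrix, so an ill-conditioned or biased estimate translates directly into unstable allocations. This is why a well-conditioned precision estimator matters for this task.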

Conclusion

SCOPE represents a significant step forward in robust covariance and precision matrix estimation. Its foundation in distributionally robust optimization and spectral divergence advances both theoretical understanding and practical capability for handling high-dimensional data with superior stability and accuracy.

Future work could explore adaptive parameter tuning, for example by empirically estimating the divergence constants under real-data constraints, which would broaden the framework's practical applicability. More broadly, SCOPE points to further developments in applications that require accurate covariance estimation.