Evolution Strategies at the Hyperscale

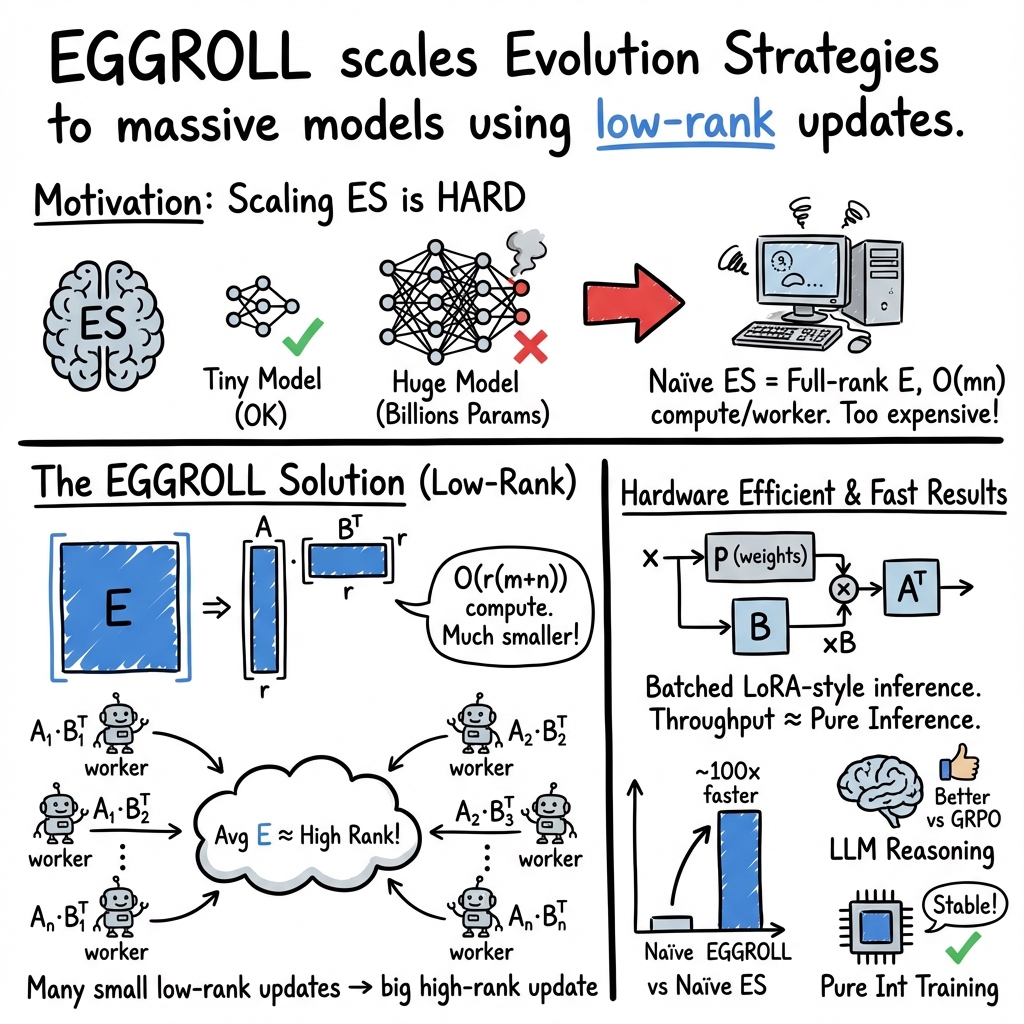

Abstract: We introduce Evolution Guided General Optimization via Low-rank Learning (EGGROLL), an evolution strategies (ES) algorithm designed to scale backprop-free optimization to large population sizes for modern large neural network architectures with billions of parameters. ES is a set of powerful blackbox optimisation methods that can handle non-differentiable or noisy objectives with excellent scaling potential through parallelisation. Naïve ES becomes prohibitively expensive at scale due to the computational and memory costs associated with generating matrix perturbations $E\in\mathbb{R}^{m\times n}$ and the batched matrix multiplications needed to compute per-member forward passes. EGGROLL overcomes these bottlenecks by generating random matrices $A\in \mathbb{R}^{m\times r},\ B\in \mathbb{R}^{n\times r}$ with $r\ll \min(m,n)$ to form a low-rank matrix perturbation $AB^\top$ that is used in place of the full-rank perturbation $E$. As the overall update is an average across a population of $N$ workers, this still results in a high-rank update but with significant memory and computation savings, reducing the auxiliary storage from $mn$ to $r(m+n)$ per layer and the cost of a forward pass from $\mathcal{O}(mn)$ to $\mathcal{O}(r(m+n))$ when compared to full-rank ES. A theoretical analysis reveals our low-rank update converges to the full-rank update at a fast $\mathcal{O}\left(\frac{1}{r}\right)$ rate. Our experiments show that (1) EGGROLL does not compromise the performance of ES in tabula-rasa RL settings, despite being faster, (2) it is competitive with GRPO as a technique for improving LLM reasoning, and (3) EGGROLL enables stable pre-training of nonlinear recurrent LLMs that operate purely in integer datatypes.

Explain it Like I'm 14

Overview of the paper

What’s the main idea?

This paper introduces a new way to train very large AI models called EGGROLL. EGGROLL is based on “evolution strategies” (ES), which means it improves a model by trying lots of small changes, seeing which changes work best, and then keeping the good ones. The big challenge with ES on huge models is that it’s slow and uses a lot of memory. EGGROLL fixes this by making the changes in a smart, compact way so you can try many of them at once, much faster and with much less memory.

Key questions the researchers asked

In simple terms, what did they want to know?

- Can we make evolution strategies fast enough to train models with billions of parameters?

- Can we do this without using backpropagation (the usual method that needs gradients)?

- Will this faster method still work well on different tasks, like training LLMs and solving reinforcement learning problems?

- How close is this “compact change” method to the full, expensive version in theory?

How EGGROLL works (methods and analogies)

First, what are Evolution Strategies (ES)?

Imagine you’re trying to improve a recipe. Instead of calculating exactly how each ingredient affects the taste (like gradients), you make lots of small random tweaks to the ingredients, taste the result (fitness), and keep the tweaks that made it better. That’s ES: try many variations, score them, and average the best directions to update the recipe.
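The recipe analogy can be sketched in a few lines of NumPy. This is a minimal illustration of a generic ES loop, not the paper's implementation: the function name `es_step`, the toy fitness, and all hyperparameter values are assumptions chosen for the example.

```python
import numpy as np

def es_step(theta, fitness, rng, pop_size=64, sigma=0.1, lr=0.05):
    """One vanilla ES update: perturb, score, then move along the score-weighted noise."""
    noise = rng.standard_normal((pop_size, theta.size))        # one random tweak per member
    scores = np.array([fitness(theta + sigma * e) for e in noise])
    scores = (scores - scores.mean()) / (scores.std() + 1e-8)  # normalise the scores
    # Score-weighted average of the tweaks (the usual 1/sigma factor is folded into lr here).
    direction = noise.T @ scores / pop_size
    return theta + lr * direction

# Toy "recipe": fitness is highest when theta matches a hidden target (hypothetical example).
target = np.array([1.0, -2.0, 0.5])
fitness = lambda x: -np.sum((x - target) ** 2)

rng = np.random.default_rng(0)
theta = np.zeros(3)
for _ in range(300):
    theta = es_step(theta, fitness, rng)

print("distance to target:", np.linalg.norm(theta - target))
```

No gradient of `fitness` is ever computed; the update uses only the scalar scores of the perturbed copies.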

The obstacle with big models

Modern AI models are like gigantic spreadsheets filled with numbers (weights). A basic ES tweak touches every cell, which:

- Takes huge memory to store each full tweak.

- Requires lots of compute to test each tweak. On billion-parameter models, this becomes painfully slow.

EGGROLL’s key idea: “low‑rank” tweaks

Instead of changing the entire big matrix directly, EGGROLL builds a large change from two skinny matrices:

- Make two small matrices: A (tall-and-skinny) and B (tall-and-skinny).

- Multiply them as A × Bᵀ to form a big “low‑rank” change.

Analogy: Instead of painting a mural by adjusting each pixel, use two simple stencils that, when combined, create a large pattern. You only store the stencils, not the full mural changes.

Why this helps:

- Memory drops from storing m×n numbers to storing r×(m+n), where r is small.

- Compute drops from doing m×n work to roughly r×(m+n).

- You can run many “taste tests” (population members) in parallel like a big team, almost as fast as regular batch inference.
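The memory and compute savings listed above can be checked on a single linear layer. The sketch below is illustrative only: the layer sizes, the noise scale `sigma`, and the variable names are assumptions, and the paper's exact scaling of the perturbation is not reproduced here.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, r, batch = 512, 256, 2, 8      # layer maps n inputs to m outputs; rank r is tiny
sigma = 0.01                         # assumed noise scale for the example

W = rng.standard_normal((m, n))      # shared base weights
A = rng.standard_normal((m, r))      # tall-and-skinny factor
B = rng.standard_normal((n, r))      # tall-and-skinny factor
x = rng.standard_normal((batch, n))  # inputs seen by one population member

# Naive route: materialise the full m x n perturbation, then multiply.
y_full = x @ (W + sigma * A @ B.T).T

# Low-rank route: never form A @ B.T; apply the two skinny factors in turn.
y_lowrank = x @ W.T + sigma * (x @ B) @ A.T

full_storage = m * n                 # numbers stored for a full-rank tweak
lowrank_storage = r * (m + n)        # numbers stored for the two factors
print(np.allclose(y_full, y_lowrank), full_storage, lowrank_storage)
```

The term `x @ W.T` does not depend on the perturbation, which is why it can be shared across population members while only the cheap `(x @ B) @ A.T` correction differs per member.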

Technical notes explained simply:

- “Rank” is like how many stencils you use at once. Even if each worker’s tweak is low‑rank (small number of stencils), EGGROLL averages many workers’ tweaks. Together, the final update can be high‑rank (rich and detailed), so you aren’t stuck with only simple changes.

- They use a smart random number generator to recreate the noise when needed, so they don’t have to store all tweaks in memory.

- On GPUs, EGGROLL shares most of the heavy computation and applies the small tweaks efficiently, similar to how LoRA speeds up fine‑tuning.
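Two of the notes above, the high-rank average and the regenerated noise, can be illustrated together. This is a sketch under stated assumptions: `member_noise` is a hypothetical helper, the fitness values are random stand-ins, and NumPy's Philox bit generator is used here simply as one readily available counter-based RNG, not as the generator the authors use.

```python
import numpy as np

def member_noise(seed, member, m, n):
    """Rebuild a member's rank-1 factors from (seed, member) alone; nothing is stored."""
    rng = np.random.Generator(np.random.Philox(seed=[seed, member]))
    return rng.standard_normal(m), rng.standard_normal(n)

m, n, N, seed = 32, 24, 64, 0
fitness = np.random.default_rng(1).standard_normal(N)  # stand-in scalar scores

update = np.zeros((m, n))
for i in range(N):
    a, b = member_noise(seed, i, m, n)     # regenerated on demand
    update += fitness[i] * np.outer(a, b)  # each term has rank 1
update /= N

rank_single = np.linalg.matrix_rank(np.outer(*member_noise(seed, 0, m, n)))
rank_update = np.linalg.matrix_rank(update)
print(rank_single, rank_update)
```

Each member contributes only a rank-1 tweak, yet the fitness-weighted average across 64 members fills the whole 32 x 24 matrix, and the only state needed to rebuild any tweak is the pair `(seed, member)`.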

The theory in plain language

The authors prove that if you increase the number of stencils (the “rank” r), the EGGROLL update gets quickly closer to the full, expensive ES update. The error shrinks about as fast as 1/r. That means doubling r roughly halves the gap. Surprisingly, even very small r (like r=1) already works well in practice.
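A related, much simpler fact gives some intuition for a 1/r rate. It is not the paper's theorem: assuming Gaussian factors and a perturbation scaled as (1/√r)·A·Bᵀ, each entry is a sum of r products of independent standard normals, and its excess kurtosis (a measure of how non-Gaussian it is) equals 6/r. The sketch below estimates this numerically; the function name and sample count are assumptions.

```python
import numpy as np

def entry_excess_kurtosis(r, samples=400_000, seed=0):
    """Estimate the excess kurtosis of one entry of (1/sqrt(r)) * A @ B.T."""
    rng = np.random.default_rng(seed)
    a = rng.standard_normal((samples, r))
    b = rng.standard_normal((samples, r))
    e = (a * b).sum(axis=1) / np.sqrt(r)   # one entry: scaled sum of r products
    return np.mean(e**4) / np.mean(e**2) ** 2 - 3.0

for r in (1, 2, 4, 8):
    # Columns: rank, Monte Carlo estimate, exact value 6 / r
    print(r, round(entry_excess_kurtosis(r), 2), 6 / r)
```

Doubling r halves the exact value 6/r, mirroring the "doubling r roughly halves the gap" reading of the theoretical result.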

Main findings and why they matter

The core results are:

- EGGROLL runs much faster than regular ES on huge models, reaching speeds close to plain inference. This is a big deal because it means you can try many variations without slowing everything down.

- It works well on reinforcement learning tasks. Across many environments, EGGROLL is often as good as or better than a common ES baseline, while being far more efficient.

- It’s competitive for improving LLM reasoning. On tasks like countdown and GSM8K, EGGROLL matched or beat a strong method called GRPO under the same hardware and time.

- It enables “pure integer” training of a custom RNN LLM. They trained a model using only integers (no floating point), which can be faster and more energy-efficient on certain hardware. Training was stable, and they scaled the population size extremely large (up to 262,144) on a single GPU. The best test loss reached 3.41 bits/byte on a character-level dataset.

Why this is important:

- Backpropagation can struggle with noisy, non-differentiable, or discrete systems. ES doesn’t need gradients, and EGGROLL makes ES practical at hyperscale.

- Using low-precision integers can save energy and run faster. EGGROLL is naturally compatible with the same datatypes used at inference.

- The theoretical guarantee gives confidence that the compact tweaks really do approximate the full method well as you increase rank.

Implications and potential impact

What could this change in AI practice?

- Training huge models without backprop: EGGROLL makes it feasible to optimize models where gradients are unavailable, unreliable, or expensive.

- Faster and cheaper experimentation: Because it’s highly parallel and memory-light, you can explore many variations at once on modern GPU clusters.

- New kinds of systems: EGGROLL could help train “neurosymbolic” systems that blend neural networks with non-differentiable symbolic tools (like calculators or memory modules) end-to-end.

- Hardware-friendly AI: Pure-integer training and low-rank computation can reduce energy use and cost, aligning model training with efficient inference hardware.

Bottom line

EGGROLL shows that evolution strategies, once considered too heavy for giant models, can be redesigned to work at hyperscale. With smart low‑rank tweaks, careful GPU batching, and solid theory, it brings backprop‑free optimization into the mainstream for large, complex, and even non-differentiable AI systems.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, focused list of what remains missing, uncertain, or unexplored, framed to be actionable for future work.

- Convergence with approximate score functions: No end-to-end convergence guarantees for EGGROLL when using the Gaussian score approximation; specify conditions (e.g., step-size, noise scale, Lipschitz/smoothness of f) under which the algorithm provably converges in non-convex settings.

- Finite-rank bias/variance characterization: Quantify the bias and variance of the gradient estimator for small ranks (especially r=1) and their effect on optimization speed and final performance; derive or measure correction terms or control variates to reduce bias.

- Rank selection and scheduling: Systematically ablate r beyond r=1 (per layer and globally), study adaptive rank schedules during training, and determine principled criteria to increase/decrease r based on estimator quality or progress.

- Layer-wise ranks and scaling: Explore per-layer rank choices and layer-wise noise scales; assess whether sensitive or large layers benefit from higher r while others remain at r=1.

- Covariance adaptation: Current method assumes isotropic noise; investigate anisotropic covariance (e.g., low-rank plus diagonal, Fisher-informed, or CMA-ES–style adaptation) and its impact on sample efficiency and stability.

- Variance-reduction techniques: Evaluate antithetic/mirrored sampling, common random numbers, rank-based fitness shaping, and control variates within EGGROLL to lower estimator variance at fixed N and r.

- Correlation from batched evaluation: Analyze whether sharing base activations across population members induces correlation between perturbations or fitness estimates; quantify its impact and propose decorrelation or reweighting schemes.

- Noise scale scheduling: Noise variance σ is fixed in theory/implementation; study adaptive σ schedules (e.g., annealing, trust-region criteria) and joint tuning with learning rate for improved stability and efficiency.

- Theory beyond bounded fitness: Theoretical results require bounded f(M); extend to unbounded or heavy-tailed reward distributions common in RL/LLMs, including tail-robust bounds and stability conditions.

- Non-Gaussian/heteroscedastic perturbations: Extend analysis to non-Gaussian p(A), p(B), anisotropic ε, or heavy-tailed noise; determine when Gaussian-score approximation remains accurate.

- Multi-node scaling limits: Provide empirical scaling studies for multi-node clusters (communication of scalar fitnesses, synchronization costs, stragglers, fault tolerance) at very large N; identify bottlenecks and remedies.

- Memory/compute accounting: Report absolute memory footprints and FLOP overheads (not only normalized throughput) across r, N, and model sizes; break down costs from RNG reconstruction, A/B generation, and batched kernels.

- Applicability beyond linear layers: Detail how low-rank perturbations are applied to non-linear parameterized modules (e.g., layernorm scales/biases, embeddings, convolutions); quantify benefits/costs per operator type.

- Embedding and convolutional models: Test EGGROLL on convolutional backbones and large embedding-heavy models (vision, speech, recommendation) to validate generality and identify operator-specific optimizations.

- Integer-only perturbations: Clarify whether A, B, and updates are sampled/applied in integer space; study numerical stability, saturation effects, and portability to other low-precision dtypes (int4, FP8, bfloat16).

- Data/sample efficiency in pretraining: Quantitatively compare token/sample and compute efficiency versus backprop baselines on the same architecture; report perplexity vs tokens and compute-normalized performance.

- Scaling the EGG model: Validate integer-only EGG (nonlinear RNN) at larger scales (deeper, wider, subword vocabularies) and on standard LM benchmarks; assess whether results carry over from character-level minipile.

- LLM finetuning fairness and breadth: Ensure equalized sample/compute budgets versus GRPO; expand benchmarks (e.g., MATH, BBH, GSM-hard), include safety/alignment metrics, and analyze robustness to prompt variations.

- Scoring function design for LLM tasks: Compare the proposed z-score–based fitness to alternatives (pairwise preference, listwise, calibration-aware); study sensitivity to global vs per-question variance, batch composition, and noisy evaluation.

- RL failure modes: Diagnose environments where EGGROLL underperforms OpenES; link performance to task properties (sparsity, stochasticity, deceptive rewards) and propose task-specific modifications (e.g., reward shaping, partial rollouts).

- Truncated ES with RNN hidden state: Analyze bias introduced by carrying hidden state between ES updates (truncation at document boundaries); compare with full unrolls or persistent ES and propose corrections.

- Integration with orthogonal ES advances: Combine EGGROLL with persistent ES, online updates, rank-based selection, evolution paths, or CMA-ES mechanisms; quantify additive benefits and interactions.

- Adaptive population sizing: Develop criteria to adapt N during training (e.g., based on signal-to-noise ratio or improvement per token) and characterize regimes where Nr < min(m,n) is insufficient.

- Cross-layer/shared subspaces: Explore sharing A or B across layers, block-diagonal or structured low-rank perturbations, and subspace reuse to further reduce memory and improve generalization.

- Sensitivity analyses and tuning recipes: Provide comprehensive sensitivity studies for learning rate, σ, rank r, population size N, and fitness shaping; release practical tuning guidelines.

- Robustness to distribution shift: Evaluate how EGGROLL-trained models (RL and LLMs) behave under shifts in tasks/prompts; assess generalization, stability, and catastrophic degradation risks.

- Energy and environmental impact: Measure real power/energy per training token/episode and CO2 emissions versus backprop and other ES baselines, especially under int8 claims.

- Security/privacy in distributed ES: Assess potential leakage via scalar fitness communication; explore differential privacy or secure aggregation adaptations suitable for EGGROLL.

- Reproducibility and ablations: Release full training configs, seeds, and per-task ablations (r, N, σ, scoring variants); quantify seed variance at large scales to establish robustness of reported gains.
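Antithetic (mirrored) sampling, named in the variance-reduction item above, is a generic ES technique and is not claimed here to be part of EGGROLL. The sketch below compares a plain and a mirrored estimator on a toy objective with a large constant offset; the function names, the objective, and all values are assumptions for illustration. The mirrored estimator uses two evaluations per noise sample.

```python
import numpy as np

def plain_estimate(f, theta, eps, sigma):
    """Score-function estimate using one evaluation per noise sample."""
    return np.mean([f(theta + sigma * e) * e for e in eps], axis=0) / sigma

def antithetic_estimate(f, theta, eps, sigma):
    """Mirrored estimate: evaluate +e and -e, so constant offsets cancel."""
    diffs = [(f(theta + sigma * e) - f(theta - sigma * e)) * e for e in eps]
    return np.mean(diffs, axis=0) / (2 * sigma)

g = np.array([1.0, -1.0])
f = lambda x: 100.0 + x @ g          # large offset, small linear signal
theta, sigma = np.zeros(2), 0.1
rng = np.random.default_rng(0)

plain, mirrored = [], []
for _ in range(200):
    eps = rng.standard_normal((16, 2))
    plain.append(plain_estimate(f, theta, eps, sigma))
    mirrored.append(antithetic_estimate(f, theta, eps, sigma))

plain_var = np.var(plain, axis=0).sum()
mirrored_var = np.var(mirrored, axis=0).sum()
print(plain_var, mirrored_var)
```

Whether a similar gain carries over to low-rank perturbations at fixed N and r is exactly the open question the list item raises.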

Glossary

- Absolutely continuous: A property of distributions having a density with respect to Lebesgue measure. "zero-mean, symmetric, absolutely continuous (i.e., it has a density) distribution"

- Arithmetic intensity: The ratio of computations to memory accesses; low values indicate poor utilization on GPUs. "yielding poor arithmetic intensity."

- Batched LoRA inference: Applying multiple low-rank adapter updates in parallel during inference for efficiency. "This decomposition is key to efficient batched LoRA inference, such as those used by vLLM"

- Batched matrix multiplications: Performing many matrix multiplications in parallel as a single batched operation. "and the batched matrix multiplications needed to compute per-member forward passes."

- Blackbox optimisation: Optimization methods that do not require gradients or internal model details. "ES is a set of powerful blackbox optimisation methods"

- CMA-ES: Covariance Matrix Adaptation Evolution Strategy, an advanced ES algorithm adapting covariance of the search distribution. "singular values that are trained using CMA-ES."

- Counter-based deterministic random number generator (RNG): An RNG where outputs are generated from counters, enabling reproducible, stateless sampling. "we use a counter-based deterministic random number generator (RNG) (Salmon et al., 2011; Bradbury et al., 2018) to reconstruct noise on demand"

- Edgeworth expansion: An asymptotic series improving Gaussian approximations using cumulants beyond the CLT. "we make an Edgeworth expansion (Bhattacharya & Ranga Rao, 1976) of the density p(ZT)"

- Frobenius norm: A matrix norm equal to the square root of the sum of squared entries. "we use the Frobenius norm:"

- Gaussian approximate score function: An approximation to the score function assuming a Gaussian limit of a sum of random matrices. "we use a Gaussian approximate score function, which is obtained from taking the limit $r \to \infty$."

- Gaussian matrix ES: Evolution Strategies where search distributions over matrices are Gaussian. "Gaussian matrix ES methods optimise the objective $J(\mu)$ by generating search matrices"

- Gaussian matrix policy: A Gaussian distribution defined over matrices used as the search policy in ES. "Using the Gaussian matrix policy $\pi(M|\mu) = \mathcal{N}(\mu, I_m\sigma^2, I_n\sigma^2)$"

- GRPO: Group Relative Policy Optimization, a reinforcement-learning fine-tuning method for LLMs. "it is competitive with GRPO as a technique for improving LLM reasoning"

- Isotropic matrix Gaussian distribution: A matrix normal distribution with identical variance in all directions. "For isotropic matrix Gaussian distributions with covariance matrices $U = \sigma^2 I_m$ and $V = \sigma^2 I_n$"

- Kronecker product: An operation on two matrices producing a block matrix; used to express covariances on vec-operator spaces. "where $\otimes$ denotes the Kronecker product."

- KV cache: The key-value cache used by transformers to store attention states across tokens. "any memory otherwise spent on the KV cache can be used to evaluate population members."

- LoRA: Low-Rank Adaptation; method to adapt large models by training small low-rank matrices. "Analogous to LoRA's low-rank adapters in gradient-based training (Hu et al., 2022)"

- Low-rank approximation: Approximating a matrix by a product of low-width factors to reduce parameters/compute. "a low-rank approximation can be made by decomposing each matrix:"

- Manifold $\mathcal{M}^r$: The set (manifold) of rank‑r matrices embedded in Euclidean space. "$E = \frac{1}{\sqrt{r}} A B^\top$ maps to the manifold $\mathcal{M}^r \subset \mathbb{R}^{m\times n}$ of rank r matrices"

- Matrix 2-norm: The spectral norm (largest singular value) of a matrix. "which provides an upper bound on the matrix 2-norm (Petersen & Pedersen, 2008)."

- Matrix Gaussian distribution: Also known as the matrix normal; a Gaussian distribution directly over matrices with row/column covariances. "the matrix Gaussian distribution (Dawid, 1981), which is defined directly over matrices $X \in \mathbb{R}^{m\times n}$."

- Mean-field approximator: An approximation assuming independence among components to simplify complex distributions. "we derive and explore a set of mean-field approximators in Appendix B as alternatives"

- Monte Carlo estimate: Estimating expectations by averaging over random samples. "We make a Monte Carlo estimate of expectation in Eq. (5)"

- Natural Evolution Strategy (NES): ES variant that follows the natural gradient in parameter space. "the ES update in Eq. (4) is equal to the natural evolution strategy (NES) update"

- Natural gradient: Gradient preconditioned by the inverse Fisher information, respecting parameter-space geometry. "NES (Wierstra et al., 2008; 2011) updates follow the natural gradient"

- Natural policy gradient: Applying the natural gradient to policy optimization for invariance to parameterization. "recovering the Gaussian form from the true natural policy gradient update."

- Population-based exploration: Using a population of parameter samples to explore and smooth noisy landscapes. "population-based exploration smooths irregularities"

- Pre-layernorm: A transformer variant applying layer normalization before each block to improve stability. "pre-layernorm transformer decoder models"

- Rank-one perturbation: A parameter perturbation formed by the outer product of two vectors (rank 1). "Rank-one perturbation $E_i$"

- RWKV-7: A recurrent LLM architecture series optimized for parallel inference. "EGGROLL fine-tuning on an RWKV-7 1.5B model"

- Score function: The gradient of the log-density with respect to parameters; used in likelihood-ratio estimators. "$\nabla_\theta \log \pi(M|\theta)$ is known as the score function"

- StopGrad: An operation that treats a tensor as a constant during optimization, blocking gradients/updates. "MO := StopGrad(Mi) is the imported matrix from the foundation model with frozen parameters"

- SVD: Singular Value Decomposition; factorizes a matrix into singular vectors/values. "decomposing them into fixed SVD bases"

- Tabula-rasa: Training from scratch without prior knowledge or pretraining. "tabula-rasa RL settings"

- Tangent space: The linear space of allowable infinitesimal variations at a point on a manifold. "gradients with respect to log p(E) are defined over the tangent space to $\mathcal{M}^r$"

- Tensor core operation: Specialized GPU hardware operation for high-throughput mixed-precision matrix math. "int8 matrix multiplication with int32 accumulation being the fastest tensor core operation."

- Truncated ES: ES training where sequences are truncated, retaining some state between updates. "applying truncated ES by keeping the hidden state and only resetting at document boundaries."

- Variance reduction: Techniques to decrease estimator variance for more sample-efficient optimization. "with followups offering further variance reduction"

- Zeroth order optimization: Optimization using only function evaluations (no gradients), akin to ES with small populations. "zeroth order optimization (Zhang et al., 2024), which is analogous to ES with a population size of 1"

- Z score: A standardized score subtracting mean and dividing by standard deviation. "We then compute the z score per question"
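The "z score per question" entry can be made concrete with a small sketch. The array layout, the function name, and the choice to average z scores across questions into one fitness per population member are assumptions for illustration; the paper's exact scoring rule may differ.

```python
import numpy as np

def z_score_per_question(scores, eps=1e-8):
    """scores[q, i] is the raw score of population member i on question q."""
    mean = scores.mean(axis=1, keepdims=True)
    std = scores.std(axis=1, keepdims=True)
    return (scores - mean) / (std + eps)   # eps guards against zero variance

raw = np.array([[1.0, 0.0, 0.0, 1.0],     # easier question: two members solve it
                [0.0, 0.0, 0.0, 1.0]])    # harder question: one member solves it
z = z_score_per_question(raw)
member_fitness = z.mean(axis=0)            # one scalar fitness per population member
print(member_fitness)
```

Standardising within each question rewards a member more for solving a question that few others solved, and keeps easy and hard questions on a comparable scale.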

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, directly leveraging the paper’s findings on low-rank Evolution Strategies (EGGROLL), its hardware-efficient implementation, and demonstrated empirical performance.

- LLM Reasoning Fine-Tuning at Scale
  - Sector: software, education
  - Use case: Fine-tune LLMs for math and logic tasks (e.g., countdown, GSM8K) with higher throughput than GRPO, especially on architectures like RWKV that benefit from constant memory footprint.
  - Workflow/product: “EGGROLL-FineTune” plugin that batches low-rank adapters across a large population, integrates with inference platforms (e.g., vLLM-like systems), and uses z-score-style scoring over question batches.
  - Assumptions/dependencies: Access to high-throughput inference hardware (e.g., H100s), batch-friendly recurrent LLMs (RWKV-like), scalar-only rewards/accuracy signals; population sizes large enough for near full-rank aggregate updates; rank r kept small (often r=1).

- Memory- and Cost-Efficient LLM Adaptation in Enterprises
  - Sector: software, finance, healthcare
  - Use case: Replace gradient-based fine-tuning with ES-based updates for models with strict memory budgets or non-differentiable components (e.g., rule-augmented pipelines, compliance filters).
  - Workflow/product: LoRA-style ES adapters that can be swapped at inference time; batch inference scheduler that shares base activations while applying many low-rank perturbations.
  - Assumptions/dependencies: Inference infrastructure that supports batched matmul and low-rank adapter application; reliable counter-based RNG on device; task-specific scalar fitness functions.

- Robust RL Training in Simulators
  - Sector: robotics, gaming, logistics
  - Use case: Tabula-rasa RL in long-horizon or noisy environments (Brax, Jumanji, Navix, Craftax), exploiting ES’s robustness and parallel scaling.
  - Workflow/product: “EGGROLL-RL” trainer that evaluates large populations per update and aggregates fitness scalars for fast, stable learning without backprop through time.
  - Assumptions/dependencies: Simulator access with parallel rollout capability; scalar episodic return; hyperparameter search to tune ES step size and noise; modest network sizes or efficient batching for larger ones.

- High-Throughput Hyperparameter Optimization (HPO)
  - Sector: software/MLOps
  - Use case: Population-based HPO for deep nets and pipelines; EGGROLL reduces per-trial memory/compute overhead compared to full ES or backprop-based PBT.
  - Workflow/product: “EGGROLL-HPO” service that treats configurations as policies with fitness (e.g., validation score), evolves them via low-rank perturbations, and maximizes throughput on inference clusters.
  - Assumptions/dependencies: Ability to evaluate many configurations in parallel and report scalar scores; integration with existing experiment trackers.

- Integer-Only Pretraining for Edge-Friendly LLMs
  - Sector: mobile, IoT, energy
  - Use case: Pretrain compact RNN-based LLMs (e.g., the EGG architecture) entirely in int8/int32 for energy savings and hardware simplicity on edge devices.
  - Workflow/product: “Int8-LM Pretrainer” that runs ES-only updates on integer tensor cores; deploys integer-only models for on-device inference and personalization.
  - Assumptions/dependencies: Hardware with fast int8 matmul and int32 accumulation; integer-safe normalization (L1-like) and recurrent blocks; sufficient population sizes; dataset streaming pipelines.

- On-Device Personalization without Backprop
  - Sector: consumer software, privacy
  - Use case: Personalize small LMs or recommenders locally by evolving parameters using only inference compute and scalar feedback (e.g., click-through, task success).
  - Workflow/product: Lightweight ES personalization client on mobile/edge that batches low-rank perturbations, evaluates on user tasks, and applies the aggregated update.
  - Assumptions/dependencies: Small model size; local reward logging; battery and compute budgets; limited communication (only weights or adapters for updates).

- Tuning Non-Differentiable or Hybrid Pipelines
  - Sector: adtech, search, operations research
  - Use case: Optimize systems that mix neural modules with symbolic rules (e.g., rankers with hard constraints), where gradients are unavailable or unreliable.
  - Workflow/product: ES-based optimizer wrapping the whole pipeline, evolving low-rank parameter matrices while reading scalar KPIs (CTR, latency, fairness metrics).
  - Assumptions/dependencies: Stable scalar metrics; access to batched evaluation; careful score normalization to prevent reward hacking.

- Better Utilization of Inference Infrastructure for Training
  - Sector: cloud/HPC
  - Use case: Convert idle inference capacity into training throughput by running large ES populations with shared base activations, approaching pure batch inference speeds.
  - Workflow/product: Inference-to-training scheduler that dispatches low-rank noise directions across GPUs, collects fitness scalars, reconstructs updates via counter-based RNG.
  - Assumptions/dependencies: Cluster support for batched matmul and adapter application; monitoring to prevent contention with regular inference; data pipelines that stream evaluation batches.

- Educational Demonstrations of Optimization without Gradients
  - Sector: education
  - Use case: Teach optimization and robustness through hands-on labs where students evolve models using low-rank ES and visualize convergence rates (O(1/r)).
  - Workflow/product: Classroom notebooks with EGGROLL kernels; interactive plots showing marginal score-density convergence and population effects.
  - Assumptions/dependencies: Small models; simple datasets and simulators; reproducible RNG setup.

- Scientific Black-Box Parameter Tuning
  - Sector: academia/science/HPC
  - Use case: Fit models or simulators with discontinuous or noisy objectives (e.g., agent-based models, physics simulators), using ES to avoid gradient complications.
  - Workflow/product: EGGROLL optimization harness with batched perturbations and scalar outputs from simulation runs.
  - Assumptions/dependencies: Many parallel evaluations; reliable scalar fitness aggregation; appropriate noise scaling.

Long-Term Applications

These opportunities leverage EGGROLL’s ability to scale ES to hyperscale populations and integer-friendly architectures but need further research, engineering, or validation.

- End-to-End Neurosymbolic System Training
  - Sector: software, education, healthcare
  - Use case: Train LLMs that tightly integrate symbolic modules (memory, calculators, rule engines) where gradients are non-existent or misleading.
  - Workflow/product: “NeuroSymbolic Trainer” that evolves the neural components and learns interfaces to symbolic modules via outcome-only rewards.
  - Assumptions/dependencies: Robust task-specific scalar objectives; careful evaluation harnesses; safety/interpretability tooling.

- ES-Only Pretraining of Billion-Parameter Models
  - Sector: software/cloud
  - Use case: Train very large LMs end-to-end using ES without backprop, exploiting massive parallelism and low-rank noise directions.
  - Workflow/product: Hyperscale ES pretraining pipeline with token-generation scoring, variance reduction, and population scheduling across clusters.
  - Assumptions/dependencies: Extremely large inference throughput; advanced variance reduction and score functions beyond Gaussian approximation; data efficiency strategies.

- Real-World Robotics Learning from Outcome-Only Feedback
  - Sector: robotics, manufacturing, logistics
  - Use case: On-robot policy learning where gradients are unavailable; robust ES training for long-horizon tasks and discontinuous reward landscapes.
  - Workflow/product: ES robotics stack with safety layers, simulators-to-real transfer, and large on-hardware populations.
  - Assumptions/dependencies: Sample efficiency improvements; safe exploration; real-time scalar reward logging; hardware wear constraints.

- Federated ES Across Edge Devices
  - Sector: mobile/IoT, privacy
  - Use case: Global optimization using populations split across many devices, aggregating scalar fitnesses to compute high-rank updates without sharing raw data.
  - Workflow/product: Federated EGGROLL coordinator that reconstructs perturbations via RNG seeds, aggregates scores, and distributes updates or adapters.
  - Assumptions/dependencies: Reliable device participation; privacy-preserving aggregation; heterogeneity handling; communication efficiency.

- ES-Optimized ASICs and Runtime Systems
  - Sector: semiconductors, energy
  - Use case: Hardware specializing in batched low-rank perturbation application (vector-dot and scalar-vector ops) and counter-based RNG to minimize energy.
  - Workflow/product: ES-aware accelerators and kernels that approach pure inference throughput during “training mode.”
  - Assumptions/dependencies: Hardware design cycles; standardization of ES training APIs; market adoption.

- Mainstream Integer-Only Architectures Beyond Transformers
  - Sector: software/mobile
  - Use case: Adoption of nonlinear, integer-only RNNs (e.g., evolved GRU variants) for efficient long-sequence modeling on low-power devices.
  - Workflow/product: “Int8-RNN” libraries with ES training recipes, quantization-aware components, and deployment toolchains.
  - Assumptions/dependencies: Demonstrated accuracy on larger corpora; compression and distillation pipelines; developer ecosystem buy-in.

- Alignment Pipelines Using ES at Hyperscale
  - Sector: software, policy
  - Use case: Replace gradient RLHF with ES-based outcome optimization at scale, focusing on reasoning, safety behaviors, and instruction-following.
  - Workflow/product: ES alignment framework with batched human/AI feedback, advanced scoring and normalization, and throughput-aware evaluation harnesses.
  - Assumptions/dependencies: Reliable batchable feedback signals; mitigation of reward gaming; robust assessment methodologies.

- Financial Portfolio and Strategy Optimization with Hard Constraints
  - Sector: finance
  - Use case: Optimize strategies with non-differentiable transaction costs, compliance rules, or discrete actions using ES populations.
  - Workflow/product: ES optimizer that evolves low-rank parameterizations of policies or models, scored by backtest metrics.
  - Assumptions/dependencies: High-fidelity simulation; robust risk control; regulatory compliance verification.

- Outcome-Only Clinical Model Tuning
  - Sector: healthcare
  - Use case: Tune decision-support systems where gradients are unavailable and feedback is outcome-based (e.g., treatment success), integrating symbolic rules and constraints.
  - Workflow/product: ES clinical optimization harness with robust scoring, fairness metrics, and audit trails.
  - Assumptions/dependencies: Clinical trial frameworks; strong governance; bias and safety controls; explainability requirements.

- Carbon-Aware Training at Scale
  - Sector: energy, cloud/HPC, policy
  - Use case: Reduce training energy by shifting to integer-only computations and batch-inference training modalities; schedule populations for low-carbon grid windows.
  - Workflow/product: Carbon-aware ES scheduler integrating energy telemetry, int8 kernels, and data center policies.
  - Assumptions/dependencies: Accurate energy metering; cloud scheduling cooperation; mature int8 toolchains.

- AutoML and Meta-Learning via ES
  - Sector: software/MLOps
  - Use case: Evolve learning algorithms, architectures, or curricula using ES in regimes where gradient meta-learning is brittle.
  - Workflow/product: ES meta-learning suite that evaluates candidate learners/policies across tasks and aggregates outcome-only rewards.
  - Assumptions/dependencies: Large-scale benchmarking; careful score normalization; compute budgets for broad exploration.

- Simulation-Based Science Optimization
  - Sector: academia/HPC
  - Use case: Optimize parameters of complex simulations (e.g., climate, agent-based economics) with discontinuities or chaotic behavior.
  - Workflow/product: ES wrapper around simulation pipelines, exploiting population parallelism and low-rank perturbations to search efficiently.
  - Assumptions/dependencies: Access to HPC; robust scalar objectives; domain-specific validation and sensitivity analyses.
