- The paper presents a unified benchmark suite that standardizes pushing-based navigation and manipulation tasks for mobile robots.

- It details diverse simulation environments, such as Maze, Ship-Ice, Box-Delivery, and Area-Clearing, and introduces novel evaluation metrics for task efficiency and interaction cost.

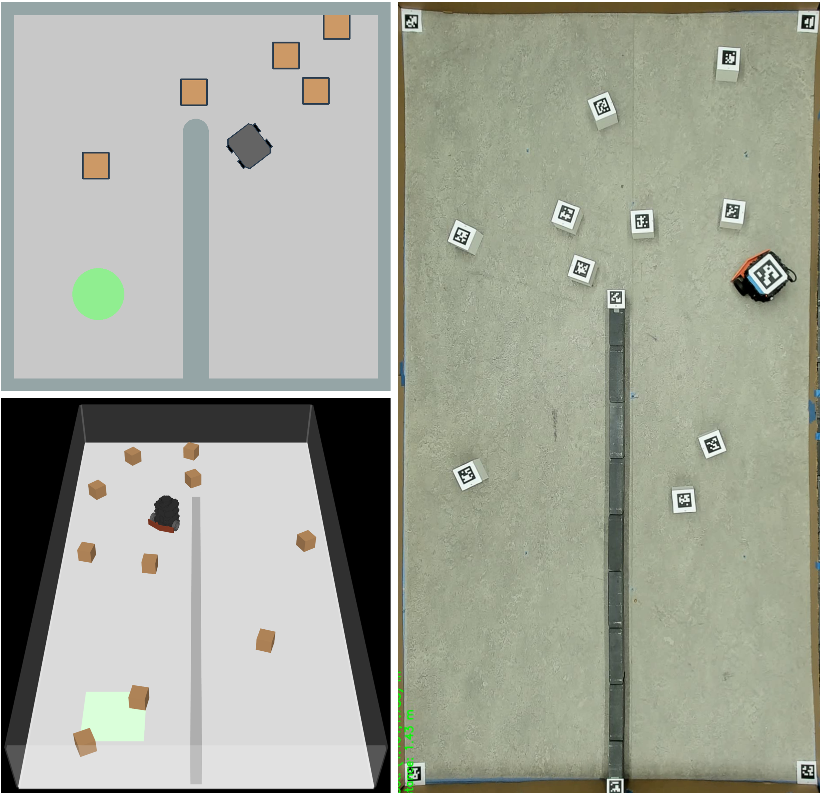

- Validated sim-to-real transfer and comparisons between RL baselines and task-specific planners demonstrate the benchmark’s practical value for robust, interaction-aware planning.

Bench-Push: A Comprehensive Benchmark for Pushing-Based Mobile Robot Navigation and Manipulation

Introduction

Pushing-based navigation and manipulation are essential competencies for mobile robots tasked with operating autonomously in cluttered, unstructured environments where strict collision-free navigation is infeasible. "Bench-Push: Benchmarking Pushing-based Navigation and Manipulation Tasks for Mobile Robots" (2512.11736) addresses the long-standing fragmentation in this field by proposing a unified benchmark suite, including configurable simulation environments, task-specific and general evaluation metrics, and baseline implementations for state-of-the-art policies. This work provides a standardized platform for comparative analysis and systematic development of algorithms for nonprehensile mobile robot tasks.

Benchmark Design and Environments

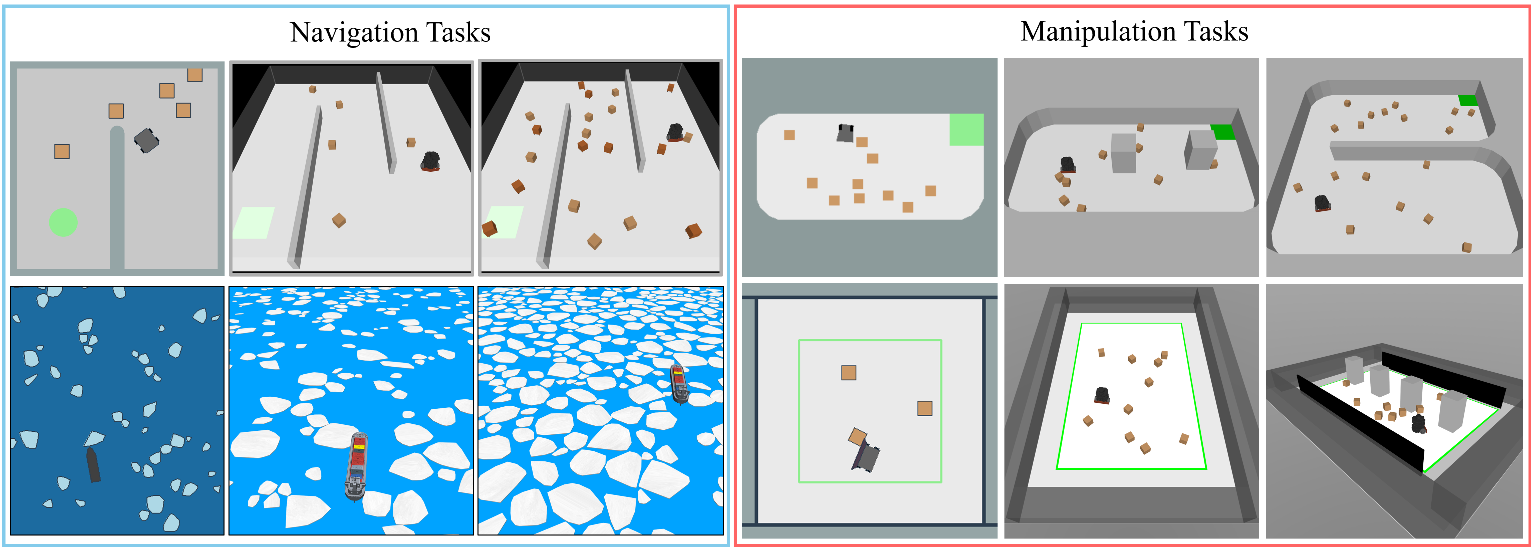

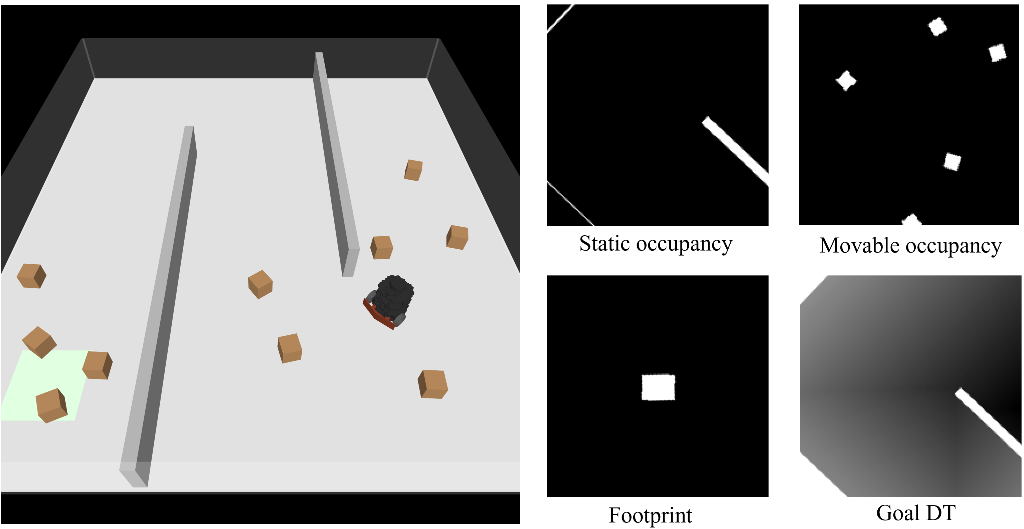

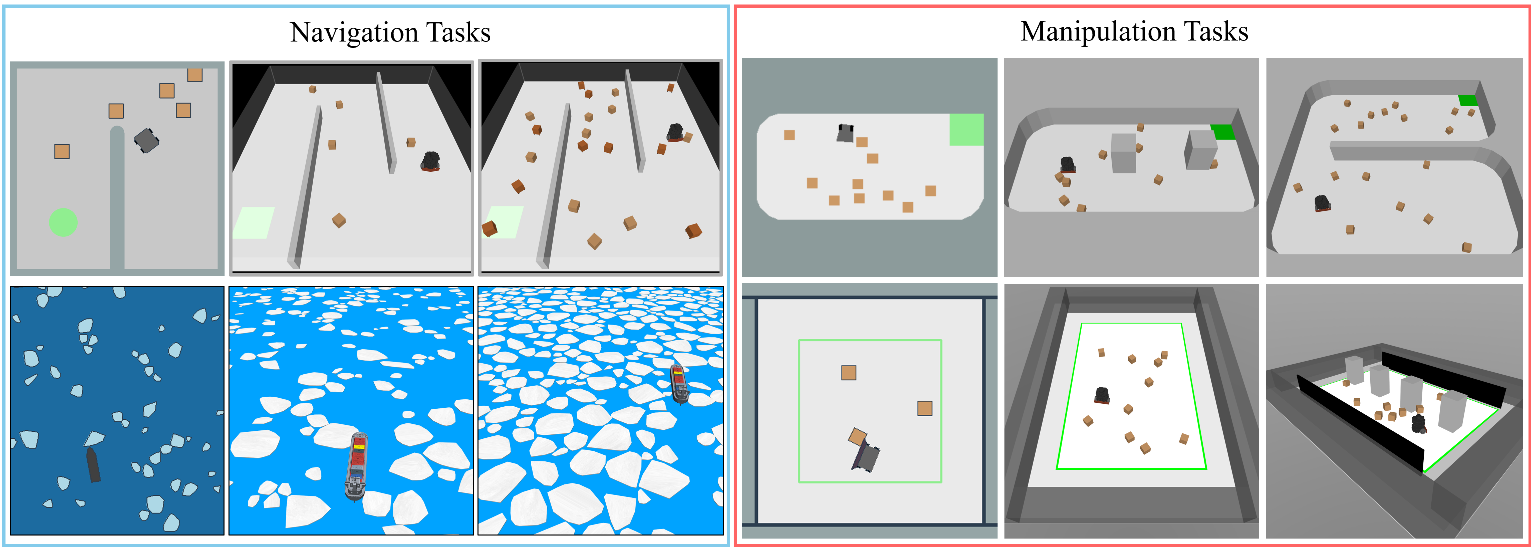

Bench-Push introduces a diverse set of simulated environments, each representing canonical pushing-based navigation and manipulation challenges. These environments are instantiated in both 2D (for rapid prototyping) and 3D MuJoCo-based simulation (for high-fidelity evaluation and sim-to-real transfer). The benchmark notably covers both task classes: navigation (reaching a goal while interacting with obstacles) and manipulation (actively reconfiguring objects in the environment).

Key environments include:

- Maze: A bounded, maze-like layout with random distribution of movable obstacles, suitable for navigation-centric evaluation.

- Ship-Ice: A domain-adapted scenario simulating autonomous ship navigation through ice-laden waters, presenting complex, irregularly shaped pushable obstacles and drag forces.

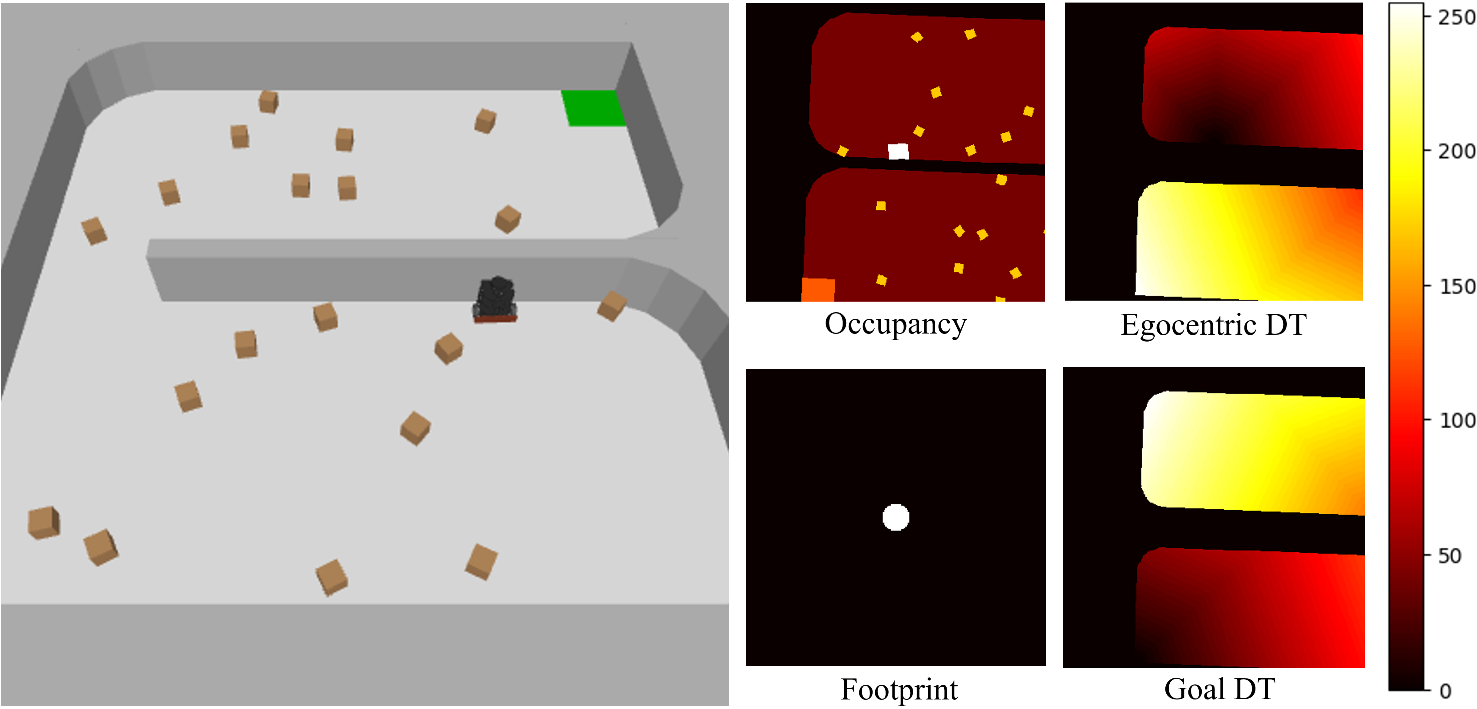

- Box-Delivery: A manipulation task where the robot must deliver all boxes to a designated receptacle using only pushing actions.

- Area-Clearing: The robot must push all boxes out of a specified region; where the boxes come to rest outside that region does not matter.

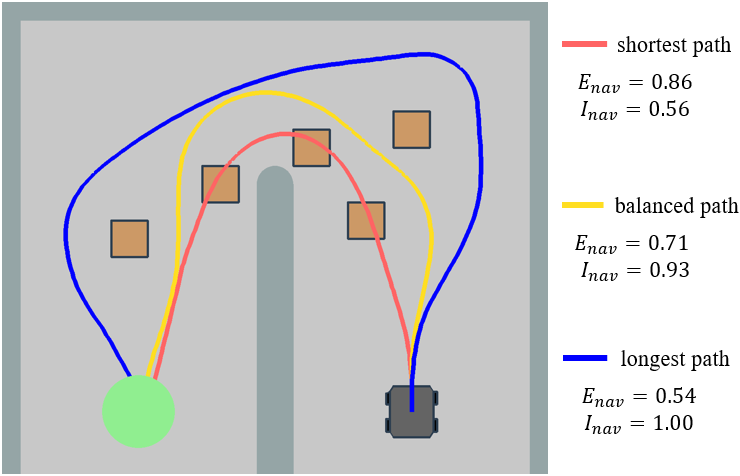

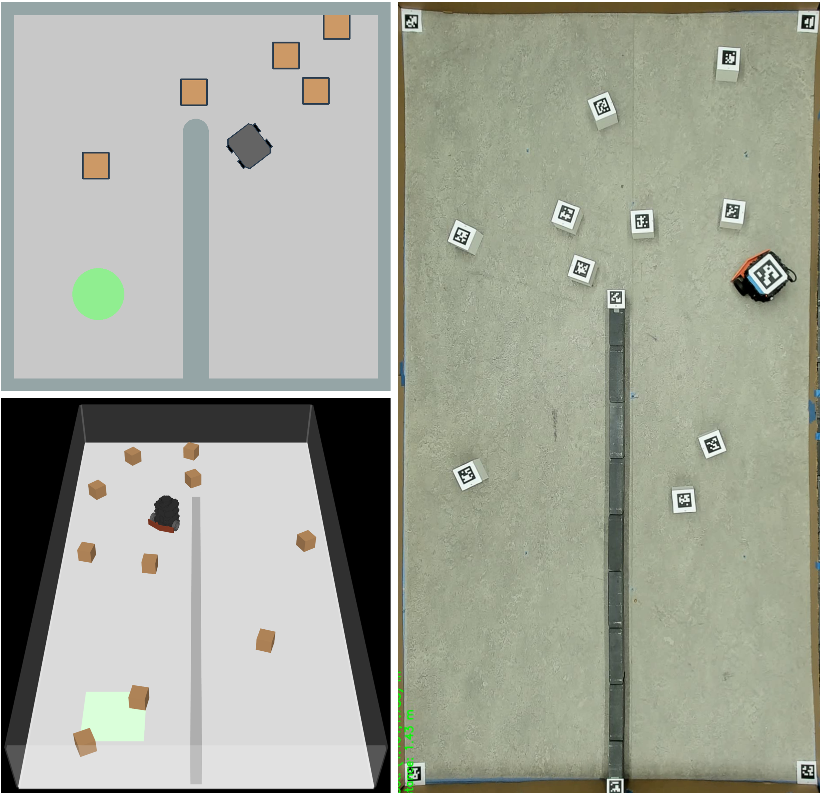

Figure 1: Maze environment in 2D simulation (top left), in 3D simulation (bottom left), and in the physical experimental setup (right).

Figure 2: Summary of Bench-Push environments. Each panel visualizes a different scenario: Maze, Ship-Ice, Box-Delivery, and Area-Clearing, with configurable complexity parameters.

The environments are fully compatible with Gymnasium APIs and are designed for direct integration of custom policies and experimental agents.
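Because the environments follow the Gymnasium API, any policy can be driven through the standard `reset`/`step` loop. The sketch below illustrates that loop only. `StubCorridorEnv` is a hypothetical stand-in written for this example, not a Bench-Push environment, and the environment IDs that Bench-Push registers are not given here, so none is assumed.

```python
from typing import Any, Callable, Dict, Optional, Tuple


class StubCorridorEnv:
    """Hypothetical stand-in that mimics the Gymnasium reset/step signatures.

    This is NOT a Bench-Push environment. Replace it with a real environment
    object to use run_episode() unchanged.
    """

    def __init__(self, goal: int = 5, max_steps: int = 20) -> None:
        self.goal = goal
        self.max_steps = max_steps
        self.position = 0
        self.steps = 0

    def reset(
        self, seed: Optional[int] = None, options: Optional[dict] = None
    ) -> Tuple[int, Dict[str, Any]]:
        # The stub is deterministic, so the seed is accepted but unused.
        self.position = 0
        self.steps = 0
        return self.position, {}

    def step(self, action: int) -> Tuple[int, float, bool, bool, Dict[str, Any]]:
        # Move along a 1D corridor; the robot cannot go below cell 0.
        self.position = max(0, self.position + int(action))
        self.steps += 1
        terminated = self.position >= self.goal  # goal reached
        truncated = (not terminated) and self.steps >= self.max_steps  # time limit
        return self.position, -1.0, terminated, truncated, {}


def run_episode(
    env: Any, policy: Callable[[Any], Any], seed: Optional[int] = None
) -> Dict[str, Any]:
    """Roll out one episode with the Gymnasium five-tuple step convention."""
    obs, _info = env.reset(seed=seed)
    total_reward, steps = 0.0, 0
    terminated = truncated = False
    while not (terminated or truncated):
        action = policy(obs)
        obs, reward, terminated, truncated, _info = env.step(action)
        total_reward += reward
        steps += 1
    return {
        "return": total_reward,
        "steps": steps,
        "terminated": terminated,
        "truncated": truncated,
    }


if __name__ == "__main__":
    # A trivial policy that always moves forward by one cell.
    print(run_episode(StubCorridorEnv(), lambda obs: 1))
```

Keeping the rollout function independent of the environment class means the same loop can serve both RL policies and hand-written planners, as long as each is wrapped as a callable from observation to action.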

Task Classes and Actions

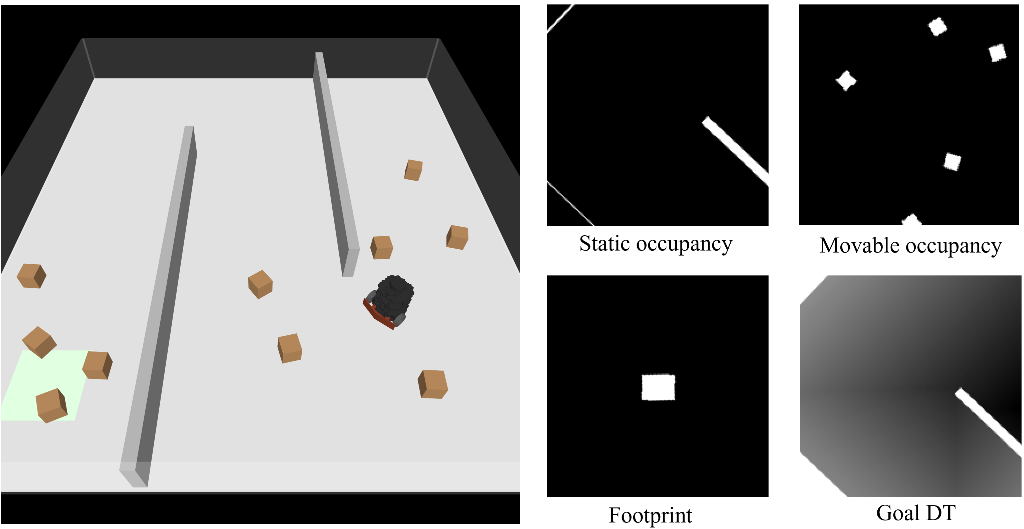

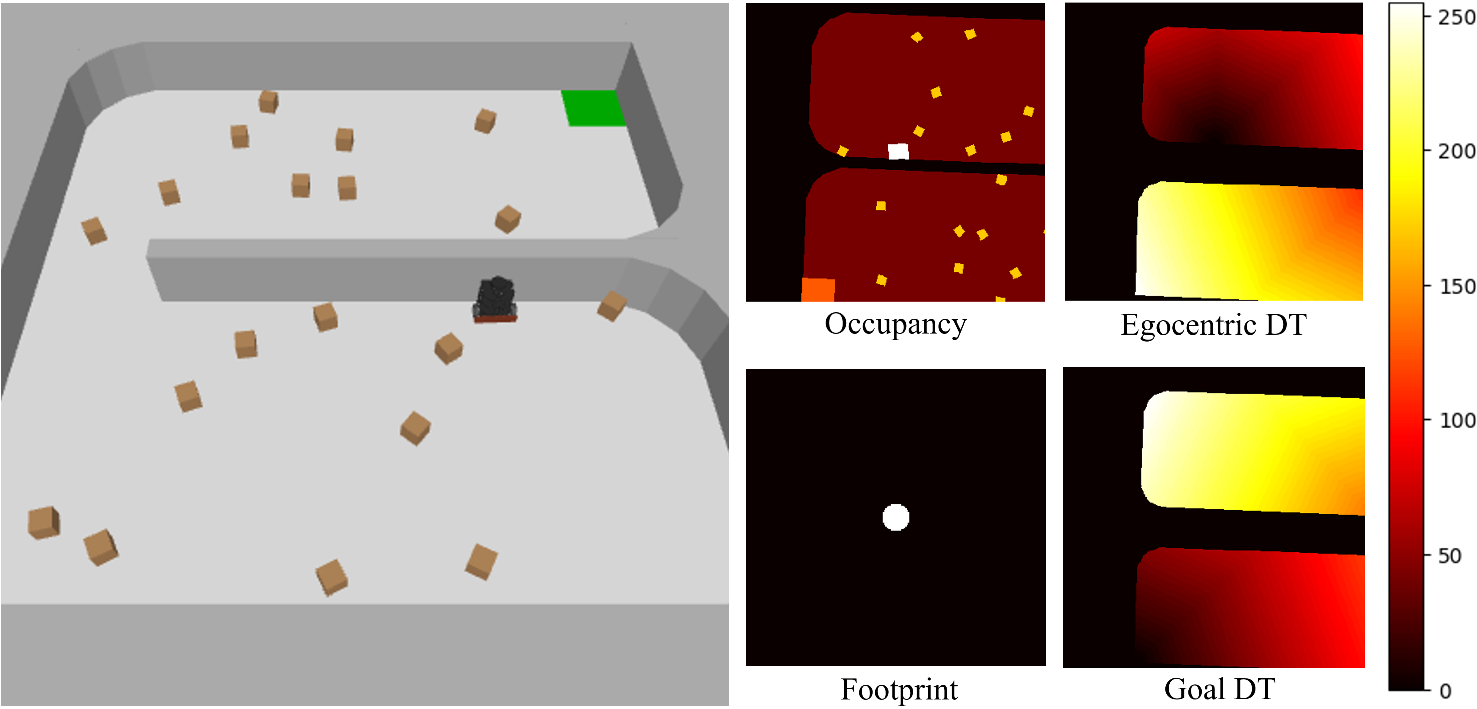

Bench-Push classifies tasks as either navigation-centric, where the primary objective is to reach a goal region, possibly by pushing obstacles aside, or manipulation-centric, where designated objects must be relocated according to task constraints. Each environment supports multiple action modalities (e.g., heading commands, angular velocity control, or low-level wheel velocities) and provides egocentric, high-dimensional observations that encode static and movable occupancy, goal-oriented distance transforms, and the robot footprint.

Figure 3: Maze environment (left) and a representative egocentric observation vector (right).

Figure 4: Box-Delivery environment (left) and an example observation structure (right).
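To make the "goal-oriented distance transform" channel concrete, the following is a minimal sketch of one way such a channel could be computed: a breadth-first search outward from the goal over a static occupancy grid. The 4-connected grid, the cell-count distance unit, and the function name `goal_distance_transform` are assumptions made for illustration; the benchmark's actual observation pipeline may differ.

```python
from collections import deque
from typing import List, Optional, Sequence, Tuple

Grid = Sequence[Sequence[int]]  # 1 = static obstacle, 0 = free cell


def goal_distance_transform(
    static_occupancy: Grid, goal: Tuple[int, int]
) -> List[List[Optional[int]]]:
    """Return the 4-connected step distance from every free cell to the goal.

    Cells that are occupied or unreachable are marked None. Movable objects
    are deliberately ignored here: only static obstacles block the search.
    """
    rows, cols = len(static_occupancy), len(static_occupancy[0])
    goal_row, goal_col = goal
    if static_occupancy[goal_row][goal_col]:
        raise ValueError("goal cell is inside a static obstacle")

    dist: List[List[Optional[int]]] = [[None] * cols for _ in range(rows)]
    dist[goal_row][goal_col] = 0
    queue = deque([(goal_row, goal_col)])

    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and not static_occupancy[nr][nc] and dist[nr][nc] is None:
                dist[nr][nc] = dist[r][c] + 1
                queue.append((nr, nc))
    return dist


if __name__ == "__main__":
    grid = [
        [0, 0, 0, 0],
        [0, 1, 1, 0],
        [0, 0, 0, 0],
    ]
    for row in goal_distance_transform(grid, goal=(0, 3)):
        print(row)
```

A map of this kind gives the policy a dense signal about progress toward the goal, and its value at the robot's start cell is also a natural candidate for a shortest-path reference length that ignores movable objects.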

Evaluation Metrics

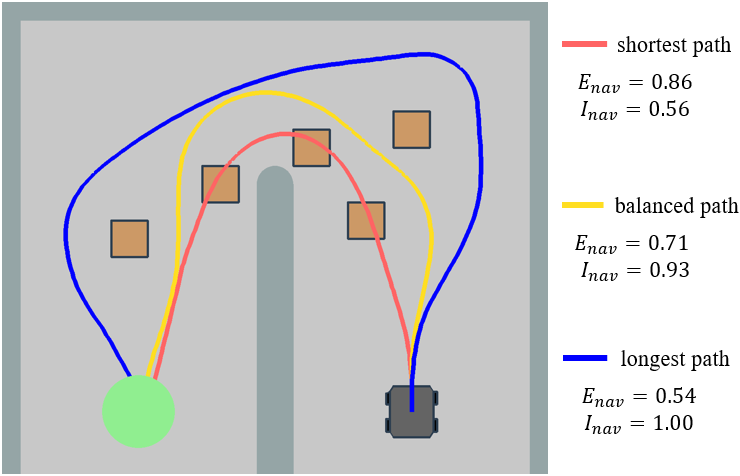

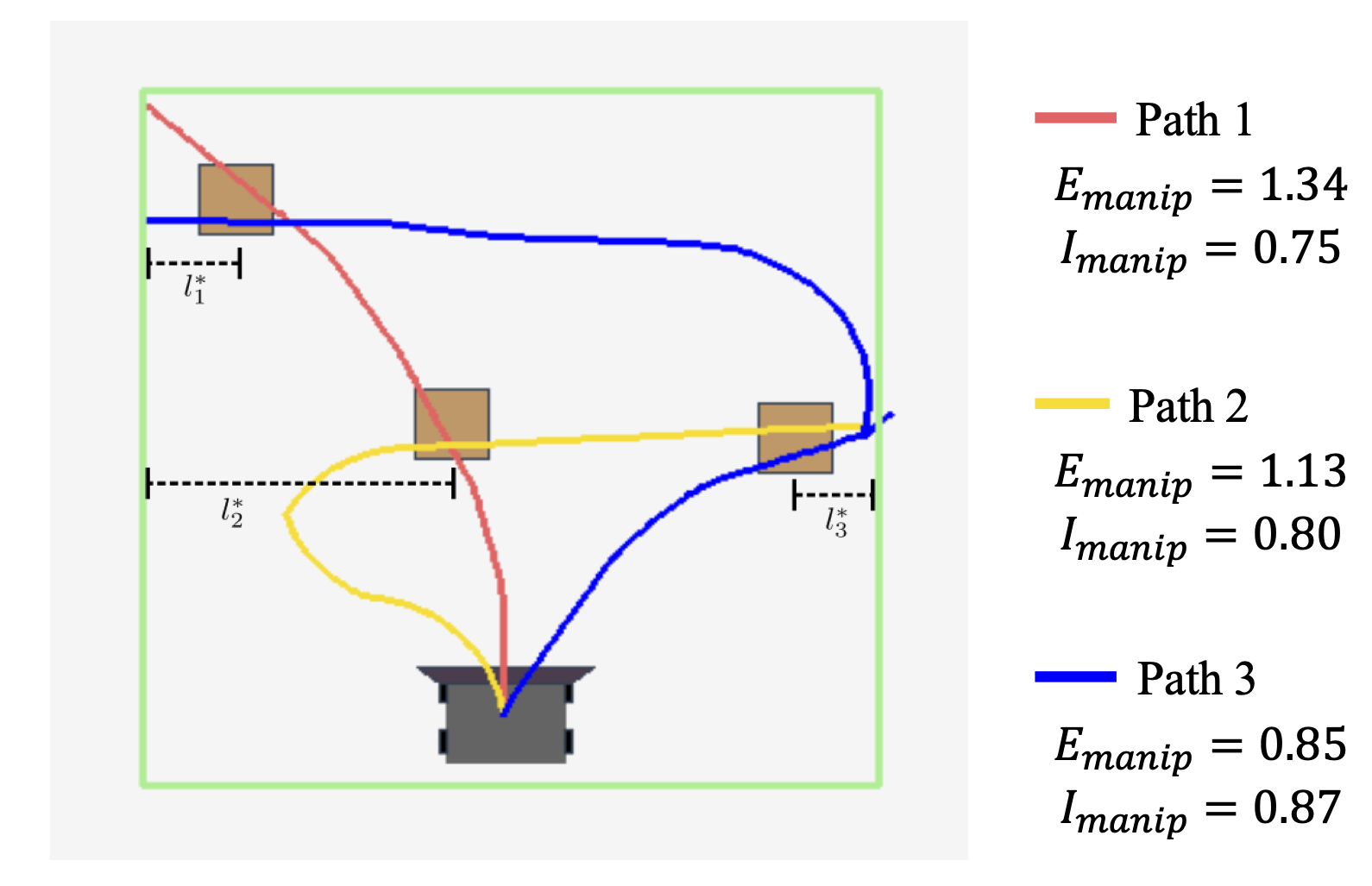

The work introduces new evaluation metrics for both navigation and manipulation that quantitatively distinguish between task efficiency and the physical cost of interaction, addressing the inherent multi-objective optimization challenge in pushing-based tasks.

- Navigation Efficiency (Enav): Fraction of shortest-path distance to goal, measuring path optimality given only static obstacles.

- Interactive Effort (Inav): Ratio of work done by the robot to the total work (including dissipated work to push all objects), normalized to penalize unnecessary object movements.

- Manipulation Success (Smanip): Fraction of objects successfully manipulated to their targets.

- Manipulation Efficiency (Emanip): Ratio of a lower-bound necessary path length to actual path length for all completed subtasks.

- Manipulation Interactive Effort (Imanip): Ratio of minimum necessary work for successfully completed sub-tasks to total work done, reflecting efficiency beyond mere completion.

Figure 5: Three trajectory examples (red, yellow, blue) in the Maze, illustrating efficiency vs. interaction trade-offs (Enav, Inav) for a teleoperated agent.

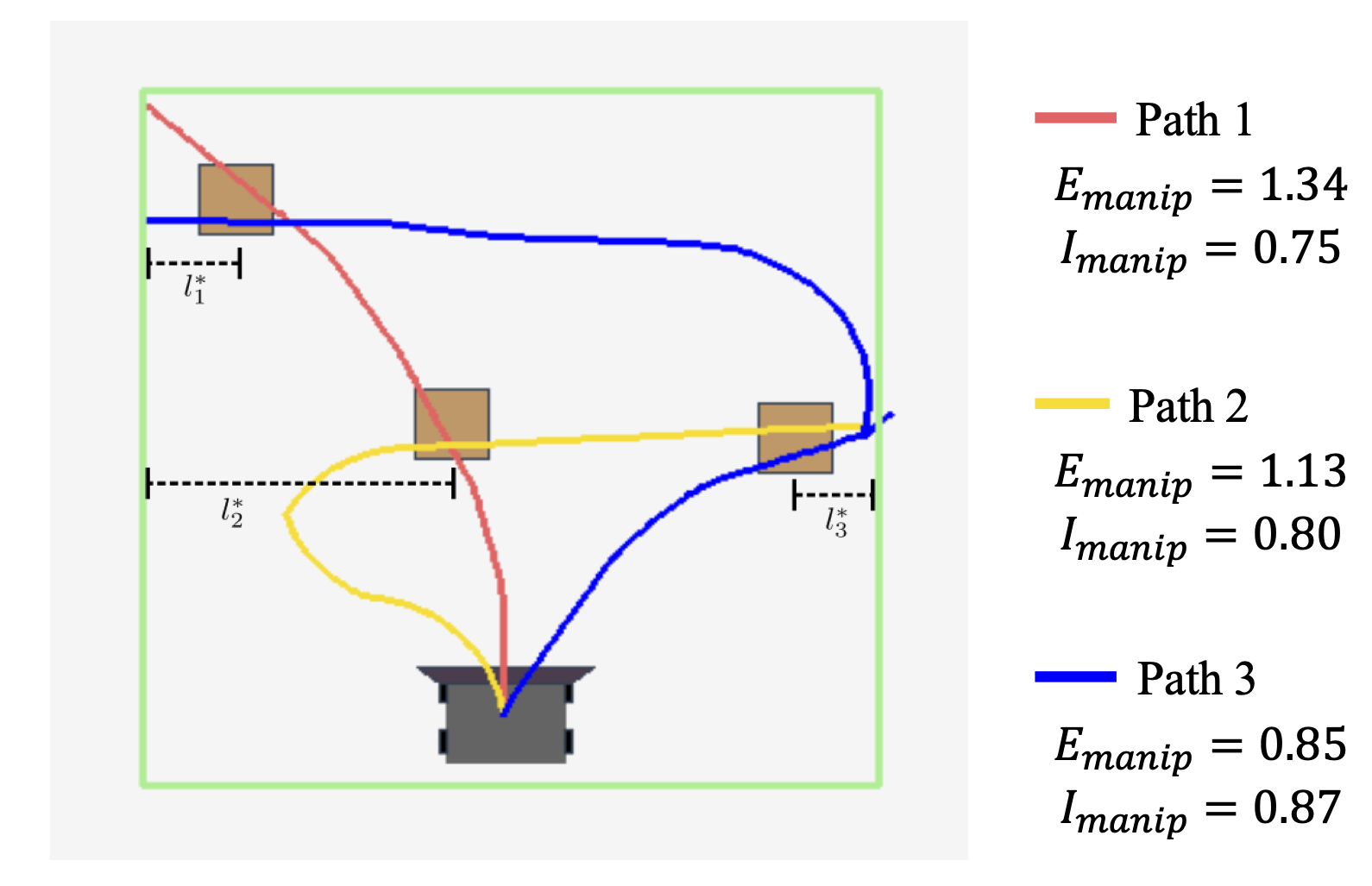

Figure 6: Example trajectories for Area-Clearing, showcasing variations in efficiency and effort (Emanip, Imanip) despite identical success rates.

These metrics enable fine-grained, quantitative policy evaluation and cross-environment comparison, situating Bench-Push distinctly from prior benchmarks where task and interaction costs were confounded or insufficiently captured.
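The ratio structure of these metrics can be sketched in a few lines. This is an illustration of the verbal definitions above under stated assumptions, not the benchmark's reference implementation: the function names are hypothetical, work values are assumed to be supplied by the caller (how Bench-Push measures work is not specified here), and clipping each score to [0, 1] is an added convention.

```python
from math import hypot
from typing import Sequence, Tuple

Point = Tuple[float, float]


def path_length(path: Sequence[Point]) -> float:
    """Sum of Euclidean segment lengths along a piecewise-linear path."""
    return sum(hypot(x1 - x0, y1 - y0) for (x0, y0), (x1, y1) in zip(path, path[1:]))


def ratio(numerator: float, denominator: float) -> float:
    """numerator / denominator clipped to [0, 1]; 0.0 if the denominator is not positive.

    Clipping and the zero fallback are assumptions made for this sketch.
    """
    if denominator <= 0:
        return 0.0
    return max(0.0, min(1.0, numerator / denominator))


def navigation_efficiency(shortest_length: float, actual_path: Sequence[Point]) -> float:
    """Shortest-path length (static obstacles only) over the length actually driven."""
    return ratio(shortest_length, path_length(actual_path))


def navigation_effort(robot_work: float, object_work: Sequence[float]) -> float:
    """Robot work over total work, where total work also counts pushing each object."""
    return ratio(robot_work, robot_work + sum(object_work))


def manipulation_success(objects_at_target: int, objects_total: int) -> float:
    """Fraction of objects that reached their targets."""
    return ratio(objects_at_target, objects_total)


def manipulation_efficiency(lower_bound_length: float, actual_path: Sequence[Point]) -> float:
    """Lower-bound path length for completed subtasks over the length actually driven."""
    return ratio(lower_bound_length, path_length(actual_path))


def manipulation_effort(minimum_work: float, total_work: float) -> float:
    """Minimum necessary work for completed subtasks over the total work done."""
    return ratio(minimum_work, total_work)


if __name__ == "__main__":
    driven = [(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)]
    print("navigation efficiency:", navigation_efficiency(5.5, driven))
    print("navigation effort:", navigation_effort(8.0, [1.0, 1.0]))
    print("manipulation success:", manipulation_success(3, 4))
```

Because every score is a ratio of a reference quantity to a measured one, higher is better for all of them, which makes results comparable across environments of different size.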

Baselines and Reference Implementations

Bench-Push ships with both general-purpose RL baselines (SAC, PPO) and task-specific planning/control approaches for select environments:

- RL Baselines: Implemented with Stable Baselines3, using a ResNet18-based visual backbone to encode raw observations, and deployed across all environments.

- Task-specific Policies:

  - RRT-based planner (for Maze): Kinodynamic planning in the presence of movable obstacles.

  - Spatial Action Map (SAM) (for Box-Delivery/Area-Clearing): A hierarchical waypoint-based planner especially suited for multi-object nonprehensile manipulation.

  - Domain planners (for Ship-Ice and Area-Clearing): Including ASV path planners, GTSP-based trajectories, and predictive planners.

Policies are evaluated both in simulation and, crucially, on physical hardware (TurtleBot3), enabling explicit assessment of sim-to-real transfer.

Experimental Results

Bench-Push demonstrates strong policy transfer: behaviors that emerge in simulation are largely preserved in real-robot deployments. In both navigation (Maze) and manipulation (Box-Delivery) tasks, interactive efficiency degrades as environmental complexity increases, and this trend holds in simulation and on hardware alike. Notably, PPO yields superior efficiency in navigation, while SAM substantially outperforms the RL baselines in Box-Delivery in both success and path optimality. The RL agents' manipulation success rates drop sharply as the object count grows, further highlighting the advantage of task-specific planners in structured environments.

The quantitative results indicate that physically accurate simulation (e.g., drag in Ship-Ice, nuanced interaction dynamics in MuJoCo) is critical to narrowing the sim-to-real gap, although some minor degradation remains due to real-world effects such as odometry drift, control lag, and imperfect object-robot contact.

Practical and Theoretical Implications

Bench-Push fills a significant gap in evaluation infrastructure for nonprehensile mobile manipulation. Practically, it establishes a reproducible, extensible framework for head-to-head algorithmic comparison and robust ablation studies. Theoretically, the metrics proposed disentangle navigation, manipulation, and interaction trade-offs, informing both algorithmic design (RL vs. planning) and system formulation (control granularity, observation structure). The modular architecture and codebase are expected to accelerate research in sim-to-real RL, multi-object pushing, and interaction-aware planning.

Key implications for future mobile robot systems include:

- Facilitating development and benchmarking of more sample-efficient, generalizable learning policies for pushing-based interaction.

- Providing standardized environments for research in nonprehensile manipulation strategies under varying perception, actuation, and object dynamics constraints.

- Enabling systematic exploration of robust sim-to-real transfer techniques, including domain randomization, perception error modeling, and hardware-in-the-loop evaluation.

Conclusion

Bench-Push represents a comprehensive and rigorously defined benchmark suite, establishing standardized environments, metrics, and evaluation protocols for pushing-based navigation and manipulation in mobile robotics (2512.11736). Its design supports a wide spectrum of tasks, robustly distinguishes task completion from interaction cost, and demonstrates effective sim-to-real transfer in core evaluation scenarios. The benchmark is expected to become an essential asset for the community, fostering reproducible, scalable research pipelines for both RL and control/planning paradigms in nonprehensile mobile robot manipulation.