S&P 500 Stock's Movement Prediction using CNN

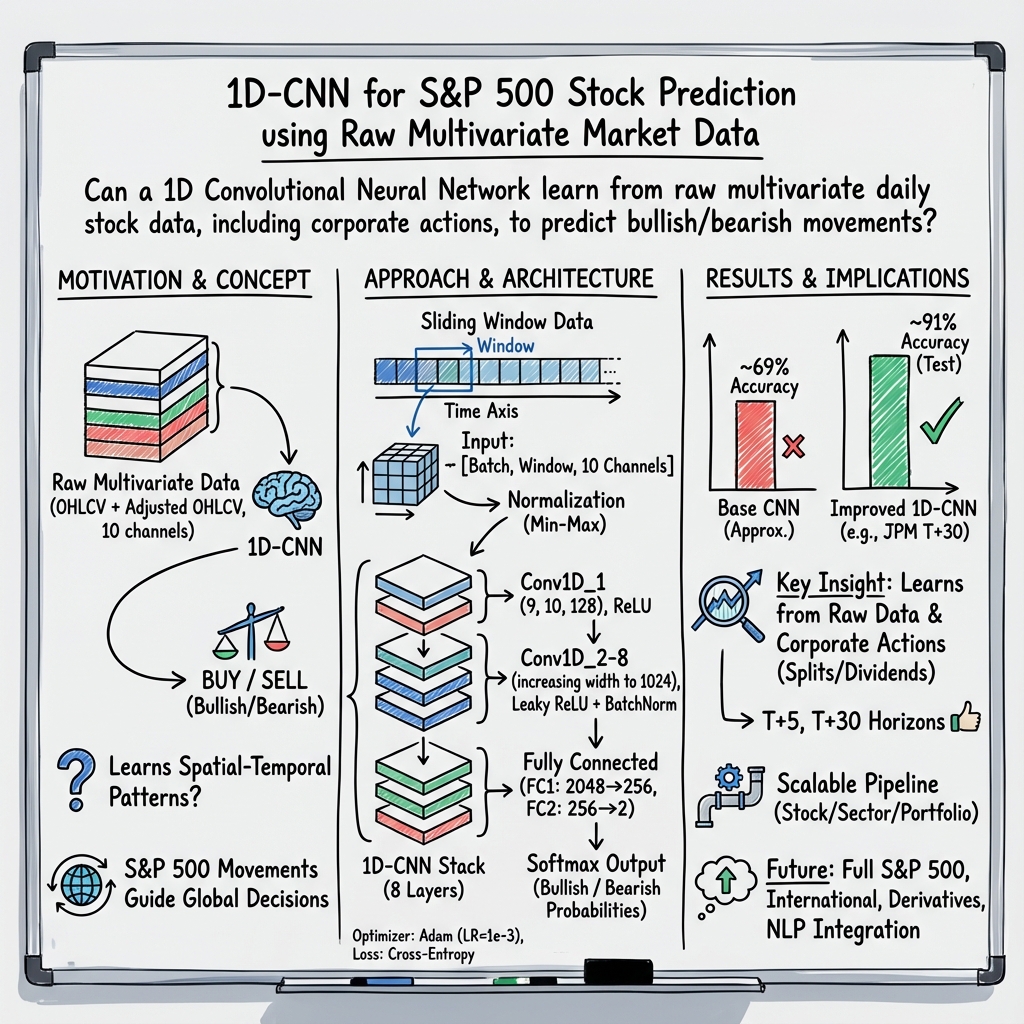

Abstract: This paper is about predicting the movement of the stocks that make up the S&P 500 index. Historically, many approaches have been tried to predict stock movement, and methods based on traditional mathematical approaches are currently used in the market for algorithmic trading and alpha-generating systems [1, 2]. The recent success of artificial neural networks has created a lot of interest and paved the way for prediction using cutting-edge research in machine learning and deep learning. Some of these papers have done a great job of implementing these new technologies and explaining their benefits. Although most of these papers do not go into the complexity of financial data and mostly use single-dimension data, they have still succeeded in laying the groundwork for future research in this comparatively new area. In this paper, I use multivariate raw data, including stock split and dividend events as they appear in real-world market data, instead of engineered financial data. A Convolutional Neural Network (CNN), the best-known tool so far for image classification, is applied to the multi-dimensional stock numbers taken from the market, treating them as a vector of historical data matrices (read: images), and the model achieves promising results. Predictions can be made for a single stock, sector-wise, or for a portfolio of stocks.

Explain it Like I'm 14

What is this paper about?

This paper looks at a big question in finance: can we predict whether a stock in the S&P 500 will go up or down soon? The author tries a deep learning method called a Convolutional Neural Network (CNN), which is normally used to recognize patterns in pictures, and applies it to raw stock market numbers to decide if you should “buy” (price likely to go up) or “sell” (price likely to go down).

What questions does it try to answer?

- Can a CNN learn useful patterns directly from raw market data (like daily prices and trading volume) without special “engineered” features?

- Can it handle real-life events that change prices suddenly, like stock splits and dividends?

- How accurate can such a model be when predicting the direction (up or down) of a stock, a sector, or a group of stocks?

How did they do it?

The data

Stocks have daily records with numbers like:

- OPEN, HIGH, LOW, CLOSE, VOLUME (often called OHLCV)

- “Adjusted” versions of these numbers that account for events like dividends and stock splits (so past prices are corrected to be comparable).

A stock split is when a company turns each share into multiple shares (for example, 1 share becomes 2). The total value doesn’t change, but the price per share drops. A dividend is when a company pays shareholders (sometimes in cash, sometimes as extra shares). Both can make raw price charts look like they jump. Using both adjusted and unadjusted prices helps the model learn through those events.
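To make the jump concrete, here is a minimal sketch of back-adjusting prices around a split. The prices, the 2-for-1 ratio, and the helper name `back_adjust_for_split` are made up for illustration; real data vendors apply their own adjustment factors, which may also account for dividends.

```python
def back_adjust_for_split(prices, split_index, ratio):
    """Divide every price before split_index by the split ratio.

    prices: raw closing prices, oldest first.
    split_index: position of the first bar traded after the split.
    ratio: new shares per old share (2.0 for a 2-for-1 split).
    """
    return [p / ratio if i < split_index else p for i, p in enumerate(prices)]


# Hypothetical raw closes with a 2-for-1 split taking effect on day 2.
raw_close = [100.0, 102.0, 51.5, 52.0]
adj_close = back_adjust_for_split(raw_close, split_index=2, ratio=2.0)

# On raw prices the split day looks like a crash of roughly -49.5%.
raw_return = raw_close[2] / raw_close[1] - 1.0
# On adjusted prices the same day is a gain of roughly +1%.
adj_return = adj_close[2] / adj_close[1] - 1.0
```

The raw series shows a sudden halving that no investor actually experienced, while the adjusted series keeps the day-over-day change comparable across the event.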

The paper uses daily data for S&P 500 companies from a data provider (Intrinio) and cleans it to keep the 10 key columns (OHLCV and adjusted OHLCV). Then it scales all numbers to the same range so big values don’t overpower small ones.
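One common way to put columns on the same range is min-max scaling, sketched below. This is an assumption for illustration: the summary does not state which scaling formula the paper uses, and the sample numbers are invented.

```python
def min_max_scale(column):
    """Rescale one feature column to [0, 1]; a constant column maps to 0.0."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]


# Hypothetical values: prices are in the tens, volumes in the millions.
close = [50.0, 51.0, 51.5, 52.0]
volume = [1_200_000.0, 900_000.0, 1_500_000.0, 1_100_000.0]

scaled_close = min_max_scale(close)
scaled_volume = min_max_scale(volume)
```

After scaling, both columns live between 0 and 1, so volume no longer dominates price purely because of its units.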

Turning prices into “pictures”

Even though this isn’t a photo, the author treats a short window of days (for example, the last 30 days) across those 10 columns like a small “image.” Imagine stacking 10 strips (one for each feature like OPEN, CLOSE, VOLUME) side by side for each day, then sliding a window across time to create many overlapping “snapshots.” This is called a sliding window, and it gives the model lots of examples.

Data augmentation here means creating more of these snapshots by sliding the window one day at a time, which helps the model see more patterns.
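The sliding window can be sketched in a few lines. The toy table below has 6 days and 2 columns instead of the 10 columns described above, and the function name and `step` parameter are illustrative choices, not the paper's code.

```python
def sliding_windows(rows, window_size, step=1):
    """Return overlapping windows, each shaped [window_size][n_features]."""
    return [
        rows[start:start + window_size]
        for start in range(0, len(rows) - window_size + 1, step)
    ]


# Toy data: 6 days x 2 features (a stand-in for the 10 OHLCV columns).
rows = [[float(day), float(day) * 10.0] for day in range(6)]

# A 4-day window moved one day at a time yields 3 overlapping snapshots.
windows = sliding_windows(rows, window_size=4)
```

Each snapshot shares most of its days with its neighbors, which is why this produces many training examples from a short history.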

The model (CNN)

A CNN is great at spotting patterns, like edges in photos. Here, it tries to spot “patterns” in price and volume movements:

- It uses a 1D CNN (filters slide along time) with several layers (10 layers total).

- Early layers look for short-term patterns (like a quick rise or drop); deeper layers combine those into longer-term patterns.

- It outputs two probabilities: one for “bullish” (up) and one for “bearish” (down), and picks the higher one.
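To show what "filters slide along time" means, here is a plain-Python sketch of a single 1D convolution filter followed by a Leaky ReLU. The kernel size of 2, the stride of 1, the lack of padding, and the hand-picked filter weights are all assumptions for illustration; a trained network learns its weights and stacks many such filters.

```python
def leaky_relu(x, slope=0.01):
    """Pass positive values through; shrink negative values by `slope`."""
    return x if x > 0 else slope * x


def conv1d_valid(window, kernel, bias=0.0):
    """Slide one filter along time over a [T][C] window.

    kernel is shaped [K][C]; the output has T - K + 1 values
    (stride 1, no padding).
    """
    k = len(kernel)
    out = []
    for t in range(len(window) - k + 1):
        total = bias
        for dt in range(k):
            for c, weight in enumerate(kernel[dt]):
                total += weight * window[t + dt][c]
        out.append(leaky_relu(total))
    return out


# Toy window: 5 days x 2 channels (only channel 0 carries a signal here).
window = [[1.0, 0.0], [2.0, 0.0], [4.0, 0.0], [3.0, 0.0], [1.0, 0.0]]
# Hand-made filter that fires when channel 0 rises from one day to the next.
rise_filter = [[-1.0, 0.0], [1.0, 0.0]]

activations = conv1d_valid(window, rise_filter)
```

The filter gives large positive values on the rising days and small negative values on the falling ones, which is the kind of short-term pattern an early layer can pick up.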

To decide how good its guesses are, it uses a standard scoring method called cross-entropy loss. Training adjusts the model so its predicted probabilities better match the true labels (up or down).
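A minimal sketch of this scoring, assuming a two-class output where index 0 means "up" and index 1 means "down" (that encoding and the sample scores are assumptions):

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, true_class):
    """Negative log of the probability assigned to the true class."""
    return -math.log(probs[true_class])


probs = softmax([2.0, 0.0])  # the model leans towards "up"

loss_if_up = cross_entropy(probs, true_class=0)    # small penalty
loss_if_down = cross_entropy(probs, true_class=1)  # large penalty
```

The loss is small when the model put high probability on what actually happened and grows quickly when it was confidently wrong.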

Training and testing

The author:

- Splits data into training, validation, and test sets.

- Tries different settings (learning rate, batch size, number of filters) to tune performance.

- Uses a “softmax” output so predictions are probabilities that add up to 1 (e.g., 70% up, 30% down).

- Measures accuracy (how often it predicts the correct direction) and monitors loss (how far predictions are from the truth).
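The split and the accuracy measure above can be sketched as follows. The 70/15/15 percentages, the in-order split, and the class encoding (0 for up, 1 for down) are assumptions for illustration; the summary does not give the paper's exact proportions.

```python
def split_in_order(samples, train_pct=70, val_pct=15):
    """Split samples into train/validation/test without reordering them."""
    n = len(samples)
    n_train = n * train_pct // 100
    n_val = n * val_pct // 100
    return (
        samples[:n_train],
        samples[n_train:n_train + n_val],
        samples[n_train + n_val:],
    )


def accuracy(probabilities, labels):
    """probabilities: list of [p_up, p_down]; labels: 0 for up, 1 for down."""
    predicted = [0 if p[0] >= p[1] else 1 for p in probabilities]
    hits = sum(1 for y_hat, y in zip(predicted, labels) if y_hat == y)
    return hits / len(labels)


train, val, test = split_in_order(list(range(20)))

# Hypothetical softmax outputs and true directions for four test samples.
test_probs = [[0.7, 0.3], [0.4, 0.6], [0.55, 0.45], [0.2, 0.8]]
test_labels = [0, 1, 1, 1]
test_accuracy = accuracy(test_probs, test_labels)
```

Here the model gets three of four directions right, so `test_accuracy` is 0.75.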

What did they find and why is it important?

The main idea: treating raw stock data like a multi-channel time “image” and using a CNN can work surprisingly well.

Highlights:

- Earlier “base” models (from other work) often had accuracies around 38–48% without special features; a starting baseline similar to this project hit roughly 69%.

- After redesigning and tuning the CNN here (more layers, better data handling, and careful hyperparameters), the model’s best result on some tests reached about 91% accuracy for predicting JPMorgan’s 30-day-ahead direction. Other experiments on different stocks, sectors, and time horizons also showed “promising” accuracy curves.

Why it matters:

- If a model can reliably predict direction better than chance, it could help build trading strategies, manage risk, and construct portfolios.

- Using raw data reduces the need for hand-crafted financial indicators, making the approach simpler to apply across many stocks and markets.

Important caution:

- High accuracy in experiments doesn’t guarantee real trading profits. Markets are noisy and change over time, and models can overfit past data. Real-world testing (with transaction costs and changing conditions) is essential.
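A toy calculation shows why accuracy and profit can diverge. All numbers below are invented: eight small winning trades, two larger losing trades, and a flat 0.1% round-trip cost per trade.

```python
def compounded_return(trade_returns, cost_per_trade=0.0):
    """Compound per-trade returns after subtracting a fixed cost per trade."""
    equity = 1.0
    for r in trade_returns:
        equity *= 1.0 + r - cost_per_trade
    return equity - 1.0


# Hypothetical trades: the direction call is right 8 times out of 10.
trades = [0.006] * 8 + [-0.02] * 2

hit_rate = sum(1 for r in trades if r > 0) / len(trades)
gross = compounded_return(trades)                       # before costs
net = compounded_return(trades, cost_per_trade=0.001)   # after costs
```

With these made-up figures the strategy is right 80% of the time and slightly profitable before costs, yet it loses money once costs are subtracted.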

What’s the bigger impact?

If refined and tested carefully in live markets, this approach could:

- Help investors and funds forecast stock or sector trends.

- Scale to the full S&P 500 and even to other markets like foreign exchange or commodities.

- Be combined with other techniques (like LSTMs for memory of longer histories, or NLP to read news and social media sentiment) for potentially stronger results.

- Extend to more complex products like options and futures.

In simple terms: the paper shows that a smart pattern-spotting tool from image recognition (CNNs) can also spot useful patterns in stock price histories. It’s an encouraging step, but turning it into a reliable, real-world trading edge would require more testing, careful risk checks, and ongoing updates as markets change.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

Below is a concise list of unresolved issues that future research could address to strengthen, validate, and operationalize the proposed CNN-based approach for S&P 500 stock movement prediction.

- Labeling scheme is underspecified:

- What exact horizon(s) define “BUY/SELL” (T+2, T+5, T+30 are mentioned inconsistently)?

- How is “absolute return” computed and thresholded for bullish/bearish labels?

- Is the labeling volatility-adjusted (e.g., returns scaled by ATR) and does class imbalance exist?

- Evaluation protocol risks time-series leakage:

- Data “split and shuffle” with sklearn and global normalization likely leak future information.

- No walk-forward or rolling-origin evaluation; no strictly chronological train/validation/test splits.

- Clarify whether normalization parameters are fit on train only (and applied to val/test).

- Dataset coverage and potential survivorship bias:

- Uses “current stock consists in S&P 500” rather than historical constituents; quantify survivorship bias.

- How many tickers and years per ticker are included in training/testing? Which sectors?

- Are delisted firms and index membership changes handled?

- Corporate actions handling is unclear and potentially inconsistent:

- Using both adjusted and unadjusted OHLCV while dropping EX_DIVIDEND and SPLIT_RATIO may cause redundancy or leakage.

- Demonstrate that the model indeed learns split/dividend effects (e.g., targeted ablation, event studies).

- Clarify how discontinuities around splits/dividends are treated in labels and windows.

- Normalization and preprocessing specifics are missing:

- The normalization formula is ambiguous and lacks detail on per-feature/per-stock normalization scope.

- Handling of missing values, outliers, extreme volume spikes, and bad ticks is not described.

- Sliding-window augmentation may inflate performance:

- Overlapping windows can create near-duplicate samples across splits, boosting apparent accuracy.

- Define non-overlapping out-of-sample windows and report performance stability across contiguous test periods.

- Model architecture details are incomplete or inconsistent:

- Kernel size, stride, padding, window_size (days), and receptive field are not explicitly specified.

- Final activation/loss is contradictory (Softmax with “binary Logistic Regression” vs “finally a sigmoid”).

- Dropout usage (“keep prob 0.6”) is mentioned but not placed or justified; no L2 regularization details.

- Hyperparameter search and reproducibility:

- Tuning is ad hoc (“babysitting”); define a systematic search (e.g., Bayesian optimization) with time-series CV.

- Provide random seeds, parameter counts, training hardware, and training time to enable replication.

- Baseline comparisons are inadequate:

- No direct, fair benchmarks on the same data/horizon (e.g., ARIMA, logistic regression with technical indicators, LSTM/GRU, gradient boosting).

- Include naive baselines (random, majority class, previous-day direction) to contextualize gains.

- Performance metrics are insufficient for finance:

- Report precision/recall, F1, ROC/AUC, balanced accuracy, Brier score, and probability calibration.

- Provide confidence intervals and statistical significance tests across multiple runs and periods.

- Economic evaluation is absent:

- No backtested returns, Sharpe ratio, drawdowns, turnover, hit ratio, or profit factor.

- No transaction costs, slippage, liquidity constraints, or shorting/borrowing assumptions.

- Define entry/exit rules, thresholds, holding periods, position sizing, and risk management.

- Generalization and robustness are untested:

- Performance across market regimes (e.g., 2008 crisis, 2020 COVID), volatility regimes, and structural breaks is unknown.

- Cross-ticker generalization (train on subset, test on unseen tickers/sectors) is not evaluated.

- Sensitivity analyses to hyperparameters, window sizes, and channel sets are missing.

- Multi-horizon predictability and modeling choices:

- Clarify whether separate models or a single multi-task model are used for T+2/T+5/T+30.

- Compare single-horizon vs multi-horizon architectures; report trade-offs.

- Cross-sectional information is unused:

- The model operates per ticker; explore “space-time” CNNs that exploit inter-stock correlations and sector co-movement.

- Quantify gains from cross-sectional features versus purely univariate inputs.

- Class imbalance and calibration:

- Assess bullish/bearish class ratios; consider class weights, focal loss, or re-sampling.

- Evaluate calibration (e.g., reliability curves) and post-hoc calibration methods (Platt scaling, isotonic).

- Interpretability and feature attribution:

- Provide filter/feature visualizations (e.g., Grad-CAM, integrated gradients) to identify learned patterns.

- Test whether learned features align with economically meaningful signals (momentum, reversal, volume surges).

- Model adaptation to non-stationarity:

- Define re-training frequency (online vs periodic), drift detection, and adaptation strategies.

- Compare fixed vs rolling normalization and rolling re-fitting.

- Portfolio construction and sector/portfolio results:

- Translate per-ticker signals into portfolios; specify weighting schemes, diversification, and risk controls.

- Present sector-level and portfolio backtests versus benchmarks (SPY, sector ETFs).

- Data integrity and provider dependence:

- Quantify data quality (missingness, feed latency, corrections) and impact on signals.

- Ensure results are not provider-specific; replicate on alternative vendors or public datasets.

- External/exogenous variables:

- The model excludes macro/news/sentiment; quantify incremental value from exogenous features (NLP, macro indicators) in controlled experiments.

- Operational constraints and deployment:

- Clarify inference latency, compute footprint, and the feasibility of real-time/near-real-time deployment.

- Define model monitoring KPIs and re-calibration triggers.

- Documentation and code availability:

- Release code, model configs, and experiment logs; specify licensing and data-access steps to enable independent verification.

These gaps point to concrete steps—rigorous time-series evaluation, robust baselines, economic backtesting, detailed documentation, and targeted ablations—that are necessary to validate claims, assess practical utility, and guide future extensions of the proposed approach.
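As one possible starting point for the time-series evaluation step, the sketch below combines a chronological split that leaves a gap between training and test samples with scaling parameters fit on the training period only. The function names, the indexing convention (sample `i` reads days `i` to `i + window_size - 1` and is labeled `horizon` days after its last input day), and the numbers are assumptions, not the paper's protocol.

```python
def purged_split(n_samples, train_end, window_size, horizon):
    """Chronological split with a gap so train and test never share days.

    The last training sample touches days up to
    train_end - 1 + window_size - 1 + horizon, so the first test sample
    starts one day later.
    """
    gap = window_size - 1 + horizon
    train_idx = list(range(train_end))
    test_idx = list(range(train_end + gap, n_samples))
    return train_idx, test_idx


def fit_min_max(train_values):
    """Learn scaling bounds from the training period only."""
    return min(train_values), max(train_values)


def apply_min_max(values, lo, hi):
    """Scale with previously fitted bounds; test values may fall outside [0, 1]."""
    span = (hi - lo) or 1.0
    return [(v - lo) / span for v in values]


train_idx, test_idx = purged_split(
    n_samples=50, train_end=30, window_size=10, horizon=5
)

# Hypothetical closes: the first four days are training, the last two are test.
closes = [10.0, 11.0, 12.0, 13.0, 14.0, 15.0]
lo, hi = fit_min_max(closes[:4])
test_scaled = apply_min_max(closes[4:], lo, hi)
```

Scaled test values above 1.0 are expected here: they signal that the test period moved beyond the range seen in training, which is information a globally fitted scaler would have leaked.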

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, leveraging the paper’s 1D-CNN on multivariate raw OHLCV and adjusted OHLCV data to produce probabilistic bullish/bearish signals at multiple horizons (e.g., T+2/T+5/T+30).

- Finance — Algorithmic trading signals for U.S. large-cap equities

- Use the model’s softmax probabilities to drive buy/sell/hold decisions for individual S&P 500 constituents across different forecast horizons; convert probabilities to orders via thresholds and position-sizing rules.

- Potential tools/products/workflows: Signal-generation microservice API; daily ETL from a data provider (e.g., Intrinio), normalization and sliding-window inference; backtesting engine that includes transaction costs; broker integration via FIX/REST.

- Assumptions/dependencies: Reliable daily OHLCV and corporate-action-adjusted data; robust out-of-sample validation (avoiding leakage from adjustments); calibration of thresholds; slippage/fees modeled; compliance with trading regulations.

- Finance/Software — Sector momentum heatmaps and dashboards

- Aggregate stock-level probabilities to sector-level scores (e.g., “TECH sector T+3 momentum”), supporting tactical allocation and analyst workflows.

- Potential tools/products/workflows: Sector Momentum Dashboard; batch jobs to compute sector aggregates; analyst portal integrations.

- Assumptions/dependencies: Correct sector mappings; adequate stock coverage per sector; monitoring for regime changes.

- Finance — Portfolio construction and screening

- Rank S&P 500 constituents by bullish probability to build long-only or long/short portfolios; use probabilities as alpha inputs in optimizers with risk constraints.

- Potential tools/products/workflows: Portfolio optimizer using predicted probabilities; risk overlays (e.g., volatility targeting); turnover controls.

- Assumptions/dependencies: Risk model availability; diversification/constraint handling; stability of signals; realistic backtests with costs.

- Finance — Risk management and hedging triggers

- Employ bearish-probability spikes as early warning signals to hedge (e.g., index futures or options) at the portfolio or sector level.

- Potential tools/products/workflows: Hedging playbooks; OMS integration; alerting when softmax bearish confidence exceeds thresholds.

- Assumptions/dependencies: Hedge instrument liquidity; false-positive management; proper escalation workflows.

- Retail investing/Daily life — Trend badges and alerts in consumer apps

- Provide simplified “Bullish/Bearish likelihood” labels and push notifications when probabilities cross thresholds; curate watchlists based on model signals.

- Potential tools/products/workflows: Mobile app features; scheduled daily inference; user preference/threshold settings; education content explaining OHLCV-based signals.

- Assumptions/dependencies: Clear disclaimers; suitability and risk disclosures; conservative default thresholds; data latency control.

- Academia/Education — Teaching and reproducible labs

- Use the model to teach time-series deep learning (1D-CNN vs. LSTM/ARIMA), corporate-action handling, and hyperparameter tuning; replicate reported accuracy (e.g., JPM T+30 ~91%) and compare across assets.

- Potential tools/products/workflows: Jupyter notebooks; reproducible pipelines (normalization, sliding window augmentation); structured assignments and benchmarks.

- Assumptions/dependencies: Licensed datasets; accessible compute; standardized evaluation protocols (train/val/test splits).

- Software/Finance — Corporate actions ETL and preprocessing library

- Package the paper’s adjusted vs. unadjusted price handling into a reusable module for robust pipelines that avoid leakage and misalignment around splits/dividends.

- Potential tools/products/workflows: CorporateActions ETL; normalization and augmentation utilities; unit tests for event boundaries.

- Assumptions/dependencies: High-quality corporate action feeds; careful timestamp alignment; consistent adjustment factors.

- Software/MLOps — Model deployment and monitoring

- Operationalize the CNN with monitoring for drift, calibration, and performance by horizon; automate retraining and validation using sliding windows.

- Potential tools/products/workflows: CI/CD for models; daily retraining schedules; cross-entropy/loss dashboards; alerting on degradation.

- Assumptions/dependencies: Governance and access controls; reproducibility (seed management, data versioning); resource allocation for training.

- Industry/Data providers — Value-added “trend probability” APIs

- Bundle the CNN outputs with market data (e.g., via Intrinio) as a premium API for quants and app developers.

- Potential tools/products/workflows: SLA-backed API endpoints; documentation and SDKs; client onboarding and sandboxing.

- Assumptions/dependencies: Licensing agreements; rate limits and scaling; support for multiple horizons and sectors.
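Several of the use cases above rest on the same two steps: turning a bullish probability into a discrete signal and averaging probabilities by sector. A minimal sketch follows, with hypothetical thresholds (0.6 and 0.4), invented probabilities, and a hand-written sector mapping.

```python
def to_signal(p_bullish, buy_above=0.6, sell_below=0.4):
    """Map a bullish probability to BUY, SELL, or HOLD using fixed thresholds."""
    if p_bullish >= buy_above:
        return "BUY"
    if p_bullish <= sell_below:
        return "SELL"
    return "HOLD"


def sector_scores(p_bullish, sector_of):
    """Average bullish probability per sector."""
    totals, counts = {}, {}
    for ticker, p in p_bullish.items():
        sector = sector_of[ticker]
        totals[sector] = totals.get(sector, 0.0) + p
        counts[sector] = counts.get(sector, 0) + 1
    return {sector: totals[sector] / counts[sector] for sector in totals}


# Invented model outputs, not results from the paper.
p_bullish = {"JPM": 0.72, "BAC": 0.58, "AAPL": 0.35}
sector_of = {"JPM": "Financials", "BAC": "Financials", "AAPL": "Technology"}

signals = {ticker: to_signal(p) for ticker, p in p_bullish.items()}
scores = sector_scores(p_bullish, sector_of)
```

In practice the thresholds would need calibration against out-of-sample results, and position sizing, costs, and risk limits would sit on top of this step.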

Long-Term Applications

The following use cases require further research, scaling, or productization—often extending the model to new assets, modalities, or governance constraints.

- Finance — Derivatives and options strategy engines

- Use directional probabilities to inform delta-hedged trades, spreads, and event-aware strategies around splits/dividends; explore implied volatility forecasting with enhanced inputs.

- Potential tools/products/workflows: Options strategy optimizer; Greeks and risk scenario analysis; execution simulators.

- Assumptions/dependencies: Robust calibration; liquidity and slippage modeling; path-dependency considerations; enhanced validation across market regimes.

- Cross-asset forecasting (Energy, Commodities, FX)

- Adapt the CNN to multivariate time series for commodities (e.g., gas, precious metals) and FX; explore intraday horizons and additional channels (e.g., futures curves).

- Potential tools/products/workflows: Global Market Trend API; cross-asset dashboards; multi-market ETL pipelines.

- Assumptions/dependencies: Domain-specific microstructure; higher-frequency data quality; potentially different normalization and windowing strategies.

- Finance — S&P 500 index-level forecasting and tactical ETF overlays

- Train on the full S&P 500 to produce index movement probabilities and drive tactical overlays for index funds/ETFs.

- Potential tools/products/workflows: Index-level signal services; overlay fund modules; allocator-friendly reporting.

- Assumptions/dependencies: Robust aggregation methods; exposure controls; long-horizon validation across multiple cycles.

- Finance/Software — Hybrid models combining CNN + LSTM + NLP

- Integrate sentiment analysis (news/social) and memory (LSTM) to incorporate fundamentals and event context; move beyond price-only inputs.

- Potential tools/products/workflows: Real-time news ingestion; sentiment scoring; hybrid architecture orchestration; feature store for multimodal inputs.

- Assumptions/dependencies: High-quality text data and labeling; streaming infrastructure; careful fusion to avoid overfitting; explainability for mixed modalities.

- Policy/Industry — Model risk management and explainability toolkits

- Develop standardized interpretability for time-series CNNs (e.g., SHAP-like methods for channels and windows), probability calibration checks, and audit trails for regulatory reviews.

- Potential tools/products/workflows: Explainability dashboards; calibration plots; governance workflows; documentation templates.

- Assumptions/dependencies: Ongoing research into time-series interpretability; regulator acceptance of methodologies; internal audit readiness.

- Finance/Software — Regime detection and adaptive retraining

- Build meta-learning systems to detect regime shifts and adapt hyperparameters (window size, learning rate) and thresholds automatically.

- Potential tools/products/workflows: Drift detectors; AutoML for time series; scheduler-driven reconfiguration; safety constraints.

- Assumptions/dependencies: Adequate data for reliable detection; guardrails to prevent instability; continuous validation.

- Finance — Robo-advisory and dynamic asset allocation

- Use sector-level probabilities to dynamically tilt allocations (momentum overlays, risk parity adjustments) in retail and institutional robo-advisory products.

- Potential tools/products/workflows: Allocation engines; client personalization; suitability and risk controls; multi-horizon blending.

- Assumptions/dependencies: Regulatory approvals; multi-year performance evidence; robust downturn handling.

- Industry — Enterprise productization (“Trend-as-a-Service”)

- Deliver a comprehensive platform: APIs, dashboards, batch and streaming pipelines, connectors to OMS/EMS, client-specific calibration.

- Potential tools/products/workflows: Multi-tenant architecture; usage analytics; customer success playbooks.

- Assumptions/dependencies: Security and compliance; scalability; SLAs; support teams.

- Academia — Benchmarks and challenges for financial time-series DL

- Establish standardized datasets and evaluation protocols (including transaction costs) to benchmark 1D-CNN against LSTM, ARIMA, and hybrids; host community competitions.

- Potential tools/products/workflows: Open datasets (or synthetic proxies); leaderboards; reproducibility checklists; reference implementations.

- Assumptions/dependencies: Data licensing; consensus on metrics (risk-adjusted returns, drawdown); peer review processes.

- Policy — Standards for ML-driven financial advice and consumer protection

- Create guidelines for disclosure, calibration, and suitability for ML-generated signals in retail contexts; define guardrails for app-based recommendations.

- Potential tools/products/workflows: Certification frameworks; compliance checklists; monitoring for bias across sectors.

- Assumptions/dependencies: Regulator engagement; industry adoption; ongoing audits and transparency requirements.

Glossary

- Adam optimizer: A gradient-based optimization algorithm that adapts learning rates using estimates of first and second moments of gradients. "Optimizers: SGD, Adam optimizer"

- Adjusted OHLCV: Price and volume data adjusted for corporate actions (dividends/splits) to reflect continuous value. "OHLCV and adjusted OHLCV are most critical raw numbers that a stock/equity has prices comprises along with few others such as market capitalization, earning, etc."

- ARIMA: A classical time-series forecasting model combining autoregression and moving averages with differencing. "A recent study shows that LSTM outperforms traditional-based algorithms such as ARIMA model [4]"

- Autoregressive model: A statistical model where current values depend on a number of prior values in the series. "compared to that of the well-known autoregressive model and a long-short-term memory network."

- Batch normalization (BatchNorm): A technique to stabilize and accelerate training by normalizing layer inputs. "rest of Conv layers has a Leaky RELU unit and BatchNorm layer attached"

- Bearish trend: A downward market movement or expectation of falling prices. "bullish or bearish trend of the equities."

- Binary Logistic Regression: A probabilistic classification method modeling the log-odds of a binary outcome. "Softmax classifier, which has a loss function based on binary Logistic Regression, has been used."

- Bullish trend: An upward market movement or expectation of rising prices. "bullish or bearish trend of the equities."

- Convolution Neural Network (CNN): A deep learning architecture that learns spatial features via convolutional filters. "Convolution Neural Network (CNN), the best-known tool so far for image classification, is used"

- Conv1D (1D convolution): A convolutional layer operating across one dimension (e.g., time) to extract local patterns. "Conv1D_1 (9, 10, 128)"

- ConvNet: A convolutional neural network architecture arranging neurons in spatial dimensions. "A ConvNet arranges its neurons in three dimensions (width, height, depth), as visualized below in one of the layers."

- Cross-entropy loss: A loss function measuring the difference between predicted probabilities and true labels. "Cross-entropy loss, or log loss, measures the performance of a classification model whose output is a probability value between 0 and 1."

- Data augmentation: Techniques to expand training data by transforming inputs to improve generalization. "Data augmentation is used to create multiple images by sliding the window by number days to predict."

- Derivatives Market: Markets for financial contracts whose value derives from underlying assets (e.g., options, futures). "Derivatives Market for Options and Futures trading."

- Dilated convolutions: Convolutions with spaced kernels to expand receptive fields without increasing parameters. "stacks of dilated convolutions that allow it to access a wide-ranging history when forecasting"

- Dropout: A regularization technique that randomly disables neurons during training to reduce overfitting. "adding layers and biases, dropouts, splitting the data into train- validation-test sets"

- ETF (Exchange-Traded Fund): A pooled investment security that trades on exchanges like a stock. "stocks and ETFs in NYSE or NASDAQ."

- Ex-dividend: The state/date when a stock trades without the right to the next dividend payment. "EX_DIVIDEND, SPLIT_RATIO, ADJ_OPEN, ADJ_HIGH, ADJ_LOW, ADJ_CLOSE, ADJ_VOLUME, ADJ_FACTOR."

- Fundamental Analysis: Evaluating securities using economic, financial, and qualitative factors. "thus putting Fundamental Analysis as well in play"

- Gating mechanisms: RNN components that control information flow (e.g., input/forget/output gates). "gating mechanisms used in recurrent neural networks."

- Hedge: A strategy to reduce or offset risk in investments. "hedge their business risks."

- Hinge loss: A margin-based loss used in classification (notably SVMs). "replace the hinge loss with a cross-entropy loss"

- Hyperparameters: Configurable training parameters (e.g., learning rate, batch size) set before training. "Following are the ranges of input and hyperparameters that used are performance tuning and babysitting the model:"

- Large-cap: Corporations with large market capitalization. "large-cap sector of the U.S. market."

- Leaky ReLU: An activation function allowing a small, non-zero gradient when inputs are negative. "rest of Conv layers has a Leaky RELU unit"

- Liquidity: The ease with which an asset can be bought or sold without affecting its price. "Stock splits are usually done to increase the liquidity of the stock"

- Log loss: Another name for cross-entropy loss, penalizing confident wrong predictions. "Cross-entropy loss, or log loss, measures the performance of a classification model"

- Long Short-Term Memory (LSTM): A type of RNN designed to capture long-term dependencies via gated memory cells. "Another Deep Learning approach, LSTM (stands for Long-Short- Term-Memory), a prominent variation of Recurrent Neural Network or RNN"

- Market capitalization: The total value of a company’s shares (price × shares outstanding). "is a weighted index of the 500 largest U.S. publicly traded companies market capitalization."

- Maximum Likelihood Estimation (MLE): A method to estimate parameters by maximizing the likelihood of observed data. "Maximum Likelihood Estimation (MLE)."

- Multivariate time series: Time-dependent data with multiple variables observed simultaneously. "The performance of the CNN is analyzed on various multivariate time series"

- OHLCV: Open, High, Low, Close, and Volume data summarizing trading for a time interval. "open-high- low-close-volume (or OHLCV in short)"

- Online training: Incremental model training as data arrives, rather than in a single batch. "many problems require "online" training"

- Options and Futures: Derivative contracts giving rights/obligations to transact assets at set terms in the future. "Options and Futures trading."

- Overfitting: When a model memorizes training data patterns and fails to generalize. "prevent the overfitting."

- Regularization term: A penalty in the loss function to discourage complex models and reduce overfitting. "together with a regularization term R(W)."

- Recurrent Neural Network (RNN): A neural architecture for sequential data with recurrent connections. "Recurrent Neural Network or RNN"

- Rolling window: A fixed-size moving segment of sequential data used for modeling/prediction. "the rolling-window (the number of days of the data model is looking at in one batch)"

- SGD (Stochastic Gradient Descent): An optimization method updating parameters using mini-batches or single samples. "Optimizers: SGD, Adam optimizer"

- Sigmoid activation: An S-shaped function mapping inputs to (0, 1) used for probabilistic outputs. "finally a sigmoid activation to result in a tensor sized [batch_size, output_size]"

- Significance-Offset Convolutional Neural Network: A CNN architecture for multivariate asynchronous time-series regression. "Significance-Offset Convolutional Neural Network, a deep convolutional network architecture for regression of multivariate asynchronous time series."

- Softmax classifier: A classifier producing normalized class probabilities via the softmax function. "Softmax classifier, which has a loss function based on binary Logistic Regression, has been used."

- Space-Time Convolutional Neural Network (ST-CNN): A CNN architecture modeling spatial and temporal dependencies in time series. "Space-Time Convolutional Neural Network (ST-CNN) and Space-Time Convolutional and Recurrent Neural Network (STaR)."

- Space-Time Convolutional and Recurrent Neural Network (STaR): A hybrid CNN-RNN model capturing space-time features. "Space-Time Convolutional and Recurrent Neural Network (STaR)."

- Sliding window approach: A method to predict future values using sequential windows of past data. "applying a sliding window approach for predicting future values."

- Stock dividends: Share distributions to shareholders instead of cash, increasing shares outstanding. "Stock dividends are like cash dividends and effects company's stock price; however, instead of cash, a company pays out stock [17]."

- Stock splits: Corporate actions dividing shares to lower price and improve liquidity. "stock splits occur when a company perceives that its stock price may be too high"

- Vectorized calculations: Batch computations using vector/matrix operations for efficiency. "vectorized calculations in a batch"

- WaveNet: A deep convolutional architecture for sequence modeling using dilated convolutions. "deep convolutional WaveNet architecture."

- Wavelets: Mathematical functions for multi-resolution signal analysis. "combination of wavelets and CNN"

- Weighted index: An index where constituents are weighted (often by market cap) rather than equally. "is a weighted index of the 500 largest U.S. publicly traded companies market capitalization."
