Disordered Dynamics in High Dimensions: Connections to Random Matrices and Machine Learning

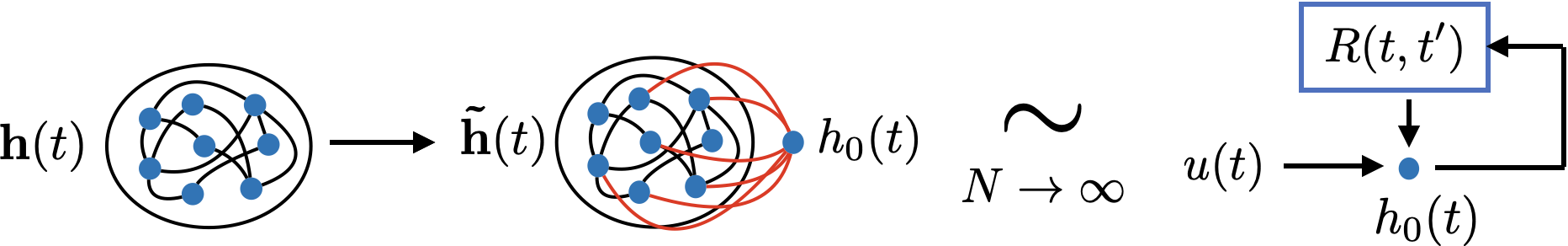

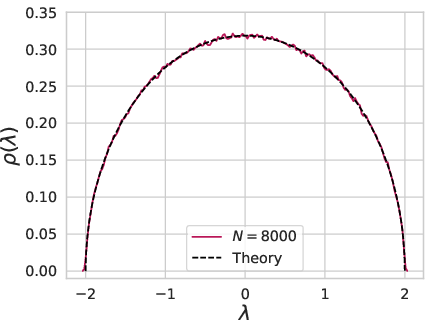

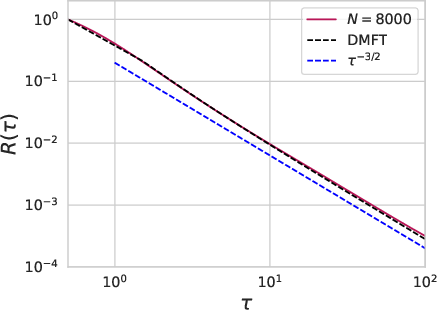

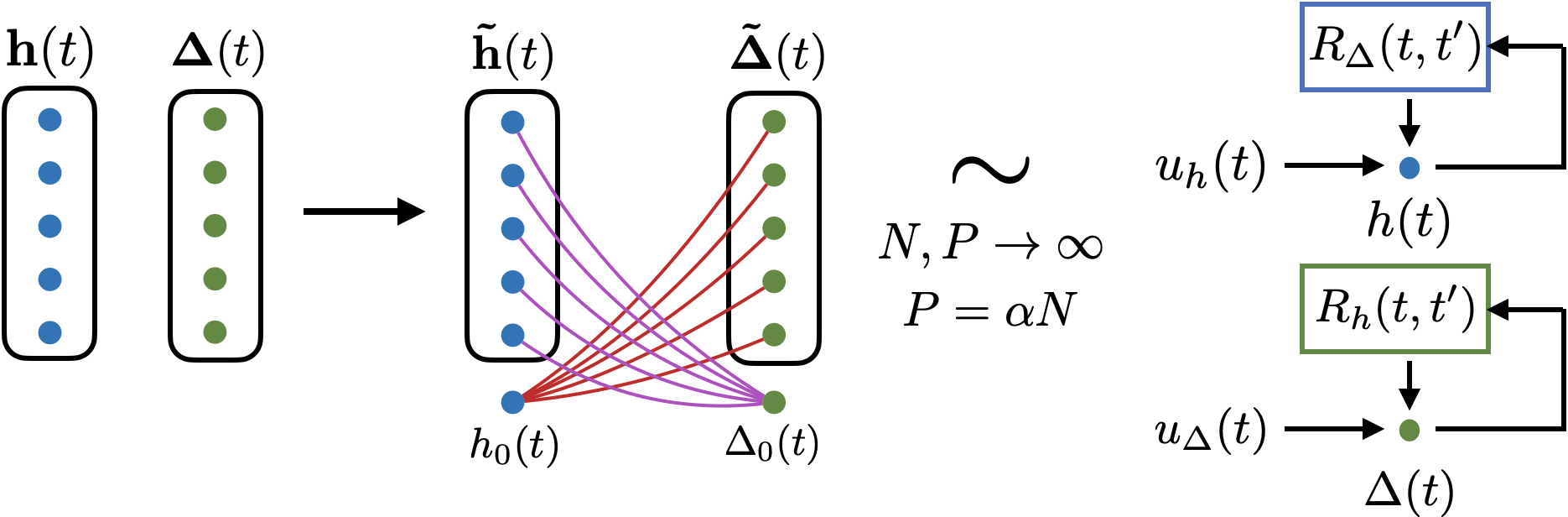

Abstract: We provide an overview of high-dimensional dynamical systems driven by random matrices, focusing on applications to simple models of learning and generalization in machine learning theory. Using both cavity method arguments and path integrals, we review how the behavior of a coupled infinite-dimensional system can be characterized as a stochastic process for each single site of the system. We provide a pedagogical treatment of dynamical mean field theory (DMFT), a framework that can be flexibly applied to these settings. The DMFT single-site stochastic process is fully characterized by a set of two-time correlation and response functions. For linear time-invariant systems, we illustrate connections between random matrix resolvents and the DMFT response. We demonstrate applications of these ideas to machine learning models such as gradient flow, stochastic gradient descent on random feature models, and deep linear networks in the feature learning regime trained on random data. We demonstrate how bias and variance decompositions (for example, the analysis of ensembling and bagging) can be computed by averaging over subsets of the DMFT noise variables. Using our formalism, we also investigate how linear systems driven by random non-Hermitian matrices (such as random feature models) can exhibit loss curves that are non-monotonic in training time, while Hermitian matrices with matching spectra do not. This highlights a mechanism for non-monotonicity that is distinct from small eigenvalues causing instability to label noise. Lastly, we provide asymptotic descriptions of the training and test loss dynamics for randomly initialized deep linear neural networks trained in the feature learning regime with high-dimensional random data. In this case, time-translation invariance is lost and the hidden layer weights are characterized as spiked random matrices.
