Self-Supervised Learning with Noisy Dataset for Rydberg Microwave Sensors Denoising

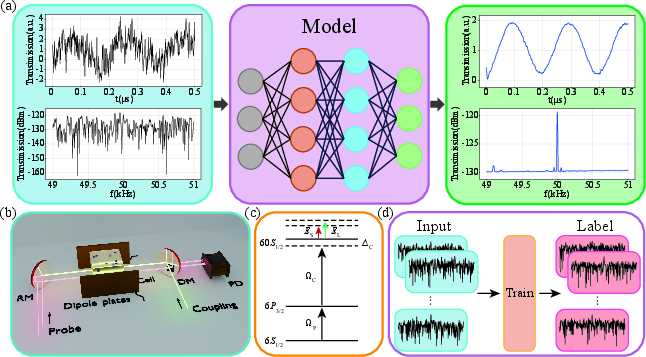

Abstract: We report a self-supervised deep learning framework for Rydberg sensors that enables single-shot noise suppression matching the accuracy of multi-measurement averaging. The framework eliminates the need for clean reference signals (which are hard to obtain in quantum sensing) by training on two sets of noisy signals with identical statistical distributions. When evaluated on Rydberg sensing datasets, the framework outperforms wavelet transform and Kalman filtering, achieving a denoising effect equivalent to 10,000-set averaging while reducing computation time by three orders of magnitude. We further validate performance across diverse noise profiles and quantify the complexity-performance trade-off of U-Net and Transformer architectures, providing actionable guidance for optimizing deep learning-based denoising in Rydberg sensor systems.

Explain it Like I'm 14

Overview

This paper is about cleaning up noisy signals from a special kind of sensor that uses Rydberg atoms to detect microwaves. Think of Rydberg atoms as super-sensitive “atomic antennas.” They can pick up very weak microwave signals, which is useful for things like wireless communication and radar. But in real-life environments, these signals get buried under lots of noise (like static on a radio). The authors built a deep learning method that removes this noise from a single measurement about as well as averaging 10,000 measurements does, but much faster.

What questions does the paper ask?

- How can we clean noisy signals from Rydberg atom microwave sensors quickly and accurately without needing “perfect” clean signals to train on?

- Can deep learning beat traditional noise filters (like wavelets and Kalman filters) when the noise changes over time and isn’t predictable?

- What kind of deep learning model works best, and how does model complexity (simple vs. advanced) affect performance and speed?

How did they do it? (Methods explained simply)

First, here’s how the sensor works:

- The sensor uses a gas of cesium atoms and two lasers to excite the atoms into a Rydberg state. In this state, the atoms become very sensitive to electric fields.

- The sensor listens for a microwave signal under test (SUT). It mixes that signal with a nearby “local” microwave tone and produces a new, fixed-frequency “beat” signal (called an intermediate frequency, or IF, around 50 kHz). This makes the weak microwave easier to measure, like tuning to a known station so you can hear a whisper.

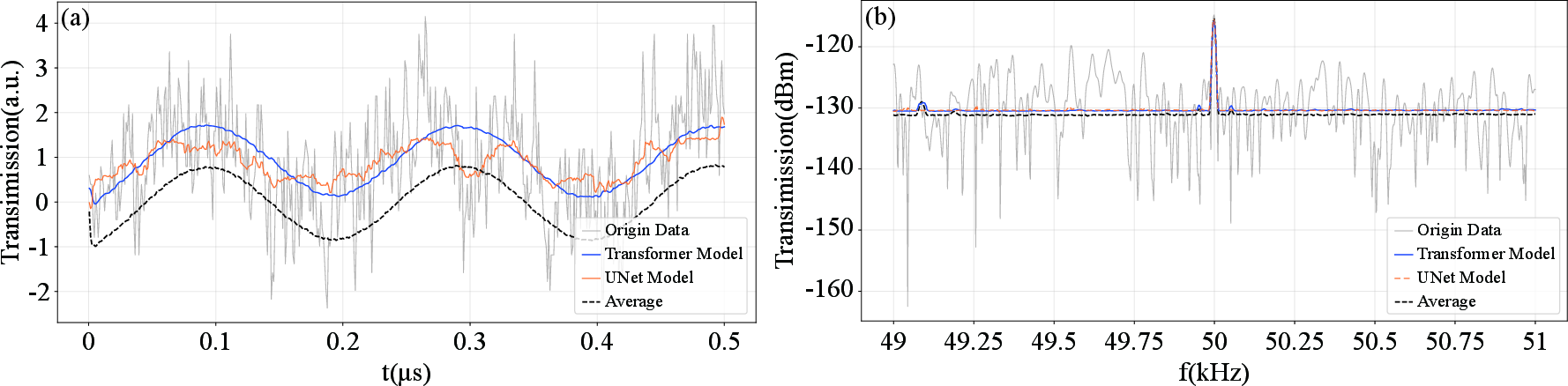

- They record the sensor’s output both over time (time domain) and across frequencies (frequency domain) using an oscilloscope and a spectrum analyzer.
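
The mixing step described above can be sketched numerically. This is a toy illustration, not the authors' setup: the carrier frequencies are scaled down to about 1 MHz (real microwave signals are far higher), the atoms' response is modeled as a plain square-law detector, and every variable name is made up for the example. Only the 50 kHz IF value comes from the paper.

```python
import numpy as np

# Toy numbers (assumptions): real carriers are far above 1 MHz.
fs = 10_000_000          # sample rate in Hz
n = 20_000               # 2 ms of samples, so FFT bins are 500 Hz apart
t = np.arange(n) / fs

f_lo = 1_000_000         # stand-in for the local microwave tone
f_if = 50_000            # intermediate frequency reported in the paper
f_sut = f_lo + f_if      # signal under test sits 50 kHz above the local tone

lo = np.cos(2 * np.pi * f_lo * t)
sut = 0.05 * np.cos(2 * np.pi * f_sut * t)   # much weaker than the local tone

# Square-law detection (assumed model): squaring the sum creates a term
# at the difference frequency f_sut - f_lo, which is the beat note.
detected = (lo + sut) ** 2

# Frequency-domain view: drop the DC offset and look below 200 kHz,
# where only the beat note should remain.
spectrum = np.abs(np.fft.rfft(detected - detected.mean()))
freqs = np.fft.rfftfreq(n, d=1 / fs)
low_band = freqs < 200_000
beat_freq = freqs[low_band][np.argmax(spectrum[low_band])]

print(f"strongest low-frequency component: {beat_freq:.0f} Hz")
```

The weak signal never has to be measured at its own high frequency; its strength shows up in the size of the slow 50 kHz beat, which ordinary lab instruments can record.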

Now, here’s the deep learning trick:

- Normally, deep learning needs pairs of noisy and clean signals to learn what “clean” looks like. But in quantum sensing, you almost never have clean signals.

- So they used self-supervised learning: they train the model using two separate measurements of the same signal that both contain noise. Each pair is “noisy vs. noisy,” but the noise is random and changes from one measurement to the next.

- Analogy: Imagine you take two photos of the same scene with a phone in low light. Each photo is grainy in slightly different ways. If a model looks at many such pairs, it learns what stays the same (the true scene) and what changes randomly (the noise). Over many examples, the model learns to focus on the stable parts and ignore the random fuzz.

- They tested two kinds of models:

  - A Transformer (a powerful attention-based model that looks at relationships across the entire signal to separate noise from real patterns).

  - A U-Net (a simpler model using 1D convolutions and skip connections to combine fine details with overall structure).

- They trained on thousands of noisy signal pairs, standardized the data (so differences in scale don’t confuse the model), and measured performance using mean squared error (MSE) compared to the “ground truth,” which they approximated by averaging 10,000 measurements.
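
The noisy-pair idea above can be tried out in a few lines. The sketch below is only a stand-in under stated assumptions: it swaps the paper's Transformer and U-Net for a simple linear model fitted by least squares, uses made-up toy signals (one tone with random amplitude and phase), and all names and sizes are hypothetical. The reason it works is that the noise in the target is random with zero mean and unrelated to the noise in the input, so the best the model can do is predict the part the two shots share.

```python
import numpy as np

rng = np.random.default_rng(0)
L, n_train, sigma = 64, 4000, 1.0   # toy sizes, not the paper's
t = np.arange(L)

def clean_batch(n):
    """Toy clean signals: a fixed-frequency tone, random amplitude and phase."""
    amp = rng.uniform(0.5, 1.5, size=(n, 1))
    phase = rng.uniform(0, 2 * np.pi, size=(n, 1))
    return amp * np.sin(2 * np.pi * 4 * t / L + phase)

def add_noise(s):
    """One noisy 'measurement': fresh Gaussian noise on every call."""
    return s + sigma * rng.standard_normal(s.shape)

# Two independent noisy shots of each signal; no clean targets are used.
s_train = clean_batch(n_train)
x_in, x_target = add_noise(s_train), add_noise(s_train)

# Standardize with statistics taken from the training inputs.
mu, sd = x_in.mean(), x_in.std()
def standardize(x):
    return (x - mu) / sd

# Linear "denoiser" W fitted to map noisy input -> noisy target.
W, *_ = np.linalg.lstsq(standardize(x_in), standardize(x_target), rcond=None)

# Evaluation: approximate the ground truth by averaging 10,000 noisy shots.
s_test = clean_batch(1)
reference = standardize(add_noise(np.repeat(s_test, 10_000, axis=0)).mean(axis=0))
shot = standardize(add_noise(s_test)[0])    # a single noisy measurement
denoised = shot @ W

def mse(a, b):
    return float(np.mean((a - b) ** 2))

mse_raw = mse(shot, reference)
mse_denoised = mse(denoised, reference)
print(f"MSE raw: {mse_raw:.3f}, MSE denoised: {mse_denoised:.3f}")
```

Even though the model only ever sees noisy targets, the denoised single shot should land much closer to the 10,000-shot average than the raw shot does. A deep network plays the same role as `W` here, but can capture far more complicated signal shapes.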

What did they find and why does it matter?

Here are the main results:

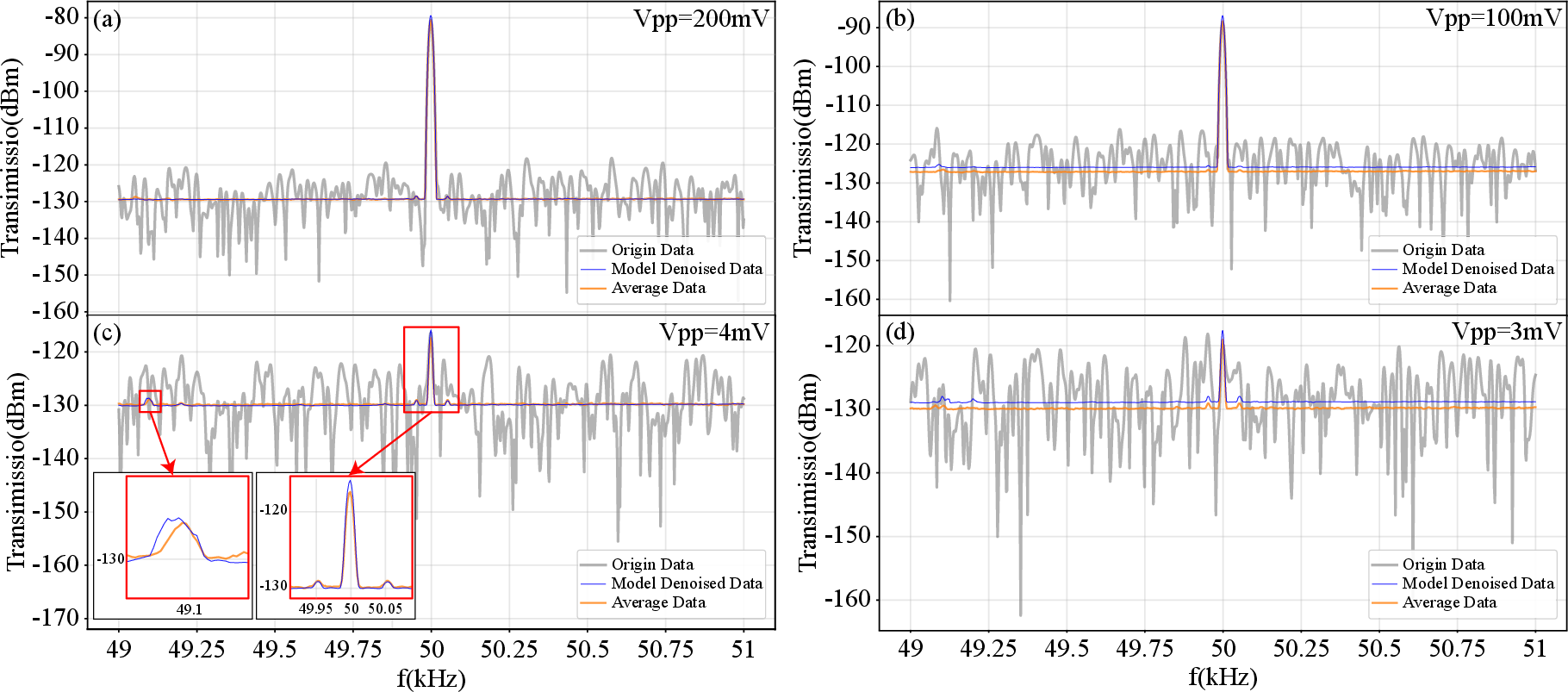

- Single-shot denoising as good as 10,000-set averaging:

  - The model cleaned up a single measurement to match the quality of averaging 10,000 measurements. This is a huge speedup: seconds instead of about an hour.

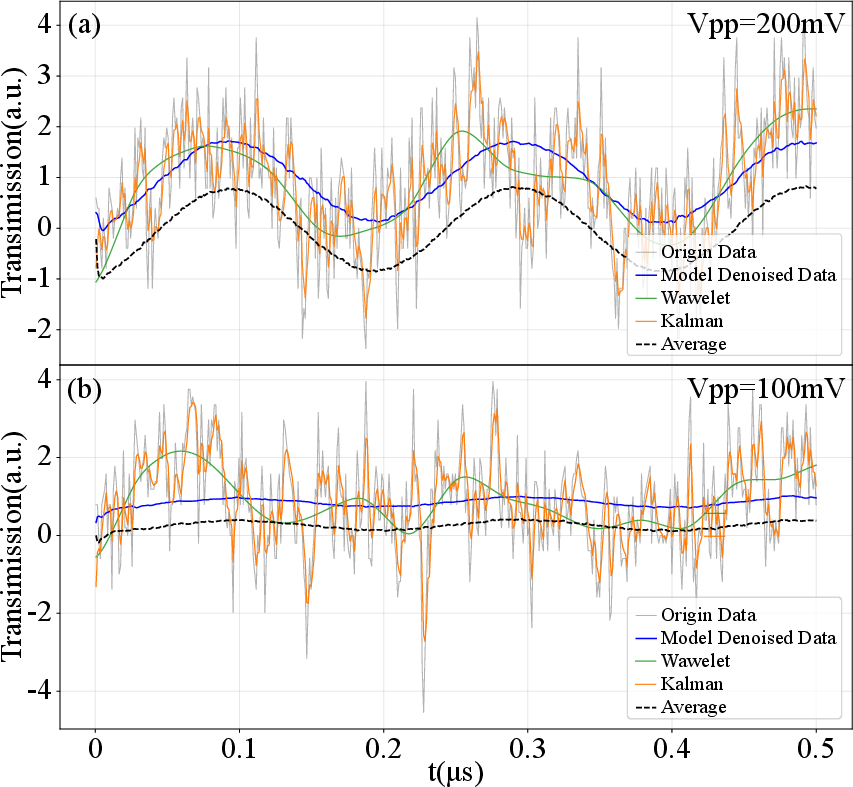

- Stronger than traditional filters:

  - Compared to wavelet transform and Kalman filtering, the deep learning model consistently produced cleaner, more accurate signals in both time and frequency domains, especially when the noise wasn’t stable.

- It recovered hidden details:

  - Even when the signal was mostly hidden by noise, the model pulled out the main IF tone at 50 kHz and small side features (like nearby peaks around 49–50 kHz) that matched what the 10,000-average revealed.

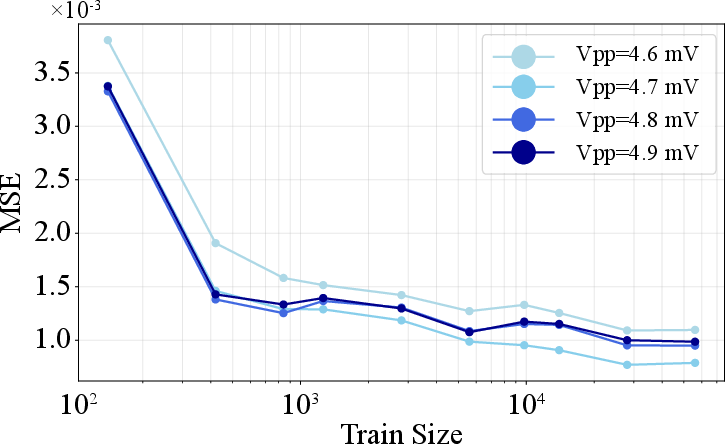

- More data helps, then plateaus:

  - Adding more training examples sharply improved performance up to a point, then gains leveled off. That means you need a decent amount of data, but not infinite amounts.

- Model complexity trade-off:

  - The Transformer outperformed the U-Net: it kept more subtle features and matched the 10,000-average more closely.

  - But the Transformer takes longer to train and uses more computing power. The U-Net is faster and lighter, though less accurate.
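
For a feel of what a classic filter like the Kalman baseline in the results above does, here is a minimal sketch of a scalar Kalman filter. It assumes a simple random-walk signal model and hand-picked noise settings `q` and `r`; the paper's actual Kalman configuration is not given in this summary, so none of this should be read as the authors' baseline.

```python
import numpy as np

def kalman_random_walk(z, q, r):
    """Scalar Kalman filter for the model x[k] = x[k-1] + w, z[k] = x[k] + v.

    q is the assumed process-noise variance, r the assumed measurement-noise
    variance. Both are fixed in advance, which is the weak point when the
    real noise changes over time.
    """
    x, p = z[0], r                 # start from the first sample
    out = np.empty(len(z))
    out[0] = x
    for k in range(1, len(z)):
        p = p + q                  # predict: uncertainty grows by q
        gain = p / (p + r)         # how much to trust the new sample
        x = x + gain * (z[k] - x)  # update the estimate
        p = (1 - gain) * p         # update the uncertainty
        out[k] = x
    return out

# Toy test signal (assumption): a slow sine wave buried in Gaussian noise.
rng = np.random.default_rng(1)
t = np.arange(2000)
truth = np.sin(2 * np.pi * t / 500)
z = truth + 0.5 * rng.standard_normal(t.size)

est = kalman_random_walk(z, q=1e-3, r=0.25)
mse_raw = float(np.mean((z - truth) ** 2))
mse_kf = float(np.mean((est - truth) ** 2))
print(f"MSE raw: {mse_raw:.3f}, MSE Kalman: {mse_kf:.3f}")
```

The filter helps on this toy signal because `r` matches the true noise level. If the noise suddenly got stronger or weaker, the fixed `q` and `r` would no longer fit, which is one way to understand why such filters struggle when the noise isn't stable.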

Why this matters:

- In real-world sensing (communications, radar, metrology), signals can change quickly. Waiting to average thousands of measurements is too slow.

- This method gives high-quality, real-time denoising, without needing clean “teacher” signals.

- It works even when noise is messy and unpredictable, which is common outside of controlled lab settings.
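
To see why averaging is so slow, note that for independent, zero-mean noise the leftover noise shrinks only with the square root of the number of measurements: 100 shots cut it by about 10 times, and 10,000 shots by about 100 times. The short simulation below checks this on a made-up signal; the signal shape, noise level, and names are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(2)
clean = np.sin(2 * np.pi * np.arange(256) / 32)   # toy clean signal

def residual_rms(n_shots):
    """RMS error left after averaging n_shots noisy copies of the signal."""
    shots = clean + rng.standard_normal((n_shots, clean.size))  # noise std = 1
    return float(np.sqrt(np.mean((shots.mean(axis=0) - clean) ** 2)))

rms = {n: residual_rms(n) for n in (1, 100, 10_000)}
for n, value in rms.items():
    print(f"{n:>6} shots -> leftover noise about {value:.3f}")
```

Each extra factor of 10 in noise reduction costs 100 times more measurements, which is why a method that reaches similar quality from one shot saves so much time.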

What’s the impact and what’s next?

- Practical impact:

  - This approach makes Rydberg microwave sensors more useful in the real world: faster, cleaner, and more reliable even in noisy environments.

  - It removes the need for clean training labels, which are hard or impossible to get in quantum sensing.

  - It can be applied to both time and frequency signals, so it’s flexible for different tasks.

- Broader implications:

  - The idea of training on two noisy views of the same thing could help other sensors and fields (like audio, medical signals, seismic data) where clean labels are rare.

- Limitations and future work:

  - The model needs enough varied training data to learn well across different noise types.

  - The best models (like Transformers) can be heavy for small, embedded devices. Future work could focus on making them smaller and faster without losing accuracy, or on smarter ways to gather training data.

In short, the paper shows a fast, label-free deep learning way to clean up noisy quantum sensor signals that rivals massive averaging, works better than classic filters, and helps push Rydberg sensors toward real-world use.
