- The paper introduces FACTUM, a framework that diagnoses citation hallucination by analyzing transformer attention and FFN pathways during citation generation in long-form RAG.

- It defines four novel scores—CAS, BAS, PFS, and PAS—that quantify semantic alignment, attention distribution, and parametric recall to assess citation reliability.

- Empirical results demonstrate that FACTUM outperforms prior methods by up to 37.5% in AUC and reveals scale-dependent mechanistic signatures across different model sizes.

Motivation and Problem Definition

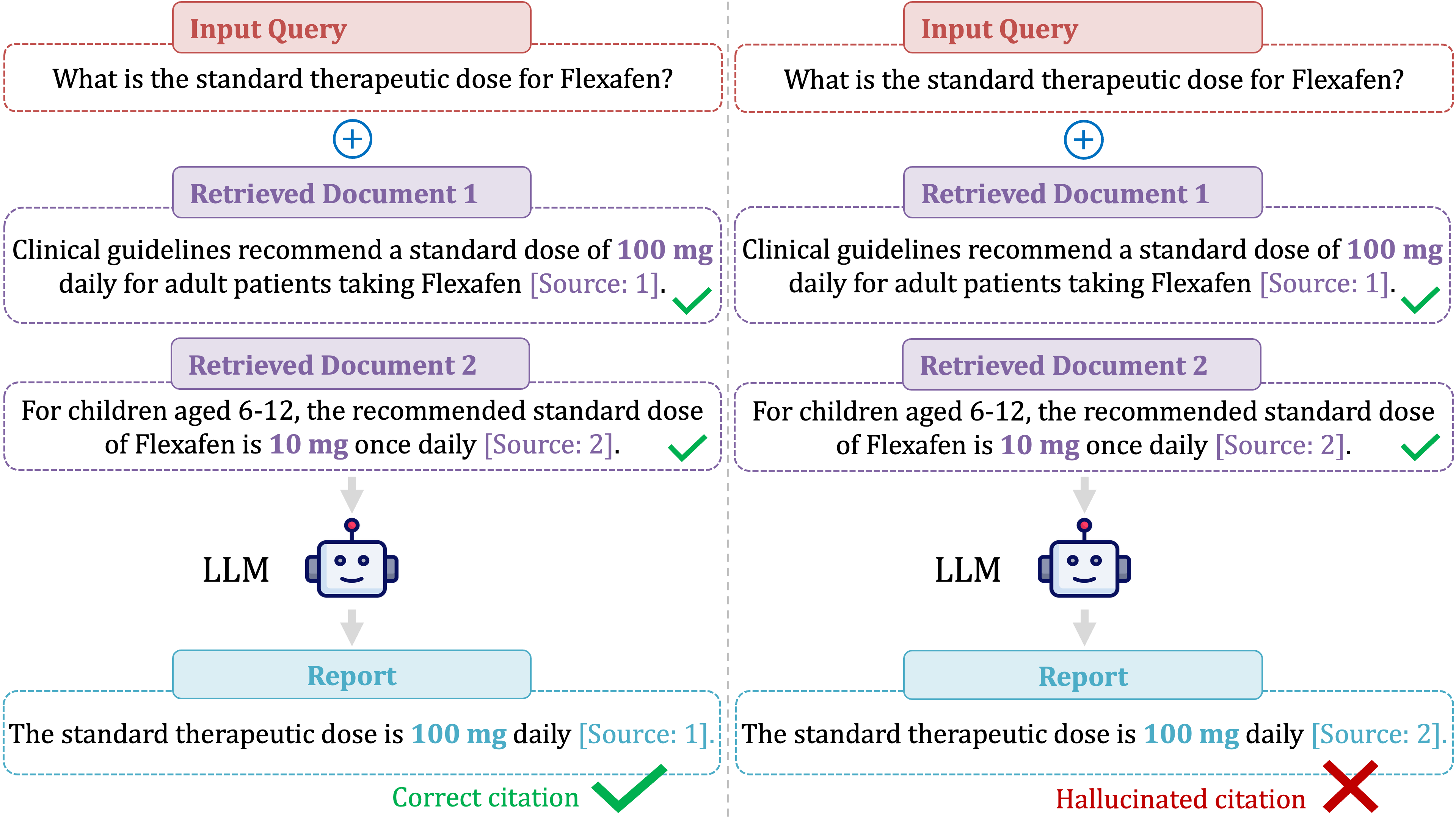

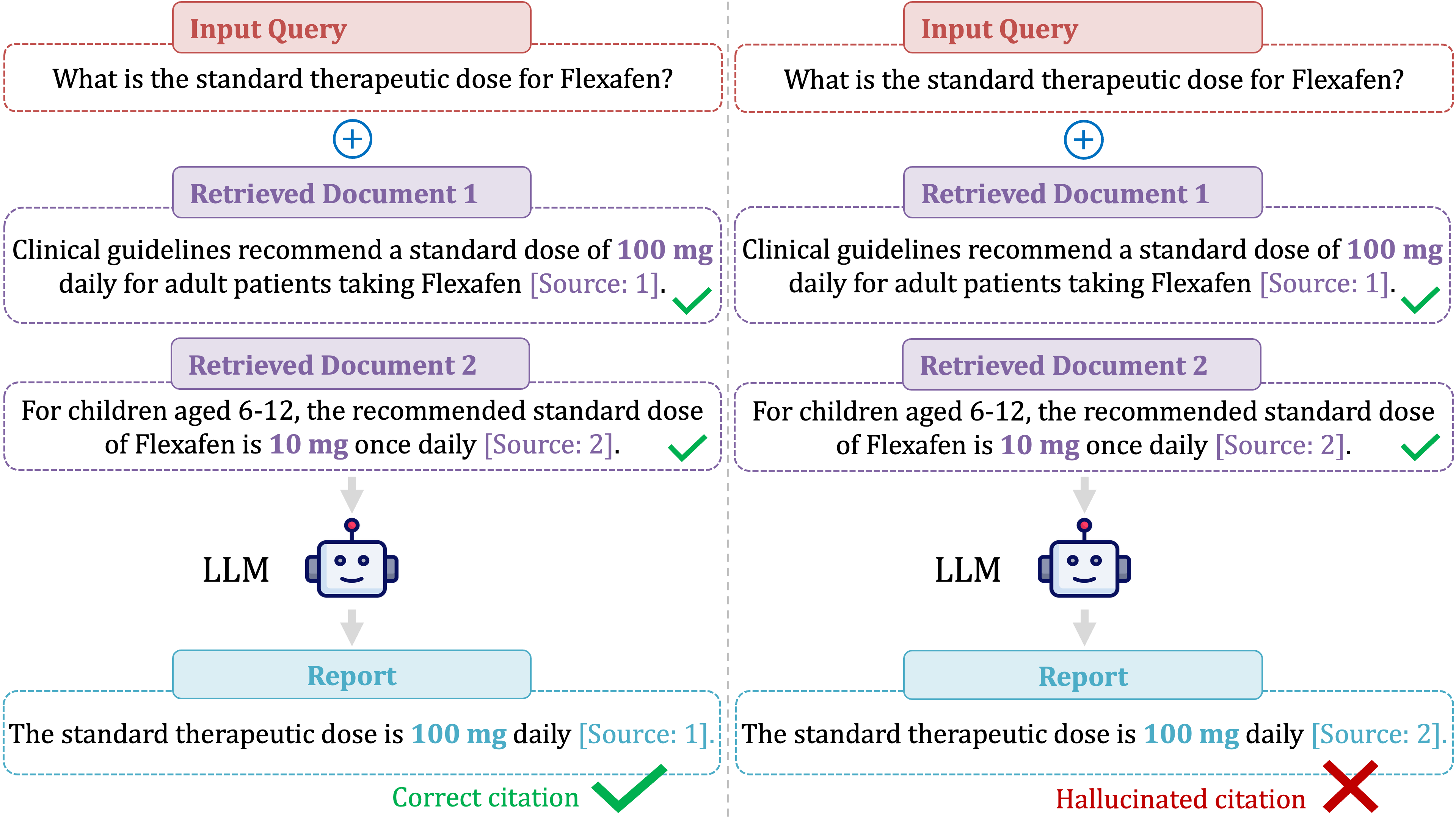

Citation hallucination in Retrieval-Augmented Generation (RAG) is a critical failure mode: a model confidently attributes a claim to a source that does not substantiate the claim, or that directly contradicts it. This error undermines RAG’s core promise of verifiability, especially in long-form settings, where attributional drift becomes prevalent as the model synthesizes content across very long context windows (up to millions of tokens). It also erodes user trust, because readers tend to treat the mere presence of a citation as a mark of reliability, whether or not the cited source supports the claim.

Figure 1: Citation hallucination can render even factually accurate statements unverifiable and misleading by misattributing claims to incorrect sources.

Despite its importance, citation hallucination has received limited attention compared to broader RAG hallucination. Conventional approaches typically treat hallucination as a binary problem tied to over-reliance on parametric knowledge, yet fail to mechanistically differentiate between proper and improper citation. Existing detection methods are predominantly black-box or rely on token-level uncertainty, overlooking the transformer’s internal attribution mechanisms. This work proposes FACTUM (Framework for Attesting Citation Trustworthiness via Underlying Mechanisms) to diagnose citation hallucination by probing the underlying architectural pathways in transformer LLMs.

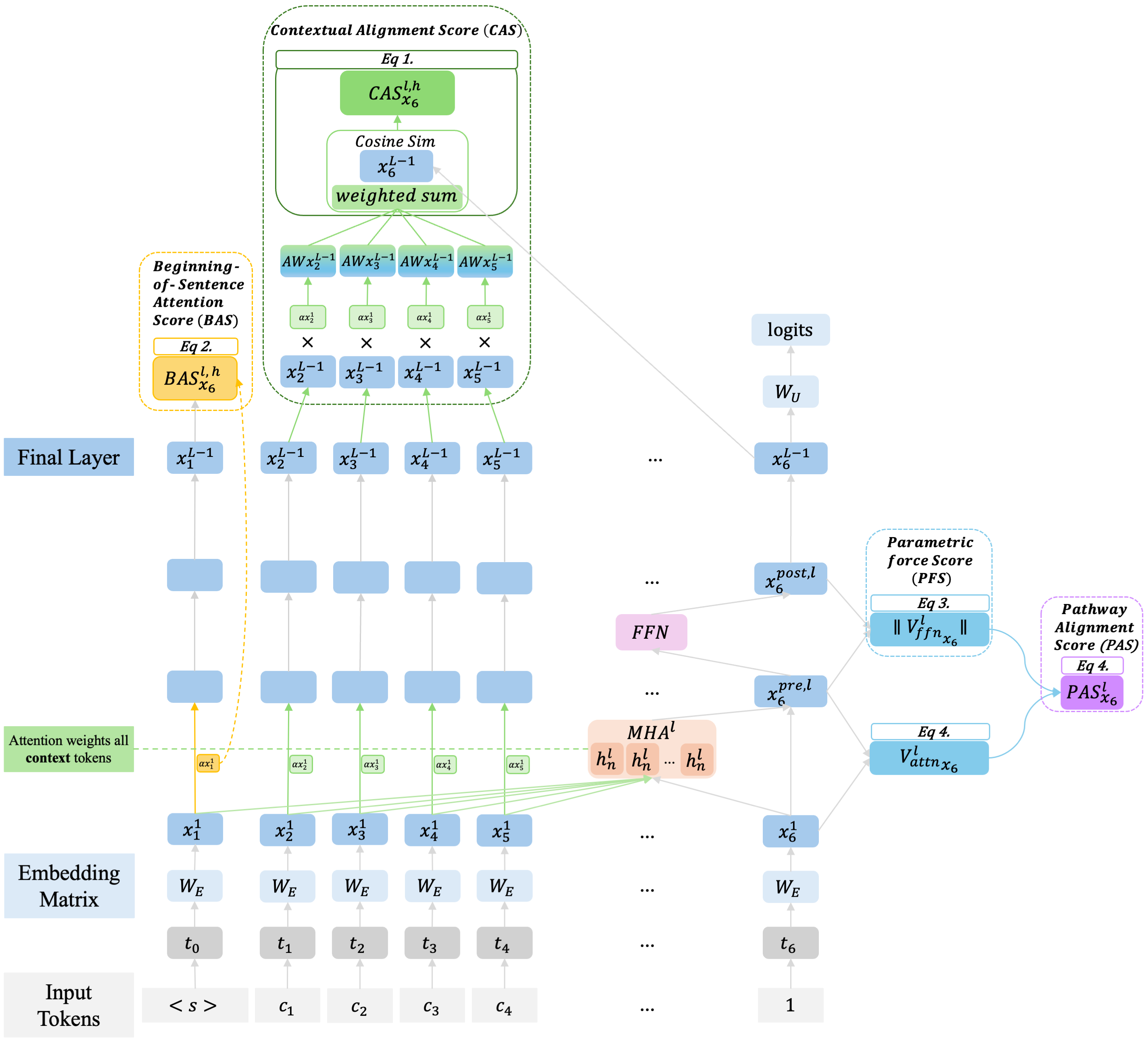

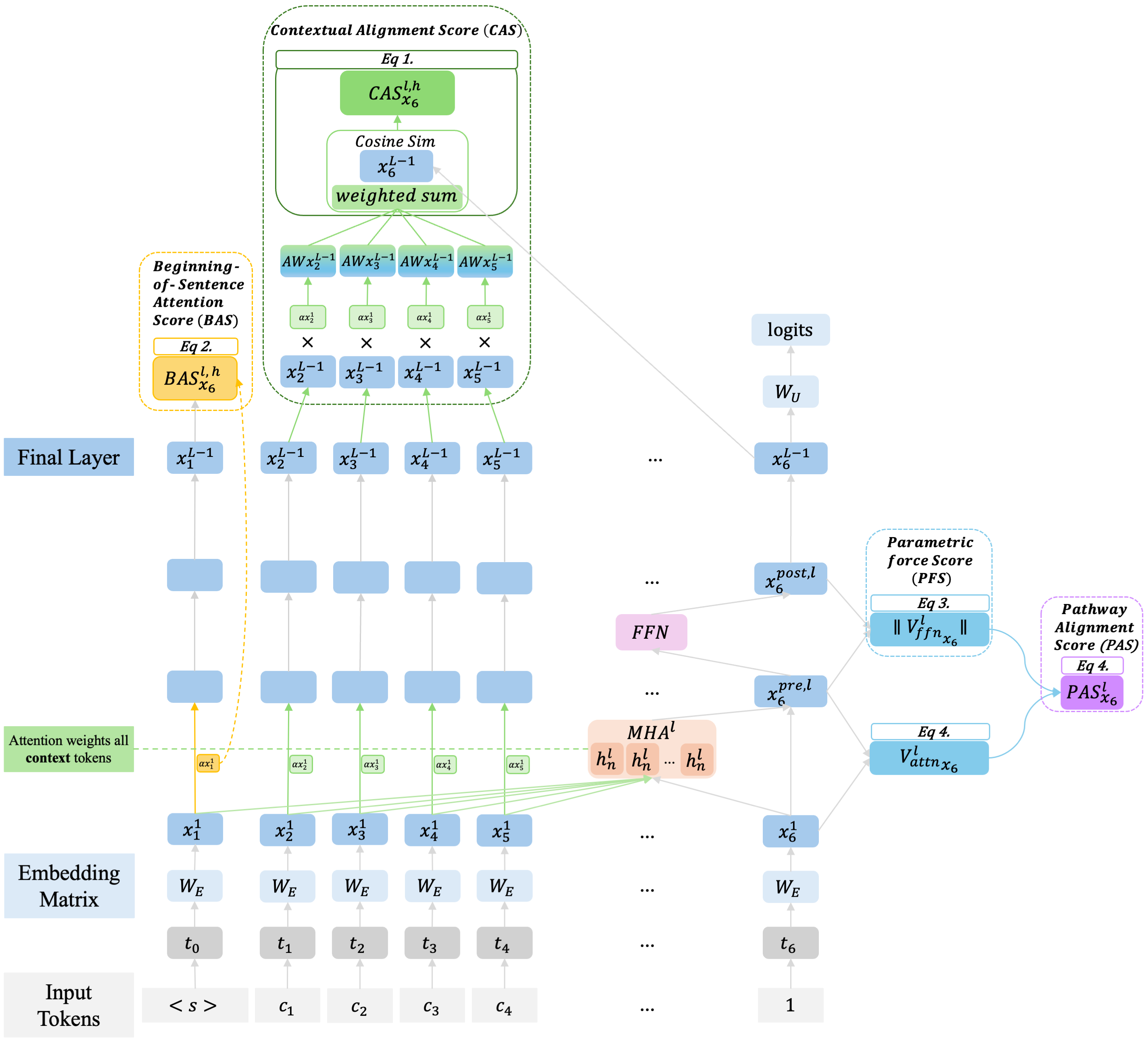

FACTUM Framework Overview

FACTUM exploits the separation of information streams in the transformer architecture, specifically Attention (which reads the external context) and the Feed-Forward Network (FFN, which recalls internal parametric knowledge), to disentangle each pathway’s contribution at a citation token. It introduces four scores:

- Context Alignment Score (CAS): Directly quantifies the citation token’s semantic alignment with retrieved source documents using an attention-weighted aggregation. High CAS indicates contextual grounding.

- Beginning-of-Sentence Attention Score (BAS): Measures attention allocation to the "attention sink" token (e.g., `<s>`), an emergent mechanism for global synthesis in long contexts. High BAS correlates with improved information integration.

- Parametric Force Score (PFS): Captures the magnitude of the FFN-induced modification to the residual stream and serves as a proxy for the model’s reliance on parametric knowledge. High PFS during citation can indicate confident recall of a fact.

- Pathway Alignment Score (PAS): Assesses geometric alignment (cosine similarity) between attention and FFN updates. High PAS signals coordinated use of both pathways; low or negative PAS reflects dissonance or orthogonal processing.

The holistic FACTUM diagnostic operates at the moment of citation generation, measuring these four signals to distinguish reliable from hallucinated citations.

Figure 2: FACTUM dissects the model’s internal state at the citation token, computing pathway-specific and interaction scores.
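The four scores can be illustrated with a minimal sketch on toy vectors. The exact formulas are not given in this summary, so everything below is an assumption made for illustration: PFS is taken as the L2 norm of the FFN update, PAS as the cosine similarity between the attention and FFN updates (as described above), BAS as the share of attention mass on position 0, and CAS as the cosine similarity between the citation token’s state and an attention-weighted sum of context token states. The function and variable names are hypothetical, and real inputs would come from a model’s per-layer, per-head activations.

```python
import math

def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def _norm(u):
    return math.sqrt(_dot(u, u))

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    denom = _norm(u) * _norm(v)
    return _dot(u, v) / denom if denom > 0 else 0.0

def pfs(ffn_update):
    """PFS sketch (assumption): L2 norm of the FFN's residual-stream update."""
    return _norm(ffn_update)

def pas(attn_update, ffn_update):
    """PAS sketch: cosine similarity between attention and FFN updates."""
    return cosine(attn_update, ffn_update)

def bas(attn_weights, bos_index=0):
    """BAS sketch (assumption): share of attention mass on the BOS position."""
    total = sum(attn_weights)
    return attn_weights[bos_index] / total if total > 0 else 0.0

def cas(attn_weights, token_states, citation_state, context_positions):
    """CAS sketch (assumption): cosine between the citation token's state and
    the attention-weighted sum of the context tokens' states."""
    pooled = [0.0] * len(citation_state)
    for pos in context_positions:
        for i, value in enumerate(token_states[pos]):
            pooled[i] += attn_weights[pos] * value
    return cosine(pooled, citation_state)

# Toy values: position 0 is the BOS token, positions 1 and 2 are context tokens.
attn_weights = [0.5, 0.3, 0.2]
token_states = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
citation_state = [1.0, 0.0]
attn_update = [1.0, 0.0]
ffn_update = [0.0, 2.0]

scores = {
    "CAS": cas(attn_weights, token_states, citation_state, context_positions=[1, 2]),
    "BAS": bas(attn_weights),
    "PFS": pfs(ffn_update),
    "PAS": pas(attn_update, ffn_update),
}
print(scores)
```

In this toy case the attention and FFN updates are orthogonal, so PAS is zero, which corresponds to the "orthogonal processing" regime described in the PAS bullet.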

Experimental Validation

Task and Dataset

The evaluation targets single-citation, long-form RAG on NeuCLIR 2024, a challenging report-generation task with 15 context documents. Citations are annotated for factual correspondence by an LLM-as-a-judge protocol, which is validated for stability and human alignment using Cohen’s kappa (κ = 0.68).
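For readers unfamiliar with the agreement statistic, Cohen’s kappa compares the observed agreement between two annotators with the agreement expected by chance from their label frequencies. The sketch below is a generic implementation of that standard formula, not the paper’s evaluation code; the function name and the toy labels are illustrative only.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    categories = set(count_a) | set(count_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label
    return (observed - expected) / (1.0 - expected)

# Toy example: 1 = citation supported, 0 = citation hallucinated.
judge = [1, 1, 0, 0]
human = [1, 0, 0, 0]
print(cohens_kappa(judge, human))
```

On the toy labels, observed agreement is 0.75 and chance agreement is 0.5, giving a kappa of 0.5.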

Models and Feature Engineering

Two models are assessed: Llama-3.2-3B-Instruct and Llama-3.1-8B-Instruct, both with extended context capability. The raw FACTUM scores (thousands per token) are distilled by pruning model components and applying time-series and statistical reductions, yielding a concise feature set for interpretable classifiers: Logistic Regression, LightGBM, and Explainable Boosting Machines (EBM).
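The distillation step can be pictured with a small sketch. The summary does not specify how components are ranked or which reductions are used, so the following is an assumed, simplified version: components are kept by a hypothetical per-component relevance value, and an ordered series of scores (for example across layers, which is itself an assumption) is summarized by its mean, extremes, spread, and linear trend.

```python
import statistics

def top_fraction(relevance, fraction=0.25):
    """Return indices of the most relevant components (hypothetical pruning).

    `relevance` holds one value per component, e.g. how well that component's
    score separates correct from hallucinated citations on held-out data.
    """
    k = max(1, int(len(relevance) * fraction))
    ranked = sorted(range(len(relevance)), key=lambda i: relevance[i], reverse=True)
    return sorted(ranked[:k])

def reduce_series(values):
    """Summarize an ordered series of scores with simple statistics."""
    n = len(values)
    mean = statistics.fmean(values)
    x_mean = (n - 1) / 2
    denom = sum((x - x_mean) ** 2 for x in range(n))
    # Least-squares slope of the series against its index.
    slope = (
        sum((x - x_mean) * (v - mean) for x, v in enumerate(values)) / denom
        if denom
        else 0.0
    )
    return {
        "mean": mean,
        "max": max(values),
        "min": min(values),
        "std": statistics.pstdev(values),
        "slope": slope,
    }

relevance = [0.1, 0.9, 0.3, 0.8, 0.2, 0.05, 0.4, 0.6]
kept = top_fraction(relevance, fraction=0.25)
features = reduce_series([1.0, 2.0, 3.0, 4.0])
print(kept, features)
```

Each retained component’s summary statistics would then be concatenated into one feature vector per citation and passed to a classifier.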

Results

FACTUM outperforms prior mechanistic baselines (ReDeEP’s ECS and PKS) as well as uncertainty-based methods. For instance, Logistic Regression on FACTUM features attains an AUC of 0.715 (3B) and 0.737 (8B), and FACTUM’s margin over competing methods reaches up to 37.5% in AUC. General-purpose baselines such as P(True) and Perplexity achieve reasonable recall but low precision, which makes them unreliable for practical detection.
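As a reminder of what the reported metric measures, AUC is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. The sketch below computes it directly from that pairwise definition on toy data; it is a generic illustration, not the paper’s evaluation code, and the labels and scores are made up.

```python
def roc_auc(labels, scores):
    """AUC via pairwise comparison of positive and negative scores."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))

# Toy data: label 1 = hallucinated citation, score = detector output.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1]
print(roc_auc(labels, scores))
```

Three of the four positive-negative pairs are ordered correctly here, so the toy AUC is 0.75. A value of 0.5 corresponds to random ranking.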

Mechanistic Signatures

FACTUM uncovers model-scale-dependent signatures for correct citations:

- 3B Model: Correct citations coincide with higher CAS, BAS, PAS, and PFS, meaning the internal pathways work in coordination for proper attribution.

- 8B Model: Peak detection performance relies on the top 25% of specialized components. Correct attribution is marked by high PFS and BAS, but PAS tends to be lower, indicating orthogonal, complementary information processing rather than straightforward pathway agreement.

This is evidence of a shift with scale from "pathway agreement" to "specialized, orthogonal contribution," and it contradicts the notion that correct citation is simply tied to increased use of parametric knowledge.

Practical and Theoretical Implications

- Reliable Citation Detection: FACTUM’s pathway-based diagnostic enables token-level detection of citation hallucination, providing an actionable verifiability safeguard for RAG outputs in real time.

- Mechanistic Interpretability: By mapping distinct attribution failures and coordination signatures, FACTUM facilitates deeper model audits and interpretability beyond simplistic behavioral probes.

- Long-context Model Design: The scale-dependent mechanistic patterns uncovered suggest future RAG architectures might selectively enhance certain pathway operations, such as adaptive attention sink utilization or context-parametric interplay.

- Externally Visible Trust Indicators: Scores such as PAS or BAS could be operationalized as warning indicators (e.g., citation asterisks), helping users gauge citation reliability in generated texts.
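The trust-indicator idea in the last bullet could look like the following minimal sketch. Because the direction of individual scores such as PAS differs between model scales, the sketch assumes a single hypothetical risk value per citation (for instance a classifier’s predicted hallucination probability) rather than thresholding a raw score. The function name, the tuple layout, and the default threshold are all illustrative assumptions.

```python
def mark_citations(sentences, threshold=0.5, marker="*"):
    """Append a warning marker to citations whose risk meets the threshold.

    `sentences` is a list of (text, citation_label, risk) tuples, where `risk`
    is a hypothetical hallucination probability produced by some detector.
    """
    rendered = []
    for text, citation_label, risk in sentences:
        flag = marker if risk >= threshold else ""
        rendered.append(f"{text} [{citation_label}]{flag}")
    return rendered

report = [
    ("The treaty was signed in 1998.", "1", 0.12),
    ("Exports doubled the following year.", "2", 0.81),
]
for line in mark_citations(report):
    print(line)
```

The threshold would need to be tuned per model, since a detector with low precision would flag too many correct citations to be useful.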

Speculation on Future Developments

Mechanistic frameworks like FACTUM could be integrated into model training and inference pipelines to dynamically enforce attribution integrity. Quantitative detection mechanisms might be coupled with retrieval or generative models to actively mitigate attributional drift, especially in compositional or multi-hop settings. Moreover, fine-grained pathway diagnostics will likely become essential for regulatory and academic applications requiring auditability of automated claims.

Conclusion

FACTUM establishes a new state of the art for citation hallucination detection by exploiting transformer pathway mechanisms, revealing nuanced, scale-dependent signatures of factual attribution. Its diagnostic capability reframes citation reliability as an emergent property of coordinated internal processing and makes the case for interpretability-driven advances in the trustworthiness and design of RAG models.