- The paper introduces a transformer-based architecture that exploits pre- and post-EEG segments to enhance seizure detection with extended temporal context.

- It achieves state-of-the-art sensitivity and F1-scores across diverse datasets, demonstrating effective generalization with fewer parameters.

- The model balances high detection accuracy with computational efficiency, enabling real-time performance on commodity hardware.

LookAroundNet: Extending Temporal Context with Transformers for Clinically Viable EEG Seizure Detection

Introduction and Motivation

Automated detection of epileptic seizures from EEG is constrained by variability in seizure morphologies, heterogeneity in recording environments (clinical vs. home-based), and inconsistent data labeling. These challenges limit the clinical viability and generalization of DNN-based detection systems. Existing methods predominantly rely on CNNs with short, fixed-length temporal windows, which limits how much temporal structure they can model. Whole-recording models alleviate this but are computationally burdensome and unsuitable for real-time deployment. The need for clinically relevant, generalizable, and computationally feasible seizure detectors motivates LookAroundNet, a transformer-based architecture that exploits extended bidirectional temporal context for segment-wise EEG classification.

LookAroundNet Architecture and Methodological Advances

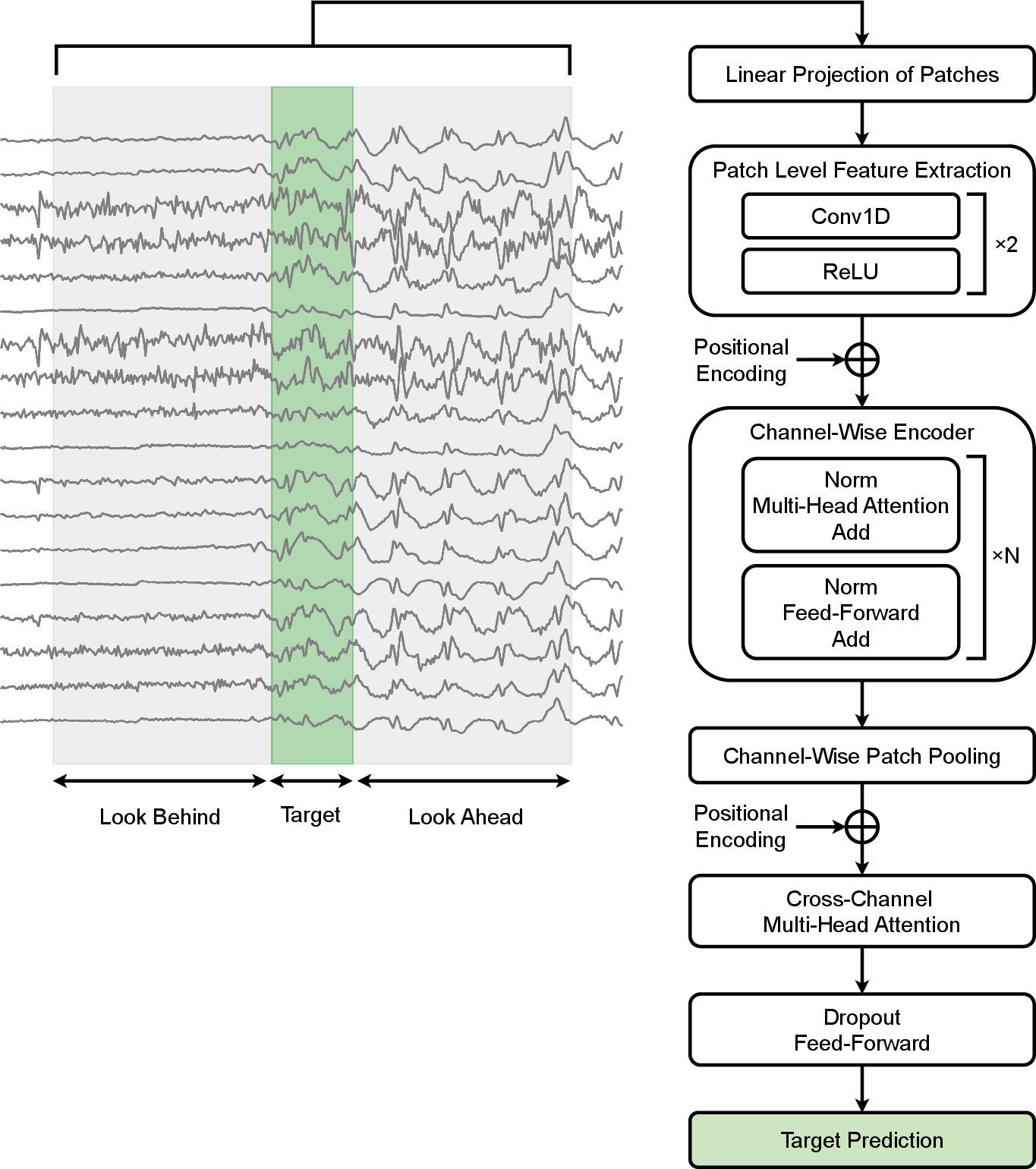

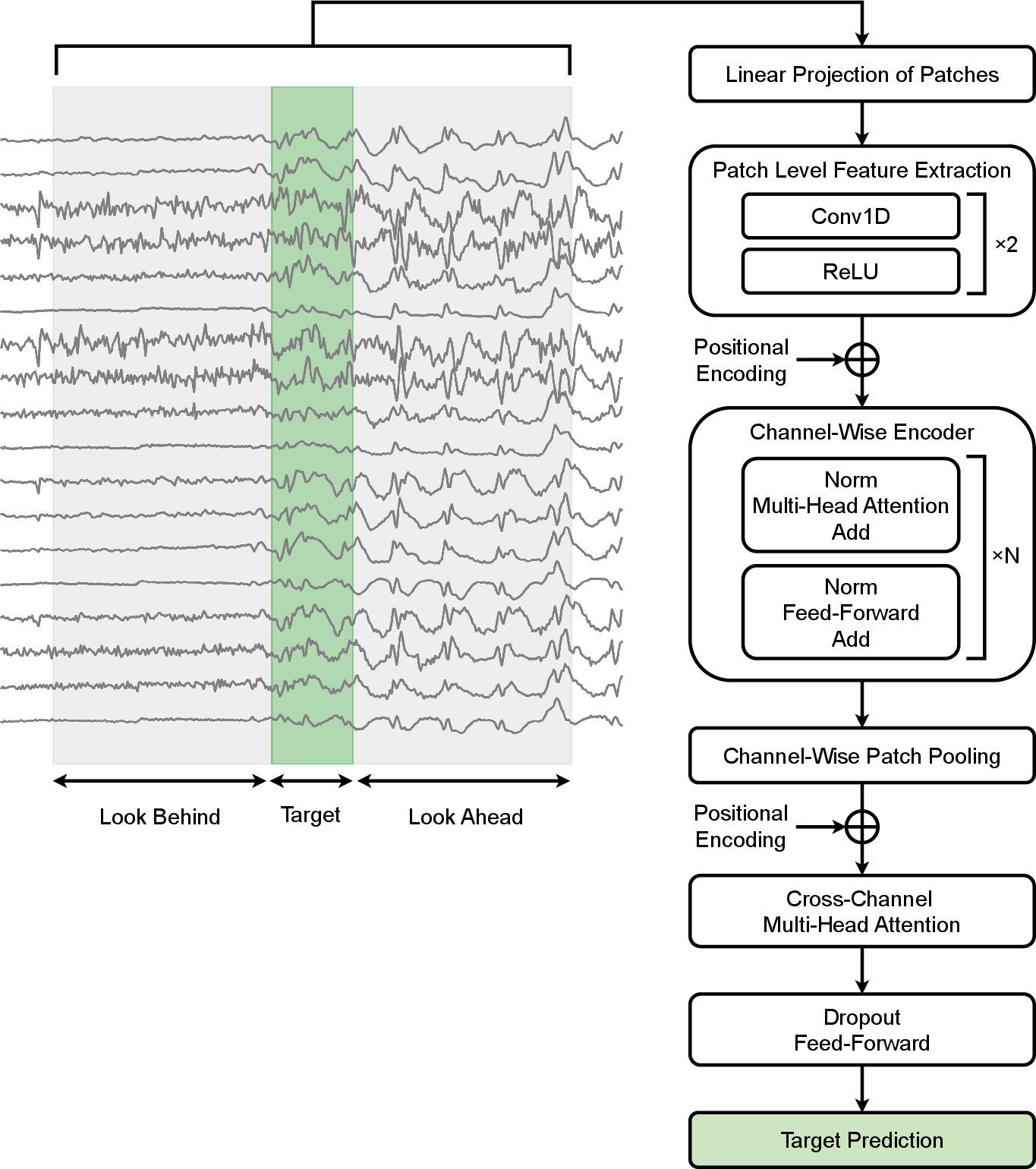

LookAroundNet introduces a hybrid transformer-based architecture specifically tailored for EEG seizure detection. The input is partitioned into a central target segment and symmetric (or asymmetric) past and future context segments. Each EEG channel is independently patched and fed through a two-layer CNN for local feature extraction, followed by channel-wise transformer encoder layers to capture robust temporal dependencies. After aggregation through mean pooling, inter-channel dynamics are modeled using multi-head attention, before a final classification layer outputs a binary decision for the target segment.
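The input layout described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the names `Window`, `make_window`, and `patchify`, and every duration, sampling rate, and patch length used with them, are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Sample index ranges (start inclusive, end exclusive) for one model input."""
    past: tuple[int, int]
    target: tuple[int, int]
    future: tuple[int, int]


def make_window(target_start_s: float, target_len_s: float,
                past_s: float, future_s: float, fs: int) -> Window:
    """Place past and future context around a target segment.

    Durations are in seconds and fs is the sampling rate in Hz.
    Choosing past_s != future_s gives an asymmetric layout.
    The caller must pad or clip when a range leaves the recording.
    """
    t0 = round(target_start_s * fs)
    t1 = t0 + round(target_len_s * fs)
    return Window(
        past=(t0 - round(past_s * fs), t0),
        target=(t0, t1),
        future=(t1, t1 + round(future_s * fs)),
    )


def patchify(samples: list[float], patch_len: int) -> list[list[float]]:
    """Split one channel into non-overlapping patches, dropping any remainder."""
    n = len(samples) // patch_len
    return [samples[i * patch_len:(i + 1) * patch_len] for i in range(n)]


# Illustrative values only: 4 s target, 30 s of context on each side, 128 Hz.
w = make_window(target_start_s=100.0, target_len_s=4.0,
                past_s=30.0, future_s=30.0, fs=128)
```

Each channel of the combined past, target, and future span would then be passed through `patchify` independently before local feature extraction, which matches the channel-wise processing described above.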

The architectural design targets clinical applicability: model size and computational cost are constrained, and inference is explicitly benchmarked on multiple commodity hardware platforms. Preprocessing is standardized and includes conversion to a longitudinal bipolar montage, filtering, and downsampling.

Figure 1: LookAroundNet’s architecture, which leverages both past and future temporal context around a target EEG segment using a channel-wise transformer and inter-channel attention.

Experimental Framework

LookAroundNet is evaluated across broad and diverse EEG datasets, including TUSZ, SeizeIT1, Siena, and a large-scale proprietary home-EEG collection (Kvikna). The training strategy balances classes and patients to mitigate bias and addresses class imbalance by discarding long, artifact-laden non-seizure intervals. The training regime uses CrossEntropyLoss with label smoothing, the AdamW Schedule-Free optimizer, and thresholded decision rules optimized for clinical metrics.
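To make the label-smoothing term concrete, the following sketch computes cross-entropy against a smoothed target for a single example. It uses the common convention (also used by PyTorch's `CrossEntropyLoss`) of mixing the one-hot target with a uniform distribution. The smoothing value `eps=0.1` is an assumption for illustration; the value used in the paper is not stated here.

```python
import math


def smoothed_cross_entropy(logits: list[float], target: int,
                           eps: float = 0.1) -> float:
    """Cross-entropy against a label-smoothed target distribution.

    With K classes, the true class gets weight (1 - eps) + eps / K and
    every other class gets eps / K. eps = 0 recovers plain cross-entropy.
    """
    k = len(logits)
    # Numerically stable log-softmax.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    log_probs = [x - log_z for x in logits]

    loss = 0.0
    for i, lp in enumerate(log_probs):
        q = eps / k + ((1.0 - eps) if i == target else 0.0)
        loss -= q * lp
    return loss


# A very confident correct prediction is penalized once smoothing is on.
plain = smoothed_cross_entropy([10.0, -10.0], target=0, eps=0.0)
smooth = smoothed_cross_entropy([10.0, -10.0], target=0, eps=0.1)
```

The effect is that the loss no longer rewards pushing logits toward extreme values, which is often useful when labels are noisy, as seizure annotations can be.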

Evaluation follows the SzCORE framework, using both sample-based and event-based metrics (sensitivity, F1, precision, and FP/day), with outputs generated at 1 Hz temporal resolution. Inference proceeds with sliding windows every 2 seconds and all metrics are averaged over five independent runs to control stochasticity.
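The event-based metrics named above can be illustrated with a simplified scorer. This is a sketch under a stated assumption: any temporal overlap counts as a detection. The SzCORE framework defines its own matching rules (for example tolerances and event merging), which this example does not reproduce. The function names are hypothetical.

```python
Event = tuple[float, float]  # (start_s, end_s)


def overlaps(a: Event, b: Event) -> bool:
    """True if the two half-open intervals share any time."""
    return a[0] < b[1] and b[0] < a[1]


def event_scores(ref: list[Event], hyp: list[Event],
                 duration_s: float) -> dict[str, float]:
    """Event-level sensitivity, precision, F1, and false positives per day.

    ref: annotated seizure events; hyp: predicted events;
    duration_s: total length of the scored recording in seconds.
    """
    tp = sum(any(overlaps(r, h) for h in hyp) for r in ref)
    fn = len(ref) - tp
    fp = sum(not any(overlaps(h, r) for r in ref) for h in hyp)

    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        "precision": tp / (tp + fp) if (tp + fp) else nan,
        "f1": 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else nan,
        "fp_per_day": fp / (duration_s / 86400.0),
    }


# Two annotated seizures, one detected, one false alarm, in 12 hours of EEG.
scores = event_scores(ref=[(10, 20), (100, 130)],
                      hyp=[(12, 18), (500, 505)],
                      duration_s=43200)
```

Reporting FP/day alongside F1 matters because a detector can reach a high F1 on short, seizure-dense recordings while still producing too many alarms over a full day of monitoring.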

Quantitative Outcomes

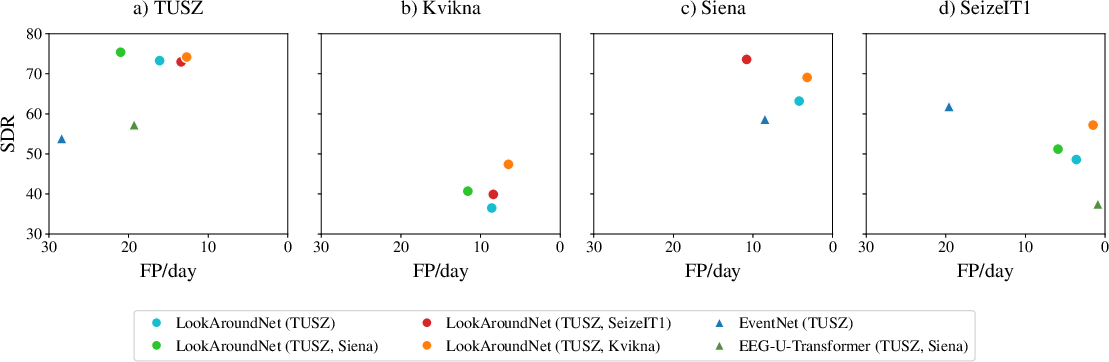

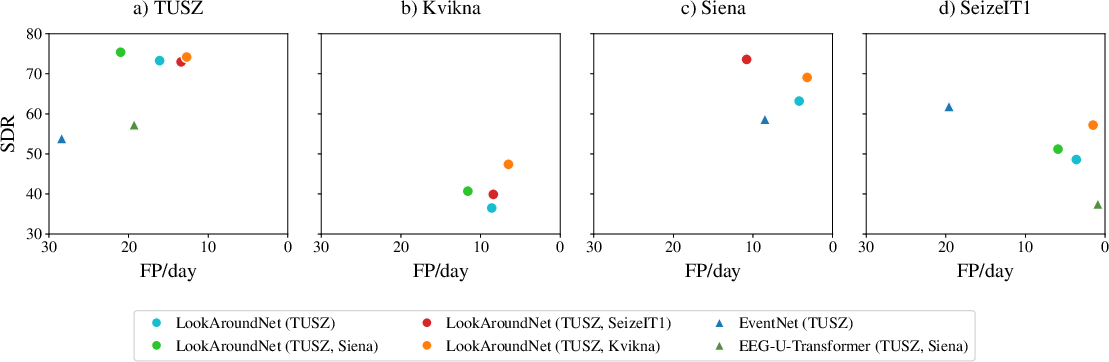

In direct comparison with leading benchmark models (EventNet, EEG-U-Transformer), LookAroundNet attains consistently higher F1-scores and sensitivity across multiple test environments, especially on TUSZ and Siena. It achieves these results with far fewer parameters than the competing models, so the accuracy gains do not come at the cost of a larger model.

Figure 2: Seizure detection rate versus false positives per day for various models and training dataset combinations, demonstrating LookAroundNet’s improved trade-off across test sets.

Event-based F1 reaches 72.1 on TUSZ and 54.9 on Siena in the single-model (64 s context) configuration, and up to 77.8 and 68.5, respectively, when ensembling three models with different context alignments. Ensemble models also reduce false positives (down to 0.4–3.4 FP/day across test domains) while preserving strong sensitivity.

Effects of Training Data Composition

Optimal results are achieved by augmenting TUSZ with the diverse Kvikna home-EEG corpus, supporting the assertion that training data diversity significantly improves generalization. Gains from including smaller datasets (Siena, SeizeIT1) are marginal, with some combinations inducing increased false positives, likely due to dataset-specific labeling or signal idiosyncrasies.

Temporal Context Modeling

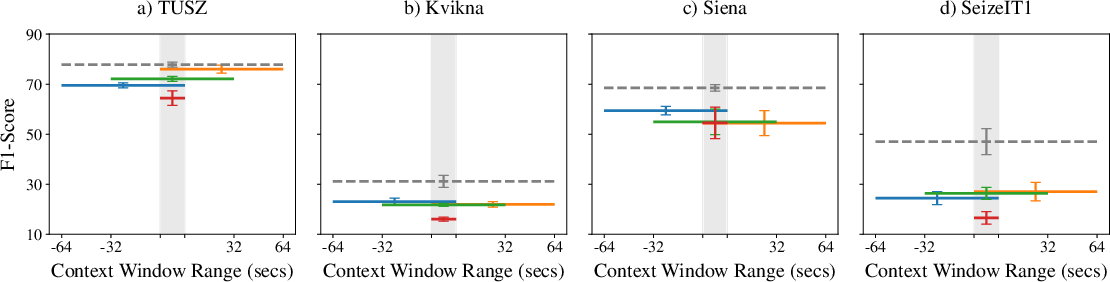

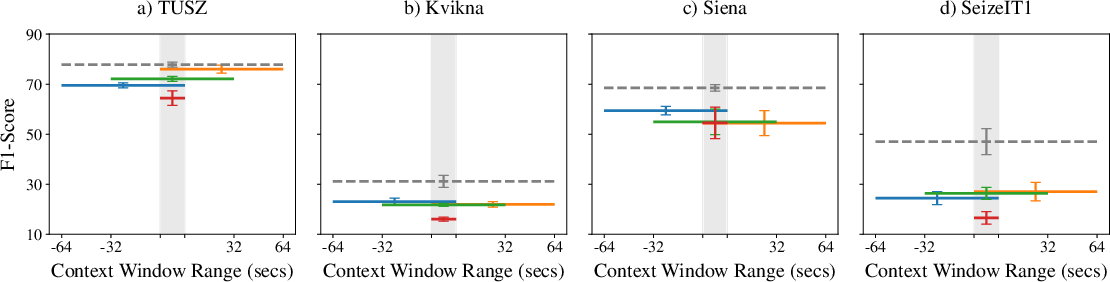

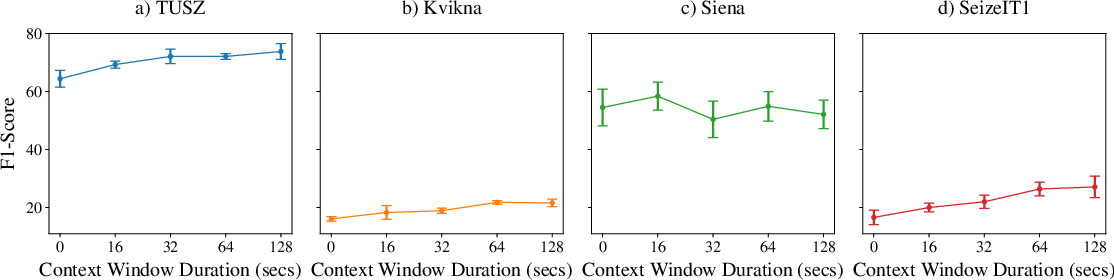

Extensive ablations reveal that both inclusion and extension of temporal context boost model performance over context-free baselines. The precise placement of the context window (pre, post, or centered) is less critical, but ensembling models with distinct context windows yields superior results, outperforming single models with a single broader contextual view.

Figure 3: Effects of context window size and placement on event-based F1-score, showing that providing peripheral context consistently enhances detection accuracy.
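One simple way to combine models trained with different context placements is to average their per-segment seizure probabilities and threshold the mean. The sketch below shows that idea only. The averaging rule, the 0.5 threshold, and the model names are assumptions, since the combination rule used by the authors is not described here.

```python
def ensemble_predict(prob_tracks: dict[str, list[float]],
                     threshold: float = 0.5) -> list[int]:
    """Average seizure probabilities across models, then threshold.

    prob_tracks maps a model name to its per-segment seizure probability.
    All tracks must cover the same segments in the same order.
    """
    tracks = list(prob_tracks.values())
    if not tracks:
        raise ValueError("at least one model output is required")
    n = len(tracks[0])
    if any(len(t) != n for t in tracks):
        raise ValueError("all models must score the same segments")

    mean = [sum(vals) / len(tracks) for vals in zip(*tracks)]
    return [int(p >= threshold) for p in mean]


# Hypothetical outputs of three models with past-heavy, centered,
# and future-heavy context for the same three target segments.
decisions = ensemble_predict({
    "past": [0.9, 0.2, 0.6],
    "centered": [0.8, 0.1, 0.3],
    "future": [0.7, 0.9, 0.3],
})
```

In this toy input the second segment is flagged by only one model and is outvoted after averaging, which is the kind of false-positive suppression an ensemble over context alignments can provide.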

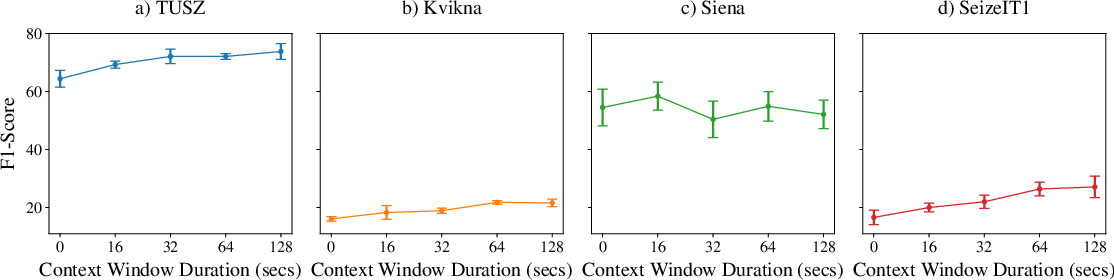

Performance saturates as the total context window approaches 128 seconds, suggesting diminishing returns for further extension, except on smaller datasets where variance dominates.

Figure 4: Relationship between total context window duration and event-based F1-score indicates that most of the performance benefit is attained by 128 seconds of temporal context.

Computational Efficiency

Inference time analysis confirms LookAroundNet can process one hour of EEG in less than 13 seconds on a MacBook Pro (M3), and under 6 seconds on a DGX Spark or workstation-class GPU. These results affirm suitability for both offline clinical review and real-time monitoring on commodity hardware.
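The reported timings translate into real-time factors as follows. This is a back-of-the-envelope calculation from the figures quoted above. Because the timings are upper bounds, the resulting factors are lower bounds. The per-window budget assumes the 2-second inference stride and is an average over the hour, not a measured single-window latency.

```python
def realtime_factor(recording_s: float, processing_s: float) -> float:
    """How many seconds of EEG are processed per second of compute."""
    return recording_s / processing_s


def per_window_budget_ms(recording_s: float, processing_s: float,
                         stride_s: float) -> float:
    """Average compute time per sliding window, in milliseconds."""
    n_windows = recording_s / stride_s
    return 1000.0 * processing_s / n_windows


HOUR_S = 3600.0
laptop = realtime_factor(HOUR_S, 13.0)   # at least ~277x real time
gpu = realtime_factor(HOUR_S, 6.0)       # at least 600x real time
laptop_ms = per_window_budget_ms(HOUR_S, 13.0, stride_s=2.0)  # ~7.2 ms
```

A real-time monitor only needs to finish one window every 2 seconds, so these figures leave a margin of more than two orders of magnitude on the tested hardware.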

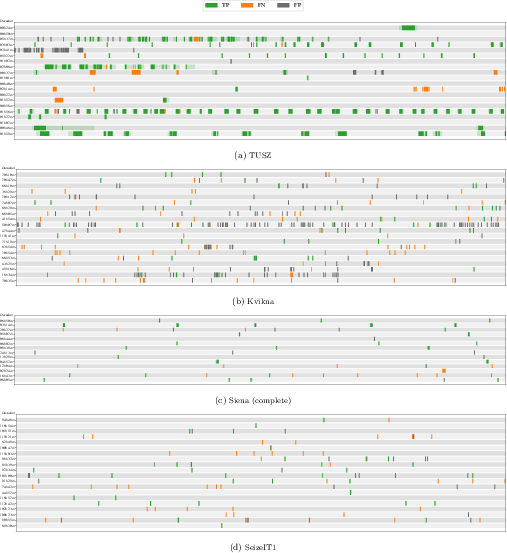

Model Predictions and Errors

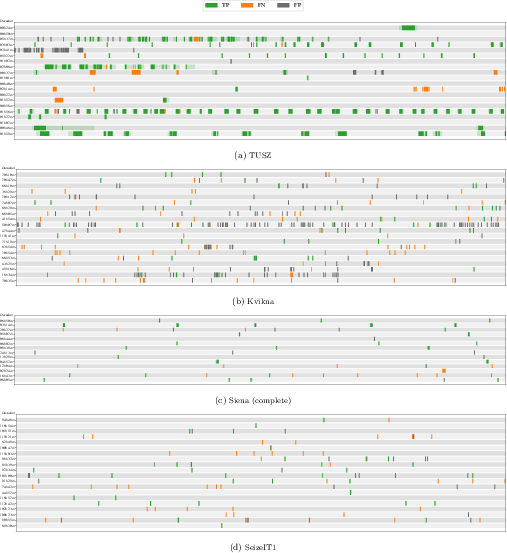

Visualizations of predictions on test data show close alignment with the true seizure annotations. False positives arise primarily from artifact-dominated recordings, especially in the ambulatory, at-home Kvikna data. Model errors cluster around unlabeled brief seizures, the limits of the context segments, and unanticipated signal artifacts.

Figure 5: Model-predicted segments versus ground-truth seizure events across test set patients; green (correct), orange (missed), black (false alarm), highlighting model strengths and error modes.

Implications and Future Directions

LookAroundNet establishes several critical points for advancement in automated seizure detection:

- Temporal context is essential: Incorporating pre- and post-segments robustly improves discrimination between seizures and non-seizure events.

- Training set diversity is non-negotiable: Generalization is primarily driven by access to varied clinical and ambulatory signal sources.

- Ensembling over context alignments is more effective than context window extension: Integrating distinct context windows lowers error rates more than simply extending a single window.

- Clinical viability is advanced but incomplete: Despite state-of-the-art F1, detection of very short events and false positive elimination in artifact-heavy intervals remain challenging. Real-world application will require improved artifact detection, robust post-processing, and more granular annotation protocol alignment.

Prospective applications include real-time, edge-based detection in home and wearable EEG settings. Future research directions involve dynamic context adaptation, automatic artifact rejection, explainability analyses via attention map inspection, and re-training or transfer learning for neonatal and other populations that are not well represented in current datasets.

Conclusion

LookAroundNet achieves state-of-the-art performance for automated EEG seizure detection by leveraging extended temporal context within an efficient transformer-based framework. Its generalization across clinical and home environments, low computational requirements, and favorable trade-off between seizure detection rate and false positives per day underscore its practical clinical value. Further work should focus on error analysis, explainability, and tailored adaptation to outlier patient cohorts.