ConvMambaNet: A Hybrid CNN-Mamba State Space Architecture for Accurate and Real-Time EEG Seizure Detection

Abstract: Epilepsy is a chronic neurological disorder marked by recurrent seizures that can severely impact quality of life. Electroencephalography (EEG) remains the primary tool for monitoring neural activity and detecting seizures, yet automated analysis remains challenging due to the temporal complexity of EEG signals. This study introduces ConvMambaNet, a hybrid deep learning model that integrates Convolutional Neural Networks (CNNs) with the Mamba Structured State Space Model (SSM) to enhance temporal feature extraction. By embedding the Mamba-SSM block within a CNN framework, the model effectively captures both spatial and long-range temporal dynamics. Evaluated on the CHB-MIT Scalp EEG dataset, ConvMambaNet achieved a 99% accuracy and demonstrated robust performance under severe class imbalance. These results underscore the model's potential for precise and efficient seizure detection, offering a viable path toward real-time, automated epilepsy monitoring in clinical environments.

Explain it Like I'm 14

Plain-English Summary of “ConvMambaNet: A Hybrid CNN–Mamba State Space Architecture for Accurate and Real-Time EEG Seizure Detection”

1) What is this paper about?

This paper is about helping doctors spot epileptic seizures quickly and accurately by using brainwave recordings called EEG. The authors built a new computer model, called ConvMambaNet, that mixes two ideas: CNNs (good at finding shapes and patterns) and a fast time-sequence model called Mamba (good at tracking how things change over time). Their goal is to detect seizures in real time, with high accuracy, and without using too much computing power.

2) What questions were they trying to answer?

The authors focused on a few simple questions:

- Can a model that combines “where” patterns happen (CNN) and “when” they happen (Mamba) detect seizures better?

- Will it still work well even when seizures are rare in the data (there are many normal moments and few seizure moments)?

- Is it fast enough to be used in real-time monitoring, like in a hospital?

- How does it compare to other popular methods, such as plain CNNs, RNNs/LSTMs, and Transformers?

3) How did they do it?

First, here’s what the data is:

- EEG is a way to record the brain’s electrical signals using sensors on the scalp.

- They used a well-known dataset called CHB-MIT, which contains many hours of EEG from children with epilepsy. It’s recorded at 256 samples per second and includes exact times when seizures start and end.

Here’s how their approach works:

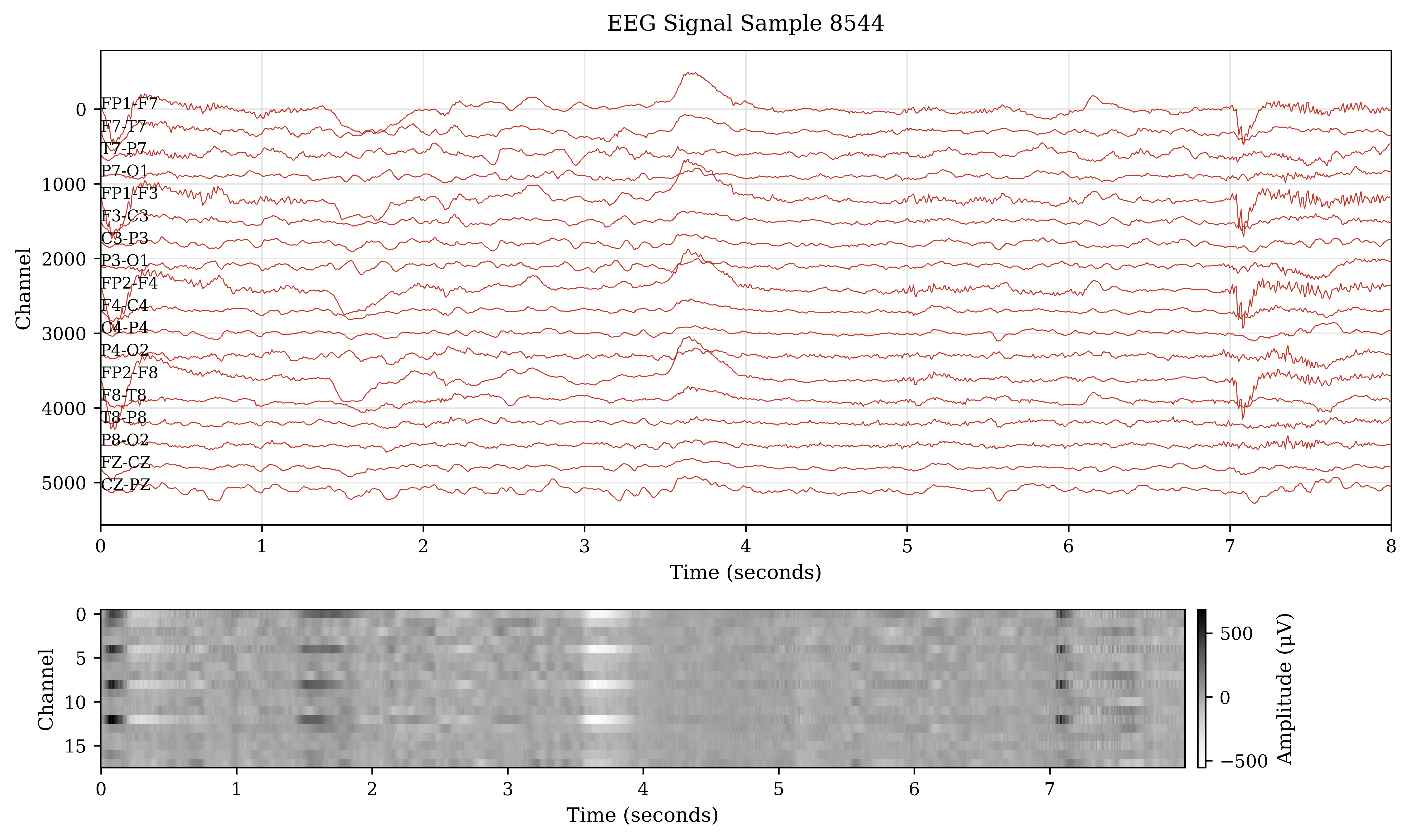

- Data prep: They picked common EEG channels, cleaned the signals, and split the recordings into short chunks (windows) of 8 seconds, stepping every 4 seconds. Each chunk was labeled as “seizure” or “non-seizure.” Because seizures are rare, they balanced the training examples so the model learned both classes well.

- The model design:

  - CNN part (spatial): Think of this like scanning a picture to find shapes. The CNN learns patterns across EEG channels at a single moment.

  - Mamba State Space Model (temporal): Think of this like following the melody of a song over time. A “state space model” keeps a running summary of what happened before and updates it as new data arrives. Mamba is a newer, faster version of this idea, built to handle long sequences efficiently on modern hardware.

  - By combining both, ConvMambaNet learns where patterns appear across channels and how they change over time within each 8-second chunk.

- Training and evaluation:

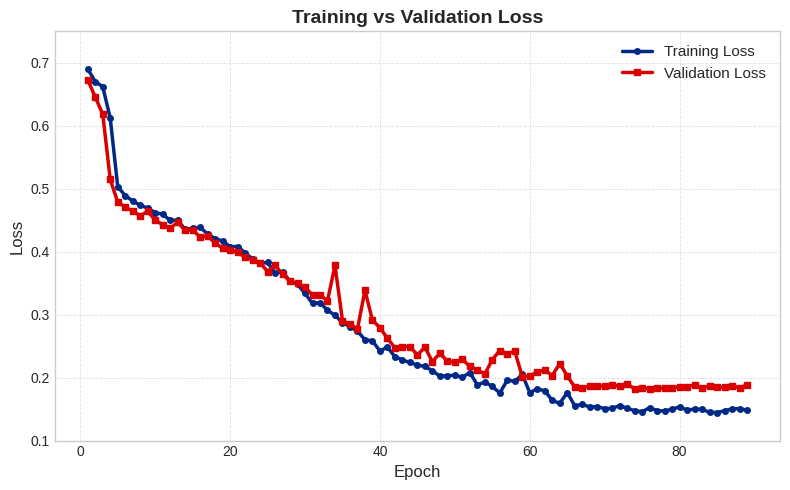

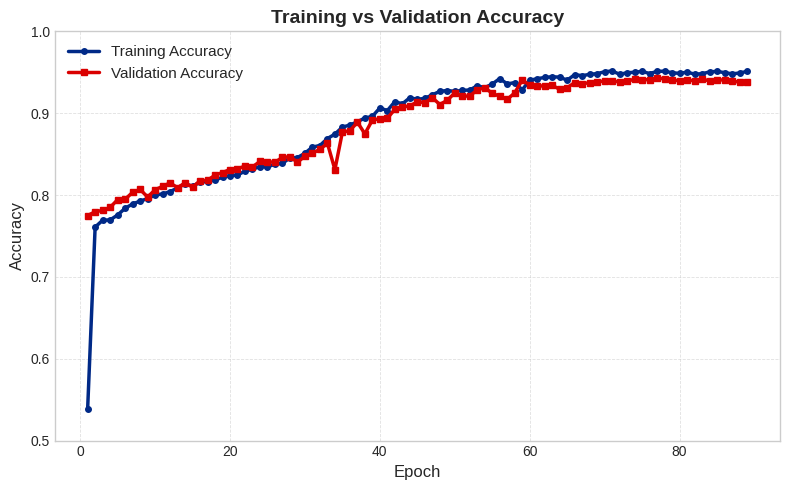

- They used standard deep learning training tricks to keep learning stable and avoid overfitting.

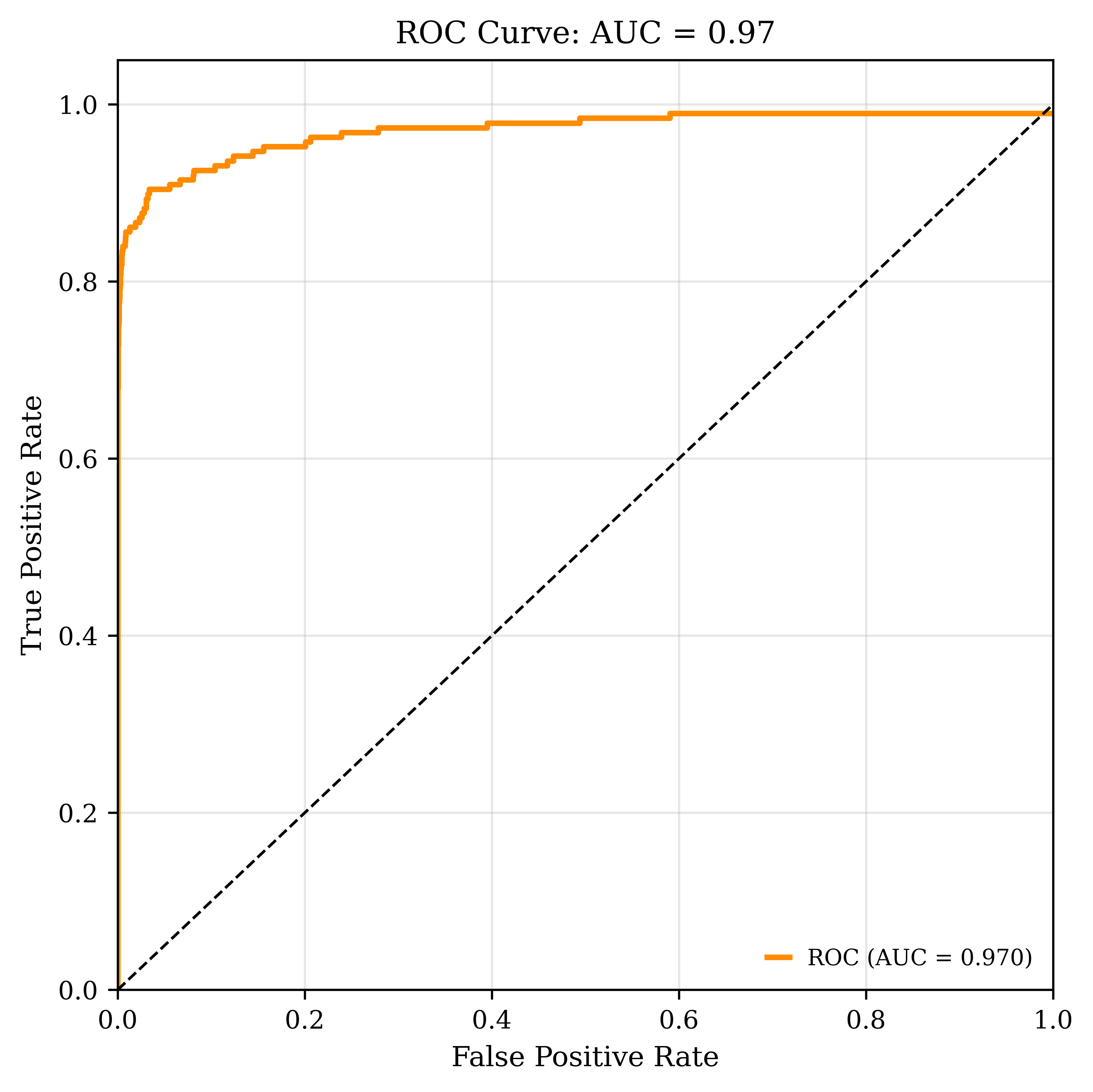

- They measured performance with accuracy, precision, recall, F1-score, and AUC. In simple terms: how often it’s right, how cleanly it finds seizures, and how well it separates seizure from non-seizure across different decision thresholds.
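The chunking step described above (8-second windows, stepping every 4 seconds, at 256 samples per second) can be sketched in plain Python. This is only an illustration: the function names, the 60-second example recording, and the rule "a window counts as seizure if it overlaps a seizure interval at all" are assumptions, not details confirmed by the paper.

```python
def window_starts(n_samples, fs=256, win_s=8, step_s=4):
    """Start indices of fixed-length windows that fit fully inside the recording."""
    win, step = win_s * fs, step_s * fs
    return list(range(0, n_samples - win + 1, step))


def label_windows(starts, seizures, fs=256, win_s=8):
    """Label a window 1 if it overlaps any seizure interval (given in seconds).

    The any-overlap rule is an assumption made for this sketch.
    """
    labels = []
    for s in starts:
        t0, t1 = s / fs, s / fs + win_s
        overlaps = any(t0 < off and on < t1 for on, off in seizures)
        labels.append(int(overlaps))
    return labels


# Hypothetical 60-second recording with one seizure from 20 s to 30 s.
starts = window_starts(60 * 256)
labels = label_windows(starts, seizures=[(20.0, 30.0)])
print(len(starts), "windows, of which", sum(labels), "overlap the seizure")
```

Because consecutive windows share half of their samples, neighbouring windows are strongly correlated, which is one reason the choice of train/test split matters for results like these.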

A helpful analogy:

- CNN is like spotting shapes in a photo (what and where).

- Mamba SSM is like remembering the tune in a song (how things evolve over time).

- ConvMambaNet does both at once.
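The "running summary" idea behind a state space model can be sketched with a single number as the state. The code below is a toy, one-dimensional version of a selective scan, not the paper's Mamba block: the parameters `a`, `b`, `c`, `w`, and `bias` are hypothetical, and a real Mamba layer uses many state dimensions and learned projections.

```python
import math


def softplus(z):
    """Smooth function that maps any real number to a positive one."""
    return math.log1p(math.exp(z))


def selective_scan(xs, a=-1.0, b=1.0, c=1.0, w=1.0, bias=0.0):
    """Toy 1-D selective state-space scan (illustrative assumption, not Mamba itself).

    h_t = a_bar_t * h_{t-1} + b_bar_t * x_t,   y_t = c * h_t
    The step size delta_t depends on the input, which is the "selective" part.
    """
    h, ys = 0.0, []
    for x in xs:
        delta = softplus(w * x + bias)   # input-dependent step size, always > 0
        a_bar = math.exp(delta * a)      # zero-order-hold discretization; in (0, 1) when a < 0
        b_bar = (a_bar - 1.0) / a * b    # matching discretization of the input term
        h = a_bar * h + b_bar * x        # update the running summary
        ys.append(c * h)
    return ys


# A constant input drives the state toward the steady value -b / a * x.
print(selective_scan([1.0] * 5))
```

Keeping `a` negative makes `a_bar` smaller than 1, so old information fades instead of blowing up; this is the same stability concern the paper raises when it mentions non-positive eigenvalues at initialization.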

4) What did they find, and why is it important?

Main results on the CHB-MIT dataset:

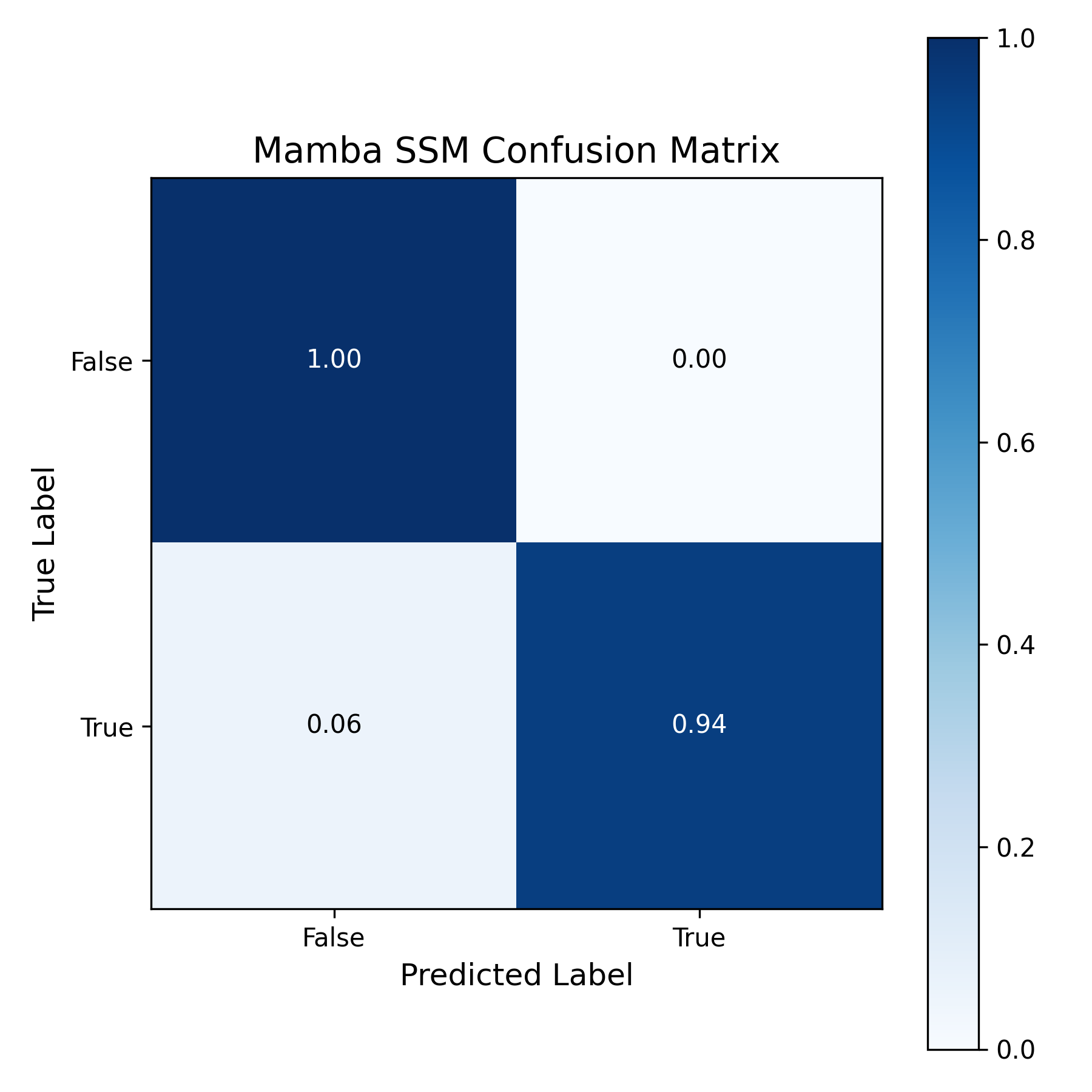

- Accuracy: 99%

- Precision: 0.99

- Recall: 0.99

- F1-score: 0.99

- AUC: 0.97
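To see how scores like these relate to each other, here is a minimal sketch that computes them from confusion-matrix counts. The counts are made up for illustration and are not the paper's confusion matrix; the function names are likewise hypothetical.

```python
def binary_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 for the positive (seizure) class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def weighted_f1(tp, fp, fn, tn):
    """F1 averaged over both classes, weighted by how many true examples each has."""
    f1_pos = binary_metrics(tp, fp, fn, tn)["f1"]
    f1_neg = binary_metrics(tn, fn, fp, tp)["f1"]  # swap roles: non-seizure as "positive"
    n_pos, n_neg = tp + fn, tn + fp
    return (f1_pos * n_pos + f1_neg * n_neg) / (n_pos + n_neg)


# Hypothetical test set: 100 seizure windows, 9,900 non-seizure windows.
counts = dict(tp=90, fp=10, fn=10, tn=9890)
print(binary_metrics(**counts))
print("weighted F1:", weighted_f1(**counts))
```

With these made-up counts, accuracy and weighted F1 both come out near 0.998 even though seizure-class precision and recall are 0.90. When one class dominates, weighted scores mostly reflect the majority class, so per-class numbers are worth reading alongside them.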

Why this matters:

- It’s very accurate at telling seizure from non-seizure, which can reduce false alarms and missed events.

- It handled class imbalance well (there are many more non-seizure moments than seizure moments in real life).

- It’s fast. One full pass through the training data (an “epoch”) took about 48 seconds—faster than their CNN, RNN, and Transformer baselines, which took 1–4 minutes per epoch.

- Compared to other published methods on the same dataset, ConvMambaNet matched or beat their scores while being more efficient. This is important for real-time monitoring, where speed and reliability are both critical.

5) What’s the bigger impact?

If this kind of system is put into practice:

- Hospitals could monitor patients in real time with fewer false alarms and faster responses.

- Doctors and nurses would get more reliable alerts, saving time and improving care.

- Because the model is efficient, it could be used on devices with limited computing power, potentially enabling wearable or bedside monitoring.

- It shows a promising path forward: combining “pattern-in-space” (CNN) with “pattern-over-time” (Mamba SSM) can improve performance without slowing down.

In short, ConvMambaNet is a strong, fast tool for detecting seizures from EEG. It could make real-time epilepsy monitoring more accurate and practical, helping patients get quicker and better care. Further testing across more hospitals and patient groups would be a good next step to confirm how well it generalizes in the real world.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of the main uncertainties and omissions that future work could address:

- External validity: results are reported only on CHB-MIT (pediatric, single-center). No cross-dataset evaluation (e.g., TUH, EPILEPSIAE, Bonn) or adult cohorts to assess generalization across age groups, hospitals, hardware, and montages.

- Patient-independent generalization: the split protocol is unclear regarding strict leave-one-subject-out (LOSO) or cross-subject evaluation. Quantify performance when training on some patients and testing on unseen patients, and compare against patient-specific models.

- Continuous-time, clinically relevant metrics: no reporting of event-level sensitivity, false alarms per hour (FA/h), detection latency from onset, or time-to-first-detection on continuous recordings—key metrics for clinical deployment.

- Real-time, causal inference: the model’s causal/online behavior is not evaluated (e.g., lookahead-free inference, streaming windowing). Quantify latency-accuracy trade-offs for true real-time operation.

- Inference efficiency on edge hardware: only training time on a desktop GPU is reported. Absent are inference latency, memory footprint, throughput, and energy on CPU-only systems, embedded GPUs, or wearables.

- Class imbalance under real-world priors: training uses balanced sampling; evaluation under realistic long interictal periods is missing. Report precision–recall curves, PR-AUC, FA/h over long continuous recordings, and threshold calibration for imbalanced deployments.

- Labeling rigor: reliance on WFDB-derived annotations is described but not validated against clinician-reviewed onset/offsets. Clarify label provenance and quantify sensitivity to annotation uncertainty and boundary errors.

- Windowing design: fixed 8 s windows with 4 s stride were used without analysis. Study the effect of window length/stride on latency, sensitivity, and stability; evaluate non-overlapping test windows to reduce correlation bias.

- Seizure-level vs window-level evaluation: metrics appear window-aggregated. Report seizure-level detection rates, per-seizure sensitivity, and time-to-detection to avoid inflation from many interictal windows.

- Ablation studies: no quantification of the contribution of CNN vs Mamba SSM vs added attention, or of key hyperparameters (e.g., SSM state size, depth, conv width, dropout, initialization). Provide controlled ablations and parameter sweeps.

- Attention within Mamba block: the added multi-head attention may compromise hardware efficiency; its necessity and cost–benefit trade-off are not analyzed. Compare Mamba-only vs Mamba+attention in accuracy and latency.

- Claim of long-range modeling vs short windows: with 8 s windows, benefits of long-range SSMs remain unsubstantiated. Test longer contexts (e.g., 30–120 s) and show performance scaling with sequence length.

- Preprocessing details and robustness: filtering (band-pass/notch), re-referencing, artifact mitigation (EOG/EMG/motion), and handling of missing/variable channels are insufficiently specified. Evaluate robustness to artifacts and montage changes.

- Channel robustness and adaptability: the fixed bipolar channel set was “qualitatively” chosen. Assess performance with channel dropouts, reduced-channel subsets, and channel-adaptive or single-channel variants.

- Domain shift and physiological states: no stratified analysis across sleep/wake states, medication changes, or artifacts. Evaluate domain adaptation and robustness to distribution shifts.

- Seizure heterogeneity: no breakdown by seizure types, durations, or morphologies. Report per-type performance and failure modes.

- Calibration and uncertainty: model confidence calibration, reliability diagrams, and uncertainty estimates (for alarm triage) are not reported.

- Statistical rigor and reproducibility: single split with no cross-validation, CIs, or significance tests; limited baseline tuning details. Release code, seeds, and exact hyperparameters; report variability across runs.

- Fairness of comparisons: literature metrics are mixed across protocols and datasets; head-to-head comparisons under identical splits/protocols are missing.

- Post-processing and alarm logic: unlike some baselines, no post-processing or smoothing is evaluated. Study hysteresis, refractory periods, and alarm aggregation to reduce FA/h.

- Safety and OOD detection: no mechanisms for out-of-distribution detection, drift monitoring, or fail-safe behavior in atypical EEG or hardware faults.

- Transfer learning and personalization: strategies for few-shot patient adaptation, continual learning, or federated training are not explored.

- Multi-task extensions: the model addresses binary detection only. Explore seizure prediction (preictal), onset/offset localization, and source/region localization to increase clinical utility.

- Ethical/operational integration: human-in-the-loop workflows, alarm review burden, and clinical usability studies are absent; investigate how the system integrates into ICU/ward monitoring.
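Several of the gaps above (event-level sensitivity, FA/h, detection latency, alarm logic) can be made concrete with a small sketch. Everything here is an assumption for illustration: the rule of requiring `k` consecutive positive windows, the 30-second refractory period, the matching rule for true detections, and the synthetic one-hour prediction stream are not taken from the paper.

```python
def raise_alarms(preds, step_s=4, win_s=8, k=2, refractory_s=30):
    """Raise an alarm after k consecutive positive windows, then stay silent
    for refractory_s seconds. Returns alarm times in seconds."""
    alarms, run, silent_until = [], 0, float("-inf")
    for i, p in enumerate(preds):
        run = run + 1 if p else 0
        t_end = i * step_s + win_s          # time at which window i is fully observed
        if run >= k and t_end >= silent_until:
            alarms.append(t_end)
            silent_until = t_end + refractory_s
    return alarms


def event_metrics(alarms, seizures, duration_s, win_s=8):
    """Event-level sensitivity, false alarms per hour, and detection latencies."""
    detected, latencies, false_alarms = set(), [], 0
    for t in alarms:
        hit = next((j for j, (on, off) in enumerate(seizures)
                    if on <= t <= off + win_s), None)
        if hit is None:
            false_alarms += 1
        elif hit not in detected:
            detected.add(hit)
            latencies.append(t - seizures[hit][0])
    sensitivity = len(detected) / len(seizures) if seizures else float("nan")
    return sensitivity, false_alarms / (duration_s / 3600), latencies


# Synthetic one-hour stream of window predictions (899 windows of 8 s, 4 s step).
preds = [0] * 899
for i in range(100, 106):                   # windows covering a seizure at 398-430 s
    preds[i] = 1
preds[500] = preds[501] = 1                 # a short burst of false positives

alarms = raise_alarms(preds)
print(alarms, event_metrics(alarms, seizures=[(398, 430)], duration_s=3600))
```

Raising `k` or the refractory period usually lowers FA/h at the cost of longer latency, which is the trade-off the listed gaps ask future work to quantify.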

Practical Applications

Immediate Applications

Below are concrete use cases that can be deployed now or piloted with minimal adaptation, drawing on ConvMambaNet’s real-time, high-accuracy seizure detection and efficient long-sequence modeling.

- Clinical EEG triage and real-time alerting in Epilepsy Monitoring Units (EMUs) and ICUs

  - Sectors: Healthcare, Software (clinical AI)

  - Tools/products/workflows: Containerized inference microservice (gRPC/REST) connected to existing EEG systems; bedside dashboards; HL7/FHIR event hooks to nurse call systems; integration with EDF+/BIDS-EEG pipelines; MNE/WFDB-based pre-processing; on-prem GPU/CPU inference for low-latency alerts; configurable thresholds to tune sensitivity/specificity.

  - Assumptions/dependencies: Initial use as decision-support (not autonomous diagnosis); site-specific calibration to channels/montages and artifact profile; prospective validation beyond CHB-MIT pediatric cohort; cybersecurity and HIPAA/GDPR compliance; clinician-in-the-loop for alarm fatigue mitigation.

- Ambulatory EEG review acceleration for neurologists

  - Sectors: Healthcare, Software (digital health)

  - Tools/products/workflows: Offline batch scoring of 24–72h recordings; ranked timeline of high-probability epochs; active-learning review UI that retrains on confirmed events; PACS/EHR attachment of AI summaries; automated report drafts with seizure counts/durations.

  - Assumptions/dependencies: Accurate channel mapping and metadata; variability across ambulatory devices; labeling drift controls; traceability/audit logs maintained for medico-legal review.

- Clinical trial analytics for seizure burden quantification (exploratory endpoints)

  - Sectors: Healthcare, Pharma/MedTech R&D

  - Tools/products/workflows: Standardized analysis pipeline for multi-site EEG datasets; harmonized preprocessing (MNE) and model scoring; uncertainty estimates to flag borderline events; quality-control dashboards.

  - Assumptions/dependencies: IRB/ethics approval for secondary AI analysis; cross-site calibration; adjudication workflow for ground truth.

- Edge deployment prototypes on low-cost hardware for point-of-care monitoring

  - Sectors: Healthcare, Embedded/Edge AI

  - Tools/products/workflows: Jetson Nano/Xavier or Raspberry Pi 5 + NPU prototypes; quantization-aware training (INT8/FP16); streaming inference with sub-second latency; battery/thermal profiling; BLE/Wi-Fi connectivity to clinician apps.

  - Assumptions/dependencies: Robust artifact handling; power budget for continuous EEG; regulatory pathway planning; re-training with device-specific noise characteristics.

- EEG vendor SDK or PACS plugin for automated seizure flagging

  - Sectors: Medical device industry, Software

  - Tools/products/workflows: OEM SDK with standardized gRPC APIs; plugin for major EEG systems (e.g., EDF+/DICOM-encapsulated EEG import); model versioning and performance monitoring; integration with hospital IT.

  - Assumptions/dependencies: Vendor partnerships; post-market surveillance; configuration for different montages and sampling rates.

- Research reproducibility package and teaching module on SSMs for biosignals

  - Sectors: Academia, Education

  - Tools/products/workflows: Open-source code with MNE/WFDB data loaders; scripted experiments; notebooks explaining Mamba-SSM integration and initialization; ablation studies for class imbalance handling (balanced sampling, dropout).

  - Assumptions/dependencies: Licensing for dataset access; compute availability for coursework; maintenance of library dependencies.

- Cross-signal adaptation to other biomedical time series (pilot-level)

  - Sectors: Healthcare

  - Examples: ECG arrhythmia detection; sleep staging; ICU event detection (e.g., delirium/sedation state via EEG); EMG tremor detection.

  - Tools/products/workflows: Replace input front-end and preprocessing; retrain ConvMamba-style hybrid on domain datasets; deploy as hospital middleware for multi-signal monitoring.

  - Assumptions/dependencies: Labeled datasets for target modality; domain-specific artifacts; clinical validation.

- Industrial and IoT anomaly detection prototypes using Mamba-style long-sequence modeling

  - Sectors: Manufacturing, Energy, Transportation

  - Examples: Vibration- or acoustic-based predictive maintenance; energy grid disturbance detection; wind turbine condition monitoring; rail/engine anomaly detection.

  - Tools/products/workflows: Edge inference agent; sliding-window scoring; alert integrations (SCADA/CMMS); model drift tracking.

  - Assumptions/dependencies: Sufficient labeled anomalies; sensor quality; adaptation of preprocessing; safety case documentation for operations.
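Several of the workflows above rely on streaming, sliding-window scoring. One possible shape for the buffering step is sketched below, assuming a single channel and a hypothetical `StreamingWindower` class; the model call that would score each window is left out because the paper does not describe an inference interface.

```python
from collections import deque


class StreamingWindower:
    """Collects incoming samples and emits overlapping fixed-length windows.

    Hypothetical helper for illustration; assumes step <= win.
    """

    def __init__(self, win, step):
        self.buf = deque(maxlen=win)   # keeps only the most recent `win` samples
        self.win, self.step = win, step
        self.since_last = 0            # samples received since the last emitted window

    def push(self, samples):
        """Feed new samples; yield each window as soon as it is complete and due."""
        for x in samples:
            self.buf.append(x)
            self.since_last += 1
            if len(self.buf) == self.win and self.since_last >= self.step:
                self.since_last = 0
                yield list(self.buf)


# Toy stream: windows of 8 samples every 4 samples, fed in uneven chunks.
windower = StreamingWindower(win=8, step=4)
for chunk in (list(range(5)), list(range(5, 20))):
    for window in windower.push(chunk):
        print(window[0], "...", window[-1])   # a real system would score the window here
```

For the paper's setting, `win` and `step` would be 2048 and 1024 samples (8 s and 4 s at 256 Hz), so the earliest possible alert arrives 8 seconds after monitoring starts and updates follow every 4 seconds.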

Long-Term Applications

The following use cases require additional research, multi-center validation, scaling, or regulatory development before widespread deployment.

- FDA/CE-cleared closed-loop neurostimulation trigger (RNS/DBS) using on-device detection

  - Sectors: Healthcare, MedTech (neurostimulation)

  - Tools/products/workflows: Ultra-low-latency embedded inference; safety-critical software lifecycle (IEC 62304); verification/validation under ISO 13485; real-time interfaces to stimulation controllers; formal post-market surveillance.

  - Assumptions/dependencies: Rigorous, prospective multi-center trials across age groups and seizure types; hazard analyses for false positives/negatives; co-design with stimulation protocols; long battery life and hardware certification.

- Seizure prediction (preictal forecasting) with Mamba-augmented temporal context

  - Sectors: Healthcare, Digital therapeutics

  - Tools/products/workflows: Training on preictal/ictal/interictal labels; cost-sensitive loss for early warnings; patient-specific personalization modules; user-facing interventions (medication prompts, behavioral guidance).

  - Assumptions/dependencies: Access to long-duration labeled preictal data; clinically acceptable lead time and false alarm rates; human factors validation.

- Generalizable, foundation-like EEG models robust to site/device variability

  - Sectors: Healthcare, AI Platforms

  - Tools/products/workflows: Multi-institutional federated learning; domain adaptation and calibration layers; shift detection and automatic threshold tuning; continual learning with clinician feedback loops.

  - Assumptions/dependencies: Data-sharing agreements or federated infrastructure; harmonized metadata (montages, sampling, impedance); governance for continual updates.

- Consumer-grade continuous monitoring with dry electrodes and smartphone inference

  - Sectors: Consumer health, Telemedicine

  - Tools/products/workflows: Wearable headbands/behind-the-ear devices; on-phone inference using NNAPI/Core ML; caregiver alerting and escalation; longitudinal tracking and adherence nudges.

  - Assumptions/dependencies: Sufficient signal quality from dry electrodes; artifact suppression (motion, EMG); privacy-by-design; regulatory classification as medical device; reimbursement pathways.

- Multimodal fusion (EEG + ECG + IMU/audio/video) to reduce false alarms

  - Sectors: Healthcare, Assistive technology

  - Tools/products/workflows: Sensor fusion architectures; synchronized acquisition; context-aware gating; explainability dashboards indicating modal contributions.

  - Assumptions/dependencies: New datasets with synchronized modalities; increased power/compute requirements; consent and privacy constraints (especially with audio/video).

- Hospital-wide neuro-monitoring orchestration with AI-driven staffing and escalation

  - Sectors: Healthcare operations, Policy

  - Tools/products/workflows: Enterprise monitoring hub aggregating EEG/ECG/SpO2; AI-driven prioritization of beds; integration with paging/incident systems; KPI tracking (alarm burden, response time, outcomes).

  - Assumptions/dependencies: Interoperability (FHIR, HL7 v2, DICOM-EEG); safety cases for workflow automation; change management and training; institutional buy-in.

- Standardized evaluation protocols and policy guidance for AI EEG tools

  - Sectors: Policy, Standards, Regulatory

  - Tools/products/workflows: Benchmark suites with multi-site data; reporting standards (e.g., CONSORT-AI/DECIDE-AI extensions for EEG); bias/fairness audits across age/sex/ethnicity; post-deployment monitoring requirements.

  - Assumptions/dependencies: Collaboration among societies (AAN, AES), regulators, and vendors; access to representative datasets; alignment with evolving AI regulations.

- Hardware–algorithm co-design and accelerators for SSM/Mamba operators

  - Sectors: Semiconductors, Edge AI

  - Tools/products/workflows: DSP/NPU primitives for selective state-space scans; compiler support for sequence kernels; co-optimized quantization; benchmarking suites for long-sequence latency/jitter.

  - Assumptions/dependencies: Market demand for long-sequence workloads (biosignals, audio, industrial telemetry); ecosystem support (TVM, ONNX, CUDA/ROCm backends).

- Cross-sector long-sequence analytics platforms (finance, energy, seismology)

  - Sectors: Finance, Energy, Environmental monitoring

  - Examples: Market regime change detection; grid instability early warning; earthquake early detection.

  - Tools/products/workflows: Domain-specific adapters; risk-aware alerting with confidence intervals; governance for model risk (e.g., SR 11-7 in finance).

  - Assumptions/dependencies: Domain labels and ground truth scarcity; strict oversight for model risk; real-time data feeds and latency SLAs.

Notes on feasibility and transferability

- Reported performance (99% accuracy, AUC 0.97) is on CHB-MIT pediatric scalp EEG with specific preprocessing (8 s windows, 4 s stride, selected bipolar montages). Real-world performance will depend on:

  - Channel montages, sampling rates, and device noise profiles.

  - Artifact prevalence (motion, muscle, electrode pops) and clinical workflow.

  - Patient mix (adult vs pediatric), seizure types, comorbidities, and medications.

  - Prospective validation, external datasets, and calibration/personalization.

- The architecture’s strengths that enable these applications:

  - Efficient long-range temporal modeling (Mamba SSM) embedded within CNN spatial feature extractors.

  - Robustness under class imbalance via balanced sampling and regularization.

  - Lower reported training time per epoch than the paper's CNN, RNN, and Transformer baselines, which may help edge and real-time use (inference cost is not reported).

- Common dependencies across applications:

  - Data governance and privacy compliance (HIPAA/GDPR).

  - Model monitoring for drift and recalibration over time.

  - Clear human-in-the-loop policies to manage residual false alarms.

  - Documentation for auditability, reproducibility, and safety cases.
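One way to see why calibration and prospective validation matter is to ask what fraction of alarms would be real seizures at different seizure rates. The sketch below applies Bayes' rule. The 0.99 sensitivity and specificity are illustrative assumptions rather than figures from the paper, as are the prevalence values.

```python
def ppv(sensitivity, specificity, prevalence):
    """Positive predictive value: the share of alarms that are true seizures."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Same hypothetical detector, evaluated at different shares of seizure windows.
for prevalence in (0.5, 0.05, 0.005):
    print(f"seizure share {prevalence:>5}: PPV = {ppv(0.99, 0.99, prevalence):.3f}")
```

On a balanced set (half the windows are seizures), almost every alarm is correct. If only 1 window in 200 contains a seizure, the same detector would be right for roughly one alarm in three. This is why results from balanced sampling do not translate directly to continuous monitoring.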

Glossary

- Adam optimizer: A stochastic optimization algorithm that adapts learning rates for each parameter using estimates of first and second moments of gradients; widely used to train deep networks. "trained by Adam optimizer \cite{ravichandranadam}."

- Area under the receiver operating characteristic curve (AUC-ROC): A performance metric that summarizes a classifier’s ability to discriminate between classes across thresholds by integrating the ROC curve. "and the area under the receiver operating characteristic curve (AUC-ROC) are all included."

- Batch Normalization: A technique that normalizes intermediate activations to stabilize and speed up training by controlling mean and variance within batches. "Batch Normalization begins with , ."

- Bipolar EEG channels: EEG montage where each channel records the voltage difference between two electrodes, enhancing local activity detection. "The bipolar EEG channels in Table \ref{tab:channel_labels} were chosen"

- CHB-MIT Scalp EEG dataset: A benchmark EEG dataset of pediatric subjects with epilepsy, used for seizure detection research. "Evaluated on the CHB-MIT Scalp EEG dataset, ConvMambaNet achieved a 99% accuracy"

- Class imbalance: A condition where one class is significantly more prevalent than others, complicating training and evaluation of classifiers. "demonstrated robust performance under severe class imbalance."

- Confusion matrix: A table summarizing true/false positives and negatives to evaluate classification performance. "validated by the confusion matrix shown in Figure \ref{fig:confusion-roc-vertical}."

- Depthwise convolution: A convolution that operates on each input channel separately, reducing parameters and computation. "applied in the depthwise convolution before the selective scan."

- Dropout: A regularization technique that randomly zeroes activations during training to reduce overfitting. "have the dropout terms utilized to reduce overfitting"

- Eigenvalues: Scalars indicating the stretching/shrinking factors along principal directions of a linear transformation; here used to ensure stability of the state-space dynamics. "so that eigenvalues are non-positive at initialization"

- Electroencephalography (EEG): A method for recording electrical activity of the brain via scalp electrodes. "Electroencephalography (EEG) remains the primary tool for monitoring neural activity"

- Exploding/vanishing gradients: Training instabilities in deep/long sequence models where gradients grow or shrink excessively, hindering learning. "alleviate exploding/vanishing gradients on long EEG sequences."

- Glorot initialization: A weight initialization scheme that maintains variance through layers to stabilize training. "Fully connected layer use Glorot initialization with zero bias."

- GPU parallelism: Leveraging the parallel processing capabilities of GPUs to accelerate model training and inference. "achieved by utilizing GPU parallelism."

- He/Kaiming initialization: A weight initialization method tailored for ReLU-like activations to preserve signal variance. "Weights in convolutions are initialized at He/Kaiming with zero biases"

- Long Short-Term Memory (LSTM): A type of recurrent neural network architecture designed to capture long-term dependencies via gating mechanisms. "Recurrent Neural Networks (RNNs) (e.g., Long Short-Term Memory (LSTM)) handle temporal dependencies."

- Mamba Structured State Space Model (SSM): A hardware-efficient sequence modeling framework using selective state-space layers to capture long-range dependencies. "the recently developed Mamba Structured State Space Model (SSM)."

- Mamba-style selective recurrence: A sequence modeling strategy that selectively updates states to balance efficiency and contextual modeling. "by combining bidirectional context modeling with Mamba-style selective recurrence"

- MNE library: An open-source Python toolkit for processing and analyzing MEG/EEG data. "processed by means of the MNE library \cite{esch2019mne, samarpita2023differentiating}."

- Multi-head attention mechanisms: Attention modules that project queries/keys/values into multiple subspaces to capture diverse relationships in sequences. "multi-head attention mechanisms \cite{vaswani2017attention} are embedded into the Mamba-SSM block"

- Onset-offset timing: Precise annotations marking the start and end of seizure events in time. "labelled with exact onset-offset timing"

- Recurrent Neural Networks (RNNs): Neural architectures that process sequences by maintaining hidden states across time steps. "Recurrent Neural Networks (RNNs) (e.g., Long Short-Term Memory (LSTM)) handle temporal dependencies."

- Selective scan: A Mamba-specific mechanism that performs efficient sequence scanning by selectively updating states. "before the selective scan."

- Sensitivity: The true positive rate measuring how well a model detects actual positive events. "ConvMambaNet's high specificity and good sensitivity are validated"

- Spatiotemporal attention mechanisms: Attention methods that jointly model spatial and temporal dependencies, often in sequence and graph data. "spatiotemporal attention mechanisms can perform exceptionally well on seizure prediction tasks"

- Specificity: The true negative rate reflecting how well a model avoids false positives. "ConvMambaNet's high specificity and good sensitivity are validated"

- State Space Models (SSMs): Mathematical models that represent systems via hidden states and observations, suited for sequential data. "SSMs, or structured state space models, provide a mathematical framework"

- State-space representation: A formulation of dynamical systems using state vectors and linear operators to model temporal evolution. "via state-space representation."

- Stratified sampling: A data splitting strategy that preserves class proportions across train/test sets to reduce sampling bias. "via stratified sampling."

- Throughput: The rate at which a system processes data; higher throughput implies faster training/inference. "Mamba's high throughput and low latency achieved by utilizing GPU parallelism."

- Waveform Database (WFDB): A toolkit for reading, writing, and processing physiologic signal annotations and recordings. "with the help of WFDB (Waveform Database) library"

- Weighted F1-score: An F1-score averaged across classes weighted by class support, reflecting performance on imbalanced data. "The weighted F1-score was 0.99"
