TranslateGemma Technical Report

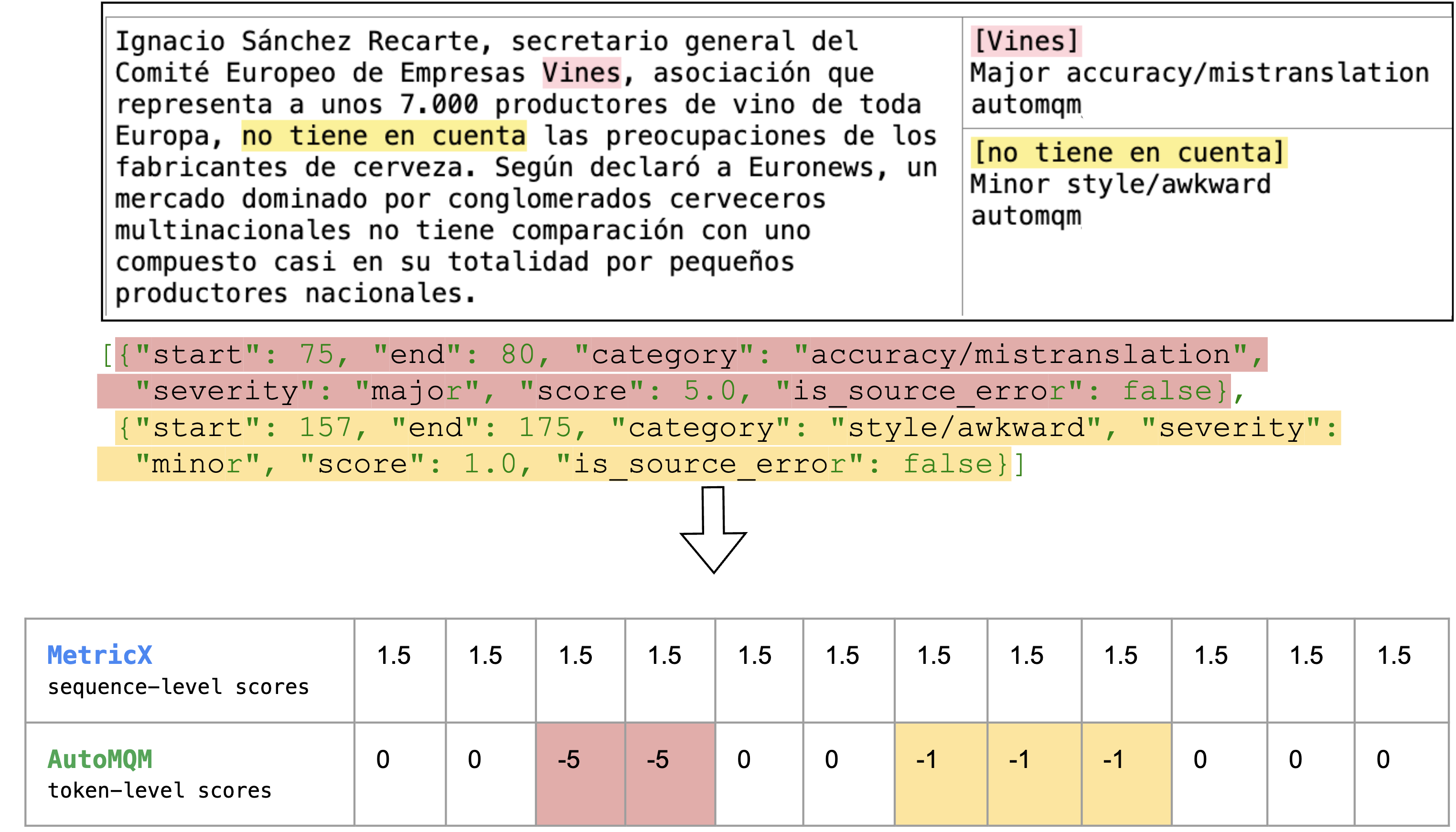

Abstract: We present TranslateGemma, a suite of open machine translation models based on the Gemma 3 foundation models. To enhance the inherent multilingual capabilities of Gemma 3 for the translation task, we employ a two-stage fine-tuning process. First, supervised fine-tuning is performed using a rich mixture of high-quality large-scale synthetic parallel data generated via state-of-the-art models and human-translated parallel data. This is followed by a reinforcement learning phase, where we optimize translation quality using an ensemble of reward models, including MetricX-QE and AutoMQM, targeting translation quality. We demonstrate the effectiveness of TranslateGemma with human evaluation on the WMT25 test set across 10 language pairs and with automatic evaluation on the WMT24++ benchmark across 55 language pairs. Automatic metrics show consistent and substantial gains over the baseline Gemma 3 models across all sizes. Notably, smaller TranslateGemma models often achieve performance comparable to larger baseline models, offering improved efficiency. We also show that TranslateGemma models retain strong multimodal capabilities, with enhanced performance on the Vistra image translation benchmark. The release of the open TranslateGemma models aims to provide the research community with powerful and adaptable tools for machine translation.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

TranslateGemma: A Simple Explanation

What is this paper about?

This paper introduces TranslateGemma, a set of open AI models made by Google to translate between many languages. They start from an existing LLM (Gemma 3) and teach it to be much better at translation. The big ideas are to train it in two stages and to check its progress carefully with both computers and human experts.

What were the researchers trying to find out?

They wanted to:

- Build strong, open translation models that people can study, use, and improve.

- Make translation better across many languages, including those with less available data.

- Keep the model useful for other tasks (not just translation) and keep its ability to understand images with text.

- See if smaller, faster models can reach the quality of larger, slower ones.

How did they do it?

Think of the model like a student learning languages. The team trained it in two steps:

- Supervised Fine-Tuning (SFT): “Classroom lessons and practice”

- The model practiced on pairs of sentences: one in a source language and the matching one in a target language. This is called “parallel data.”

- Where there wasn’t enough human-translated text, they created high-quality “synthetic” translations using a strong AI (Gemini 2.5). They were careful: they generated many options and kept only the best ones using a quality checker called MetricX.

- They also mixed in some general “follow instructions” tasks (about 30%) so the model wouldn’t forget how to do non-translation things.

- Reinforcement Learning (RL): “Coaching with judges”

- After classroom training, the model got coaching where multiple “judges” scored its translations. The model then learned to make choices that increase those scores.

- These judges included:

- MetricX-QE: a model that scores translation quality without needing a perfect reference.

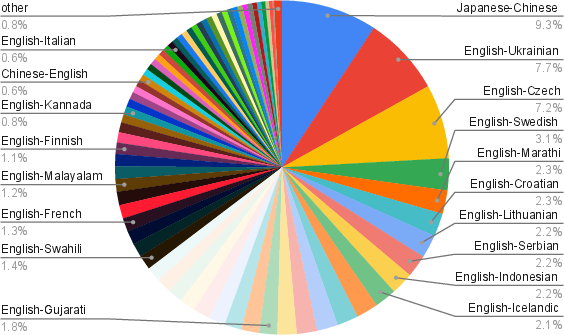

- AutoMQM: a system that highlights exact problem spots in a translation (like wrong words or missing parts).

- ChrF: a simple check that compares how similar the translation is to a reference using characters.

- A “Naturalness” judge: checks if the translation sounds like a native speaker wrote it.

- A general skills judge: makes sure the model still follows instructions and reasons well.

- The model didn’t just get an overall score. Some judges pointed to the exact words or phrases that were wrong. That’s like a teacher circling mistakes in your essay, which helps you learn faster.

Data choices in simple terms:

- They used a large collection of texts in many languages (MADLAD-400) to create synthetic translation examples.

- For low-resource languages (where data is scarce), they added carefully made human translations from SMOL and GATITOS datasets.

- They picked and cleaned examples so the training data was high quality and covered many scripts and styles.

What did they find?

Here are the main results in plain language:

- Better translations across the board: On a large 55-language benchmark (WMT24++), TranslateGemma beat the original Gemma 3 models at all sizes. Two common scoring tools showed this:

- MetricX (lower is better): for the biggest model, the score dropped from about 4.04 to 3.09, which is a clear improvement.

- COMET-22 (higher is better): scores went up for the TranslateGemma models.

- Smaller can match bigger: The 12B TranslateGemma model often matched or beat the older 27B Gemma 3 model. That means you can get high quality with less computing power.

- Works across many languages: Improvements showed up for both well-resourced languages (like German and Spanish) and low-resourced ones (like Icelandic and Swahili).

- Still good with images: Even though they didn’t train on images this time, the models stayed strong at translating text inside pictures (tested with the Vistra benchmark). The larger TranslateGemma models improved over the base models here, too.

- Human checks mostly agree: Professional translators rated outputs using MQM (a way to mark exact errors). TranslateGemma usually won, especially for lower-resource directions. There were a couple of exceptions:

- English→German was about tied.

- Japanese→English got slightly worse for names (mistranslating proper names), even though other parts improved.

Why does this matter?

- More people can access information: Better translations help people read news, books, and instructions in their own language.

- Helps low-resource languages: The careful mix of synthetic and human data improved languages that often get left behind.

- Faster and cheaper: Getting near-top quality from smaller models means translation can be run on fewer computers, saving time and energy.

- Open models for the community: By releasing these models openly, researchers and developers can build on them, test new ideas, and make translation even better.

- A training recipe that works: The two-step approach—classroom-style fine-tuning plus “judged” coaching—shows a practical way to push translation quality higher without losing other abilities, like following instructions or handling images.

In short, TranslateGemma shows that with smart training, good data, and multiple quality checks, we can make translation models that are accurate, natural-sounding, efficient, and broadly useful.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, framed so future researchers can act on it.

- Quantify the relative contribution of SFT vs RL to final quality through controlled ablations (e.g., SFT-only, RL-only, and removing or varying each reward model component).

- Detail and test the weighting, scaling, and combination scheme for reward models during RL (MetricX, AutoMQM, ChrF, Naturalness, Generalist), including sensitivity analyses and alternative credit assignment strategies beyond broadcasting sequence-level rewards uniformly.

- Provide ablations on token-level vs sequence-level rewards to verify the claimed training efficiency and improved credit assignment in different language/resource regimes.

- Examine potential metric overfitting: synthetic data selection and RL use MetricX-QE, while evaluation also emphasizes MetricX; test robustness with diverse metrics (COMET variants, BLEURT, HUME, MAUVE) and expanded human evaluations.

- Assess test-set contamination and data leakage: report deduplication and overlap checks between MADLAD-400, synthetic datasets, and WMT24++/WMT25 test sets; publish scripts/checksums for reproducibility.

- Release or document availability/licensing of the synthetic parallel data and reward models (Gemma-AutoMQM-QE, Naturalness Autorater) to enable full pipeline replication; specify training/evaluation licenses and usage constraints.

- Report full inference/decoding settings (temperature, beam size, MBR or constrained decoding usage) and study their impact on translation quality and consistency across languages.

- Measure prompt sensitivity: compare the “preferred prompt” to simpler and alternative prompts; quantify prompt adherence and failure modes (e.g., extraneous commentary) and provide prompt-robust decoding guidelines.

- Justify and ablate freezing of embeddings during SFT; quantify effects across scripts and low-resource languages to validate the claim that freezing helps languages not covered in the SFT mix.

- Investigate the reliability of reference-free QE rewards (MetricX-QE, AutoMQM-QE) for low-resource and morphologically rich languages; calibrate per-language or per-family reward reliability and consider language-specific weighting.

- Analyze reward hacking risks and the accuracy–naturalness trade-off: run adversarial evaluations for under/overtranslation, hallucination, and content omission; quantify adequacy vs fluency changes introduced by the Naturalness Autorater.

- Expand human evaluation: increase language coverage beyond the 10 pairs, include more target/source families and scripts, compute inter-annotator agreement, statistical significance, and run multi-rater assignments per document to reduce bias.

- Perform domain-specific analyses (e.g., legal, medical, technical, informal/social media) beyond literary/news/social; measure domain transfer, terminology fidelity, and jargon handling.

- Evaluate document-level translation with full context: track coherence, pronoun/reference consistency, lexical cohesion, and cross-sentence adequacy; compare sentence-level vs document-level inference setups.

- Diagnose and mitigate named entity errors (e.g., regression in Japanese→English): introduce entity-aware training, constrained decoding, or terminology injection and measure improvements across languages.

- Examine robustness to noisy inputs (typos, non-standard orthography, code-switching, dialectal variation, mixed scripts), and to colloquial or low-formality registers; report failure modes and mitigation strategies.

- Quantify performance on truly low-/no-resource languages not present in training; test zero-shot generalization, script transfer, and cross-family transfer mechanisms.

- Provide efficiency and cost metrics: training compute, RL sample complexity, inference latency/throughput, memory footprint; characterize quality–cost trade-offs across 4B/12B/27B models.

- Clarify the role of 30% generic instruction-following data: evaluate post-training general capabilities (reasoning, tool use, alignment) to confirm no degradation and characterize trade-offs with translation quality.

- Explore terminology and constraint handling: test glossaries, user-provided term lists, and constrained decoding; measure terminological consistency and adherence.

- Expand image translation evaluation: move beyond single-text images to multi-text, complex layouts, varied fonts/scripts, occlusions; compare direct image-to-text translation to OCR+MT pipelines and analyze error sources.

- Investigate the 12B Comet22 drop on Vistra: identify causes (e.g., vision encoder adaptation, prompt issues, decoding settings) and propose targeted multimodal fine-tuning or alignment fixes.

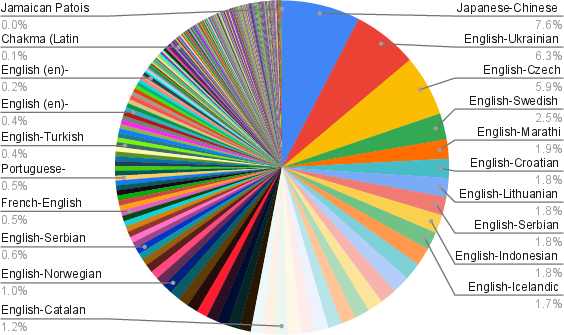

- Report language distribution specifics and per-language token counts used in SFT/RL beyond aggregate figures; analyze how sampling strategies (e.g., UniMax) affect per-language outcomes.

- Study long-context translation performance (e.g., chapters, reports) leveraging Gemma 3’s long-context capabilities; measure memory effects, re-referencing, and consistency over thousands of tokens.

- Provide detailed hyperparameter and training configuration sweeps (optimizer settings, LR schedules, batch sizes, RL horizons, normalization schemes) and best-practice recipes for reproducibility.

- Evaluate fairness and safety: gender/identity bias in translation, toxicity propagation, culturally sensitive content handling; include targeted stress tests and mitigation techniques (e.g., controlled rephrasing).

- Test locale and orthographic variants (e.g., en-US→zh-CN vs en-GB→zh-TW), script variants (Simplified vs Traditional Chinese, Serbian Cyrillic/Latin), and measure locale-aware formatting and style fidelity.

- Analyze the impact of synthetic reference quality used for ChrF-based rewards; compare to human references and study how reference noise affects learning signals.

- Verify the paper’s hypothesis that the 27B model benefits more from wide language coverage in SFT: run controlled experiments varying language breadth and measure capacity–coverage interactions.

Practical Applications

Immediate Applications

Below are deployable applications that can be built now using the released TranslateGemma models, their prompting recipe, and the paper’s training/evaluation workflows.

Industry

- Localization and internationalization at scale (software, gaming, media)

- What: Plug

TranslateGemma-12B/27Binto CAT tools and CI/CD localization pipelines to pretranslate UI strings, documentation, release notes; route “hard” segments to human post-editing using quality estimation. - Tools/workflows: CAT plug-ins with the paper’s preferred prompt; translation memory prefill; QE gate using MetricX-QE and AutoMQM spans; batch APIs.

- Assumptions/dependencies: Domain adaptation may be needed; license/compliance checks; named-entity accuracy for some pairs (e.g., noted regression in Ja→En entities) requires human review.

- What: Plug

- Multilingual customer support (software, e-commerce, telecom)

- What: Real-time translation of chat, email, and ticket content across 50+ languages; prioritize escalations using QE scores.

- Tools/workflows: Helpdesk connector that wraps content in the provided prompt; AutoMQM-span highlights to agents for quick fixes; fallback routing for low-confidence outputs.

- Assumptions/dependencies: PII handling and guardrails; latency vs. model size trade-offs; human-in-the-loop for sensitive cases.

- Global product listing and user reviews (e-commerce, marketplaces)

- What: Translate listings, reviews, and seller communications, including text embedded in images (e.g., label photos), leveraging image-translation capability.

- Tools/workflows: Image ingest → model prompt (no OCR prerequisite) for single-text images; text pre/post-processing; QE thresholds to flag items for review.

- Assumptions/dependencies: Current Vistra evaluation used single-text images; multi-text or complex layouts may need OCR/vision pre-processing.

- Regulated document translation with quality triage (finance, legal, healthcare)

- What: Batch translation of contracts, KYC materials, disclosures, patient instructions; use AutoMQM spans to direct legal/clinical reviewers to critical errors.

- Tools/workflows: Document hub with segment-level MQM/QE scoring dashboards; “critical error” filters; revision tracking.

- Assumptions/dependencies: Mandatory human validation in regulated settings; glossary/termbank integration; accuracy-naturalness trade-offs must be managed.

- Media subtitling and captioning

- What: Generate and post-edit subtitles for series, news, and user videos across high- and low-resource languages (e.g., Swahili, Marathi).

- Tools/workflows: ASR → segmentation → TranslateGemma with preferred prompt → QE-driven post-edit; segment alignment tools.

- Assumptions/dependencies: Domain tuning for idioms/cultural references; rights management; ASR quality upstream.

- Quality estimation and translation QA-as-a-service

- What: Offer automatic translation quality screening using MetricX-QE and AutoMQM span annotations without references.

- Tools/workflows: API that returns scalar scores plus error spans/categories; routing decisions (accept, auto-fix, or human edit).

- Assumptions/dependencies: Availability and licensing of MetricX/AutoMQM checkpoints; calibration per domain.

- Synthetic parallel data generation pipelines for internal MT training

- What: Replicate the paper’s Gemini 2.5 Flash + MetricX-guided sampling to build domain-specific synthetic corpora for proprietary MT systems.

- Tools/workflows: Source bucketing by length; 2-sample pre-filter to select “high-benefit” sources; 128-sample generation + QE reranking; formatting filters.

- Assumptions/dependencies: Access to a high-quality generator (e.g., Gemini 2.5 Flash) and MetricX-QE; generation budget.

- Developer-facing APIs and SDKs for standardized prompting

- What: Expose APIs that enforce the preferred prompt and language codes, ensuring consistent outputs for downstream automation.

- Tools/workflows: Prompt wrappers; schema validators; logging of MetricX/Comet22 for continuous monitoring.

- Assumptions/dependencies: Prompt adherence by all integration points; model version pinning.

Academia

- Strong open baselines for MT research across 55+ language pairs

- What: Use

TranslateGemmaas reproducible baselines for MT, low-resource transfer, and cross-lingual generalization studies. - Tools/workflows: Kauldron SFT setup; frozen embeddings for script coverage; public benchmarks (WMT25, WMT24++).

- Assumptions/dependencies: Compute for 4B/12B/27B fine-tuning; dataset licensing (SMOL, GATITOS).

- What: Use

- Research on RL for NLG with token-level reward integration

- What: Study and extend the paper’s token-level advantage combination of sequence-level metrics (MetricX, ChrF) and span-level rewards (AutoMQM, naturalness).

- Tools/workflows: RL training harness; batch-normalized combined advantages; ablation on reward mixtures.

- Assumptions/dependencies: Availability of span-level annotators or autoraters; stability vs. reward shaping.

- Human evaluation design replication with MQM

- What: Adopt “pseudo-SxS” rater assignment and Anthea tooling to run robust human MQM evaluations.

- Tools/workflows: Segment truncation rules; rater assignment; error-category analyses.

- Assumptions/dependencies: Budget for professional raters; inter-rater agreement procedures.

Policy and Government

- Multilingual access to public services and forms

- What: Translate civic information, benefits forms, and legal notices into minority languages with QE-based triage for human review.

- Tools/workflows: Content management integration; risk-weighted human review queues; public feedback loops.

- Assumptions/dependencies: Accessibility standards; data protection requirements; domain-specific glossaries.

- Emergency communication and signage updates

- What: Rapid translation of alerts and critical signage; limited image-translation support for single-text signs.

- Tools/workflows: Crisis content hub; pre-approved templates per language; fast-track review path.

- Assumptions/dependencies: Operational readiness; contingency QA; offline failovers not guaranteed.

Daily Life

- Camera-based translation for travel and everyday tasks

- What: Translate street signs, menus, and labels directly from images in many languages.

- Tools/workflows: Mobile app with image input → TranslateGemma VLM; user confidence indicators based on QE.

- Assumptions/dependencies: Complex images may need OCR; privacy settings for on-device vs. cloud.

- Reading assistance and cross-lingual browsing

- What: Browser extensions to translate articles, forums, and documentation with improved quality for low-resource languages.

- Tools/workflows: One-click translation widget with the paper’s prompt; glossary overlay; “naturalness” tuning per user.

- Assumptions/dependencies: Content policy compliance; caching and latency controls.

- Language learning aids

- What: Side-by-side translations plus AutoMQM-like span highlights to show typical errors and natural alternatives.

- Tools/workflows: Study mode with error categories; sentence/paragraph blob support (up to ~512 tokens).

- Assumptions/dependencies: Pedagogical alignment; user-level preference modeling.

Long-Term Applications

These use cases are enabled by the paper’s methods but require further R&D, scaling, or multimodal extensions.

Industry

- End-to-end multimodal document translation without explicit OCR

- What: Translate complex documents (multi-box layouts, tables, dense images) directly from visuals, using improved multimodal training.

- Tools/workflows: Layout-aware prompts; vision-language pretraining on documents; integrated QE for multimodal outputs.

- Assumptions/dependencies: Additional multimodal fine-tuning; datasets with layout/text annotations; robustness to multiple text instances.

- On-device offline translation for constrained hardware

- What: Deploy quantized

TranslateGemma-4Bfor offline mobile/edge translation, including basic image translation. - Tools/workflows: Quantization/distillation; caching; lightweight QE proxies.

- Assumptions/dependencies: Further optimization and memory footprint reduction; energy/thermal constraints; privacy guarantees.

- What: Deploy quantized

- Real-time AR translation overlays

- What: Persistent, low-latency overlay translating multiple text regions in live video with naturalness controls.

- Tools/workflows: On-device vision text detection + VLM translation; per-region QE; latency-aware decoding.

- Assumptions/dependencies: Stable multi-text handling; GPU/NPUs on device; tracking and layout stability.

- Domain- and brand-personalized translation via RL with preference rewards

- What: Train organization-specific naturalness/terminology reward models to enforce brand voice and regulatory phrasing.

- Tools/workflows: Preference data collection; reward shaping; continuous RL with guardrails.

- Assumptions/dependencies: Safe online learning pipelines; governance for the accuracy–naturalness trade-off.

- Continuous quality governance with live feedback loops

- What: Use production QE, user edits, and human MQM audits as rewards to auto-correct systemic errors over time.

- Tools/workflows: Streaming telemetry; reward aggregation; rollback and A/B policies.

- Assumptions/dependencies: Reliable metric drift detection; rater operations; compliance approvals.

- Speech and multimodal conversation translation

- What: Extend to speech-to-text translation and real-time multilingual meetings.

- Tools/workflows: ASR/streaming encoder integration; latency-aware decoding; diarization + speaker labels.

- Assumptions/dependencies: Audio fine-tuning; low-latency inference; privacy and consent management.

Academia

- Generalizing token-level reward RL to other generation tasks

- What: Apply span-aware rewards to summarization, data-to-text, and code translation for better credit assignment.

- Tools/workflows: New autoraters and span annotators; mixed sequence/span advantage computation.

- Assumptions/dependencies: Reliable reference-free metrics per task; stable training with reward ensembles.

- Systematic study of synthetic data curriculum design

- What: Explore metric-guided sampling, segment-length mixtures, and formatting filters across domains and languages.

- Tools/workflows: Controlled curricula; ablations on QE thresholds and sample counts; domain transfer experiments.

- Assumptions/dependencies: Access to strong generators; compute budgets; evaluation datasets.

- Expansion to additional low-resource and endangered languages

- What: Combine SMOL/GATITOS-style resources with synthetic data to bootstrap high-quality MT where none exists.

- Tools/workflows: Community partnerships; lexicon curation; human-in-the-loop validation loops.

- Assumptions/dependencies: Ethical data collection; orthography standardization; limited bilingual expertise.

Policy and Government

- National translation platforms with auditability

- What: Government-operated open MT stacks for public communication, with MQM-based certification and transparent QE.

- Tools/workflows: Metrics dashboards; independent audits; public model cards and error taxonomies.

- Assumptions/dependencies: Procurement frameworks for open models; data residency; governance over updates.

- Standards for translation quality and risk labeling

- What: Regulatory standards that codify MQM categories and QE thresholds for high-stakes domains (health, immigration, finance).

- Tools/workflows: Conformance suites; reference-free metric baselines; incident reporting.

- Assumptions/dependencies: Multi-stakeholder consensus; alignment with accessibility laws.

Daily Life

- Real-time bilingual conversation and group chat translation with preference tuning

- What: Personalized styles (formality, dialect) and named-entity fidelity in voice and text across devices.

- Tools/workflows: User preference profiles; entity protection/reinjection; latency-aware decoding.

- Assumptions/dependencies: Speech integration; privacy-preserving personalization; evaluation for miscommunication risk.

- Assistive technologies for low literacy and accessibility

- What: Image-to-text-to-speech pipelines translating signage, forms, and packaging into local languages with simple phrasing.

- Tools/workflows: Read-aloud + simplified output mode; safety filters; offline operation.

- Assumptions/dependencies: Additional naturalness/clarity rewards; device constraints; community validation.

Notes on Cross-Cutting Assumptions and Dependencies

- Model availability and licensing: Deployment depends on permissible use of

TranslateGemmacheckpoints and associated reward/metric models (MetricX, AutoMQM). - Prompt adherence: Consistent use of the paper’s preferred prompt and language codes improves determinism and quality in production workflows.

- Human-in-the-loop: High-stakes or regulated contexts should include professional review, especially for named entities and critical instructions.

- Domain adaptation: Fine-tuning or glossary integration may be necessary for legal, clinical, and financial terminology.

- Multimodal limits: Current image-translation results were strongest for single-text images; complex layouts require additional vision/OCR engineering.

- Resource trade-offs: Choose model size based on latency and cost constraints; smaller models offer efficiency but may need stricter QE gating.

- Metric drift and governance: Production use of QE/metrics should include monitoring for domain drift and periodic human audits to avoid overfitting to metrics.

Glossary

- AdaFactor optimizer: An adaptive optimization algorithm that reduces memory usage while training large models. "For fine-tuning we use the AdaFactor optimizer \citep{shazeer2018adafactor} with a learning rate of 0.0001 and a batch size of 64, running for 200k steps."

- AutoMQM: An automatic, fine-grained machine translation evaluation approach that identifies error spans and categories, aligned with MQM. "Default \gls{mqm} weights \citep{freitag2021experts} were used in computing (token-level) rewards from AutoMQM outputs."

- Batch normalization: Normalizing values across a batch during training to stabilize and speed up learning; here applied to RL advantages. "The combined advantages were then batch-normalized."

- ChrF: A character n-gram F-score metric for machine translation that measures lexical overlap. "ChrF \citep{popovic2015chrf}, a lexical overlap-based translation metric."

- Comet22: A neural metric for automatic MT evaluation released in 2022, used to assess translation quality. "We evaluate TranslateGemma using MetricX24 \citep{juraska2024metricx} and Comet22 \citep{rei2022comet}."

- Embedding parameters: The learned vectors mapping tokens to continuous representations; freezing them can preserve prior knowledge. "We update all model parameters, but freeze the embedding parameters, as preliminary experiments indicated this helped with translation performance for languages and scripts not covered in the SFT data mix."

- Ensemble of reward models: Combining multiple metrics or models to provide a richer reward signal for RL training. "This is followed by a reinforcement learning phase, where we optimize translation quality using an ensemble of reward models, including MetricX-QE and AutoMQM, targeting translation quality."

- GATITOS: A curated multilingual lexicon and parallel dataset aimed at improving low-resource machine translation. "This data comes from the SMOL~\citep{caswell2025smol} and GATITOS~\citep{jones-etal-2023-gatitos} datasets."

- Greedy decoding: A deterministic generation strategy that selects the highest-probability token at each step. "we select the sources where the sample achieves the largest improvement over the greedy decoding."

- Kauldron SFT tooling: A specialized framework used to perform supervised fine-tuning of LLMs. "We use the Kauldron SFT tooling\footnote{\url{https://kauldron.readthedocs.io/en/latest/} to fine-tune the Gemma 3 checkpoints."

- MADLAD-400: A large, multilingual, audited corpus used as monolingual source data for synthetic translation generation. "As the source of monolingual data we use the MADLAD-400 corpus \citep{kudugunta2023madlad}."

- MetricX-24-XXL-QE: A learned, regression-based quality estimation metric aligned with MQM scoring, used without references. "MetricX-24-XXL-QE \citep{juraska2024metricx}, a learned, regression-based translation metric producing a floating point score between 0 (best) and 25 (worst), matching the standard \gls{mqm} score range \citep{freitag2021experts}."

- MetricX24: An automatic MT evaluation metric used to measure translation quality across language pairs. "We evaluate TranslateGemma using MetricX24 \citep{juraska2024metricx} and Comet22 \citep{rei2022comet}."

- MQM (Multidimensional Quality Metrics): A human evaluation framework that annotates error spans by type and severity to derive quality scores. "We do so using MQM \citep{lommel-etal-mqm, freitag2021experts}, a human evaluation framework where professional translators highlight error spans in translations, with document context, assigning a severity and category to each, with a score being automatically derived by counting the errors with a weighting scheme."

- Naturalness Autorater: An LLM-as-a-judge component that penalizes outputs that sound non-native in the target language. "Naturalness Autorater developed in-house, using the base RL policy model as a prompted LLM-as-a-Judge."

- OCR (Optical Character Recognition): Technology that extracts text from images; relevant for translating text within images. "In particular, we did not include any other information about the text, like its location in the image or a previous OCR pass."

- Pseudo-SxS rater assignment: An evaluation setup where the same human rater evaluates outputs from multiple systems for the same source document. "Following \citet{riley-etal-2024-finding}, we used a ``pseudo-SxS'' rater assignment, where all system outputs for a particular source document were evaluated by the same rater."

- QE (Quality Estimation): Estimating translation quality without reference translations, often using learned metrics. "we used it as a QE metric by passing in an empty reference."

- Reinforcement Learning (RL): Training approach that optimizes model behavior based on reward signals, used here to improve translation quality. "This is followed by a reinforcement learning phase, where we optimize translation quality using an ensemble of reward models, including MetricX-QE and AutoMQM, targeting translation quality."

- Reward model: A metric or model that assigns rewards to outputs, guiding RL training. "We used the following metrics as reward models during RL:"

- Reward-to-go: The cumulative future reward used to compute advantages for each timestep in RL. "Note that advantage is computed from sequence-level rewards as `reward-to-go', meaning that rewards are broadcast uniformly to every token."

- Sequence-level rewards: Rewards assigned to entire generated sequences rather than individual tokens. "We used \gls{rl} algorithms extended to support token-level advantages, which were added to the advantages computed from sequence-level rewards."

- SMOL: Professionally translated parallel data covering many under-represented languages, used to improve diversity and script coverage. "SMOL covers 123 languages and GATITOS covers 170."

- Supervised Fine-Tuning (SFT): Adapting a pre-trained model using labeled data to improve performance on a specific task. "For supervised fine-tuning (SFT), we begin with the released Gemma 3 27B, 12B and 4B checkpoints."

- Temperature sampling: A stochastic decoding method that adjusts randomness via a temperature parameter. "sampled with a temperature of~$1.0$"

- Token-level advantages: RL credit assignment signals computed at the token or span level to improve training efficiency. "We used \gls{rl} algorithms extended to support token-level advantages, which were added to the advantages computed from sequence-level rewards."

- Vistra benchmark: A dataset for evaluating translation of text in natural images across multiple languages. "We used the Vistra benchmark \citep{salesky-etal-2024-benchmarking} to assess whether the models retained their ability to translate text within images after our additional training steps."

- WMT25: The 2025 Workshop on Machine Translation shared task dataset used for evaluation. "The source data is all taken from the WMT25 translation task, using the literary, news, and social domains."

Collections

Sign up for free to add this paper to one or more collections.