- The paper introduces a transformer-based probabilistic model that applies deep evidential regression with NIG priors to decompose epistemic and aleatoric uncertainty in time series forecasting.

- It achieves competitive point prediction accuracy while providing calibrated uncertainty estimates for both uncertainty sources.

- Empirical evaluations on cryptocurrency data demonstrate improved risk-aware decision-making with sharper prediction intervals and robust trading metrics.

ProbFM: Deep Evidential Regression for Uncertainty Quantification in Time Series Foundation Models

Motivation and Problem Statement

Recent advances in Time Series Foundation Models (TSFMs) have led to notable progress in transferability and zero-shot forecasting in financial contexts. Despite strong point prediction, current TSFMs treat uncertainty quantification inadequately. Existing frameworks frequently rely on restrictive parametric assumptions, conflate distinct sources of uncertainty, or require auxiliary post-hoc calibration. In disciplines such as quantitative finance, principled uncertainty estimation, particularly the explicit decomposition into epistemic (model) and aleatoric (data) uncertainty, remains a prerequisite for risk-aware decision-making.

ProbFM addresses these gaps by proposing a transformer-based probabilistic foundation model integrating Deep Evidential Regression (DER) with Normal-Inverse-Gamma (NIG) priors, enabling direct epistemic-aleatoric uncertainty decomposition. This methodology streamlines uncertainty estimation: it circumvents pre-specified distributional forms and expensive sampling, achieving competitive accuracy and actionable uncertainty decomposition in a single network pass.

ProbFM Architecture and Methodological Innovations

ProbFM’s core architecture encompasses five design stages:

- Input Processing: Adaptive patching based on series frequency, positional encoding enriched with temporal features.

- Transformer Module: Standard multi-head attention and Swish-Gated Linear Units for representation learning from temporally structured inputs.

- DER Head: Outputs the NIG distribution parameters (μ, λ, α, β), which support uncertainty estimates over both the mean and the variance; Softplus activations enforce strict positivity on the constrained parameters and improve numerical stability.

- Loss Function: Combines an evidential loss with a coverage loss, so that training rewards evidence-backed predictive accuracy and directly optimizes prediction-interval coverage. This avoids the binning that ECE-based calibration requires and the post-hoc fitting that temperature scaling requires.

- Single-Pass Inference: During inference, ProbFM computes predictive mean, total variance, and uncertainty decomposition in one forward call.
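The parameter constraints in the DER head can be illustrated with a minimal sketch in plain Python. The function names, the small `eps` floor, and the `+ 1` shift on α (a common convention in the evidential regression literature that keeps the variance formulas finite) are assumptions made for illustration; the summary only states that Softplus enforces positivity.

```python
import math

def softplus(x: float) -> float:
    """Numerically stable softplus, log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def nig_params(raw_mu, raw_lam, raw_alpha, raw_beta, eps=1e-6):
    """Map four unconstrained head outputs to valid NIG parameters.

    Hypothetical helper: mu is left unconstrained, lam and beta are made
    strictly positive, and alpha is shifted above 1 (assumed convention).
    """
    mu = raw_mu
    lam = softplus(raw_lam) + eps
    alpha = softplus(raw_alpha) + 1.0 + eps
    beta = softplus(raw_beta) + eps
    return mu, lam, alpha, beta

# Even strongly negative raw outputs give valid parameters.
mu, lam, alpha, beta = nig_params(0.3, -50.0, -50.0, -50.0)
```

In a real model the same mapping would be applied elementwise to the head's output tensor.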

ProbFM introduces evidence annealing during training to modulate evidence accumulation, preventing overconfidence in early epochs and facilitating stable learning of probabilistic representations.
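As a rough illustration of how an annealed evidential objective can be assembled, the sketch below uses the NIG negative log-likelihood and evidence penalty from the standard deep evidential regression formulation, together with a hypothetical linear ramp on the penalty weight. The shape and direction of ProbFM's annealing schedule are not given in this summary, and the coverage loss is omitted because its form is not specified here, so every name and default below is an assumption.

```python
import math

def nig_nll(y, mu, lam, alpha, beta):
    """Negative log-likelihood of y under the NIG-induced Student-t marginal."""
    omega = 2.0 * beta * (1.0 + lam)
    return (0.5 * math.log(math.pi / lam)
            - alpha * math.log(omega)
            + (alpha + 0.5) * math.log(lam * (y - mu) ** 2 + omega)
            + math.lgamma(alpha) - math.lgamma(alpha + 0.5))

def evidence_penalty(y, mu, lam, alpha):
    """Penalize large evidence (2*lam + alpha) on samples with large error."""
    return abs(y - mu) * (2.0 * lam + alpha)

def annealing_weight(epoch, warmup_epochs, max_weight=1.0):
    """Hypothetical linear ramp from 0 to max_weight over warmup_epochs."""
    return max_weight * min(1.0, epoch / warmup_epochs)

def evidential_loss(y, mu, lam, alpha, beta, epoch, warmup_epochs=10):
    """Per-sample loss: NLL plus the annealed evidence penalty."""
    weight = annealing_weight(epoch, warmup_epochs)
    return nig_nll(y, mu, lam, alpha, beta) + weight * evidence_penalty(y, mu, lam, alpha)
```

Swapping `annealing_weight` for a different schedule changes only how quickly the penalty takes effect; the likelihood term is unaffected.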

Explicit Epistemic and Aleatoric Uncertainty Decomposition

Deriving uncertainty measures under NIG priors yields:

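- Predictive mean: $\hat{y} = \mu$

- Aleatoric uncertainty: $\dfrac{\beta}{\alpha - 1}$

- Epistemic uncertainty: $\dfrac{\beta}{\lambda(\alpha - 1)}$

- Total predictive variance: $\dfrac{\beta}{\alpha - 1}\left(1 + \dfrac{1}{\lambda}\right)$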
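The decomposition is cheap to compute once the four NIG parameters are available. The following sketch evaluates the quantities listed above in plain Python; the function name and the dictionary layout are illustrative choices, not part of the paper.

```python
def decompose_uncertainty(mu, lam, alpha, beta):
    """Split predictive variance into aleatoric and epistemic parts.

    aleatoric = beta / (alpha - 1)          (expected data noise)
    epistemic = beta / (lam * (alpha - 1))  (variance of the mean)
    The formulas require alpha > 1, lam > 0, and beta > 0.
    """
    if alpha <= 1.0 or lam <= 0.0 or beta <= 0.0:
        raise ValueError("need alpha > 1, lam > 0, beta > 0")
    aleatoric = beta / (alpha - 1.0)
    epistemic = beta / (lam * (alpha - 1.0))
    return {
        "mean": mu,
        "aleatoric": aleatoric,
        "epistemic": epistemic,
        "total": aleatoric + epistemic,
    }

result = decompose_uncertainty(mu=0.1, lam=2.0, alpha=3.0, beta=4.0)
# aleatoric = 4 / 2 = 2.0, epistemic = 4 / (2 * 2) = 1.0, total = 3.0
```

Note that the total equals the aleatoric term multiplied by (1 + 1/λ), so a larger λ (more evidence about the mean) shrinks only the epistemic share.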

This enables filtering predictions by uncertainty type and adapting downstream decisions (e.g., trade execution, position sizing) to the estimated reliability of forecasts.

Controlled Empirical Evaluation

A controlled comparison holds a 1-layer LSTM backbone, the optimization settings, and the preprocessing pipeline fixed across all methods. Five probabilistic approaches are benchmarked: DER, Gaussian NLL, Student-t NLL, Quantile Loss, and Conformal Prediction. With everything else held constant, observed performance differences can be attributed to the uncertainty modeling mechanism rather than to architectural variation.
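To make the contrast between the baseline objectives concrete, here is a minimal per-sample sketch of two of them: the Gaussian negative log-likelihood and the quantile (pinball) loss. These are the textbook definitions; how the paper parameterizes or weights them is not stated in this summary, so treat the signatures as assumptions.

```python
import math

def gaussian_nll(y, mu, sigma):
    """Negative log-likelihood of y under N(mu, sigma^2); sigma must be > 0."""
    return 0.5 * math.log(2.0 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2.0 * sigma ** 2)

def pinball_loss(y, q_pred, tau):
    """Quantile loss for a predicted tau-quantile q_pred, with 0 < tau < 1."""
    diff = y - q_pred
    return max(tau * diff, (tau - 1.0) * diff)

# Under-predicting the 0.9 quantile costs more than over-predicting it.
under = pinball_loss(y=1.0, q_pred=0.0, tau=0.9)  # 0.9 * 1.0
over = pinball_loss(y=0.0, q_pred=1.0, tau=0.9)   # 0.1 * 1.0, up to float rounding
```

The Gaussian objective commits to a single distributional shape, while the pinball loss fits each quantile separately without one; neither separates epistemic from aleatoric uncertainty on its own.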

Cryptocurrency Forecasting: Distributional Characteristics and Uncertainty Analysis

The chosen dataset comprises the 11 most liquid cryptocurrency assets, partitioned temporally, and processed with robust normalization and outlier truncation. One-day log returns are forecasted, with evaluation metrics spanning RMSE, MAE, CRPS, PICP, sharpness, uncertainty-error correlation, and comprehensive trading metrics (Sharpe, Sortino, Calmar ratios, win rate, max drawdown).
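Two of the interval metrics named above are simple to state in code. The sketch below computes PICP (the fraction of targets that fall inside their prediction interval) and sharpness, taken here to mean the average interval width; sharpness definitions vary across papers, so that reading is an assumption. The sample values are made up for illustration.

```python
def picp(y_true, lower, upper):
    """Prediction Interval Coverage Probability: share of targets inside [lower, upper]."""
    covered = sum(1 for y, lo, hi in zip(y_true, lower, upper) if lo <= y <= hi)
    return covered / len(y_true)

def sharpness(lower, upper):
    """Mean interval width; smaller values mean sharper intervals."""
    return sum(hi - lo for lo, hi in zip(lower, upper)) / len(lower)

# Illustrative values only, not data from the paper.
y_true = [0.0, 1.0, 2.0, 3.0]
lower = [-1.0, 0.5, 2.5, 2.0]
upper = [1.0, 1.5, 3.5, 4.0]

coverage = picp(y_true, lower, upper)  # 3 of 4 targets covered -> 0.75
width = sharpness(lower, upper)        # (2 + 1 + 1 + 2) / 4 -> 1.5
```

The two metrics pull against each other: widening every interval raises PICP but worsens sharpness, which is why they are reported together.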

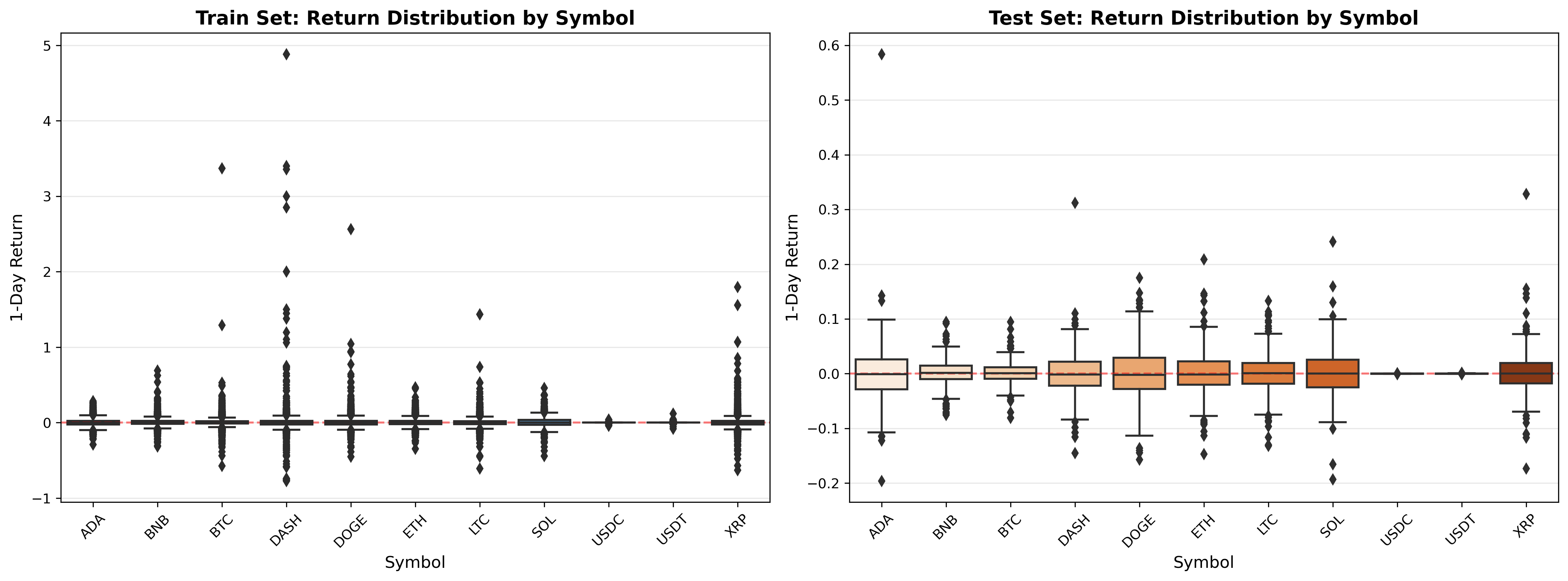

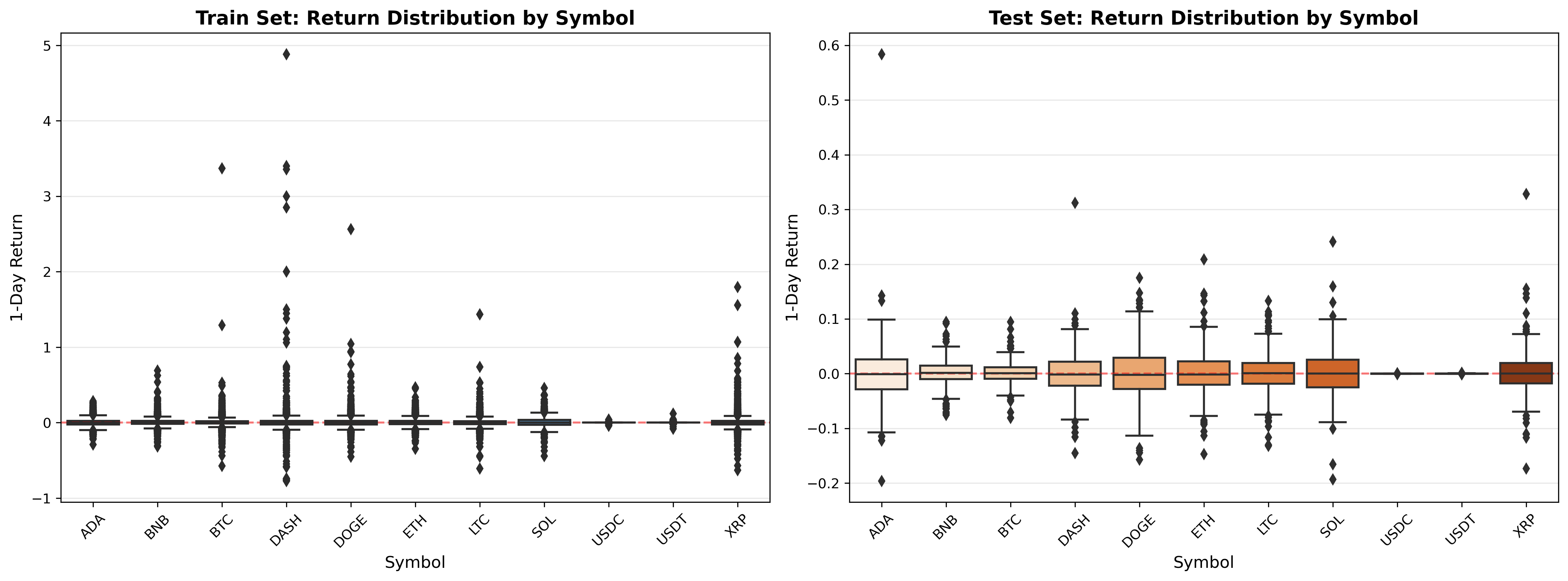

Distribution analysis reveals leptokurtic, heavy-tailed characteristics, highlighting the necessity for robust uncertainty models.

Figure 1: 1-day log return distribution across cryptocurrency assets, comparing training and test splits; assets exhibit significant non-Gaussianity and regime shift.

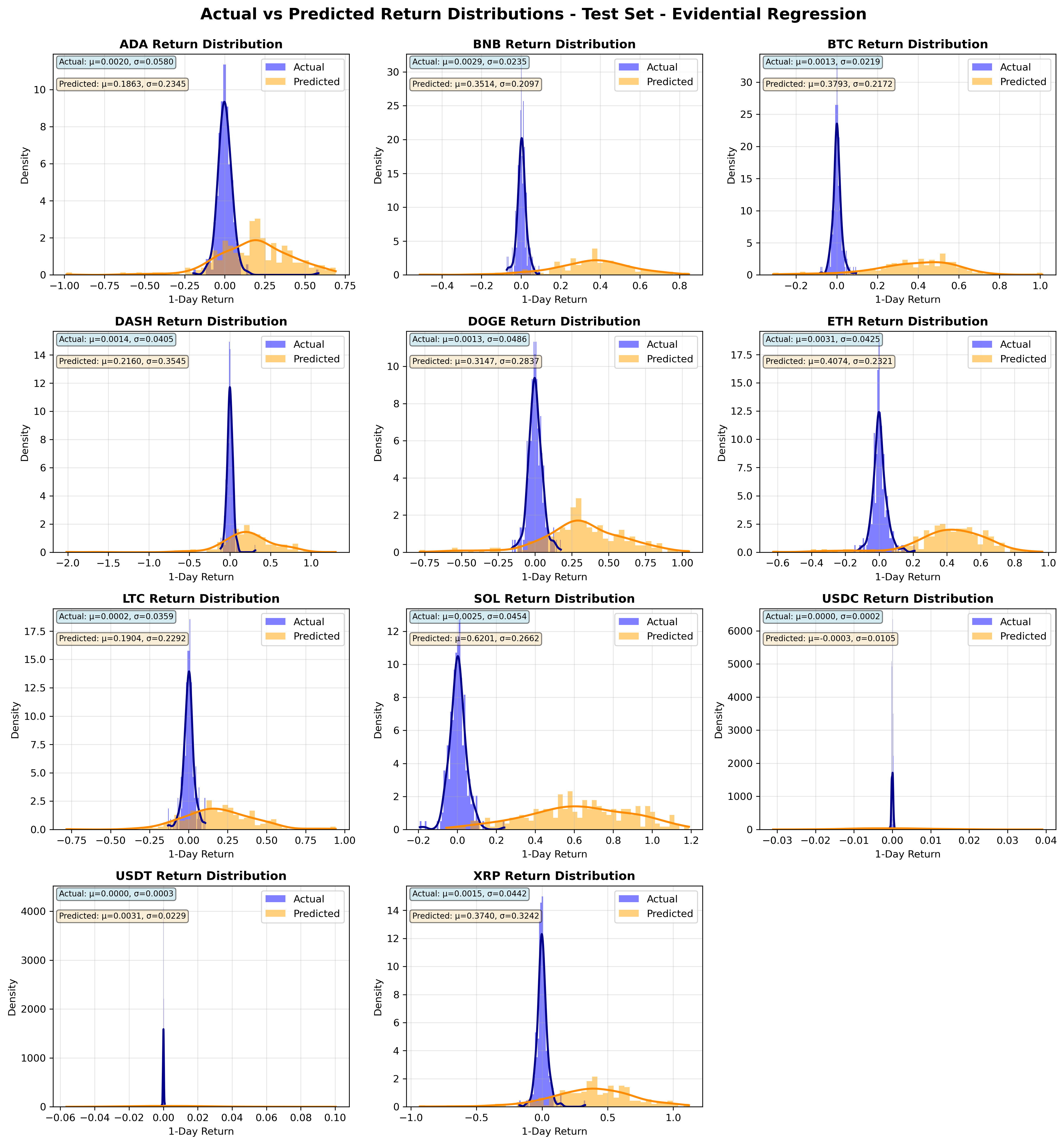

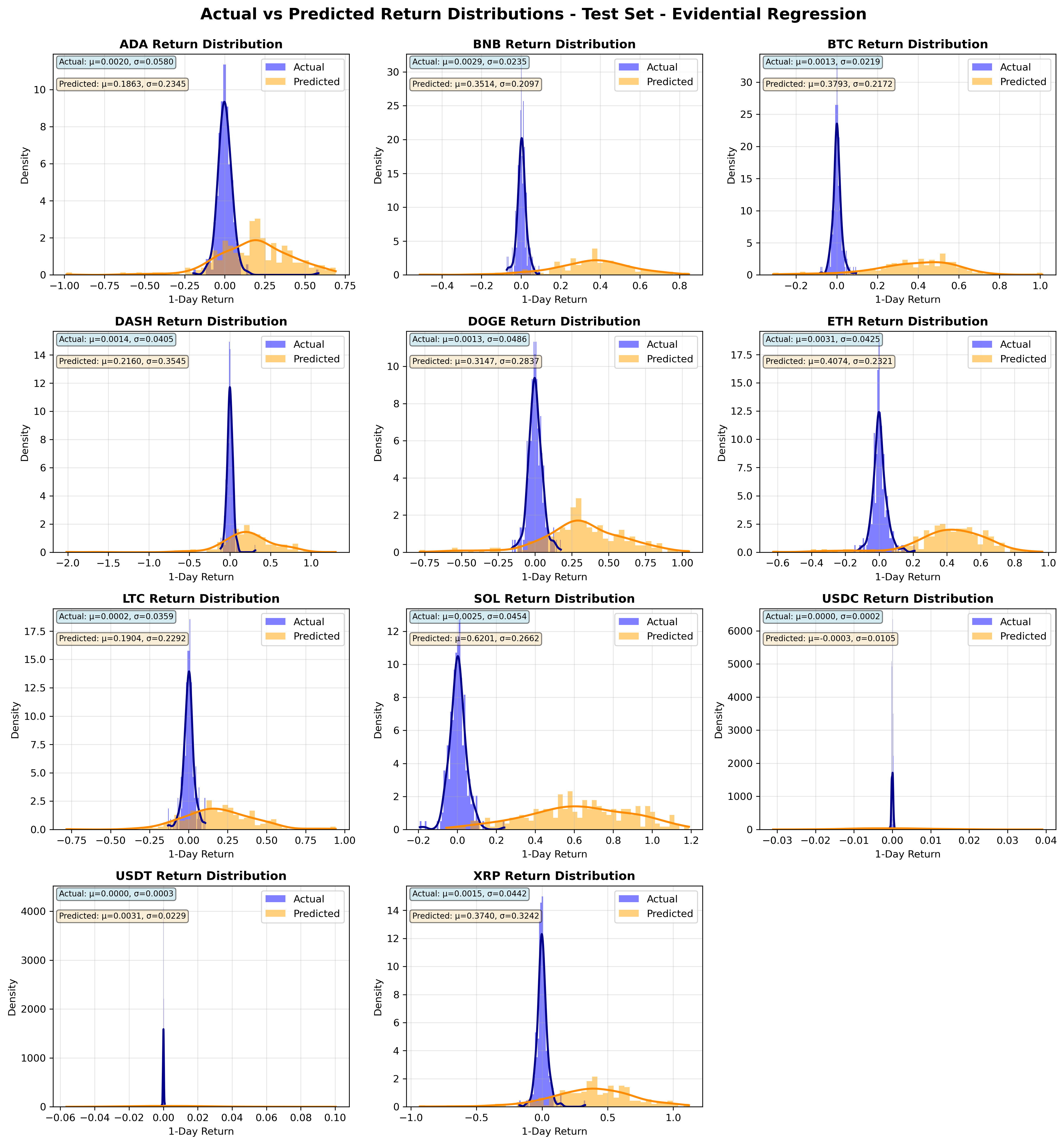

DER-based models provide point predictions with well-structured uncertainty bounds, crucial in volatile conditions.

Figure 2: Comparison of actual versus predicted 1-day returns using Evidential Regression, illustrating broader predictive coverage and model awareness of volatility extremes.

Numerical Results

Forecasting Accuracy: Evidential Regression matches baseline LSTM methods on RMSE and MAE, confirming that improved uncertainty quantification does not degrade point-wise accuracy.

Uncertainty Calibration: DER achieves competitive CRPS with sharper prediction intervals (smaller average width) but lower coverage probability (PICP) than Gaussian NLL and Student-t NLL. The paper reads the higher sharpness as more confident, selective prediction, and the lower PICP as interval selectivity rather than systematic undercoverage.

Trading Metrics: For BTC, DER yields the best risk-adjusted returns, with annualized Sharpe (1.33), Sortino (2.27), and Calmar (3.04) ratios that outperform both deterministic and probabilistic competitors. A win rate of 0.52 and the drawdown statistics indicate robust downside risk control.
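For readers unfamiliar with these ratios, the sketch below shows one common way to compute them from a series of per-period simple strategy returns. Conventions differ between sources: the 365-period annualization (crypto trades every day), the zero risk-free rate, and the downside-deviation definition are all assumptions here, and the paper's exact conventions are not stated in this summary.

```python
import math

def sharpe(returns, periods=365):
    """Annualized Sharpe ratio with a zero risk-free rate (sample std, n - 1)."""
    mean = sum(returns) / len(returns)
    var = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return mean / math.sqrt(var) * math.sqrt(periods)

def sortino(returns, periods=365):
    """Like Sharpe, but only negative returns contribute to the deviation."""
    mean = sum(returns) / len(returns)
    downside = math.sqrt(sum(min(r, 0.0) ** 2 for r in returns) / len(returns))
    return mean / downside * math.sqrt(periods)

def max_drawdown(returns):
    """Largest peak-to-trough loss of the compounded equity curve, as a fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, (peak - equity) / peak)
    return worst

def calmar(returns, periods=365):
    """Annualized compounded return divided by maximum drawdown."""
    total = 1.0
    for r in returns:
        total *= 1.0 + r
    annual = total ** (periods / len(returns)) - 1.0
    return annual / max_drawdown(returns)
```

The helpers do not guard against zero variance, zero downside deviation, or zero drawdown; production code would need to handle those cases explicitly.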

Controlled evaluations across the 10 other cryptocurrencies support DER's efficacy: point prediction metrics are comparable, and risk-adjusted trading performance is frequently the best among the methods (see the paper's appendix).

Practical Implications and Theoretical Perspectives

ProbFM’s explicit uncertainty decomposition offers direct utility for risk-aware strategy design in quantitative finance. Epistemic uncertainty thresholds facilitate regime-dependent trade execution; aleatoric quantification supports dynamic position sizing. The coverage-optimized evidential loss provides reliable prediction intervals, mitigating false confidence.
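One way such a rule could look is sketched below: skip the trade when epistemic uncertainty exceeds a threshold, otherwise size the position inversely to the aleatoric standard deviation. This is a hypothetical decision rule for illustration only; the function, the threshold, the risk budget, and the leverage cap are all assumed names and values, not the strategy evaluated in the paper.

```python
import math

def position_size(mu, aleatoric, epistemic,
                  epistemic_max=5e-4, risk_budget=0.01, max_leverage=1.0):
    """Return a signed position in [-max_leverage, max_leverage].

    Hypothetical rule:
    1. If the model is unsure of its own forecast (epistemic variance above
       epistemic_max), stay flat.
    2. Otherwise trade in the direction of the predicted mean, scaling the
       size so that size * aleatoric_std is about risk_budget.
    """
    if epistemic > epistemic_max:
        return 0.0
    direction = 1.0 if mu > 0 else -1.0 if mu < 0 else 0.0
    size = min(max_leverage, risk_budget / math.sqrt(aleatoric))
    return direction * size

flat = position_size(mu=0.01, aleatoric=4e-4, epistemic=1e-3)    # gated out
long_pos = position_size(mu=0.01, aleatoric=4e-4, epistemic=1e-4)  # about +0.5
```

Keeping the gate and the sizing on separate uncertainty types is the point of the decomposition: a noisy but well-understood regime shrinks positions, while an unfamiliar regime suspends trading.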

Theoretically, DER-integrated TSFMs enable generalized probabilistic learning without rigid parametric or mixture assumptions. ProbFM’s framework is extensible to multivariate and multi-horizon forecasting via Normal-Inverse-Wishart priors. The evidence annealing paradigm could motivate further advances in calibration-aware deep probabilistic networks.

Limitations and Future Work

- Scope: Evaluation limited to cryptocurrency assets; expanding to other regimes and asset classes is required.

- Regime Coverage: Extreme market scenarios, slippage, and transaction costs are not incorporated.

- Architecture: Current instantiation uses LSTM backbones; transformer-only and state-space models merit analysis.

- Statistical Analysis: Further robustness checks (multi-seed runs, hypothesis testing) are necessary.

Anticipated directions include component ablations, broader cross-domain generalization, comparison against classical baselines, and robust multi-horizon and multivariate extensions.

Conclusion

ProbFM advances TSFM research with principled, efficient epistemic-aleatoric uncertainty quantification via Deep Evidential Regression. Empirical and theoretical evidence supports its utility in risk-aware financial forecasting and decision-making, with numerically strong, actionable results and direct architectural flexibility. The framework paves the way for future multi-domain, multivariate, and multi-horizon foundation models that prioritize calibrated uncertainty and robust probabilistic forecasting.