The Poisoned Apple Effect: Strategic Manipulation of Mediated Markets via Technology Expansion of AI Agents

Abstract: The integration of AI agents into economic markets fundamentally alters the landscape of strategic interaction. We investigate the economic implications of expanding the set of available technologies in three canonical game-theoretic settings: bargaining (resource division), negotiation (asymmetric information trade), and persuasion (strategic information transmission). We find that simply expanding the menu of available AI delegates can drastically shift equilibrium payoffs and regulatory outcomes, often creating incentives for regulators to proactively develop and release technologies. Conversely, we identify a strategic phenomenon we term the "Poisoned Apple" effect: an agent may release a new technology, which neither they nor their opponent ultimately uses, solely to manipulate the regulator's choice of market design in their favor. This strategic release improves the releaser's welfare at the expense of their opponent and of the regulator's fairness objective. Our findings demonstrate that static regulatory frameworks are vulnerable to manipulation via technology expansion, necessitating dynamic market designs that adapt to the evolving landscape of AI capabilities.


Explain it Like I'm 14

What this paper is about (big picture)

This paper looks at what happens when more AI tools (like different AI “teammates”) are made available to people or companies who use them to make deals. It shows a surprising trick called the “Poisoned Apple” effect: someone can release a new AI tool not to use it, but to push the rule-maker (the regulator) to change the rules of the market in a way that helps them and hurts their opponent. So just making a new tool available can tilt the playing field—even if nobody ends up using that tool.

What questions the paper asks

The authors ask, in simple terms:

- If we add more AI options for people to choose from, how does that change who wins or loses in deals?

- Can someone game the system by releasing a tool that changes the regulator’s choice of rules?

- Do these changes help fairness (making outcomes balanced) or efficiency (making the “total pie” bigger)?

- Are today’s rules and regulations strong enough to handle fast-changing AI choices?

How the researchers studied it (in everyday language)

Think of three types of everyday “games” where people try to get good outcomes:

- Bargaining: splitting a pie (who gets how much).

- Negotiation: buyer and seller trade when they each know different things.

- Persuasion: one side sends messages trying to convince the other to act.

The setup uses a “game about the game” (a meta-game) with three roles:

- Two players (call them Alice and Bob) each pick an AI model to act for them.

- A regulator (like a referee or principal) picks which set of rules, or “market design,” everyone must play under. The regulator can aim for fairness (keep payoffs similar) or efficiency (make the total payoff as large as possible).

- After the regulator sets the rules, Alice and Bob’s chosen AIs play, and we see the outcome.

Key ideas explained simply:

- Technology expansion: adding a new AI model to the menu of choices.

- Nash equilibrium: a stable outcome where neither player wants to switch AI models given what the other chose.

- Fairness vs. efficiency: fairness tries to keep things balanced between players; efficiency tries to make the overall result as big as possible, even if it’s uneven.
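The definitions above can be made concrete with a few small helpers. This is a minimal sketch, not the authors' code: it assumes each pair of delegates yields a known payoff for Alice and for Bob (a toy stand-in for a performance matrix), searches only for pure-strategy equilibria (the paper also works with mixed equilibria), and measures fairness as the absolute payoff gap and efficiency as the payoff sum. The function names and the 2x2 numbers are hypothetical.

```python
from itertools import product

def pure_nash_equilibria(alice_pay, bob_pay):
    """All (i, j) where neither player gains by unilaterally switching delegate.

    alice_pay[i][j], bob_pay[i][j]: payoffs when Alice uses delegate i and Bob uses delegate j.
    """
    rows, cols = len(alice_pay), len(alice_pay[0])
    equilibria = []
    for i, j in product(range(rows), range(cols)):
        # Alice cannot do better by switching rows, Bob cannot by switching columns.
        alice_best = all(alice_pay[i][j] >= alice_pay[k][j] for k in range(rows))
        bob_best = all(bob_pay[i][j] >= bob_pay[i][k] for k in range(cols))
        if alice_best and bob_best:
            equilibria.append((i, j))
    return equilibria

def fairness_gap(alice, bob):
    """Fairness objective: payoff disparity (smaller is fairer)."""
    return abs(alice - bob)

def efficiency(alice, bob):
    """Efficiency objective: total welfare (larger is better)."""
    return alice + bob

# Hypothetical 2x2 example: delegate 1 is each player's best response to anything.
alice = [[0.5, 0.3], [0.6, 0.4]]
bob = [[0.5, 0.6], [0.3, 0.4]]
print(pure_nash_equilibria(alice, bob))  # [(1, 1)]
```

In this toy matrix the only stable profile, (1, 1), is perfectly fair (gap 0) but less efficient than (0, 0), a profile neither player would stick to on their own.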

What data and tools they used:

- The authors ran large computer simulations using 13 advanced LLMs as if they were economic agents.

- They tested 1,320 different market conditions (like changing what information is shared, how people can communicate, and how long the game lasts).

- They analyzed over 580,000 decisions to see patterns across many scenarios.

- They compared outcomes before and after adding a new AI option to the players’ menu, and watched how the regulator’s rule choice and the players’ payoffs changed.

What they found and why it matters

Main discovery: the “Poisoned Apple” effect.

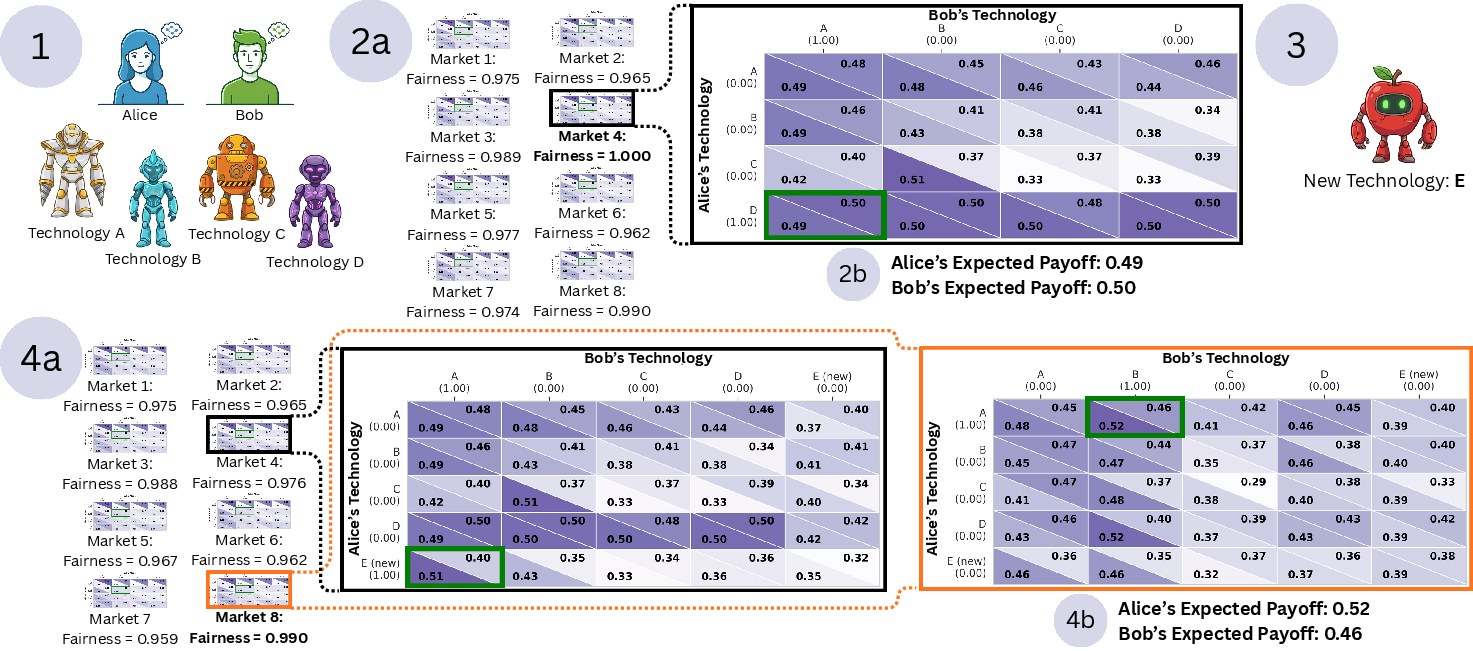

- Example: At first, with AI models A–D available and the regulator focused on fairness, the chosen market rules give Alice about 0.49 and Bob about 0.50 (very balanced).

- Alice then releases a new model E. If the regulator kept the old rules, fairness would drop. To protect fairness, the regulator switches to a different market design.

- In the new rules, nobody actually uses E. But the switch itself changes payoffs: Alice now gets about 0.52 and Bob 0.46. Alice benefits just by releasing E—even though neither player uses it.
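The logic of this example can be written as a check on before/after outcomes. A minimal sketch under stated assumptions: `Outcome` and `is_poisoned_apple` are hypothetical names, the payoffs are the ones quoted above, and the market labels and delegate sets are placeholders (the text only says that E is not used after the switch).

```python
from dataclasses import dataclass, field

@dataclass
class Outcome:
    market: str                  # market design chosen by the regulator
    alice: float                 # Alice's equilibrium payoff
    bob: float                   # Bob's equilibrium payoff
    delegates_used: set = field(default_factory=set)  # delegates played at equilibrium

def is_poisoned_apple(before: Outcome, after: Outcome, new_tech: str, releaser: str = "alice") -> bool:
    """True if releasing `new_tech` helped the releaser only via a market redesign."""
    opponent = "bob" if releaser == "alice" else "alice"
    market_switched = before.market != after.market
    never_used = new_tech not in after.delegates_used
    releaser_gains = getattr(after, releaser) > getattr(before, releaser)
    opponent_loses = getattr(after, opponent) < getattr(before, opponent)
    return market_switched and never_used and releaser_gains and opponent_loses

# Payoffs from the example above; market names and delegate sets are placeholders.
before = Outcome("old market", 0.49, 0.50, {"A", "B"})
after = Outcome("new market", 0.52, 0.46, {"A", "B"})
print(is_poisoned_apple(before, after, "E"))  # True
```

All four conditions matter: if E were actually played after the switch, or if the regulator kept the old market, the release would be ordinary competition rather than a poisoned apple.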

System-wide pattern:

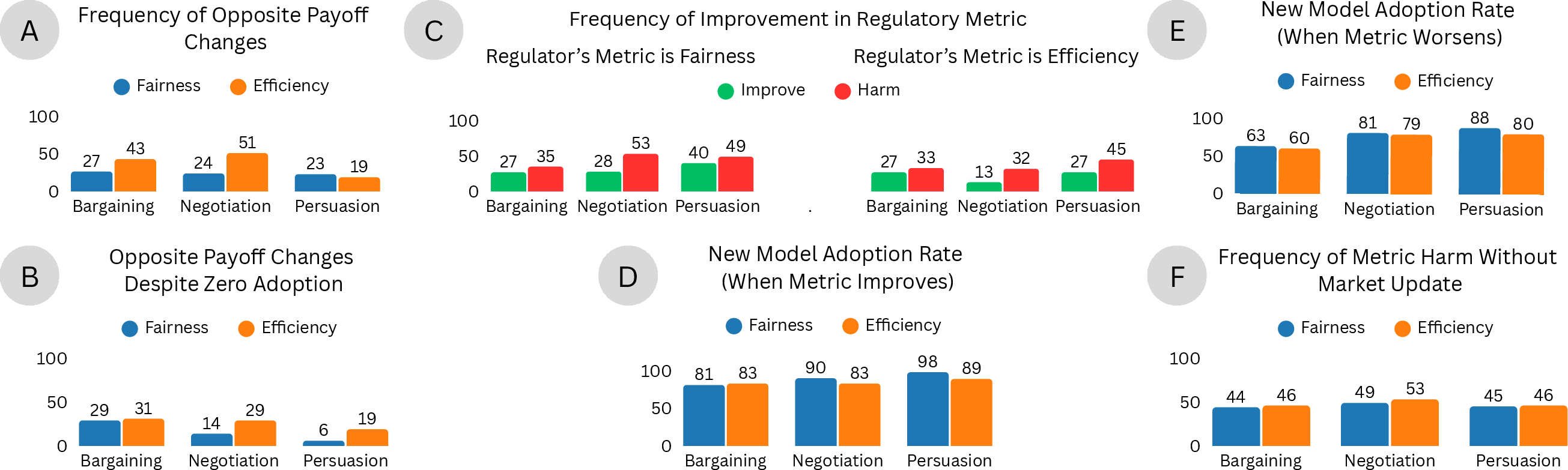

- In over 50,000 simulated “games about the game,” adding one new AI option often makes one player better off and the other worse off.

- About one-third of these “winner–loser flips” happen even when the new AI isn’t used by either player in the final outcome. The mere availability of the tool changes the regulator’s choice and thus the result.

- Whether this helps or hurts depends on the regulator's goal:

  - If the regulator maximizes efficiency (total size of the pie), adding new tech tends to help more often.

  - If the regulator maximizes fairness (balance between players), adding new tech often backfires.

- If the regulator doesn’t update the rules after a new AI is released (regulatory inertia), the regulator's own metric (fairness or efficiency) gets worse in about 40% of cases. In other words, static rules can be gamed by changing the menu of AI tools.
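The two system-wide statistics above (the share of winner-loser flips in which the new delegate goes unused, and how often an inert regulator sees its metric deteriorate) reduce to simple counts over before/after records. A minimal sketch, assuming hypothetical record formats and a small tolerance for ties; the sample records are invented for illustration and are not the paper's data.

```python
def summarize_flips(records, tol=1e-9):
    """Count winner-loser flips and how many happen with the new delegate unused.

    records: iterable of (alice_before, bob_before, alice_after, bob_after, new_tech_used).
    A flip means one player's payoff strictly rises while the other's strictly falls.
    """
    flips = flips_unused = 0
    for a0, b0, a1, b1, used in records:
        da, db = a1 - a0, b1 - b0
        if (da > tol and db < -tol) or (da < -tol and db > tol):
            flips += 1
            if not used:
                flips_unused += 1
    share_unused = flips_unused / flips if flips else 0.0
    return flips, flips_unused, share_unused

def inertia_deterioration_rate(pairs, objective="fairness", tol=1e-9):
    """Share of cases where keeping the old market after a release worsens the metric.

    pairs: iterable of ((alice_before, bob_before), (alice_after, bob_after)), where
    "after" is the equilibrium under the unchanged market once the new delegate exists.
    """
    pairs = list(pairs)
    worse = 0
    for (a0, b0), (a1, b1) in pairs:
        if objective == "fairness":
            worse += abs(a1 - b1) > abs(a0 - b0) + tol  # disparity grew
        elif objective == "efficiency":
            worse += (a1 + b1) < (a0 + b0) - tol        # total welfare shrank
        else:
            raise ValueError(f"unknown objective: {objective}")
    return worse / len(pairs) if pairs else 0.0

# Invented sample records, for illustration only.
records = [(0.49, 0.50, 0.52, 0.46, False),
           (0.50, 0.50, 0.45, 0.55, True),
           (0.40, 0.40, 0.45, 0.45, True)]
print(summarize_flips(records))  # (2, 1, 0.5)
```

The third record is not a flip because both players gain; only strictly opposite movements count, which matches the "one better off, the other worse off" reading above.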

Why this matters:

- It shows that “more choice” isn’t always harmless. Even unused tools can change the game by forcing different rules that tilt outcomes.

- It warns that simply releasing new AI models (as open weights or through APIs) can be used strategically to influence regulators and market design.

What this could mean going forward

- Regulators should expect players to use tech releases tactically. Rules need to be dynamic—able to adjust quickly as new AI tools appear—so the system isn’t easy to manipulate.

- Market designs should evaluate not just what AIs people are using, but also what AIs are available as “threats,” because availability alone can change incentives and outcomes.

- When aiming for fairness, regulators must be extra careful: adding choices can unintentionally reduce fairness unless the rules adapt smartly.

- For efficiency-focused markets, adding new AI options can be good—but should still be monitored in case availability is used to shift who gains and who loses.

In short: The paper shows that in AI-powered markets, the tools on the shelf matter almost as much as the tools in use. To keep markets fair and effective, the rules must evolve as fast as the technology does.

Knowledge Gaps

Unresolved Knowledge Gaps, Limitations, and Open Questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored, framed to guide future research.

- Formal characterization: Derive necessary and sufficient conditions (in terms of performance matrices, market parameters, and regulator objectives) under which the Poisoned Apple effect must arise, and bounds on the magnitude of payoff/fairness shifts.

- Alternative solution concepts: Test robustness of the effect under Stackelberg (leader–follower), correlated, trembling-hand, and learning-based equilibria rather than only simultaneous-choice Nash equilibrium.

- Dynamic meta-games: Model repeated cycles of “release → regulator re-optimization → agent re-selection” to study long-run arms races and convergence/stability properties.

- Regulator objectives: Evaluate susceptibility across different fairness notions (e.g., maximin, egalitarian, envy-freeness, proportional fairness) and multi-objective trade-offs (weighted efficiency–fairness), including thresholds and lexicographic rules.

- Switching and commitment: Introduce realistic regulatory constraints (switching costs, limited re-optimization frequency, commitment power) and quantify how they change manipulation incentives and outcomes.

- Mechanism design: Propose and analyze market-selection mechanisms that are strategy-proof or robust to technology expansion (e.g., minimax-regret selection, commitment to technology-agnostic rules), with formal guarantees.

- Uncertainty and noisy evaluation: Incorporate uncertainty about delegate performance (estimation noise, sample variance, distribution shift), and design robust regulator policies under incomplete/imperfect benchmarking.

- Multiple equilibria and tie-breaking: Investigate how equilibrium selection rules (e.g., payoff-dominant, risk-dominant, random tie-breaking) affect the presence and strength of Poisoned Apple outcomes.

- Mixed technology adoption: Allow agents to randomize across delegates (ensembles, probabilistic selection, contingent switching during play) and test whether latent-threat manipulation persists.

- Endogenous technology development: Add realistic release costs, time-to-deploy, reputational/legal risks, and exclusivity/licensing constraints to the meta-game; quantify when releasing a “poisoned” model is actually optimal.

- Access heterogeneity: Study effects when technologies are not symmetrically available (exclusive APIs, pricing differences, latency constraints) or when agents differ in adoption costs and capabilities.

- Multi-agent markets: Extend from two-player settings to n-player games with coalitions, collusion, or third-party technology releases; characterize how network effects and externalities alter manipulation.

- Market classes beyond the triad: Test the phenomenon in auctions, matching markets, two-sided platforms, public goods, and mechanism-design benchmarks to assess generality across core economic environments.

- Parameter-level drivers: Identify which dimensions (information structure, communication form, horizon) most strongly predict susceptibility, using causal or counterfactual analyses rather than aggregate frequencies.

- Effect-size distribution: Report magnitudes (not only frequencies) of fairness/efficiency changes, tail risks, and thresholds at which policy action is warranted; include confidence intervals on payoff shifts.

- Realistic regulator information: Model regulators with incomplete knowledge of performance matrices, reliance on third-party benchmarks, or misreporting by agents; design verification/auditing protocols.

- Human external validity: Replicate with human agents and human regulators (lab/field), and in high-stakes domains, to assess whether LLM-simulated effects translate to real decision-making.

- Cross-model and multi-modal generality: Test with non-LLM or multi-modal agents (vision, robotics), and with newer foundation models to evaluate whether findings are architecture- or modality-specific.

- Learning and fine-tuning dynamics: Allow agents to adapt or fine-tune their delegates in response to selected market designs; analyze whether this strengthens or mitigates Poisoned Apple incentives.

- Policy enforcement: Develop detection methods for manipulative technology releases (audits, behavioral tests), and evaluate sanction mechanisms that deter latent-threat releases without stifling innovation.

- Externalities and social welfare: Incorporate broader societal impacts (consumer harm, systemic risk, data privacy) into regulatory objectives beyond two-agent efficiency/fairness, and measure spillovers across markets.

- Computational scalability: Address the cost of recomputing equilibria across many markets and expanding technology sets; propose efficient algorithms or approximation schemes for real-time regulatory updates.

- Reproducibility and sensitivity: Release the meta-game pipeline, equilibrium solvers, and code; conduct sensitivity analyses to different LLMs, hyperparameters, sampling temperatures, and dataset subsets.

- Institutional and political economy: Model regulator capture, lobbying, and multi-stakeholder governance constraints that may interact with or exacerbate manipulation via technology expansion.
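The minimax-regret selection mentioned in the Mechanism design item can be sketched in a few lines. This is one standard robust-decision rule, not a mechanism the paper proposes or evaluates; the loss table (payoff disparity per market and per technology scenario) and the market and scenario names are hypothetical.

```python
def minimax_regret_market(loss):
    """Choose the market whose worst-case regret across technology scenarios is smallest.

    loss[market][scenario]: regulator's loss (e.g., payoff disparity) if that market
    is fixed and that technology scenario (e.g., "no release", "E released") occurs.
    """
    markets = list(loss)
    scenarios = list(loss[markets[0]])
    # Best achievable loss in each scenario, had the regulator known it in advance.
    best = {s: min(loss[m][s] for m in markets) for s in scenarios}
    max_regret = {m: max(loss[m][s] - best[s] for s in scenarios) for m in markets}
    return min(markets, key=max_regret.get), max_regret

# Hypothetical disparities for three markets under two technology scenarios.
loss = {"M1": {"no release": 0.01, "E released": 0.10},
        "M2": {"no release": 0.06, "E released": 0.04},
        "M3": {"no release": 0.03, "E released": 0.05}}
choice, regrets = minimax_regret_market(loss)
print(choice)  # M3
```

M1 is fairest today but worst if E appears; M3 is never the best choice yet never far from it, which is the hedge a minimax-regret rule buys. Whether such a rule actually removes the incentive to release a poisoned apple is exactly the kind of guarantee the list above calls for.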

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage the paper’s findings on technology expansion, equilibrium shifts, and the “Poisoned Apple” effect.

- Technology-aware market stress testing (Sector: finance, ad-tech, gig platforms, real estate, energy)

- Product/workflow: Equilibrium Impact Simulator that runs meta-game analyses before and after a new AI agent or feature is made available.

- Action: Simulate fairness/efficiency outcomes across alternative market designs when adding, removing, or exposing AI delegates/APIs.

- Assumptions/dependencies: Access to performance matrices (e.g., GLEE-like data), equilibrium solvers, defined regulator objectives (fairness/efficiency), approximate validity of LLM-based agent simulations.

- Dynamic market design re-optimization cadence (Sector: policy agencies, exchanges, online platforms)

- Product/workflow: Market Design Autopilot that recomputes rules (information structures, communication forms, horizons) upon any technology release.

- Action: Establish triggers (e.g., new model/API availability) that automatically re-evaluate and update market parameters to avoid fairness deterioration.

- Assumptions/dependencies: Clear governance for rule updates, computational pipelines, monitoring to detect new releases and recalibrate metrics.

- Model-release governance and feature flagging (Sector: software platforms, marketplaces, ad-tech)

- Product/workflow: Model Availability Gatekeeper with option whitelisting, staged rollouts, and “kill-switches” tied to fairness metrics.

- Action: Gate the availability of new AI delegates to users/agents; stage releases with pre- and post-release equilibrium monitoring.

- Assumptions/dependencies: Platform control over model catalogs, observability of outcomes, risk tolerance defined by policy/compliance teams.

- Threat-of-use scorecards (Sector: policy, enterprise risk, finance)

- Product/workflow: Threat-of-Use Scorecard rating how a newly available model could shift regulator choice even if not adopted.

- Action: Integrate latent threat scoring into model release reviews and regulatory impact assessments.

- Assumptions/dependencies: Predictive models of equilibrium shifts, access to comparative agent performance data, defined fairness/efficiency thresholds.

- Equilibrium-aware A/B testing (Sector: software/product, marketplaces)

- Product/workflow: Meta-game Experimentation Harness to test feature/model releases under alternate market designs.

- Action: Replace naive A/B with tests that incorporate regulator response and cross-player technology selection dynamics.

- Assumptions/dependencies: Experimentation infrastructure, simulation capability, stakeholder buy-in to meta-game framing.

- Option-pruning and commitment windows (Sector: procurement, B2B marketplaces, platforms)

- Product/workflow: Contract clauses and policy rules that freeze market design for a set period to neutralize manipulative “poisoned” releases.

- Action: Limit the effective choice set or commit to non-reactive designs during critical windows to preserve fairness.

- Assumptions/dependencies: Legal enforceability, alignment with antitrust/competition rules, clarity on fairness metrics.

- Strategic release detection and triage (Sector: policy, compliance, platform trust & safety)

- Product/workflow: Release Risk Monitor that flags new model/API availability with high likelihood of unused-but-harmful equilibrium shifts.

- Action: Route flagged releases to regulatory sandbox testing and require compensatory safeguards.

- Assumptions/dependencies: Monitoring of external model ecosystem, triage criteria, cooperative data-sharing from model providers.

- Agent portfolio selection for negotiations (Sector: procurement/sales, real estate, insurance)

- Product/workflow: Agent Portfolio Selector recommending AI delegates that maximize outcomes given opponent technologies and current market design.

- Action: Choose representatives strategically with awareness of regulator objectives and opponent capabilities.

- Assumptions/dependencies: Up-to-date model benchmarking, understanding of current market rules, rational agent selection.

- Regulatory early-warning dashboards (Sector: policy, finance)

- Product/workflow: Fairness/Efficiency Early-Warning Dashboard linked to model availability feeds and equilibrium analyses.

- Action: Provide alerts when available-but-unused technologies could trigger harmful market redesigns if the regulator re-optimizes—or if it remains inert.

- Assumptions/dependencies: Continuous telemetry, agreed metrics, inter-agency data integration.

- Teaching and benchmarking in academia (Sector: education/academia)

- Product/workflow: Course modules using GLEE-style environments to demonstrate availability externalities and meta-game dynamics.

- Action: Train students in mechanism design robust to technology expansion and regulator objectives.

- Assumptions/dependencies: Access to datasets/simulators, alignment with curricula in economics/CS.

- Consumer-facing “fair outcome” modes (Sector: daily life, consumer fintech/commerce)

- Product/workflow: Fair-Outcome Mode in AI negotiation assistants that avoids triggering harmful market shifts (e.g., by selecting delegates strategically).

- Action: Offer consumer settings that prioritize fairness and predict regulator responses to broader technology availability.

- Assumptions/dependencies: Consumer apps with configurable agent selection, transparency about marketplace rules.
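A Threat-of-Use Scorecard or Equilibrium Impact Simulator ultimately asks one question per candidate release: does adding the delegate change the regulator's chosen market, and is the delegate actually played in the new equilibrium? The sketch below is a hypothetical illustration, assuming a fairness-minimizing regulator, the same delegate menu for both players, pure-strategy equilibria only, and averaging over multiple pure equilibria as the selection rule; the markets M1 and M2 and all payoffs are invented toy numbers.

```python
from itertools import product

# Hypothetical payoff tables: (alice_delegate, bob_delegate) -> (alice_payoff, bob_payoff)
MARKETS = {
    "M1": {("A", "A"): (0.50, 0.50), ("A", "E"): (0.40, 0.45),
           ("E", "A"): (0.70, 0.35), ("E", "E"): (0.60, 0.30)},
    "M2": {("A", "A"): (0.55, 0.45), ("A", "E"): (0.50, 0.30),
           ("E", "A"): (0.20, 0.50), ("E", "E"): (0.30, 0.30)},
}

def pure_equilibria(table, menu):
    """Delegate pairs from which neither player gains by switching within `menu`."""
    eqs = []
    for a, b in product(menu, menu):
        ua, ub = table[(a, b)]
        alice_ok = all(ua >= table[(x, b)][0] for x in menu)
        bob_ok = all(ub >= table[(a, y)][1] for y in menu)
        if alice_ok and bob_ok:
            eqs.append((a, b))
    return eqs

def fairest_market(markets, menu):
    """Return (market, payoff gap, equilibria) minimizing the equilibrium payoff gap.

    Assumes at least one market has a pure equilibrium; returns None otherwise.
    """
    best = None
    for name, table in markets.items():
        eqs = pure_equilibria(table, menu)
        if not eqs:
            continue  # this sketch ignores markets without a pure equilibrium
        # Assumed selection rule: average payoffs over all pure equilibria.
        ua = sum(table[e][0] for e in eqs) / len(eqs)
        ub = sum(table[e][1] for e in eqs) / len(eqs)
        gap = abs(ua - ub)
        if best is None or gap < best[1]:
            best = (name, gap, eqs)
    return best

def threat_of_use(markets, menu, new_tech):
    """Score a candidate release by its effect on the regulator's market choice."""
    before = fairest_market(markets, menu)
    after = fairest_market(markets, menu + [new_tech])
    switched = before[0] != after[0]
    used = any(new_tech in pair for pair in after[2])
    return {"market_switched": switched,
            "new_tech_used": used,
            "latent_threat": switched and not used}

print(threat_of_use(MARKETS, ["A"], "E"))
# {'market_switched': True, 'new_tech_used': False, 'latent_threat': True}
```

In M1 the new delegate E lets Alice dominate, so the fairness-minded regulator moves to M2, where E is never played and Alice still ends up ahead (0.55 versus 0.50 before). That is the unused-but-harmful pattern the Release Risk Monitor and Threat-of-Use Scorecard are meant to flag.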

Long-Term Applications

Below are use cases that require further research, scaling, standard-setting, or development to become viable.

- Option-proof mechanism design (Sector: policy, marketplaces, exchanges)

- Product/workflow: Option-Proof Mechanism Designer that yields rules robust to availability expansion and unused-threat manipulation.

- Action: Design market mechanisms whose fairness/efficiency outcomes do not degrade when non-adopted options are added.

- Assumptions/dependencies: Advances in implementation theory and robust mechanism design, formal guarantees under meta-game dynamics.

- Capability disclosure and certification regime (Sector: policy/regulation)

- Product/workflow: Agent Capability Registry and certification tied to fairness/efficiency impact evaluations.

- Action: Require standardized disclosures for any significant AI delegate release; license high-impact models only after equilibrium-impact audits.

- Assumptions/dependencies: Legislation, sector-wide standards, compliance tooling, international coordination.

- Interoperable equilibrium monitoring infrastructure (Sector: policy, platforms, finance)

- Product/workflow: Equilibrium Shift Monitor with shared telemetry across platforms and regulators.

- Action: Institutionalize data pipelines to detect shifts and coordinate rule updates across multi-market ecosystems.

- Assumptions/dependencies: Data-sharing agreements, privacy-preserving analytics, governance frameworks.

- Anti-arbitrage commitments and cryptographic attestations (Sector: finance, marketplaces)

- Product/workflow: Time-locked rule commitments and cryptographic proofs of mechanism stability across release windows.

- Action: Reduce incentives to release “poisoned” options by committing to non-reactive designs or controlled reaction functions.

- Assumptions/dependencies: Secure attestations, legal enforceability, careful tuning to avoid stifling constructive innovation.

- Regulatory sandboxes with adversarial simulations (Sector: policy/regulation)

- Product/workflow: Advanced sandboxes that regularly test meta-games for strategic release manipulation and regulatory inertia risks.

- Action: Institutionalize adversarial stress tests for market updates and model releases; publish equilibrium-risk reports.

- Assumptions/dependencies: Simulation capacity, expertise, standardized test suites, transparent reporting norms.

- Sector-wide fairness metric standardization (Sector: policy, industry consortia)

- Product/workflow: Fairness/efficiency taxonomies and sector-specific benchmarks that align with regulator objectives.

- Action: Establish interoperable measures to compare, audit, and certify market designs and agent portfolios.

- Assumptions/dependencies: Cross-sector consensus, robust metrics validated against human outcomes, continuous refresh cycles.

- Defensive platform architecture (Sector: software/platform engineering)

- Product/workflow: Delegate Abstraction Layer that clusters models into coarse-grained capability classes to dampen equilibrium sensitivity to fine-grained releases.

- Action: Manage exposure with capability tiers, aggregated delegates, and rate limits on option proliferation.

- Assumptions/dependencies: Engineering investment, performance trade-offs, business alignment.

- Portfolio governance suites for multi-agent enterprises (Sector: enterprise software, operations)

- Product/workflow: Portfolio Governance Suite integrating release policies, threat scoring, simulation, and compliance workflows.

- Action: Enterprise-scale tooling to manage internal/external agent catalogs and mitigate availability externalities.

- Assumptions/dependencies: Change management, integration with MLOps/DevOps, organizational incentives.

- Consumer protection labels for agent availability risks (Sector: daily life, consumer protection)

- Product/workflow: “Agent Availability Risk” labels and warnings in consumer apps and marketplaces.

- Action: Inform users when expanding option sets could reduce fairness or alter counterparty payoffs in their environment.

- Assumptions/dependencies: Usability research, regulatory support, standardized disclosures.

- Academic expansions: availability externalities and meta-game theory (Sector: academia)

- Product/workflow: Extended benchmarks beyond GLEE, hybrid human-LLM studies, and formal treatments of technology-availability externalities.

- Action: Build theory and empirical bases for designing regulators and markets resilient to evolving AI capabilities.

- Assumptions/dependencies: Funding, shared datasets, cross-disciplinary collaboration.

- International coordination on cross-platform releases (Sector: policy, international bodies)

- Product/workflow: Cross-border release registries and coordinated re-optimization protocols.

- Action: Reduce regulatory arbitrage across jurisdictions by harmonizing disclosure, certification, and market-update practices.

- Assumptions/dependencies: Diplomatic agreements, interoperability standards, governance enforcement.

Notes on overarching assumptions and dependencies

- Rationality and meta-game framing: Agents select delegates to maximize expected utility; regulators optimize explicit objectives (fairness, efficiency). Deviations from rational behavior may alter outcomes.

- Data and simulation fidelity: LLM-based agent simulations approximate real strategic behavior; the validity of results depends on model representativeness and continuous empirical calibration.

- Transparency and observability: Many applications assume visibility into available technologies, performance matrices, and equilibrium outcomes.

- Legal and institutional capacity: Several applications require new laws, standards, or governance mechanisms that may take time to enact.

- Metric selection: Outcomes depend on agreed fairness/efficiency definitions; misaligned metrics can misguide updates and incentives.

Glossary

- Alternating-offers game: A bargaining protocol where players take turns proposing how to split a surplus until they agree. "An alternating-offers game where players must agree on splitting a surplus or receive zero"

- Asymmetric information: A situation where different parties possess private information not known to others. "negotiation (asymmetric information trade)"

- Bilateral trade: A market interaction between one buyer and one seller. "A bilateral trade setting involving private information between a buyer and seller"

- Communication Form: The allowed modality or structure of communication in the interaction. "Information Structure, Communication Form, and Game Horizon"

- Efficiency: A regulatory objective focused on maximizing total welfare. "Efficiency (total social welfare)"

- Equilibrium payoffs: The payoffs received by agents at the game’s equilibrium. "drastically shift equilibrium payoffs and regulatory outcomes"

- Equilibrium strategies: The strategy profile that constitutes an equilibrium of the game. "the equilibrium strategies do not involve using ."

- Expected payoffs: Payoffs averaged over uncertainty or mixed strategies. "yielding expected payoffs of 0.49 for Alice and 0.50 for Bob."

- Expected utility: The anticipated utility given probabilistic outcomes across strategies. "select the representative that maximizes their expected utility."

- Fairness: A regulatory objective aimed at minimizing disparities between agents’ outcomes. "Fairness (minimizing the disparity between agents' payoffs)"

- Game Horizon: The temporal length or number of stages in the game. "Information Structure, Communication Form, and Game Horizon"

- Information Structure: The specification of who knows what and when in the interaction. "Information Structure, Communication Form, and Game Horizon"

- Market design: The choice of rules and institutions that govern interactions in a market. "manipulate the regulator's choice of market design"

- Market environment: A specific configuration of market parameters under which agents interact. "migrate to a new market environment (Market 8)"

- Meta-game: A higher-level game where a regulator sets market rules and agents choose technologies. "Our analysis models this interaction as a meta-game"

- Mixed strategy equilibria: Equilibria where players randomize over multiple strategies. "expected payoffs (calculated over mixed strategy equilibria)"

- Nash Equilibrium: A profile of strategies where no agent can gain by unilaterally deviating. "Nash Equilibrium -- a state where neither agent has an incentive to switch technologies solely given the other's choice."

- Open-weight releases: Publishing model parameters so others can use or fine-tune the model. "open-weight releases or API availability can serve as strategic weapons for regulatory arbitrage."

- Performance matrix: A table summarizing how available technologies perform across opponents and markets. "review the performance matrix of available AI delegates"

- Poisoned Apple effect: Releasing a technology to shift regulatory choices in one’s favor without intending to use it. "We identify a phenomenon we term the Poisoned Apple effect."

- Regulatory arbitrage: Strategically exploiting the regulator’s rules or objectives to gain advantage. "open-weight releases or API availability can serve as strategic weapons for regulatory arbitrage."

- Regulatory inertia: Failing to update market design after changes in available technologies. "the danger of regulatory inertia."

- Regulatory metric: The quantitative objective the regulator optimizes (e.g., fairness or efficiency). "the regulatory metric deteriorates"

- Sender-receiver game: A strategic communication setting where a sender influences a receiver’s action. "A sender-receiver game where a seller attempts to convince a buyer to purchase based on strategic information transmission"

- Social welfare: The total utility aggregated across agents in the market. "total social welfare"

- Strategic information transmission: Communicating information to influence decisions under strategic incentives. "strategic information transmission"

- Technology expansion: Increasing the set of AI technologies available to agents. "manipulation via technology expansion"

- Utility: A numerical measure of an agent’s preferences or payoff. "The utility of each participant is determined by the rules of interaction and the technologies selected by both participants."

- Zero-sum shifts: Outcome changes where one agent’s gain is matched by the other’s loss. "one-third of these zero-sum shifts"
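Several glossary entries (expected payoffs, mixed strategy equilibria) rely on equilibria in which players randomize. For the smallest case, two delegates per player, a fully mixed equilibrium follows from the indifference conditions: each player mixes so that the other is indifferent between their two delegates. The helper below is a textbook sketch for 2x2 games, not the paper's solver, which works with larger delegate menus; the function name and example payoffs are hypothetical.

```python
def mixed_equilibrium_2x2(A, B):
    """Fully mixed Nash equilibrium of a 2x2 bimatrix game, or None if none exists.

    A[i][j], B[i][j]: Alice's and Bob's payoffs when Alice plays delegate i, Bob delegate j.
    Returns (p, q, expected_alice, expected_bob) with p = P(Alice plays 0), q = P(Bob plays 0).
    """
    denom_q = A[0][0] - A[0][1] - A[1][0] + A[1][1]
    denom_p = B[0][0] - B[0][1] - B[1][0] + B[1][1]
    if denom_q == 0 or denom_p == 0:
        return None
    q = (A[1][1] - A[0][1]) / denom_q  # Bob's mix that leaves Alice indifferent
    p = (B[1][1] - B[1][0]) / denom_p  # Alice's mix that leaves Bob indifferent
    if not (0 < p < 1 and 0 < q < 1):
        return None  # no interior solution, so no fully mixed equilibrium
    prob = [[p * q, p * (1 - q)], [(1 - p) * q, (1 - p) * (1 - q)]]
    exp_a = sum(prob[i][j] * A[i][j] for i in range(2) for j in range(2))
    exp_b = sum(prob[i][j] * B[i][j] for i in range(2) for j in range(2))
    return p, q, exp_a, exp_b

# Matching-pennies-style toy game: the players' winning cells are opposite.
print(mixed_equilibrium_2x2([[1, 0], [0, 1]], [[0, 1], [1, 0]]))  # (0.5, 0.5, 0.5, 0.5)
```

Games with a dominant delegate return None here because their equilibria are pure; a general solver would combine this with a pure-equilibrium search.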
