Flow-based Extremal Mathematical Structure Discovery

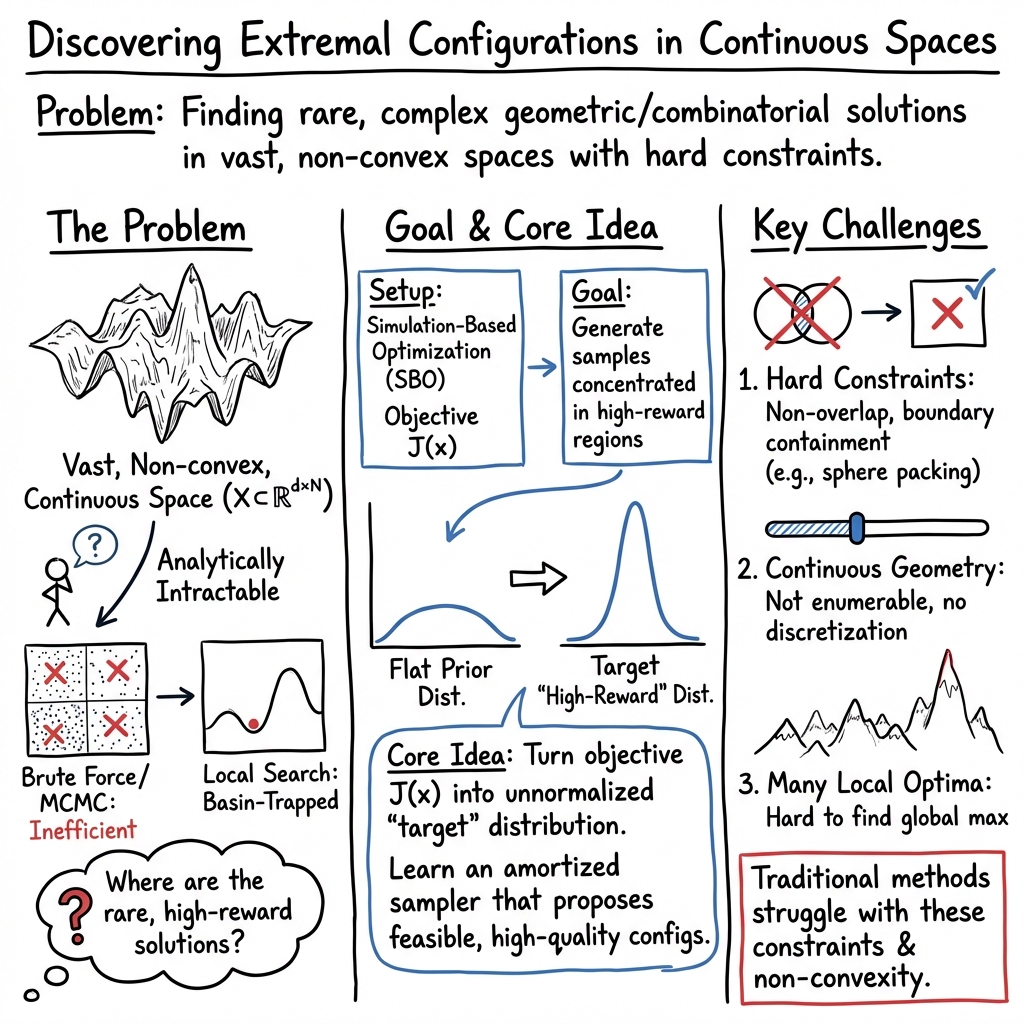

Abstract: The discovery of extremal structures in mathematics requires navigating vast and nonconvex landscapes where analytical methods offer little guidance and brute-force search becomes intractable. We introduce FlowBoost, a closed-loop generative framework that learns to discover rare and extremal geometric structures by combining three components: (i) a geometry-aware conditional flow-matching model that learns to sample high-quality configurations, (ii) reward-guided policy optimization with action exploration that directly optimizes the generation process toward the objective while maintaining diversity, and (iii) stochastic local search for both training-data generation and final refinement. Unlike prior open-loop approaches such as PatternBoost, which retrains on filtered discrete samples, and AlphaEvolve, which relies on frozen LLMs as evolutionary mutation operators, FlowBoost enforces geometric feasibility during sampling and propagates the reward signal directly into the generative model. This closes the optimization loop, requires much smaller training sets and shorter training times, reduces the required outer-loop iterations by orders of magnitude, and eliminates dependence on LLMs. We demonstrate the framework on four geometric optimization problems: sphere packing in hypercubes, circle packing maximizing the sum of radii, the Heilbronn triangle problem, and star discrepancy minimization. In several cases, FlowBoost discovers configurations that match or exceed the best known results. For circle packings, we improve the best known lower bounds, surpassing the LLM-based system AlphaEvolve while using substantially fewer computational resources.

Explain it Like I'm 14

What is this paper about?

This paper introduces FlowBoost, a smart computer system that helps discover “best possible” geometric arrangements—like how to pack circles or spheres tightly without overlap. These kinds of problems are hard because there are many rules to follow and an enormous number of ways things could be arranged. FlowBoost uses a new mix of tools from AI to search these huge spaces efficiently and find outstanding (sometimes record-setting) arrangements.

What questions are the authors trying to answer?

To make this clear, here are the simple goals behind the work:

- Can we teach a computer to generate high‑quality geometric configurations directly, without trying every possibility?

- Can we make the computer respect geometric rules while it’s generating, instead of fixing mistakes afterward?

- Can we give the computer direct feedback (rewards) so it improves quickly, instead of slowly copying past good examples?

- Can a small, focused model beat or match big general-purpose LLMs on these math discovery tasks?

How does FlowBoost work? (Explained with everyday analogies)

Think of trying to arrange marbles inside a box so they don’t touch or bump into the walls, while you’re trying to make them as big or as well‑spread as possible. Doing this by guessing randomly is slow and frustrating. FlowBoost combines three ideas to make this process fast and reliable.

- Geometry-aware “flow” generation: Imagine a gentle wind that moves marbles from random starting places toward good final positions. FlowBoost learns this wind. In math terms, this is called a “flow-based generative model” (here, “conditional flow matching”). Instead of placing objects all at once, it learns how to move them smoothly from simple starting points to excellent configurations.

- Checking and fixing while you go (not after): As the wind moves the marbles, the system continually checks the rules (for example, “no overlap,” “stay inside the box”) and makes small corrections if needed. This is “geometry-aware sampling.” It’s like having a coach who nudges pieces back into the safe zone during the move, instead of waiting until the end to fix errors.

- Reward-guided improvement with exploration: The system gives points (rewards) to better arrangements and learns to make those kinds more often. But it also avoids getting stuck on just one solution by:

  - Staying somewhat close to what it already knows, so it doesn’t “forget” how to make a variety of good arrangements.

  - Trying small, smart perturbations (exploration) to discover new, better patterns it hasn’t seen before.

Finally, there’s a polishing step (a fast “local search”) that tweaks the result to squeeze out extra quality—like carefully sliding marbles a tiny bit to improve spacing.
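
The polishing step can be pictured as a very simple perturb-and-accept loop. The sketch below is a generic greedy stochastic local search, not the paper's own procedure: it assumes a hypothetical "spreading" objective (the distance of the closest pair of points in the unit square), and the names `min_pairwise_distance` and `perturb_and_accept` are illustrative.

```python
import itertools
import math
import random


def min_pairwise_distance(points):
    """Spreading objective (to maximize): distance of the closest pair."""
    return min(math.dist(p, q) for p, q in itertools.combinations(points, 2))


def perturb_and_accept(points, objective, steps=2000, scale=0.05, seed=0):
    """Greedy stochastic local search in the unit square.

    Each step nudges one random point with Gaussian noise (clipped to [0, 1])
    and keeps the move only if the objective does not decrease.
    """
    rng = random.Random(seed)
    current = [list(p) for p in points]
    best = objective(current)
    for _ in range(steps):
        i = rng.randrange(len(current))
        old = current[i]
        current[i] = [min(1.0, max(0.0, c + rng.gauss(0.0, scale))) for c in old]
        score = objective(current)
        if score >= best:
            best = score          # accept the move
        else:
            current[i] = old      # reject: restore the previous position
    return current, best


rng = random.Random(1)
start = [(rng.random(), rng.random()) for _ in range(8)]
polished, score = perturb_and_accept(start, min_pairwise_distance)
print(min_pairwise_distance(start), "->", score)
```

Because moves are only kept when the score does not drop, the polished layout is never worse than the starting one; practical local searches usually add step-size schedules or occasional uphill moves to escape local optima.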

In short: learn how to move objects into good places, check and fix during the process, reward what works, keep exploring, and polish at the end.
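
To make the “learned wind” concrete: in conditional flow matching with a straight-line path, each training example pairs a noise sample x0 with a good configuration x1, picks a time t, and asks the network to predict the velocity x1 - x0 at the point x_t = (1 - t)·x0 + t·x1. Generation then follows the learned velocity from t = 0 to t = 1. The sketch below assumes NumPy; the helper names and the `oracle_velocity` function (the exact velocity for a single target layout) are hypothetical stand-ins for a trained network, not FlowBoost's code.

```python
import numpy as np


def cfm_training_pair(x0, x1, t):
    """Point on the straight-line path from noise x0 to data x1, and the
    conditional velocity u = x1 - x0 that a CFM network is regressed onto."""
    x_t = (1.0 - t) * x0 + t * x1
    return x_t, x1 - x0


def cfm_loss(v_pred, u_target):
    """Mean squared error between predicted and target velocities."""
    return float(np.mean((v_pred - u_target) ** 2))


def euler_sample(velocity, x0, steps=50):
    """Integrate dx/dt = velocity(x, t) from t = 0 to t = 1 with explicit Euler."""
    x = np.array(x0, dtype=float)
    h = 1.0 / steps
    for k in range(steps):
        x = x + h * velocity(x, k * h)
    return x


# Toy check with one hypothetical target layout x1 (two points in 2D).
rng = np.random.default_rng(0)
x1 = np.array([[0.25, 0.25], [0.75, 0.75]])
x0 = rng.standard_normal(x1.shape)


def oracle_velocity(x, t):
    """Exact velocity along the linear path when the data is the single point x1."""
    return (x1 - x) / (1.0 - t)


x_t, u = cfm_training_pair(x0, x1, t=0.3)
print(cfm_loss(oracle_velocity(x_t, 0.3), u))                 # approximately 0.0
print(np.allclose(euler_sample(oracle_velocity, x0, 20), x1))  # True
```

In a real model the oracle is replaced by a network trained on many (x0, x1, t) triples, and FlowBoost additionally corrects the trajectory for geometric feasibility during sampling, which this sketch leaves out.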

What problems did they test, and what did they find?

The authors tested FlowBoost on four classic geometry tasks:

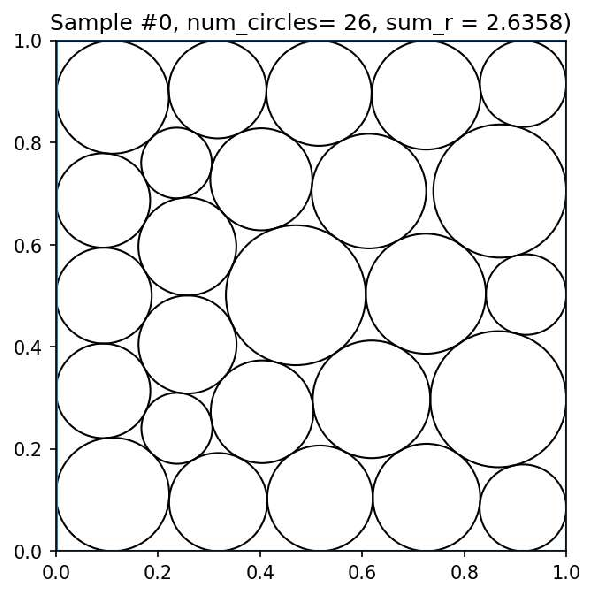

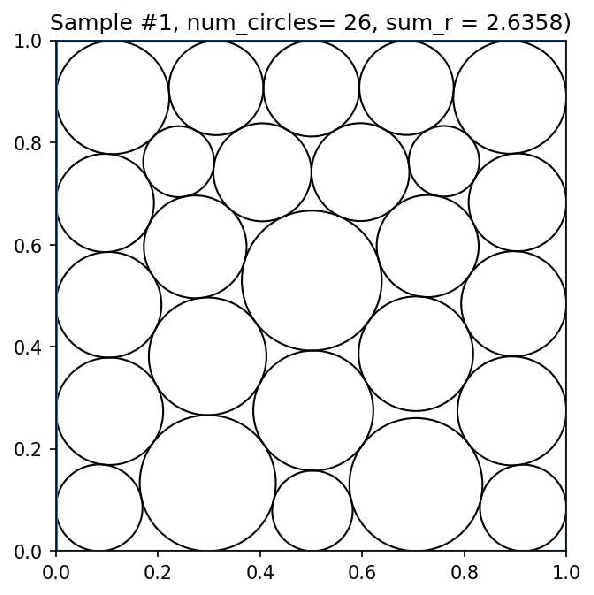

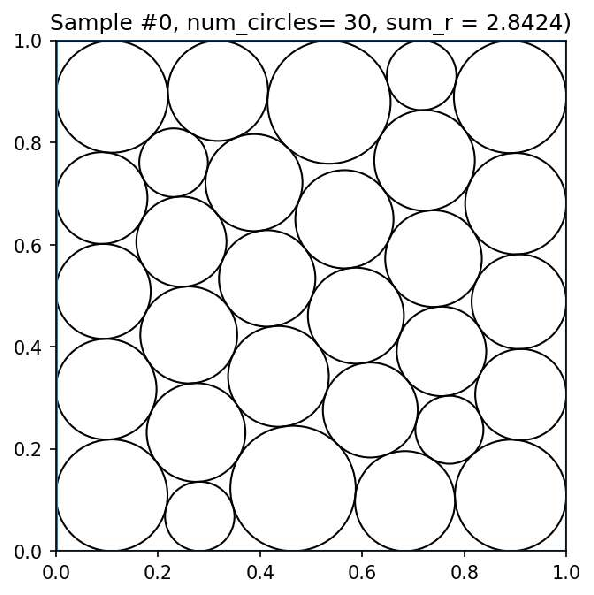

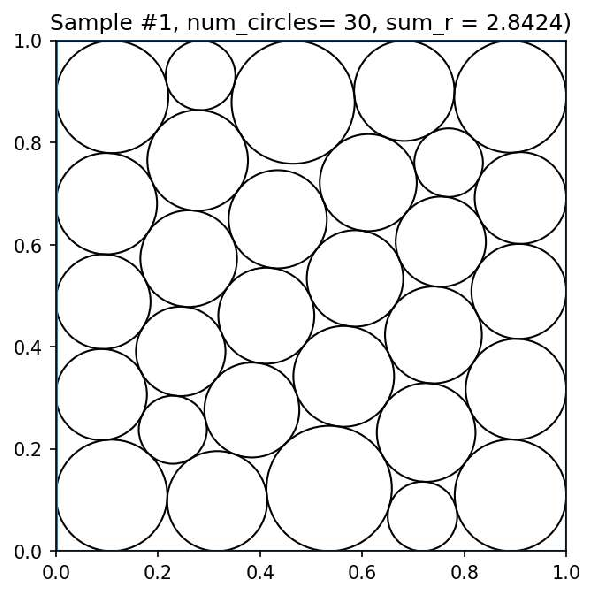

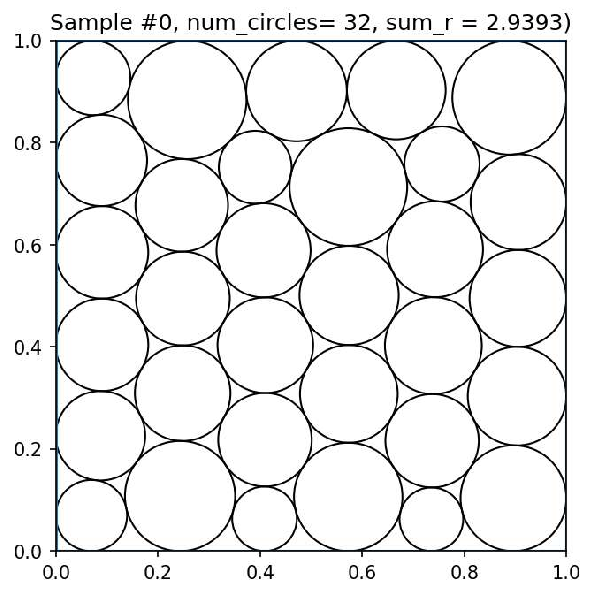

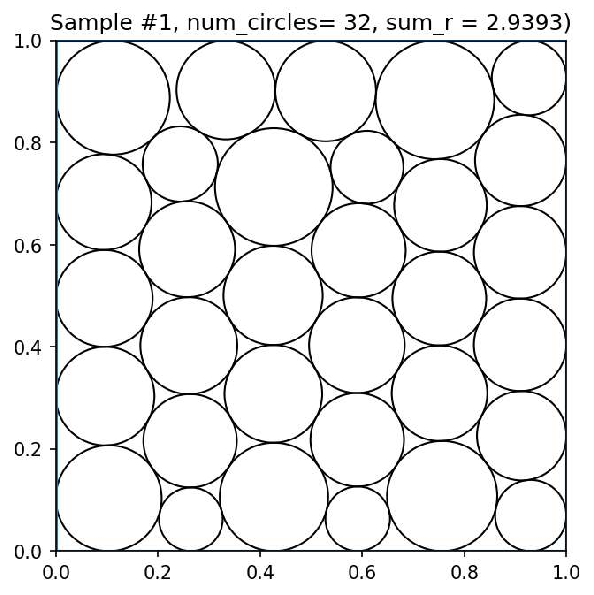

- Circle packing to maximize the sum of radii: Place circles in a square so the total size of all circles is as large as possible without overlap.

- Sphere packing in boxes: Place equal spheres as tightly as possible inside a cube, or inside its higher-dimensional version, a hypercube.

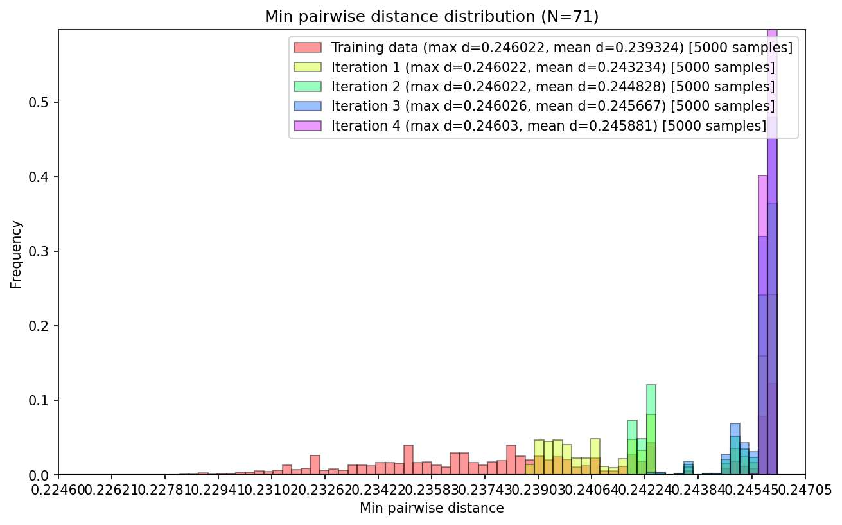

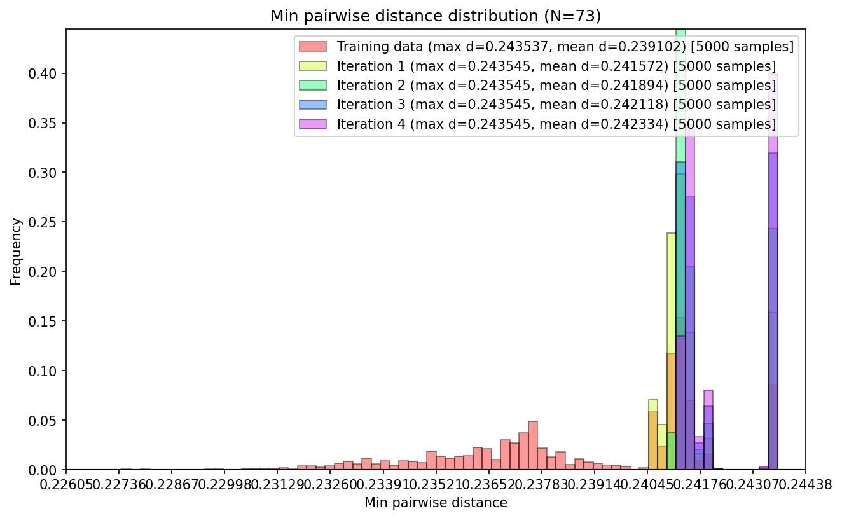

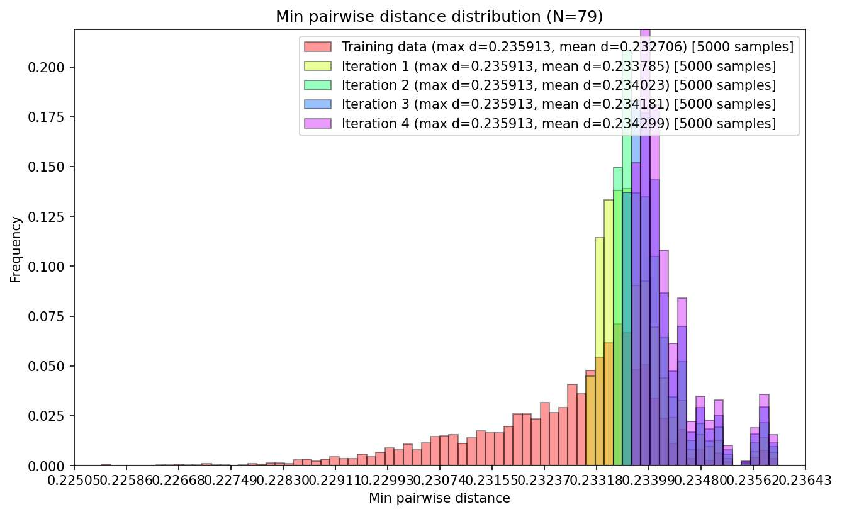

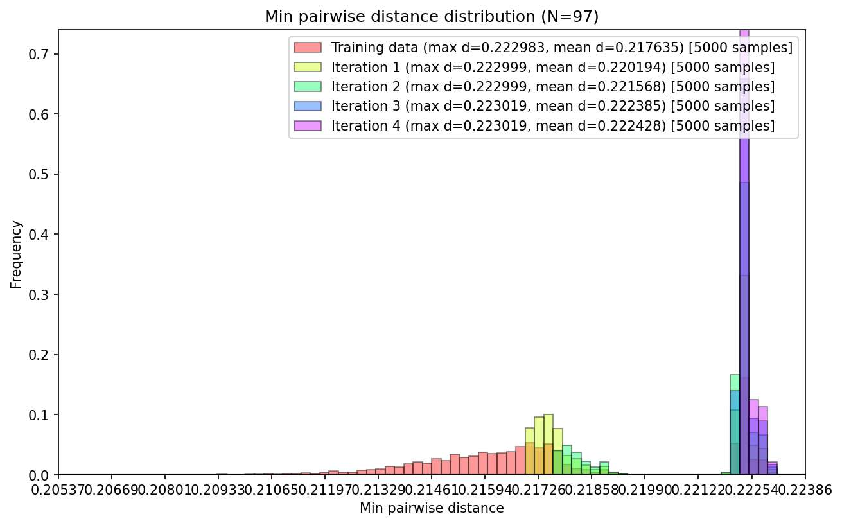

- Heilbronn triangle problem: Arrange points in a square so that the smallest triangle formed by any three points is as big as possible.

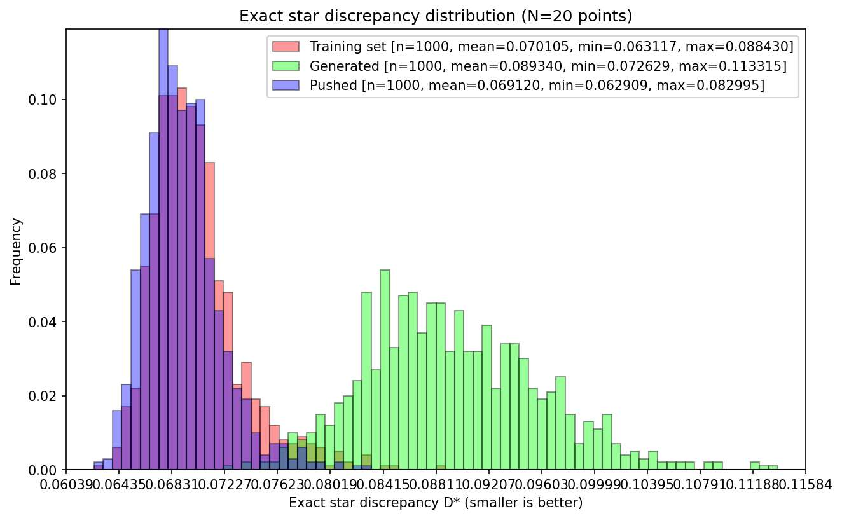

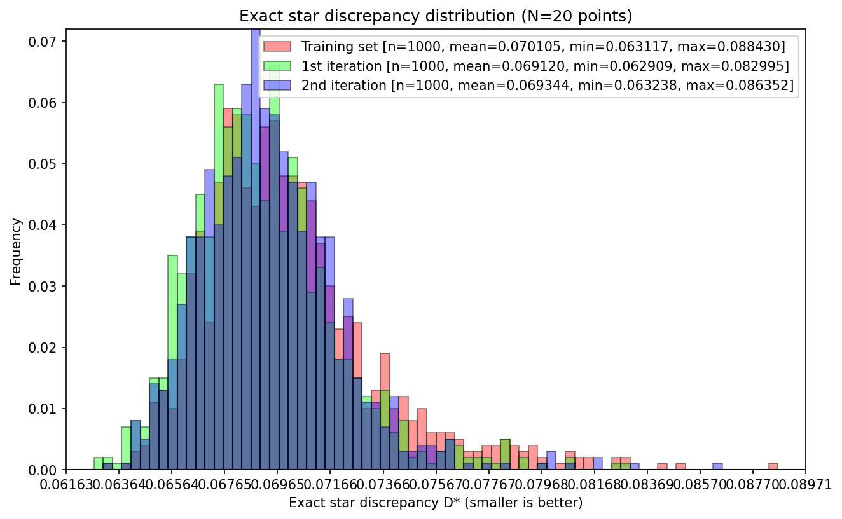

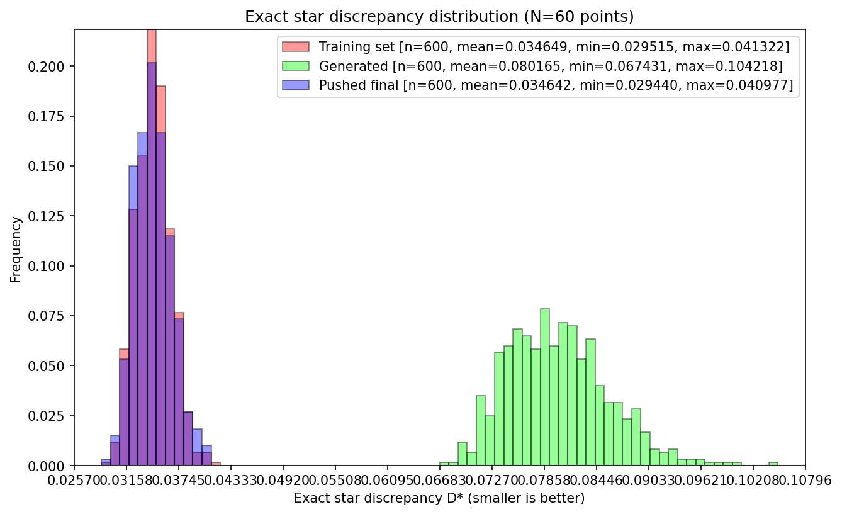

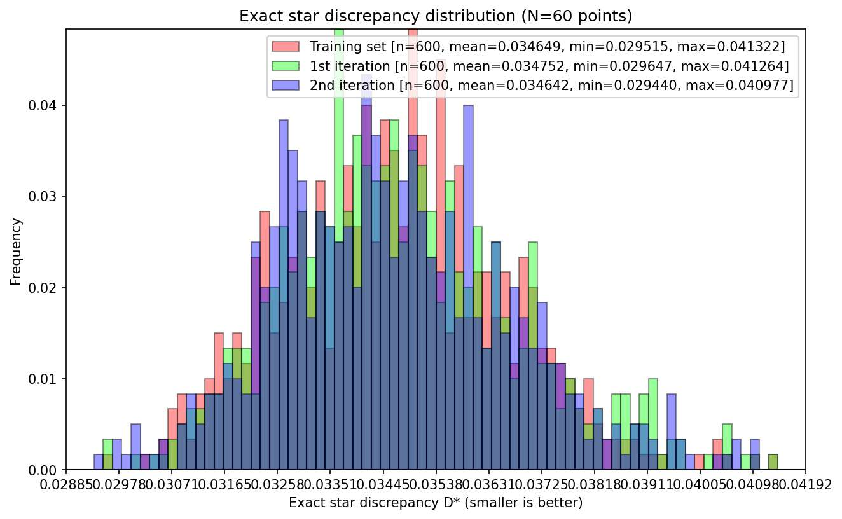

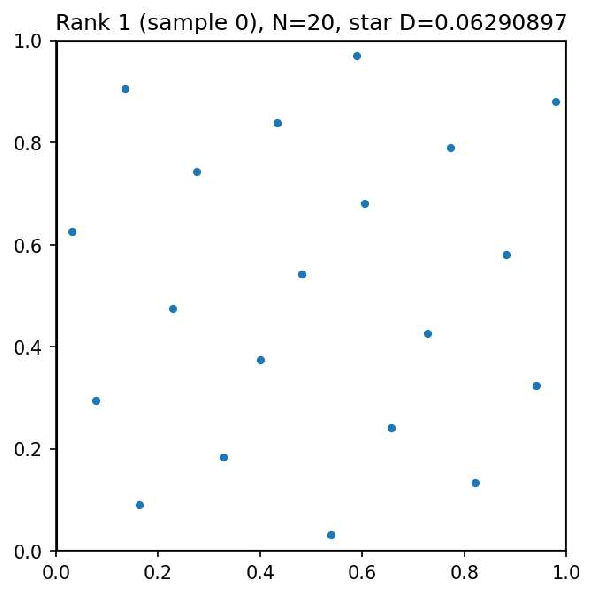

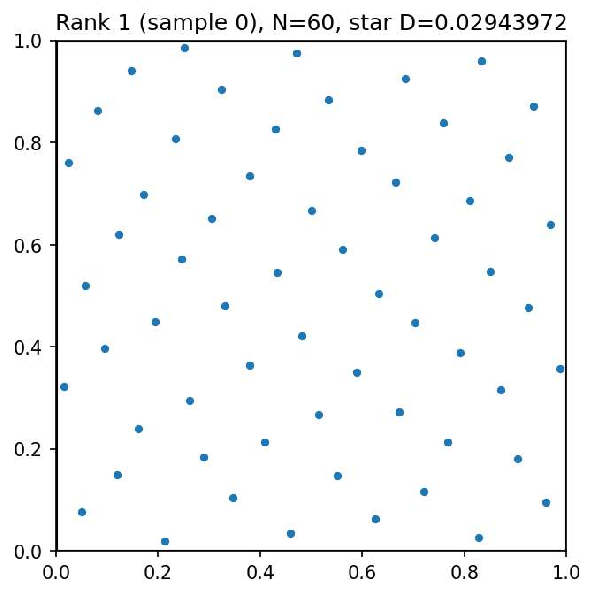

- Star discrepancy minimization: Arrange points so they are as evenly spread as possible (a measure important in randomized algorithms and simulation).
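
Each of the four tasks comes down to a score that the search tries to improve. The sketch below gives brute-force evaluators under conventions assumed here: a unit square or unit hypercube as the container, equal spheres for the packing task, and points in [0, 1)^d for star discrepancy. The function names are hypothetical, and the loops are quadratic or worse, so they are meant for small examples only.

```python
import itertools
import math


def circle_packing_score(centers, radii, tol=1e-12):
    """Sum of radii if every circle lies in the unit square and no two overlap;
    None if the configuration is infeasible."""
    for (x, y), r in zip(centers, radii):
        if r < 0 or min(x - r, y - r) < -tol or max(x + r, y + r) > 1 + tol:
            return None
    for i, j in itertools.combinations(range(len(radii)), 2):
        if math.dist(centers[i], centers[j]) < radii[i] + radii[j] - tol:
            return None
    return sum(radii)


def max_common_radius(centers):
    """Largest radius r such that equal balls at these centers fit in [0, 1]^d
    without overlapping: limited by the walls and by half the closest-pair gap."""
    wall = min(min(c, 1.0 - c) for p in centers for c in p)
    if len(centers) < 2:
        return wall
    gap = min(math.dist(p, q) for p, q in itertools.combinations(centers, 2))
    return min(wall, gap / 2.0)


def heilbronn_score(points):
    """Smallest area among all triangles formed by three of the (2D) points."""
    def area(a, b, c):
        return abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])) / 2.0
    return min(area(a, b, c) for a, b, c in itertools.combinations(points, 3))


def star_discrepancy(points):
    """Exact star discrepancy of a small point set in [0, 1)^d by brute force.

    The supremum over anchored boxes [0, y) is attained on the grid whose
    coordinates are point coordinates or 1.0, checking both open and closed
    point counts. Cost grows like N^(d+1), so this is only for tiny examples.
    """
    n, d = len(points), len(points[0])
    grids = [sorted({p[k] for p in points} | {1.0}) for k in range(d)]
    worst = 0.0
    for corner in itertools.product(*grids):
        volume = math.prod(corner)
        open_count = sum(all(p[k] < corner[k] for k in range(d)) for p in points)
        closed_count = sum(all(p[k] <= corner[k] for k in range(d)) for p in points)
        worst = max(worst, volume - open_count / n, closed_count / n - volume)
    return worst


quarters = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
print(circle_packing_score(quarters, [0.25] * 4))  # 1.0
print(star_discrepancy([(0.5, 0.5)]))              # 0.75
```

The star-discrepancy routine relies on the classical observation that the worst anchored box has its corner on the grid formed by the points' coordinates (plus 1), so only finitely many boxes need checking; faster exact and approximate algorithms exist for larger point sets.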

Here are the key results and why they matter:

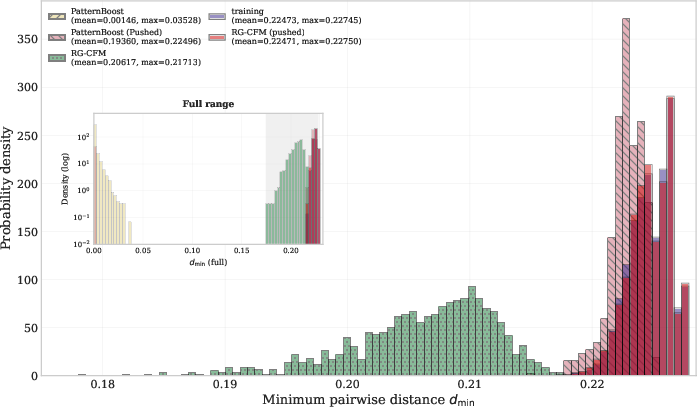

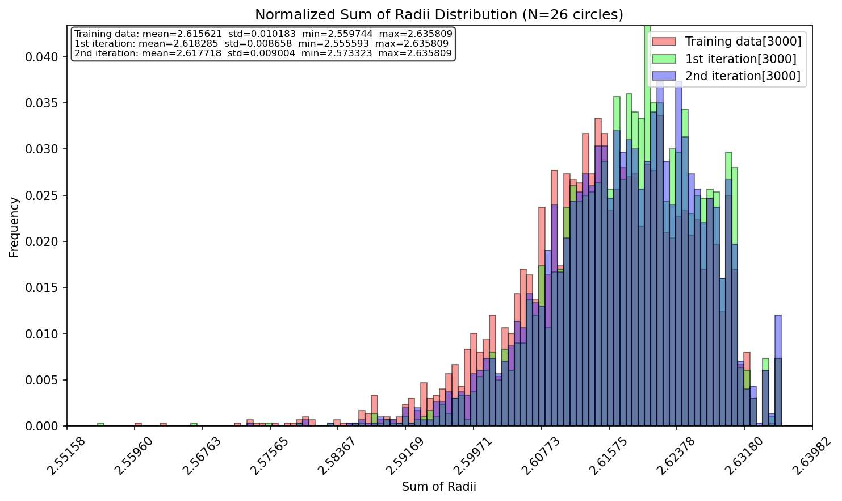

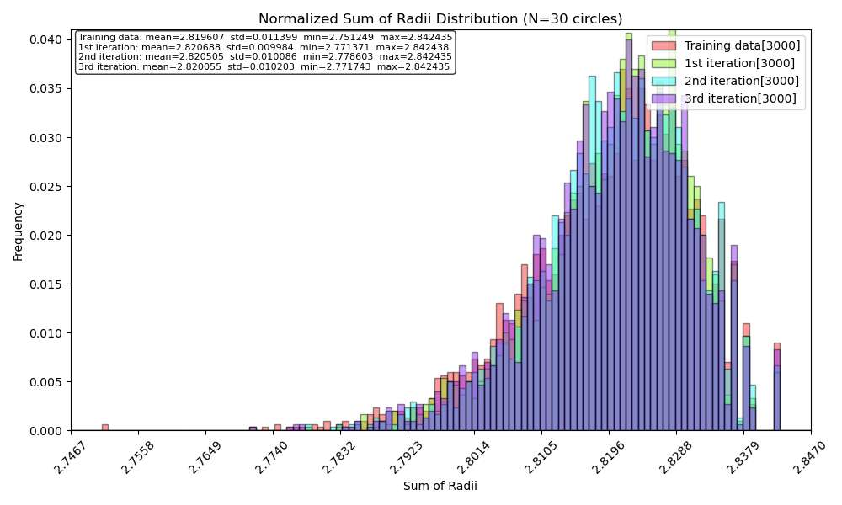

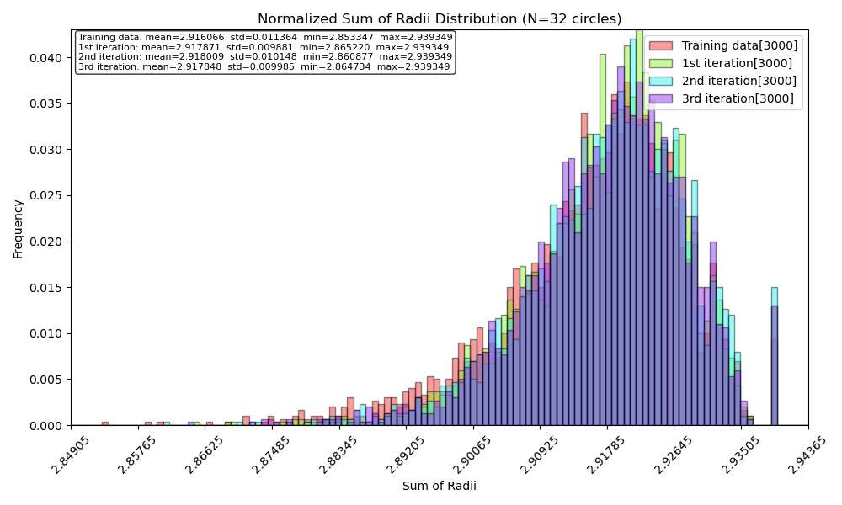

- New and better circle packings: For 26 and 32 circles, FlowBoost found arrangements that beat the best known lower bounds. It also outperformed AlphaEvolve, an earlier system built on LLMs, while using far less computing power.

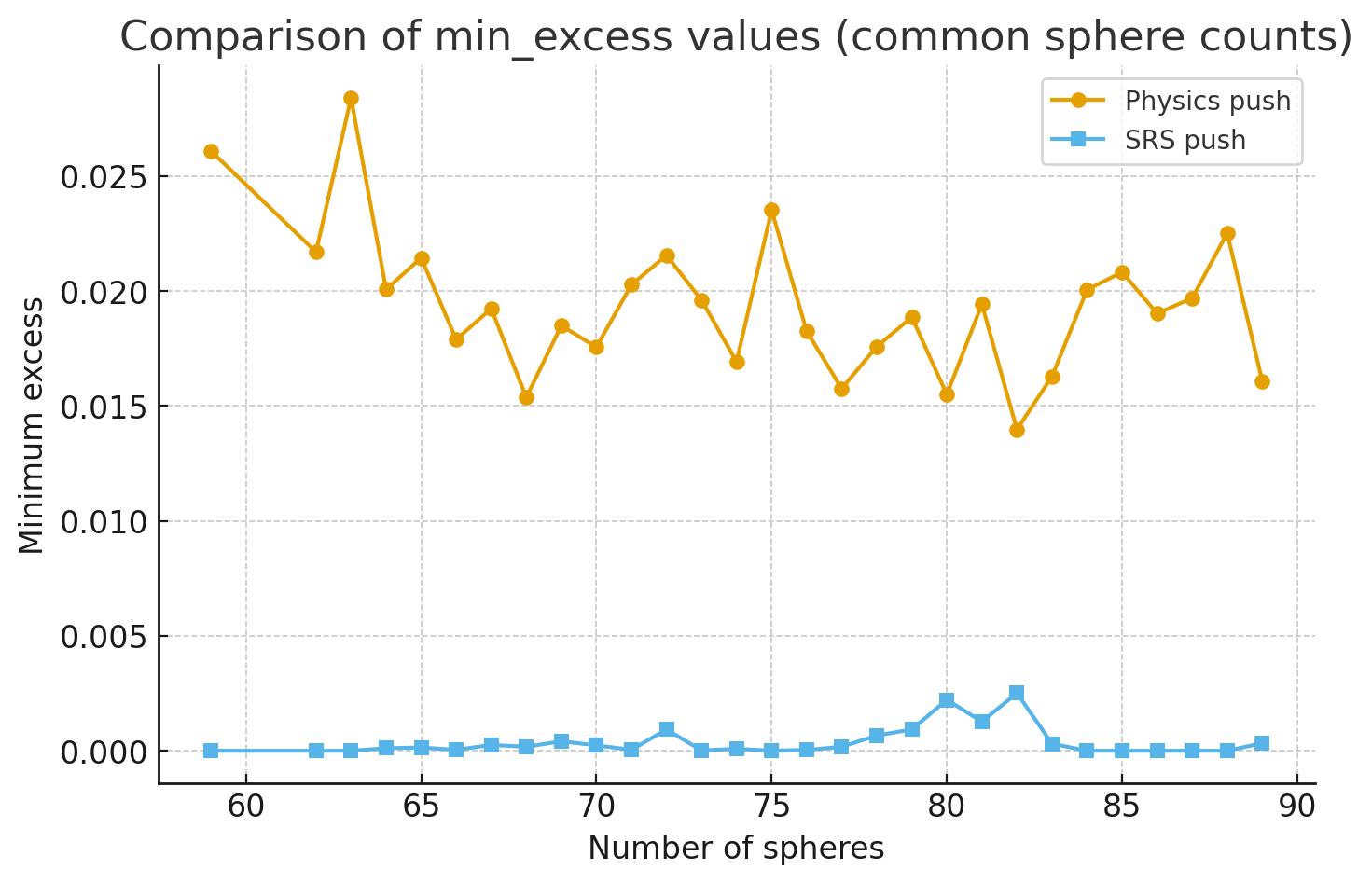

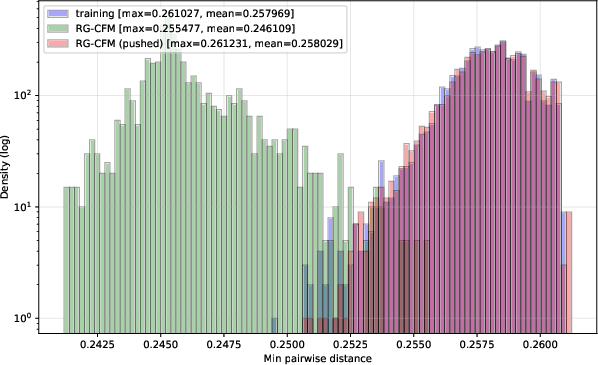

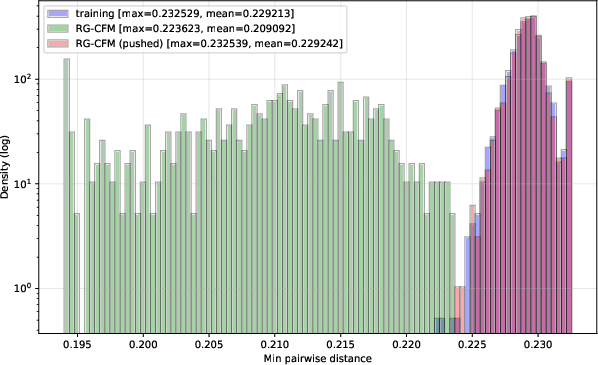

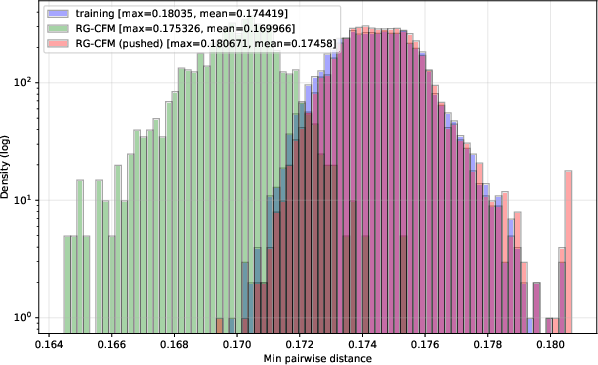

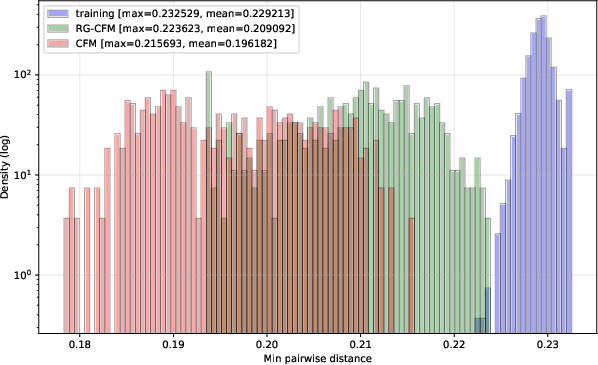

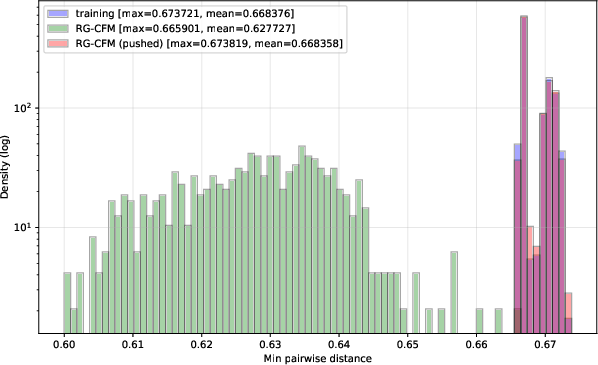

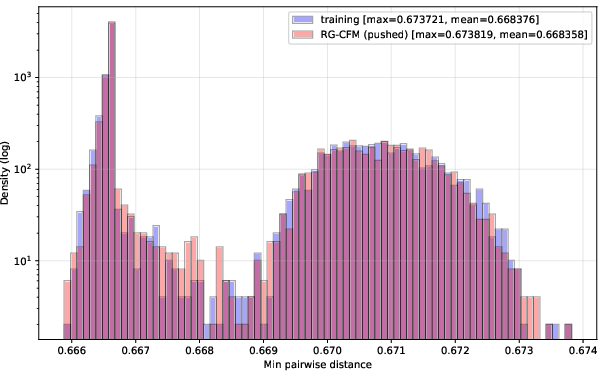

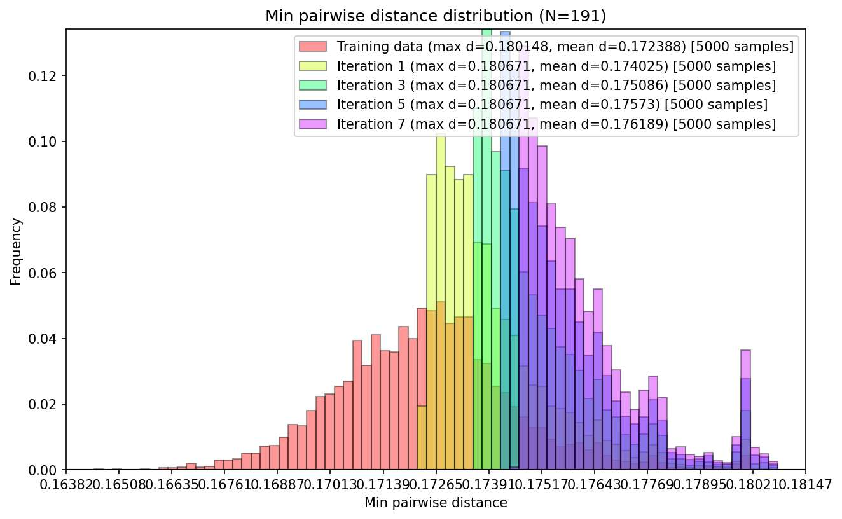

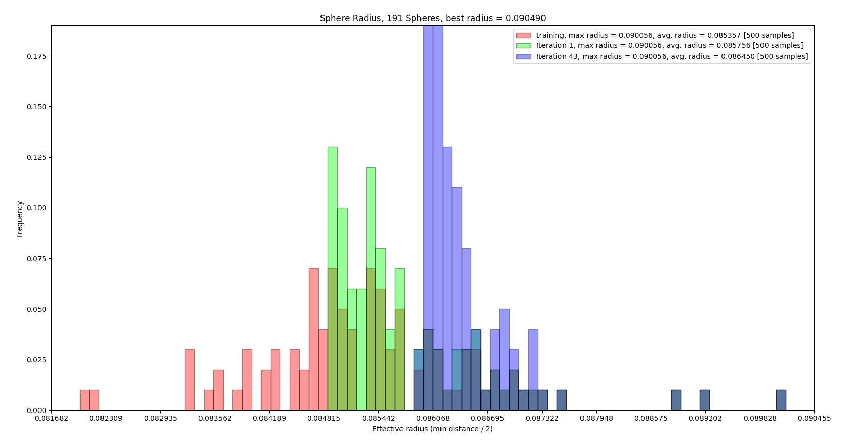

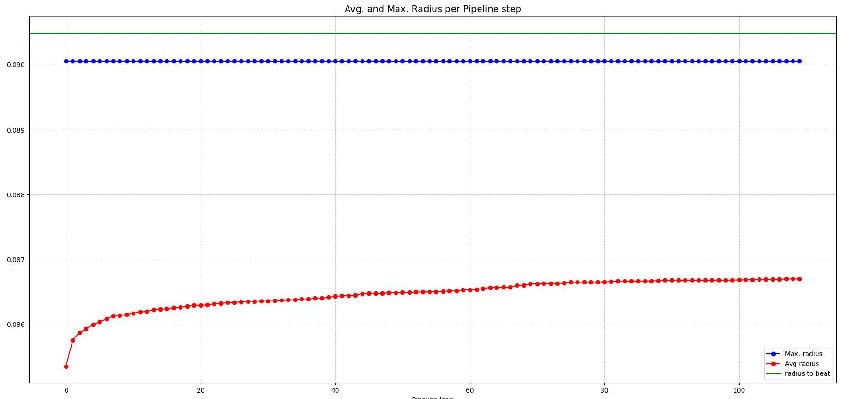

- Sphere packings that rival or beat classical methods: In 3D for many sphere counts and especially in 12D (yes, higher-dimensional spaces!), FlowBoost found denser packings than typical old-school heuristics.

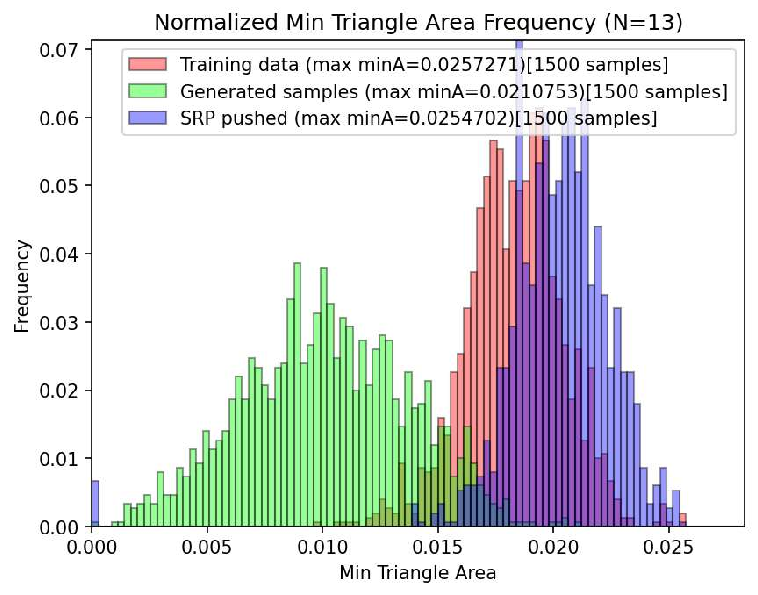

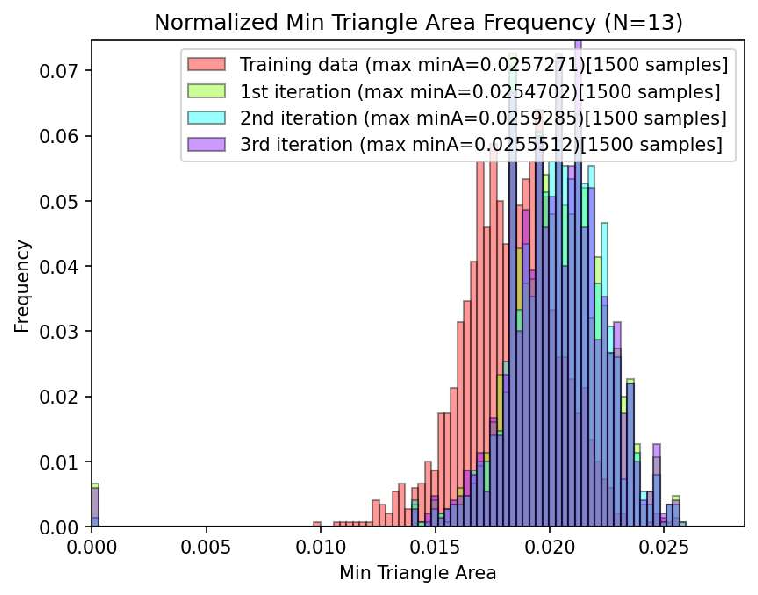

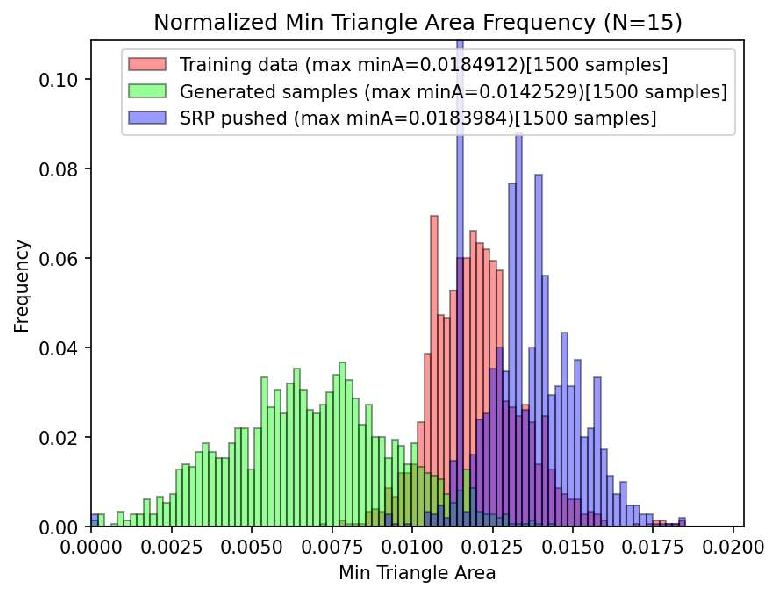

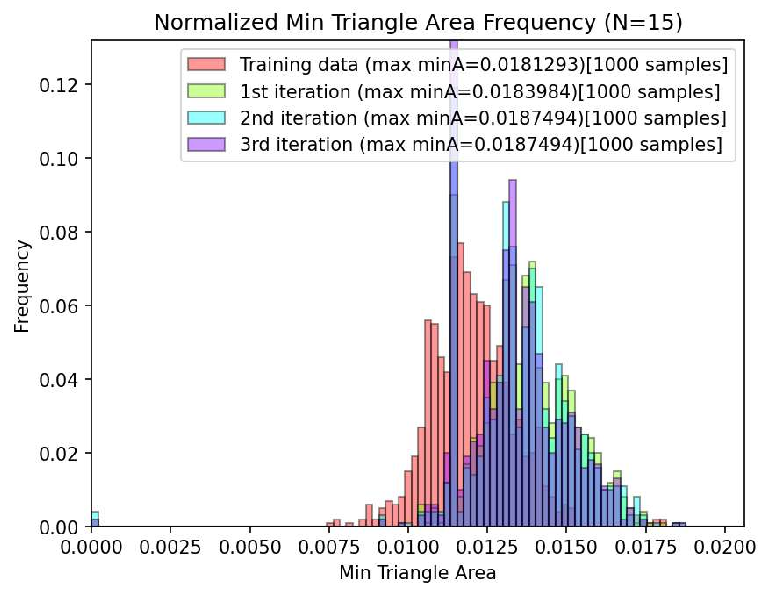

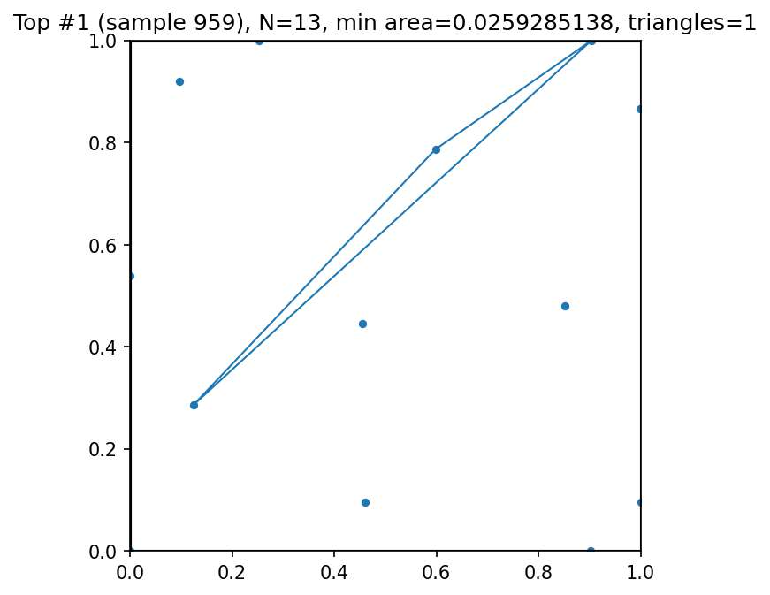

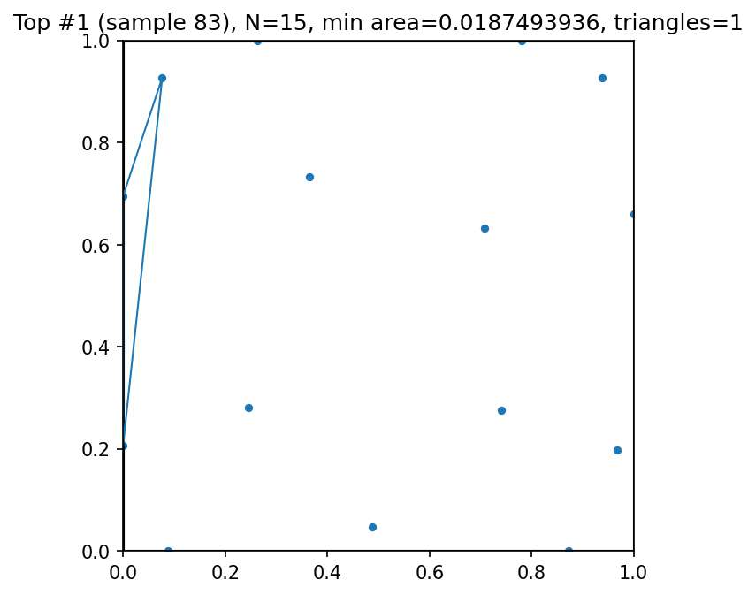

- Strong results on the Heilbronn problem: For 13 points, it increased the smallest triangle area relative to earlier results and moved closer to the best known value.

- Faster learning with fewer examples: Because FlowBoost learns from continuous positions and uses direct reward feedback, it needs smaller training sets and fewer improvement rounds. It doesn’t rely on big LLMs, making it more accessible for researchers with regular hardware.

Overall, the system matched or exceeded the best known results in several cases, and did so efficiently.

Why is this important?

- A new way to “design” mathematical objects: FlowBoost shows that AI can help discover new, extreme configurations in math, not just solve equations or prove theorems. The authors call this “de novo mathematical structure design,” meaning creating top‑quality structures from scratch.

- Smarter than brute force, lighter than giant models: By building in the geometry rules and using rewards directly, FlowBoost explores smarter and faster than trial-and-error methods. It also runs on modest computers and avoids relying on huge general-purpose LLMs.

- Useful beyond pure math: The same ideas—placing things optimally under strict rules—appear in materials science, packing and logistics, experiment design, antenna layouts, and more. This approach could help discover better designs in many fields.

In short, FlowBoost is a practical, powerful toolkit for finding exceptional geometric arrangements. It learns how to move from random guesses to excellent solutions, checks the rules as it goes, listens to rewards, keeps exploring, and polishes the final result—all while using relatively little computing power.

Practical Applications

Below we distill practical, real-world applications of the paper’s findings and methods—conditional flow matching with geometric inductive biases, geometry-aware sampling, and closed-loop reward-guided optimization—across industry, academia, policy, and daily life. Each item notes sector links, likely tools/workflows, and feasibility assumptions.

Immediate Applications

The following applications are deployable now with modest adaptation, leveraging the open-source implementation and the paper’s demonstrated performance on packing, discrepancy, and extremal geometry tasks.

- Efficient nesting and layout optimization in manufacturing (sheet metal, textile cutting, 3D-print trays)

  - Sector: manufacturing, CAD/CAM, additive manufacturing

  - Tools/Products: “FlowBoost-Packing” CAD plugin that generates non-overlapping circle/sphere layouts maximizing coverage (sum of radii/density) inside given boundaries; a shop-floor optimizer for arranging parts on a print bed or cutting sheet

  - Assumptions/Dependencies: clear geometric constraints (non-overlap, margins) and fast objective evaluators; projection-correction routines integrated into CAD kernels; moderate-scale problem sizes (hundreds–thousands of items) on a single GPU

- Blue-noise sampling and point-set generation for rendering and visualization

  - Sector: software (graphics), media

  - Tools/Products: “FlowBoost-Rendering” sampler producing low-discrepancy, evenly distributed points for global illumination, texture sampling, and halftoning; replacement for Poisson-disk and stratified samplers

  - Assumptions/Dependencies: star discrepancy or related metrics are evaluable; integration with graphics pipelines (e.g., as a library replacing existing sampling modules)

- Quasi-Monte Carlo (QMC) sampling for faster risk estimation and option pricing

  - Sector: finance

  - Tools/Products: “FlowBoost-QMC” library that generates low-discrepancy point sets to improve convergence rates for pricing, VaR, CVaR, stress testing

  - Assumptions/Dependencies: problem-specific integrands and dimensionality are known; evaluators exist to measure discrepancy or surrogate task loss; compatibility with existing numerical libraries

- Sensor, beacon, or camera placement with collision/coverage constraints

  - Sector: smart cities, retail, security, IoT

  - Tools/Products: “FlowBoost-Placement” optimizer that produces collision-free, high-coverage layouts in buildings or campuses under boundary constraints, with objectives such as minimum separation or coverage uniformity

  - Assumptions/Dependencies: a geometric objective (e.g., maximize minimum pairwise distance, minimize discrepancy); reliable projection onto the feasible set; on-site constraints (walls/obstacles) encoded

- Multi-robot formation planning and collision-free configuration generation

  - Sector: robotics, autonomous systems

  - Tools/Products: “FlowBoost-Planner” generating safe formations and waypoints subject to non-overlap and workspace boundaries, with reward-guided exploration to avoid local minima

  - Assumptions/Dependencies: fast collision checks and local refinement; objectives aligned with safety and task performance; integration with motion planners

- Wireless constellations and geometric code prototypes (packing-inspired)

  - Sector: telecom, signal processing

  - Tools/Products: “FlowBoost-Constellation” for designing modulation constellations under geometric constraints (power, distance, PAPR bounds) beyond standard lattices; quick prototyping tool for small/medium dimensions

  - Assumptions/Dependencies: performance objectives (e.g., minimum pairwise distance under power budgets) are evaluable; mapping from geometric packings to modulation/codebooks; constraints encoded and projectable

- EDA pilot: macro placement with non-overlap and boundary constraints

  - Sector: semiconductors (EDA)

  - Tools/Products: “FlowBoost-EDA” macro/block placer that proposes feasible layouts for floorplanning as seeds for downstream detailed placement and routing

  - Assumptions/Dependencies: simplified objectives (area overlap, wirelength proxies) and geometry constraints; integration into EDA flows; scaling beyond pilot sizes will require further engineering

- Warehouse/bin packing and container loading (continuous-size variants)

  - Sector: logistics

  - Tools/Products: packing assistant for containers that treats items as continuous shapes (circle/sphere approximations) with reward-guided exploration to escape local packing minima

  - Assumptions/Dependencies: approximating complex shapes by bounding spheres/circles (or convex approximations); feasibility projection for mixed constraints; alignment between continuous surrogate and discrete operational constraints

- Experimental design and coverage in parameter spaces (DoE)

  - Sector: R&D, pharmaceuticals, A/B testing

  - Tools/Products: “FlowBoost-DoE” generating evenly spread samples (low discrepancy) for surrogate modeling, Bayesian optimization initial designs, and robust coverage of high-dimensional domains

  - Assumptions/Dependencies: discrepancy and coverage metrics computable for the domain; clear bounds; moderate dimensionality for tractable evaluation

- Academic tool for extremal geometry and combinatorics

  - Sector: academia (mathematics, CS)

  - Tools/Products: open-source “FlowBoost-MathLab” supporting discovery in packings, discrepancy minimization, and extremal point configurations; a reproducible alternative to LLM-driven open-loop systems

  - Assumptions/Dependencies: problem objectives defined; constraints encoded for geometry-aware sampling; single-GPU access

- Materials/colloids pilot studies: packing in confined geometries

  - Sector: materials science, chemistry

  - Tools/Products: “FlowBoost-Materials” for designing particle arrangements in microfluidic channels or porous matrices; feasibility-aware sampling of high-density layouts

  - Assumptions/Dependencies: geometric surrogates reasonably reflect physical interactions; boundary conditions known; lab validation needed for property-structure links

- Education: interactive exploration of extremal configurations

  - Sector: education

  - Tools/Products: classroom demos and notebooks that visualize sphere/circle packings, discrepancy, and triangle extremals, illustrating AI-driven mathematical discovery at low cost

  - Assumptions/Dependencies: simple objectives and constraints prepackaged for teaching; minimal compute requirements

Long-Term Applications

These applications are plausible but require further research, scaling, domain-specific objective design, and integration with production toolchains.

- De novo error-correcting codes and signal constellations from high-dimensional packings

  - Sector: telecom, data storage

  - Tools/Products: “FlowBoost-Codes” exploring continuous geometric design spaces to propose new lattice-like structures or irregular constellations under realistic channel constraints

  - Assumptions/Dependencies: tighter domain objectives (capacity, BER under fading), differentiable or evaluable simulators; mapping from geometric constructions to code families

- Photonic crystals and metamaterial microstructure design (packing-driven)

  - Sector: materials, energy, optics

  - Tools/Products: “FlowBoost-Photonic” for inverse design of microstructures with target bandgaps/porosity via reward-guided search in constrained geometry spaces

  - Assumptions/Dependencies: physics-informed rewards (EM solvers), scalable feasibility projection for complex constraints; co-design loops with simulators

- Battery electrodes and catalyst supports with targeted porosity/distribution

  - Sector: energy, chemical engineering

  - Tools/Products: reward-guided layout/design of pore networks and particle distributions to optimize transport, reaction surface area, and mechanical stability

  - Assumptions/Dependencies: realistic multiphysics objectives (diffusion, reaction); robust surrogates; fabrication constraints encoded

- Large-scale EDA integration for floorplanning/placement with multi-objective costs

  - Sector: semiconductors

  - Tools/Products: “FlowBoost-EDA Pro”: closed-loop generative seeds for placer/routing stages optimizing congestion, wirelength, thermal and timing, under massive constraints

  - Assumptions/Dependencies: high-quality objective surrogates; coupling with industrial tools; hybrid optimization with classical heuristics; scalability engineering

- Autonomous systems: on-board generative planners with geometric feasibility

  - Sector: robotics, aerospace, automotive

  - Tools/Products: embedded “FlowBoost-Planner” modules that sample feasible trajectories/configurations under collision constraints with reward signals from mission objectives

  - Assumptions/Dependencies: real-time guarantees, safety certification, reliable projection under dynamic constraints; hardware acceleration

- Standardization of low-discrepancy generators for QMC in regulated domains

  - Sector: finance, pharmaceuticals (trial design)

  - Tools/Products: validated “FlowBoost-QMC” generators with documented convergence properties for risk, clinical trial simulation, and uncertainty quantification

  - Assumptions/Dependencies: regulator-accepted benchmarks; reproducibility; statistical guarantees for discrepancy improvements

- High-dimensional sampling for AI systems (dataset design and augmentation)

  - Sector: AI/ML

  - Tools/Products: “FlowBoost-Sampler” for generating structured, evenly distributed samples over feature spaces for training robust models, uncertainty quantification, and active learning

  - Assumptions/Dependencies: meaningful geometric parameterizations of feature spaces; reliable coverage metrics; interaction with model training loops

- Lattice-based cryptography parameter exploration (packing/discrepancy proxies)

  - Sector: cybersecurity

  - Tools/Products: exploratory tools for assessing parameter regimes and geometric constructions affecting hardness assumptions, guided by packings/discrepancy analogues

  - Assumptions/Dependencies: mapping between geometric extremals and cryptographic hardness proxies; collaboration with cryptographers; careful validation

- Integrated “automated mathematician” workflows for extremal combinatorics

  - Sector: academia

  - Tools/Products: closed-loop discovery agents using domain-specific generative models (FlowBoost) plus local refinement to propose candidate constructions, conjectures, and counterexamples

  - Assumptions/Dependencies: scalable training for novel domains; robust scoring functions; community adoption and curation of benchmarks

- Facility layout and urban planning under complex geometric constraints

  - Sector: policy, civil engineering

  - Tools/Products: long-horizon layout optimization of parks, facilities, or sensor grids with multi-objective rewards (coverage, fairness, access)

  - Assumptions/Dependencies: socio-technical objectives; integration with GIS; public consultation constraints; transparent optimization for policy acceptance

Notes on Feasibility and Dependencies

- Objective design and evaluation: FlowBoost needs task-specific objectives that are computable (not necessarily differentiable). Success depends on well-crafted rewards and fast evaluators.

- Constraint encoding: Geometry-aware sampling relies on accurate feasibility projections. Complex constraints (non-convex obstacles, multi-physics) require domain-specific projection or repair.

- Scale and compute: The paper demonstrates single-GPU feasibility for moderate sizes. Industrial-scale problems may require solver engineering, batching, or hybrid strategies.

- Diversity vs. exploitation: Reward-guided updates must be regularized (trust-region/consistency) to avoid collapse; exploration operators should expand support beyond current modes.

- Domain adaptation: Mapping discrete/graph problems to continuous configuration spaces is possible but may need permutation-equivariant architectures and specialized penalties.

- Validation and guarantees: Many long-term applications require empirical validation against domain simulators and, in regulated sectors, statistical or safety guarantees.
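
To illustrate what a feasibility projection can look like, here is a minimal sketch for equal disks of radius r in the unit square, assuming NumPy. Each overlap constraint c_ij = 2r - ||x_i - x_j|| is linearized, and all active constraints are corrected together with the minimum-norm (Gauss-Newton-style) step; the walls are handled by simply clipping centers. The clipping shortcut and the function names are assumptions of this sketch, not the paper's Geometry-Aware Sampling, which interleaves such projections with flow integration and adds a proximal relaxation.

```python
import numpy as np


def overlap_residuals(x, r):
    """Residuals c_ij = 2r - ||x_i - x_j|| for overlapping pairs, plus the
    Jacobian of those residuals with respect to the flattened centers.
    Exactly coincident centers are skipped (no well-defined direction)."""
    n, d = x.shape
    residuals, rows = [], []
    for i in range(n):
        for j in range(i + 1, n):
            diff = x[i] - x[j]
            dist = np.linalg.norm(diff)
            if 0.0 < dist < 2.0 * r:
                unit = diff / dist
                row = np.zeros(n * d)
                row[i * d:(i + 1) * d] = -unit  # d c_ij / d x_i
                row[j * d:(j + 1) * d] = unit   # d c_ij / d x_j
                residuals.append(2.0 * r - dist)
                rows.append(row)
    return np.array(residuals), np.array(rows).reshape(len(rows), n * d)


def gauss_newton_project(x, r, iters=20, tol=1e-10):
    """Push equal disks of radius r apart with minimum-norm linearized steps,
    then clip centers so every disk stays inside the unit square."""
    x = np.array(x, dtype=float)
    n, d = x.shape
    for _ in range(iters):
        c, jac = overlap_residuals(x, r)
        if c.size == 0 or c.max() < tol:
            break
        # Minimum-norm solution of the linearized system jac @ dx = -c,
        # i.e. dx = -jac.T @ inv(jac @ jac.T) @ c when jac has full row rank.
        dx, *_ = np.linalg.lstsq(jac, -c, rcond=None)
        x = np.clip(x + dx.reshape(n, d), r, 1.0 - r)
    return x


centers = np.array([[0.45, 0.5], [0.55, 0.5]])
projected = gauss_newton_project(centers, r=0.2)
print(projected)  # the two disks are pushed apart symmetrically along x
```

For two overlapping disks the linearization is exact along the line joining them, so a single step resolves the overlap; with many interacting disks the iteration may need several rounds and, as the note above says, harder constraint sets need domain-specific projection or repair.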

Glossary

- Advantage-Weighted Regression (AWR): An off-policy RL method that updates a policy via weighted maximum likelihood, with weights exponential in the estimated advantage. "reminiscent of Advantage-Weighted Regression (AWR), where policy updates take the form of weighted maximum likelihood with weights exponential in the advantage."

- AlphaEvolve: An LLM-driven evolutionary coding agent that proposes code edits and iteratively improves solutions via selection. "AlphaEvolve \cite{novikov2025alphaevolve,georgiev2025mathematical} scales this paradigm into a more general-purpose evolutionary coding agent."

- AlphaFold: A deep learning system for protein structure prediction that exemplifies domain-specific architectures. "AlphaFold \cite{jumper2021highly}"

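- Amortized sampler: A learned generator that maps noise directly to candidate solutions, so each new sample costs only a model evaluation (or a short ODE integration for flow models) instead of a fresh search or long Markov chain. "learn a generative model (amortized sampler) so that it concentrates mass near "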

- Boltzmann-generator: A flow-based approach that learns a transport map to sample from Gibbs (Boltzmann) distributions defined by an energy function. "Boltzmann-generator approaches in molecular and materials modeling \cite{noe2019boltzmann}"

- BYOL: A self-supervised learning method using a target (EMA) network to avoid collapse without negatives. "BYOL \cite{grill2020bootstrap}"

- Circle packing (sum of radii): A geometric optimization problem that places non-overlapping circles in a square to maximize the sum of their radii. "Circle packing (sum of radii): New best constructions for 26 and 32 circles, surpassing AlphaEvolve."

- Closed-loop Reward-Guided optimization: A training regime where objective feedback directly updates the generative model, transforming search into a closed-loop system. "Closed-loop Reward-Guided optimization."

- Conditional Flow Matching (CFM): A training framework that learns a time-dependent vector field to transport a simple prior to a data distribution via conditional velocity regression. "We adopt the conditional flow matching (CFM) \cite{lipman2022flow} framework"

- Constraint manifold: The set of configurations satisfying hard constraints, often treated as a manifold for projection. "Project onto the constraint manifold via linearised constraint enforcement."

- Consistency regularization: A technique that penalizes deviation from a reference (teacher) model to prevent collapse and maintain diversity. "We prevent collapse through consistency regularization, penalising deviation of the student's velocity field from the frozen teacher's predictions."

- Contact graph: A graph capturing pairs of objects in contact or overlap to guide geometric corrections. "$\Delta_{\mathrm{contact}}$ is computed from the contact graph (directions that relieve overlaps)"

- DINO: A self-supervised method using knowledge distillation with an EMA teacher for stable targets. "DINO \cite{caron2021emerging}"

- Energy-based (Boltzmann/Gibbs) target: A distribution over configurations proportional to $\exp(-E(x))$ for an energy $E$, or equivalently $\exp(R(x))$ for a reward $R$, often scaled by a temperature. "an energy-based (Boltzmann/Gibbs) target"

- Equivariant diffusion models: Diffusion models that respect symmetry groups (e.g., rotations, permutations), useful in molecular generation. "equivariant diffusion models for molecular generation \cite{watson2023novo}"

- Exponential Moving Average (EMA): A smoothed average of parameters used to form a stable teacher network. "the exponential moving average (EMA) teacher in DINO \cite{caron2021emerging} and BYOL \cite{grill2020bootstrap}"

- Flow Matching: A class of generative models that learn deterministic vector fields to transport distributions. "Flow Matching \cite{lipman2022flow} models"

- FlowBoost: The proposed closed-loop generative framework combining CFM, reward-guided optimization, and geometry-aware sampling. "We introduce FlowBoost, a closed-loop generative framework that learns to discover rare and extremal combinatorial structures"

- Frobenius norm: A matrix norm equal to the square root of the sum of squared entries. "Here $\|\cdot\|_{\mathrm{F}}$ is the Frobenius norm on "

- Gauss--Newton Projection: A linearized least-squares projection used to enforce constraints by solving normal equations. "Gauss--Newton Projection: Project onto the constraint manifold via linearised constraint enforcement."

- Generative Adversarial networks: A generative modeling framework with a generator and discriminator trained adversarially. "Generative Adversarial networks \cite{goodfellow2020generative}"

- Geometry-Aware Sampling (GAS): An inference procedure interleaving ODE flow integration with constraint projections to maintain feasibility. "We introduce a Geometry-Aware Sampling (GAS) algorithm that interleaves flow integration with constraint projection"

- Heilbronn triangle problem: An extremal geometry problem: arrange points in a region (such as the unit square) to maximize the smallest area of a triangle formed by any three of them. "the Heilbronn triangle problem"

- Jacobian: The matrix of first derivatives of a vector-valued function, used here for constraint linearization. "let denote the Jacobian of the constraint residuals ."

- MAP-Elites: A quality-diversity evolutionary algorithm that maintains elites across behavior niches. "LLM-guided mutation, MAP-Elites"

- Markov Chain Monte Carlo (MCMC): A family of sampling methods that generate samples from a target distribution via a Markov chain. "Markov Chain Monte Carlo (MCMC) methods can sample from given only the unnormalized density"

- Molecular dynamics: A simulation technique integrating equations of motion; used by analogy for constraint satisfaction and refinement. "This projection-relaxation scheme is conceptually similar to constraint satisfaction in molecular dynamics \cite{nam2024flow}"

- Normalizing Flows: Invertible generative models enabling exact likelihoods and efficient sampling via change of variables. "Normalizing Flows \cite{rezende2015variational}"

- ODE integration: Numerical solution of ordinary differential equations to follow a learned flow field. "Standard ODE integration of the learned flow~\eqref{eq:flow_ode} can drift off the feasible set"

- Optimal transport: A theory of transporting probability mass optimally; used for defining interpolation paths and target velocities. "regression onto the conditional velocity field of an optimal transport interpolation."

- Out-of-Distribution (OOD): Samples or regions not represented in the training distribution. "Out-of-Distribution (OOD)"

- Permutation Equivariance: A property where model outputs transform consistently under permutations of inputs. "Permutation Equivariance, Geometric Constraints"

- Proximal Policy Optimisation (PPO): An RL algorithm employing a clipped objective as a trust-region-like constraint. "proximal policy Optimisation (PPO) \cite{schulman2017proximal}"

- Proximal Relaxation: A regularized projection step balancing constraint satisfaction with staying close to the flow trajectory. "Proximal Relaxation: To prevent projection from deviating excessively from the learned flow manifold"

- Push-forward: The distribution resulting from mapping a random variable through a function. "the push-forward of under this flow approximates $\mu_{\mathrm{data}}$ at ."

- Reinforcement Learning: A learning paradigm optimizing actions through reward signals, used here to guide generation. "flow-based generative models with Reinforcement Learning to extremal structure discovery in pure mathematics."

- RLHF: Reinforcement Learning from Human Feedback, used to align models with human preferences. "RLHF \cite{christiano2017deep}"

- Simulation Based Optimization (SBO): Using simulators as optimization oracles to learn samplers that concentrate on high-reward solutions. "Simulation Based Optimization (SBO) \cite{amaran2016simulation}"

- Simulation-Based Inference (SBI): Learning posteriors or likelihood surrogates from simulators rather than optimizing objectives. "Simulation-Based Inference (SBI) \cite{cranmer2020frontier,deistler2025simulation}"

- Sphere packing in hypercubes: Placing equal spheres inside a hypercube to maximize radius/density without overlaps. "sphere packing in hypercubes"

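- Star discrepancy minimization: Minimizing the star discrepancy of a point set, i.e. the largest gap between the fraction of points falling in a box $[0, y)$ anchored at the origin and that box's volume, so that the points are spread as uniformly as possible. "and star discrepancy minimization."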

- Stochastic Relaxation with Perturbations (SRP): A local search heuristic combining relaxation steps with random perturbations. "stochastic relaxation with perturbations (SRP)"

- Teacher-student framework: A setup where a student model is trained with guidance or targets from a fixed teacher model. "RG-CFM adopts a teacher-student framework"

- Trust region: A constraint on update magnitude to ensure stable optimization steps. "the trust region in TRPO \cite{schulman2015trust}"

- TRPO: Trust Region Policy Optimization, an RL algorithm enforcing a trust region via KL constraints. "TRPO \cite{schulman2015trust}"

- Variational Auto Encoders (VAEs): Latent-variable generative models trained via variational inference. "Variational Auto Encoders (VAEs) \cite{kingma2013auto}"
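
Several entries above (AWR, consistency regularization, teacher-student framework) describe how reward feedback can reshape a flow model without collapsing it. The sketch below combines them into one hypothetical loss, assuming NumPy arrays of velocities of shape (batch, objects, dim) and one reward per sample: samples are weighted by exp(advantage / beta) as in AWR, and a penalty keeps the student's velocities near a frozen teacher's. It illustrates the idea only; it is not the paper's exact RG-CFM objective, and `beta`, `lam`, the batch-mean baseline, and the weight normalization are illustrative choices.

```python
import numpy as np


def advantage_weights(rewards, beta=0.1):
    """AWR-style weights proportional to exp(advantage / beta), where the
    advantage is the reward minus the batch mean. The max is subtracted
    before exp for numerical stability, and weights are normalized to sum to 1."""
    adv = np.asarray(rewards, dtype=float)
    adv = adv - adv.mean()
    w = np.exp((adv - adv.max()) / beta)
    return w / w.sum()


def reward_guided_loss(v_student, u_target, v_teacher, rewards, beta=0.1, lam=1.0):
    """Hypothetical reward-weighted flow-matching loss with a consistency term.

    The first term regresses the student onto CFM targets, weighting
    high-reward samples more; the second penalizes drift from a frozen teacher.
    """
    w = advantage_weights(rewards, beta)
    per_sample = np.mean((v_student - u_target) ** 2, axis=(1, 2))
    consistency = np.mean((v_student - v_teacher) ** 2)
    return float(np.sum(w * per_sample) + lam * consistency)


rng = np.random.default_rng(0)
u = rng.standard_normal((4, 5, 2))             # CFM targets: 4 samples, 5 objects, 2D
teacher = u + 0.1 * rng.standard_normal(u.shape)
student = teacher.copy()
rewards = np.array([0.1, 0.4, 0.2, 0.9])       # hypothetical objective values
print(advantage_weights(rewards))              # the last sample dominates
print(reward_guided_loss(student, u, teacher, rewards))
```

Lowering `beta` concentrates the update on the very best samples, while raising `lam` keeps the student closer to the teacher; balancing the two is the diversity-versus-exploitation trade-off described in the feasibility notes.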
