- The paper introduces EFSI-DETR, a transformer-based framework that integrates adaptive frequency-spatial fusion to enhance small object detection in UAV imagery.

- It utilizes innovative modules—DyFusNet, ESFC, and FFR—to achieve substantial AP gains and maintain real-time performance on benchmarks like VisDrone and CODrone.

- Experimental results demonstrate up to +5.0% AP improvement over YOLO variants, highlighting efficient deployment with minimal computational overhead.

EFSI-DETR: Efficient Frequency-Semantic Integration for Real-Time Small Object Detection in UAV Imagery

Introduction

Small object detection remains a persistent obstacle in UAV imagery applications, predominantly due to the significant scale variation and the limited pixel coverage of objects in aerial views. Standard convolutional approaches and popular detector architectures such as the YOLO series exhibit notable performance deterioration in this regime, primarily for two reasons: their reliance on static multi-scale fusion strategies and their underutilization of frequency-domain information. The paper "EFSI-DETR: Efficient Frequency-Semantic Integration for Real-Time Small Object Detection in UAV Imagery" systematically addresses these core shortcomings by introducing a transformer-based detection framework that incorporates adaptive frequency-spatial fusion and efficient semantic extraction.

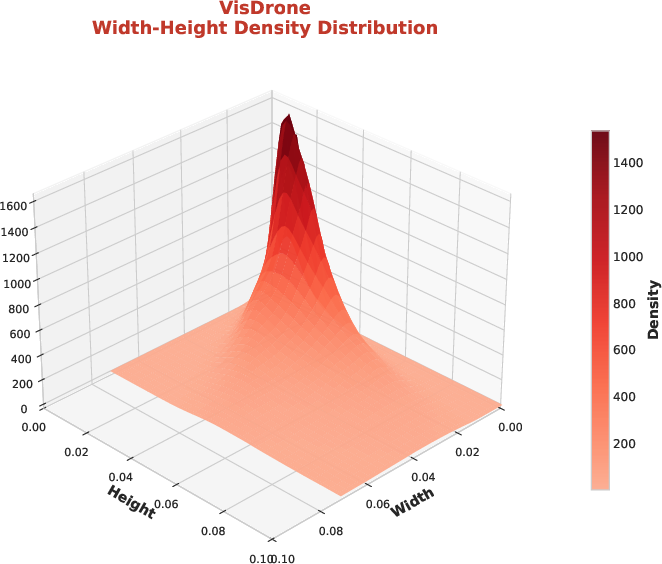

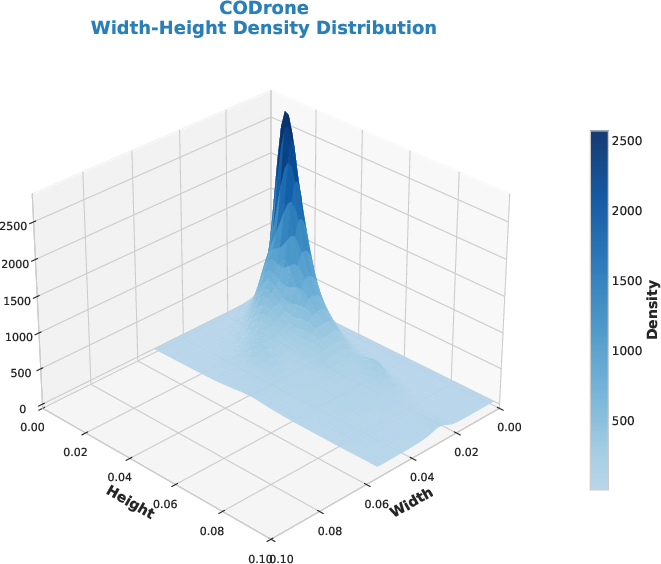

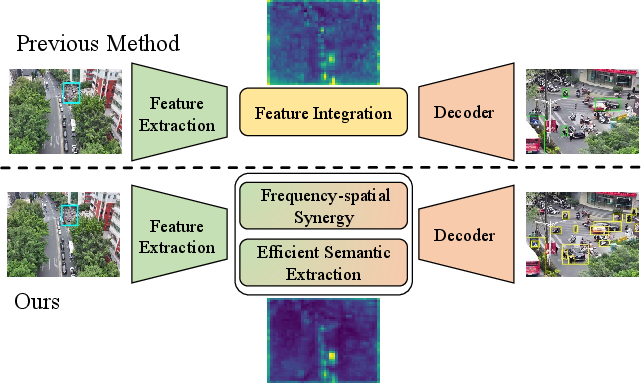

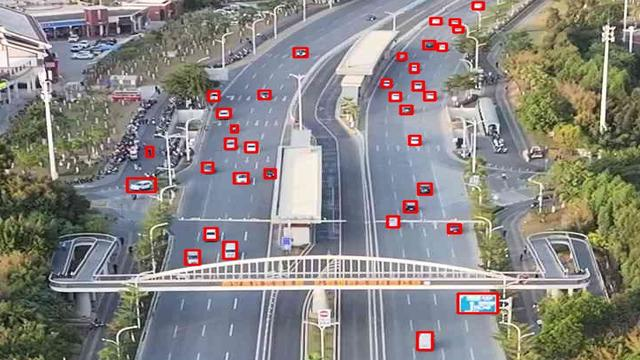

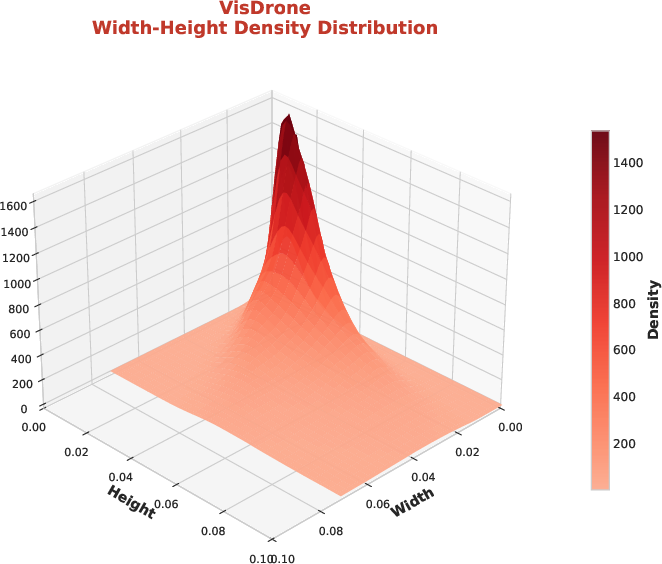

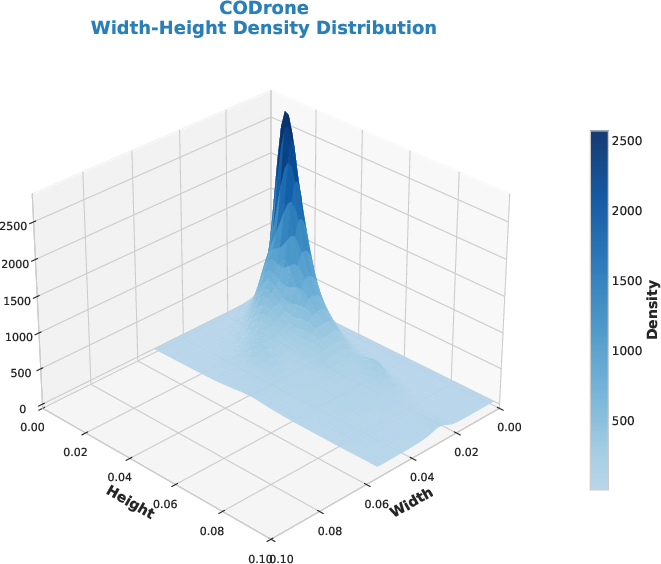

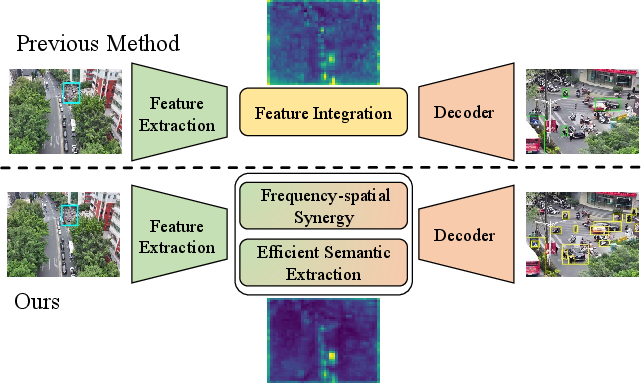

Figure 1: (a) Density plot showing the dominance of small objects in VisDrone and CODrone; (b) Schematic indicating EFSI-DETR's dynamic feature integration compared to static prior approaches.

Methodology

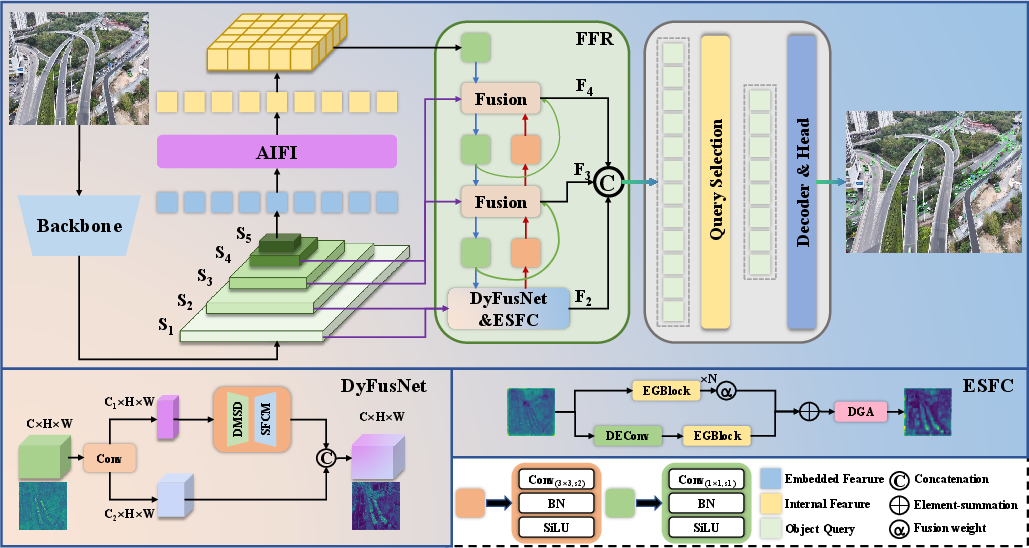

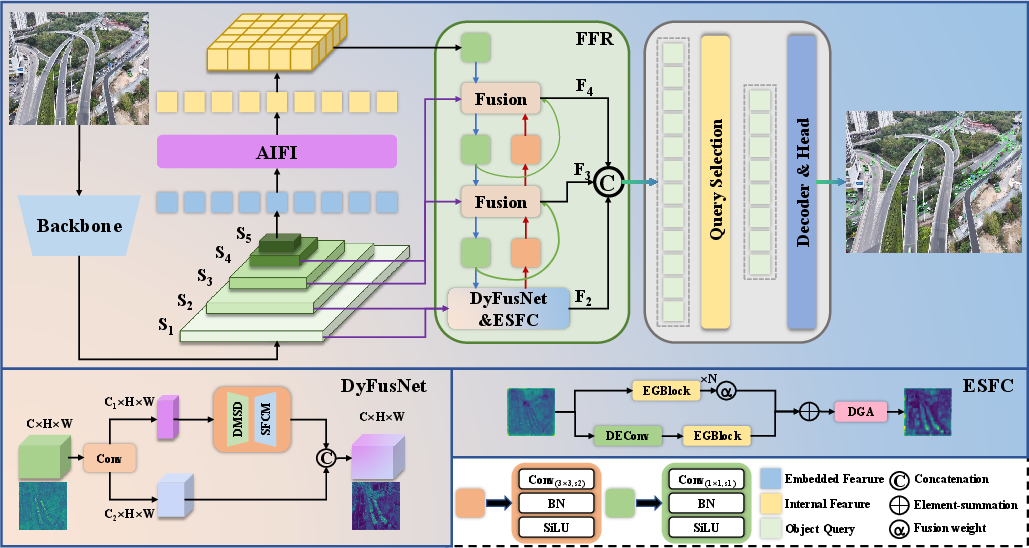

The proposed EFSI-DETR framework comprises three key modular innovations: the Dynamic Frequency-Spatial Unified Synergy Network (DyFusNet), the Efficient Semantic Feature Concentrator (ESFC), and the Fine-grained Feature Retention (FFR) strategy. The design intention is to enhance discriminative power for small object representations while retaining real-time throughput and deployment efficacy.

Figure 2: Overview of the EFSI-DETR architecture highlighting DyFusNet, ESFC, and FFR integration within the detection pipeline.

Dynamic Frequency-Spatial Unified Synergy Network (DyFusNet)

DyFusNet introduces a content-adaptive, learnable decomposition of input features into low-, mid-, and high-frequency analogues without explicit transforms (e.g., FFT). Instead, the module synthesizes frequency-selective responses using average pooling (low), identity (mid), and depthwise convolution (high), and combines them through softmax-weighted gating derived from the input content. This design avoids the memory and kernel-fusion bottlenecks common in transform-based networks and is therefore hardware-friendly to deploy. A Spatial-Frequency Cooperative Modulation stage then integrates multi-scale spatial context with channel attention, yielding contextually gated, adaptively fused feature maps well suited to dense prediction tasks.
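The three-branch decomposition described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation. The function names, the 3x3 kernel size, the edge padding, and the gate (a single linear layer on globally average-pooled features that produces one weight per branch) are all assumptions made for illustration; the summary does not specify the granularity of the gating, and the Spatial-Frequency Cooperative Modulation stage is omitted.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D vector."""
    e = np.exp(z - z.max())
    return e / e.sum()

def avg_pool3x3(x):
    """3x3 average pooling, stride 1, edge padding; x has shape (C, H, W)."""
    _, h, w = x.shape
    p = np.pad(x, ((0, 0), (1, 1), (1, 1)), mode="edge")
    out = np.zeros_like(x)
    for dy in range(3):
        for dx in range(3):
            out += p[:, dy:dy + h, dx:dx + w]
    return out / 9.0

def depthwise_conv3x3(x, kernels):
    """Per-channel 3x3 cross-correlation; kernels has shape (C, 3, 3)."""
    _, h, w = x.shape
    p = np.pad(x, ((0, 0), (1, 1), (1, 1)), mode="edge")
    out = np.zeros_like(x)
    for dy in range(3):
        for dx in range(3):
            out += kernels[:, dy, dx][:, None, None] * p[:, dy:dy + h, dx:dx + w]
    return out

def frequency_gated_fusion(x, dw_kernels, gate_w, gate_b):
    """Fuse low/mid/high branches with softmax gates computed from the input.

    gate_w (3, C) and gate_b (3,) form a hypothetical linear gate.
    """
    low = avg_pool3x3(x)                      # smoothing acts as a low-pass proxy
    mid = x                                   # identity branch
    high = depthwise_conv3x3(x, dw_kernels)   # learnable per-channel filter
    descriptor = x.mean(axis=(1, 2))          # global content summary, shape (C,)
    weights = softmax(gate_w @ descriptor + gate_b)
    fused = weights[0] * low + weights[1] * mid + weights[2] * high
    return fused, weights

# Usage: random float features and Laplacian-like depthwise kernels.
rng = np.random.default_rng(0)
features = rng.normal(size=(4, 8, 8))
laplacian = np.array([[0, -1, 0], [-1, 4, -1], [0, -1, 0]], dtype=float)
fused, weights = frequency_gated_fusion(
    features, np.tile(laplacian, (4, 1, 1)), rng.normal(size=(3, 4)), np.zeros(3)
)
```

Because every branch is a pooling, identity, or convolution operation, the whole block stays in the spatial domain, which is the property the summary credits for deployment friendliness.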

Efficient Semantic Feature Concentrator (ESFC)

The ESFC module uses a dual-branch structure consisting of dynamic expert convolution (multiple expert kernels with input-conditioned attention weighting) and parameter-efficient ghost blocks. The dynamic expert branch increases representational diversity, while the ghost branch approximates the outputs of expensive convolutions with cheap depthwise operations, enabling robust semantic extraction at low inference cost. A subsequent dual-domain guidance aggregation stage applies content-adaptive channel and spatial attention, enabling the finer-grained discrimination that small objects require.
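The two branches can be sketched in NumPy as follows. This is a simplified illustration under stated assumptions, not the paper's code: all convolutions are reduced to 1x1 for brevity, the router is a hypothetical linear layer on globally pooled features, the ghost branch's cheap operation is a per-channel scaling (a 1x1 depthwise convolution), and the dual-domain guidance aggregation stage is left out.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D vector."""
    e = np.exp(z - z.max())
    return e / e.sum()

def dynamic_expert_conv1x1(x, experts, router_w):
    """Mix K expert kernels with input-conditioned attention, then convolve.

    x: (C_in, H, W); experts: (K, C_out, C_in); router_w: (K, C_in), hypothetical.
    """
    descriptor = x.mean(axis=(1, 2))                # (C_in,)
    attn = softmax(router_w @ descriptor)           # one weight per expert
    kernel = np.tensordot(attn, experts, axes=1)    # weighted sum -> (C_out, C_in)
    out = np.einsum("oc,chw->ohw", kernel, x)       # 1x1 convolution
    return out, attn

def ghost_block(x, primary_w, cheap_scale):
    """Compute half the channels with a full 1x1 conv, derive the rest cheaply.

    primary_w: (C_half, C_in); cheap_scale: (C_half,) per-channel depthwise weights.
    """
    primary = np.einsum("oc,chw->ohw", primary_w, x)
    ghost = cheap_scale[:, None, None] * primary    # cheap per-channel operation
    return np.concatenate([primary, ghost], axis=0)

# Usage with random weights.
rng = np.random.default_rng(0)
x = rng.normal(size=(6, 5, 5))
experts = rng.normal(size=(3, 8, 6))
expert_out, attn = dynamic_expert_conv1x1(x, experts, rng.normal(size=(3, 6)))
ghost_out = ghost_block(x, rng.normal(size=(4, 6)), np.ones(4))
```

Mixing the kernels before convolving means only one convolution runs per input regardless of the number of experts, which is why this style of dynamic convolution adds capacity at modest inference cost.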

Fine-grained Feature Retention (FFR)

Because small object detection depends critically on high-resolution, low-level features, the FFR mechanism supplements the semantic backbone by routing additional shallow feature maps into the fusion process while eliminating semantically redundant deeper layers. This hybrid path improves localization precision for small-scale objects without a marked increase in latency or parameter count.
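A small sketch of the level-selection idea follows. The summary does not state which pyramid levels are added or removed, so the strides used here (a backbone with strides 4, 8, 16, 32 and a default fusion set of 8, 16, 32) and both helper functions are purely hypothetical.

```python
def retain_levels(backbone_strides, fused_strides, n_extra_shallow=1, n_drop_deep=1):
    """Return the strides fed to fusion after adding shallow and dropping deep levels."""
    ordered = sorted(backbone_strides)
    current = sorted(fused_strides)
    # Candidate levels that are shallower (smaller stride) than anything fused today.
    shallower = [s for s in ordered if s < current[0]]
    extra = shallower[len(shallower) - n_extra_shallow:] if n_extra_shallow else []
    kept = current[:len(current) - n_drop_deep] if n_drop_deep else current
    return extra + kept

def feature_map_sizes(input_size, strides):
    """Spatial size of each feature map for a square input."""
    return {s: (input_size // s, input_size // s) for s in strides}

# Usage: assumed strides, with the 640-pixel input size quoted in the results.
levels = retain_levels([4, 8, 16, 32], [8, 16, 32])
sizes = feature_map_sizes(640, levels)
```

Under these assumed strides, the retained set trades the coarsest map for one with four times the linear resolution of the previous finest map, which is the kind of shift toward fine detail the FFR description implies.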

Experimental Results

Evaluation on two UAV-specific benchmarks, VisDrone and CODrone, shows that EFSI-DETR provides substantial gains in precision, especially for small objects, without sacrificing real-time performance. On VisDrone (input size 640), EFSI-DETR delivers 33.1% AP and 24.8% APs (AP on small objects), outperforming recent YOLO and DETR variants (e.g., +5.0% AP over YOLOv12-X and +4.9% AP over DEIM-RT-DETRv2-R50). The model attains 188 FPS on a single RTX 4090, indicating strong deployment potential. On CODrone, the improvement is consistent: EFSI-DETR reaches 20.2% AP, +3.0% over the strongest YOLO competitor.
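The reported figures can be put in more operational terms with a little arithmetic. The sketch below assumes the quoted gains are absolute AP points rather than relative percentages, which the summary does not state explicitly; the helper names are illustrative, and the implied baselines are derived values, not numbers quoted from the paper.

```python
def latency_ms(fps):
    """Average per-frame latency in milliseconds for a given throughput."""
    return 1000.0 / fps

def implied_baseline(ap, gain):
    """Baseline AP implied by a reported AP and an absolute-point gain."""
    return round(ap - gain, 1)

frame_latency = round(latency_ms(188), 2)        # per-frame budget at 188 FPS
visdrone_yolo = implied_baseline(33.1, 5.0)      # YOLOv12-X on VisDrone (derived)
visdrone_deim = implied_baseline(33.1, 4.9)      # DEIM-RT-DETRv2-R50 on VisDrone (derived)
codrone_yolo = implied_baseline(20.2, 3.0)       # strongest YOLO on CODrone (derived)
```

At 188 FPS the model spends a little over 5 ms per frame on the stated GPU, well inside the roughly 33 ms budget of a 30 FPS video stream.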

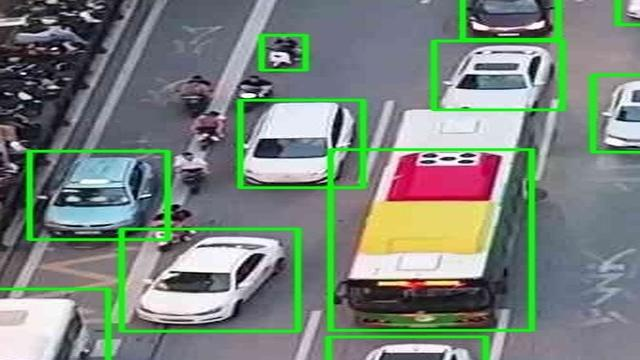

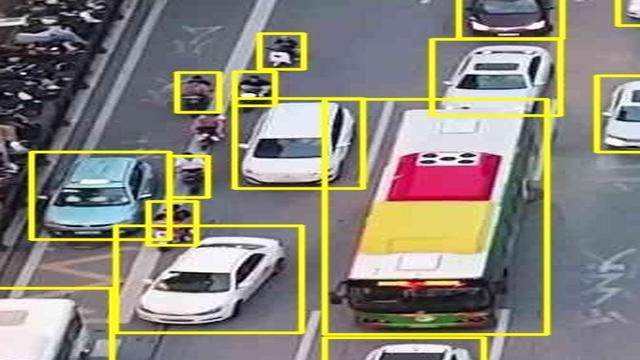

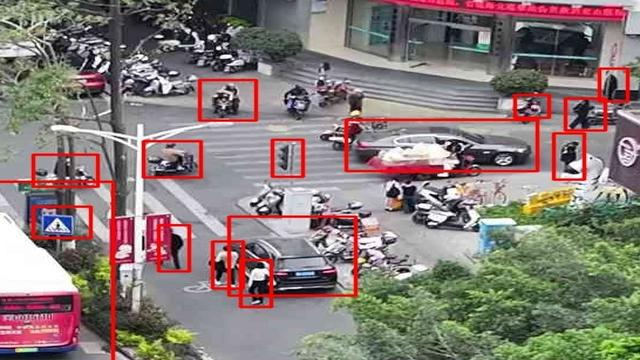

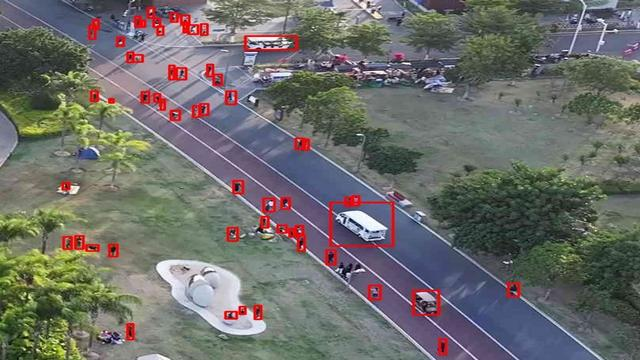

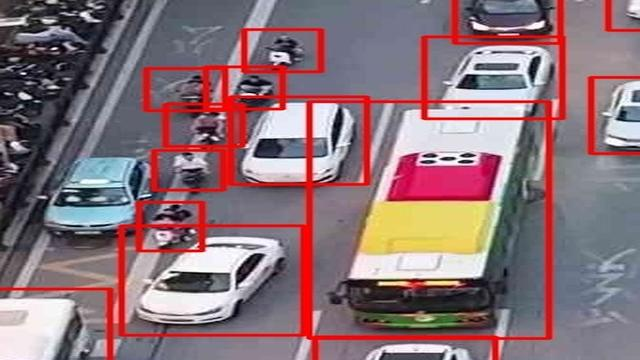

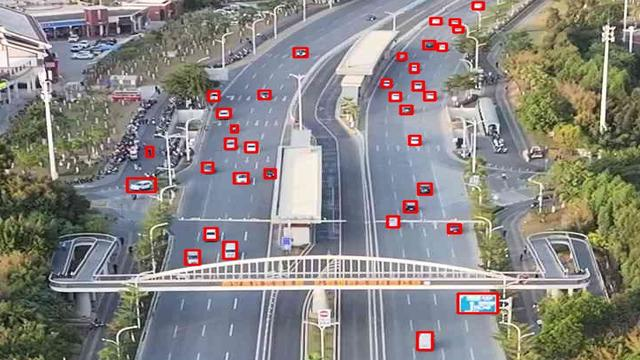

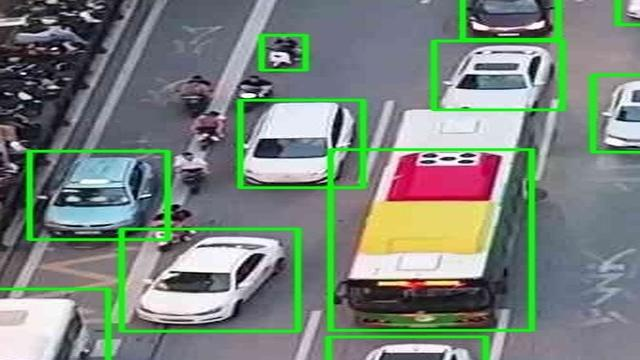

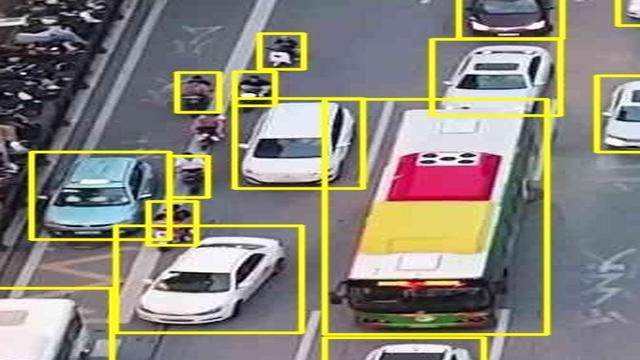

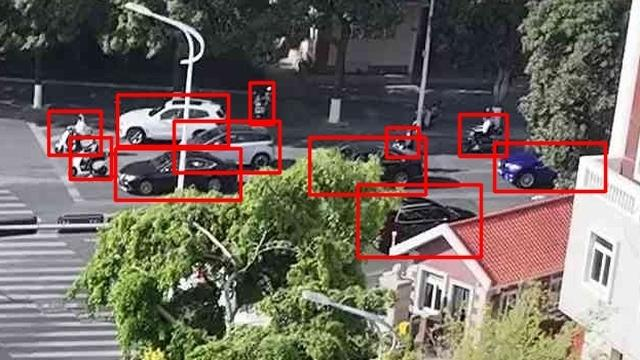

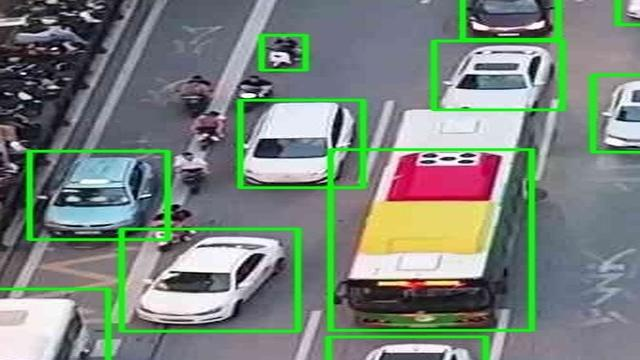

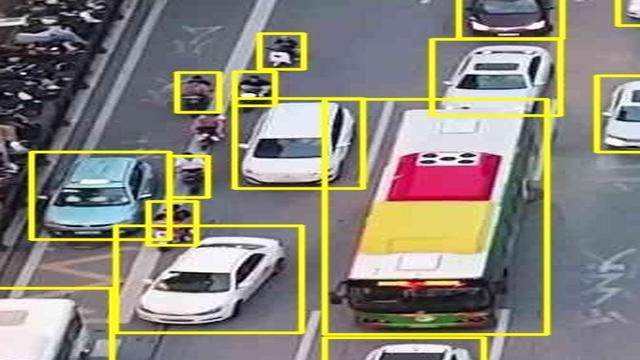

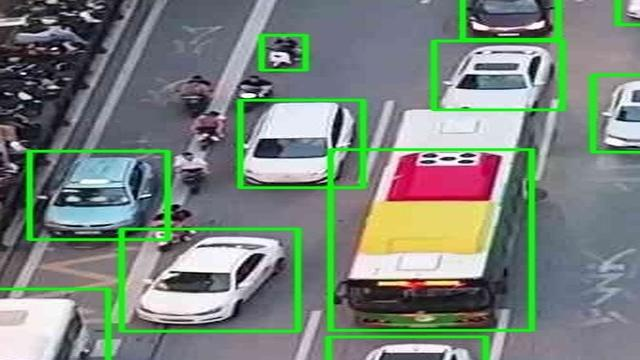

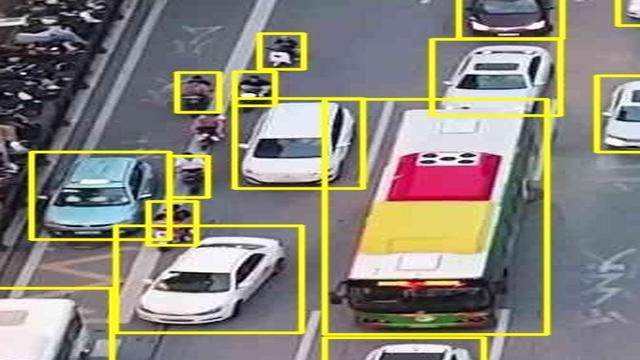

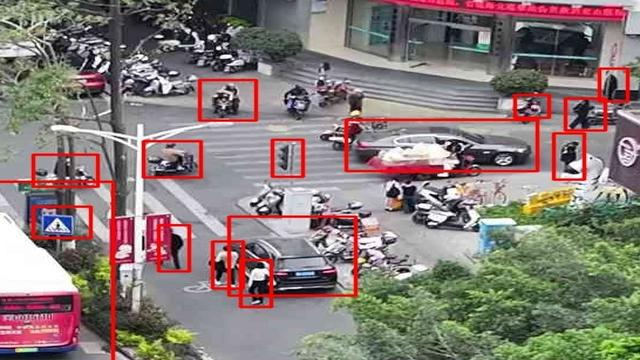

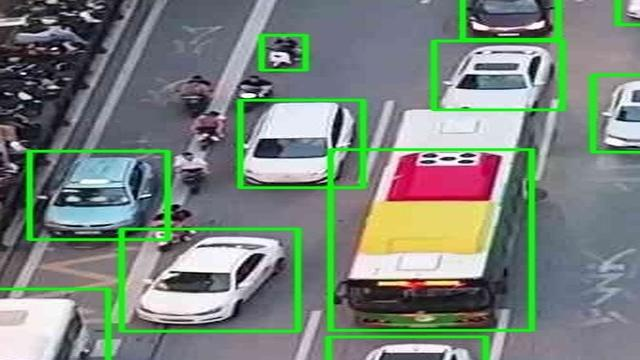

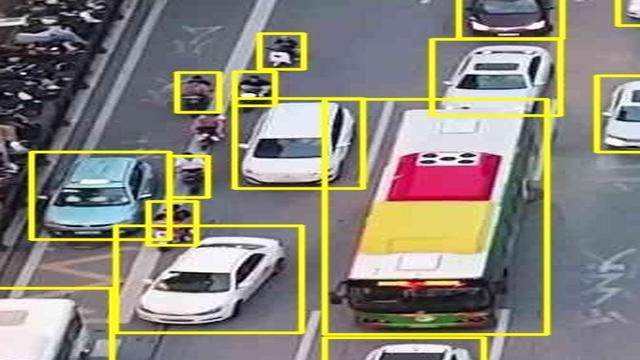

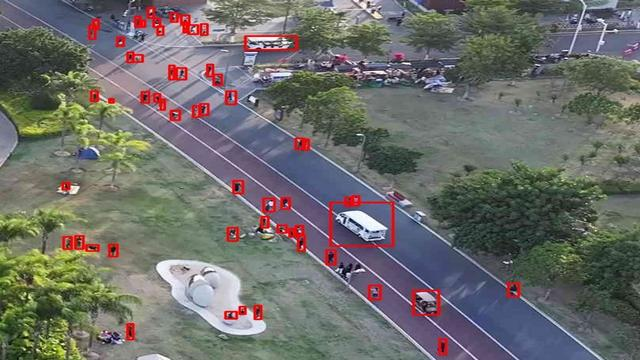

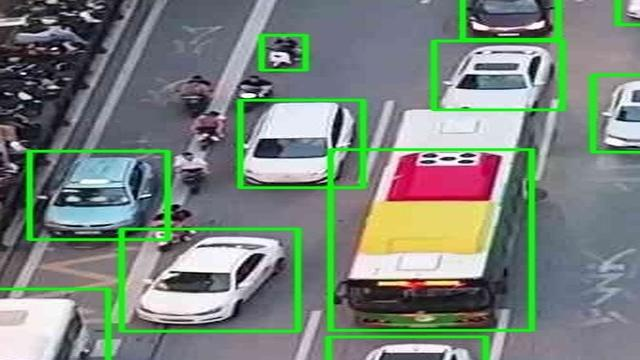

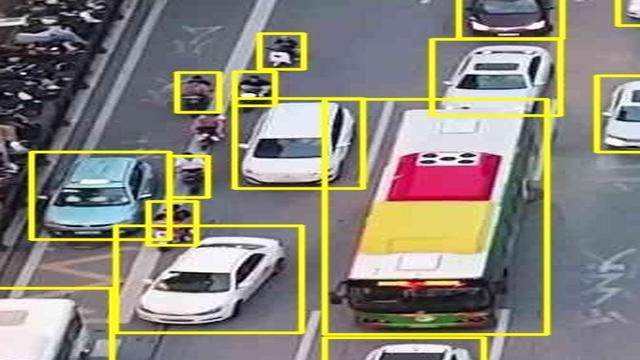

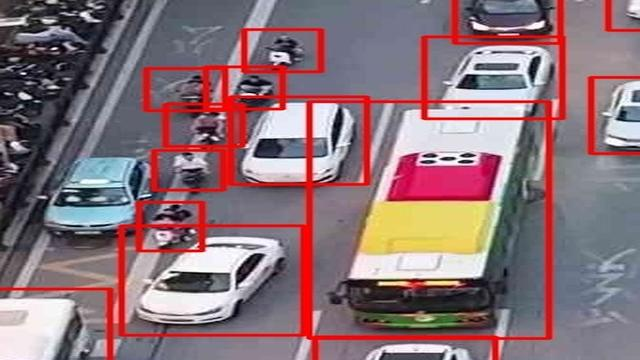

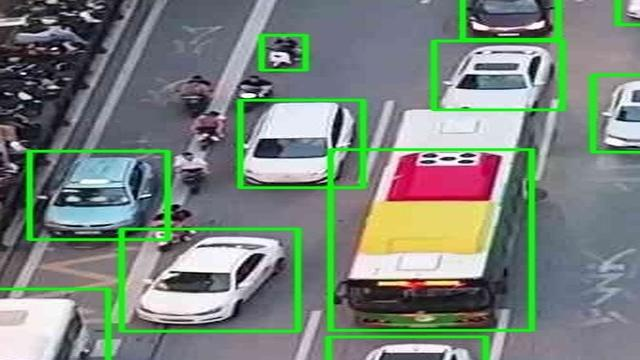

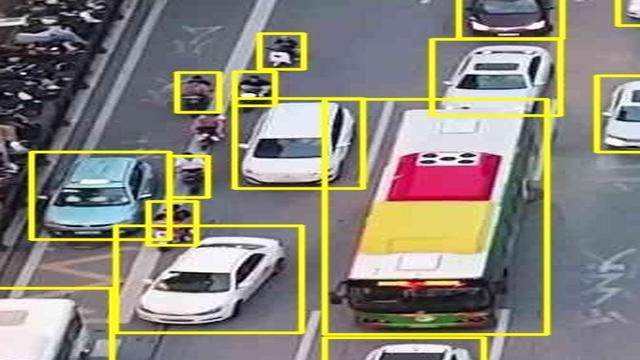

Figure 3: Qualitative visual comparison of RT-DETR and EFSI-DETR: EFSI-DETR exhibits greater recall and localization accuracy for small and overlapping targets.

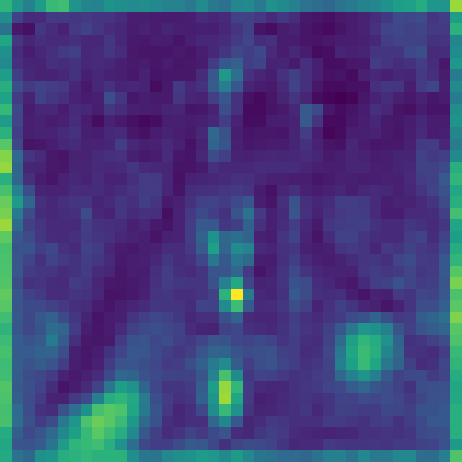

Ablation studies quantify the effect of each major component: FFR provides substantial AP boosts, DyFusNet yields consistent gains in all precision metrics, and ESFC improves both semantic abstraction and parameter efficiency. Architectural analysis reveals that placement of ESFC in deeper stages optimizes overall performance while retaining high small-object recall.

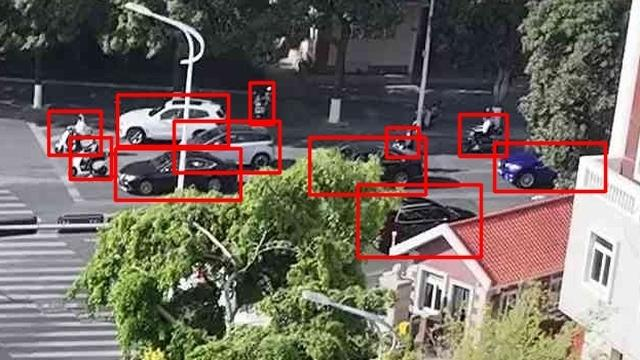

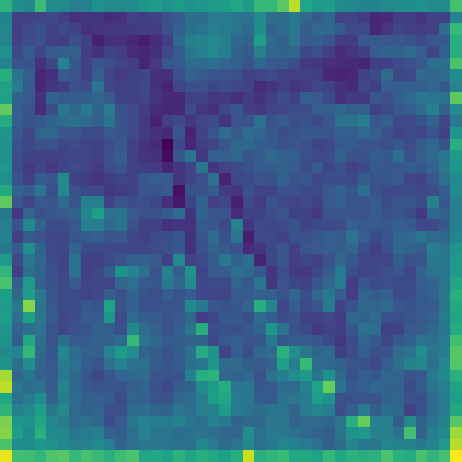

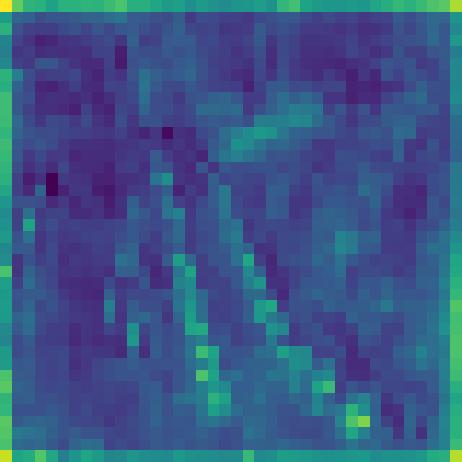

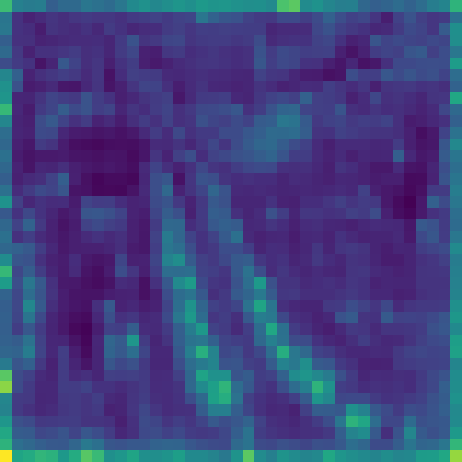

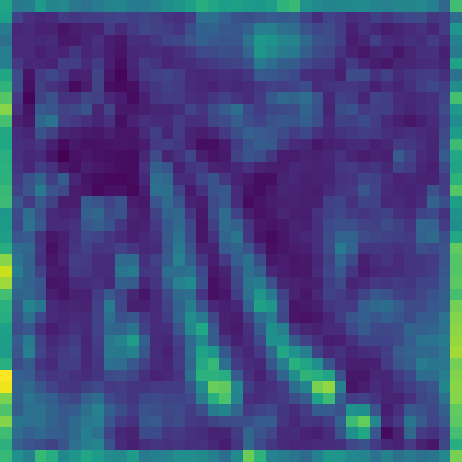

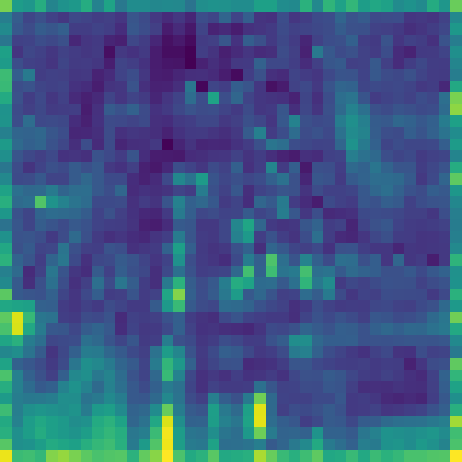

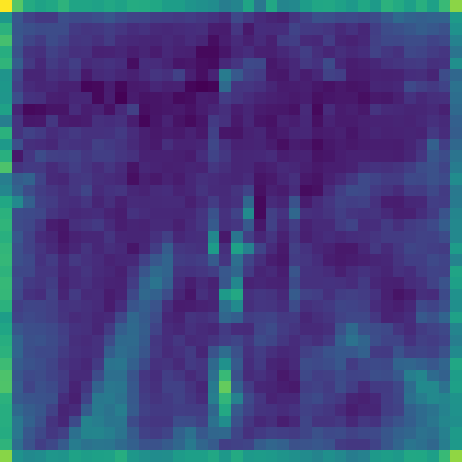

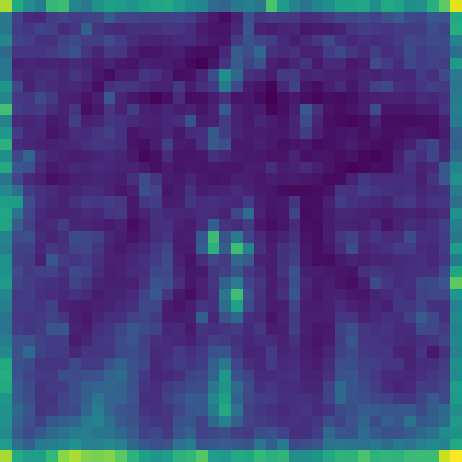

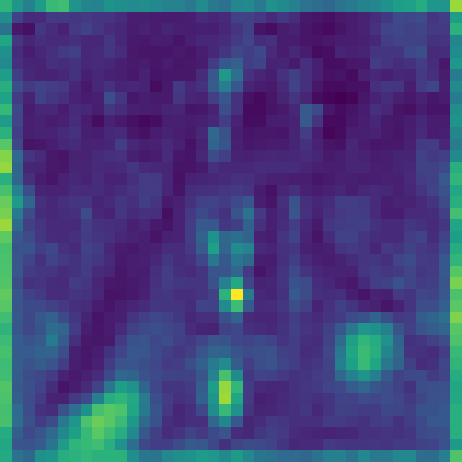

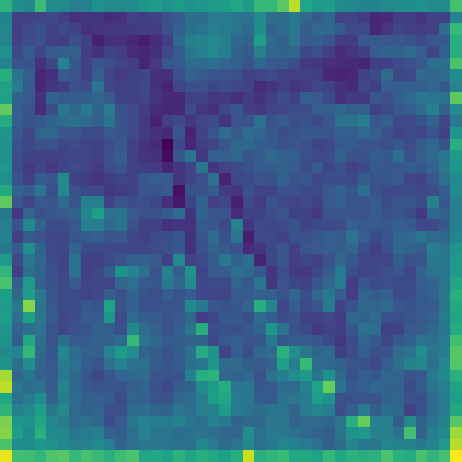

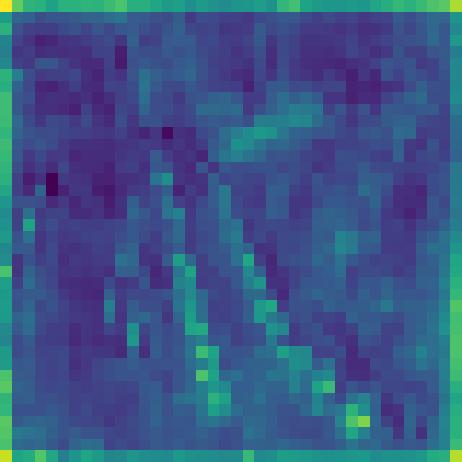

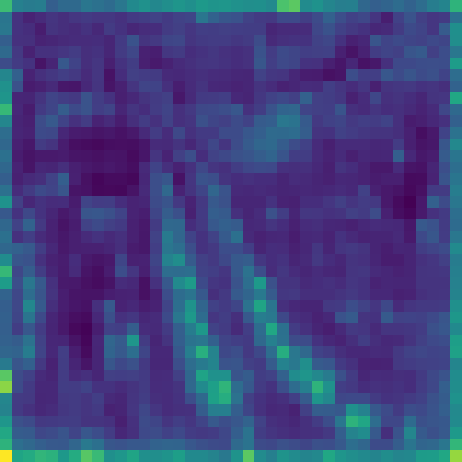

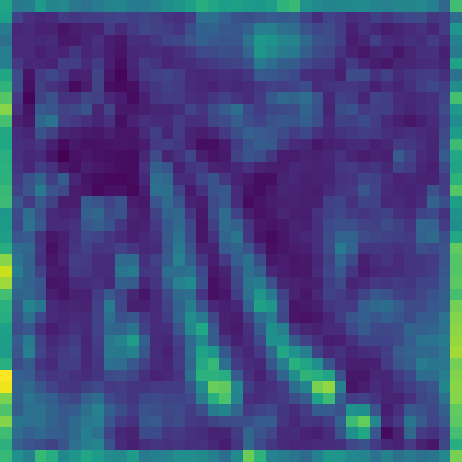

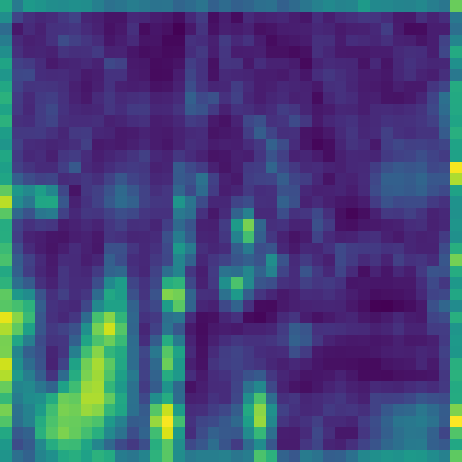

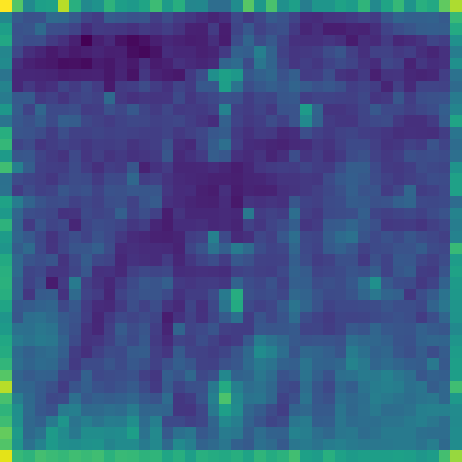

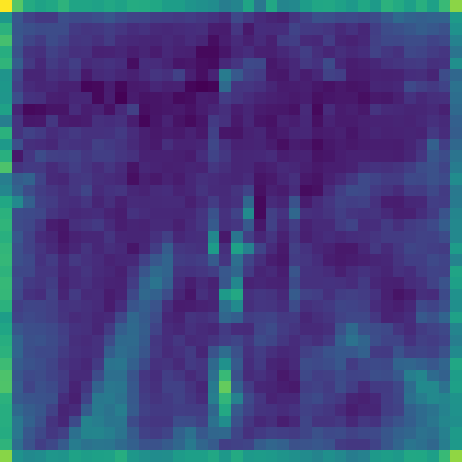

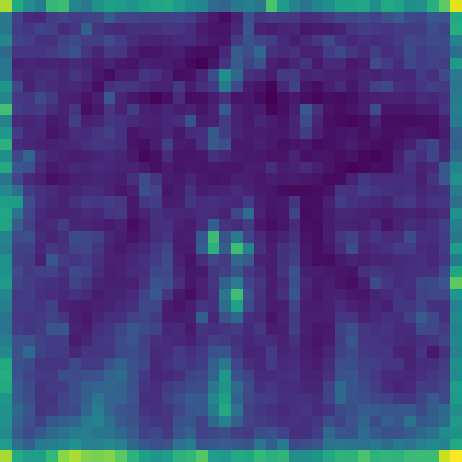

Figure 4: Visualization of intermediate feature representations, which improve progressively as each core component (FFR, DyFusNet, ESFC) is added, evidencing cumulative gains in object awareness and discrimination.

Implications and Future Directions

This work demonstrates the feasibility and utility of incorporating frequency-inspired, content-adaptive fusion within the DETR paradigm for UAV-specific small object detection. The deployment-oriented design (no explicit transforms, operator fusion compatibility) positions EFSI-DETR as an attractive candidate for edge and real-time platforms typical in UAV applications. The authors observe a slight trade-off in performance for large objects, attributed to the reduced emphasis on deep semantic maps; future research can explore more adaptive, context-sensitive multi-scale allocation strategies to balance performance across object sizes.

Theoretically, the results suggest that frequency-domain information need not be explicitly computed: learnable spatial-domain proxies can be sufficient and are deployment-friendly. Practically, the architecture's modularity and low computational footprint make it a good candidate for integration into high-throughput vision pipelines.

Conclusion

EFSI-DETR offers a robust, end-to-end solution for small object detection in UAV imagery by synergistically fusing dynamic, frequency-inspired components, efficient semantic extractors, and enhanced spatial retention strategies. It achieves clear state-of-the-art results on prominent UAV benchmarks, especially for small-scale objects, while maintaining real-time efficiency and low complexity. The approach's emphasis on inference-optimized design and adaptive feature fusion has significant implications for both methodological advances and practical deployment in aerial vision systems.

(2601.18597)