- The paper demonstrates that integrating LLMs with static analysis reduces false positives by 94-98% while maintaining high recall.

- The methodology analyzed 433 bug alarms raised by Tencent's static analysis tools, covering three bug types: null pointer dereference (NPD), out-of-bounds access (OOB), and divide-by-zero (DBZ).

- LLM-based techniques proved cost-effective, cutting per-alarm inspection time from 10-20 minutes of manual review to roughly 2-110 seconds, at $0.0011-$0.12 per alarm.

Reducing False Positives in Static Bug Detection with LLMs: An Empirical Study in Industry

Introduction

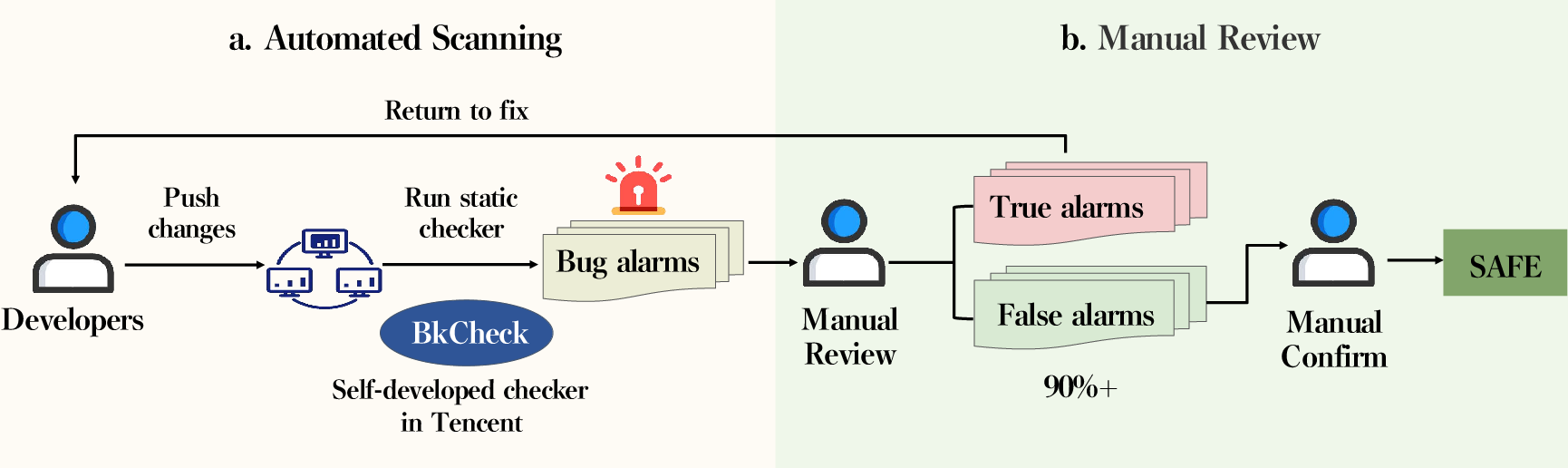

Static analysis tools (SATs) are widely used in software development to improve quality by finding bugs without executing the software. However, their practical effectiveness is significantly hindered by high false positive rates, especially in large-scale industrial applications. This paper presents an empirical study of how effectively large language models (LLMs) can reduce false positive alarms in an operational industrial setting at Tencent, using a dataset drawn from real-world code bases.

Methodology

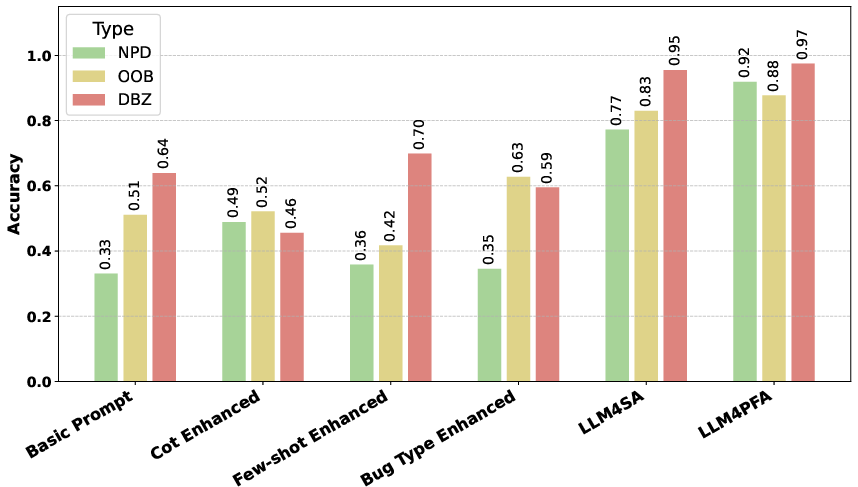

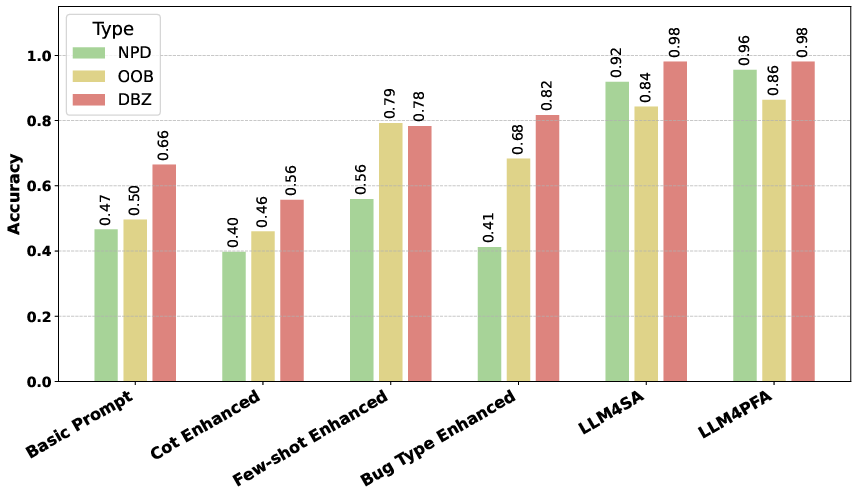

The study constructed a dataset of 433 bug alarms reported by Tencent's enterprise SATs on software from its Advertising and Marketing Services sector. Of these, 328 are false positives and 105 are true positives, and they fall primarily into three bug types: Null Pointer Dereference (NPD), Out-of-Bounds (OOB), and Divide-by-Zero (DBZ). The study evaluates various LLM-based techniques on this dataset to assess how effectively they reduce false positives.
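The two headline metrics can be made concrete with the dataset's ground truth. The sketch below assumes that "false positive reduction" means the share of the 328 false alarms a technique filters out, and that "recall" means the share of the 105 real bugs it still reports. These definitions, the `FilterOutcome` type, and the example counts are illustrative assumptions, not code or results from the study.

```python
from dataclasses import dataclass

# Ground-truth composition of the dataset described above.
TOTAL_FALSE_POSITIVES = 328
TOTAL_TRUE_POSITIVES = 105


@dataclass
class FilterOutcome:
    """Hypothetical result of running an alarm filter over the dataset."""
    false_positives_removed: int  # false alarms correctly filtered out
    true_positives_removed: int   # real bugs wrongly filtered out


def fp_reduction_rate(outcome: FilterOutcome) -> float:
    """Assumed definition: share of all false positives the filter eliminates."""
    return outcome.false_positives_removed / TOTAL_FALSE_POSITIVES


def recall(outcome: FilterOutcome) -> float:
    """Assumed definition: share of real bugs that survive filtering."""
    kept = TOTAL_TRUE_POSITIVES - outcome.true_positives_removed
    return kept / TOTAL_TRUE_POSITIVES


# Hypothetical counts chosen for illustration, NOT a result reported by the study.
example = FilterOutcome(false_positives_removed=312, true_positives_removed=5)
print(f"FP reduction: {fp_reduction_rate(example):.1%}")  # FP reduction: 95.1%
print(f"Recall:       {recall(example):.1%}")             # Recall:       95.2%
```

Keeping both numbers side by side matters because a filter can trivially reach 100% false positive reduction by discarding every alarm, at the price of zero recall.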

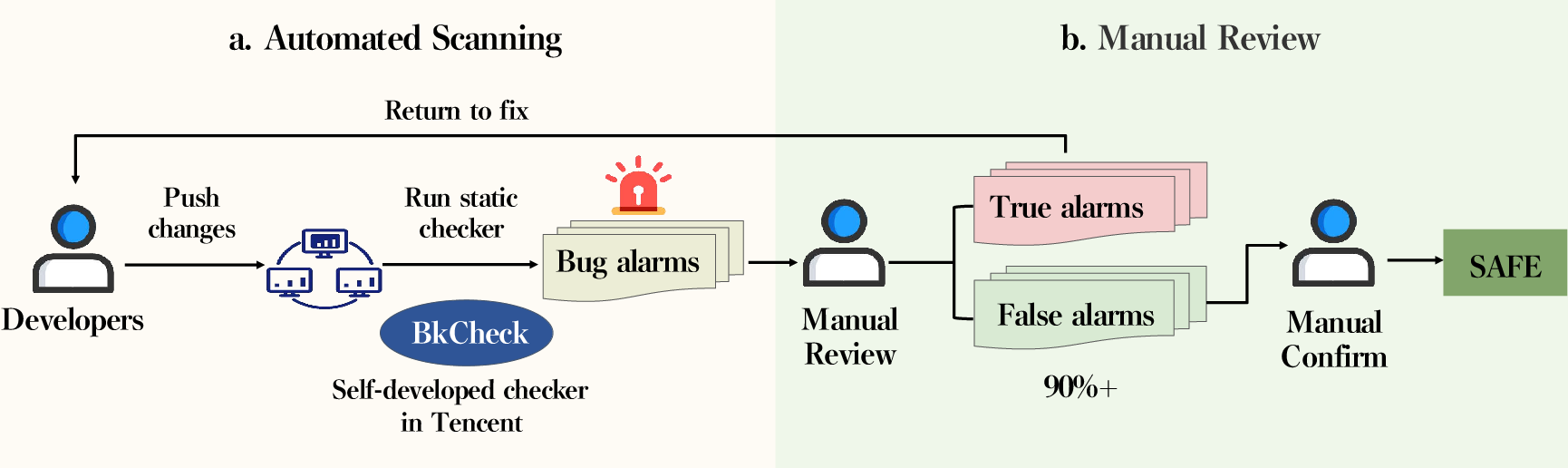

Figure 1: Overview of Code Review at Tencent.

Understanding False Positives in Industry

The prevalence of false positives in industrial settings is alarmingly high. Developers at Tencent spend 10 to 20 minutes manually inspecting each alarm, so every false alarm represents wasted review effort. The study reports a false positive rate of about 76%, consistent with the 328 false positives among the 433 alarms in the dataset. The rate is driven up by the enterprise requirement for high recall and strong safety assurance: analyzers are tuned to report potentially unsafe code rather than risk missing a real bug.
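A back-of-the-envelope calculation shows the scale of the problem on the study's own dataset. This sketch only combines the counts from the methodology with the 10 to 20 minute inspection time, and it assumes every alarm takes the same time to triage, which real reviews will not.

```python
TOTAL_ALARMS = 433
FALSE_POSITIVES = 328
MINUTES_PER_ALARM = (10, 20)  # manual inspection time range reported for Tencent developers

# Fraction of all alarms that turn out not to be bugs.
fp_rate = FALSE_POSITIVES / TOTAL_ALARMS

# Developer hours spent only to confirm that alarms are NOT bugs (low, high).
wasted_hours = tuple(FALSE_POSITIVES * m / 60 for m in MINUTES_PER_ALARM)

print(f"False positive rate: {fp_rate:.1%}")  # False positive rate: 75.8%
print(f"Hours spent on false alarms: {wasted_hours[0]:.1f} to {wasted_hours[1]:.1f}")
# Hours spent on false alarms: 54.7 to 109.3
```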

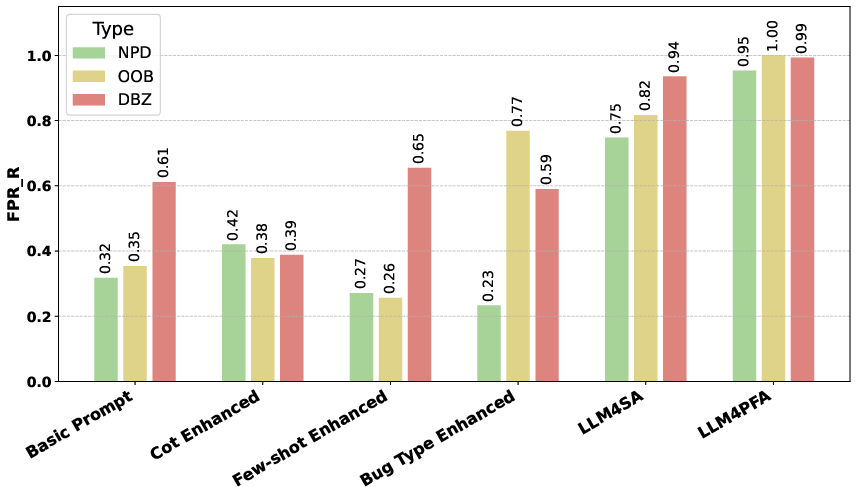

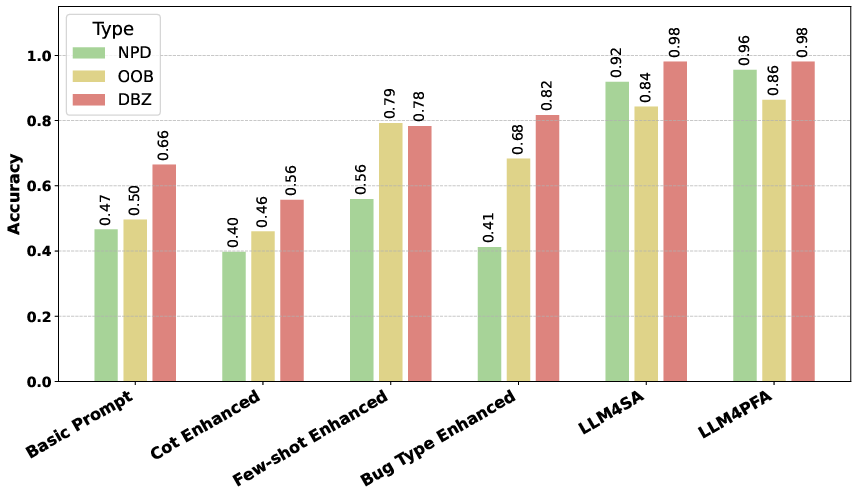

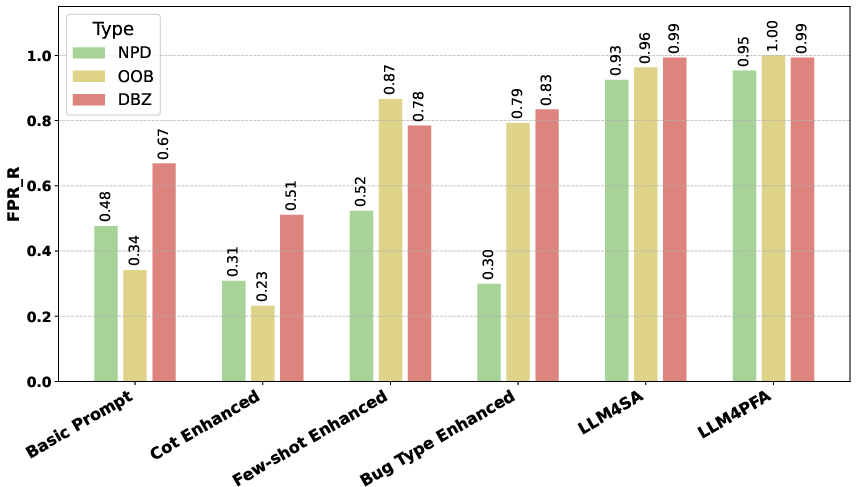

Effectiveness of LLM-Based Techniques

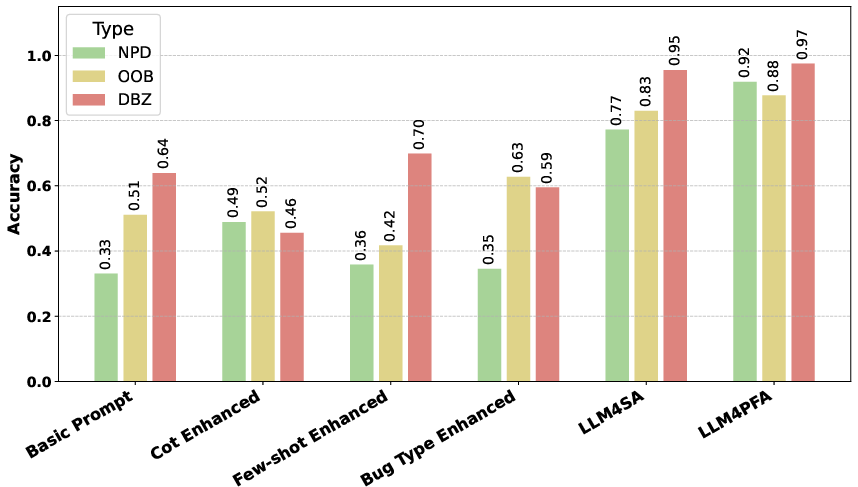

The study evaluates several LLM-based techniques: basic LLMs, advanced prompting strategies, and hybrid techniques that leverage static analysis. Among these, LLM4PFA, which combines LLMs with static analysis to check path feasibility, is the most effective, eliminating 94-98% of false positives while maintaining high recall.
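Path feasibility is the question LLM4PFA targets: can the program path along which the analyzer reports a bug actually execute? The sketch below does not reproduce LLM4PFA, which pairs an LLM with static analysis; it substitutes brute-force enumeration over a small integer range to show the underlying idea. The `path_is_feasible` helper and the divide-by-zero scenario are hypothetical. An alarm whose path conditions contradict its bug condition is a false positive.

```python
from itertools import product
from typing import Callable, Dict, List, Sequence

Assignment = Dict[str, int]
Predicate = Callable[[Assignment], bool]


def path_is_feasible(
    variables: Sequence[str],
    path_conditions: List[Predicate],
    bug_condition: Predicate,
    domain: range = range(-8, 9),
) -> bool:
    """Return True if some assignment in the bounded domain satisfies every
    branch condition on the path AND triggers the bug.

    Exhaustive enumeration only: False means "infeasible within this domain",
    not a proof over all integers.
    """
    for values in product(domain, repeat=len(variables)):
        env = dict(zip(variables, values))
        if all(cond(env) for cond in path_conditions) and bug_condition(env):
            return True
    return False


# Hypothetical divide-by-zero alarm on `total / count`, reported on a path
# that first passes the guard `if (count > 0)`.
guarded = path_is_feasible(
    variables=["count"],
    path_conditions=[lambda env: env["count"] > 0],
    bug_condition=lambda env: env["count"] == 0,
)

# The same alarm on a path whose guard is only `if (count >= 0)`.
weak_guard = path_is_feasible(
    variables=["count"],
    path_conditions=[lambda env: env["count"] >= 0],
    bug_condition=lambda env: env["count"] == 0,
)

print(guarded)     # False: the path is infeasible, so the alarm is a false positive
print(weak_guard)  # True: count == 0 reaches the division, so the alarm stands
```

Real code rarely reduces to a few integer predicates; extracting the relevant conditions from long, interprocedural paths is the hard part that the hybrid LLM and static analysis approach addresses.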

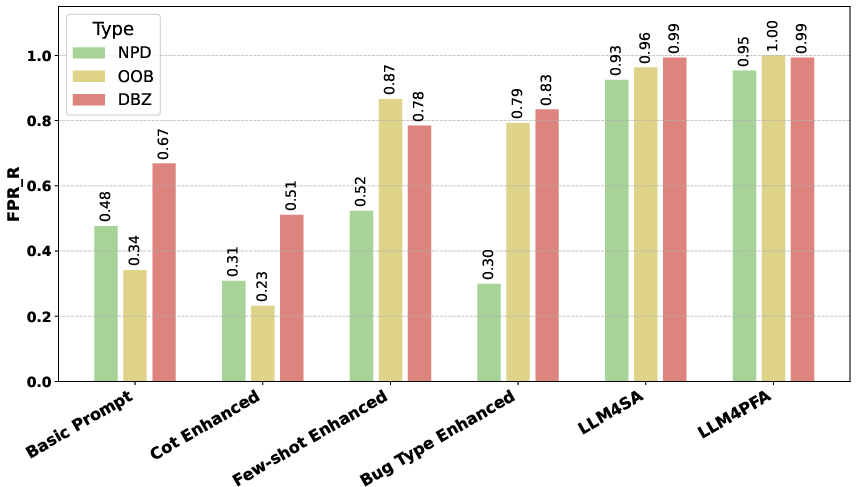

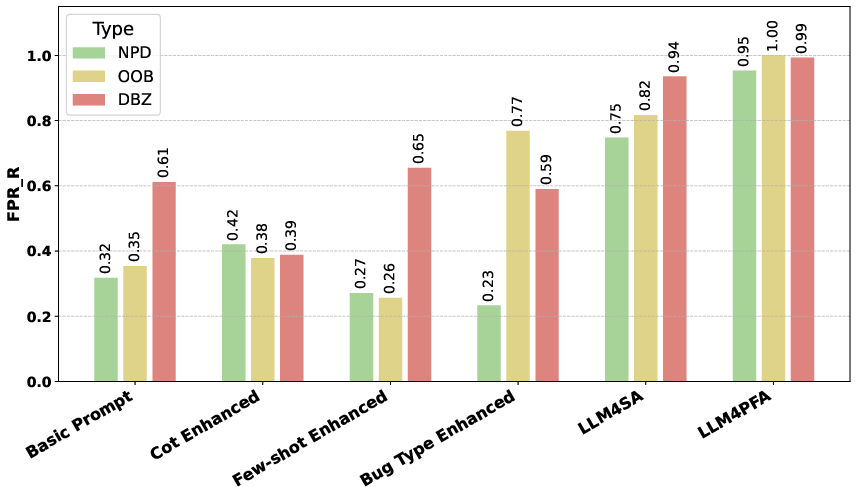

Figure 2: Comparison of performance for LLM-based methods.

Comparative Analysis with Traditional Methods

The study highlights the limitations of traditional learning-based methods, such as approaches built on graph neural networks (GNNs) and pre-trained language models (PLMs). These perform poorly because they depend on their specific training datasets and fail to generalize to unseen enterprise code. In contrast, LLM-based techniques offer more scalable and adaptable solutions for false-positive reduction.

Cost Analysis

In terms of resource efficiency, LLM-based techniques are cost-effective. Depending on the technique, average processing time ranges from 2.1 to 109.5 seconds per alarm and average cost from $0.0011 to $0.12 per alarm, substantially lower than the cost of manual inspection.
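To put the per-alarm figures in perspective, the sketch below scales them to all 433 alarms in the dataset. It treats the reported averages as fixed per-alarm values and pairs the lowest time with the lowest cost and the highest with the highest; both are simplifying assumptions, since the fastest technique need not be the cheapest.

```python
TOTAL_ALARMS = 433
LLM_SECONDS_PER_ALARM = (2.1, 109.5)  # range of average processing time across techniques
LLM_USD_PER_ALARM = (0.0011, 0.12)    # range of average cost across techniques
MANUAL_MINUTES_PER_ALARM = (10, 20)   # manual inspection time at Tencent


def seconds_to_hours(seconds: float) -> float:
    return seconds / 3600


# Each tuple holds (low, high) totals for the whole dataset.
llm_hours = tuple(seconds_to_hours(s * TOTAL_ALARMS) for s in LLM_SECONDS_PER_ALARM)
llm_usd = tuple(c * TOTAL_ALARMS for c in LLM_USD_PER_ALARM)
manual_hours = tuple(m * TOTAL_ALARMS / 60 for m in MANUAL_MINUTES_PER_ALARM)

print(f"LLM triage time:    {llm_hours[0]:.2f} to {llm_hours[1]:.1f} h")
print(f"LLM triage cost:    ${llm_usd[0]:.2f} to ${llm_usd[1]:.2f}")
print(f"Manual triage time: {manual_hours[0]:.1f} to {manual_hours[1]:.1f} h")
```

For the dataset this comes to about 0.25 to 13.2 hours of machine time and roughly $0.48 to $52 in cost, against about 72 to 144 hours of developer time.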

Future Directions and Implications

The paper outlines several avenues for future research. LLMs need stronger reasoning over nuanced context and complex constraints, for example by integrating deeper static analysis results to ease long-context reasoning. Augmenting LLMs with domain-specific knowledge could further improve their ability to identify and explain false positives.

Conclusion

This study establishes the substantial benefits of deploying LLMs for reducing false positives in static bug detection in an industrial context. It demonstrates that, while challenges remain, LLMs present a promising avenue for practical and efficient SAT enhancement. These findings provide a strong foundation for leveraging AI in software quality assurance, suggesting significant potential for future research and development in this domain.