- The paper introduces InstaRadar, a method that uses instance segmentation masks to densify Radar depth data for improved 3D object detection.

- It integrates instance-guided Radar expansion with a Radar-guided depth prediction transformer (RCDPT) within the BEVDepth framework, yielding more accurate depth and spatial features.

- Experimental results on the nuScenes dataset show lower depth errors on metrics such as AbsRel and RMSE, highlighting the method's effectiveness in challenging conditions.

Instance-Guided Radar Depth Estimation for 3D Object Detection

The paper "Instance-Guided Radar Depth Estimation for 3D Object Detection" (2601.19314) introduces a framework for improving monocular 3D object detection in autonomous vehicles. It combines instance-guided Radar data expansion with Radar-guided depth estimation. The approach targets two problems: the limitations of monocular depth estimation, namely depth ambiguity and vulnerability to adverse environmental conditions, and the limited robustness of traditional Radar expansion strategies.

Introduction and Motivation

Autonomous driving requires a precise 3D understanding of the environment to support detection, tracking, and motion planning. RGB cameras offer high-resolution visual information, but they suffer from depth ambiguity, especially in challenging weather and lighting conditions. Radar is resilient to these environmental factors, but its measurements are sparse and low-resolution, so the two sensors are complementary. Effective Radar-camera fusion is therefore crucial for accurate 3D perception. The paper proposes InstaRadar, a method that uses instance segmentation masks to densify Radar data and align it semantically with objects in the image.

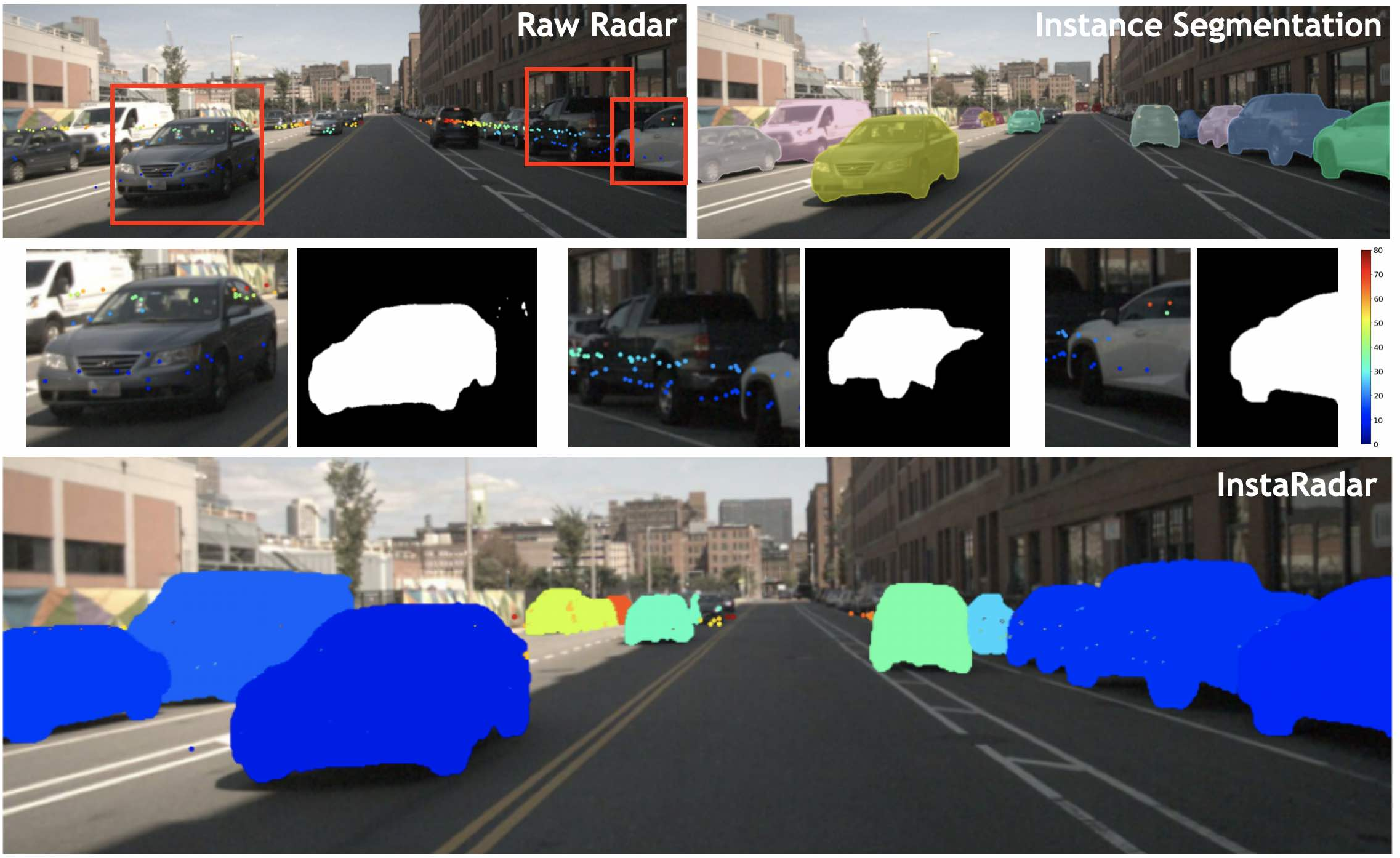

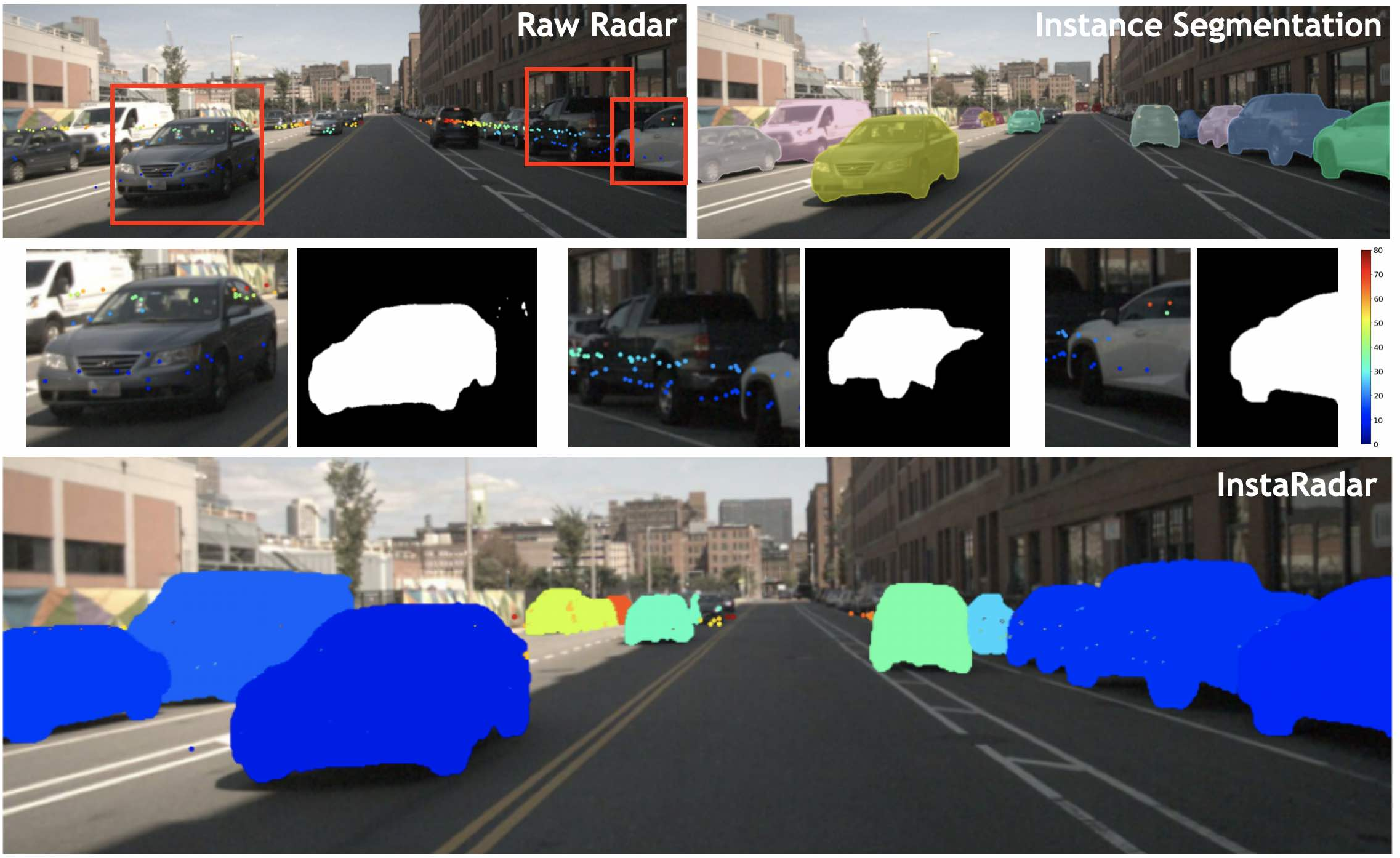

Figure 1: Visualization of the proposed InstaRadar method improving Radar resolution and spatial coverage on the nuScenes dataset.

Methodology

InstaRadar: Instance Segmentation-Guided Radar Expansion

InstaRadar uses an instance segmentation model such as OneFormer to expand Radar points in an instance-aware way. Radar depth is expanded only within instance masks, which increases Radar density while preserving object structure and semantic alignment. The resulting structured depth features contribute to 3D detection accuracy. Unlike earlier expansion strategies, InstaRadar relies on segmentation to keep the expanded depth localized to individual objects and semantically consistent with them.
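The idea of expanding Radar depth inside instance masks can be illustrated with a small NumPy sketch. This is not the paper's algorithm: the fill rule (the median depth of the Radar points that fall inside each mask), the function name `expand_radar_in_instances`, and the input layout are all assumptions made for illustration. It also assumes the Radar points have already been projected into the image plane and that the masks come from a segmentation model such as OneFormer.

```python
import numpy as np


def expand_radar_in_instances(radar_uvd, instance_masks, image_shape):
    """Spread sparse Radar depth over the instance masks that contain it.

    radar_uvd:      (N, 3) array of projected Radar points as (u, v, depth),
                    with u, v in pixel coordinates.
    instance_masks: (K, H, W) boolean array, one mask per detected instance.
    image_shape:    (H, W) of the camera image.

    Returns an (H, W) float32 depth map; 0 marks pixels without depth.
    The median fill rule is an assumption, not the paper's expansion rule.
    """
    h, w = image_shape
    expanded = np.zeros((h, w), dtype=np.float32)

    u = np.round(radar_uvd[:, 0]).astype(int)
    v = np.round(radar_uvd[:, 1]).astype(int)
    d = radar_uvd[:, 2]

    # Discard points that project outside the image or have invalid depth.
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h) & (d > 0)
    u, v, d = u[inside], v[inside], d[inside]

    # Keep the raw Radar hits so points outside every mask are not lost.
    expanded[v, u] = d

    for mask in instance_masks:
        hits = mask[v, u]  # which Radar points land inside this instance
        if not hits.any():
            continue  # no Radar evidence: leave this instance empty
        expanded[mask] = np.median(d[hits])

    return expanded
```

Where masks overlap, the later mask overwrites the earlier one in this sketch; a real implementation would need an explicit rule for that case, for example preferring the nearer object.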

Figure 2: Qualitative examples of InstaRadar under various conditions, illustrating improved depth alignment with object regions.

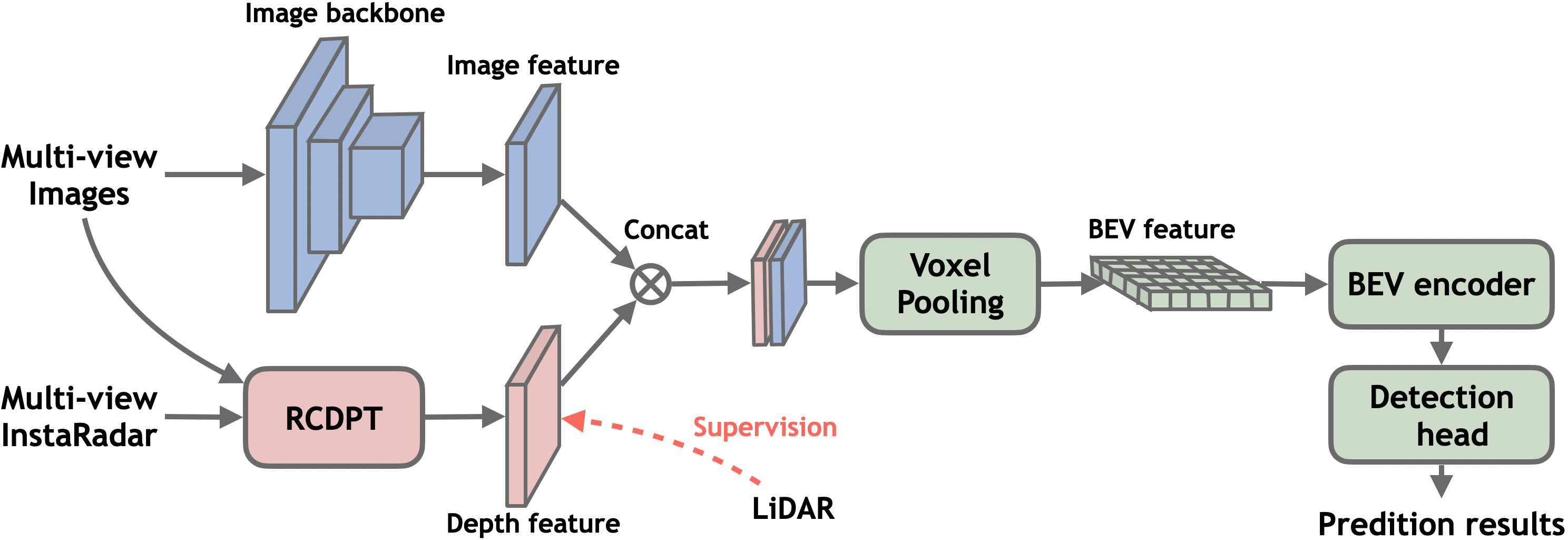

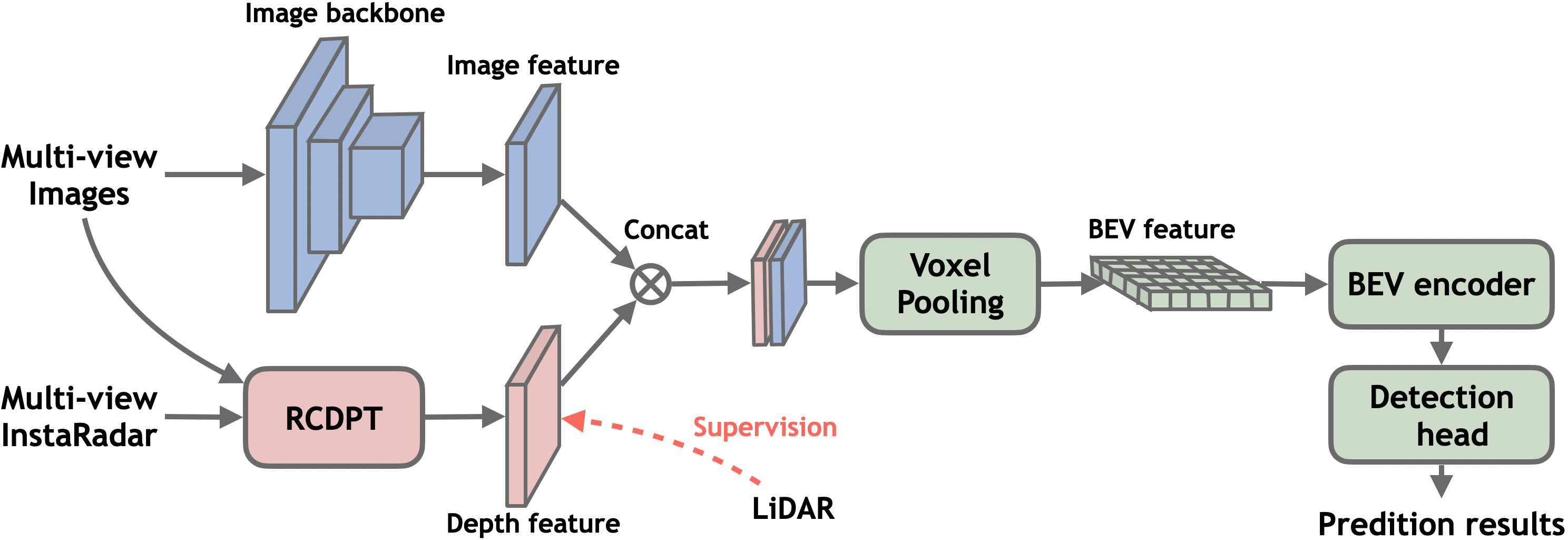

The paper then integrates the InstaRadar-enhanced inputs and the Radar-guided depth prediction transformer, RCDPT, into the BEVDepth framework and reports improved overall 3D detection performance. RCDPT explicitly supervises depth prediction, combining image features and enhanced Radar features through a cross-modal reassembly mechanism. The resulting depth maps are more accurate and are then projected into Bird's-Eye-View (BEV) space, which improves the accuracy and robustness of the spatial features used for 3D object detection.

Figure 3: Framework illustrating the integration of InstaRadar and RCDPT into BEVDepth for enhanced 3D detection.
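To make the projection of a depth map into BEV space concrete, the sketch below back-projects each pixel with a pinhole camera model and counts the resulting points per ground-plane cell. It is a geometric illustration only. BEVDepth lifts image features using a predicted per-pixel depth distribution, which this sketch does not reproduce. The function name `depth_to_bev_occupancy`, the grid ranges, and the cell size are hypothetical.

```python
import numpy as np


def depth_to_bev_occupancy(depth, K, x_range=(-50.0, 50.0),
                           z_range=(0.0, 100.0), cell=0.5):
    """Back-project a depth map and count points per BEV cell.

    depth: (H, W) metric depth along the camera z-axis; 0 means no depth.
    K:     (3, 3) pinhole intrinsics matrix.
    The BEV grid spans x (lateral) and z (forward) in the camera frame.
    Returns an (nz, nx) integer grid of point counts.
    """
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]  # pixel row and column indices

    valid = depth > 0
    z = depth[valid]
    # Pinhole model: x = (u - cx) * z / fx. Height is ignored in BEV.
    x = (u[valid] - K[0, 2]) * z / K[0, 0]

    nx = int(round((x_range[1] - x_range[0]) / cell))
    nz = int(round((z_range[1] - z_range[0]) / cell))
    ix = np.floor((x - x_range[0]) / cell).astype(int)
    iz = np.floor((z - z_range[0]) / cell).astype(int)

    # Drop points that fall outside the BEV grid.
    keep = (ix >= 0) & (ix < nx) & (iz >= 0) & (iz < nz)

    bev = np.zeros((nz, nx), dtype=np.int64)
    # np.add.at accumulates correctly when several points share a cell.
    np.add.at(bev, (iz[keep], ix[keep]), 1)
    return bev
```

Errors in the depth map shift points to the wrong BEV cells, which is one way to see why more accurate depth leads to more reliable BEV features.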

Experimental Evaluation

The experiments use the nuScenes dataset, a multimodal benchmark that provides synchronized data from several sensor types. The authors report lower depth errors on metrics such as AbsRel and RMSE, which supports the effectiveness of InstaRadar for Radar-guided depth estimation. They also compare against existing methods such as Pseudo-LiDAR and BEV-based models, and conclude that instance-guided Radar expansion improves depth precision while maintaining semantic consistency.
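For reference, AbsRel is the mean of |d_pred - d_gt| / d_gt and RMSE is the square root of the mean squared difference, both taken over pixels with valid ground truth; lower is better for both. The sketch below uses these standard definitions. The function name `depth_metrics` and the `min_depth` threshold are assumptions, and the paper's evaluation protocol (for example, its depth range cap) may differ.

```python
import numpy as np


def depth_metrics(pred, gt, min_depth=1e-3):
    """Return (AbsRel, RMSE) over pixels with valid ground-truth depth.

    pred, gt:  arrays of the same shape holding metric depth.
    min_depth: pixels with gt <= min_depth are treated as unlabeled
               (an assumed convention for sparse ground truth).
    """
    pred = np.asarray(pred, dtype=np.float64)
    gt = np.asarray(gt, dtype=np.float64)

    valid = gt > min_depth
    if not valid.any():
        raise ValueError("no valid ground-truth pixels")

    p, g = pred[valid], gt[valid]
    abs_rel = float(np.mean(np.abs(p - g) / g))   # mean relative error
    rmse = float(np.sqrt(np.mean((p - g) ** 2)))  # root mean squared error
    return abs_rel, rmse
```

AbsRel weights errors relative to distance, so a 1 m error at 10 m counts more than at 50 m, whereas RMSE is dominated by large absolute errors, which tend to occur at long range.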

Implications and Future Directions

The proposed framework improves 3D object detection by increasing feature consistency and detection robustness in challenging scenarios. The paper acknowledges, however, that the framework remains limited compared with comprehensive Radar-camera fusion methods. As future work, the authors propose extending InstaRadar to point cloud-like data representations and integrating temporal cues into Radar processing, with the aim of richer multimodal fusion and better BEV feature extraction.

Conclusion

The paper advances monocular 3D object detection by introducing the instance-guided InstaRadar expansion method and integrating a Radar-guided depth estimation approach. According to the authors, these contributions yield consistent improvements over baseline models and show the potential of structured Radar data for better semantic alignment and more robust 3D perception. Further development of these methods could improve autonomous sensing systems across diverse operating environments.