- The paper introduces a self-prediction mechanism that generates a predictive current to modulate the membrane potential, improving spike timing and gradient flow.

- It adds an extra forward path for self-prediction, which creates continuous gradient pathways, thereby addressing vanishing gradients and enhancing training efficiency.

- Experimental validation on CIFAR-10, ImageNet, and reinforcement learning tasks confirms improved performance and robustness across various spiking neural network architectures.

General Self-Prediction Enhancement for Spiking Neurons

Spiking Neural Networks (SNNs) have garnered significant interest due to their potential for energy-efficient computation, driven by event-based dynamics. However, the challenges associated with training SNNs, particularly due to the non-differentiable nature of spike events, remain considerable. The paper "General Self-Prediction Enhancement for Spiking Neurons" (2601.21823) addresses this crucial issue by proposing a novel mechanism inspired by predictive coding theories found in biological neural systems.

Self-Prediction Approach

The paper's central idea is a self-prediction mechanism inside each spiking neuron: the neuron generates an internal prediction signal from its own input-output history. This signal is injected as a predictive current that modulates the membrane potential. As a result, the neuron can pre-activate expected spikes and compute an error when its actual output deviates from the prediction.

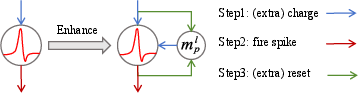

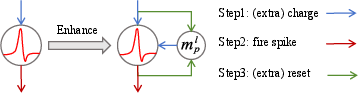

Figure 1: Left: original neuron, Right: our proposed self-prediction enhanced neuron. Neurons operate in the sequence of charging, firing, and resetting at each time-step.
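The charge, fire, reset sequence and the idea of a predictive current can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's formulation: the prediction here is a hypothetical exponential moving average of the neuron's own past spikes, and the names `simulate_lif`, `pred_decay`, and `pred_gain` are invented for this example. Setting `pred_gain=0` recovers a plain leaky integrate-and-fire (LIF) neuron with hard reset.

```python
import numpy as np

def simulate_lif(inputs, tau=2.0, v_th=1.0, pred_decay=0.9, pred_gain=0.0):
    """Simulate a single LIF neuron with an optional predictive current.

    ASSUMPTION: the prediction is a running average of past output spikes.
    The paper's actual prediction rule is not reproduced here.
    Returns (spikes, prediction_errors), one entry per time step.
    """
    v = 0.0       # membrane potential
    pred = 0.0    # hypothetical prediction of the neuron's own output
    spikes = np.zeros(len(inputs))
    errors = np.zeros(len(inputs))
    for t, x in enumerate(inputs):
        # Charging: leaky integration plus the predictive current.
        v = v + (x - v) / tau + pred_gain * pred
        # Firing: emit a spike when the threshold is reached.
        s = 1.0 if v >= v_th else 0.0
        # Prediction error: actual output minus predicted output.
        err = s - pred
        # Resetting: hard reset to zero after a spike.
        v = v * (1.0 - s)
        # Move the prediction toward the observed output.
        pred = pred + (1.0 - pred_decay) * err
        spikes[t] = s
        errors[t] = err
    return spikes, errors

if __name__ == "__main__":
    plain_spikes, _ = simulate_lif(np.full(10, 1.5))
    enhanced_spikes, enhanced_errors = simulate_lif(np.full(10, 1.5), pred_gain=0.5)
    print(plain_spikes)
    print(enhanced_spikes)
    print(enhanced_errors)
```

With the default parameters and a constant input of 1.5, the plain neuron fires on every second step. The predictive current adds a small positive offset to the membrane potential after each reset, which is the sense in which an expected spike is "pre-activated" in this toy version.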

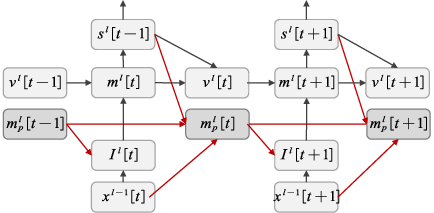

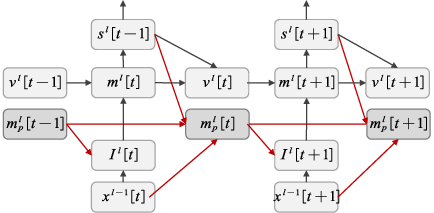

The forward propagation of the enhanced neuron includes an additional self-prediction path (Figure 2). This path also provides an auxiliary route for gradients, which addresses the gradient propagation problem common in SNNs: it supports more stable and efficient training and mitigates vanishing gradients.

Figure 2: Forward propagation pathway of the self-prediction enhanced LIF neuron. The red lines indicate the additional forward path associated with self-prediction.

Training Dynamics and Gradient Propagation

The paper further explores the dynamics of self-prediction-enhanced neurons, demonstrating that their design creates continuous gradient pathways. These pathways facilitate seamless propagation of learning signals across time steps, thus enhancing the overall training stability and accuracy of SNNs.

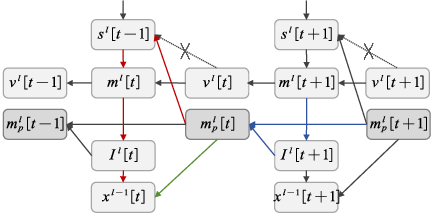

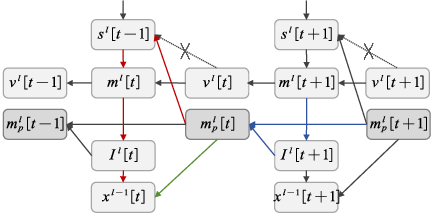

Figure 3: Backward propagation pathway of the self-prediction enhanced LIF neuron. The blue and green lines form the first additional gradient path, and the blue and red lines form the second additional gradient path. The dashed lines indicate paths that are detached from the computational graph.

An analysis of the gradient propagation (Figure 3) reveals that the self-prediction methodology not only enriches the computational pathway but also aligns with biological principles like dendritic modulation and synaptic plasticity. By modulating the membrane potential dynamically and correcting prediction errors locally, this approach reflects processes observed in biological neural circuits.
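Why an extra temporal path can help against vanishing gradients can be illustrated with a toy linear recurrence. The sketch below is an assumption-laden simplification, not the paper's derivation: it ignores spiking, reset, and the detached paths shown in Figure 3, and the prediction state `p` and the parameters `pred_decay` and `pred_gain` are hypothetical. It computes how strongly the membrane potential after `steps` time steps depends on the very first input.

```python
import numpy as np

def input_sensitivity(steps, decay=0.5, pred_decay=0.9, pred_gain=0.0):
    """Return d v[steps] / d x[0] for the toy linear recurrence

        v[t] = decay * v[t-1] + x[t] + pred_gain * p[t-1]
        p[t] = pred_decay * p[t-1] + (1 - pred_decay) * v[t]

    ASSUMPTION: spiking and reset are left out so that the temporal
    gradient can be written as a product of constant Jacobians.
    """
    # Jacobian of (v[t], p[t]) with respect to (v[t-1], p[t-1]).
    jac = np.array([
        [decay, pred_gain],
        [(1.0 - pred_decay) * decay, pred_decay + (1.0 - pred_decay) * pred_gain],
    ])
    # Direct effect of x[0] on (v[0], p[0]).
    grad = np.array([1.0, 1.0 - pred_decay])
    for _ in range(steps):
        grad = jac @ grad
    return grad[0]

if __name__ == "__main__":
    baseline = input_sensitivity(20)                 # membrane path only
    enhanced = input_sensitivity(20, pred_gain=0.2)  # extra path through p
    print(baseline, enhanced)
```

With `pred_gain=0` the sensitivity is exactly `decay ** steps`, which shrinks geometrically with the number of time steps. Coupling the membrane to a more slowly decaying state gives the gradient a second route through time, so the sensitivity in this toy model decays far more slowly.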

Experimental Validation

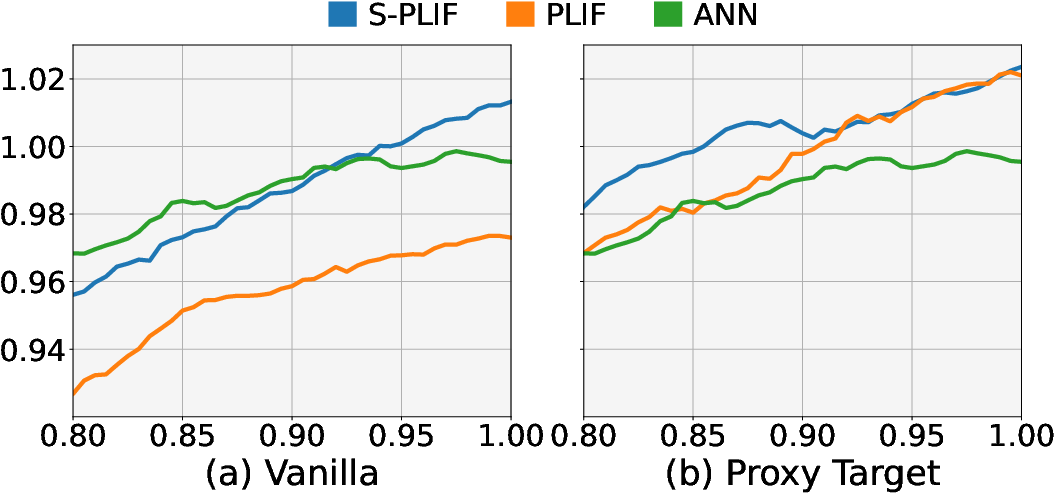

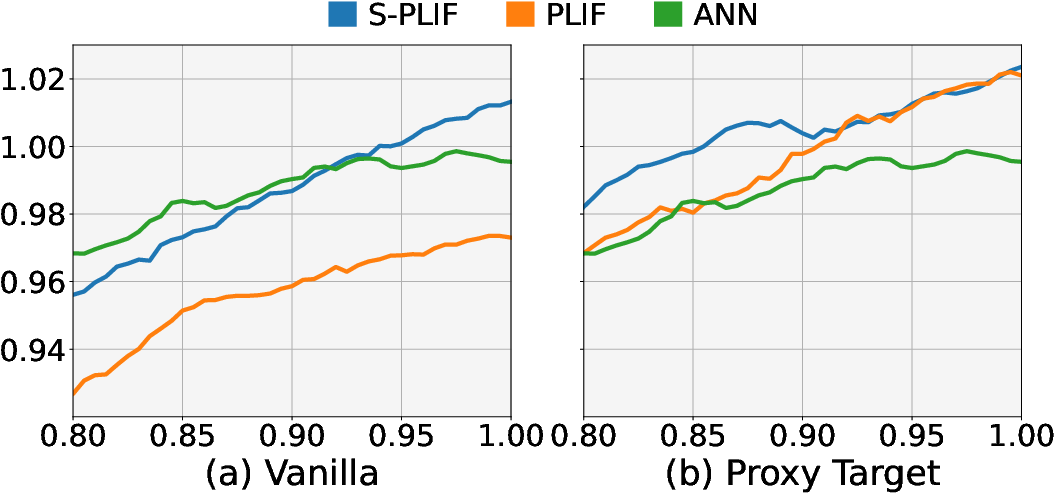

The mechanism is evaluated across a range of architectures and tasks: image classification on CIFAR-10 and ImageNet, sequential classification, and reinforcement learning. Results consistently show improved performance, as illustrated by the normalized learning curves for the reinforcement learning tasks (Figure 4). These curves show self-prediction lifting SNNs closer to, or even beyond, the performance of traditional ANNs.

Figure 4: Normalized learning curves of the TD3 algorithm with different spiking neurons, across all environments. The performance and training steps are normalized linearly based on ANN performance. Curves are uniformly smoothed for visual clarity.
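The normalization and smoothing described in the caption can be approximated as follows. This is one plausible reading, not the authors' plotting code: it assumes "normalized linearly" means dividing by the ANN's final return and total training steps, and that "uniformly smoothed" means a moving average with equal weights. The function names and arguments are hypothetical.

```python
import numpy as np

def normalize_curve(steps, returns, ann_final_return, ann_total_steps):
    """Scale a learning curve so the ANN reference ends at (1.0, 1.0).

    ASSUMPTION: linear normalization is a plain division by the ANN values.
    """
    norm_steps = np.asarray(steps, dtype=float) / ann_total_steps
    norm_returns = np.asarray(returns, dtype=float) / ann_final_return
    return norm_steps, norm_returns

def smooth(values, window=5):
    """Moving average with uniform weights; output is shorter by window - 1."""
    kernel = np.ones(window) / window
    return np.convolve(np.asarray(values, dtype=float), kernel, mode="valid")

if __name__ == "__main__":
    steps, returns = normalize_curve([0, 500, 1000], [0, 50, 120], 100, 1000)
    print(steps, returns)
    print(smooth([1, 2, 3, 4, 5], window=3))
```

Under this convention a normalized return above 1.0 means the SNN outperformed the ANN reference in that environment, which makes curves from different environments comparable on one axis.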

In particular, self-prediction significantly improves training stability and final accuracy across networks such as SEW ResNet and Spiking ResNet, which supports its versatility and robustness.

Conclusion

The paper "General Self-Prediction Enhancement for Spiking Neurons" proposes enhancing spiking neural networks with a neuron-level self-prediction mechanism. Through this biologically inspired design, it addresses core challenges in SNN training and offers promising improvements in computational efficiency and network performance. The results suggest that the method has substantial potential for advancing SNNs in both practical applications and theoretical research, and they invite further exploration of neuron-level predictive coding mechanisms in artificial neural systems.