- The paper introduces a hybrid rendering system combining high-quality foveated path tracing with lightweight peripheral Gaussian splatting for interactive VR anatomy visualization.

- The method decouples the foveal and peripheral pipelines and refines the Gaussian model in real time, improving masked PSNR (MPSNR) by up to 2 dB and regenerating the model within about 1 second.

- The system enables immersive clinical applications, such as interactive diagnostic planning and medical education, by balancing visual fidelity and performance.

Hybrid Foveated Path Tracing with Peripheral Gaussians for Immersive Anatomy: A Technical Analysis

Introduction

The paper "Hybrid Foveated Path Tracing with Peripheral Gaussians for Immersive Anatomy" (2601.22026) describes an integrated rendering architecture for medical volume visualization. It combines foveated path tracing with Gaussian Splatting (GS) to deliver interactive, high-fidelity immersive experiences, particularly in virtual reality (VR). The costly, high-quality method (path tracing) is reserved for the perceptually critical foveal region, while the periphery is covered by a lightweight Gaussian-based approximation built on the fly. This split yields substantial gains in both performance and interactivity for anatomical applications.

System Architecture and Technical Innovations

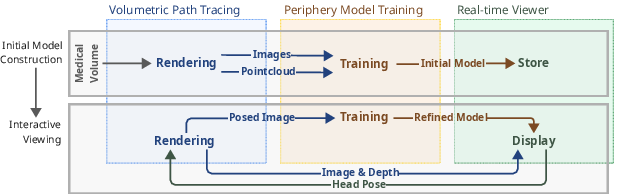

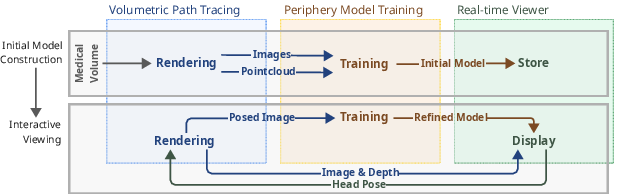

The architecture comprises three decoupled components: a foveated volumetric path tracer, a streamlined GS-based peripheral model trainer, and a real-time VR viewer. The system operates in two main flows. Initial model construction runs high-quality path-traced renders to initialize the peripheral model. Interactive immersive viewing then asynchronously incorporates new foveal observations to iteratively refine the peripheral GS model.

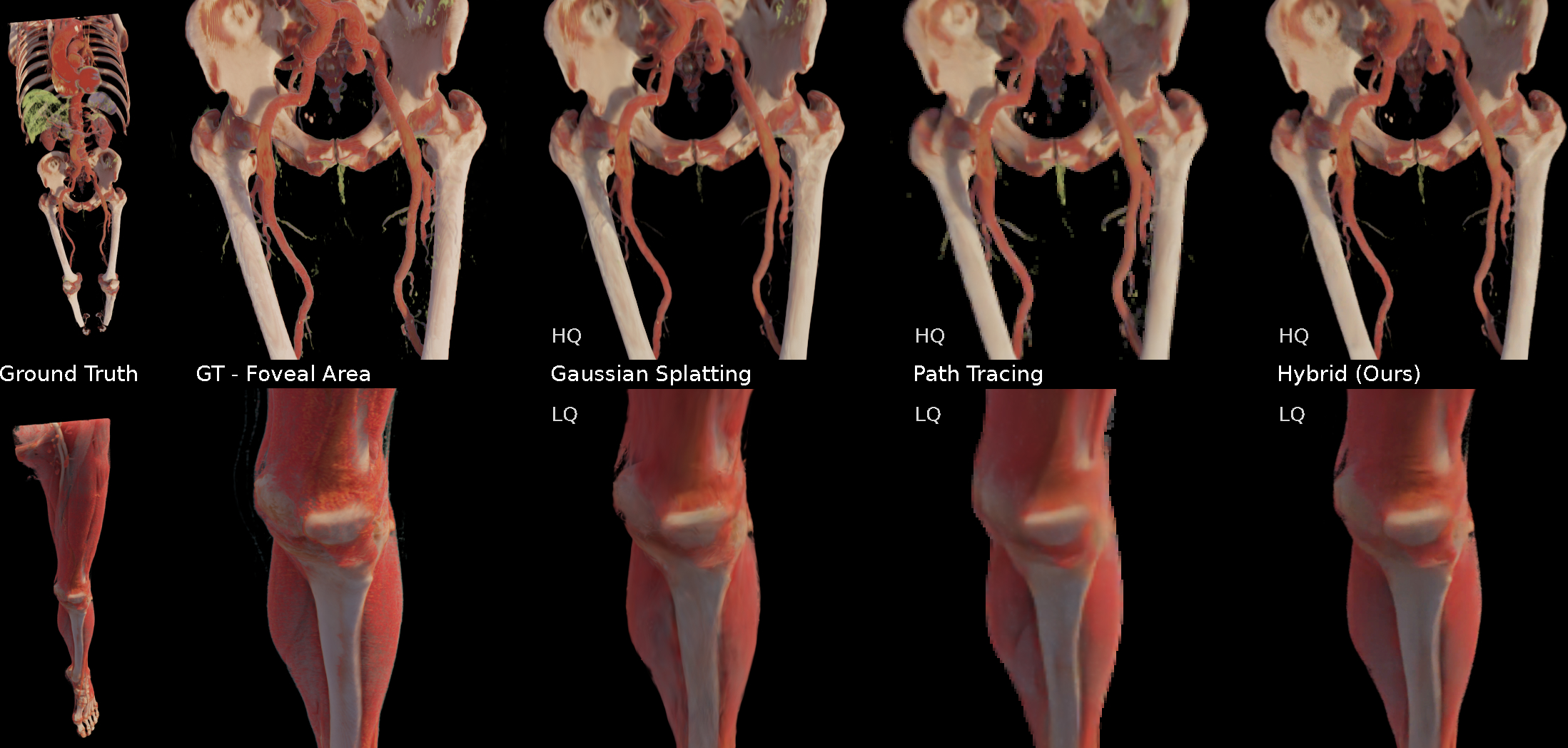

Figure 1: System architecture with decoupled path-tracing, GS-based peripheral model, and synchronous/asynchronous application flows.

A core innovation is the tight integration of streamed foveated path tracing with rapid GS reconstruction and continual refinement. This configuration is designed for high perceptual fidelity within the foveal region, while the periphery leverages advances in GS optimization, aggressive simplification, and strategic view selection. Further, depth-guided reprojection decouples the slow foveal path tracing from the high refresh-rate requirements of the VR display, providing flexibility in the latency-fidelity trade-off.
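As a rough illustration of depth-guided reprojection, the sketch below warps a single pixel of an earlier path-traced foveal image into the current head pose using its depth value. The pinhole intrinsics, the rigid-transform convention, and all function names (`unproject`, `transform`, `project`, `reproject_pixel`) are assumptions made for this example and are not taken from the paper.

```python
def unproject(u, v, depth, fx, fy, cx, cy):
    """Lift a pixel with depth (along camera z, pointing forward) to a 3D camera-space point."""
    return ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)


def transform(point, rotation, translation):
    """Apply the rigid transform p' = R p + t, with R given as a 3x3 nested list."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def project(point, fx, fy, cx, cy):
    """Project a camera-space point to pixel coordinates; None if behind the camera."""
    x, y, z = point
    if z <= 0.0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def reproject_pixel(u, v, depth, intrinsics, rotation, translation):
    """Map a pixel from the source (path-traced) view into the destination (display) view.

    `rotation` and `translation` are assumed to take source-camera coordinates
    to destination-camera coordinates. Both views share the same intrinsics here.
    """
    p_src = unproject(u, v, depth, *intrinsics)
    p_dst = transform(p_src, rotation, translation)
    return project(p_dst, *intrinsics)
```

Applying `reproject_pixel` to every foveal pixel lets the viewer reuse a slightly stale path-traced frame at display rate; a practical implementation would also need to handle disocclusions and resampling, which this sketch omits.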

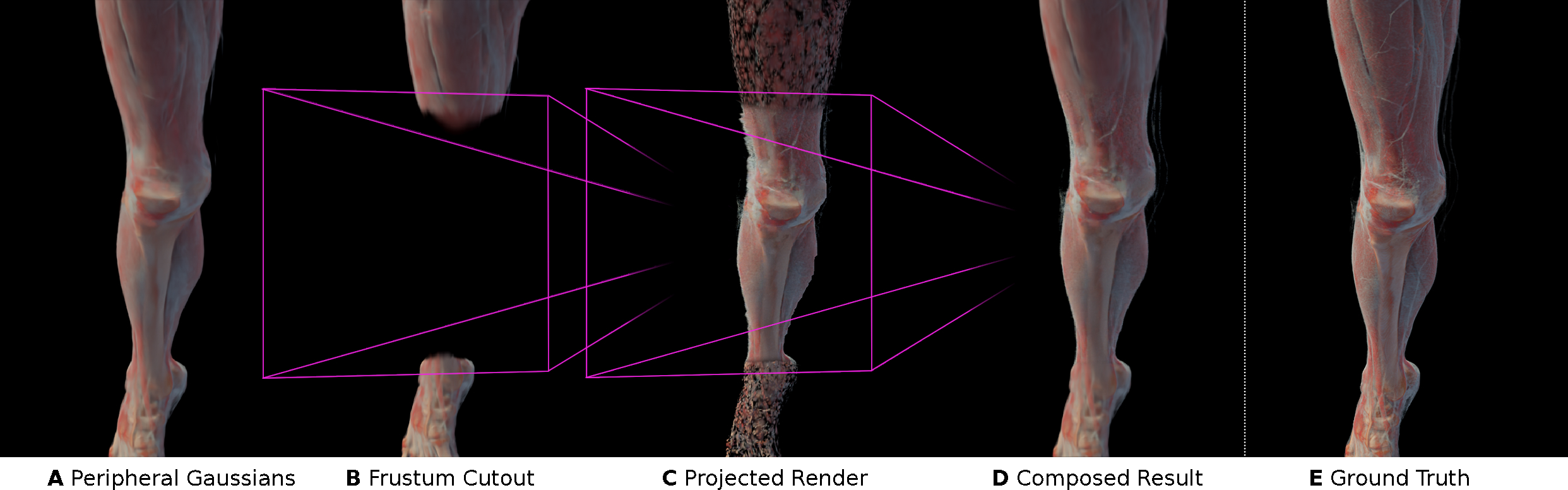

Foveated–Peripheral Composition and Rendering Pipeline

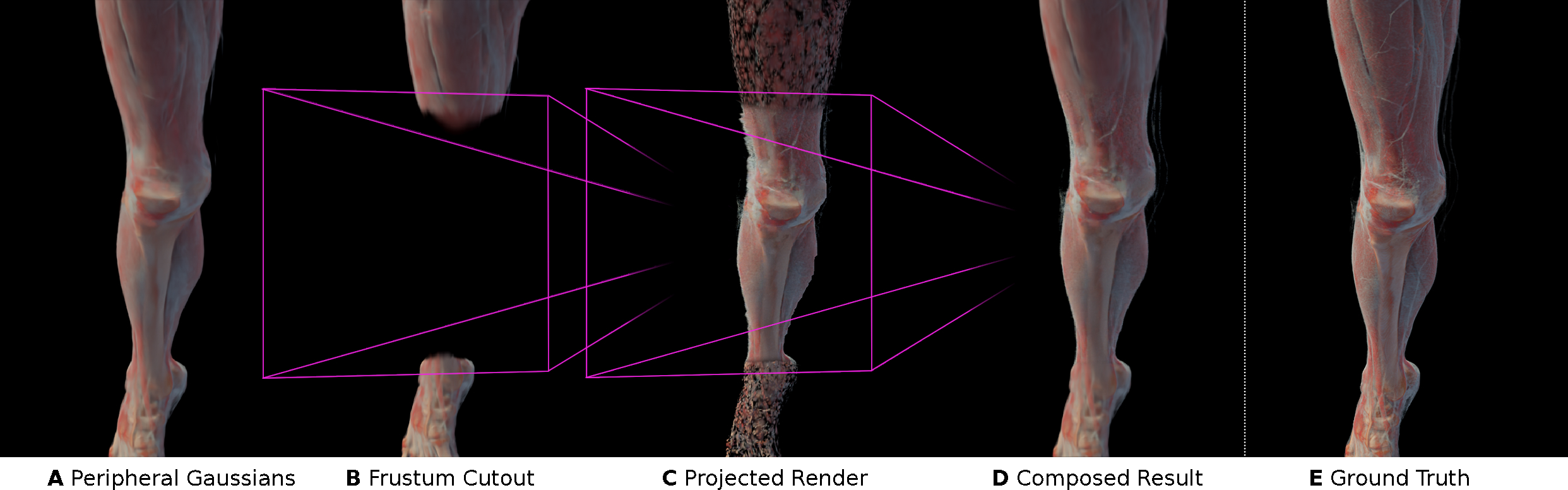

Interactive compositing of foveated and peripheral regions proceeds as follows: the user’s head pose drives on-demand path tracing of the foveal region (20° FoV), while the periphery is rendered from the GS model, which is adapted in real time. Compositing uses a frustum-shaped transition zone with alpha blending at the foveal boundary to mask artifacts and keep the two regions visually coherent.

Figure 2: Pipeline for compositing foveated (PT) and peripheral (GS) regions, detailing exclusion of peripheral Gaussians within the foveal frustum and blending at the transition.
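The blending at the foveal boundary can be sketched as a per-pixel weight that fades from the path-traced image to the GS render. The 10° half-angle follows from the 20° foveal FoV stated above; the 2° transition width, the smoothstep falloff, and the conical (rather than frustum-shaped) region are simplifying assumptions for illustration only, as are the function names.

```python
import math


def angle_from_gaze_deg(direction, gaze):
    """Angle in degrees between a pixel's view ray and the head-forward direction."""
    dot = sum(d * g for d, g in zip(direction, gaze))
    norm = math.sqrt(sum(d * d for d in direction)) * math.sqrt(sum(g * g for g in gaze))
    cos_angle = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos_angle))


def foveal_alpha(angle_deg, fovea_half_angle_deg=10.0, transition_deg=2.0):
    """Weight of the path-traced image: 1 inside the fovea, 0 in the periphery.

    Inside the transition band the weight follows a smoothstep curve
    (an assumed falloff, chosen only because it has no visible kink).
    """
    inner = fovea_half_angle_deg - transition_deg
    if angle_deg <= inner:
        return 1.0
    if angle_deg >= fovea_half_angle_deg:
        return 0.0
    t = (fovea_half_angle_deg - angle_deg) / transition_deg
    return t * t * (3.0 - 2.0 * t)


def composite(pt_rgb, gs_rgb, alpha):
    """Alpha-blend a path-traced (PT) pixel over a Gaussian-splatting (GS) pixel."""
    return tuple(alpha * p + (1.0 - alpha) * g for p, g in zip(pt_rgb, gs_rgb))
```

For a pixel whose ray lies 9° off the head-forward axis, `foveal_alpha` gives equal weight to both sources under these assumed parameters, so neither image dominates in the middle of the band.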

Peripheral Model Training: Fast Initialization and Continual Refinement

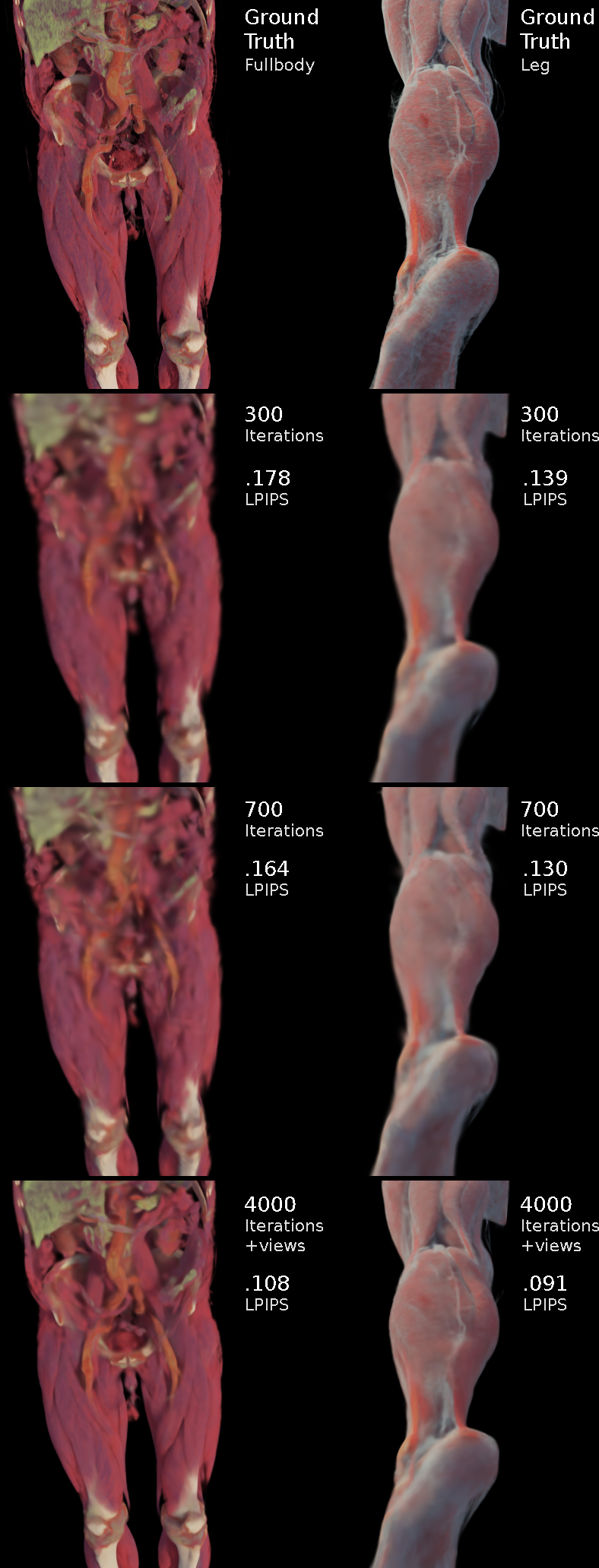

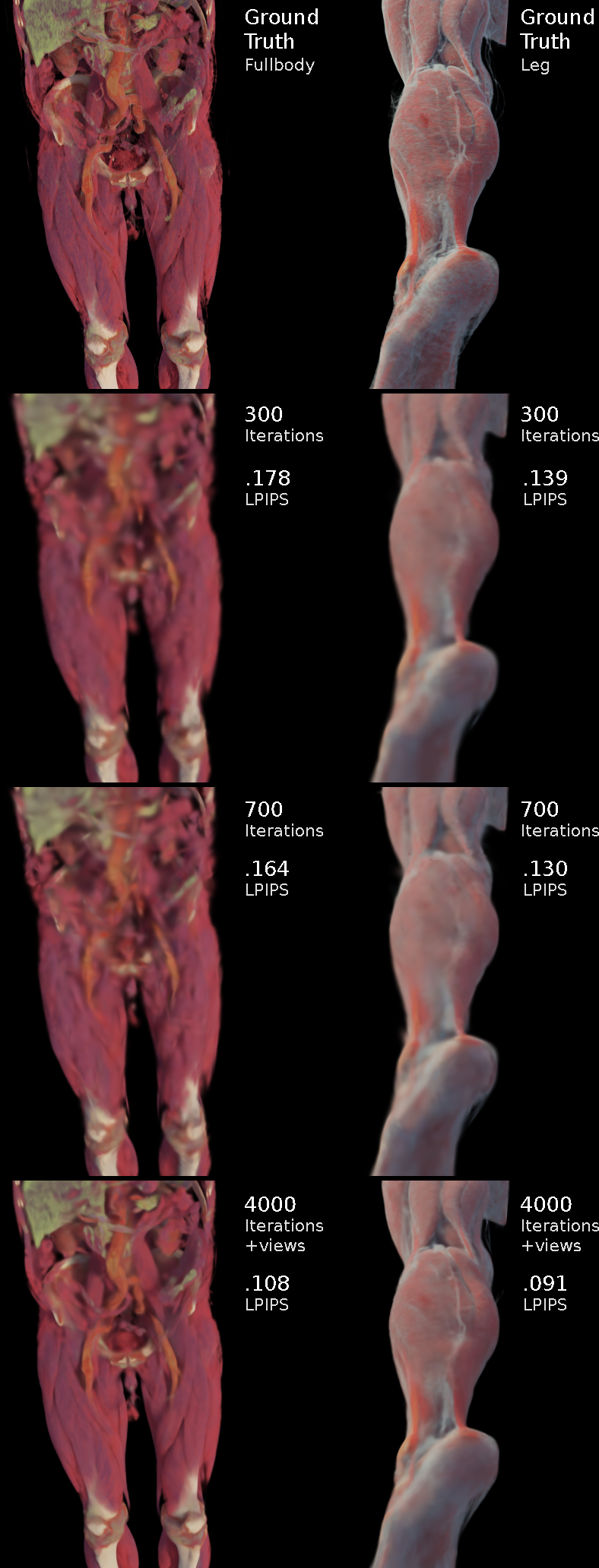

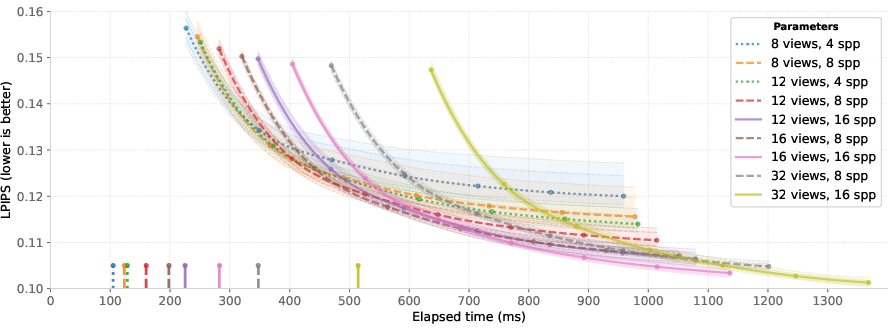

Model creation is staged: an initial set of posed images (8–32 views, typically at 8–16 spp) seeds a surface-aligned point cloud. Model optimization uses a non-densifying, high-rate adaptation of Mini-Splatting2 with Taming 3DGS enhancements, generating a visually plausible and compact GS model (typically ~10k splats) within 300–400 ms. Early simplification and avoidance of densification prevent undesired floaters, which is crucial for semi-transparent anatomical data.

Figure 3: Peripheral GS model quality evolution: initial state from few views, and subsequent improvement after continual training with additional foveated perspectives.

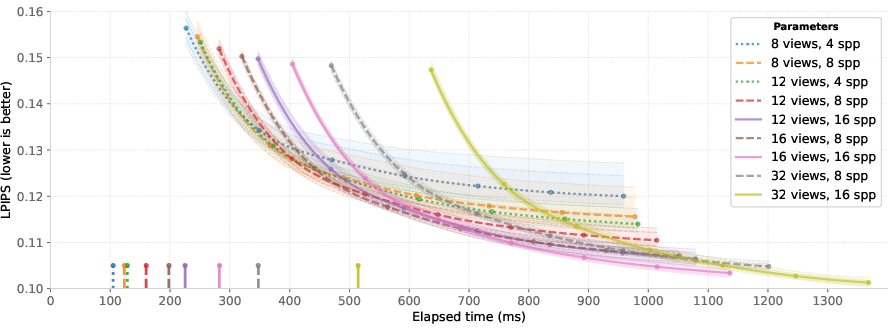

Empirically, broad angular coverage from a moderate number of views matters more than higher samples-per-pixel (spp) for rapid initialization, with diminishing returns beyond 16 views. During interaction, close-up foveated path-traced images drive incremental refinement. A heuristic selects which of these images to train on, based on pose and view novelty, to limit training overhead.
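The summary does not spell out the novelty heuristic, so the following is only one plausible sketch: a new foveated view is accepted for training unless an already accepted view is close to it in both camera position and viewing direction. The thresholds (`min_distance`, `min_angle_deg`) and the function name `is_novel_view` are hypothetical.

```python
import math


def is_novel_view(candidate, accepted, min_distance=0.05, min_angle_deg=5.0):
    """Decide whether a candidate view adds new information for refinement.

    Each view is a (position, forward) pair, where `forward` is a unit vector.
    A candidate is rejected only if some accepted view is near it in BOTH
    position and direction; thresholds are illustrative assumptions.
    """
    cand_pos, cand_dir = candidate
    for pos, direction in accepted:
        distance = math.dist(cand_pos, pos)
        cos_angle = sum(a * b for a, b in zip(cand_dir, direction))
        cos_angle = max(-1.0, min(1.0, cos_angle))  # clamp against rounding error
        angle = math.degrees(math.acos(cos_angle))
        if distance < min_distance and angle < min_angle_deg:
            return False
    return True


def select_views(stream, min_distance=0.05, min_angle_deg=5.0):
    """Filter a sequence of views, keeping only those novel relative to earlier picks."""
    accepted = []
    for view in stream:
        if is_novel_view(view, accepted, min_distance, min_angle_deg):
            accepted.append(view)
    return accepted
```

Filtering this way keeps the training set small when the user lingers on one spot, while still admitting views as soon as the head moves or turns noticeably.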

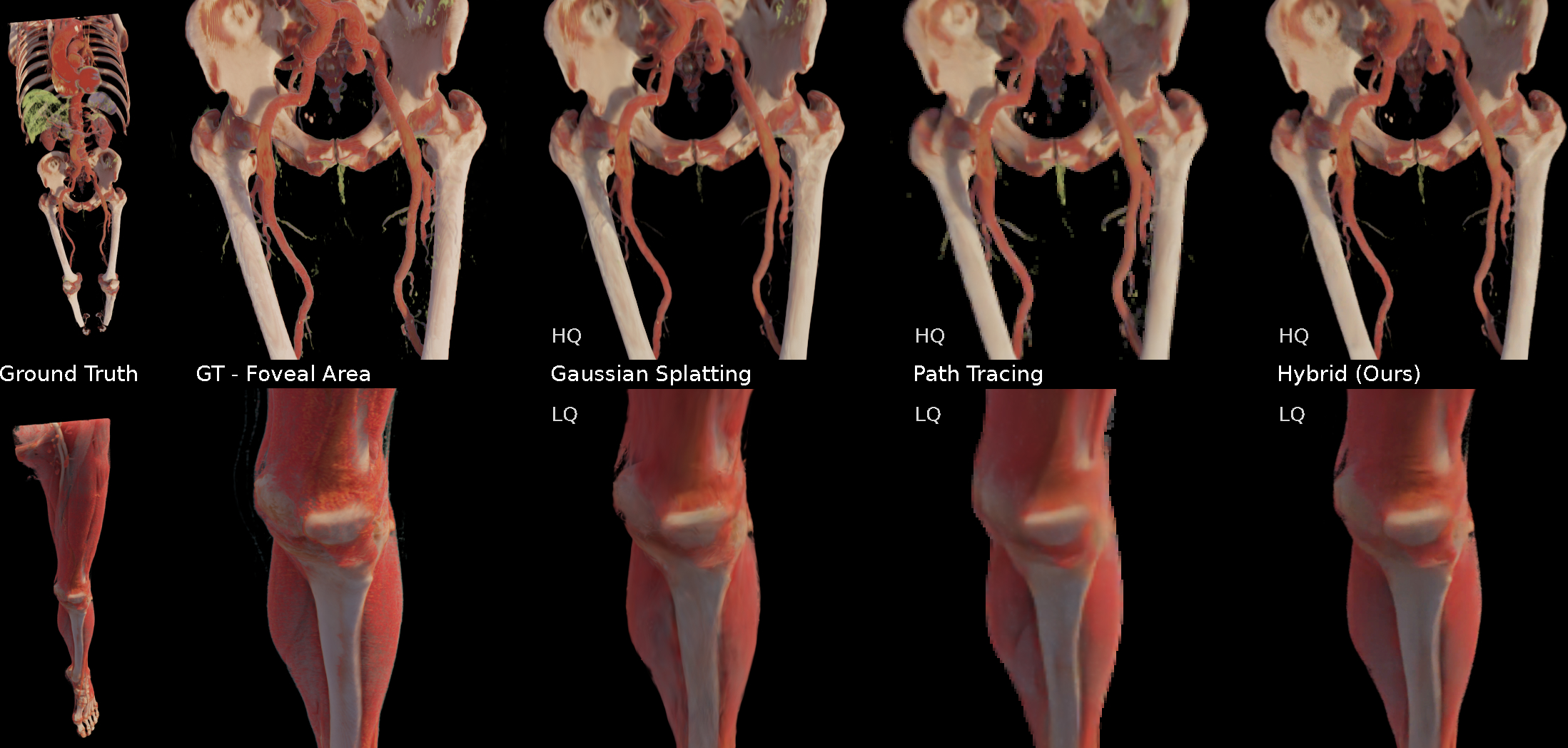

Figure 4: Initialization quality as a function of number of input views and spp, showing rapid convergence and highlighting the comparative gains of additional viewpoints over increased spp.

The hybrid pipeline is evaluated against two strong baselines: standalone foveated path tracing and standalone GS-based NVS models (trained for one to two minutes). Quantitative metrics include masked PSNR (MPSNR), SSIM, and LPIPS, computed within the foveal region and over the full image. In VR-suitable settings, the approach outperforms real-time path tracing in foveal regions at comparable frame times, while largely matching the peripheral quality of longer-trained GS baselines, all with near-instant model regeneration.
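For readers unfamiliar with masked PSNR, a minimal sketch follows. It assumes the common definition in which the mean squared error is taken only over pixels selected by a mask (for example, the foveal region); the paper's exact masking and normalization may differ, and the function name `masked_psnr` is chosen here for illustration.

```python
import math


def masked_psnr(reference, test, mask, max_value=1.0):
    """PSNR in dB computed only over samples where `mask` is true.

    `reference`, `test`, and `mask` are flat sequences of equal length;
    intensity values are assumed to lie in [0, max_value].
    """
    squared_error = 0.0
    count = 0
    for ref_value, test_value, keep in zip(reference, test, mask):
        if keep:
            squared_error += (ref_value - test_value) ** 2
            count += 1
    if count == 0:
        raise ValueError("mask selects no pixels")
    mse = squared_error / count
    if mse == 0.0:
        return math.inf  # identical images inside the mask
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Because PSNR is logarithmic in the error, a 2 dB gain corresponds to the masked mean squared error dropping to about 63% of its previous value (a factor of 10^(-0.2)).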

Figure 5: Visual comparison with ground-truth path tracing, full GS baseline, and the proposed hybrid (with/without peripheral model improvements), demonstrating higher anatomical fidelity and fewer artifacts in the foveal-peripheral transition.

Empirical results indicate:

- The initial GS model is ready in ~400 ms and refined within ~1 s.

- Foveal MPSNR improves by roughly 1.5–2 dB over path tracing alone and is competitive with full GS models (reported for both full and foveated masks).

- Peripheral GS model files are compact (few MB), supporting efficient streaming.

Boundary artifacts at the foveal-peripheral transition are managed by empirical scaling and opacity adjustments, with a noted improvement in fine-structure visibility; persistent color/luminance mismatches are minimized but remain an area for further perceptual optimization.

Implications for Medical Visualization and Interactive Applications

This hybrid approach enables immersive exploration and real-time interaction with clinical anatomical data on consumer and mobile VR devices, easing the long-standing trade-off between visual fidelity and interactivity. Regenerating the GS-based peripheral model in about a second accommodates dynamic transfer function changes and region-of-interest clip planes, both crucial for diagnostic and instructional scenarios that require rapid feedback. The architecture is tolerant of latency and scales across distributed compute/server environments.

By consolidating path tracing and GS NVS advances, the approach also creates new possibilities for:

- Interactive clinical education and simulation—eliminating the cognitive load of cross-sectional 2D-to-3D inference.

- Patient-informed consent and communication, leveraging high-fidelity 3D contextualization.

- Pre- and intra-operative planning, where dynamic exploration of volumetric regions and immediate feedback are essential.

Limitations and Prospective Directions

Key limitations include boundary transition artifacts, slower peripheral update rates for highly mobile scenarios, and limited performance in exceedingly large or unbounded environments. The system does not yet leverage real-time, gaze-driven foveation or streaming peripheral GS models; instead, updates are applied under simplified scheduling.

Potential advances include:

- Improved perceptual blending (contrast, vibrancy, and noise consistency) across regions.

- Adaptive, attention-driven resource scheduling to manage peripheral refinement in frequently visited anatomical areas.

- Integration of data-driven estimation of GS parameters directly from rendered volumes, further shortening initialization delays.

- Enhanced robustness against disocclusions and fast head movements through improved view selection and incremental GS optimization techniques.

Conclusion

This work demonstrates an effective hybrid system that pairs foveated path tracing with GS-based peripheral rendering, balancing visual fidelity, responsiveness, and interactivity for immersive anatomical visualization. By partitioning the computational budget according to established perceptual models and leveraging rapid NVS approaches, the system offers a scalable design pattern for future medical VR frameworks and for broader real-time immersive 3D data exploration.