PLANING: A Loosely Coupled Triangle-Gaussian Framework for Streaming 3D Reconstruction

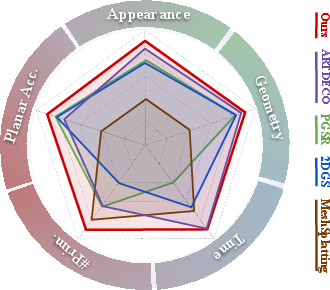

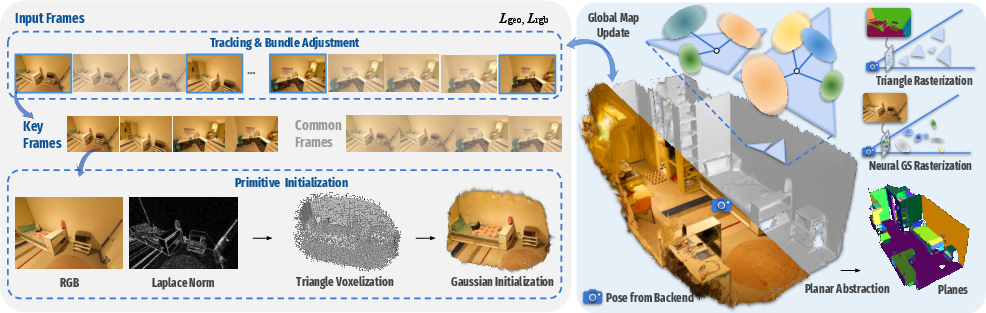

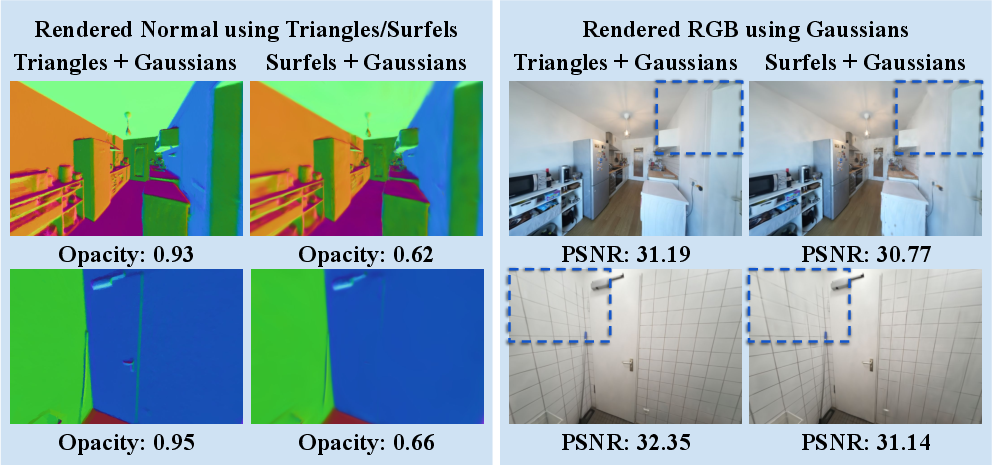

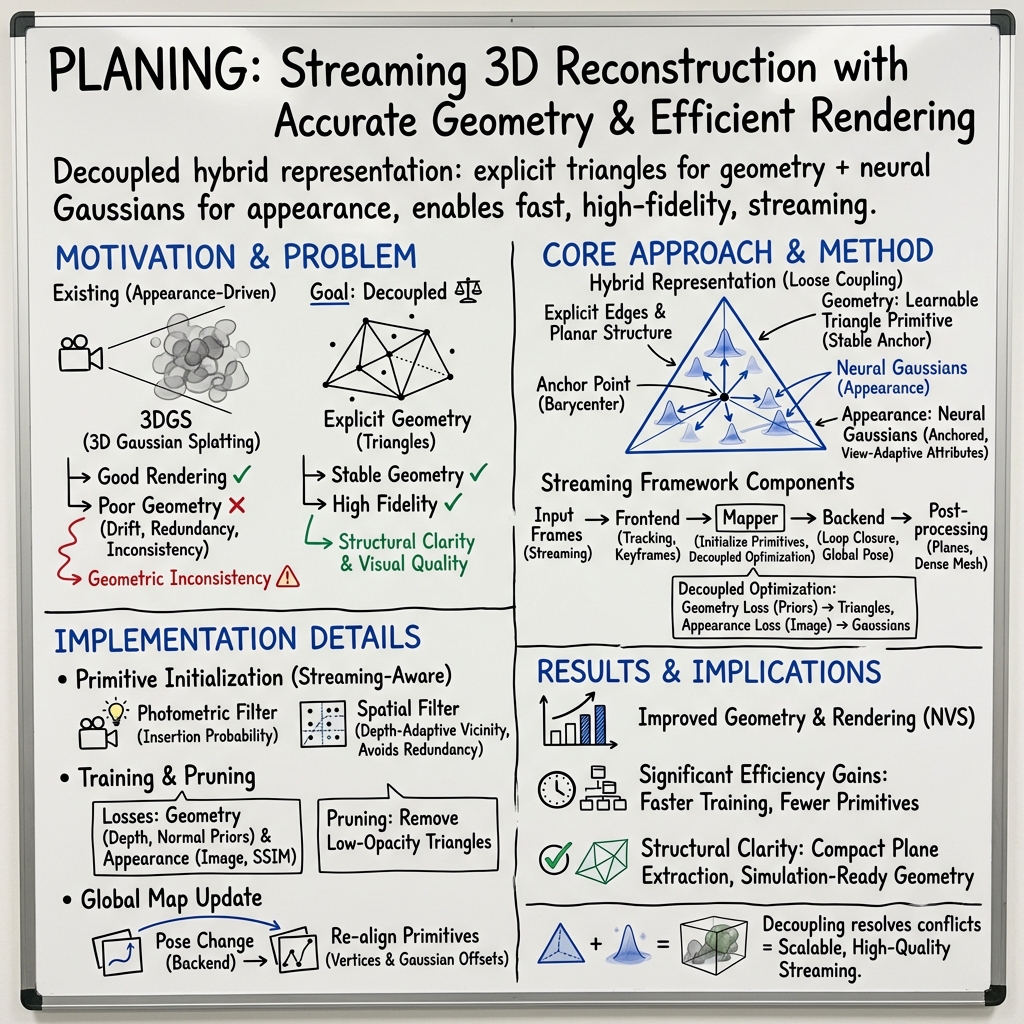

Abstract: Streaming reconstruction from monocular image sequences remains challenging, as existing methods typically favor either high-quality rendering or accurate geometry, but rarely both. We present PLANING, an efficient on-the-fly reconstruction framework built on a hybrid representation that loosely couples explicit geometric primitives with neural Gaussians, enabling geometry and appearance to be modeled in a decoupled manner. This decoupling supports an online initialization and optimization strategy that separates geometry and appearance updates, yielding stable streaming reconstruction with substantially reduced structural redundancy. PLANING improves dense mesh Chamfer-L2 by 18.52% over PGSR, surpasses ARTDECO by 1.31 dB PSNR, and reconstructs ScanNetV2 scenes in under 100 seconds, over 5x faster than 2D Gaussian Splatting, while matching the quality of offline per-scene optimization. Beyond reconstruction quality, the structural clarity and computational efficiency of PLANING make it well suited for a broad range of downstream applications, such as enabling large-scale scene modeling and simulation-ready environments for embodied AI. Project page: https://city-super.github.io/PLANING/

Explain it Like I'm 14

Overview

This paper is about building 3D models from a regular video quickly and accurately. The authors introduce a new method called PLANING that can reconstruct a 3D scene while the video is still coming in (streaming). It aims to keep surfaces straight and sharp (good geometry) while also making the scene look realistic (good appearance), and do all of this fast.

Key Objectives

The paper focuses on three simple goals:

- Make 3D surfaces clear and accurate, especially flat areas like walls, floors, and tables.

- Keep the scene looking realistic and detailed when you view it from different angles.

- Run fast enough to work “on the fly” while the video is still being captured.

How It Works

To explain the method, think of building a digital world like making a Lego model and then painting it.

Two-part scene representation

- Geometry with triangles: Triangles are like the Lego pieces that form the shape of things. They have sharp edges and can build clean, flat surfaces. Using triangles helps the system find and keep straight lines and planes in the scene.

- Appearance with neural Gaussians: Gaussians are soft, fuzzy blobs used to paint color, texture, and lighting on the triangles. They’re great for making the scene look realistic, like smooth shading and reflections. The authors use small neural networks to adjust these Gaussians so they match the look of the video frames.

By separating “shape” (triangles) from “look” (Gaussians), the system can improve each part without messing up the other. This decoupling is the key idea: triangles keep structure stable; Gaussians handle the visual details.
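To make the decoupling concrete, here is a minimal Python sketch, not the authors' implementation. The class names `Triangle` and `AnchoredGaussian` and the barycentric-weight anchoring are assumptions chosen for illustration. The paper's neural Gaussians are decoded by small networks, which this sketch leaves out.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Triangle:
    """Geometry primitive: three vertices plus a learnable opacity."""
    vertices: Tuple[Vec3, Vec3, Vec3]
    opacity: float = 1.0

    def barycenter(self) -> Vec3:
        return tuple(sum(v[i] for v in self.vertices) / 3.0 for i in range(3))

    def translated(self, offset: Vec3) -> "Triangle":
        moved = tuple(
            tuple(v[i] + offset[i] for i in range(3)) for v in self.vertices
        )
        return Triangle(moved, self.opacity)


@dataclass
class AnchoredGaussian:
    """Appearance primitive tied to a triangle (hypothetical parameterization)."""
    weights: Vec3  # barycentric weights, assumed to sum to 1
    color: Vec3    # stand-in for the decoded appearance attributes

    def position(self, tri: Triangle) -> Vec3:
        # The blob's location is derived from the triangle, never stored.
        return tuple(
            sum(w * v[i] for w, v in zip(self.weights, tri.vertices))
            for i in range(3)
        )


tri = Triangle(((0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (0.0, 3.0, 0.0)))
blob = AnchoredGaussian(weights=(0.5, 0.5, 0.0), color=(0.8, 0.2, 0.2))

# Geometry update: the Gaussian follows the triangle without being edited.
lifted = tri.translated((0.0, 0.0, 2.0))
# Appearance update: changing the color leaves the triangle untouched.
blob.color = (0.1, 0.9, 0.1)
```

Because the Gaussian stores only its relation to the triangle, moving the "Lego piece" moves the "paint" with it, while repainting never changes the shape.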

Streaming pipeline

The whole system runs while a video is coming in from a single camera:

- Frontend (tracking): Figures out where the camera is for each frame, picks important frames (keyframes), and estimates rough 3D points and surface directions (depth and normals) from a learned model.

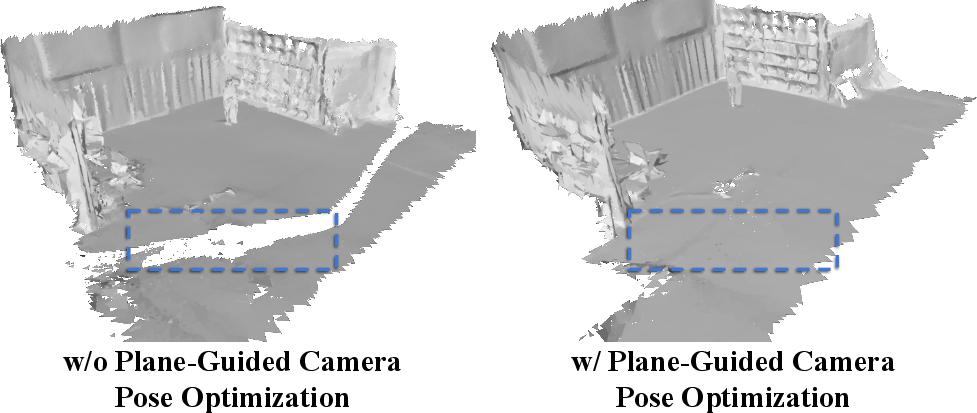

- Backend (global adjustment): Detects when the camera revisits places (“loop closure”) and corrects the camera path so the whole 3D map stays consistent.

- Mapper (reconstruction): Builds the 3D scene using the triangle–Gaussian design. It adds new triangles only where needed and paints them with Gaussians for realistic appearance.

If the backend later improves the camera positions, the mapper updates the triangles and Gaussians to match, so everything stays aligned.
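The map update after a pose correction can be sketched as follows. This is an illustrative simplification, assuming each triangle remembers the keyframe it was created from and that poses are camera-to-world pairs `(R, t)`. The helper names (`reanchor_point`, `reanchor_triangle`) and the example correction are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]
Pose = Tuple[Mat3, Vec3]  # assumed camera-to-world: x_world = R @ x_cam + t


def mat_vec(R: Mat3, v: Vec3) -> Vec3:
    return tuple(sum(R[i][k] * v[k] for k in range(3)) for i in range(3))


def transpose(R: Mat3) -> Mat3:
    return tuple(tuple(R[j][i] for j in range(3)) for i in range(3))


def reanchor_point(p: Vec3, old_pose: Pose, new_pose: Pose) -> Vec3:
    """Move a world point so it keeps its position relative to its keyframe."""
    R_old, t_old = old_pose
    R_new, t_new = new_pose
    # World -> keyframe camera frame, using the pose the point was built with.
    in_cam = mat_vec(transpose(R_old), tuple(p[i] - t_old[i] for i in range(3)))
    # Keyframe camera frame -> world, using the corrected pose.
    rotated = mat_vec(R_new, in_cam)
    return tuple(rotated[i] + t_new[i] for i in range(3))


def reanchor_triangle(vertices, old_pose: Pose, new_pose: Pose):
    return tuple(reanchor_point(v, old_pose, new_pose) for v in vertices)


IDENTITY: Mat3 = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
ROT_Z_90: Mat3 = ((0.0, -1.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0))

old = (IDENTITY, (0.0, 0.0, 0.0))
new = (ROT_Z_90, (1.0, 0.0, 0.0))  # hypothetical backend correction
triangle = ((1.0, 0.0, 2.0), (2.0, 0.0, 2.0), (1.0, 1.0, 2.0))
corrected = reanchor_triangle(triangle, old, new)
```

Gaussians anchored to a triangle would move with it automatically, which is one reason a rigid per-keyframe update can keep shape and appearance aligned.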

Smart initialization and learning

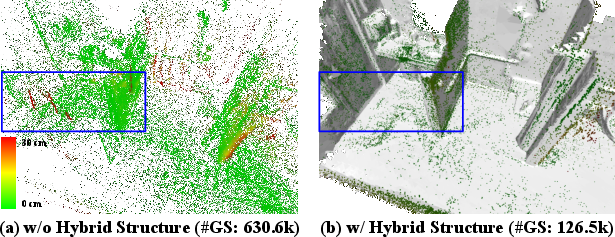

- Picking where to add triangles: The system uses simple image checks (a photometric filter) to insert new triangles only in high-frequency regions or in areas that are poorly reconstructed so far. A spatial filter also keeps new triangles from being placed so close together that they overlap. This keeps the model small and efficient.

- Training signals:

  - Geometry is trained using estimated depth and surface directions so triangles match the shape of the scene.

  - Appearance is trained using how well the rendered image matches the real video frame (color differences, structural similarity).

- Redundancy pruning: Triangles that don’t contribute are removed, keeping the scene compact.
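The candidate selection described in the list above can be illustrated with a small, dependency-free sketch. It is a simplification under stated assumptions: a 3x3 binomial blur stands in for Gaussian smoothing, the two photometric tests are combined with a logical OR, and a fixed pixel gap replaces the paper's vicinity function. The names `candidate_mask`, `thin_candidates`, `tau_a`, `tau_err`, and `min_gap` are illustrative, and the thresholds are arbitrary.

```python
from typing import List, Tuple

Image = List[List[float]]


def _at(img: Image, y: int, x: int) -> float:
    """Read a pixel with clamped (edge-replicating) borders."""
    h, w = len(img), len(img[0])
    return img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]


def smooth(img: Image) -> Image:
    """3x3 binomial blur, a small stand-in for Gaussian smoothing."""
    k = (1.0, 2.0, 1.0)
    return [
        [
            sum(k[dy + 1] * k[dx + 1] * _at(img, y + dy, x + dx)
                for dy in (-1, 0, 1) for dx in (-1, 0, 1)) / 16.0
            for x in range(len(img[0]))
        ]
        for y in range(len(img))
    ]


def laplacian(img: Image) -> Image:
    """4-neighbour discrete Laplacian."""
    return [
        [
            _at(img, y - 1, x) + _at(img, y + 1, x)
            + _at(img, y, x - 1) + _at(img, y, x + 1) - 4.0 * img[y][x]
            for x in range(len(img[0]))
        ]
        for y in range(len(img))
    ]


def candidate_mask(frame: Image, rendered: Image,
                   tau_a: float, tau_err: float) -> List[List[bool]]:
    """Flag pixels that are high-frequency (|LoG| > tau_a) or badly rendered."""
    log = laplacian(smooth(frame))
    return [
        [
            abs(log[y][x]) > tau_a or abs(frame[y][x] - rendered[y][x]) > tau_err
            for x in range(len(frame[0]))
        ]
        for y in range(len(frame))
    ]


def thin_candidates(mask: List[List[bool]], min_gap: int) -> List[Tuple[int, int]]:
    """Greedy spatial filter: keep flagged pixels at least min_gap apart."""
    kept: List[Tuple[int, int]] = []
    for y, row in enumerate(mask):
        for x, flagged in enumerate(row):
            if flagged and all(
                max(abs(y - ky), abs(x - kx)) >= min_gap for ky, kx in kept
            ):
                kept.append((y, x))
    return kept


# A vertical step edge: dark on the left, bright on the right.
frame = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(3)]
mask = candidate_mask(frame, rendered=frame, tau_a=0.2, tau_err=0.1)
seeds = thin_candidates(mask, min_gap=2)
```

Only pixels near the edge are flagged, and the spacing filter then keeps a sparse subset of them, which mirrors the goal of adding detail where it is needed without piling up redundant primitives.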

Main Findings

Here are the main results reported by the authors:

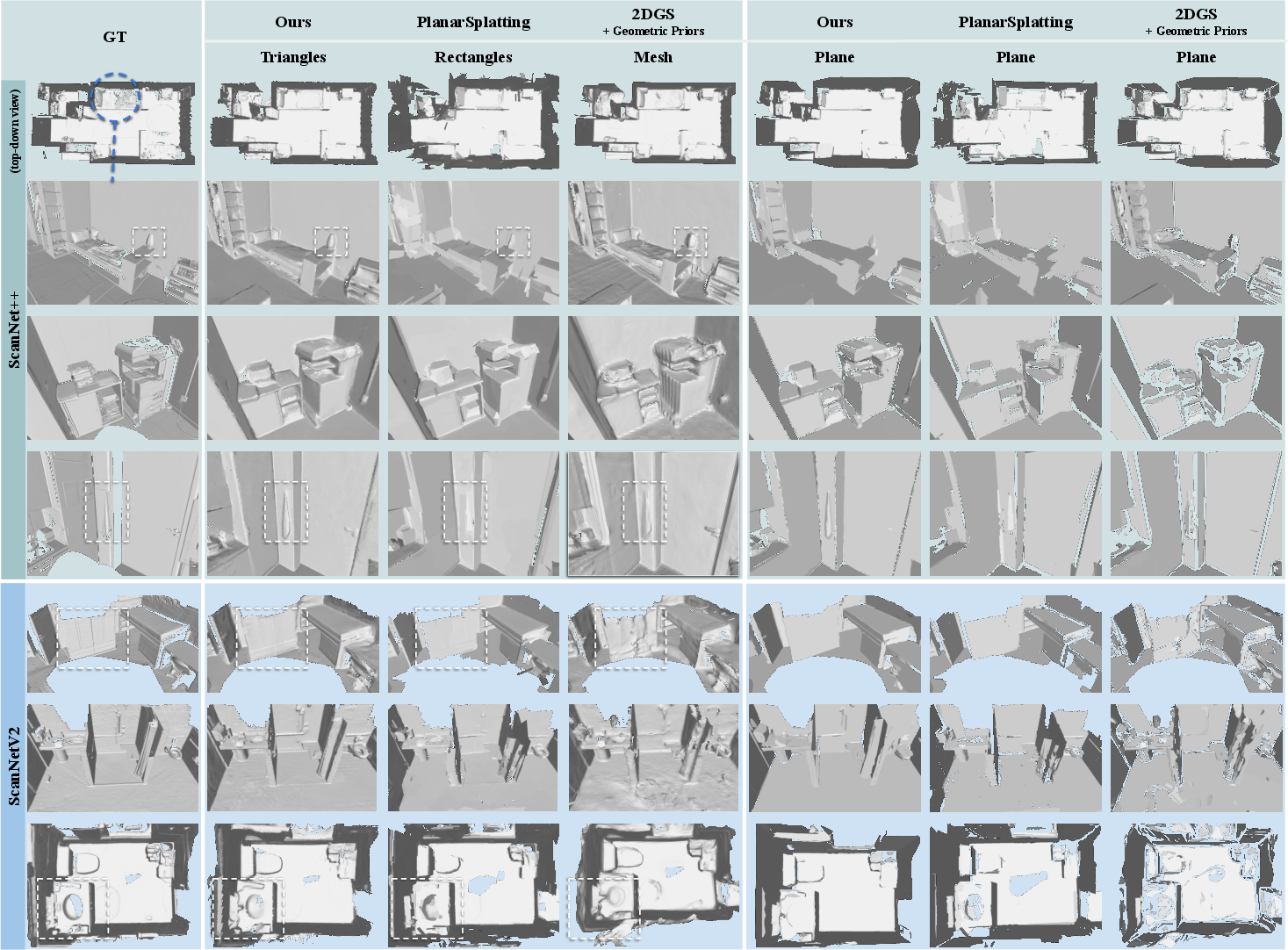

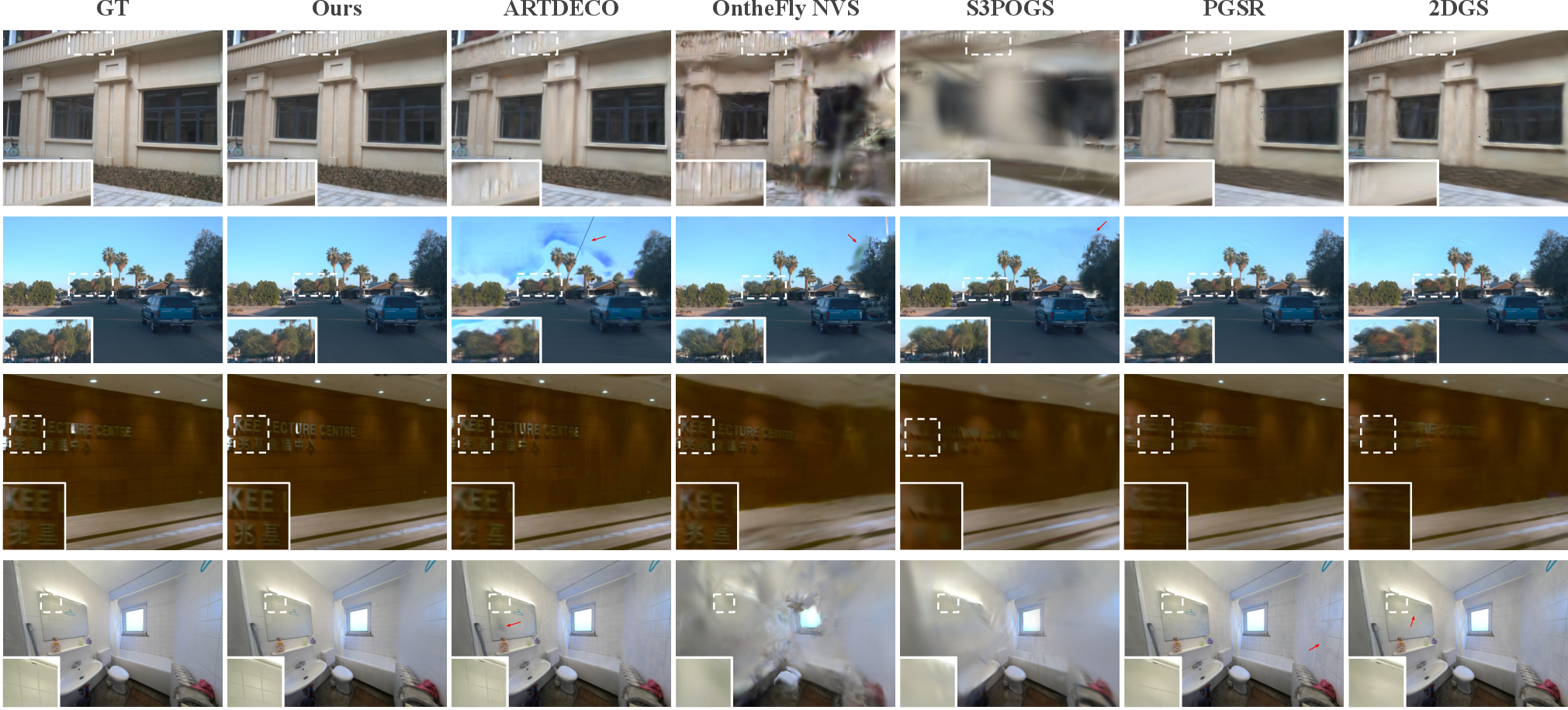

- Better geometry: Compared to strong baselines, PLANING recovers sharper, cleaner surfaces and edges. It achieves lower geometric error (for example, dense-mesh Chamfer-L2 improves by 18.52% over PGSR) and higher F-score on several datasets.

- Better or equal visual quality: It produces more realistic images (higher PSNR and SSIM, lower LPIPS) than other streaming methods, and often matches or surpasses offline methods. The abstract reports a 1.31 dB PSNR gain over ARTDECO.

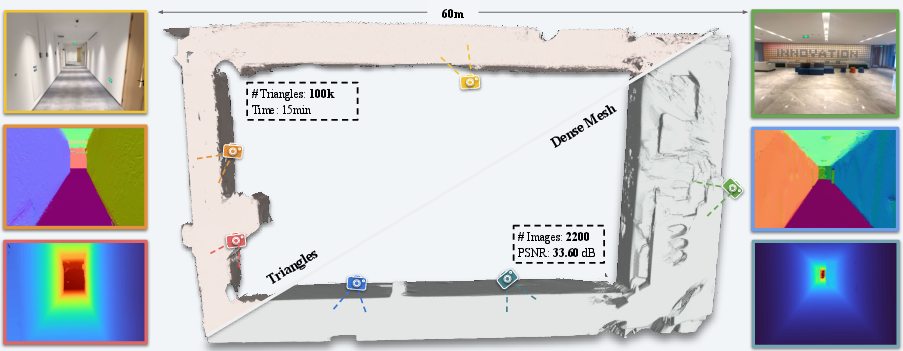

- Much faster: It reconstructs ScanNetV2 scenes in under 100 seconds, more than 5× faster than 2D Gaussian Splatting, while keeping quality high.

- Fewer primitives: It uses far fewer triangles/Gaussians than other methods, which reduces memory and speeds up processing.

- Ready for practical use: The clear planar structure makes it suitable for downstream tasks like pose refinement and creating simulation environments for robots.
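For readers unfamiliar with the metrics named in the findings above, the following sketch shows one common way to compute them. Conventions differ between papers (squared versus unsquared distances, sum versus average of the two directions), so the exact definitions here are assumptions and may not match the paper's evaluation code. The point sets and images in the example are made up.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _nearest(p: Point, cloud: Sequence[Point]) -> float:
    """Brute-force distance from p to its nearest neighbour in cloud."""
    return min(math.dist(p, q) for q in cloud)


def chamfer(pred: Sequence[Point], gt: Sequence[Point]) -> float:
    """Symmetric Chamfer distance: mean nearest-neighbour distance, both ways."""
    to_gt = sum(_nearest(p, gt) for p in pred) / len(pred)
    to_pred = sum(_nearest(q, pred) for q in gt) / len(gt)
    return 0.5 * (to_gt + to_pred)


def f_score(pred: Sequence[Point], gt: Sequence[Point], tau: float) -> float:
    """Harmonic mean of precision and recall at distance threshold tau."""
    precision = sum(_nearest(p, gt) <= tau for p in pred) / len(pred)
    recall = sum(_nearest(q, pred) <= tau for q in gt) / len(gt)
    if precision + recall == 0.0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


def psnr(pred: Sequence[float], target: Sequence[float], peak: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for flattened images in [0, peak]."""
    mse = sum((a - b) ** 2 for a, b in zip(pred, target)) / len(pred)
    return float("inf") if mse == 0.0 else 10.0 * math.log10(peak ** 2 / mse)


# Toy data, purely illustrative.
pred_pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
gt_pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.1)]
cd = chamfer(pred_pts, gt_pts)
fs_strict = f_score(pred_pts, gt_pts, tau=0.05)
fs_loose = f_score(pred_pts, gt_pts, tau=0.2)
quality_db = psnr([0.0, 0.0], [0.1, 0.1])
```

Lower Chamfer distance and higher F-score indicate better geometry, while higher PSNR indicates a rendered image closer to the reference.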

Why It Matters

- For AR/VR and robots: Fast and accurate 3D reconstruction helps devices understand and interact with the real world in real time. Clean planes and sharp edges are crucial for reliable navigation and object placement.

- For large-scale modeling: Because it keeps the model compact and updates efficiently, PLANING can scale to bigger environments without exploding memory or runtime.

- For simulation and training: The method produces clean, flat surfaces that are “simulation-ready,” which is great for training robot motion policies (like walking or climbing stairs) in realistic environments.

- For research and applications: The clear split between geometry (triangles) and appearance (Gaussians) is a simple but powerful idea that could inspire future 3D methods to be both accurate and efficient.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, formulated to guide future research.

- Generalization beyond planar/Manhattan-like scenes: Assess reconstruction fidelity for highly curved, organic, or cluttered geometries (e.g., foliage, sculptures, wires), where triangle-based planar bias may be suboptimal.

- Materials and appearance complexity: Evaluate performance on scenes with strong specularities, transparency, interreflections, or subsurface scattering; current SH-based appearance may be insufficient for complex BRDFs.

- Dynamic scenes and non-rigid motion: Extend or constrain the method for scenes with moving objects/people; analyze how the pipeline prevents mapping dynamic content and its impact on pose/geometry stability.

- Robustness to prior quality: Quantify sensitivity to inaccuracies in feed-forward priors (MASt3R depth/normals, pose/loop-closure models) across out-of-distribution, low-light, or motion-blurred inputs; provide ablations without priors.

- Monocular scale handling: Clarify how metric scale is established/stabilized across sequences and datasets; measure scale errors and drift under varying prior accuracy.

- Pose–map coupling: The global map update applies a transform from a triangle’s source keyframe; analyze errors when many poses contributing to the same area change inconsistently and whether re-fitting is needed.

- Loop closure failure modes: Characterize how often loop closure and global BA fail or produce suboptimal corrections, and how the mapper recovers from tracking loss or wrong relocalization.

- Real-time constraints and latency: Report frame rates, per-frame latency, and overlap of frontend/backend/mapper on standard GPUs; quantify contention between tracking and mapping under streaming loads.

- Memory and compute profiling: Provide detailed memory footprints (triangles, Gaussians, features, SH), CUDA kernel costs for the triangle rasterizer, and scaling curves in image resolution and primitive count.

- Large-scale scalability: Beyond the corridor demo, benchmark dynamic loading and CPU–GPU swapping overheads on kilometer-scale trajectories; report throughput, stutters, and quality degradation.

- Outdoor robustness: Systematically evaluate on outdoor scenes with vegetation, sky, and moving traffic (beyond small subsets of KITTI/Waymo), where plane-guided constraints are weaker.

- Triangle rasterizer stability: Analyze numerical stability, anti-aliasing, and gradient behavior of the edge-preserving contribution function (δ, σ); provide guidelines or auto-tuning for these hyperparameters.

- Occlusion and depth sorting complexity: Quantify the computational overhead of per-pixel ray–triangle intersections and subdivision-based depth sorting, especially at high resolutions or dense geometry.

- Primitive initialization sensitivity: Study the impact of LoG threshold τ_a and vicinity function V(d) hyperparameters on missed structures (low-frequency regions) vs. redundancy; propose adaptive or learned strategies.

- Pruning risks: Evaluate false positives/negatives when pruning triangles by opacity (<0.5) and the system’s ability to recover wrongly pruned geometry in later frames.

- Geometry–appearance interference: Although decoupled, gradients from Gaussians still backpropagate to triangles; bound or diagnose cases where appearance induces geometric drift, especially with weak or erroneous priors.

- Thin structures: Quantify reconstruction of thin or high-frequency features (railings, cables, chair legs) and whether triangle initialization and spatial filtering suppress them inadvertently.

- Topology and watertightness: Assess whether the “triangle soup” yields consistent, watertight surfaces for mesh extraction and simulation; measure self-intersections and holes.

- Plane extraction robustness: Provide a detailed evaluation of the coarse-to-fine plane extraction’s parameter sensitivity, failure modes (partial/occluded planes), and accuracy on full plane sets (not only top-20).

- Pose refinement with planes: Quantify when plane-guided pose constraints help vs. hurt (e.g., non-planar scenes, slanted/curved surfaces incorrectly detected as planes); explore robust plane weighting.

- Appearance model limitations: Compare SH with more expressive view-dependent models (e.g., learned BRDFs) for challenging materials; analyze trade-offs with streaming efficiency.

- Hyperparameter auto-tuning: Many design choices (K_min/K_max per triangle, δ, σ, opacity schedules) are fixed; explore self-calibration or meta-learned schedules to reduce manual tuning and dataset sensitivity.

- Training-time variance: Report run-to-run variability (random seeds, keyframe selection), statistical significance of improvements, and confidence intervals for metrics.

- Fairness of comparisons: Reconcile iteration budgets and priors across methods; analyze time–quality trade-offs to ensure conclusions don’t hinge on favorable optimization schedules.

- Intrinsic calibration errors: Investigate sensitivity to unknown or drifting intrinsics (focal length, distortion), especially for casual mobile captures; integrate online intrinsic refinement if needed.

- Handling of lighting/exposure changes: Evaluate robustness to auto-exposure/white-balance variations across frames and their impact on both geometry and appearance.

- Catastrophic forgetting in long streams: Examine whether earlier map regions degrade as new areas are optimized (streaming continual learning), and propose mechanisms to preserve past quality.

- Degenerate triangle handling: Describe safeguards against triangle collapse, flipping, or near-collinearity; propose regularizers to maintain well-conditioned triangles.

- Occluder-aware insertion: Ensure new triangles are not initialized behind existing surfaces; add explicit occlusion checks during candidate selection and quantify resulting improvements.

- Multi-sensor extensions: Explore integration with IMU, stereo, or depth sensors for improved robustness and scale; clarify how the framework generalizes to multi-camera rigs.

- Simulation-readiness evaluation: Beyond qualitative locomotion demos, quantify contact accuracy (normal consistency, penetration rates), friction assumptions, and RL sample efficiency compared to ground-truth CAD or scans.

- Reproducibility and availability: Specify code/data release status, training scripts, and exact third-party model versions to facilitate replication and comparison.
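The pruning rule questioned in the list above (triangles with opacity below 0.5 are removed) pairs naturally with the entropy loss on opacity described in the glossary. The sketch below assumes the loss is a binary entropy, which pushes opacities toward 0 or 1 so that a 0.5 cut-off becomes less ambiguous. Both function names are hypothetical and the opacities are made-up values.

```python
import math
from typing import List, Sequence


def opacity_entropy(opacity: float, eps: float = 1e-6) -> float:
    """Binary entropy of one opacity value; largest at 0.5, near zero at 0 or 1."""
    o = min(max(opacity, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -o * math.log(o) - (1.0 - o) * math.log(1.0 - o)


def mean_entropy_loss(opacities: Sequence[float]) -> float:
    """Average entropy over all triangles (assumed form of the regularizer)."""
    return sum(opacity_entropy(o) for o in opacities) / len(opacities)


def prune_by_opacity(opacities: Sequence[float], threshold: float = 0.5) -> List[int]:
    """Return indices of triangles to keep (opacity >= threshold)."""
    return [i for i, o in enumerate(opacities) if o >= threshold]


opacities = [0.9, 0.4, 0.5, 0.1]  # illustrative values only
kept = prune_by_opacity(opacities)
loss = mean_entropy_loss(opacities)
```

Logging which indices are dropped at each pruning step, and whether triangles reappear nearby in later frames, would be one simple way to study the false-pruning question raised above.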

Practical Applications

Immediate Applications

Below is a concise set of specific, deployable use cases that can be built with the paper’s methods and findings today, along with sector tags and key dependencies.

- Plane-guided pose refinement plug-in for SLAM (robotics, AR/VR, mapping)

- What: Drop-in module that feeds extracted planar constraints back into a visual SLAM frontend to reduce drift and improve global consistency during mapping.

- How: Use the triangle-derived planar map and point-to-plane alignment loss described in the paper to refine poses as frames stream in.

- Tools/products/workflows: “PLANING Pose-Refiner” SDK; ROS2 node; integration with ORB-SLAM/VINS-Fusion-like systems; an on-device or edge service.

- Assumptions/dependencies: Reliable feed-forward priors (e.g., MASt3R, Pi3); largely static scenes; adequate feature coverage; GPU/strong edge compute for real-time updates.

- Real-time AR occlusion and physics-ready surfaces (AR/VR, mobile software)

- What: Use explicit triangles as collision meshes for occlusion, contact, and physics in mobile AR apps and VR experiences.

- How: Export triangles from the streaming mapper to an application engine (Unity/Unreal) as lightweight, editable meshes.

- Tools/products/workflows: “Triangle Mesh Exporter” runtime component; ARKit/ARCore integration; physics plugins using triangle meshes for contact.

- Assumptions/dependencies: Stable tracking and reasonably planar indoor structures; device GPU; privacy compliance for indoor scanning.

- Rapid indoor scanning to floor plans + textured meshes (AEC/BIM, real estate, facility management)

- What: Convert phone video into compact planes and meshes for layout understanding, space planning, or virtual tours.

- How: Run streaming reconstruction; export plane abstractions via the paper’s coarse-to-fine plane extraction and depth-fused dense meshes.

- Tools/products/workflows: “PLANING Capture” mobile app; cloud pipeline for triangle-to-BIM conversion; floor plan extractor.

- Assumptions/dependencies: Monocular capture quality; adequate lighting; reliance on priors for normals/depth; domain tuned to indoor, planar-rich scenes.

- Simulation-ready environment generation for RL locomotion training (robotics)

- What: Auto-generate high-fidelity, lightweight terrains and stairs (triangles) to train locomotion policies with PPO in Isaac environments.

- How: Export compact triangles; load into Isaac Lab/Sim; run PPO training as demonstrated in the paper (humanoid/quadruped).

- Tools/products/workflows: “RL Terrain Exporter”; Isaac Lab assets; automated pipeline from capture → triangle mesh → sim-ready environment.

- Assumptions/dependencies: Accurate contact geometry; static scenes; sim engine compatibility; robotics teams familiar with PPO/Isaac workflows.

- Large-scale corridor and campus mapping with dynamic loading (security, FM/operations)

- What: Map long indoor spaces from thousands of frames using GPU/CPU parameter swapping, maintaining responsiveness.

- How: Use the paper’s dynamic loading strategy to scale beyond single-GPU memory constraints.

- Tools/products/workflows: “Dynamic Loader” service; edge/cloud-recon split; job scheduler for long sequences.

- Assumptions/dependencies: Memory-aware deployment; loop closure available; consistent camera motion; static or low-dynamics environments.

- Photorealistic telepresence and site walkthroughs (media/communications, real estate)

- What: Generate high-quality novel views quickly for remote walkthroughs or client reviews.

- How: Leverage neural Gaussians anchored to triangles for fast rendering; stream updates as the scene is captured.

- Tools/products/workflows: “PLANING Viewer” web/mobile client; low-latency NVS rendering service; collaborative annotations.

- Assumptions/dependencies: Stable pose estimation; good coverage of viewpoints; bandwidth for streaming assets.

- Edge-friendly mapping for robots and IoT (manufacturing, logistics)

- What: Reduce on-board compute by using the compact triangle-Gaussian representation and redundancy-aware primitive initialization.

- How: Deploy mapper with photometric/spatial filtering and regular pruning; stream only compact plane outputs for downstream planning.

- Tools/products/workflows: Microservices for map thinning; ROS integration for plane-based navigation; lightweight SLAM enhancements.

- Assumptions/dependencies: Onboard GPU or accelerator; reliable priors; environments with usable planar cues (floors, walls, shelves).

- Inspection and QA of scans via structural metrics (AEC, insurance)

- What: Automated quality checks using primitive count, opacity pruning statistics, and planar fidelity/accuracy metrics.

- How: Report the paper’s metrics (Chamfer-L2, F-score, planar fidelity/accuracy) to flag coverage gaps and reconstruction errors.

- Tools/products/workflows: “Scan QA Dashboard”; batch reports; thresholds tuned per site type.

- Assumptions/dependencies: Ground-truth or reference planes for benchmarking; standardized capture protocols.

- Dataset bootstrapping with consistent planes and meshes (academia)

- What: Generate training/validation sets with explicit geometry for SLAM, NVS, and scene understanding research.

- How: Use the hybrid pipeline to produce aligned planes, meshes, and camera trajectories; include per-frame priors.

- Tools/products/workflows: “PLANING Data Factory”; scripts to export COCO-like plane annotations; open benchmarking integrations.

- Assumptions/dependencies: Licensing of priors/models; documentation for reproducibility; storage/bandwidth for dissemination.

- Fast digital twin creation for retail/warehouses (digital twins)

- What: Build lightweight digital twins for layout optimization, line-of-sight analysis, and safety planning.

- How: Triangles for structure + neural Gaussians for textures; export to twin platforms for analytics.

- Tools/products/workflows: “Twin Exporter” connector; analytics dashboards; CAD/BIM sync.

- Assumptions/dependencies: Up-to-date scans; policy-compliant handling of indoor imagery; repeatable capture schedule.
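The point-to-plane alignment mentioned under the plane-guided pose refinement item above can be sketched in a few lines. This is a deliberately reduced version: a full refinement would optimize all six pose parameters, whereas this example only solves for the best shift along the plane normal, which has a closed form. The plane is written as `n . p + d = 0` with a unit normal, and all names are illustrative.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def residuals(points: Sequence[Vec3], normal: Vec3, offset: float) -> List[float]:
    """Signed point-to-plane distances n . p + d (normal assumed unit length)."""
    return [dot(normal, p) + offset for p in points]


def point_to_plane_loss(points: Sequence[Vec3], normal: Vec3, offset: float) -> float:
    """Mean squared point-to-plane distance."""
    r = residuals(points, normal, offset)
    return sum(v * v for v in r) / len(r)


def best_shift_along_normal(points: Sequence[Vec3], normal: Vec3, offset: float) -> float:
    """Shift s minimizing the loss when every point moves by s * normal."""
    r = residuals(points, normal, offset)
    return -sum(r) / len(r)


def shift_points(points: Sequence[Vec3], normal: Vec3, s: float) -> List[Vec3]:
    return [tuple(p[i] + s * normal[i] for i in range(3)) for p in points]


# Points observed slightly above the floor plane z = 0 (toy values).
floor_normal, floor_offset = (0.0, 0.0, 1.0), 0.0
observed = [(0.0, 0.0, 0.2), (1.0, 1.0, 0.4)]

before = point_to_plane_loss(observed, floor_normal, floor_offset)
s = best_shift_along_normal(observed, floor_normal, floor_offset)
after = point_to_plane_loss(shift_points(observed, floor_normal, s),
                            floor_normal, floor_offset)
```

The loss drops after the shift because the systematic offset from the plane is removed. What remains is the scatter of the points around it.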

Long-Term Applications

Below are use cases that benefit from further research, scaling, integration, or standardization before broad deployment.

- Consumer-grade home scanning apps with AR layout and furnishing (daily life, retail)

- What: One-tap room capture that returns editable planes (walls, floors) and realistic textures; smart placement of furniture.

- Why long-term: Robustness in cluttered, low-light, dynamic scenes; UX polish; device diversity and on-device acceleration.

- Dependencies: Strong priors on diverse households; privacy/consent flows; integration with AR platforms.

- BIM/CAD round-trip editing with triangle anchors (AEC/BIM)

- What: Convert captured triangles into parametric BIM elements; support bidirectional edits (as-built vs. design).

- Why long-term: Need robust parametric fitting across non-ideal geometry; standards interoperability (IFC).

- Dependencies: Accurate plane segmentation; tolerances; CAD plugins; standardization of triangle-Gaussian formats.

- City-scale AR cloud and indoor positioning using plane maps (mapping, AR cloud)

- What: Use compact plane maps to anchor AR content and indoor navigation across large facilities and campuses.

- Why long-term: Persistent maps, multi-user synchronization, drift control at scale.

- Dependencies: Loop closures across sessions; cloud infra; privacy/security policies for indoor scans.

- Autonomous driving and mobile robotics scene modeling (mobility, robotics)

- What: Decoupled appearance/geometry improves map reliability for planning under sparse/light-challenged conditions.

- Why long-term: Generalization to highly non-planar, dynamic outdoor scenes; multi-sensor fusion (LiDAR, radar).

- Dependencies: Robust priors outdoors; moving-object handling; standards for sensor fusion.

- Multi-agent collaborative mapping with decoupled updates (robotics, warehousing)

- What: Robots contribute local triangles/Gaussians to a shared global map in real time.

- Why long-term: Conflict resolution, consistent global optimization across agents, bandwidth constraints.

- Dependencies: Distributed SLAM protocols; edge/cloud orchestration; synchronization guarantees.

- Disaster response and emergency site digitization (public safety, policy)

- What: Rapid capture of damaged structures to assess stability, plan routes, and simulate rescue operations.

- Why long-term: Robustness under debris, dust, low light, and motion; training responders on workflows.

- Dependencies: Ruggedized hardware; pre-trained priors for adverse conditions; coordination with authorities.

- Insurance claims and property assessments (finance/insurance)

- What: Streamlined documentation of interior damage with accurate geometry and textures; automated report generation.

- Why long-term: Regulatory acceptance; standardized measurement accuracy; chain-of-custody for imagery.

- Dependencies: Certification of reconstruction accuracy; privacy safeguards; audit trails.

- Generative scene editing anchored on explicit surfaces (software, media)

- What: Use triangle surfaces as editable canvases for generative inpainting, texture synthesis, or layout correction.

- Why long-term: Reliable coupling between generative models and explicit geometry; UX for creators.

- Dependencies: APIs bridging reconstruction and generative diffusion models; content safety filters.

- Drone-based infrastructure inspection at scale (energy, transportation)

- What: Lightweight streaming reconstruction for bridges, corridors, substations; export planes for defect detection.

- Why long-term: Complex outdoor geometry, occlusions, moving elements; flight planning integration.

- Dependencies: Multi-view coverage; robust priors outdoors; regulatory flight permissions.

- Healthcare facility mapping for accessibility and fall-risk analysis (healthcare)

- What: Capture clinics/hospitals to analyze slopes, stairs, narrow passages; simulate patient mobility training.

- Why long-term: Clinical validation; integration with EHR/operations systems.

- Dependencies: HIPAA-compliant handling; standardized risk metrics; staff training.

- Education and cultural heritage digitization (academia, museums)

- What: Stream-to-twin digitization of labs, galleries, historic sites for remote learning and preservation.

- Why long-term: Rights management; variation in lighting/textures; curation tools.

- Dependencies: Institutional policies; archival formats; accessibility requirements.

- Standardization and governance of hybrid triangle–Gaussian formats (policy, standards)

- What: Define interoperable file formats and evaluation metrics for decoupled geometry/appearance reconstructions.

- Why long-term: Requires multi-stakeholder consensus and alignment with existing standards (IFC, USD).

- Dependencies: Industry bodies; test suites; open datasets and benchmarks.

- Energy and sustainability benefits via compute efficiency (energy, sustainability)

- What: Lower runtime and primitive count reduce costs and carbon footprint of large-scale mapping.

- Why long-term: Need lifecycle accounting; broad deployment across enterprises.

- Dependencies: Measurement frameworks; green SLAs; hardware efficiency targets.

Common assumptions and dependencies that affect feasibility

- Reliance on feed-forward geometric and pose priors (e.g., MASt3R, Pi3, VGGT) for stable initialization and streaming robustness; performance drops if priors are weak or domain-shifted.

- Best suited to static or low-dynamics scenes, with strong planar structures (indoor environments); additional work needed for highly non-planar or dynamic outdoor scenes.

- Requires GPU or accelerated hardware for real-time streaming and rendering; dynamic loading mitigates memory limits but needs engineering.

- Privacy, consent, and data governance are critical for indoor scene capture; enterprises need compliant workflows and retention policies.

- Integration effort with downstream engines (Unity/Unreal/Isaac), SLAM stacks (ROS), and CAD/BIM ecosystems; potential need for new format standards to represent decoupled geometry and appearance.

Glossary

- 3D Convex Splatting (3DCS): An explicit primitive-based rendering approach using convex shapes for differentiable rasterization. "Following 3D Convex Splatting (3DCS)~\cite{held20253d}, we further introduce two learnable triangle-wise parameters"

- 3D Gaussian Splatting (3DGS): An explicit representation that renders scenes using many anisotropic Gaussian primitives for real-time, high-fidelity view synthesis. "3D Gaussian Splatting (3DGS)~\cite{kerbl20233d} has emerged as a compelling explicit representation, offering high visual fidelity with efficient rendering"

- alpha compositing: A rendering technique that blends overlapping primitives based on their opacity, often in a front-to-back order. "Finally, triangles are rendered into depth and normal maps using front-to-back alpha compositing:"

- anisotropic Gaussian primitives: Ellipsoidal Gaussians with direction-dependent scales used as explicit rendering elements. "3D Gaussian Splatting (3DGS) employs explicit anisotropic Gaussian primitives"

- barycenter: The centroid of a triangle used as the origin of a local coordinate frame. "where the barycenter is set as the origin of the local frame."

- bundle adjustment: A global non-linear optimization of camera poses and structure to improve reconstruction consistency. "The backend subsequently performs loop closure detection~\cite{wang2025pi3} and global bundle adjustment~\cite{murai2025mast3r} over keyframes"

- Chamfer Distance: A geometric error metric computing the average nearest-neighbor distance between two point sets. "we evaluate plane geometry using Chamfer Distance and F-score."

- Chamfer-L2: The L2 variant of Chamfer Distance used to quantify geometric reconstruction accuracy. "PLANING improves dense mesh Chamfer-L2 by 18.52\% over PGSR"

- differentiable triangle rasterizer: A rendering module that computes triangle contributions and gradients to enable learning from depth/normal supervision. "We implement an efficient differentiable triangle rasterizer that enables direct supervision of triangles using prior normals and depths."

- entropy loss: A regularization on opacity encouraging confident, non-ambiguous contributions from primitives. "$\mathcal{L}_{\text{o}}$ is an entropy loss on triangle opacity, following~\cite{guedon2024sugar}."

- F-score: A harmonic mean of precision and recall used to assess geometric reconstruction quality. "we evaluate plane geometry using Chamfer Distance and F-score."

- feed-forward models: Pretrained networks that produce scene predictions in a single pass without per-scene optimization. "Our framework leverages feed-forward models as learned priors to enable robust camera pose estimation"

- Gaussian smoothing kernel: A convolution kernel that blurs an image using a Gaussian function to suppress noise or high-frequency content. " denotes a Gaussian smoothing kernel."

- Laplacian of Gaussian (LoG): An edge detection operator combining Gaussian smoothing with a Laplacian to highlight intensity changes. "using the Laplacian of Gaussian (LoG) operator "

- loop closure detection: Identifying revisited locations to correct accumulated drift in SLAM systems. "The backend subsequently performs loop closure detection~\cite{wang2025pi3}"

- LPIPS: Learned Perceptual Image Patch Similarity, a perceptual metric for image similarity based on deep features. "we use standard metrics including PSNR, SSIM~\cite{wang2004image}, and LPIPS~\cite{zhang2018unreasonable}"

- Marching Cubes: A classic algorithm to extract polygonal meshes from implicit fields or volumetric data. "mesh extraction using Marching Cubes~\cite{lorensen1998marching}."

- monocular image sequences: Ordered single-camera frames used for reconstruction without stereo or multi-camera setups. "Streaming reconstruction from monocular image sequences remains challenging"

- Neural Radiance Fields (NeRF): Implicit neural scene representations that model view-dependent color and density for volumetric rendering. "Neural Radiance Fields (NeRF) \cite{mildenhall2021nerf} established a neural rendering milestone by using MLPs for ray-based synthesis."

- neural Gaussians: Gaussians whose attributes (e.g., scale, rotation) are decoded from learned features to model appearance. "we introduce neural Gaussians to flexibly encode view-dependent appearance."

- opacity: A per-primitive parameter controlling transparency during compositing. "Each triangle is also associated with a learnable opacity parameter, analogous to 3DGS~\cite{kerbl20233d}."

- photometric filter: A selection scheme that prioritizes high-frequency or poorly reconstructed regions based on image discrepancies. "we first apply photometric filter, which prioritizes high-frequency regions and poorly reconstructed areas"

- Planar Accuracy: A metric evaluating how accurately reconstructed planes align with annotated planar regions. "we further assess the top-20 largest planes using Planar Fidelity, Planar Accuracy, and Planar Chamfer metrics."

- Planar Chamfer: Chamfer Distance computed specifically over detected planar structures. "we further assess the top-20 largest planes using Planar Fidelity, Planar Accuracy, and Planar Chamfer metrics."

- Planar Fidelity: A measure of how well reconstructed planes preserve planar structure details. "we further assess the top-20 largest planes using Planar Fidelity, Planar Accuracy, and Planar Chamfer metrics."

- PPO (Proximal Policy Optimization): A reinforcement learning algorithm for training policies with clipped objective functions. "trained two motion policies using Proximal Policy Optimization (PPO) within the Isaac Lab framework"

- PSNR: Peak Signal-to-Noise Ratio, a standard metric for image reconstruction fidelity. "surpasses ARTDECO by 1.31 dB PSNR"

- quaternion: A 4D representation of 3D rotations used to parameterize Gaussian orientation. "a base quaternion $\mathbf{q}_{\text{g}}\in\mathbb{R}^4$"

- rasterization: The process of converting primitives into pixel-based images efficiently on GPUs. "leveraging efficient rasterization to enable real-time reconstruction"

- ray-triangle intersection: A geometric computation to find where a camera ray intersects a triangle for accurate depth rendering. "we adopt an explicit ray-triangle intersection strategy"

- signed distance fields (SDFs): Scalar fields giving distance to the nearest surface, used for geometry modeling. "integrate neural signed distance fields (SDFs) with 3DGS."

- spherical harmonics (SH) coefficients: Basis function coefficients modeling view-dependent color on Gaussians. "spherical harmonics (SH) coefficients, opacity $\alpha_{\text{g}}\in\mathbb{R}$"

- SSIM: Structural Similarity Index, a perceptual metric assessing image quality based on structural information. "we use standard metrics including PSNR, SSIM~\cite{wang2004image}, and LPIPS~\cite{zhang2018unreasonable}"

- surfels: Small surface elements (or disk-like primitives) used for explicit scene representation. "using 2D surfels for coarse structure and 3D Gaussians for fine detail."

- tangent plane: The plane locally approximating a surface at a point, used to parameterize triangle coordinates. "Under this construction, the three vertices can be expressed in the local tangent plane"

- volumetric rendering: Rendering by integrating color and density along rays through a volume. "the high computational cost of per-ray volumetric rendering limits its suitability for real-time applications."

- visual SLAM: Simultaneous Localization and Mapping using visual data to estimate camera motion and map structure. "Classical visual SLAM frameworks provide robust online tracking and mapping"
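As a worked illustration of the front-to-back alpha compositing entry above, the sketch below blends per-primitive values (for example depths) along a single ray. It assumes the samples are already sorted from near to far, and the early-exit threshold `min_transmittance` is an illustrative choice rather than a value from the paper.

```python
from typing import Iterable, Tuple


def composite_front_to_back(
    samples: Iterable[Tuple[float, float]],
    min_transmittance: float = 1e-4,
) -> Tuple[float, float]:
    """Blend (value, alpha) pairs sorted near to far along one ray.

    Returns the composited value and the accumulated coverage.
    """
    total = 0.0
    transmittance = 1.0  # fraction of light not yet blocked
    for value, alpha in samples:
        total += transmittance * alpha * value
        transmittance *= 1.0 - alpha
        if transmittance < min_transmittance:
            break  # everything behind is effectively hidden
    return total, 1.0 - transmittance


# A half-transparent surface at depth 1 in front of an opaque one at depth 2.
depth, coverage = composite_front_to_back([(1.0, 0.5), (2.0, 1.0)])
```

Each primitive contributes in proportion to its own opacity and to how much of the ray the primitives in front of it left unblocked, so an opaque surface fully hides whatever lies behind it.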
