First Proof

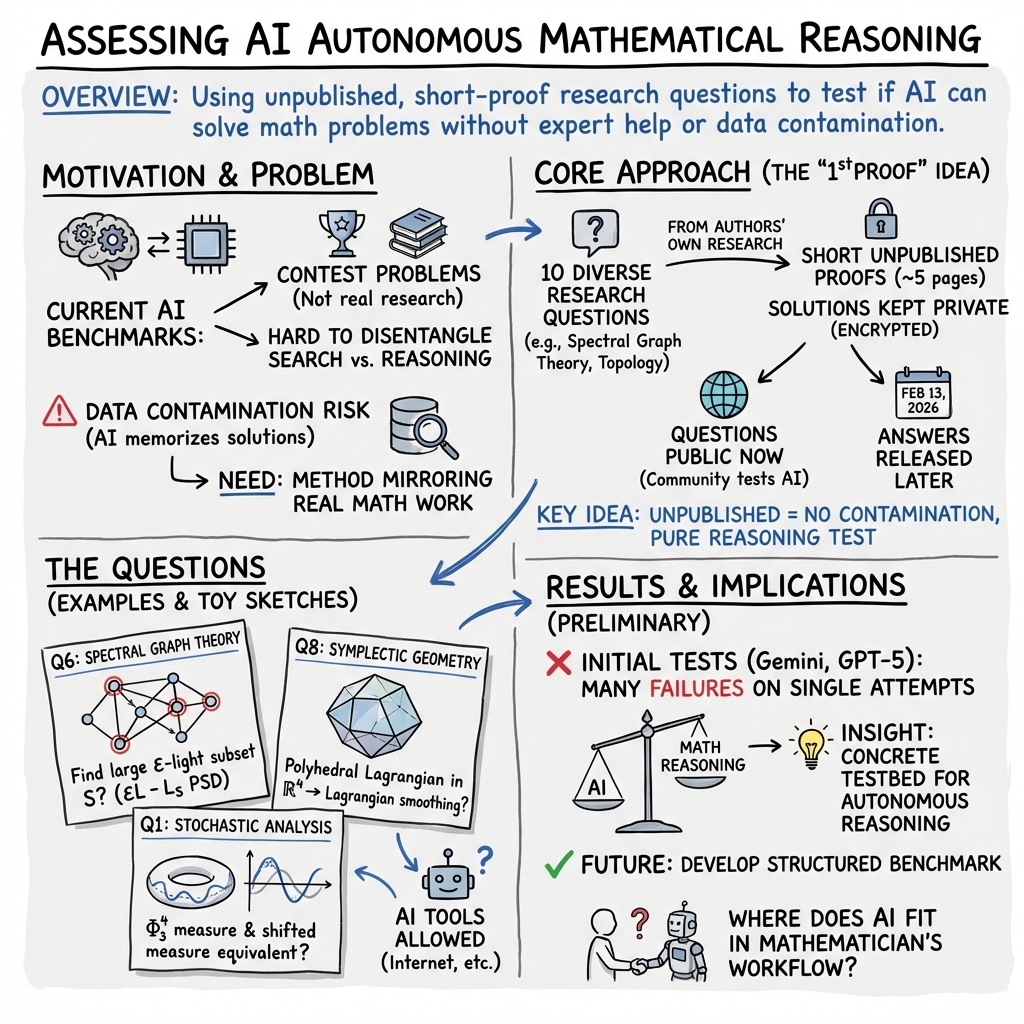

Abstract: To assess the ability of current AI systems to correctly answer research-level mathematics questions, we share a set of ten math questions which have arisen naturally in the research process of the authors. The questions had not been shared publicly until now; the answers are known to the authors of the questions but will remain encrypted for a short time.

Explain it Like I'm 14

Overview

This paper is about testing how well today’s AI systems can solve real, research-level math problems on their own. Instead of using contest-style questions or puzzles, the authors collected ten genuine math questions that came up during their own research. They’ve kept the official answers secret for a short time so people can try using AI to solve them without accidentally finding the solutions online.

The title “First Proof” is a playful nod to baking: in bread-making, the “first proof” is when dough is left to rise. Similarly, the authors are letting this idea “rise” in the math and AI community before building a more polished, formal test.

Objectives and Research Questions

The paper focuses on simple but important goals:

- Figure out how to fairly test whether AI can solve real math research questions without human help.

- Use questions that are truly like what mathematicians work on, not simplified problems designed just for grading.

- Avoid “data contamination,” meaning the answers aren’t already on the internet where an AI could just find them.

- Learn how people and AI interact when solving such problems, and what good testing rules should look like.

The actual math questions cover many areas of advanced math. You don’t need to know the details; the idea is that they are the kinds of questions professional mathematicians actually face, such as in algebra, geometry, probability, and graph theory.

Approach and Methods

Here’s how the authors set up their test:

- They collected ten math questions that arose naturally during their research. Each one has a short, human-made proof (around five pages), but the answers haven’t been posted online.

- They encrypted the answers and posted them at https://1stproof.org. The answers will be released on February 13, 2026.

- They invited the community to try solving the questions with AI and to share complete transcripts of their attempts. This helps everyone learn what prompts, formats, and grading methods work best.

- They allowed AI systems to use outside resources (like internet searches) to simulate real-life conditions—but since the answers aren’t public, the AI can’t just copy a solution.

- They did small, preliminary tests using advanced public AI models (like GPT-5.2 Pro and Gemini 3.0 Deepthink) in a “one-shot” manner: they asked each model to produce an answer once, without back-and-forth hints. The goal was to keep things fair and simple.

To reduce the chance that AI providers would train on the questions, the authors disabled data-sharing settings. They also explain that human experts will need to grade the proofs, because the final answers aren’t neat numbers or short expressions; there can be multiple correct proofs or counterexamples.
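The "post now, reveal later" step can be illustrated with a hash commitment. This is a swapped-in, simpler technique and not the authors' scheme: the paper says only that the answers were encrypted. The function names `commit` and `verify` and the sample answer string are hypothetical, chosen for illustration.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(answer: str) -> Tuple[str, bytes]:
    """Return (digest, nonce). Publish the digest now; keep nonce and answer private."""
    # A random nonce stops anyone from brute-forcing short or guessable answers.
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + answer.encode("utf-8")).hexdigest()
    return digest, nonce


def verify(digest: str, nonce: bytes, answer: str) -> bool:
    """Check a revealed (nonce, answer) pair against the previously published digest."""
    expected = hashlib.sha256(nonce + answer.encode("utf-8")).hexdigest()
    return hmac.compare_digest(expected, digest)


if __name__ == "__main__":
    # Hypothetical answer text, for illustration only.
    published, nonce = commit("hypothetical proof text for question 1")
    print(verify(published, nonce, "hypothetical proof text for question 1"))
    print(verify(published, nonce, "a different proof text"))
```

A commitment like this only proves that an answer existed and was not changed after the digest was published. Unlike encryption, it does not let readers recover the answer from the posted data, so the answer itself must be published at reveal time.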

Main Findings and Why They Matter

While this paper doesn't present final scores or a formal benchmark yet, it shares early observations:

- In one-shot tests, top public AI systems struggled with many of these real research questions.

- The design of their test reduces the chance that AIs are just “searching the web” for answers, since the answers aren’t online.

- The questions are varied and realistic, coming from mathematics as it’s actually done, not from contests. This better reflects creative problem solving in math.

- Human grading is necessary because research problems often have more than one correct solution and require reasoning, not just a final number.

This matters because it helps the community understand the current limits of AI in creative, high-level math. It also points the way to building stronger, fairer tests in the future.

Implications and Potential Impact

The project aims to guide how we evaluate and improve AI for math research:

- It could lead to a new kind of benchmark: fewer questions, but more realistic, carefully curated, and human-graded.

- It may help mathematicians see where AI can be useful today (for tasks like searching papers, checking for mistakes, writing code) and where it still needs improvement (creating and proving novel results).

- By sharing experiences from many attempts, the community can learn better prompting strategies, grading standards, and ways to avoid data contamination.

- The team plans to release a second set of questions and possibly work with AI labs to test models before public release, helping ensure fair, informative evaluations.

In short, this paper starts a careful, community-driven effort to measure and improve AI’s ability to tackle the kind of deep, original thinking that real math research requires.

Knowledge Gaps

Below is a single, consolidated list of concrete gaps the paper leaves unresolved, framed to guide future research and benchmark design.

- Benchmark scope and statistical power: With only 10 problems, there is no analysis of how many items are required to detect meaningful differences between models, estimate confidence intervals, or support statistical comparisons across systems and versions.

- Difficulty calibration and metadata: The paper does not quantify per-problem difficulty, prerequisites, or domain coverage, nor provide metadata (e.g., topic tags, proof length, technique type) to enable stratified evaluation or difficulty-adjusted scoring.

- Grading rubric and inter-rater reliability: No formal criteria for correctness, completeness, rigor, or partial credit are specified, and no plan is provided to measure inter-rater agreement (e.g., kappa) or to resolve disagreements among expert graders.

- Solution format specification: The acceptable format for answers (full proof, counterexample, computational verification, outline with lemmas) is not defined, nor are standards for referencing external results, using computer algebra, or including auxiliary computations.

- Separation of “search” vs “solve” capabilities: The methodology allows unfettered internet access but does not provide controlled variants (e.g., closed-book/offline settings, restricted search) to disentangle retrieval and synthesis from genuine problem-solving.

- One-shot vs interactive protocols: The initial tests were one-shot, but the paper does not define standardized multi-turn interaction protocols, time budgets, or interaction limits, all of which critically affect outcomes and comparability.

- Compute and resource controls: There are no controls or reports for inference-time compute, tool access (code execution, CAS), or model-specific capabilities, making results difficult to reproduce or compare fairly across systems.

- Model versioning and reproducibility: The paper lacks a pre-registered evaluation plan, version pinning, environment specification, and a protocol for repeated runs to quantify variance across queries and sessions.

- Automatic vs human grading: While acknowledging the need for human grading, there is no pathway outlined for partial automation (e.g., proof-checkers, formal verification, unit tests for subclaims), nor criteria for when automation is reliable.

- Post-release contamination management: After solutions are released, the paper does not propose mechanisms to maintain benchmark utility (e.g., new blinded sets, staggered releases, provenance checks) or to track data contamination over time.

- Participant reporting standards: The request for public transcripts is not accompanied by a standardized logging schema (e.g., prompt, system settings, tool usage, timestamps, iterations) to enable comparable and analyzable submissions.

- Baselines and human performance: No human baseline (e.g., time-to-solution for experts vs non-experts) or classical algorithmic baselines are provided to contextualize model performance or to calibrate “research-level” difficulty.

- Coverage and representativeness: The selection (one problem per author, five-page proof cap) may bias toward certain subfields and techniques; there is no analysis of how well the set reflects the “true distribution” of contemporary research tasks.

- Evaluation dimensions beyond correctness: The paper does not propose metrics for solution quality dimensions (e.g., elegance, generality, robustness, error analysis, explanatory clarity) that matter to mathematicians and research workflows.

- Partial progress measurement: There is no scheme to award credit for meaningful partial progress (e.g., reduction to known lemmas, correct intermediate claims, plausible conjectures with evidence) while penalizing gaps and errors.

- Time-to-solution and efficiency metrics: The protocol does not collect or report time-to-solution, tool-call counts, or interaction length, missing critical indicators of practical utility and cost-performance trade-offs.

- Security and privacy assurances: While noting retention policies, the paper does not implement or verify cryptographic commitments, leak detection, or systematic audits to ensure zero exposure of solutions prior to release.

- Licensing and data governance: The paper does not specify licensing for the problem set, expected use constraints, or governance for derivative benchmarks and public leaderboards.

- Machine-readable problem formats: No standardized, machine-readable packaging (e.g., JSON with fields for definitions, assumptions, allowed references) is provided to facilitate automated ingestion, prompting, and grading pipelines.

- Error taxonomy and failure analysis: There is no plan to categorize model errors (e.g., logical gaps, hallucinated citations, misuse of definitions, invalid inference steps) or to publish systematic failure analyses to guide model improvement.

- Generalization and transfer: The paper does not propose methods to assess whether solving one problem generalizes to related problems or whether models can transfer techniques across domains.

- Higher-level research tasks: The paper explicitly excludes question formulation and framework development; however, no roadmap or protocol is proposed for evaluating these capabilities in subsequent phases.

- Ethical considerations for public experiments: There is no guidance on responsible disclosure (e.g., avoiding toxic prompting, plagiarism checks, citation practices) or on credit assignment for AI-assisted solutions.

- Post-hoc training risks: The paper does not address how public sharing of transcripts and solutions might be used for post-training or RLHF by vendors, nor how to prevent overfitting to the benchmark.

- Reference integrity and comparability: The Related Work section cites private benchmarks but does not define criteria to compare results across datasets, nor standardize evaluation dimensions for fair cross-benchmark comparisons.

- Second-set design commitments: The proposed “second set of questions” is mentioned without a pre-registered plan for sampling, blinding, grading, interaction modes, contamination controls, or long-term maintenance.

- Domain-specific constraints: Some problems rely on highly specialized contexts (e.g., Whittaker models, Lagrangian smoothing), yet the paper does not define allowable background references or provide canonical definitions to minimize misinterpretation by models.

- Proof length and complexity constraints: Imposing a five-page limit introduces selection bias; the paper does not analyze how proof-length constraints affect task realism or propose variants to capture longer, multi-lemma research workflows.

- Leaderboards and reporting: No framework is proposed for public leaderboards, standardized reporting (e.g., per-problem scores, error types), or mechanisms to prevent cherry-picking and ensure fair comparison.

These gaps suggest concrete next steps: pre-register evaluation protocols; define grading rubrics and partial-credit schemes; implement controlled online/offline conditions; specify machine-readable problem formats and logging standards; measure inter-rater reliability; calibrate difficulty; collect time and resource metrics; and design contamination-resilient, renewable test sets with transparent governance.
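Two of the gaps above, statistical power with only 10 problems and inter-rater reliability, can be made concrete with a little arithmetic. The sketch below is not from the paper. It computes a Wilson score interval for a pass rate and Cohen's kappa for two graders; the function names and the sample labels are hypothetical.

```python
import math
from typing import Sequence, Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def cohen_kappa(a: Sequence[int], b: Sequence[int]) -> float:
    """Cohen's kappa for two graders' labels on the same items."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(a)
    labels = set(a) | set(b)
    # Fraction of items on which the two graders agree.
    observed = sum(x == y for x, y in zip(a, b)) / n
    # Agreement expected by chance from each grader's label frequencies.
    expected = sum((list(a).count(l) / n) * (list(b).count(l) / n) for l in labels)
    if expected == 1.0:
        return 1.0  # both graders used one identical label throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical outcome: a model solves 3 of the 10 problems.
    print(wilson_interval(3, 10))
    # Hypothetical grades (1 = correct, 0 = incorrect) from two graders.
    print(cohen_kappa([1, 1, 0, 0], [1, 0, 0, 0]))
```

With 3 of 10 problems solved, the 95% Wilson interval runs from roughly 0.11 to 0.60, which shows how little a 10-item set can say about small differences between models.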

Practical Applications

Immediate Applications

Below are deployable ways to use the paper’s methodology and artifacts today, organized by stakeholder and sector. Each item notes tools/workflows that could emerge and key assumptions/dependencies.

- AI evaluation of research-level reasoning (software, academia, policy)

  - Use case: Run head-to-head evaluations of AI systems on the paper’s 10 public, unpublished-at-training-time research questions to measure “first-proof” capability under realistic conditions (browsing allowed, human grading).

  - Tools/workflows: “FirstProofEval” kit combining standardized prompts, transcript logging, contamination audits (pre/post web search traces), and human grading templates.

  - Assumptions/dependencies: Availability of qualified human graders; adherence to the paper’s one-shot vs interactive protocols; careful handling of browsing to avoid leakage from early community posts; legal/ethical compliance for internet use in evaluation.

- Procurement tests for R&D AI assistants (government, pharma, finance, aerospace)

  - Use case: Adopt the paper’s evaluation protocol as a vendor-neutral test suite when procuring AI tools meant to assist scientific and mathematical research teams.

  - Tools/workflows: RFP annex that specifies “unknown-problem” evaluation, transcript disclosure, and contamination reporting; leaderboards with audit trails.

  - Assumptions/dependencies: NDAs protecting yet-to-be-released problems; panel of external graders; comparable browsing configurations across vendors.

- Classroom modules for AI-augmented proof practice (education)

  - Use case: Graduate courses in math/CS integrate the 10 questions to teach prompting strategies, proof verification, and responsible use of AI.

  - Tools/workflows: Assignment templates requiring full interaction transcripts, instructor rubrics mirroring the paper’s grading philosophy, and reflection prompts on data contamination.

  - Assumptions/dependencies: Instructor time; student access to comparable AI systems; departmental policy on AI usage and disclosure.

- Stress-testing automated theorem provers and CAS integrations (software)

  - Use case: Evaluate and improve integrations between LLMs and formal systems (Lean/Isabelle/Coq) or CAS tools by attempting formalizations/proofs of the questions’ lemmas.

  - Tools/workflows: Harness to translate natural-language statements into formal languages; auto-tactic benchmarking; error localization logs tied to human grading.

  - Assumptions/dependencies: Significant formalization effort; community curation of formal statements; permissive licenses for sharing partial formalizations.

- Data governance playbook for evaluation sets (policy/compliance)

  - Use case: Repurpose the paper’s “unknown-to-the-web” approach to build contamination-resistant evaluation sets in other domains (e.g., law, chemistry, biomedicine).

  - Tools/workflows: Pipelines for holdout creation, encryption, delayed release, and transcript-based auditability of external lookups.

  - Assumptions/dependencies: Domain experts able to generate/solve holdouts; secure storage and staged disclosure processes.

- PCG-based solver for kernelized CP with missing data (software/data science; healthcare, recommender systems, climate)

  - Use case: Implement an iterative preconditioned conjugate gradient (PCG) solver that exploits Khatri–Rao/Kronecker and selection-matrix structure for the mode-k RKHS factor update (Question 10’s setup).

  - Tools/workflows: Drop-in module for TensorLy, MATLAB Tensor Toolbox, PyTorch/JAX backends; GPU-friendly matvecs using (Z ⊗ K), selection S, and kernel-apply primitives; block-Jacobi/diagonal-plus-Kronecker preconditioners.

  - Assumptions/dependencies: SPD kernel K, q ≪ N sparsity of observations, n,r < q, numerically stable kernel application; convergence/regularization tuning.

- Heuristic “ε-light” subgraph finders (networking/cloud)

  - Use case: Even without a proof (Question 6), deploy spectral/SDP heuristics to identify “light” vertex subsets for load shedding, throttling, or sampling in large networks.

  - Tools/workflows: Laplacian-based filters that search for S maximizing PSD margin of εL − L_S; integration into graph analytics pipelines.

  - Assumptions/dependencies: No worst-case guarantees yet; rely on empirical validation and fallback safeguards.

- Open challenge platform for community benchmarking (community/industry)

  - Use case: Host a public challenge with the paper’s protocol (complete transcript sharing, browsing allowed), facilitating transparent comparisons and prompt engineering research.

  - Tools/workflows: Kaggle/CF-style platform with encrypted answer vault, submission auditing, and human-grading coordination.

  - Assumptions/dependencies: Funding for moderation and grading; anti-contamination safeguards; clear terms for sharing interactions.
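The PCG item above names an iterative solver driven by matrix-vector products. The following is a minimal, generic sketch of preconditioned conjugate gradient in pure Python, assuming a symmetric positive definite system. It does not reproduce Question 10's structured operator built from (Z ⊗ K) and S: the small matrix, the Jacobi (diagonal) preconditioner, and all names here are hypothetical stand-ins.

```python
from typing import Callable, List

Vector = List[float]


def dot(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


def pcg(matvec: Callable[[Vector], Vector],
        precond: Callable[[Vector], Vector],
        b: Vector, tol: float = 1e-10, max_iter: int = 200) -> Vector:
    """Preconditioned conjugate gradient for A x = b with A symmetric positive definite.

    matvec(v) returns A v, so A never has to be formed explicitly.
    precond(r) returns an approximation to A^{-1} r.
    """
    x = [0.0] * len(b)
    r = list(b)                      # residual b - A x for the start x = 0
    z = precond(r)
    p = list(z)
    rz = dot(r, z)
    b_norm = dot(b, b) ** 0.5 or 1.0
    for _ in range(max_iter):
        if dot(r, r) ** 0.5 <= tol * b_norm:
            break                    # relative residual is small enough
        Ap = matvec(p)
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        z = precond(r)
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x


if __name__ == "__main__":
    # Hypothetical 3x3 SPD system, for illustration only.
    A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
    b = [1.0, 2.0, 3.0]
    matvec = lambda v: [dot(row, v) for row in A]
    jacobi = lambda r: [ri / A[i][i] for i, ri in enumerate(r)]
    print(pcg(matvec, jacobi, b))
```

Passing `matvec` as a callable is the design point: a structured operator can be applied through its factors without ever building the full matrix, which is where the savings described in the item would come from.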

Long-Term Applications

These depend on the planned second release, broader adoption, or on the eventual resolution of the specific mathematics problems listed in the paper.

- Formalized benchmark and standards for scientific reasoning (AI industry, academia, standards bodies)

  - Use case: The planned “second set” evolves into a maintained benchmark with interactivity protocols, rubricized scoring, and contamination audits, usable for capability tracking, model selection, and scientific QA.

  - Tools/workflows: NIST-like benchmark specifications; reproducibility harnesses; standardized browse tools with logging; periodic refresh cycles.

  - Assumptions/dependencies: Sustainable pipeline of curated, solved-but-unpublished problems; multi-stakeholder governance; funding for graders.

- Certification for AI tools used in science policy and procurement (policy/standards)

  - Use case: Regulators and public funders require “unknown-problem, human-graded” certifications for AI used in grant reviewing, regulatory science, or critical R&D decisions.

  - Tools/workflows: Certification schemes with external proctoring, tamper-proof transcript storage, and post-hoc publishing of problem sets.

  - Assumptions/dependencies: Broad consensus on metrics; alignment with privacy/IP rules; interoperability across AI vendors.

- Safer training regimes for proof-capable models (AI R&D)

  - Use case: Use similar holdout structures to train and evaluate RLHF/RLAIF for proof search without leakage, improving reliability of STEM co-pilots.

  - Tools/workflows: Curriculum with synthetic subgoals, decontamination tools, “don’t-guess” penalties, and formal-verification feedback loops.

  - Assumptions/dependencies: Strong guardrails to prevent train–test contamination; scalable human feedback; compute budgets.

- Generalization to other high-stakes domains (healthcare, law, materials science)

  - Use case: Adopt the “first proof” methodology to assess AI on unpublished clinical protocol questions, novel case-law hypotheticals, or unseen synthesis targets in materials.

  - Tools/workflows: Domain-specific graders, secure repositories, and structured answer formats that allow partial credit and human verification.

  - Assumptions/dependencies: Availability of expert solvers and graders; ethical oversight; domain-adjusted grading schemas.

- If specific questions are resolved, downstream sectoral impacts could include:

  - Graph ε-light subset theorem (Question 6): software/energy/telecom

    - Applications: Constructive sparsification and throttling algorithms for scalable graph processing, congestion control, and resilient grid/network design.

    - Tools: Linear-time or near-linear-time routines integrated into graph libraries; guarantees for PSD-preserving sampling.

    - Dependencies: Existence of constructive proofs and algorithmic reductions.

  - Additive convolution inequality for roots (Question 4): control/signal processing/numerics

    - Applications: Tighter stability/conditioning bounds for polynomial filtering, robust control design, and root-finding under perturbations.

    - Tools: Certified “stability margin” calculators; improved preconditioners for polynomial eigenproblems.

    - Dependencies: Proof of inequality and numerically stable estimators of Φ_n.

  - Kernelized CP with missing data theory (Question 10, beyond PCG engineering): healthcare/climate/media

    - Applications: Higher-fidelity spatiotemporal imputation (EHRs, climate grids, recommender logs) with RKHS priors; uncertainty-aware forecasting.

    - Tools: End-to-end alternating solvers with kernel learning and convergence guarantees; GPU/TPU acceleration.

    - Dependencies: Theoretical convergence/identifiability under missingness and kernel choices.

  - Determinantal tensor relations and separability test (Question 9): computer vision/ML

    - Applications: Fast tests for multi-view consistency and factor separability; diagnostics for latent-variable models; “Tensor Integrity Checker” SaaS.

    - Tools: Polynomial map F packaged as batched kernels; symbolic–numeric hybrid solvers.

    - Dependencies: Explicit construction of F with degree independent of n; conditioning analysis.

  - ASEP/Macdonald stationary Markov chain (Question 3): logistics/queueing

    - Applications: New queueing-network models with analytically tractable stationary laws; performance tuning in supply chains and data centers.

    - Tools: Simulators and parameter estimators leveraging interpolation polynomials’ structure.

    - Dependencies: Existence and constructive transition kernels independent of the polynomials themselves for definition.

  - Shift-equivalence for the $\Phi^4_3$ measure (Question 1): computational physics

    - Applications: Correctness of MCMC/samplers for renormalized SPDEs under field shifts; variance-reduced proposals in physical simulations.

    - Tools: Sampler libraries with invariance checks; diagnostics for measure equivalence.

    - Dependencies: Rigorous equivalence criteria and numerically testable surrogates.

  - Equivariant slice filtration characterization (Question 5): formal methods/materials with symmetries

    - Applications: Computable invariants for symmetry-preserving systems; analysis tools for topological phases and symmetric controllers.

    - Tools: Software for geometric fixed points computations and slice connectivity bounds.

    - Dependencies: Effective algorithms derived from the characterization.

  - Polyhedral Lagrangian smoothing (Question 8): robotics/CAD

    - Applications: Smooth, physically consistent Lagrangian surface design for manipulation and haptics; path planning honoring symplectic constraints.

    - Tools: CAD plugins for Lagrangian smoothing; motion planners with symplectic feasibility checks.

    - Dependencies: Constructive smoothing procedure and numerical robustness.

  - Uniform lattices with 2-torsion and rationally acyclic manifolds (Question 7): geometry/topology

    - Applications: Constraints for manifold models in geometric group theory and numerical topology; potential implications for crystal and lattice modeling.

    - Tools: Topology toolkits encoding (im)possibility results to prune search spaces.

    - Dependencies: Clear translation of the classification result to computational criteria.

  - Rankin–Selberg local integral witness functions (Question 2): number theory/cryptography

    - Applications: Improved computation of local factors/L-functions; potential tuning of cryptographic assumptions relying on number-theoretic heuristics.

    - Tools: Libraries for explicit local integrals with guaranteed nonvanishing regions.

    - Dependencies: Proofs that yield constructive Whittaker functions and stable numerics.

Across both categories, feasibility hinges on: sustained access to expert graders; robust anti-contamination practices; transparent interaction logs; and, for math-derived impacts, constructive proofs translating into algorithms with performance and stability guarantees.

Glossary

- Acyclic (over the rational numbers): A space whose reduced homology groups with coefficients in $\mathbb{Q}$ vanish. "whose universal cover is acyclic over the rational numbers $\mathbb{Q}$?"

- Additive character: A continuous homomorphism from the additive group of a field to $\mathbb{C}^\times$, often used in number theory. "Let […] be a nontrivial additive character of conductor […]"

- Admissible representation: For $p$-adic groups, a smooth representation in which the subspace of vectors fixed by any compact open subgroup is finite-dimensional. "Let […] be a generic irreducible admissible representation of […]"

- ASEP polynomial (interpolation ASEP polynomial): A family of polynomials associated with the asymmetric simple exclusion process, here in an interpolation form. "interpolation ASEP polynomial and interpolation Macdonald polynomial"

- CP decomposition: The CANDECOMP/PARAFAC factorization of a tensor as a sum of rank-1 components. "computing a CP decomposition of rank […]"

- Conductor ideal: An ideal measuring the level of ramification or minimal level (newform) of a representation. "Let […] denote the conductor ideal of […]"

- Equivalence of measures: Two measures are equivalent if they have the same null sets (mutual absolute continuity). "Here, equivalence of measures is in the sense of having the same null sets"

- Equivariant stable category: The stable homotopy category of spectra equipped with a $G$-action. "the $G$-equivariant stable category adapted to […]"

[…]

- Non-archimedean local field: […] $\mathbb{Q}_p$). "Let $F$ be a non-archimedean local field"

- Positive semidefinite (PSD): A matrix whose eigenvalues are all nonnegative, implying $x^\top M x \ge 0$ for all $x$. "[…] $\epsilon L - L_S$ […]"

[…]

- Uniform lattice: A discrete subgroup of a Lie group whose quotient is compact (cocompact lattice). "Suppose that […] is a uniform lattice in a real semi-simple group"

- Unipotent: A matrix whose eigenvalues are all 1; in algebraic groups, elements $g$ with $g - 1$ nilpotent. "upper-triangular unipotent elements."

- Universal cover: A simply connected covering space of a topological space. "whose universal cover is acyclic over the rational numbers"

- Vec operator (vectorization): The linear operation that stacks the columns of a matrix into a single vector. "The $\operatorname{vec}$ operations creates a vector from a matrix by stacking its columns,"

- Whittaker model: A realization of a representation via Whittaker functions relative to a nontrivial character on a unipotent subgroup. "realized in its […]-Whittaker model […]."

- Zariski-generic: Holding on a Zariski-open dense subset; generic in algebraic geometry. "be Zariski-generic."

- $\Phi^4_3$ measure: The (renormalized) Gibbs measure for the three-dimensional $\Phi^4$ quantum field/the SPDE invariant measure. "the $\Phi^4_3$ measure on the space of distributions […]."
