Structured Context Engineering for File-Native Agentic Systems: Evaluating Schema Accuracy, Format Effectiveness, and Multi-File Navigation at Scale

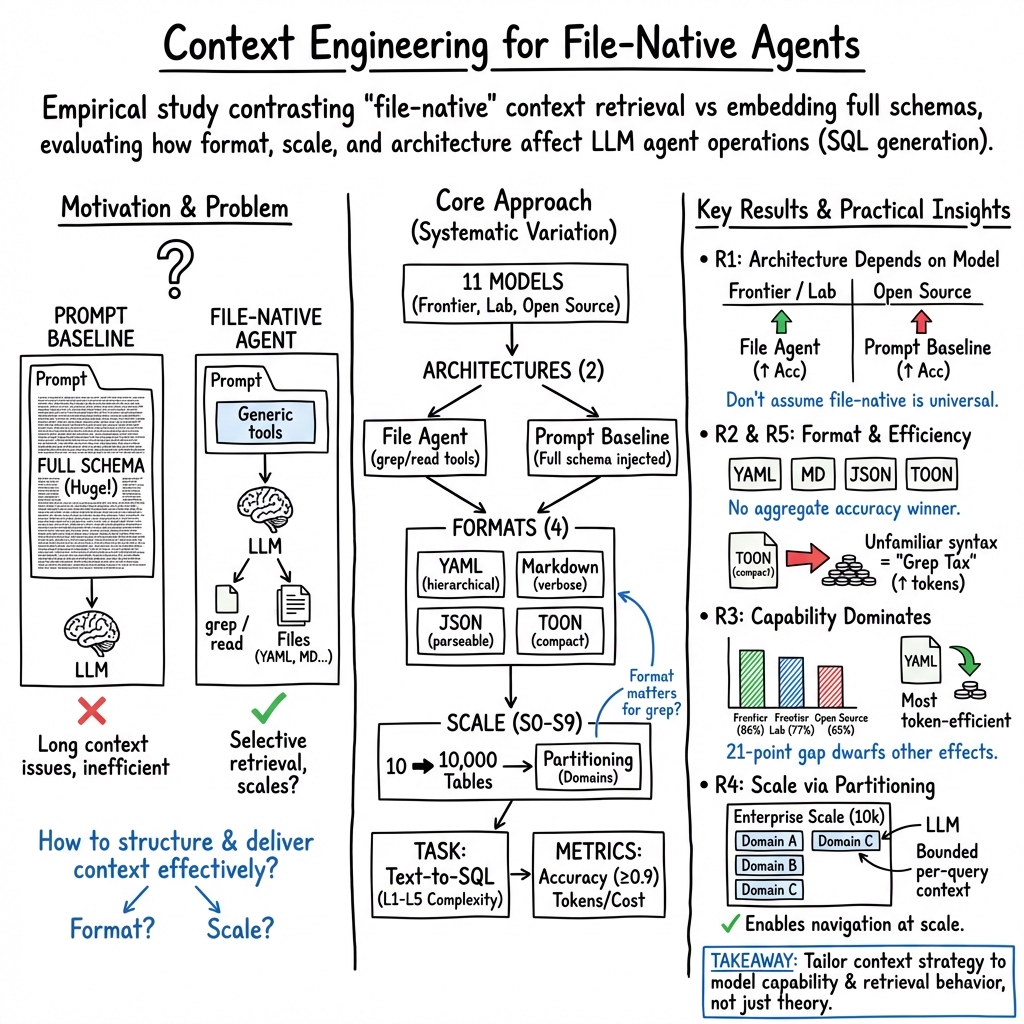

Abstract: LLM agents increasingly operate external systems through programmatic interfaces, yet practitioners lack empirical guidance on how to structure the context these agents consume. Using SQL generation as a proxy for programmatic agent operations, we present a systematic study of context engineering for structured data, comprising 9,649 experiments across 11 models, 4 formats (YAML, Markdown, JSON, Token-Oriented Object Notation [TOON]), and schemas ranging from 10 to 10,000 tables. Our findings challenge common assumptions. First, architecture choice is model-dependent: file-based context retrieval improves accuracy for frontier-tier models (Claude, GPT, Gemini; +2.7%, p=0.029) but shows mixed results for open source models (aggregate -7.7%, p<0.001), with deficits varying substantially by model. Second, format does not significantly affect aggregate accuracy (chi-squared=2.45, p=0.484), though individual models, particularly open source, exhibit format-specific sensitivities. Third, model capability is the dominant factor, with a 21 percentage point accuracy gap between frontier and open source tiers that dwarfs any format or architecture effect. Fourth, file-native agents scale to 10,000 tables through domain-partitioned schemas while maintaining high navigation accuracy. Fifth, file size does not predict runtime efficiency: compact formats can consume significantly more tokens at scale due to format-unfamiliar search patterns. These findings provide practitioners with evidence-based guidance for deploying LLM agents on structured systems, demonstrating that architectural decisions should be tailored to model capability rather than assuming universal best practices.

Explain it Like I'm 14

What this paper is about

Think of an AI agent like a very smart student trying to use a complex computer system (like a big database). To do a good job, the AI needs the right “notes” about how the system is organized. This paper studies the best way to give those notes to AI agents so they can find the right information and act correctly.

Specifically, the paper looks at “file-native” agents—AIs that read information from files (like YAML, JSON, or Markdown) using simple search tools—versus putting everything directly into their prompt (their immediate memory). It asks: which setup helps different kinds of AI models do better, how do different file formats matter, and what happens when the system gets very big?
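To make the "file-native" idea concrete, here is a minimal sketch of the two tools such an agent might be given: a grep-like search and a ranged file read. The function names, the `sales.yaml` file, and its layout are assumptions made for illustration; the paper's actual tool implementations are not shown in this summary.

```python
import re
import tempfile
from pathlib import Path
from typing import List, Optional, Tuple


def grep(root: Path, pattern: str, max_hits: int = 20) -> List[str]:
    """Return 'path:line_no:text' for every line under root matching the regex."""
    regex = re.compile(pattern)
    hits: List[str] = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        lines = path.read_text(encoding="utf-8").splitlines()
        for line_no, line in enumerate(lines, start=1):
            if regex.search(line):
                rel = path.relative_to(root).as_posix()
                hits.append(f"{rel}:{line_no}:{line.strip()}")
                if len(hits) >= max_hits:  # cap output so a broad pattern stays cheap
                    return hits
    return hits


def read(root: Path, relative_path: str, start: int = 1, end: Optional[int] = None) -> str:
    """Return lines start..end (1-indexed, inclusive) of one file."""
    lines = (root / relative_path).read_text(encoding="utf-8").splitlines()
    return "\n".join(lines[start - 1:end])


def demo() -> Tuple[List[str], str]:
    """Build a tiny hypothetical schema file, then search it and read one line."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "sales.yaml").write_text(
            "tables:\n"
            "  - name: orders\n"
            "    columns:\n"
            "      - name: order_id\n"
            "      - name: order_date\n",
            encoding="utf-8",
        )
        return grep(root, r"name: order"), read(root, "sales.yaml", start=2, end=2)
```

In the prompt-baseline setup, the whole file would instead be pasted into the prompt up front, and no tool calls would be needed.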

The main questions (in simple terms)

The researchers wanted to answer five practical questions:

- R1: Is letting the AI read files (file-native) better than stuffing all info into its memory (prompt)?

- R2: Does the file format (YAML, Markdown, JSON, or TOON) change how accurate the AI is?

- R3: Do stronger models benefit more from certain setups than weaker models?

- R4: Can file-reading AIs handle very large systems (up to 10,000 tables) and still find what they need?

- R5: Which formats are most efficient—meaning they use fewer “tokens” (pieces of text the AI reads), time, and tool calls?

How they did the study

To keep things fair and measurable, they used a common task: text-to-SQL. That means the AI reads a question in plain English (like “How many orders did we have last month?”) and generates an SQL query to get the answer from a database.

Here’s the setup, explained with everyday analogies:

- Models: They tested 11 AI models. “Frontier” models are top-of-the-line commercial AIs. “Open-source” models are publicly available and often free.

- Formats: They organized database “maps” (schemas) in four ways:

  - YAML: Like tidy bullet-point notes with clear labels.

  - Markdown: Like a human-friendly document with headings and tables.

  - JSON: Like a structured, machine-readable list; a bit verbose.

  - TOON: A compact custom format with short keywords (e.g., TABLE, COL); smaller files on disk.

- Architectures (how the AI gets the context):

  - File Agent: The AI searches and reads files on demand using simple tools like “grep” (think: Ctrl+F, but in code).

  - Prompt Baseline: The entire schema is pasted into the AI’s prompt up front (like handing the student a massive cheat sheet).

- Scale: They tested from small systems (10 tables) up to huge (10,000 tables). For the largest ones, they split the schema into domains (like separate folders) so the AI only reads the part it needs.

- Accuracy check: They used Jaccard similarity, which measures overlap between the AI’s results and the correct results. If the overlap was at least 90%, it counted as correct.

- Fairness: They ran 9,649 experiments, kept model randomness low, and used standard statistical tests to make sure differences were real, not just luck.
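The accuracy check in the list above can be sketched in a few lines. This assumes query results are compared as sets of row tuples and that two empty result sets count as a perfect match; the second point is an assumption of this sketch, since the summary does not say how the study handles that case.

```python
from typing import Hashable, Iterable, Tuple

Row = Tuple[Hashable, ...]


def jaccard(agent_rows: Iterable[Row], truth_rows: Iterable[Row]) -> float:
    """Jaccard similarity: size of the intersection divided by size of the union."""
    a, g = set(agent_rows), set(truth_rows)
    if not a and not g:
        return 1.0  # assumption: two empty result sets are treated as identical
    return len(a & g) / len(a | g)


def is_correct(agent_rows: Iterable[Row], truth_rows: Iterable[Row],
               threshold: float = 0.9) -> bool:
    """A query counts as correct when the overlap reaches the 90% threshold."""
    return jaccard(agent_rows, truth_rows) >= threshold
```

For example, returning 9 of 10 expected rows gives a similarity of 9/10 and passes, while returning those 9 rows plus one wrong row gives 9/11 and fails, because extra rows enlarge the union.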

What they found (and why it matters)

Here are the big takeaways, explained simply:

- Architecture depends on model strength:

  - For top models (frontier), letting the AI read files improved accuracy slightly.

  - For several open-source models, putting all info in the prompt worked better. Some struggled with the file-searching approach.

  - Why: Stronger models seem better at using tools and searching. Weaker ones may do better when everything is handed to them directly.

- Format didn’t change accuracy overall:

  - Across all models together, YAML vs Markdown vs JSON vs TOON didn’t significantly change how often the AI was right.

  - But individual models had preferences. Open-source models were more sensitive to format differences than frontier models.

- Model capability matters most:

  - The biggest factor was how powerful the model is. Frontier models outperformed open-source by about 21 percentage points on accuracy.

  - On easy questions, all models did well. On hard ones, frontier models stayed strong, while some open-source models dropped a lot.

- File-native agents can handle huge systems:

  - With smart “foldering” (domain partitioning), agents accurately navigated schemas as big as 10,000 tables. This keeps each search focused, even when the total system is massive.

- Efficiency is not just about file size:

  - “Tokens” are the chunks of text models read. YAML was the most token-efficient on average.

  - TOON files are smaller on disk, but some models used way more tokens with TOON at scale. Why? The “grep tax”: if the AI isn’t familiar with TOON’s syntax, it tries many search patterns that don’t work, which adds extra text to the conversation.

  - Bottom line: a format that looks compact can still be “expensive” during runtime if the model doesn’t know how to search it well.
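The "grep tax" described above can be illustrated with a toy accounting sketch. Everything here is hypothetical: the tool-call traces, the file names, the `TABLE orders` line, and the whitespace word count used as a stand-in for a real tokenizer. It is not the paper's measurement code, only a way to see why every extra search attempt adds to the running total.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ToolCall:
    pattern: str  # search pattern the model sent
    output: str   # text the tool returned into the conversation


def approx_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer (assumption): count whitespace-separated words."""
    return len(text.split())


def runtime_tokens(calls: List[ToolCall]) -> int:
    """Every pattern and every tool output lands in the conversation and is paid for."""
    return sum(approx_tokens(c.pattern) + approx_tokens(c.output) for c in calls)


def failed_calls(calls: List[ToolCall]) -> int:
    """Count searches that returned nothing useful."""
    return sum(1 for c in calls if not c.output.strip())


# Hypothetical trace: a familiar format is found on the first try.
familiar = [ToolCall("name: orders", "sales.yaml:2: - name: orders")]

# Hypothetical trace: the model guesses DDL and JSON patterns before the right one.
unfamiliar = [
    ToolCall("CREATE TABLE orders", ""),
    ToolCall('"name": "orders"', ""),
    ToolCall("TABLE orders", "sales.toon:1: TABLE orders"),
]
```

Even though the second file could be smaller on disk, its trace costs more because of the two wasted attempts. Logging these counts per format and per model is one way to check whether a compact format is actually cheaper at runtime.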

What this means in practice

Here’s how you might use these findings in the real world:

- Match the setup to your model:

  - Strong (frontier) models: let them read files. They benefit from searching only what they need, when they need it.

  - Many open-source models: consider giving them everything in the prompt, or test carefully before switching to file-based search.

- Don’t sweat format for accuracy—focus on operations:

  - Choose formats for efficiency and maintenance. YAML tends to use fewer tokens and is easy to search. JSON is great for programmatic workflows. Markdown is readable for humans but can be verbose in searches.

- Plan for scale:

  - Split giant schemas into domain folders so the AI can find the right part quickly. This keeps search focused and fast, even at 10,000 tables.

- Invest in capability first:

  - Upgrading the model often helps more than tweaking formats or architecture. Stronger models handle complexity better.

- Test formats with your specific model:

  - Some models search TOON just fine; others suffer a big grep tax. Run small experiments to see what’s most efficient for your setup.
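The first recommendation above (match the setup to your model) could be sketched as a small lookup. The enum names and the `MODEL_TIERS` table are hypothetical; the three frontier families are the ones named in the abstract. Defaulting unknown models to the prompt baseline is an assumption of this sketch, not a rule from the paper, and any real deployment should still test per model.

```python
from enum import Enum
from typing import Dict, Optional


class Tier(Enum):
    FRONTIER = "frontier"
    OPEN_SOURCE = "open_source"


class Architecture(Enum):
    FILE_AGENT = "file_agent"            # model searches and reads files on demand
    PROMPT_BASELINE = "prompt_baseline"  # full schema pasted into the prompt


# Hypothetical lookup table; keys are lowercase model family names.
MODEL_TIERS: Dict[str, Tier] = {
    "claude": Tier.FRONTIER,
    "gpt": Tier.FRONTIER,
    "gemini": Tier.FRONTIER,
}


def choose_architecture(model_family: str,
                        tiers: Optional[Dict[str, Tier]] = None) -> Architecture:
    """Pick file-native retrieval for frontier models, prompt injection otherwise."""
    table = MODEL_TIERS if tiers is None else tiers
    # Assumption: unknown families are treated as open-source tier.
    tier = table.get(model_family.lower(), Tier.OPEN_SOURCE)
    if tier is Tier.FRONTIER:
        return Architecture.FILE_AGENT
    return Architecture.PROMPT_BASELINE
```

Because the reported open-source deficits vary substantially by model, the table is better treated as a starting point that per-model experiments can override.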

A quick note on limitations

- They focused on SQL generation as a stand-in for general agent actions. Testing other tools and tasks would help confirm the results.

- The largest-scale tests evaluated navigation only, and only with Claude models. Full SQL accuracy at 10,000 tables wasn’t tested.

- TOON is new and may not be well represented in training data; better guidance could improve results.

- Not all runs were perfectly temperature-controlled (a setting that affects randomness), which may introduce small differences.

- Single-turn evaluation can miss improvements that happen with multi-step corrections.

Final takeaway

There isn’t a one-size-fits-all “best practice” for giving AI agents context. The strongest models benefit from reading files as they go. Many open-source models do better when you put everything in the prompt. Formats don’t change accuracy much overall, but they can change efficiency, especially at scale. For big systems, organize your schema into domains. And most importantly, pick your architecture and format based on the specific model you’re using, then test to confirm.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper leaves the following concrete gaps and unresolved questions that future work could address:

- Non-SQL generalization: Do the findings transfer from text-to-SQL to other agentic operations (API orchestration, code editing, RPA workflows, configuration changes)?

- Scale beyond navigation: At 10,000 tables, only schema navigation (metadata retrieval) was evaluated and only with Claude; what is end-to-end SQL correctness at this scale, and how does it vary across models?

- Tooling scope: Results are limited to grep and read; how do richer file-native tools (ripgrep/regex variants, fuzzy search, prebuilt indices, symbol tables, code-aware parsers) change accuracy and efficiency?

- Hybrid architectures: How do file-native approaches compare against or combine with RAG/vector search, keyword search, or long-context summarization baselines?

- Format-specific retrieval guidance: How much do targeted grep patterns/prompts per format (e.g., YAML anchors, JSON paths, TOON keywords) reduce the “grep tax” and improve accuracy?

- TOON familiarity: Can few-shot priming, instruction-tuning, or format-aware training eliminate TOON’s token overhead and improve model search behavior at scale?

- Query set breadth: With only 100 queries, how do results change with larger, more diverse, and adversarial query banks, including systematically ambiguous and multi-hop enterprise-style tasks?

- Domain and data realism: Do conclusions hold for messy, real enterprise schemas (sparse/incorrect documentation, naming inconsistencies, denormalization, missing FKs, soft deletes)?

- Schema modality coverage: How do results extend to non-relational data (nested JSON, document stores, graph DBs), API specs (OpenAPI/IDLs), event logs, or mixed schema ecosystems?

- Long-context baselines: How do file-native agents compare to long-context-only baselines augmented by in-context summarization, compression, or structure-aware chunking?

- Temperature and variance: Uncontrolled temperature in some conditions and single-run design limit confidence; what are the effects under multi-seed replication with confidence intervals?

- Multi-turn behavior: How much do iterative self-correction, tool retries, and multi-agent collaboration improve accuracy and efficiency relative to the single-shot design?

- Telemetry and error attribution: What proportion of failures stems from retrieval (missed/overbroad matches) vs reasoning (join/aggregation errors)? Instrument grep attempts, patterns, and failure modes.

- Partitioning design: Which domain-partitioning strategies (granularity, cross-domain linkage, sharding rules) maximize navigation and reasoning accuracy while minimizing token costs?

- Filesystem organization: How do directory depth, file naming conventions, file sizes, and index files affect retrieval accuracy and token efficiency?

- Navigator design: What disambiguation rules, link structures, and summaries in navigator.md best reduce ambiguous join paths and improve L4–L5 performance?

- Efficiency metrics: Beyond tokens, what are the effects on wall-clock latency, tool-call counts, memory footprint, and monetary cost across providers and scales?

- Security and robustness: How resilient are file-native agents to prompt injection or poisoned schema files, misleading metadata, and adversarial formatting?

- Maintainability: What are the authoring and maintenance costs (drift management, versioning, regeneration) of schema files across formats, and how do these impact reliability over time?

- Format breadth: Results omit formats like SQL DDL, TOML, XML, CSV, Parquet metadata, and compact Markdown styles; how do these affect accuracy and efficiency?

- Tokenizer confounds: To what extent do tokenizer differences across providers drive token-efficiency results; can normalization or unified tokenization change conclusions?

- Model-update drift: As providers update models, do architecture/format preferences shift; can longitudinal studies quantify stability and reproducibility over time?

- Open-source model training: Can tool-use finetuning or instruction-tuning close the file-native gap for open-source models; what data and objectives are most effective?

- Cross-lingual settings: How do results change with non-English schemas, multilingual descriptions, or queries in other languages?

- Multimodal context: Do visual artifacts (ERDs, diagrams) or mixed text+image schema files improve navigation and reasoning for complex joins?

- Noisy/contradictory documentation: How robust are agents when schema files contain typos, stale entries, or conflicting guidance; which validation routines help?

- SQL safety and performance: Beyond Jaccard accuracy, what about execution errors, unsafe queries, performance regressions (e.g., Cartesian joins), and data leakage risks?

- Evaluation metrics: Is Jaccard≥0.9 sufficient; should semantic equivalence, plan-level similarity, latency, and resource usage be included for a fuller picture?

- Statistical rigor: Were per-condition sample sizes sufficiently powered; how do results change under preregistered analyses, alternative corrections, and hierarchical models?

- Reproducibility: Are code, prompts, schema files, and raw logs available; can independent groups replicate results across environments and providers?

- Provider scope at scale: Scale experiments used Claude only; do the navigation and grep-tax scaling behaviors hold for GPT, Gemini, and open-source models?

- Caching and memory: How do caching, persistent memory, and per-session index construction influence token efficiency and accuracy over long runs?

- OS and tooling variability: How sensitive are results to shell, OS, and grep variants (GNU vs BSD, ripgrep), and to regex capabilities (PCRE, fuzzy)?

- Reasoning prompts: Do chain-of-thought or structure-aware reasoning prompts alter the relative effects of architecture and format, especially for L4–L5 queries?

Practical Applications

Immediate Applications

Below is a set of deployable, real-world uses you can implement now based on the paper’s findings, with sectors, potential tools/products/workflows, and feasibility notes.

- Model-tier-driven agent architecture selection

- Sector: software platforms, finance, healthcare, retail analytics

- What to deploy: A “model-tier router” that selects file-native retrieval for frontier-tier models (Claude, GPT, Gemini) and prompt-based context injection for most open-source models; rollout playbooks and runbooks reflecting Table 9’s guidance

- Assumptions/dependencies: Accurate identification of model tier; tool-call support (grep/read) in your agent framework; acceptance that benefits are model-dependent and small but statistically significant for frontier models

- YAML-first agent schema catalogs

- Sector: data platforms, BI, database administration, enterprise IT

- What to deploy: Generate schema documentation in YAML with predictable, grep-friendly patterns; include a Navigator.md overview for quick table/domain descriptions; adopt YAML for token efficiency and maintainability

- Assumptions/dependencies: LLM familiarity with YAML; schema metadata available; agent has file-system access and tool permissions; token-efficiency gains vary by model

- Domain-based schema partitioning at enterprise scale

- Sector: finance (risk and compliance data warehouses), healthcare (EHR analytics), telecom (customer and network ops), retail (omnichannel analytics)

- What to deploy: Partition large schemas into domain files (~250 tables/domain) and route queries to relevant partitions; maintain high navigation accuracy with bounded per-query context

- Assumptions/dependencies: Correct domain assignment and routing; union of partitions still represents full schema; SQL generation accuracy at 10,000 tables is not yet validated (navigation is)

- Navigator disambiguation rules and linters

- Sector: software and data engineering, analytics enablement

- What to deploy: A “Navigator.md generator + linter” that adds explicit disambiguation rules (e.g., clarifying “customer state” joins) to reduce format-driven ambiguity; align with paper’s finding that navigator clarity matters more than format

- Assumptions/dependencies: Access to subject-matter experts for rule authoring; integration in CI to prevent ambiguous metadata

- Token-cost telemetry and “grep tax” management

- Sector: platform operations, cost governance

- What to deploy: Telemetry capturing tokens per tool-call and per format; an automated “grep tax analyzer” that flags formats/models where retrieval verbosity or unfamiliar syntax inflates tokens; default to YAML for Claude at scale and avoid TOON unless model-specific tests show parity

- Assumptions/dependencies: Tool-call logging; per-provider token accounting; the grep tax is model- and scale-dependent; results strongest for Claude in the paper’s scale experiments

- Dev workflows standardizing CLAUDE.md/AGENTS.md and LLMs.txt-style context files

- Sector: software engineering, MLOps, DevOps

- What to deploy: Organization-wide conventions for agent-facing files (CLAUDE.md, AGENTS.md, Navigator.md) and schema docs in YAML/JSON; IDE/CI plugins that validate format patterns (headings, keys, delimiters) for grepability

- Assumptions/dependencies: Teams adopt consistent repository patterns; agent frameworks can read local files

- Vendor/model evaluation and procurement guardrails

- Sector: enterprise procurement, risk/compliance

- What to deploy: RFP criteria and bake-off scripts that test file-native vs prompt architectures per model; require disclosure of tool-use capabilities and token-efficiency metrics by format; adopt the study’s evaluation methods (Jaccard >= 0.9 threshold)

- Assumptions/dependencies: Access to candidate models; acceptance of text-to-SQL as a proxy for broader programmatic operations

- Personal knowledge base structuring for assistants

- Sector: daily life, productivity

- What to deploy: Organize personal notes, contacts, and tasks in YAML with a top-level Navigator.md index; use frontier models for tool-heavy workflows (searching, extracting), and open-source models for lightweight reasoning without file access

- Assumptions/dependencies: Your assistant app supports file-native retrieval; personal comfort with YAML authoring

- Curriculum and hands-on labs in context engineering

- Sector: education (CS, data systems, HCI)

- What to deploy: Course modules that reproduce file-native vs prompt results across formats; assignments on schema partitioning and navigator design; lab telemetry to explore the grep tax

- Assumptions/dependencies: Access to multiple LLMs and tool calling; institutional compute budgets

- API and data spec representation for agent actions

- Sector: software integration, DevRel

- What to deploy: Maintain API catalogs and data specs in YAML/JSON with grep-friendly keys; pair with Navigator.md to guide tool selection; align with standards like Model Context Protocol and LLMs.txt

- Assumptions/dependencies: Generalization from SQL to APIs holds in your environment; agents rely on file-native catalogs for tool discovery
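The domain-partitioning item in the list above can be sketched as a grouping step. The file-naming scheme and the choice to split an oversized domain into several files of at most 250 tables are assumptions of this sketch; the source only states that large schemas were partitioned into domain files of roughly 250 tables each.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def partition_schema(tables: List[Tuple[str, str]],
                     max_tables: int = 250) -> Dict[str, List[str]]:
    """Group (domain, table) pairs into per-domain files of bounded size.

    Returns a mapping from a hypothetical file path to the table names it holds.
    """
    by_domain: Dict[str, List[str]] = defaultdict(list)
    for domain, table in tables:
        by_domain[domain].append(table)

    partitions: Dict[str, List[str]] = {}
    for domain in sorted(by_domain):
        names = sorted(by_domain[domain])
        # Split oversized domains so no single file exceeds max_tables.
        for start in range(0, len(names), max_tables):
            path = f"{domain}/part_{start // max_tables:03d}.yaml"
            partitions[path] = names[start:start + max_tables]
    return partitions
```

An agent routed to one domain then only has to search that domain's files, which is what keeps per-query context bounded as the total table count grows.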

Long-Term Applications

Below are applications requiring further research, validation, scale-up, or ecosystem development before broad deployment.

- Tool-use training and fine-tuning for open-source models

- Sector: AI model development

- What to build: Fine-tune open-source models on file-native retrieval tasks, grep patterns, and schema navigation to reduce the 21pp capability gap; include multi-turn correction and partition-aware routing

- Assumptions/dependencies: Availability of training data and compute; licensing permitting tool-use finetuning; measurable transfer from SQL proxy to broader agentic tasks

- Standards for agent-readable schema formats and navigator files

- Sector: industry standards, policy

- What to build: A community standard (YAML profiles, required keys, heading schemas, disambiguation sections) for agent-consumable schema docs and Navigator.md; certification programs ensuring grep-friendly design

- Assumptions/dependencies: Multi-stakeholder consensus; involvement of vendors and OSS communities; interoperability with Model Context Protocol

- Grep-aware compact serializations and adapters

- Sector: software tooling, data serialization

- What to build: Next-gen compact formats that remain grepable (token-light, pattern-predictable) or adapters that translate TOON-like files to YAML/JSON on-the-fly; training exposure so models learn these syntaxes

- Assumptions/dependencies: Demonstrated token savings without tool-use penalties; provider willingness to include format exposure in training

- Generalizing file-native navigation to non-SQL domains

- Sector: APIs/tool orchestration, robotics, energy/industrial control

- What to build: Partitioned, file-native catalogs for large API surfaces (e.g., ToolLLM-scale), robot skill libraries, and grid/SCADA command schemas; agents that reliably discover and act across thousands of tools

- Assumptions/dependencies: Safety and guardrails for action execution; validation beyond SQL; domain-specific navigator rules

- Enterprise agent governance (privacy, cost, and tool-access policy)

- Sector: compliance, security, cost management

- What to build: Policies and enforcement for which files agents may grep; token budgets and alerts; review workflows for Navigator.md updates; audit trails for tool calls

- Assumptions/dependencies: Integration with IAM, DLP, and observability stacks; organizational change management

- Dynamic architecture selection middleware

- Sector: AI platforms

- What to build: Middleware that profiles a model’s tool-use behavior in real time and switches between file-native and prompt-based delivery; adaptive selection of formats per query/model

- Assumptions/dependencies: Low-latency behavior profiling; robust fallbacks; acceptance of hybrid context strategies

- Commercial “Agent Schema Navigator” products

- Sector: data platforms, analytics tooling

- What to build: Turnkey products that generate YAML catalogs, Navigator.md indices, domain partitions, and telemetry dashboards for token and accuracy; connectors for major DWs (Snowflake, BigQuery, Databricks) and lakehouses

- Assumptions/dependencies: Vendor partnerships; market need for agent-ready catalogs; enterprise security and compliance readiness

- Context engineering benchmarks and certifications

- Sector: academia/industry consortia

- What to build: Cross-provider, multi-task benchmarks measuring architecture, format, and scale effects (beyond SQL); certification badges for “agent-ready” repositories and data estates

- Assumptions/dependencies: Funding and neutral hosting; representative workloads; data-sharing agreements

- Multi-turn correction protocols for structured operations

- Sector: agent frameworks

- What to build: Protocols and toolchains enabling iterative retrieval-correction (e.g., ReAct-style loops) with navigator-informed self-checks; evaluate gains over single-shot behaviors noted in the paper’s limitations

- Assumptions/dependencies: Careful safety design to avoid tool overuse; empirical validation of gains across models

- Cross-provider scale validation and best-practice libraries

- Sector: research, platform engineering

- What to build: Replicate 10,000-table navigation across providers and models; publish best-practice libraries (grep pattern cookbooks, partitioning blueprints, navigator templates) per model tier

- Assumptions/dependencies: Compute and licensing; willingness of vendors to cooperate; reproducibility across diverse enterprise schemas

Glossary

- AgentBench: A benchmark for evaluating LLMs as agents across diverse environments. "Agent evaluation has advanced through benchmarks such as SWE-bench [14] for software engineering tasks and AgentBench [15] for evaluating LLMs across diverse agent environments."

- ANOVA (One-way ANOVA): A statistical test used to compare means across multiple groups to determine if there are significant differences. "One-way ANOVA: F(10, 8390) = 30.55, p < 0.001."

- Benjamini-Hochberg correction: A statistical procedure to control the false discovery rate when conducting multiple comparisons. "Statistical tests include independent t-tests, chi-square tests, and ANOVA with Benjamini-Hochberg correction [9]."

- BIRD: A large-scale, real-world text-to-SQL benchmark featuring noisy schemas. "BIRD [17] which introduced large-scale, real-world databases with noisy schemas."

- Chain-of-Table: A method where LLMs iteratively transform tables during the reasoning process. "Chain-of-Table [22] demonstrated that LLMs can iteratively transform tables during reasoning."

- Chain-of-thought prompting: A technique that elicits step-by-step reasoning from LLMs to improve performance on complex tasks. "Chain-of-thought prompting [13] demonstrated that explicit reasoning steps improve performance on complex tasks, a finding relevant to our complexity tier analysis."

- Claude Agent SDK: A toolkit for building file-based agents using Claude models, with specific constraints on configuration. "The Claude Agent SDK, used for file-based Claude conditions, does not expose temperature control; OpenAI GPT-5 models enforce a minimum temperature of 1; all other conditions set temperature=0 explicitly via provider APIs."

- Context engineering: The systematic optimization of information payloads for LLMs, including retrieval, processing, and management. "A comprehensive survey [12] covering over 1,400 papers establishes context engineering as the systematic optimisation of information payloads for LLMs, encompassing retrieval, processing, and management."

- Common Table Expression (CTE): A named temporary result set in SQL used to simplify complex queries and enable modular query design. "CTEs, window functions"

- Data Definition Language (DDL): The subset of SQL used to define and modify database schema objects. "When initial searches returned too many matches, agents cycled through patterns from known formats (DDL, JSON, YAML) before finding correct TOON patterns"

- DIN-SQL: A text-to-SQL approach using decomposed in-context learning with self-correction mechanisms. "DIN-SQL [4] achieved strong results by decomposing complex queries"

- Domain partitioning: Splitting a large schema into domain-based partitions to maintain efficient navigation and bounded context. "Domain partitioning enabled high navigation accuracy at 10,000 tables."

- File-based semantic layers: Structured documents that agents read through native file tools to obtain context for operations. "practitioners are increasingly adopting file-based semantic layers: structured documents that agents read through native file tools such as grep and read operations."

- File-native agents: Agents that retrieve and use context via native file operations rather than embedding all context in prompts. "File-native agents scale to 10,000 tables through domain-partitioned schemas while maintaining high navigation accuracy."

- Frontier-tier models: High-capability proprietary LLMs from leading labs, often outperforming open-source models on complex tasks. "file-based context retrieval improves accuracy for frontier-tier models (Claude, GPT, Gemini; +2.7%, p=0.029)"

- Gorilla: A system demonstrating connectivity between LLMs and massive APIs for real-world actuation. "Gorilla [16] showed that LLMs can be connected with massive APIs for real-world actuation."

- Grep tax: A phenomenon where unfamiliar or non-standard formats cause inefficient grep-based retrieval, increasing token consumption. "identification of the 'grep tax' phenomenon where compact formats consume more runtime tokens."

- Jaccard similarity: A metric measuring the similarity between two sets, used here to evaluate result set correctness. "We use Jaccard similarity over result sets: J(A,G) = |A ∩ G| / |A ∪ G|, where A is the agent-generated result set and G is the ground truth."

- Lost in the middle: An LLM context phenomenon where information in the middle of long contexts is used less effectively. "Notably, the 'lost in the middle' phenomenon [6] demonstrates that LLMs struggle to use information positioned in context middles, motivating selective retrieval approaches like our file-native architecture."

- MAC-SQL: A multi-agent collaborative framework for text-to-SQL tasks. "MAC-SQL [18] demonstrated multi-agent collaborative approaches to text-to-SQL."

- Model Context Protocol: A standard specifying how agents access external resources and context. "The Model Context Protocol and similar standards have formalised how agents access external resources [2]."

- Partitioned architecture: A schema organization strategy that bounds per-query context by dividing data into partitions. "The partitioned architecture keeps per-query context bounded regardless of total schema size."

- Prompt engineering: The practice of designing and refining prompts to guide LLM behavior. "Context engineering has recently been formalised as a discipline distinct from prompt engineering."

- ReAct framework: A paradigm where models interleave reasoning (thought) and acting (tool use). "The ReAct framework [1] established the reasoning-plus-acting paradigm where models interleave chain-of-thought reasoning with tool invocations."

- Retrieval-augmented approaches: Methods that enhance LLMs by retrieving external knowledge to supplement the prompt. "Rather than relying solely on retrieval-augmented approaches or embedding context directly in prompts, practitioners are increasingly adopting file-based semantic layers"

- Schema linking: Explicitly connecting natural language expressions to schema elements in text-to-SQL tasks. "Recent work has also questioned whether explicit schema linking remains necessary with capable models [24]."

- Spider benchmark: A widely used cross-domain text-to-SQL benchmark for evaluating generalization. "The Spider benchmark [3] established cross-domain evaluation standards"

- StructGPT: A framework enabling LLMs to reason over structured data via iterative reading and reasoning. "StructGPT [21] proposed an iterative reading-then-reasoning framework for structured data including databases and knowledge graphs."

- SWE-bench: A benchmark for assessing whether LLMs can resolve real-world software engineering issues on GitHub. "Agent evaluation has advanced through benchmarks such as SWE-bench [14] for software engineering tasks"

- Text-to-SQL: The task of translating natural language queries into SQL statements. "Text-to-SQL translation has advanced significantly with LLMs."

- TPC-DS benchmark: An industry-standard decision support benchmark used to derive query patterns. "Queries were derived from the TPC-DS benchmark [8] query patterns and curated to ensure coverage across L1-L5 complexity tiers."

- Token-Oriented Object Notation (TOON): A compact serialization format designed to be efficient for LLM context consumption. "TOON (Token-Oriented Object Notation) [10] is a compact serialisation format designed specifically for LLM context efficiency."

- ToolACE: A method advancing LLM function calling across real-world APIs. "ToolLLM [23] and ToolACE [25] have further advanced LLM function calling across thousands of real-world APIs."

- ToolLLM: A system facilitating LLM mastery over a large number of real-world APIs. "ToolLLM [23] and ToolACE [25] have further advanced LLM function calling across thousands of real-world APIs."

- Toolformer: A training approach where LLMs learn to use tools autonomously. "Toolformer [11] demonstrated that LLMs can learn to use tools autonomously"

- Window functions: Advanced SQL functions that perform calculations across sets of rows related to the current row. "CTEs, window functions"
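For readers unfamiliar with the Benjamini-Hochberg correction defined in the glossary above, the standard step-up procedure can be sketched as follows. The p-values in the usage note are made-up illustration values, not results from the paper, and this is not the authors' analysis code.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float], alpha: float = 0.05) -> List[bool]:
    """Return, for each p-value, whether its hypothesis is rejected at FDR level alpha.

    Step-up rule: sort the p-values, find the largest rank k with
    p_(k) <= (k / m) * alpha, and reject every hypothesis ranked 1..k.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    largest_passing_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            largest_passing_rank = rank

    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= largest_passing_rank:
            rejected[idx] = True
    return rejected
```

With the illustrative inputs `[0.02, 0.021, 0.03, 0.5]`, the smallest p-value misses its own threshold of 0.0125, but it is still rejected because a later rank passes; that is the step-up behaviour that distinguishes this procedure from checking each p-value against its own cutoff.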
