Bootstrapping Life-Inspired Machine Intelligence: The Biological Route from Chemistry to Cognition and Creativity

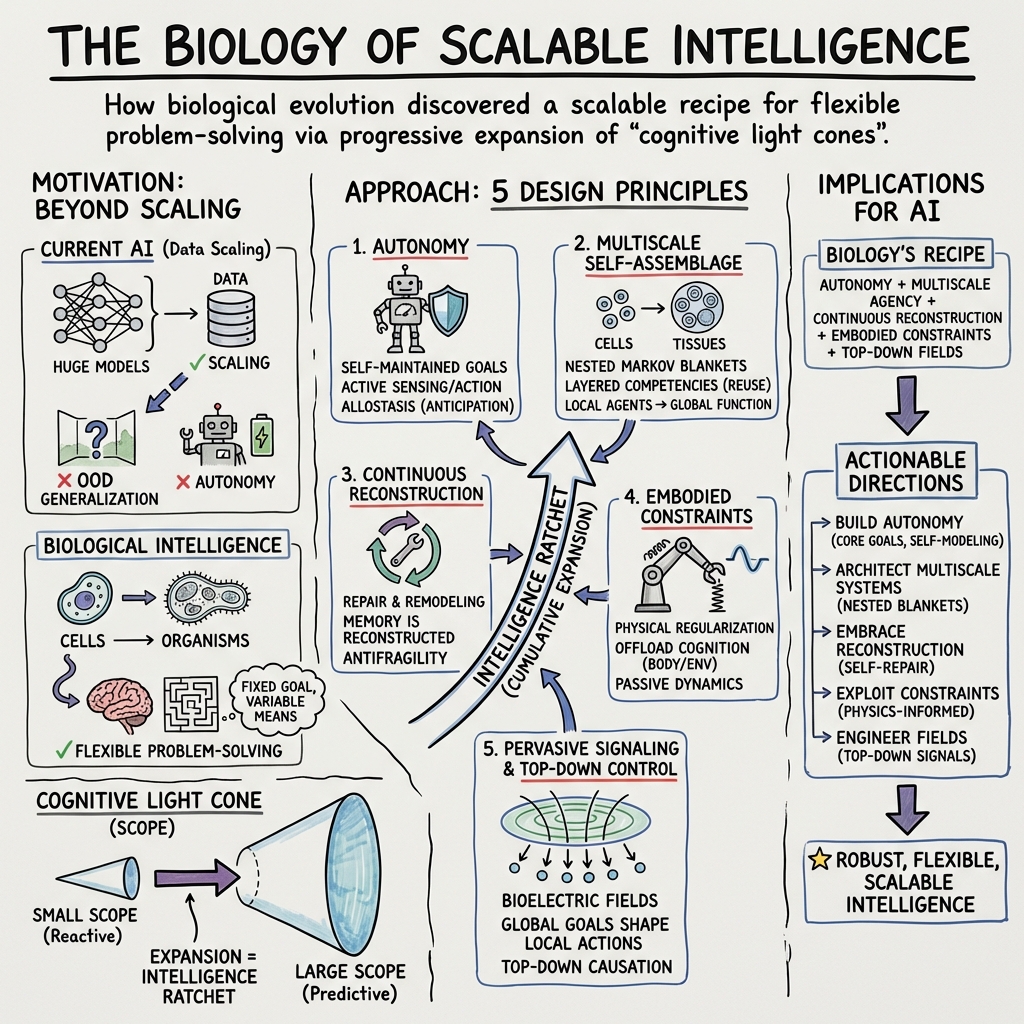

Abstract: Achieving advanced machine intelligence remains a central challenge in AI research, often approached through scaling neural architectures and generative models. However, biological systems offer a broader repertoire of strategies for adaptive, goal-directed behavior - strategies that emerged long before nervous systems evolved. This paper advocates a genuinely life-inspired approach to machine intelligence, drawing on principles from biology that enable robustness, autonomy, and open-ended problem-solving across scales. We frame intelligence as flexible problem-solving, following William James, and develop the concept of "cognitive light cones" to characterize the continuum of intelligence in living systems and machines. We argue that biological evolution has discovered a scalable recipe for intelligence and for the progressive expansion of organisms' "cognitive light cone" and their predictive and control capacities. To explain how this is possible, we distill five design principles - multiscale autonomy, growth through self-assemblage of active components, continuous reconstruction of capabilities, exploitation of physical and embodied constraints, and pervasive signaling enabling self-organization and top-down control from goals - that underpin life's ability to creatively navigate diverse problem spaces. We discuss how these principles contrast with current AI paradigms and outline pathways for integrating them into future autonomous, embodied, and resilient artificial systems.

Explain it Like I'm 14

Bootstrapping Life-Inspired Machine Intelligence — A Simple Explanation

What is this paper about?

This paper argues that to build truly smart machines, we should learn not just from brains and neural networks, but from life as a whole. The authors show how living things—from single cells to humans—solve problems flexibly and keep getting better at it over time. They introduce a big idea called the “cognitive light cone” to describe how far an organism (or a machine) can plan, care, remember, and control things across space and time. Then they extract five design principles from biology that could guide the next generation of AI and robots.

What questions are they asking?

- How can we define intelligence in a way that works for bacteria, animals, humans, and machines?

- What “recipe” did evolution discover that lets life scale up its intelligence—from chemistry to cells to minds?

- Why don’t today’s AIs match biology’s flexibility and resilience, and what should we change to fix that?

How did they study it?

This is a perspective paper (an argument and framework), not a lab experiment. The authors:

- Gather examples from biology showing surprising problem-solving (like tadpoles seeing with eyes on their tails).

- Propose the “cognitive light cone” to measure the scope of an agent’s goals and control.

- Use ideas like “Markov blankets” (borders between an organism and its world) and “generative models” (internal world-simulators) to explain autonomy.

- Compare biological strategies with current AI and outline five life-inspired design principles for future machines.

Key ideas explained simply

- Intelligence as flexible problem-solving: Think “fixed goal, variable means.” If your goal is “get water,” a flexible agent can do it in many ways—even in a new situation.

- Cognitive light cone: Imagine a flashlight beam showing the size of what you can care about, plan for, and control across space and time. A bacterium’s beam is tiny (nearby sugar, right now). A human’s beam is huge (personal plans, community goals, even concerns about the planet’s future).

- Markov blanket: Like your skin plus your senses and muscles. It marks the boundary between you and the world. Sensors bring information in; actions push influence out. This lets you keep your “self” stable while still interacting.

- Generative (world) model: Your inner simulator. It predicts what will happen if you act. It doesn’t need to be perfect—just good enough to guide smart actions.

- Multiscale intelligence: Living systems are “teams of teams.” Cells are tiny agents with their own goals, but they cooperate to form tissues, organs, and whole bodies with larger, shared goals.
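The "fixed goal, variable means" idea and the inner simulator can be illustrated with a toy planner. This is only a sketch, not something taken from the paper: the `WORLD` dictionary, its state and action names, and the `plan` function are all made up for illustration. The agent searches its internal model for a route to the goal, and when one action stops working it finds a different route to the same goal.

```python
from collections import deque

# Hypothetical internal model: for each state, the actions available
# and the state each action is predicted to lead to.
WORLD = {
    "thirsty": {"walk_to_tap": "at_tap", "walk_to_well": "at_well"},
    "at_tap": {"turn_tap": "has_water"},
    "at_well": {"lower_bucket": "has_water"},
    "has_water": {},
}


def plan(model, start, goal, disabled=frozenset()):
    """Breadth-first search through the agent's internal model.

    Returns the shortest list of actions from start to goal, skipping
    any action named in `disabled`, or None when no route exists.
    """
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, predicted_state in model[state].items():
            if action in disabled or predicted_state in seen:
                continue
            seen.add(predicted_state)
            queue.append((predicted_state, actions + [action]))
    return None


normal = plan(WORLD, "thirsty", "has_water")
tap_broken = plan(WORLD, "thirsty", "has_water", disabled={"turn_tap"})
print(normal)      # ['walk_to_tap', 'turn_tap']
print(tap_broken)  # ['walk_to_well', 'lower_bucket']
```

The goal never changes between the two calls; only the means do. A real organism would also have to learn and update the model, which this sketch leaves out.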

Real-life examples of flexible problem-solving

- Tadpoles with eyes on their tails can still learn to see and respond to light, even though their bodies are rearranged. Their systems adapt and make the new wiring useful.

- Planarian flatworms exposed to a chemical that destroys their heads can regenerate new heads that tolerate it, apparently by changing which genes are active rather than by following a pre-written plan.

- In newts, when cell sizes change dramatically, their tissues still rebuild the correct organ shapes by switching strategies (few big cells can do the job many small cells normally do).

These show that life reuses parts, rewires functions, and keeps the goal in sight, even when things change.

Five design principles biology uses (and AI should learn from)

- Autonomy: Living things set and protect core goals (like staying alive), choose what to sense, and learn actively. They don’t wait for labeled data; they explore and figure things out.

- Growth through self-assembly of active parts: Bodies and minds “grow themselves” out of smaller, agent-like parts (cells) that cooperate. Intelligence scales up by organizing these active parts, not by bolting on passive pieces.

- Continuous reconstruction of capabilities: Life can repair, adapt, and repurpose. If parts break or the environment changes, organisms rebuild functions and find new ways to reach the same goals.

- Use physical and embodied constraints: Bodies help thinking. Creatures exploit their shape and materials (like springy tendons or the way fluids flow) to make control easier and cheaper.

- Pervasive signaling and top-down goals: Parts communicate constantly. Signals (chemical, electrical, mechanical) help local pieces self-organize, while high-level goals guide lower-level actions—like a team aligning under a shared plan.
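The last principle, local signaling combined with a shared top-down goal, can be sketched with a ring of simple "cells". This is a toy model under stated assumptions, not the paper's method: the update rule, the `coupling` and `goal_gain` values, and the function names are hypothetical. Each cell sees only its two neighbours and a broadcast goal value, yet the whole ring settles on the goal and recovers after one cell is knocked far off.

```python
def step(states, goal, coupling=0.3, goal_gain=0.2):
    """One synchronous update of a ring of cells.

    Each cell moves toward the average of its two neighbours (local
    signaling) and toward the broadcast goal (top-down guidance).
    """
    n = len(states)
    updated = []
    for i, x in enumerate(states):
        left = states[i - 1]
        right = states[(i + 1) % n]
        neighbour_mean = (left + right) / 2
        updated.append(x + coupling * (neighbour_mean - x) + goal_gain * (goal - x))
    return updated


def run(states, goal, steps=100):
    """Apply `step` repeatedly and return the final cell states."""
    for _ in range(steps):
        states = step(states, goal)
    return states


cells = [0.0, 10.0, -4.0, 7.0, 2.0]
settled = run(cells, goal=5.0)

# Damage one cell and let the collective repair the pattern.
damaged = list(settled)
damaged[2] = -50.0
repaired = run(damaged, goal=5.0)
print(settled)
print(repaired)
```

With these gains, each cell's distance from the goal shrinks by at least a fifth per step, so no central controller ever has to address an individual cell.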

What are the main takeaways and why do they matter?

- Intelligence is a spectrum across life, not a special switch that only humans have.

- The “cognitive light cone” captures how far an agent can care, plan, and control. Over evolution (and sometimes within a lifetime), living systems expand this cone—an “intelligence ratchet” that opens up new problem spaces (like moving from chemistry, to body-building, to behavior, to language).

- Biology’s success comes from autonomy, self-assembly, repair, embodiment, and rich communication across scales. These features make life robust and creative.

- Current AI is powerful but often narrow: it’s usually trained on static data, lacks a body, struggles with self-repair, and doesn’t truly set or protect its own goals. That’s why it can fail outside its training (“out of distribution”).

- If we bring biological principles into AI and robotics, we can build systems that are more adaptable, independent, and resilient—better at handling surprises in the real world.

What could this change in the future?

If AI follows biology’s playbook, we might see machines that:

- Set and pursue meaningful goals safely, while exploring to learn on their own.

- Grow or self-assemble from active parts, repair themselves, and adapt when parts fail.

- Use their bodies’ physics to simplify control (like animals do), saving energy and computation.

- Coordinate across many levels (from tiny modules to whole systems) using constant signaling and top-down guidance from goals.

This approach could lead to more trustworthy robots, smarter autonomous systems, and AI that keeps improving in open-ended ways—more like life does. It also raises important safety and ethics questions: richer, more autonomous systems can have richer failure modes. So, along with copying biology’s strengths, we need careful designs that keep goals aligned and stable across all scales.

In short, the paper says: Don’t just scale up neural nets—scale up life’s strategies. That’s how we get machines that can truly think, adapt, and create over the long haul.

Knowledge Gaps

Key knowledge gaps, limitations, and open questions

Below is a single list of concrete gaps and unresolved questions that future researchers could address to advance the paper’s life-inspired machine intelligence agenda.

- Quantifying the “cognitive light cone”: develop formal, testable metrics for the size and scope of an agent’s goals, memory, prediction, and control across space, time, and unconventional problem spaces (e.g., morphospace, transcriptional space), and validate these across cells, tissues, organisms, and artificial systems.

- Standardized benchmarks for flexible problem-solving in unconventional spaces: design reproducible tasks, datasets, and evaluation criteria that probe flexibility under internal reconfiguration (e.g., sensor remapping, component failure) in morphogenesis-like settings and other non-3D problem spaces.

- Operationalizing nested Markov blankets in practice: create methods to infer the statistical boundaries of agency from multimodal data (bioelectric, chemical, mechanical, neural) and to measure emergent autonomy and goal-directedness in collectives; test when and how blankets merge or split.

- Translating autopoietic goal formation to AI: specify algorithms that self-generate and protect core goals (non-updatable priors) while learning other beliefs, and demonstrate these in embodied agents that must maintain viability under resource constraints.

- Implementing allostasis and predictive control in machines: develop architectures and training regimes (e.g., active inference-style controllers) that anticipate deviations from goals and act before errors occur; quantify performance and robustness gains over purely reactive systems.

- Mechanisms for “top-down control from goals”: identify concrete signaling architectures that allow distal, abstract goals to modulate lower-level behaviors across scales in synthetic agents; evaluate stability, latency, and failure modes of such top-down pathways.

- Engineering pervasive signaling substrates: design and test non-neural information media (e.g., bioelectric-like networks, distributed chemical signaling, mechanical coupling) in robots or smart materials to enable self-organization and multiscale coordination.

- Continuous reconstruction of capabilities in machines: devise methods for self-repair, self-reconfiguration, and competency preservation when internal parts change (akin to regeneration and adaptation under channel blockers), and define performance tests under controlled internal perturbations.

- Multiscale competency alignment without centralized control: develop behavior-shaping strategies and objective functions that align local agents’ goals with organism-level goals; quantify alignment efficiency, energy cost, and resilience under adversarial internal dynamics.

- Failure mode taxonomy and mitigation: formalize cancer-like dissociation, psychopathology analogs, and aging in multiscale cognitive systems; create monitoring, diagnosis, and intervention mechanisms to maintain coherence across nested blankets and goal hierarchies.

- Action-centric generative models in AI: provide concrete design patterns and training procedures that prioritize predictions of action consequences (corollary discharge, proprioceptive targets) over veridical world modeling; measure generalization and sample efficiency impacts.

- Evidence and protocols for learning below the cellular level: produce reproducible experiments showing learning and memory in chemical networks; define tasks, stimuli, and analysis for causal emergence and history-dependent control in subcellular systems.

- Embodiment as a constraint to enhance generalization: quantify how physical affordances and constraints improve out-of-distribution performance; identify minimal embodiment features needed to realize gains and how to incorporate them into simulators and real robots.

- Convergence claims across substrates: establish rigorous geometric and statistical tests to compare internal representations of biological and artificial systems; define shared manifolds, alignment metrics, and conditions under which convergent solutions arise.

- Social-scale agency and blankets: determine how collectives (colonies, teams, cultures) form emergent goals and boundaries; engineer synthetic collectives with measurable goal alignment, conflict resolution, and scalable cognitive light cone expansion.

- Measuring the “intelligence ratchet”: create longitudinal metrics and experimental ecosystems to quantify how an agent’s cognitive light cone expands over time through exploration, problem invention, and cumulative capability building.

- Resource–generalization trade-offs: empirically characterize how time and energy constraints drive coarse-graining and improve generalization; develop models linking resource budgets to abstraction quality and control efficacy.

- Learning hierarchical goal structures: design algorithms that discover and prioritize layered goals (from homeostatic to abstract) and can suppress immediate self-preservation for higher-order aims in a controlled and safe manner; define safety guarantees.

- Operationalizing valence and the “radius of care”: propose measurable constructs for valence, motivation, and care radius in artificial agents; link these to action policies and cognitive light cone size.

- Concrete pathways from principles to prototypes: provide detailed architectural blueprints, materials choices, and integration steps to instantiate the five design principles in embodied AI or soft robots; include ablation studies isolating each principle’s contribution.

- Bridging current ML with embodied, autonomous learning: specify data regimes, curricula, and closed-loop training infrastructures that enable self-supervised, curiosity-driven exploration in physical or high-fidelity simulated environments.

- Skill pivoting across nested problem spaces: develop methods to transfer and adapt policies learned in one space (e.g., metabolic control) to another (e.g., morphological construction); define mapping operators and transfer efficiency metrics.

- Engineering proxies for “final cause”: articulate implementable analogs of Aristotelian final causes in AI (e.g., persistent target morphologies, viability constraints, narrative identity variables) and test how they guide long-range planning and self-maintenance.

- Robot analogs of tadpole eye-transplant adaptation: build agents that can exploit ectopic or reconfigured sensors without retraining from scratch; benchmark functional recovery, task performance, and adaptation speed.

- Bioelectric and gap junction analogs in synthetic media: specify hardware and software designs that emulate bioelectric signaling for collective memory and goal storage; test scalability, noise tolerance, and reconfigurability.

- Clarify principle count and scope: resolve the inconsistency between the “five” and “six” design principles mentioned; assess whether the set is complete, how principles interact, and which are necessary versus sufficient for scalable intelligence.

- Formal limits of William James’s definition: develop a mathematical formalism that avoids over-broadness (e.g., excluding trivial computations) while capturing flexible problem-solving; propose falsifiable criteria and edge-case tests.

- Governance for autonomous, self-assembling agents: outline risk models, auditing protocols, and containment strategies for agents with expanding cognitive light cones; specify intervention points across scales and ethical frameworks for deployment.
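Several of the gaps above call for tests under internal reconfiguration, such as sensor remapping or robot analogs of the tadpole eye-transplant result. As a minimal sketch of what such a test could look like, the toy below keeps the goal fixed (reach position 0 on a line) and flips the sign of the agent's only sensor. Everything here is a hypothetical illustration: `LineWorld`, `AdaptiveAgent`, the gain, and the recovery rule are assumptions, not a benchmark proposed in the paper. The agent compares the sensory change it predicted from its own action with the change it observed, in the spirit of a corollary discharge, and flips its assumed wiring when the two disagree.

```python
class LineWorld:
    """A point on a line; the sensor may be wired with flipped polarity."""

    def __init__(self, position, polarity=1):
        self.position = position
        self.polarity = polarity  # +1 normal wiring, -1 remapped sensor

    def sense(self):
        return self.polarity * self.position

    def act(self, move):
        self.position += move


class AdaptiveAgent:
    """Drives the sensed value to zero; optionally re-learns sensor polarity."""

    def __init__(self, gain=0.5, adapt=True):
        self.gain = gain
        self.adapt = adapt
        self.belief = 1  # assumed sensor polarity

    def step(self, world):
        before = world.sense()
        move = -self.gain * self.belief * before
        predicted_change = self.belief * move  # prediction from own action
        world.act(move)
        observed_change = world.sense() - before
        # Opposite signs mean the assumed wiring is wrong: flip the belief.
        if self.adapt and predicted_change * observed_change < 0:
            self.belief = -self.belief


def final_error(adapt, polarity, steps=30):
    """Distance from the goal (position 0) after a fixed number of steps."""
    world = LineWorld(position=8.0, polarity=polarity)
    agent = AdaptiveAgent(adapt=adapt)
    for _ in range(steps):
        agent.step(world)
    return abs(world.position)


print(final_error(adapt=True, polarity=-1))   # recovers after one bad step
print(final_error(adapt=False, polarity=-1))  # runs away from the goal
```

A benchmark built along these lines would report recovery time and final error for each perturbation, which is one way to make "functional recovery" and "adaptation speed" measurable.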

Glossary

- Active inference: A framework where actions and perceptions minimize prediction errors to achieve goals. "active sensing in active inference: they generate prediction errors when the expected state is not yet realized, which in turn drive actions to minimize these errors"

- Active matter: Collections of components that consume energy to move or exert forces, producing emergent behaviors. "a wide diversity of agents, including biological organisms, artificial systems, robots, cyborgs, and forms of active matter"

- Adaptive immunity: The vertebrate immune subsystem that learns to recognize specific pathogens and remembers them. "animal bodies coordinate multiple internal systems - circulatory, excretory, microbiota regulation, adaptive immunity, and sensory-motor systems"

- Allostatic mechanisms: Predictive regulatory processes that anticipate needs and adjust internal states before deviations occur. "goal achievement can also be (and in living organisms, often is) achieved through allostatic mechanisms - which anticipate discrepancies from goals and compensate for them before they occur"

- Anatomical morphospace: The conceptual space of possible anatomical forms or target body structures an organism can achieve. "for example specific patterns of whole organs (a goal in anatomical morphospace)"

- Autopoiesis: The self-producing and self-maintaining organization of living systems. "they are autopoietic: they must generate and maintain the conditions necessary for their own existence and integrity"

- Axolotls: Regenerative salamanders often used to illustrate large-scale pattern restoration in biology. "such as occurs in axolotls (right panel)"

- Bifurcation points: Critical points in a system where small changes can lead to qualitatively different behaviors or decisions. "with a few bifurcation points that embody simple decisions"

- C. elegans: A model nematode species used in neuroscience and systems biology. "In C. elegans, a dynamical internal model describes - or more precisely, prescribes - largely preconfigured transition dynamics"

- Causal emergence: The phenomenon where higher-level system descriptions exhibit effective causal power not evident at lower levels. "and causal emergence (higher levels of control) linked to their learning history [70]"

- Cognitive light cone: The functional boundary representing the spatial and temporal scope of an agent’s goals, predictions, and control. "The 'cognitive light cone' [19] indicates the size, in space/time or in other spaces, of the largest goal state a system can pursue"

- Coarse-graining: Simplifying detailed information into higher-level summaries to enable efficient prediction and control. "they are forced to learn to coarse-grain signals"

- Convergent evolution: Independent evolution of similar features in distinct lineages due to similar selective pressures. "In nature, this is reflected in convergent evolution: similar solutions, such as the repeated emergence of eyes, have arisen independently across lineages"

- Corollary discharges: Internal copies of motor commands used to predict and cancel self-generated sensory inputs. "Sensory predictions, often implemented via corollary discharges, allow organisms to distinguish self-generated stimuli from external events"

- Cybernetic hierarchy: A layered control framework in which regulation and goals operate across multiple organizational levels. "about the level, along a cybernetic hierarchy [13], of evolutionary search algorithms"

- Ectopic: Occurring in an abnormal position or place within an organism. "when eyes are transplanted to ectopic locations-such as the tail"

- Electrophysiological sharing: Exchange of bioelectric signals among cells that coordinates collective behavior and memory. "groups of cells merged together via electrophysiological sharing of information"

- Epistemic goals: Objectives aimed at reducing uncertainty and acquiring information (e.g., curiosity-driven exploration). "epistemic goals - those that reduce uncertainty, promote curiosity, and foster creative exploration"

- Final cause: An Aristotelian notion of purpose, here referring to self-generated goals guiding biological processes. "final cause (the self-generated purpose or goal)"

- Gap junction: Intercellular channels that allow direct electrical and chemical communication between adjacent cells. "ion channel, transporter, and gap junction expression"

- Gene regulatory network: Interacting genes and regulatory elements that control gene expression patterns. "even subcellular gene regulatory networks"

- Generative model: An internal model that predicts sensory inputs and consequences of actions to guide behavior. "referred to in various ways (with distinctions that are not crucial here), e.g., a generative model, predictive model, or world model"

- Homeostasis: Regulatory processes that maintain internal variables within viable ranges. "the setpoints pursued by a homeostatic system"

- Infotaxis: A strategy of actively seeking information, treated as a drive that scales into problem-finding behavior. "Primitive systems' basic drive for information (infotaxis) leads, when scaled up, to the ability to find new problems to solve"

- Interoceptive predictions: Predictions about internal bodily states used to evaluate actions and maintain regulation. "Interoceptive predictions evaluate whether a course of action is beneficial or harmful to the organism"

- Laplacean Daemons: A reference to hypothetical omniscient predictors, used here to contrast with resource-limited organisms. "they don't have luxury of being micro-reductionists or Laplacean Daemons"

- Markov blanket: A statistical boundary separating internal and external states, mediating perception-action exchanges. "the concept of a Markov blanket [42,43]: a (statistical) boundary that mediates interactions between an organism's internal states and the external environment"

- Microbiota: The community of microorganisms living in and on organisms that influence physiology and behavior. "microbiota regulation"

- Morphogenetic control: Regulatory processes that guide the formation and maintenance of body shape and structure. "In morphogenetic control, this can result in dissociative conditions such as cancer"

- Multimodal AI: Artificial systems that process and integrate multiple data modalities (e.g., text, images, video). "generative (possibly multimodal) AI"

- Multiscale autonomy: Autonomy expressed across nested levels of organization, from cells to organisms (and beyond). "five design principles - multiscale autonomy, growth through self-assemblage of active components"

- Multiscale competency architecture: The layered organization of problem-solving abilities across biological scales. "Figure 1. Multiscale competency architecture of biology"

- Proprioceptive predictions: Expectations about one’s own body movements used for control and action initiation. "Proprioceptive predictions (e.g., anticipating that one's finger will be raised) guide adaptive motor control"

- Self-organization: The spontaneous emergence of ordered structure or function from local interactions without central control. "self-organization, robustness, and open-ended adaptation"

- Self-supervised learning: Learning from data without explicit external labels by using internally generated objectives. "Learning is largely self-supervised"

- Setpoint: A target value or preferred state that regulatory systems strive to maintain. "the setpoints pursued by a homeostatic system"

- Teleology: Explanation of phenomena by their purposes or goals; here grounded in biological processes. "providing a notion of teleology that is not mysterious but firmly grounded in the organism's own biological processes"

- Transcriptional space: The space of possible gene expression configurations navigated by regulatory processes. "transcriptional space once genes came on the scene"

- World model: An internal predictive model of the environment used to anticipate outcomes and plan actions. "e.g., a generative model, predictive model, or world model"

- Xenopus laevis: A frog species used as a model organism, especially in developmental biology. "tadpoles of the frog Xenopus laevis"
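The Markov blanket entry above has a precise counterpart in directed graphical models, where a node's blanket is its parents, its children, and its children's other parents. The sketch below computes that set for a small graph. It is an illustration under assumptions: the five-node graph and its node names are hypothetical, and the paper applies the concept to organisms rather than to this toy network.

```python
def markov_blanket(parents, node):
    """Return the Markov blanket of `node` in a directed acyclic graph.

    `parents` maps every node to the set of its parents. The blanket is
    the node's parents, its children, and its children's other parents.
    """
    children = {n for n, ps in parents.items() if node in ps}
    co_parents = set()
    for child in children:
        co_parents |= parents[child]
    blanket = set(parents[node]) | children | co_parents
    blanket.discard(node)
    return blanket


# Hypothetical perception-action loop, unrolled over one time step.
GRAPH = {
    "environment": set(),
    "sensor": {"environment"},
    "internal": {"sensor"},
    "actuator": {"internal"},
    "next_environment": {"actuator", "environment"},
}

print(markov_blanket(GRAPH, "internal"))  # the sensor and actuator nodes
```

In this toy graph the internal state is shielded from the environment by exactly the sensor and actuator nodes, which matches the informal picture of senses bringing information in and actions pushing influence out.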

Practical Applications

Below are practical applications derived from the paper’s findings and design principles. Each item names specific use cases, links to sectors, and notes tools/workflows and feasibility assumptions. Applications are grouped by deployment horizon.

Immediate Applications

The following use cases can be piloted or deployed now using existing methods and infrastructure.

- Goal-first AI specifications using the “cognitive light cone”

  - Sectors: software, AI, product management, AI governance

  - What: Introduce design checklists that explicitly define an agent’s goal scope across space/time (radius of care), protected core goals (homeostatic setpoints), and allowable flexibility (“variable means to fixed goals”).

  - Tools/workflows: Model cards, system design briefs, OKR mapping to agent goal hierarchies, evaluation sandboxes.

  - Assumptions/dependencies: Agreement on qualitative/quantitative measures of goal scope; stakeholder alignment on “protected” goals; integration into existing product lifecycles.

- Active-inference controllers for robotics and IoT (anticipatory setpoint regulation)

  - Sectors: robotics, manufacturing, energy, smart buildings

  - What: Replace purely reactive control with allostatic controllers that anticipate deviations and act preemptively (e.g., HVAC that predicts occupancy/thermal drift, robot arms that counter expected load changes).

  - Tools/workflows: ROS, MPC, Bayesian filters, time-series forecasting; simulation-in-the-loop deployment.

  - Assumptions/dependencies: Adequate sensing, reliable forecasting, and safe actuation; ability to tune priors and trade off epistemic vs. pragmatic actions.

- Damage-aware adaptive robotics (morphological flexibility)

  - Sectors: logistics, warehouse automation, field robotics

  - What: Behavior libraries that reassign functions and reinterpret signals when sensors/actuators fail or are reconfigured (e.g., visual servoing when LiDAR degrades, using joint torque feedback for tactile estimation).

  - Tools/workflows: Redundant modalities, self-diagnosis routines, fallback policies, rapid domain randomization for OOD adaptation.

  - Assumptions/dependencies: Hardware redundancy, fault detection coverage, safe failure modes; operator acceptance of variable behavior.

- Modular swarm workflows with nested autonomy

  - Sectors: agriculture, mining, environmental monitoring, disaster response

  - What: Multi-agent policies that align local agent goals with global mission goals via goal-broadcast channels (pervasive signaling) and nested “blankets” (team-cell-task hierarchies).

  - Tools/workflows: Multi-agent RL, event buses/pub-sub, consensus protocols, goal arbitration layers.

  - Assumptions/dependencies: Reliable low-latency communications, robust identity and synchronization across agents, clear global mission definitions.

- Flexible problem-solving benchmarks (OOD generalization with fixed goals)

  - Sectors: academia, AI evaluation, standards

  - What: Benchmark suites where tasks keep goals fixed but systematically alter constraints/tools (missing tools, reconfigured sensors, time/energy budgets) to measure flexible means.

  - Tools/workflows: Open-source task generators (procedural variations), reproducible evaluation harnesses, metrics for “creative re-use” and goal fidelity.

  - Assumptions/dependencies: Community adoption; careful task design to avoid leakage; compute for large-scale evaluation.

- Pervasive signaling architectures for distributed software systems

  - Sectors: cloud software, microservices, DevOps

  - What: Event-driven systems where services “listen” to goal-level signals (SLOs, policy intents) and self-organize to meet them, instead of hard-coded pipelines.

  - Tools/workflows: Kafka/PubSub, service meshes, policy engines (OPA), intent-driven orchestration.

  - Assumptions/dependencies: Strong observability, well-specified intents, guardrails against oscillations or goal conflicts.

- Allostatic telehealth monitoring (anticipate and compensate)

  - Sectors: healthcare, digital health

  - What: Patient monitoring that predicts near-term risk (e.g., heart failure exacerbation) and initiates preemptive interventions (medication reminders, care team alerts).

  - Tools/workflows: Bayesian state-space models, smartphone/wearable data, clinician-in-the-loop workflows.

  - Assumptions/dependencies: Data quality/compliance, integration with EHRs, clear escalation protocols; privacy and regulatory approvals.

- Autonomy-aware AI safety documentation using the cognitive light cone

  - Sectors: policy, enterprise procurement, AI governance

  - What: Include qualitative/quantitative summaries of an agent’s goal scope, timescale, and cross-scale effects in model cards and risk reports; map “adjacent possible” expansions.

  - Tools/workflows: Risk registers, alignment checklists, impact modeling.

  - Assumptions/dependencies: Consensus on reporting schema; organizational will to adopt; early-stage metrics may be coarse.

- Embodied-constraint design in soft robotics

  - Sectors: robotics, medical devices, inspection

  - What: Use morphological computation (compliance, passive dynamics) to offload control complexity (e.g., soft grippers that conform to objects, passive walkers).

  - Tools/workflows: CAD/FEA, topology optimization, rapid prototyping, sim-to-real calibration.

  - Assumptions/dependencies: Material availability, accurate physical modeling; task domains that benefit from compliance.
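The anticipatory setpoint regulation described for robotics and smart buildings above can be sketched in a few lines. This is a toy under stated assumptions rather than an active-inference implementation: the one-line thermal model, the gain, the load profile, and the `simulate` function are all hypothetical. A reactive controller corrects only the error it already sees; the anticipatory one also subtracts a forecast of the incoming heat load before that load arrives.

```python
def simulate(disturbance, forecast=None, setpoint=21.0, gain=0.5):
    """Return the summed absolute setpoint error over one run.

    Toy dynamics: next temperature = temperature + control + heat load.
    Without `forecast` the controller is purely reactive. With it, the
    controller also cancels the load it expects during this step.
    """
    temperature = setpoint
    total_error = 0.0
    for t, heat in enumerate(disturbance):
        error = temperature - setpoint
        control = -gain * error          # feedback on the current error
        if forecast is not None:
            control -= forecast[t]       # anticipatory compensation
        temperature = temperature + control + heat
        total_error += abs(temperature - setpoint)
    return total_error


# Hypothetical occupancy pattern: a room fills up for ten steps.
load = [0.0] * 10 + [1.0] * 10 + [0.0] * 10

reactive = simulate(load)
perfect = simulate(load, forecast=load)
imperfect = simulate(load, forecast=[0.8 * d for d in load])
print(reactive, imperfect, perfect)
```

In this toy, a perfect forecast removes the error entirely and a forecast that captures 80% of the load still beats the reactive controller. That ordering depends on the forecast being roughly right, which is the "reliable forecasting" dependency listed above.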

Long-Term Applications

These applications require further research, scaling, or technology development before widespread deployment.

- Multiscale competency architectures for general-purpose agents

  - Sectors: software, AI platforms

  - What: Agents with protected core goals, hierarchical generative models, and top-down goal broadcasting to submodules; flexible reassembly of skills across novel problem spaces.

  - Potential products: “Self-governing” enterprise copilots, mission-level autonomy stacks.

  - Assumptions/dependencies: Robust world models, persistent memory, safe goal arbitration, alignment strategies; thorough evaluation in dynamic environments.

- Growth-like self-assembling modular robots

  - Sectors: construction, space exploration, hazardous environments

  - What: Robots that add/remove modules, self-repair, and change morphology to suit tasks (“recipes become ingredients” at hardware level).

  - Potential products: Reconfigurable field robots, on-orbit assembly systems.

  - Assumptions/dependencies: Advances in programmable matter, dock/undock reliability, energy autonomy; certification for safety-critical use.

- Bioelectric control therapies for regeneration and cancer

  - Sectors: healthcare, regenerative medicine, oncology

  - What: Clinical devices/drugs that modulate bioelectric signaling to reset morphogenetic setpoints (repair/regenerate tissues) or correct dysregulated “goals” (cancer, aging).

  - Potential products: Electroceuticals, optogenetic implants, bioelectric diagnostics.

  - Assumptions/dependencies: Strong translational evidence, precise targeting, long-term safety; regulatory pathways and ethical oversight.

- Synthetic living machines (e.g., xenobots) for environmental remediation

  - Sectors: environmental services, bioremediation

  - What: Biobots that autonomously navigate and perform tasks (microplastic aggregation, pollutant sensing) using cell-level competencies.

  - Potential products: Programmable cellular collectives tailored for cleanup tasks.

  - Assumptions/dependencies: Biocontainment, lifecycle control, public acceptance; scalable fabrication and mission planning.

- Standardized cognitive light cone metrics for regulation and procurement

  - Sectors: policy, standards bodies, risk management

  - What: Formal test suites and reporting standards that quantify an agent’s spatial/temporal goal scope and cross-scale influence.

  - Potential products: Compliance frameworks, certification labels for autonomy scope.

  - Assumptions/dependencies: Multistakeholder consensus, validated measurement methodology, legal integration.

- Top-down goal broadcasting for smart infrastructure (nested blankets)

  - Sectors: energy, transportation, smart cities

  - What: Hierarchical control where city/grid-level intents propagate to neighborhoods/devices, with local autonomy optimizing under global goals (load balancing, resilience).

  - Potential products: Intent-driven grid orchestration, adaptive traffic ecosystems.

  - Assumptions/dependencies: Secure interoperable communications, robust local controllers, defenses against emergent pathologies (instabilities).

- Self-directed personal AI that sets and updates goals over long horizons

  - Sectors: consumer software, productivity, wellness

  - What: Assistants that manage multi-year objectives, proactively seek information, and flexibly replan under changing constraints.

  - Potential products: Life-planning copilots, adaptive health/finance advisors.

  - Assumptions/dependencies: Trust and alignment, privacy-preserving persistent memory, guardrails against undesirable goal drift.

- Active matter computing and programmable tissues

  - Sectors: computing, synthetic biology, materials

  - What: Tissues or active materials performing computation via collective dynamics; leveraging “agentic parts” for robust, parallel processing.

  - Potential products: Bio-compute substrates for niche sensing/control tasks.

  - Assumptions/dependencies: Design tools for collective behavior, safe interfacing, reproducibility; ethical and regulatory frameworks.

- Education tech that scaffolds problem-space expansion

  - Sectors: education, workforce training

  - What: Curricula and platforms that teach flexible problem-solving by progressively expanding goal scope and variable means (e.g., constrained toolsets, iterative recombination).

  - Potential products: Simulation-based learning environments with “adjacent possible” pathways.

  - Assumptions/dependencies: Pedagogical validation, teacher training, equitable access; assessment metrics for flexibility and scalability.

- Financial and risk systems using allostatic control across timescales

  - Sectors: finance, insurance, operations

  - What: Agents that anticipate regime shifts and preemptively adjust portfolios or operational plans, balancing epistemic exploration (scenario probing) with pragmatic goals.

  - Potential products: Allostatic risk engines, adaptive hedging copilots.

  - Assumptions/dependencies: Reliable forecasting under uncertainty, robust governance, worst-case safeguards; explainability for compliance.

These applications translate the paper’s biological design principles—autonomy, multiscale self-assembly, continuous capability reconstruction, embodied constraints, and pervasive signaling—into concrete tools and workflows. Feasibility depends on aligning goals across scales, building reliable predictive models and interfaces (Markov blankets), and instituting governance that manages the expanded “radius of care” as systems become more capable.
