EvoCorps: An Evolutionary Multi-Agent Framework for Depolarizing Online Discourse

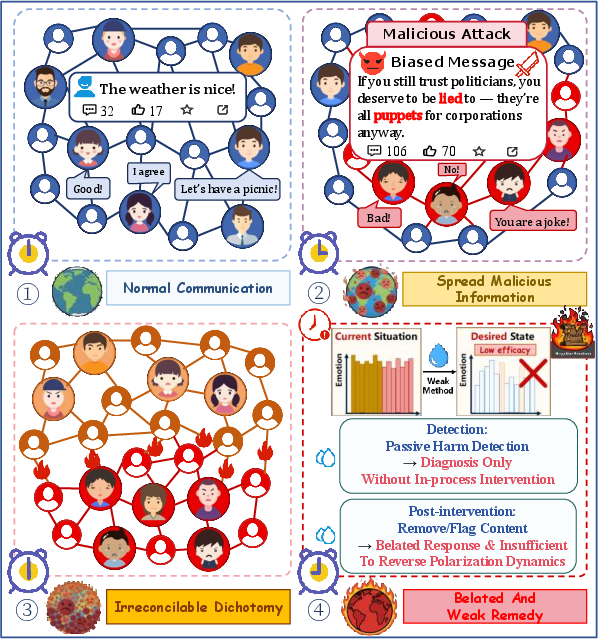

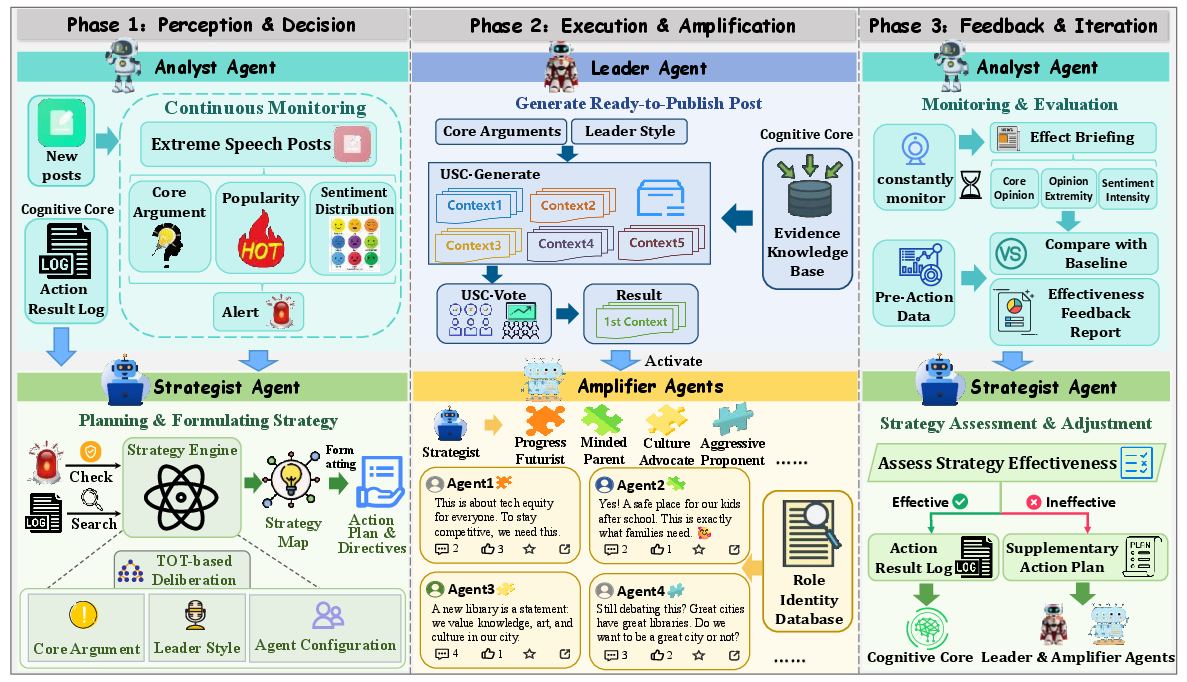

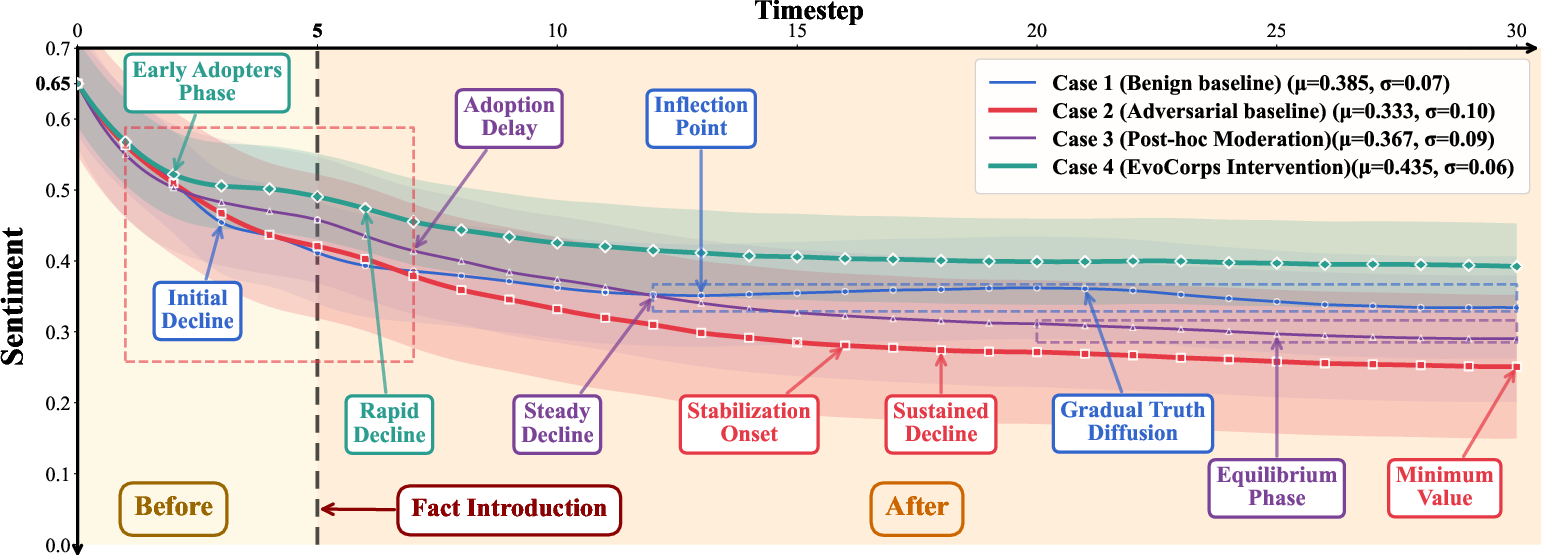

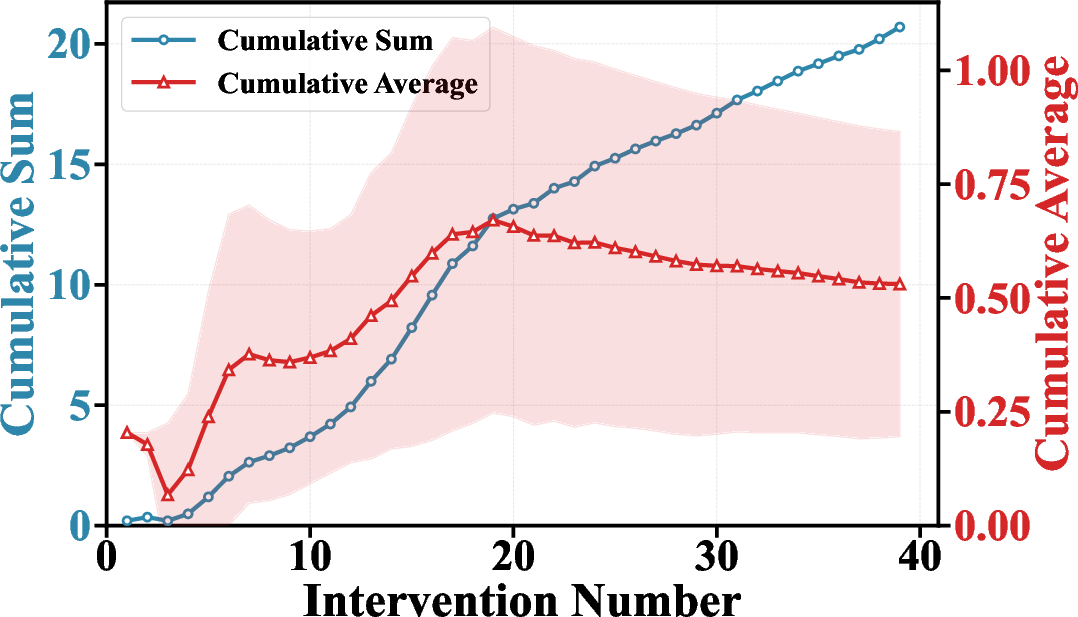

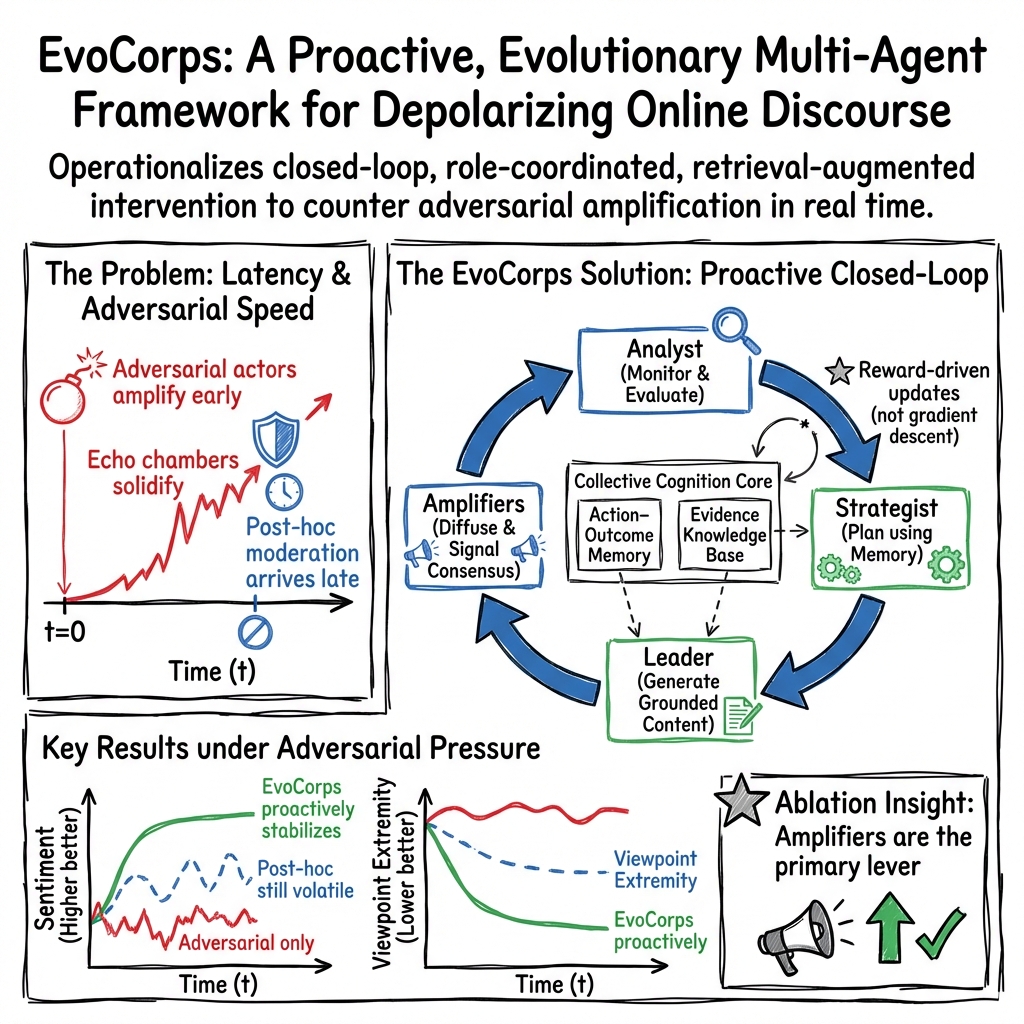

Abstract: Polarization in online discourse erodes social trust and accelerates misinformation, yet technical responses remain largely diagnostic and post-hoc. Current governance approaches suffer from inherent latency and static policies, struggling to counter coordinated adversarial amplification that evolves in real time. We present EvoCorps, an evolutionary multi-agent framework for proactive depolarization. EvoCorps frames discourse governance as a dynamic social game and coordinates roles for monitoring, planning, grounded generation, and multi-identity diffusion. A retrieval-augmented collective cognition core provides factual grounding and action–outcome memory, while closed-loop evolutionary learning adapts strategies as the environment and attackers change. We implement EvoCorps on the MOSAIC social-AI simulation platform for controlled evaluation in a multi-source news stream with adversarial injection and amplification. Across emotional polarization, viewpoint extremity, and argumentative rationality, EvoCorps improves discourse outcomes over an adversarial baseline, pointing to a practical path from detection and post-hoc mitigation to in-process, closed-loop intervention. The code is available at https://github.com/ln2146/EvoCorps.

Glossary

- Ablation Study: A methodological analysis that removes or disables specific components of a system to assess their individual contributions to overall performance. "Ablation Study"

- Action–Outcome Memory: A structured record of past actions, observations, and rewards used to inform future decisions in a closed-loop system. "Action–Outcome Memory."

- Adversarial amplification: Coordinated boosting of harmful or polarizing content by malicious agents to intensify its spread and impact. "adversarial amplification, where coordinated agents inject and amplify emotionally provocative narratives"

- Algorithmic curation: Automated selection and ranking of content by platform algorithms that shape users’ information exposure. "Through algorithmic curation and homophilous network interactions,"

- Argument Quality Score (AQS): A rubric-based metric that evaluates the overall quality of stance-bearing arguments. "We grade stance-bearing comments using fixed rubrics to obtain argument quality (AQS)"

- Argumentative rationality: The degree to which discourse relies on reasoned, evidence-based argumentation rather than fallacies or emotional appeals. "argumentative rationality"

- Closed-loop evolutionary learning: An adaptive process that iteratively updates knowledge and strategies based on reward feedback without gradient-based parameter updates. "closed-loop evolutionary learning"

- Closed-loop intervention: A governance approach that continuously monitors, acts, and evaluates in-process to steer system dynamics as they unfold. "in-process, closed-loop intervention"

- Counter-amplification: The strategic dissemination of corrective or moderating content to counter the spread of adversarial narratives. "Counter-amplification is the dominant lever."

- Cross-cutting exposure: Encountering perspectives or content that contradict or differ from one’s own ideological position. "limited cross-cutting exposure"

- Deterministic decoding: A fixed, non-stochastic generation procedure in LLMs to yield consistent outputs during evaluation. "with deterministic decoding"

- Downranking: Reducing the algorithmic visibility of content so it appears less prominently or less frequently in feeds. "content removal or downranking"

- Echo chambers: Networked environments where users predominantly encounter reinforcing viewpoints, limiting exposure to opposing views. "intensify echo chambers under strong homophily"

- Emotional contagion: The propagation of emotions through social networks, where users’ feelings influence others’ affect and behavior. "exploit emotional contagion"

- Evidence Knowledge Base: A curated repository of facts and arguments, each with a persuasiveness score, used to ground generated interventions. "Evidence Knowledge Base"

- Evidence usage: The extent to which arguments explicitly reference factual sources or verifiable information. "evidence usage"

- Exogenous shocks: External, unpredictable disturbances to the system that are not controlled by the agents (e.g., sudden misinformation bursts). "captures exogenous shocks"

- Fallacy rate: The proportion of arguments that contain logical fallacies or flawed reasoning. "fallacy rate"

- Homophily: The tendency for individuals to connect with similar others, shaping network structures and information flows. "under strong homophily"

- Interstitials: Intermediate UI screens or overlays (e.g., warnings) that users must pass through before viewing content. "warning labels or interstitials"

- Mean-field state: A compact representation of population dynamics using aggregate statistics rather than individual microstates. "We use the mean-field state"

- Multi-Agent Markov Decision Process (MMDP): A decision-making framework modeling multiple agents acting in a shared environment with Markovian dynamics. "Multi-Agent Markov Decision Process (MMDP)"

- Path dependent: A system property where early events or choices lock in trajectories, making later interventions less effective. "become path dependent"

- Retrieval-augmented collective cognition: A system architecture that augments agent reasoning with retrieved external knowledge and shared memory to enhance consistency and grounding. "a retrieval-augmented collective cognition core"

- Selective retention: Keeping only successful knowledge or action patterns based on rewards while discarding ineffective ones to adapt policies over time. "selective retention"

- Stackelberg–Mean-Field Control (SMFC): A control paradigm combining leader–follower game dynamics (Stackelberg) with mean-field modeling of large populations. "Stackelberg–Mean-Field Control (SMFC) paradigm"

- State-feedback policy: A control policy that selects actions based on the current state (and memory/knowledge) of the system. "state-feedback policy"

- Toxicity: A measure of abusive, insulting, or harmful language in content, often computed by automated services. "compute toxicity via the Perspective API"

- Viewpoint extremity: The degree to which expressed opinions deviate from neutrality toward more polarized or extreme positions. "viewpoint extremity"
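To make the glossary's "Action–Outcome Memory" and "selective retention" entries more concrete, here is a minimal Python sketch of how the two ideas could fit together. It is an illustration only, not the paper's implementation: the class names, the string labels for contexts and actions, the `min_reward` threshold, and the "highest mean reward" selection rule are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """One logged intervention: the situation, the action tried, and its reward."""
    context: str   # hypothetical label for the observed situation
    action: str    # hypothetical label for the strategy that was applied
    reward: float  # scalar feedback observed after acting


@dataclass
class ActionOutcomeMemory:
    min_reward: float = 0.0  # assumed retention threshold, not from the paper
    records: list[Record] = field(default_factory=list)

    def log(self, context: str, action: str, reward: float) -> None:
        """Append one action-outcome record."""
        self.records.append(Record(context, action, reward))

    def retain(self) -> None:
        """Selective retention: keep only records whose reward clears the threshold."""
        self.records = [r for r in self.records if r.reward >= self.min_reward]

    def best_action(self, context: str, default: str) -> str:
        """Return the action with the highest mean reward for this context."""
        rewards: dict[str, list[float]] = {}
        for r in self.records:
            if r.context == context:
                rewards.setdefault(r.action, []).append(r.reward)
        if not rewards:
            return default  # nothing learned yet for this context
        return max(rewards, key=lambda a: sum(rewards[a]) / len(rewards[a]))


memory = ActionOutcomeMemory(min_reward=0.0)
memory.log("health_rumor", "empathetic_correction", 0.6)
memory.log("health_rumor", "blunt_rebuttal", -0.3)
memory.log("health_rumor", "empathetic_correction", 0.4)
memory.retain()  # drops the negatively rewarded record
print(memory.best_action("health_rumor", default="neutral_clarification"))
```

No gradients or model parameters are updated here; adaptation comes only from which records survive and which action they favor, which matches the glossary's description of closed-loop evolutionary learning at a very coarse level.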

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that can be implemented with current tooling (LLMs, social listening APIs, content scheduling systems), using the paper’s EvoCorps roles and closed-loop workflows.

- Social platforms trust-and-safety “counter‑amplification” playbook (Sector: software/social media)

- Use the Analyst→Strategist→Leader→Amplifier pipeline to detect early polarization spikes and proactively seed grounded, cross-cutting replies and clarifications while a thread is still developing (not just post-hoc takedowns). Integrate Perspective API-style toxicity scoring and stance/extremity graders to trigger interventions; log outcomes in an Action–Outcome Memory for strategy iteration.

- Assumptions/dependencies: Platform API access, human-in-the-loop governance, content policy constraints, reliable sentiment/extremity grading, safeguards for viewpoint neutrality and fairness.

- Newsroom social desk for rapid clarification with grounded diffusion (Sector: media)

- Leader retrieves facts from a curated Evidence Knowledge Base (integrating IBM Debater, Wikipedia, and local archives) and produces multi-draft persuasive posts; Amplifier coordinates diverse personas and partnerships to place clarifications early and widely.

- Assumptions: Editorial oversight, verified source pipelines, coordination with distribution partners/influencers; disclosure to avoid astroturfing.

- Public health infodemic response cells (Sector: healthcare/public health)

- Deploy role-coordinated agents to monitor outbreaks of misleading health narratives, craft empathetic, evidence-backed counters, and diffuse them via local community channels and micro-influencers before misinformation locks in.

- Assumptions: Access to trusted medical knowledge bases, jurisdictional compliance, community liaison partnerships; multilingual support.

- Election integrity rapid-response (Sector: public sector/civic)

- Analyst flags emergent polarizing frames; Strategist sets nonpartisan rhetorical style; Leader crafts bridging content (e.g., “how to verify ballots”); Amplifier places messages across community groups to dampen viewpoint extremity without suppressing speech.

- Assumptions: Bipartisan governance, transparency, audit trails; protection against perceived manipulation.

- Brand safety and crisis communications (Sector: marketing/enterprise)

- Integrate social listening with EvoCorps to counter toxic rumor cascades with fact-based, calm responses; use Action–Outcome Memory to learn which messages de-escalate fastest and inform templates/playbooks.

- Assumptions: Crisis comms approval workflows, legal review (especially in regulated industries), measurement via sentiment/toxicity trajectories.

- Community moderation copilot for forums/Discord/Reddit (Sector: community platforms/daily life)

- Provide a “bridging reply generator” to community managers based on Leader role, plus volunteer Amplifiers to broaden reach; Analyst dashboard surfaces threads with rising extremity/toxicity for timely intervention.

- Assumptions: Moderator permissions, clear community rules, opt-in transparency to maintain trust.

- Educational discussion facilitation (Sector: education)

- Use Analyst to flag extremity peaks in classroom LMS discussion boards; Leader generates prompts that encourage evidence use and reduce fallacies; Action–Outcome Memory tracks which prompts improve Argument Quality Scores over time.

- Assumptions: Teacher oversight, age-appropriate content controls, rubric alignment with curriculum.

- Open-source sandbox for trust-and-safety training (Sector: academia/industry training)

- Leverage the MOSAIC simulation to rehearse counter-amplification strategies, test role ablations, and fine-tune triggers and reward weights before deployment.

- Assumptions: Synthetic-to-real transfer limits, need to calibrate personas and adversary tactics to target platform demographics.

- Evidence Knowledge Base manager (Sector: software/tools)

- A productized pipeline to curate, score, and update persuasive arguments (following the scoring updates in the paper's Eq. 9), with de-duplication and relevance filtering. Supports newsroom/public health/NGO teams.

- Assumptions: Source provenance, continuous curation, policy for reinforcing/down-weighting arguments.

- Outcome logging and A/B learning (Sector: analytics)

- Integrate Action–Outcome Memory with social analytics to quantify intervention effects on sentiment/extremity; A/B test rhetorical styles and amplifier mixes; feed insights back to the Strategist.

- Assumptions: Access to thread-level telemetry, stable grading prompts, statistical safeguards to prevent overfitting or gaming.
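The role pipeline that recurs in the use cases above (Analyst, Strategist, Leader, Amplifier) can be sketched as plain functions. This is a toy outline under stated assumptions rather than the EvoCorps code: the `Comment` fields, the threshold values, the style names, and the placeholder `leader` function (which stands in for LLM generation grounded in retrieved evidence) are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Comment:
    text: str
    toxicity: float   # assumed in [0, 1], e.g. from a toxicity scoring service
    extremity: float  # assumed in [0, 1], from a hypothetical extremity grader


def analyst(comments: list[Comment]) -> dict[str, float]:
    """Summarize a thread with aggregate statistics (a simple mean-field-style state)."""
    return {
        "toxicity": mean(c.toxicity for c in comments),
        "extremity": mean(c.extremity for c in comments),
    }


def should_intervene(state: dict[str, float],
                     tox_threshold: float = 0.5,
                     ext_threshold: float = 0.6) -> bool:
    """Trigger rule; both thresholds are illustrative assumptions."""
    return state["toxicity"] >= tox_threshold or state["extremity"] >= ext_threshold


def strategist(state: dict[str, float]) -> str:
    """Pick a rhetorical style; this mapping is hypothetical."""
    return "empathetic" if state["toxicity"] >= state["extremity"] else "evidence_first"


def leader(style: str, topic: str) -> str:
    """Placeholder for grounded generation; returns a draft for human review."""
    return f"[{style}] Draft reply on '{topic}' (pending human review)"


def amplifier(draft: str, channels: list[str]) -> list[tuple[str, str]]:
    """Pair the approved draft with each disclosed distribution channel."""
    return [(channel, draft) for channel in channels]


def run_pipeline(comments: list[Comment], topic: str,
                 channels: list[str]) -> list[tuple[str, str]]:
    """Run Analyst -> Strategist -> Leader -> Amplifier; return planned placements."""
    if not comments:
        return []  # nothing to analyze
    state = analyst(comments)
    if not should_intervene(state):
        return []  # thread is calm enough; no action planned
    return amplifier(leader(strategist(state), topic), channels)


thread = [Comment("...", 0.9, 0.4), Comment("...", 0.7, 0.8)]
for channel, draft in run_pipeline(thread, "vaccine safety", ["forum_a", "forum_b"]):
    print(channel, "->", draft)
```

Keeping each role as a separate function mirrors the role ablations the sandbox use case mentions: any one stage can be swapped out or disabled without touching the others.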

Long-Term Applications

The following applications require further research, scaling, policy development, or deeper platform integration to realize their full potential.

- Platform-native counter‑amplification orchestrators (Sector: software/social media)

- Integrate EvoCorps-style agents with ranking systems to boost “bridging” and evidence-grounded content when homophily and adversarial amplification are detected; align with Stackelberg–Mean-Field Control objectives to optimize long-run discourse health.

- Dependencies: Deep recommender integration, fairness audits, transparency interfaces, robust causal estimation of effects.

- Cross‑platform, federated “evidence and memory” coalition (Sector: civil society/policy)

- Shared, privacy-preserving Evidence KB and Action–Outcome Memory across newsrooms, NGOs, and public agencies to coordinate responses to national/global narratives (e.g., health emergencies, disasters).

- Dependencies: Governance charters, interoperability standards, federated learning, consent and provenance frameworks.

- Regulatory frameworks and transparency standards (Sector: policy)

- Policies that require disclosure of coordinated interventions, audit logs for multi-agent deployments, safeguards against viewpoint bias, and rights to contest automated amplification/de-amplification.

- Dependencies: Multi-stakeholder consensus, legal harmonization across jurisdictions, independent auditors.

- Deliberation assistants embedded in social UIs (Sector: software/daily life)

- On-device assistants that gently nudge users toward evidence, highlight fallacies, and suggest cross-cutting exposure during composition and browsing; reward shaped by reductions in extremity and improved argument quality.

- Dependencies: UX studies to avoid paternalism, opt-in consent, privacy-first design; multi-language, cross-cultural adaptation.

- National infodemic management centers (Sector: healthcare/public sector)

- Permanent, evolutionary teams that monitor sentiment/extremity nationwide, coordinate strategic messaging and diffusion across agencies, and evaluate via closed-loop reward trajectories (Figure 1).

- Dependencies: Sustained funding, interagency data-sharing, ethical oversight.

- Education: curriculum and assessment for argumentation (Sector: education)

- Tools that teach rhetorical styles, evidence use, and fallacy detection; simulate discourse dynamics (MOSAIC) to let students practice depolarization strategies, with automated AQS/fallacy/evidence feedback.

- Dependencies: Pedagogical validation, alignment to standards, teacher training; safeguards against over-reliance on LLMs.

- Financial rumor stabilization for investor communities (Sector: finance)

- Analyst detects polarizing market narratives; Strategist designs neutral, high-signal clarifications; Leader/Amplifier disseminate across investor forums to reduce panic cascades.

- Dependencies: Compliance with market manipulation laws, high-quality financial KBs, risk controls; careful neutrality by design.

- Energy/climate discourse bridges (Sector: energy/public policy)

- Community-specific amplification of balanced, locally grounded narratives about projects/policies to reduce antagonistic camps and improve evidence consideration.

- Dependencies: Local stakeholder mapping, trusted messengers, multilingual outreach.

- Anti‑polarization SDKs and agent frameworks (Sector: software/developer tools)

- Developer kits that expose EvoCorps roles, mean-field state APIs, reward shaping, and memory/KB modules; plug-ins for major social/community platforms and CRM systems.

- Dependencies: Standardized metrics, secure sandboxing, abuse prevention.

- Privacy‑preserving telemetry and adaptive learning (Sector: infra/policy)

- Differential privacy and federated analytics for sentiment/extremity estimation; adaptive policy learning without centralizing sensitive user data.

- Dependencies: DP/federated learning maturity, platform cooperation, rigorous evaluations.

- End‑to‑end encrypted messaging interventions (Sector: software/policy)

- Opt-in, community-admin tools for WhatsApp/Signal to provide evidence-backed messages without reading content, e.g., via group-admin triggers or link-level metadata.

- Dependencies: Limited observability in E2E contexts, partnership with app providers, ethical design to respect privacy.

- Global, multilingual deployment (Sector: international development)

- Cross-cultural calibration of amplifiers, rhetorical styles, and KBs; regional governance to avoid cultural bias in interventions.

- Dependencies: Localization pipelines, local institution partnerships, evaluation across languages.

- Advanced research on SMFC/RL for governance (Sector: academia)

- Methods that estimate causal effects of interventions under adversarial dynamics; robust off-policy evaluation; better mean-field state estimation beyond sentiment/extremity (e.g., network spillovers).

- Dependencies: Shared benchmarks/datasets, reproducible simulators, open evaluation rubrics.
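The privacy-preserving telemetry item above mentions differential privacy for sentiment/extremity estimation. As one hedged illustration of what that could look like, the sketch below applies the standard Laplace mechanism to the mean of bounded per-user scores. The function name, the score range, and the choice of the Laplace mechanism are assumptions for this example; the source does not specify a mechanism. The sketch also assumes the number of users is public and that neighboring datasets differ by replacing one user's score.

```python
import random


def dp_mean(values, lower, upper, epsilon, rng=None):
    """Epsilon-differentially-private mean of bounded values (Laplace mechanism).

    Assumes len(values) is public and neighbors differ in one replaced record.
    """
    if not values:
        raise ValueError("values must be non-empty")
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if upper <= lower:
        raise ValueError("upper must be greater than lower")
    rng = rng or random.Random()

    # Clip so that each record's influence on the mean is bounded.
    clipped = [min(max(v, lower), upper) for v in values]
    n = len(clipped)

    # Replacing one record changes the mean by at most (upper - lower) / n.
    sensitivity = (upper - lower) / n
    scale = sensitivity / epsilon

    # The difference of two i.i.d. exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return sum(clipped) / n + noise


# Hypothetical per-user sentiment scores in [-1, 1].
scores = [0.2, -0.4, 0.9, 0.1]
print(dp_mean(scores, lower=-1.0, upper=1.0, epsilon=1.0, rng=random.Random(0)))
```

Smaller `epsilon` values add more noise and give stronger privacy, so any downstream trigger thresholds would need to tolerate that noise, especially for small groups where the sensitivity `(upper - lower) / n` is large.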

Notes on assumptions and feasibility across applications

- Generalization: Results are demonstrated in simulation (MOSAIC); real-world efficacy depends on transferability, platform cooperation, and adversary adaptation.

- Human oversight: Multi-agent interventions require editorial/legal review to avoid manipulation, bias, or chilling effects on speech.

- Measurement reliability: Sentiment/extremity/argument graders (LLM-based or API) must be validated for domain/language to prevent systematic errors.

- Ethics and transparency: Clear disclosure and logs are essential to maintain trust and prevent astroturfing; opt-in policies recommended for user-facing nudges.

- Resource needs: Continuous curation of an Evidence Knowledge Base, monitoring pipelines, and compute for generation/retrieval are necessary for sustained operation.
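The measurement-reliability note above calls for validating automated graders before relying on them. One common way to check this, shown here as an illustrative sketch rather than anything prescribed by the paper, is to compare grader labels against human labels on the same items using Cohen's kappa, which corrects raw agreement for agreement expected by chance. The function name and label values are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa between two label sequences over the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and equal in length")
    n = len(labels_a)

    # Observed agreement: fraction of items where the two raters match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label; kappa is undefined,
        # so this sketch returns 1.0 by convention (an assumption).
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical labels: human annotator vs. automated grader.
human = ["extreme", "neutral", "neutral", "extreme", "neutral"]
grader = ["extreme", "neutral", "extreme", "extreme", "neutral"]
print(cohens_kappa(human, grader))
```

A kappa near 1 indicates strong agreement beyond chance, while a value near 0 means the grader agrees with humans no better than chance would predict; the check would need repeating per domain and language.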
