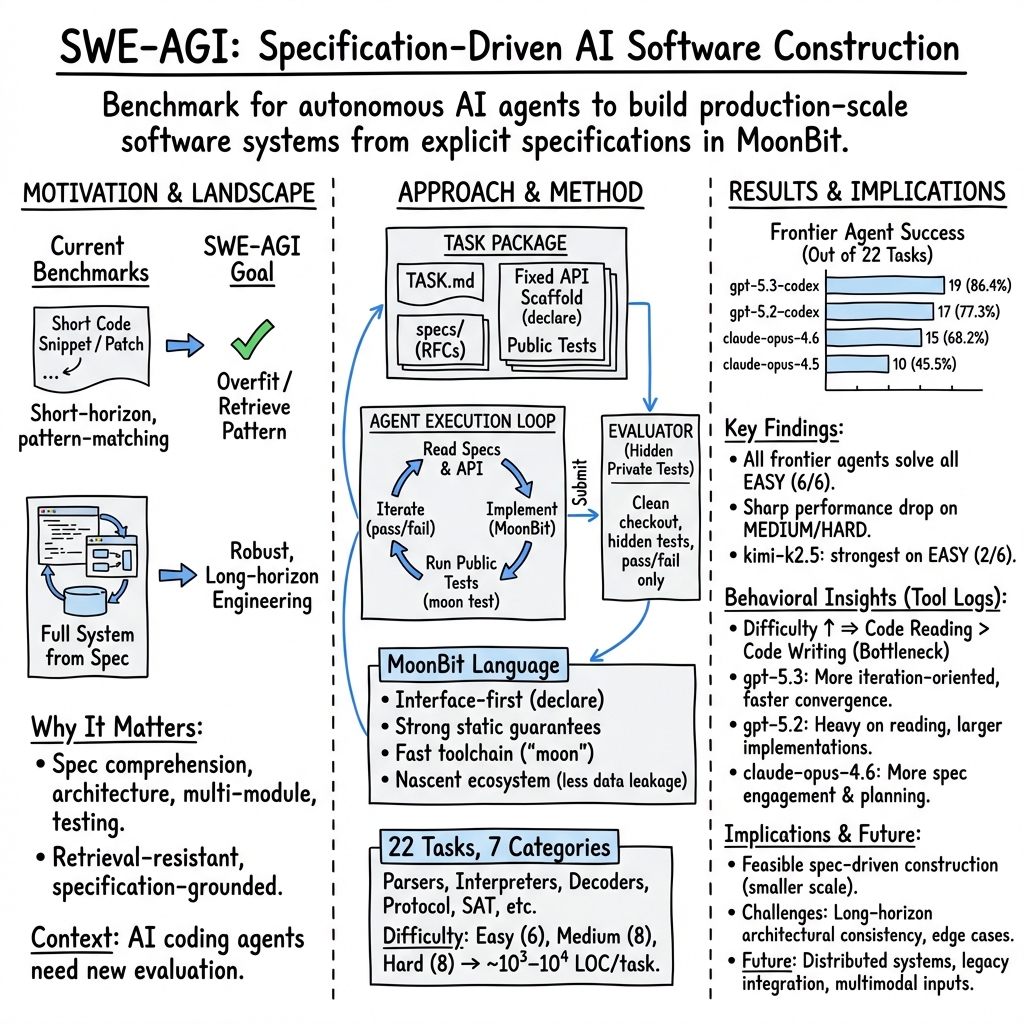

SWE-AGI: Benchmarking Specification-Driven Software Construction with MoonBit in the Era of Autonomous Agents

Abstract: Although LLMs have demonstrated impressive coding capabilities, their ability to autonomously build production-scale software from explicit specifications remains an open question. We introduce SWE-AGI, an open-source benchmark for evaluating end-to-end, specification-driven construction of software systems written in MoonBit. SWE-AGI tasks require LLM-based agents to implement parsers, interpreters, binary decoders, and SAT solvers strictly from authoritative standards and RFCs under a fixed API scaffold. Each task involves implementing 1,000-10,000 lines of core logic, corresponding to weeks or months of engineering effort for an experienced human developer. By leveraging the nascent MoonBit ecosystem, SWE-AGI minimizes data leakage, forcing agents to rely on long-horizon architectural reasoning rather than code retrieval. Across frontier models, gpt-5.3-codex achieves the best overall performance (solving 19/22 tasks, 86.4%), outperforming claude-opus-4.6 (15/22, 68.2%), and kimi-2.5 exhibits the strongest performance among open-source models. Performance degrades sharply with increasing task difficulty, particularly on hard, specification-intensive systems. Behavioral analysis further reveals that as codebases scale, code reading, rather than writing, becomes the dominant bottleneck in AI-assisted development. Overall, while specification-driven autonomous software engineering is increasingly viable, substantial challenges remain before it can reliably support production-scale development.

Practical Applications

Immediate Applications

Below is a concise set of actionable, real-world uses that can be deployed today, grounded in the paper’s benchmark design, findings, and tooling.

- Benchmarking and procurement of AI coding tools

- Sector: Software; enterprise IT; DevOps

- What to do: Use SWE-AGI to run head-to-head evaluations of internal or vendor-provided LLM-based coding agents. Compare task success rates, time-to-solution, and cost profiles on representative tasks to select and right-size tools.

- Tools/Workflow: `swe-agi-submit`, `moon test`, fixed API scaffolds, hidden private test suites; reporting of behavior stats (Read/Write/Debug shares)

- Assumptions/Dependencies: Adoption of MoonBit for evaluation; availability of compute budgets; alignment between SWE-AGI tasks and organizational needs
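A head-to-head comparison can start from nothing more than solved/total counts per agent. The sketch below is a minimal illustration, not part of the SWE-AGI tooling: the `AgentResult` class and `rank` helper are hypothetical names, and the only data used are the two results quoted in the abstract.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentResult:
    """Solved/total counts for one agent (hypothetical record type)."""
    name: str
    solved: int
    total: int

    @property
    def success_rate(self) -> float:
        return self.solved / self.total


def rank(results):
    """Return results sorted by success rate, best first."""
    return sorted(results, key=lambda r: r.success_rate, reverse=True)


# Figures quoted in the paper's abstract.
results = [
    AgentResult("claude-opus-4.6", solved=15, total=22),
    AgentResult("gpt-5.3-codex", solved=19, total=22),
]

for r in rank(results):
    print(f"{r.name}: {r.solved}/{r.total} = {r.success_rate:.1%}")
# prints:
# gpt-5.3-codex: 19/22 = 86.4%
# claude-opus-4.6: 15/22 = 68.2%
```

A real procurement scorecard would add time-to-solution and cost columns next to the success rate.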

- Spec-first engineering within CI/CD

- Sector: Software; platform engineering

- What to do: Adopt declaration-first scaffolds (MoonBit’s `declare`) or language analogs (e.g., TypeScript interfaces, Rust traits) to freeze public APIs before implementation; gate merges with hidden private tests to prevent overfitting to visible tests.

- Tools/Workflow: MoonBit toolchain (`moon`), fixed interface declarations, public+private test split, CI gating on private suite

- Assumptions/Dependencies: Team buy-in for spec-first workflows; robust private test management; compatibility in non-MoonBit stacks via language-equivalent scaffolding
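For a non-MoonBit stack, the idea of freezing a public API can be approximated with a declared interface plus a CI check that compares signatures. The sketch below is a runtime analog in Python, not MoonBit's `declare` mechanism (which the source describes only by name). `UriParser`, `GoodParser`, `DriftedParser`, and `api_violations` are all hypothetical names introduced for illustration.

```python
import inspect
from typing import Protocol


class UriParser(Protocol):
    """Frozen public API (hypothetical); implementations must match it exactly."""

    def parse(self, text: str) -> dict: ...
    def normalize(self, text: str) -> str: ...


def api_violations(declared: type, impl: type) -> list[str]:
    """List public methods of `declared` that `impl` lacks or declares differently."""
    problems = []
    for name, member in vars(declared).items():
        if name.startswith("_") or not inspect.isfunction(member):
            continue  # skip private names and Protocol internals
        candidate = getattr(impl, name, None)
        if candidate is None:
            problems.append(f"missing: {name}")
        elif inspect.signature(candidate) != inspect.signature(member):
            problems.append(f"signature mismatch: {name}")
    return problems


class GoodParser:
    def parse(self, text: str) -> dict:
        return {"raw": text}

    def normalize(self, text: str) -> str:
        return text.strip().lower()


class DriftedParser:
    # Adds a parameter and drops `normalize`, so it drifts from the frozen API.
    def parse(self, text: str, strict: bool = False) -> dict:
        return {"raw": text}


print(api_violations(UriParser, GoodParser))     # []
print(api_violations(UriParser, DriftedParser))
```

A CI job could fail the merge whenever `api_violations` returns a non-empty list, then run the private test suite only on implementations that pass this check.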

- Rapid agent-assisted implementation of easy-tier components

- Sector: Data engineering; web backends

- What to do: Use frontier models to implement and validate specification-grounded parsers/encoders for formats like CSV, INI, TOML, XML that showed 100% pass rates on the easy tier.

- Tools/Workflow: SWE-AGI starter repos and tests; agent front-ends (Codex CLI, Claude Code, etc.); human review and hardening

- Assumptions/Dependencies: Model choice matters; ensure human-in-the-loop review and security audits before production

- Standards-compliant protocol parsing and validation (URI/URL/HPACK)

- Sector: Networking; web infrastructure; content delivery

- What to do: Integrate SWE-AGI-like spec-driven tests to enforce compliance in URL parsing, URI normalization, and HPACK handling within gateways, load balancers, and SDKs.

- Tools/Workflow: Hidden private tests derived from normative RFCs; continuous conformance checks in CI

- Assumptions/Dependencies: High-coverage test suites reflecting operational edge cases; careful handling of malformed inputs

- Agent behavior telemetry for engineering management

- Sector: Software engineering operations; developer tooling

- What to do: Instrument agent workflows using the paper’s behavior taxonomy (Spec/Plan/Read/Write/Debug/Hyg/Ext) to identify bottlenecks—especially code reading—and tune prompts, tool policies, and repository structure.

- Tools/Workflow: Front-end logging (shell actions, file reads/writes, builds/tests), action categorization, periodic reviews

- Assumptions/Dependencies: Access to detailed logs; consistent labeling; privacy and compliance in telemetry
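Once actions are logged, computing per-category shares is a small aggregation step. The sketch below uses the taxonomy labels from the text (Spec/Plan/Read/Write/Debug/Hyg/Ext). The action names and their mapping to categories are assumptions made for illustration, not the paper's labeling rules.

```python
from collections import Counter

CATEGORIES = ("Spec", "Plan", "Read", "Write", "Debug", "Hyg", "Ext")

# Hypothetical mapping from logged tool actions to taxonomy categories.
ACTION_TO_CATEGORY = {
    "open_spec": "Spec",
    "update_plan": "Plan",
    "read_file": "Read",
    "grep": "Read",
    "write_file": "Write",
    "run_tests": "Debug",
    "format_code": "Hyg",
    "web_search": "Ext",
}


def behavior_shares(actions):
    """Return the fraction of logged actions per category.

    Raises KeyError on an action that has no category, so that
    unlabeled actions are noticed rather than silently dropped.
    """
    counts = Counter(ACTION_TO_CATEGORY[a] for a in actions)
    total = sum(counts.values())
    if total == 0:
        return {c: 0.0 for c in CATEGORIES}
    return {c: counts[c] / total for c in CATEGORIES}


# Hypothetical log of one agent session.
log = ["open_spec", "read_file", "grep", "read_file",
       "write_file", "run_tests", "read_file", "write_file"]

shares = behavior_shares(log)
dominant = max(shares, key=shares.get)
print(dominant, shares[dominant])  # Read 0.5
```

Tracking the dominant category across runs gives a simple signal for whether reading is crowding out writing as a repository grows.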

- Property-based and fuzz-style test generation augmenting QA

- Sector: QA; security engineering

- What to do: Replicate the hybrid test construction pipeline (normative cases + property-based generators + LLM-generated candidates + fuzz mutations) to raise coverage and harden spec-critical subsystems.

- Tools/Workflow: LLM-assisted test generation; fuzz tools; manual triage to align with standards; hidden-private test management

- Assumptions/Dependencies: Expert triage for expected behaviors; adequate compute for fuzzing; maintenance of test corpora
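Three of the four pipeline stages (normative cases, property-based generators, fuzz mutations) can be sketched against a small target. The example below uses Python's standard `urllib.parse.quote` and `unquote` as a stand-in implementation under test. The alphabet, the mutation strategy, and the `run_suite` helper are assumptions, and the LLM-generated-candidate stage is omitted.

```python
import random
from urllib.parse import quote, unquote

# Characters chosen to exercise reserved, unreserved and non-ASCII input.
ALPHABET = "abcXYZ019 /?#%&=+~é"

# Hand-written cases standing in for spec-derived normative tests.
NORMATIVE_CASES = [("%41", "A"), ("a%2Fb", "a/b"), ("%20", " ")]


def random_text(rng, max_len=12):
    """Property-based generator: a random string over ALPHABET."""
    return "".join(rng.choice(ALPHABET) for _ in range(rng.randint(0, max_len)))


def mutate(rng, text):
    """Fuzz step: insert, delete or replace one character."""
    pos = rng.randint(0, len(text))
    op = rng.choice(("insert", "delete", "replace"))
    ch = rng.choice("%0aG\x00")
    if op == "insert" or pos == len(text):
        return text[:pos] + ch + text[pos:]
    if op == "delete":
        return text[:pos] + text[pos + 1:]
    return text[:pos] + ch + text[pos + 1:]


def run_suite(seed=0, rounds=200):
    """Run all three stages and return a list of (kind, detail) failures."""
    rng = random.Random(seed)
    failures = []
    for encoded, expected in NORMATIVE_CASES:
        if unquote(encoded) != expected:
            failures.append(("normative", encoded))
    for _ in range(rounds):
        text = random_text(rng)
        encoded = quote(text, safe="")
        if unquote(encoded) != text:  # round-trip property
            failures.append(("round-trip", text))
        try:
            unquote(mutate(rng, encoded))  # malformed input must not crash
        except Exception as exc:
            failures.append(("fuzz-crash", repr(exc)))
    return failures


print(run_suite())  # []
```

In a real pipeline the fuzz stage would also record the mutated inputs so that an expert can decide the expected behavior and promote interesting cases into the private suite.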

- Academic curriculum and assignments

- Sector: Education; CS programs; bootcamps

- What to do: Use public subsets of SWE-AGI tasks to teach spec reading, interface-first design, and end-to-end testing. Grade with a hidden-private test split to assess generalization rather than overfitting.

- Tools/Workflow: Starter repos with `TASK.md` and `specs/`; controlled public tests; instructor-managed private suites

- Assumptions/Dependencies: Student access to MoonBit and compute; policies against training-set contamination

- Open-source contribution workflows with fixed scaffolds

- Sector: Open-source software; community-driven projects

- What to do: Define contribution tasks via fixed API scaffolds and hidden tests to ensure spec compliance and consistent interfaces across modules.

- Tools/Workflow: Declare-first public APIs; contributor starter repos; CI verification with private tests

- Assumptions/Dependencies: Maintainer capacity to curate specs and tests; contributor acceptance of stricter interfaces

- Model selection and capacity planning using cost/time metrics

- Sector: Enterprise software; finance/operations for tooling

- What to do: Track wall-clock time, token usage, and monetary cost per task to optimize model-choice and agent configurations for target workloads.

- Tools/Workflow: Agent run logs; periodic benchmarking; procurement scorecards

- Assumptions/Dependencies: Stable vendor pricing; representative task mix; reliable logging
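A per-model scorecard can be derived from run logs with a few aggregates. The sketch below is illustrative only: the `Run` record, the flat per-token price, the model names, and every number in `runs` are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One agent run on one task (hypothetical log record)."""
    model: str
    task: str
    passed: bool
    wall_seconds: float
    tokens: int
    usd_per_million_tokens: float  # assumed flat price, for illustration

    @property
    def cost_usd(self) -> float:
        return self.tokens / 1_000_000 * self.usd_per_million_tokens


def scorecard(runs):
    """Aggregate pass rate, mean wall-clock time and cost per model."""
    by_model = {}
    for run in runs:
        by_model.setdefault(run.model, []).append(run)
    card = {}
    for model, rs in by_model.items():
        solved = sum(r.passed for r in rs)
        total_cost = sum(r.cost_usd for r in rs)
        card[model] = {
            "pass_rate": solved / len(rs),
            "mean_wall_seconds": sum(r.wall_seconds for r in rs) / len(rs),
            "total_cost_usd": total_cost,
            # None when nothing was solved, to avoid dividing by zero.
            "cost_per_solved_usd": total_cost / solved if solved else None,
        }
    return card


# Made-up runs for two placeholder models.
runs = [
    Run("model-a", "csv", True, 600.0, 2_000_000, 5.0),
    Run("model-a", "toml", False, 1800.0, 4_000_000, 5.0),
    Run("model-b", "csv", True, 900.0, 1_000_000, 2.0),
    Run("model-b", "toml", True, 1500.0, 3_000_000, 2.0),
]

for model, row in scorecard(runs).items():
    print(model, row)
```

Cost per solved task is often more informative than total cost, because a cheap model that fails most tasks can still be the more expensive choice per delivered result.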

- Individual developer skill-building

- Sector: Daily life for practitioners; professional development

- What to do: Practice spec-first development with SWE-AGI’s public tasks to improve reading formal specs, designing modular architectures, and building robust test suites.

- Tools/Workflow: MoonBit starter repos; local `moon test`; iterative self-assessment via public tests

- Assumptions/Dependencies: Time investment; willingness to work in a nascent language (MoonBit)

Long-Term Applications

These applications require further research, scaling, and/or ecosystem development before wide deployment.

- Autonomous software factories (spec-to-production)

- Sector: Software; platform teams; SaaS

- What it could deliver: End-to-end pipelines that translate authoritative specs into production-grade, standards-compliant systems with minimal human intervention, including parsers, protocol stacks, language front-ends, and decoders.

- Tools/Products: Agentic IDEs; long-horizon planning modules; conformance dashboards; auto-refactoring and regression guards

- Assumptions/Dependencies: Higher reliability on hard, spec-intensive tasks; robust long-context code comprehension; stronger memory and architectural reasoning; scalable test coverage

- Conformance and certification labs for standards bodies

- Sector: Policy; standards organizations; public-sector procurement

- What it could deliver: SWE-AGI–style, contamination-resistant conformance suites used to certify implementations across languages and vendors, with reproducible hidden-private tests and audit trails.

- Tools/Products: Certification harnesses; “conformance-as-a-service” platforms; public registries of certified components

- Assumptions/Dependencies: Broad community buy-in; governance for test neutrality; legal handling of normative references; multi-language ports of spec-first scaffolds

- Safety-critical, regulated software via spec-driven agents

- Sector: Healthcare (HL7/DICOM), automotive (ISO 26262), aerospace (DO-178C), finance (PCI/ISO 20022)

- What it could deliver: Agent-assisted construction and maintenance of standards-compliant modules with audit logs, traceability, and formal methods overlays.

- Tools/Products: Verified code-generation pipelines; formal specification integration; runtime monitoring for compliance

- Assumptions/Dependencies: Formal verification and proof tooling; risk management; liability and regulatory acceptance; exhaustive test coverage

- Cross-language spec-first SDKs and scaffolding

- Sector: Software; developer tooling; language ecosystems

- What it could deliver: Port MoonBit’s `declare` model to Rust/Go/Java/C#, enabling compile-time enforcement of public APIs in spec-driven projects and standardized evaluation interfaces.

- Tools/Products: Spec-first SDKs; language plugins; static analyzers enforcing interface fidelity

- Assumptions/Dependencies: Language compiler support or plug-in mechanisms; community adoption; interoperability with existing build systems

- Architecture-aware “code reader” models and IDE agents

- Sector: Developer tools; IDEs

- What it could deliver: Agents optimized for code comprehension at scale (module graphs, invariants, interfaces), alleviating the observed reading bottleneck with semantic maps, queryable architecture views, and long-term memory.

- Tools/Products: Semantic code browsers; graph-based context retrieval; comprehension metrics integrated into IDEs

- Assumptions/Dependencies: New model architectures; datasets and benchmarks focused on reading/comprehension; efficient long-context handling

- Performance- and resource-constrained agent-built systems (WASM, HPACK, ZIP)

- Sector: Robotics; embedded; edge computing; telecom

- What it could deliver: Agent-generated decoders/interpreters optimized for latency, memory, and throughput, extending SWE-AGI’s correctness focus with performance scoring.

- Tools/Products: Performance-aware test suites; SLO-based agent objectives; hardware-in-the-loop evaluation

- Assumptions/Dependencies: Expanded benchmarks with runtime/memory metrics; optimization-aware agents; hardware integration

- Continuous compliance pipelines in production

- Sector: Finance; telecom; web platforms

- What it could deliver: Ongoing monitoring that replays SWE-AGI–style hidden tests and fuzzed variants against deployed microservices to detect drift from normative behavior.

- Tools/Products: Compliance dashboards; regression sentinels; escalation workflows

- Assumptions/Dependencies: Safe test replay at scale; access to operational traces; strong observability

- Procurement and governance standards for AI coding agents

- Sector: Policy; enterprise governance; risk and compliance

- What it could deliver: Requirements that AI coding tools demonstrate spec-grounded performance on agreed benchmarks before deployment, with standardized reporting of cost, time, and coverage.

- Tools/Products: Benchmark-based RFP criteria; audit templates; disclosure guidelines

- Assumptions/Dependencies: Industry consensus on benchmark suites; responsible-use frameworks; legal clarity on accountability

- Scalable education platforms for spec-driven engineering

- Sector: Education; MOOCs; workforce upskilling

- What it could deliver: Large-scale, auto-graded courses that teach spec reading, interface-first design, and long-horizon debugging, with adaptive agents and robust anti-cheating private tests.

- Tools/Products: Courseware built on SWE-AGI-like tasks; grading sandboxes; analytics on student agent behaviors

- Assumptions/Dependencies: Compute and cost controls; proctoring and fairness; multi-language support

- Contamination-resistant evaluation frameworks in other domains

- Sector: Robotics; energy; cybersecurity

- What it could deliver: SWE-AGI’s design replicated in ecosystems with low data leakage to evaluate true reasoning (e.g., emerging robotics control languages, energy grid protocols).

- Tools/Products: Domain-specific starter repos and spec packs; hidden-private test harnesses; end-to-end agent loops

- Assumptions/Dependencies: Availability of nascent ecosystems; curated, authoritative specs; tooling parity with MoonBit

- Advanced test generation and triage pipelines

- Sector: QA; security; reliability engineering

- What it could deliver: Integrated property-based + LLM + fuzz test generators with automated triage to produce high-coverage private suites for complex state machines and error recovery logic.

- Tools/Products: Test generation services; triage assistants; coverage reporters

- Assumptions/Dependencies: Human oversight for expected behaviors; scalable triage workflows; minimizing false positives/negatives

Notes on general feasibility:

- The strongest immediate gains are in evaluation, QA, and spec-first workflows; the paper’s results show frontier agents reliably solve easy-tier tasks but degrade on hard, specification-intensive systems.

- Long-term applications hinge on improved code comprehension, architectural reasoning, and performance-aware evaluation—echoing the paper’s observed bottleneck that code reading dominates as codebases scale.
