- The paper introduces a modular toolkit that converts minimal 2D user input into full 3D segmentation of Gaussian splats with hybrid AI-human correction.

- It leverages AI mask propagation, frustum and depth projections, and differentiable rendering to achieve high mask IoU and pixel accuracy in complex scenes.

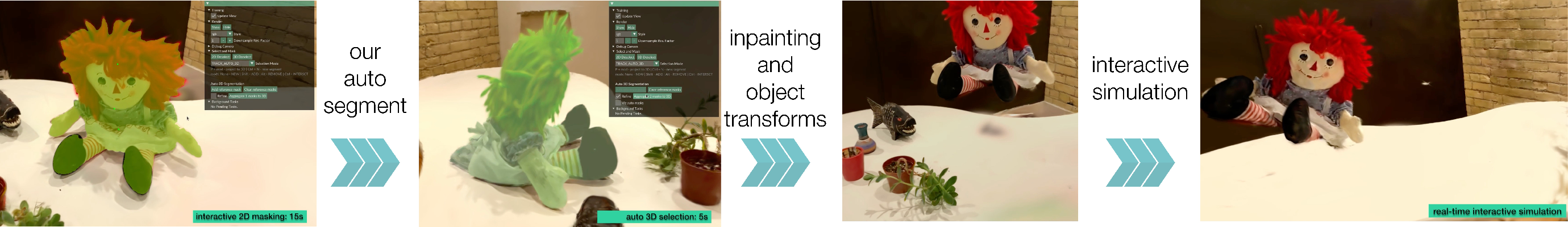

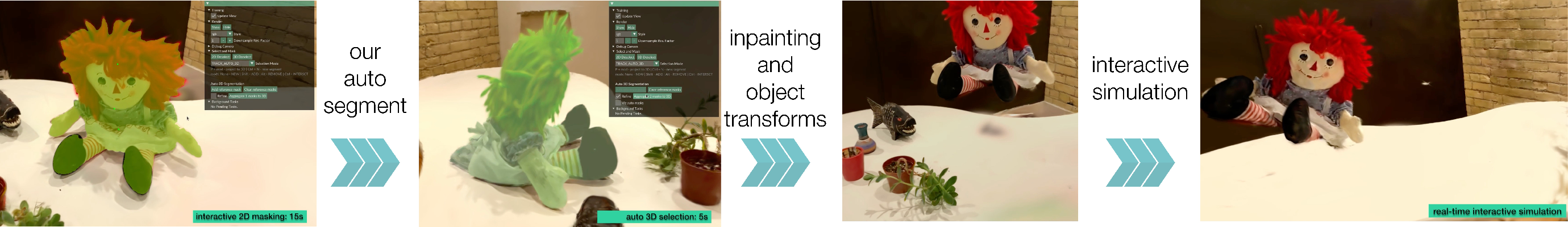

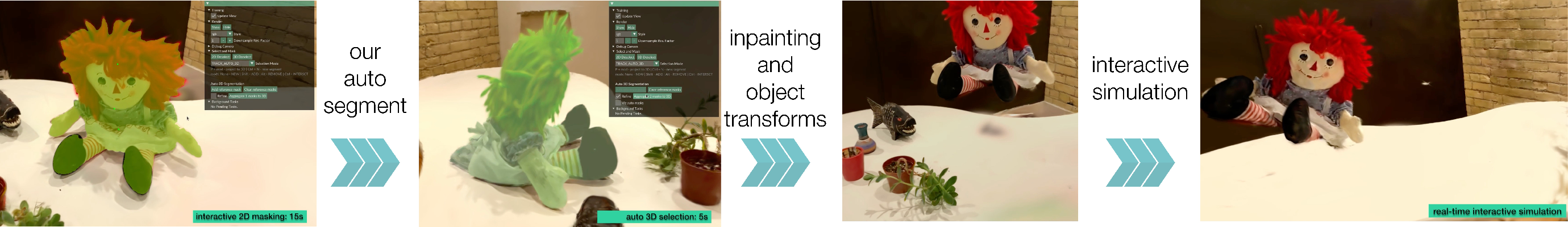

- Results highlight rapid segmentation, flexible editing, and applications in object-centric simulation, orientation correction, and localized inpainting.

Overview of 3DGS Editing Challenges and Motivation

The adoption of 3D Gaussian Splatting (3DGS) as a core representation for multi-view 3D captures introduces explicit spatial primitives that are well suited to high-fidelity scene reconstruction. These primitives facilitate direct manipulation, local editing, and dynamic simulation, capabilities that are less tractable with NeRF-like volumetric approaches. However, robust and flexible object extraction from unstructured, in-the-wild 3DGS scenes remains unsolved, which significantly impedes downstream applications such as user-driven editing, object-centric simulation, and interactive 3D content creation. Existing segmentation pipelines either rely on time-intensive, scene-specific feature training that precludes post hoc correction, or are limited by rigid click-based selection interfaces in which errors propagate across views.

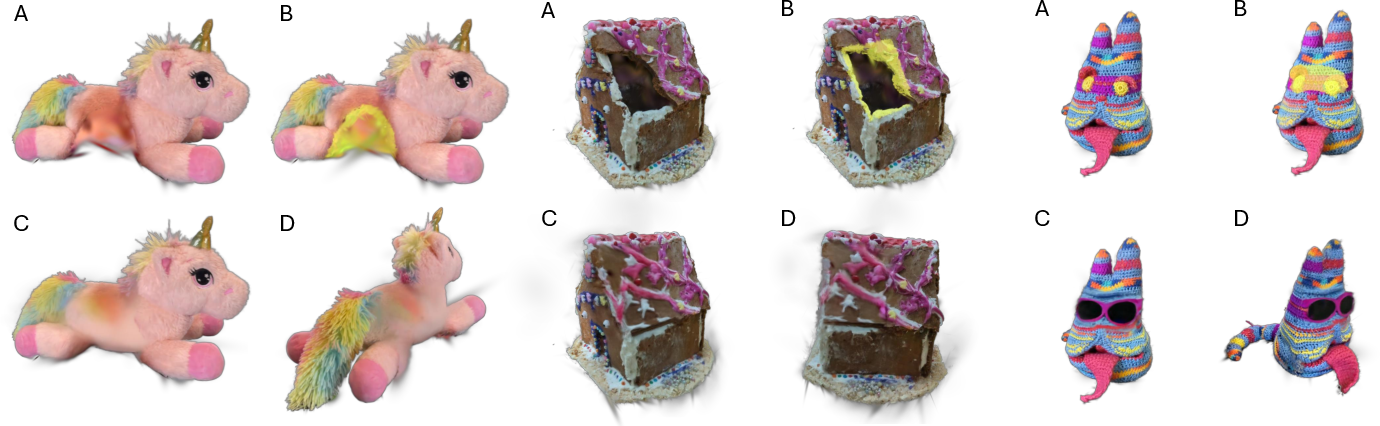

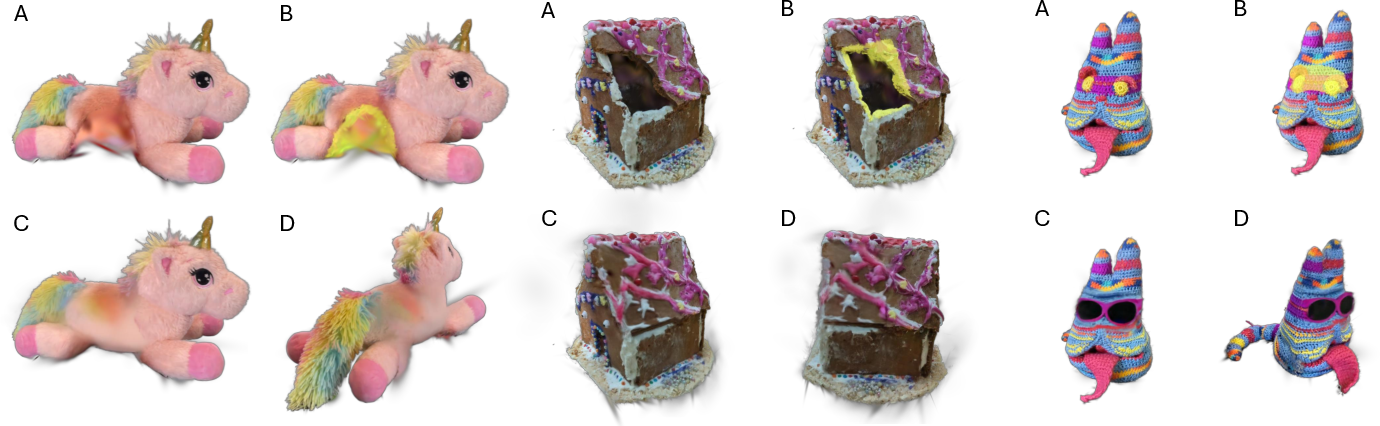

Figure 1: Comparison of controlled vs. realistic capture environments, highlighting segmentation complexity in real-world scenarios.

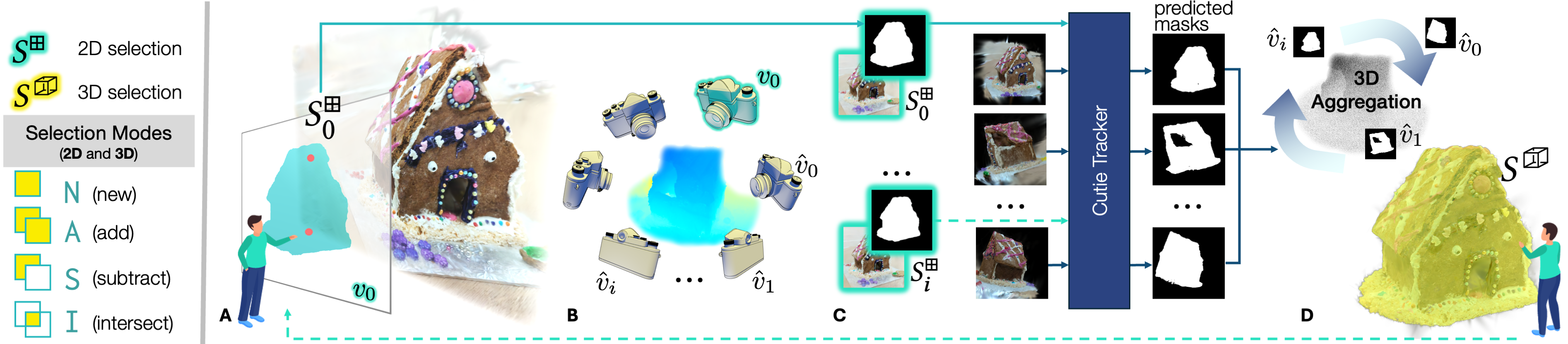

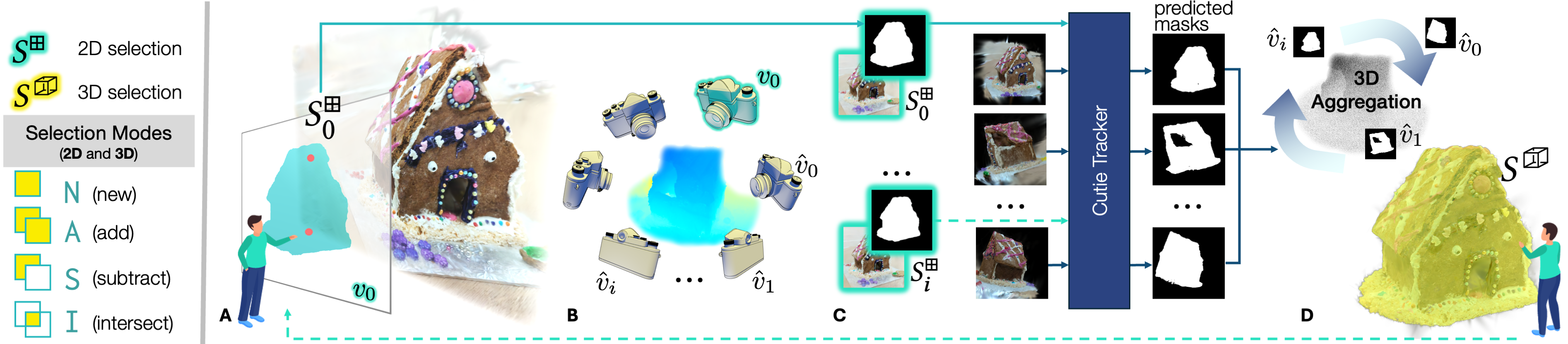

ArtisanGS introduces a modular, interactive suite for selection and segmentation of 3DGS objects, unifying AI-powered automatic mask propagation, user-driven correction, and manual selection modalities. The central innovation is the conversion of minimal user 2D input (single mask or click) into a full 3DGS segment, with seamless transitions between automatic and manual procedures, supporting virtually any binary segmentation target within an unstructured scene.

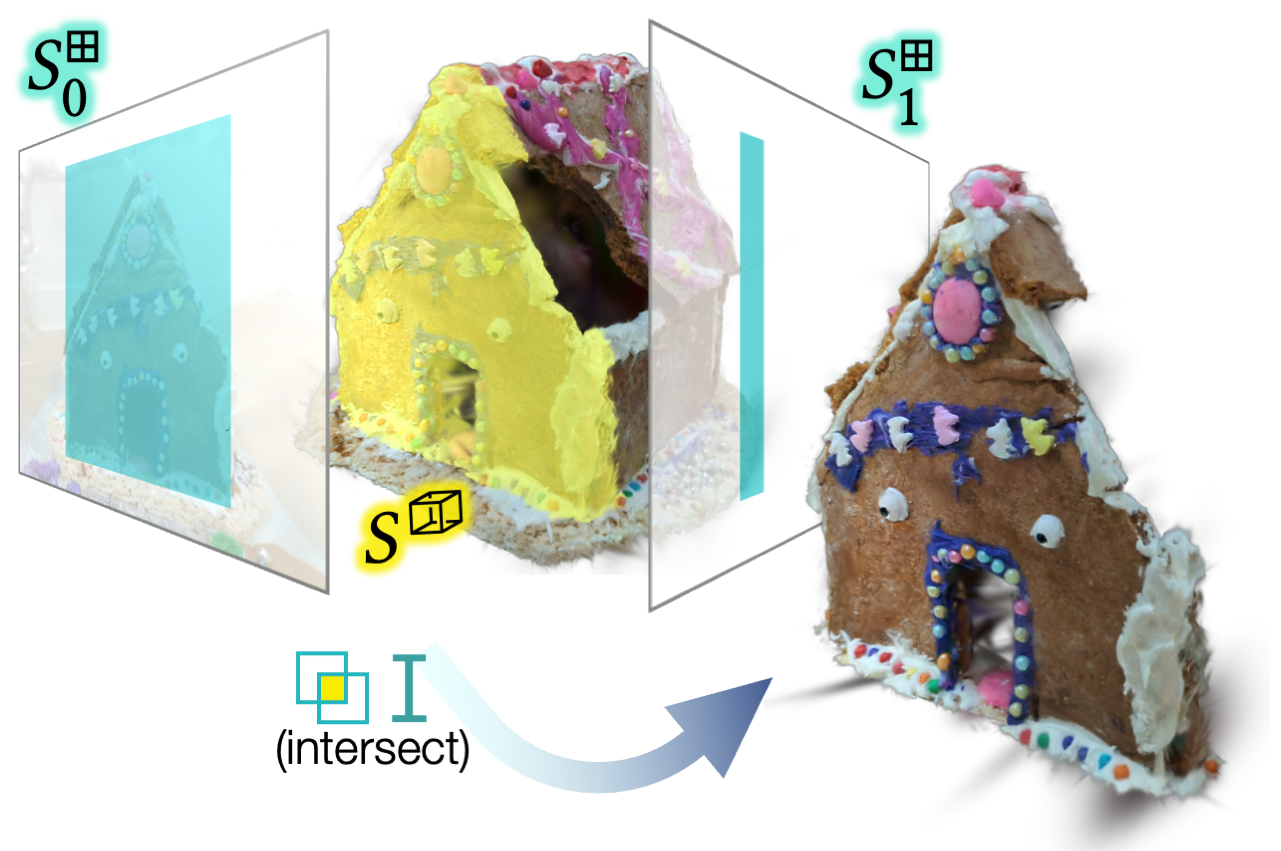

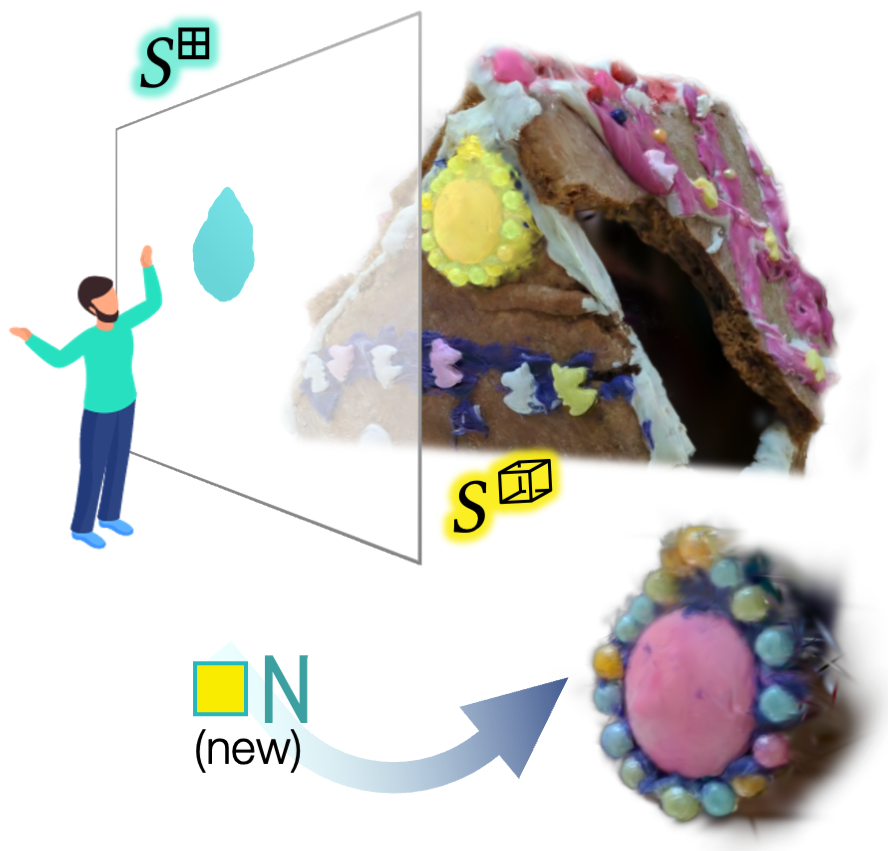

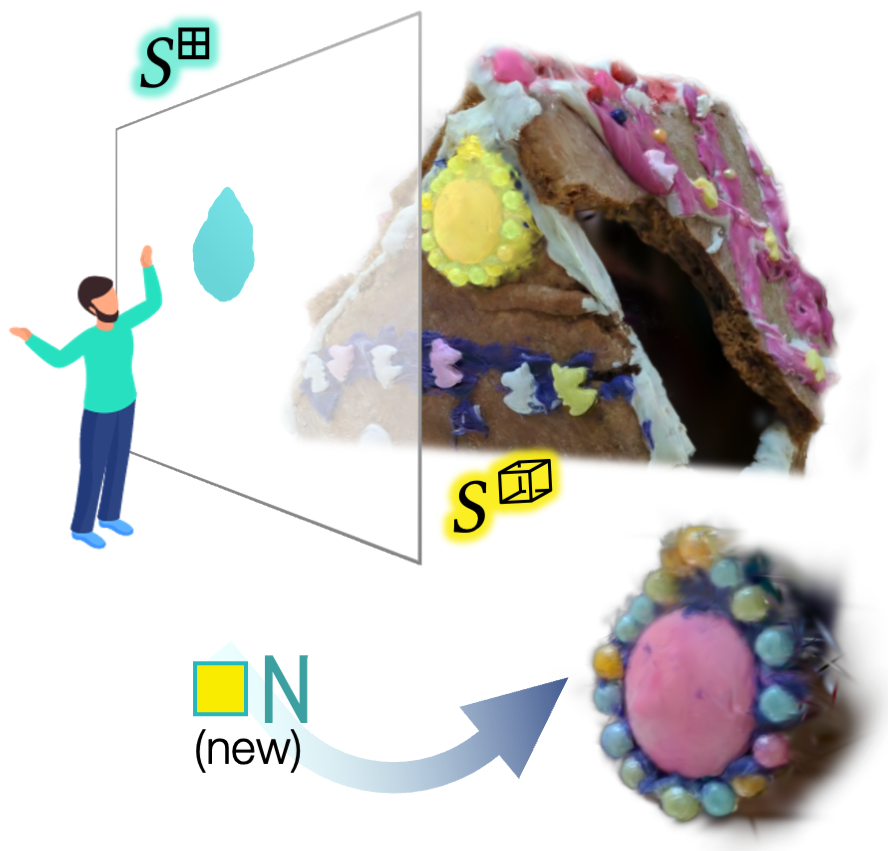

Selection Modes

Selection is organized into familiar modes: replace, add, subtract, and intersect, for both 2D and 3D segments. Color coding and UI affordances allow real-time toggling between modes.
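The four modes map naturally onto boolean set operations. The following is a minimal sketch, assuming a selection is stored as a boolean array over pixels (2D) or Gaussians (3D); the function name `combine_selection` and the array layout are illustrative assumptions, not the toolkit's actual API.

```python
import numpy as np

def combine_selection(current: np.ndarray, new: np.ndarray, mode: str) -> np.ndarray:
    """Combine two boolean selections of equal shape under a selection mode.

    Hypothetical helper: works the same for per-pixel and per-Gaussian masks.
    """
    if mode == "replace":
        return new.copy()          # discard the current selection
    if mode == "add":
        return current | new       # union
    if mode == "subtract":
        return current & ~new      # remove the new region from the current one
    if mode == "intersect":
        return current & new       # keep only the overlap
    raise ValueError(f"unknown selection mode: {mode}")

# Toy selections over four Gaussians.
current = np.array([True, True, False, False])
new = np.array([True, False, True, False])

added = combine_selection(current, new, "add")
subtracted = combine_selection(current, new, "subtract")
intersected = combine_selection(current, new, "intersect")
replaced = combine_selection(current, new, "replace")
```

Because every mode returns a new array, an interface built this way could keep earlier selections around for undo.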

Manual Projection and Surface Selection

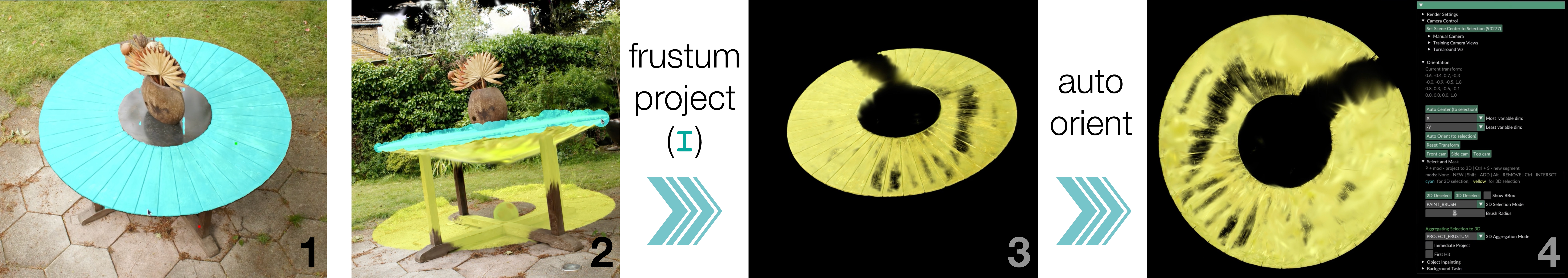

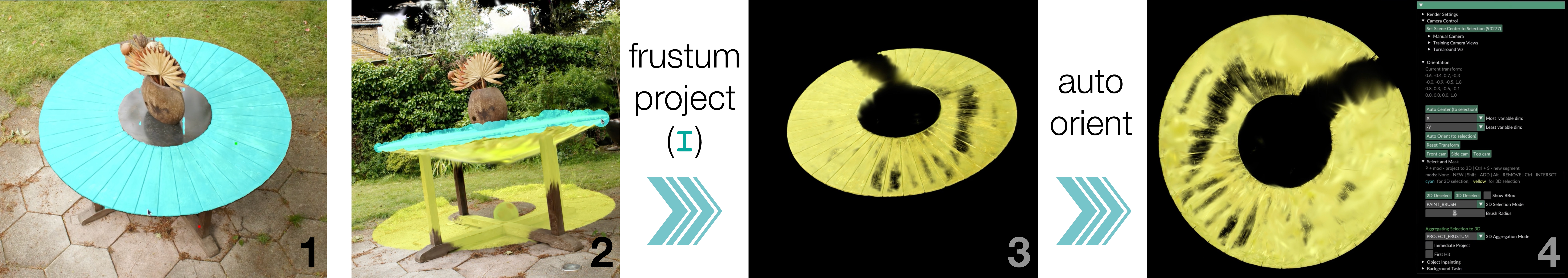

ArtisanGS supports frustum and depth-based manual projection. Frustum projection sweeps all Gaussians whose mean falls inside the user-defined 2D mask, permitting rapid broad selections. Depth projection constrains to surface-aligned Gaussians within a mask to facilitate layer and detail extraction.

Figure 2: Manual projection strategies enable fine-grained, user-specified segmentation across multiple modes.
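The two projection strategies can be sketched as follows, assuming a pinhole camera with intrinsics `K` and a world-to-camera matrix `w2c`. The depth variant shown here keeps Gaussians whose depth lies within a tolerance `tol` of a rendered depth map; that criterion, the function names, and the parameter values are assumptions made for illustration, since the summary does not specify how surface alignment is tested.

```python
import numpy as np

def project(means, K, w2c):
    """Project (N, 3) world-space means to pixel coordinates (N, 2) and depths (N,)."""
    homo = np.concatenate([means, np.ones((len(means), 1))], axis=1)
    cam = (w2c @ homo.T).T[:, :3]
    z = cam[:, 2]
    safe_z = np.where(z > 0, z, 1.0)  # placeholder divisor for points behind the camera
    uv = (K @ cam.T).T[:, :2] / safe_z[:, None]
    return uv, z

def frustum_select(means, mask, K, w2c):
    """Select every Gaussian whose projected mean falls inside the 2D mask."""
    uv, z = project(means, K, w2c)
    h, w = mask.shape
    px = np.round(uv).astype(int)
    inside = (z > 0) & (px[:, 0] >= 0) & (px[:, 0] < w) & (px[:, 1] >= 0) & (px[:, 1] < h)
    selected = np.zeros(len(means), dtype=bool)
    selected[inside] = mask[px[inside, 1], px[inside, 0]]
    return selected

def depth_select(means, mask, depth_map, K, w2c, tol=0.05):
    """Keep frustum-selected Gaussians close to the visible surface (assumed criterion)."""
    uv, z = project(means, K, w2c)
    in_frustum = frustum_select(means, mask, K, w2c)
    px = np.round(uv[in_frustum]).astype(int)
    surface_depth = depth_map[px[:, 1], px[:, 0]]
    selected = np.zeros(len(means), dtype=bool)
    selected[np.flatnonzero(in_frustum)] = np.abs(z[in_frustum] - surface_depth) <= tol
    return selected

# Toy scene: camera at the origin looking down +z, 64x64 image.
K = np.array([[100.0, 0.0, 32.0], [0.0, 100.0, 32.0], [0.0, 0.0, 1.0]])
w2c = np.eye(4)
mask = np.zeros((64, 64), dtype=bool)
mask[24:40, 24:40] = True
depth_map = np.full((64, 64), 2.0)   # visible surface at depth 2 everywhere
means = np.array([
    [0.0, 0.0, 2.0],    # on the surface, inside the mask
    [0.0, 0.0, 4.0],    # behind the surface, inside the mask
    [0.5, 0.0, 2.0],    # projects outside the mask
    [0.0, 0.0, -1.0],   # behind the camera
])

frustum_result = frustum_select(means, mask, K, w2c)
depth_result = depth_select(means, mask, depth_map, K, w2c)
```

In this toy scene the frustum selection picks up both Gaussians along the central ray, while the depth selection keeps only the one on the visible surface.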

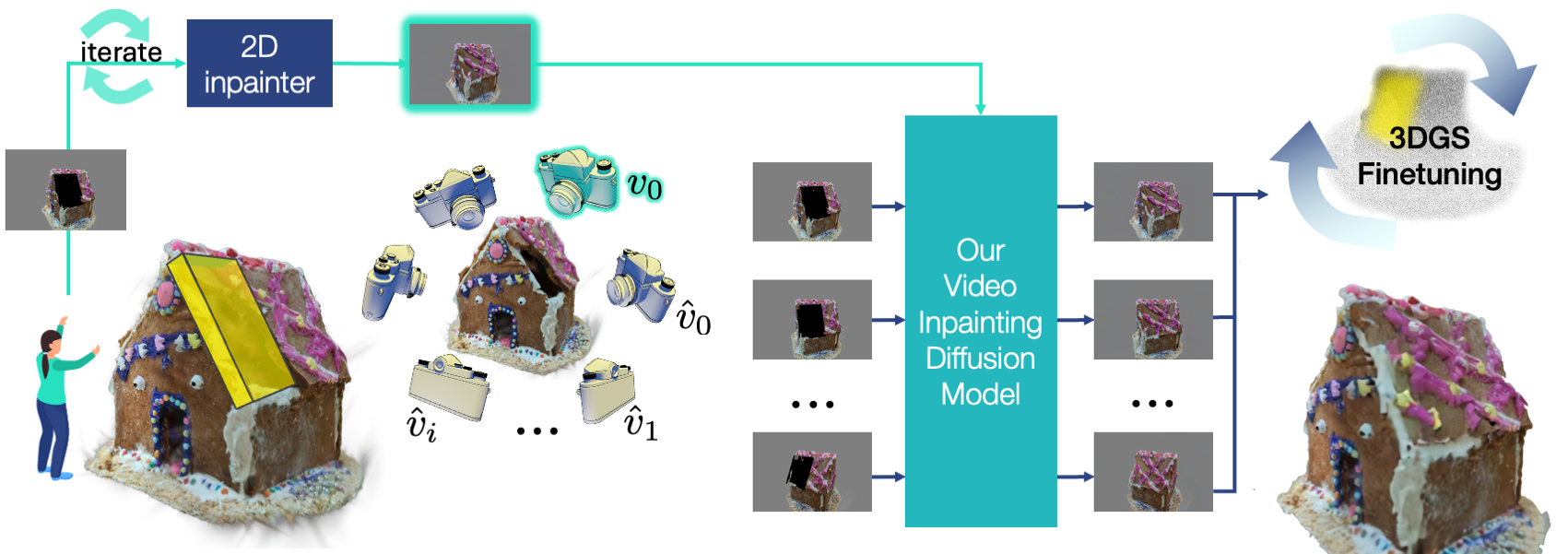

Automatic Segmentation with AI and User Correction

The toolkit leverages the Cutie mask tracking network, which is robust to occlusions and supports object-level conditioning via inserted reference frames. Masks are tracked across systematically sampled views: either the original training views or automatically generated turnaround views around the segmented object. Multi-view masks are aggregated by minimizing the L2 loss between mask renders and the per-Gaussian assignment feature, using the differentiable 3DGS renderer as a black-box function for generality. Crucially, ArtisanGS permits iterative correction: the user can review auto-generated masks and inject new annotations at failure points, which augments the memory frames during inference for robust turnaround performance.

Figure 3: Automatic propagation of user masks across views and the correction workflow.
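The aggregation step can be illustrated with a toy stand-in for the renderer. In the sketch below, each view's rendered mask is modeled as a linear blend `W @ f` of the per-Gaussian feature `f`, where `W` holds hypothetical per-pixel blending weights; this linear model, the analytic gradient, and all names and hyperparameters are assumptions. A real pipeline would instead backpropagate through the differentiable 3DGS rasterizer.

```python
import numpy as np

def aggregate_masks(weights, masks, steps=500, lr=0.5):
    """Fit a per-Gaussian feature f in [0, 1] by minimizing the mean L2 loss
    between rendered masks (W @ f) and tracked 2D masks over all views.

    weights: list of (P, N) arrays, assumed blending weights per pixel and Gaussian.
    masks:   list of (P,) arrays with values in {0, 1}.
    """
    n_gaussians = weights[0].shape[1]
    f = np.full(n_gaussians, 0.5)            # start undecided
    for _ in range(steps):
        grad = np.zeros(n_gaussians)
        for W, m in zip(weights, masks):
            residual = W @ f - m             # rendered mask minus target mask
            grad += 2.0 * W.T @ residual / len(m)
        # Projected gradient step keeps the feature in [0, 1].
        f = np.clip(f - lr * grad / len(weights), 0.0, 1.0)
    return f

# Two toy views of three Gaussians; each pixel sees exactly one Gaussian.
W1 = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
m1 = np.array([1.0, 0.0])
W2 = np.array([[0.0, 0.0, 1.0], [1.0, 0.0, 0.0]])
m2 = np.array([1.0, 1.0])

feature = aggregate_masks([W1, W2], [m1, m2])
segment = feature > 0.5                      # threshold into a binary 3D segment
```

Treating the renderer only through its outputs and gradients is what lets the same loop work with other 3DGS variants, as long as they expose a differentiable render.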

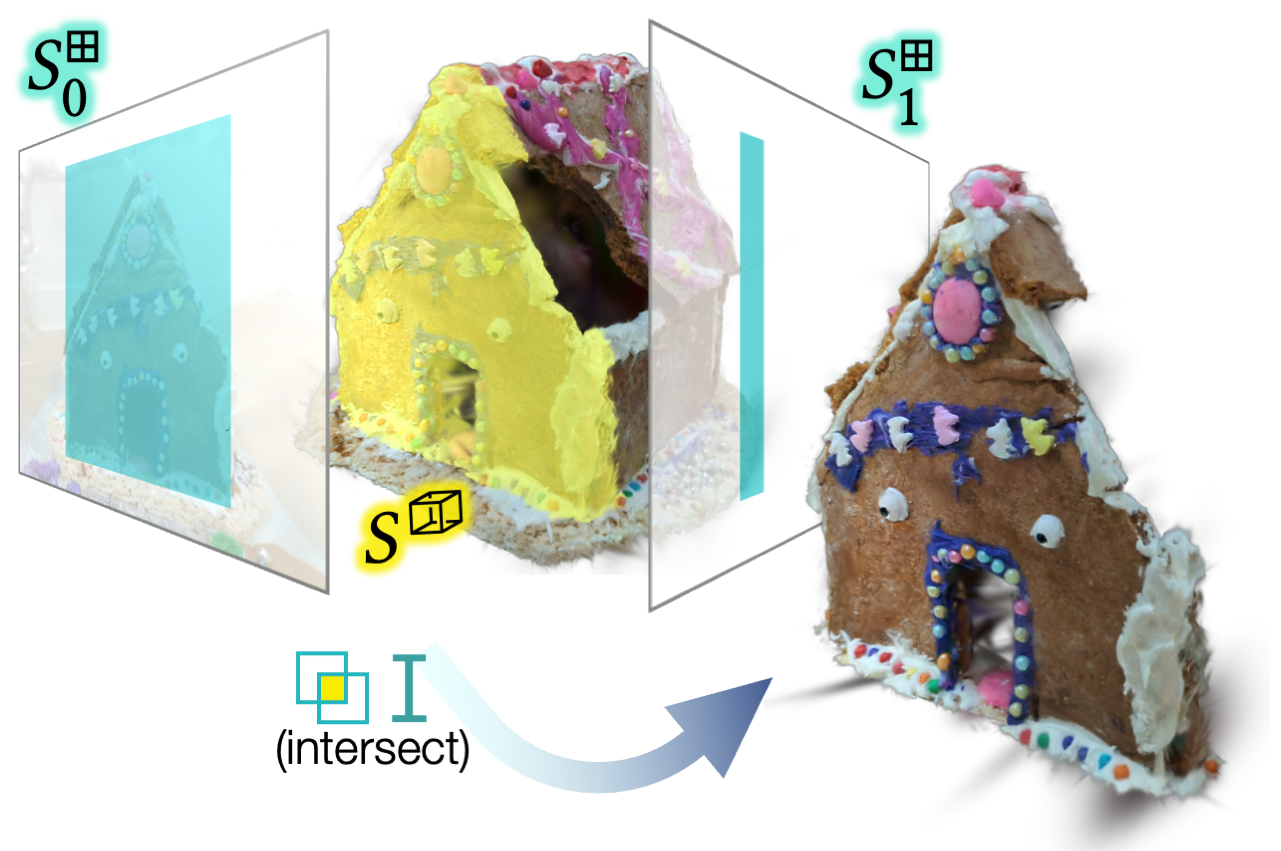

Presegmentation and Occlusion Robustness

To tolerate cluttered environments, users can flag masks as occlusion-free; the system then pre-segments via intersecting frustum projections prior to AI tracking, restricting aggregation to relevant Gaussians and increasing both speed and accuracy in dense scenes.

Figure 4: Demonstrating the impact of presegmentation on tracking inputs—removing irrelevant occluders improves quality and efficiency.
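Presegmentation by intersecting frustum projections amounts to a logical AND across views. The sketch below assumes each occlusion-free mask has already been converted into a boolean per-Gaussian frustum selection; `presegment` is a hypothetical name.

```python
import numpy as np

def presegment(per_view_selections):
    """Intersect per-view frustum selections (boolean arrays over Gaussians).

    Only Gaussians that fall inside the mask in every occlusion-free view
    survive; later tracking and aggregation can be restricted to them.
    """
    keep = np.ones_like(per_view_selections[0], dtype=bool)
    for selection in per_view_selections:
        keep &= selection
    return keep

# Frustum selections of five Gaussians from three occlusion-free views.
view_a = np.array([True, True, True, False, True])
view_b = np.array([True, True, False, False, True])
view_c = np.array([True, False, True, True, True])

candidates = presegment([view_a, view_b, view_c])
candidate_ids = np.flatnonzero(candidates)   # indices passed on to aggregation
```

A Gaussian that lies in front of or behind the object in one view is usually outside the mask in another, so the intersection discards most occluders before the more expensive tracking stage runs.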

Quantitative and Qualitative Results

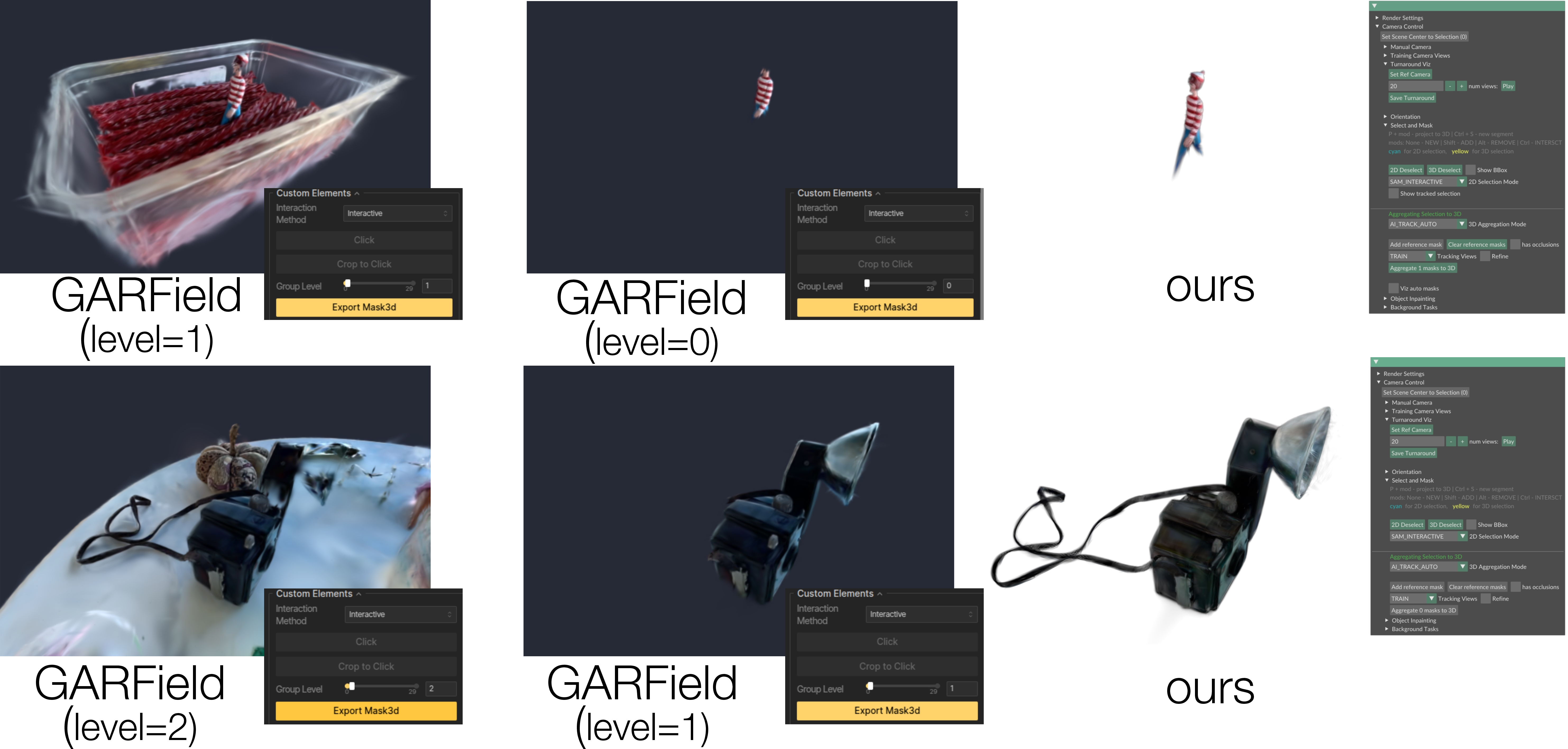

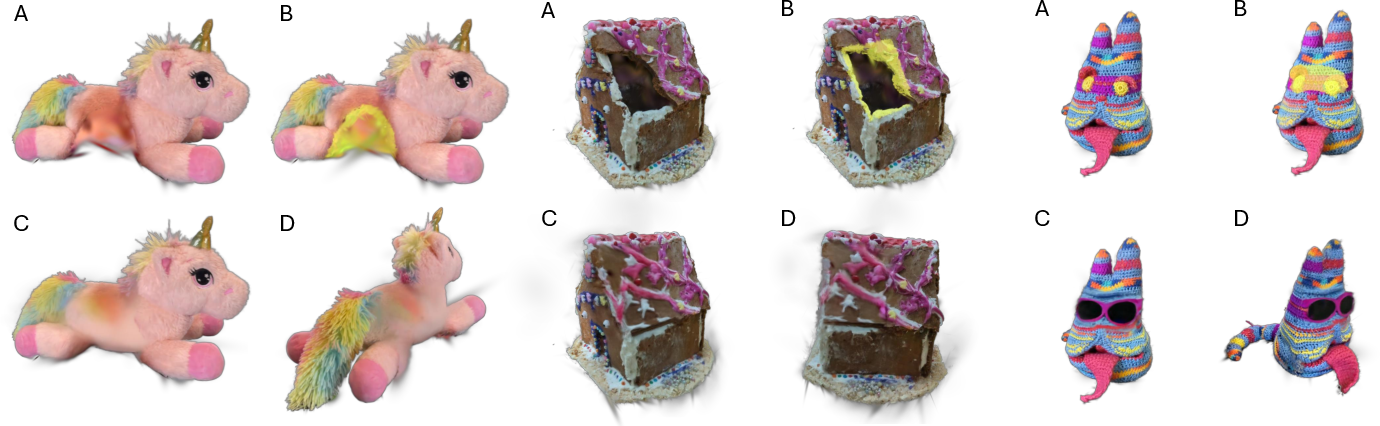

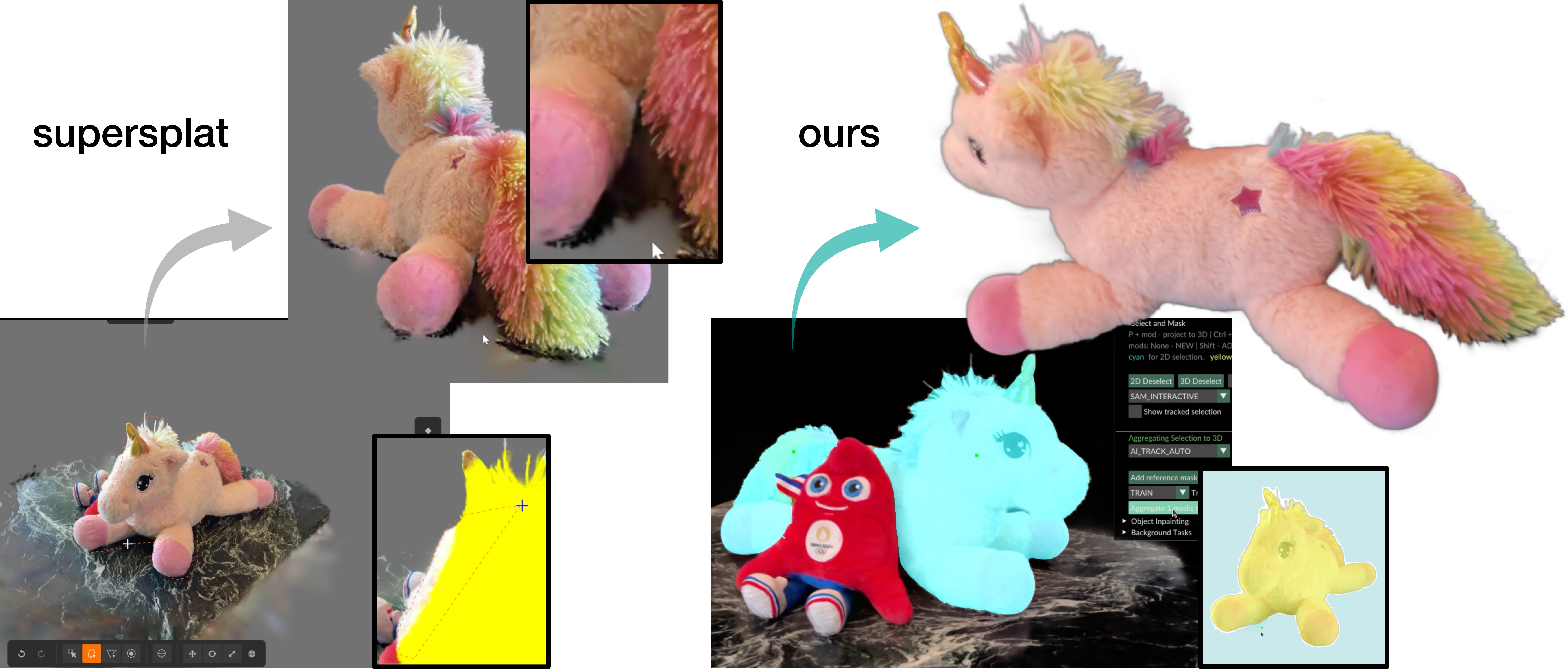

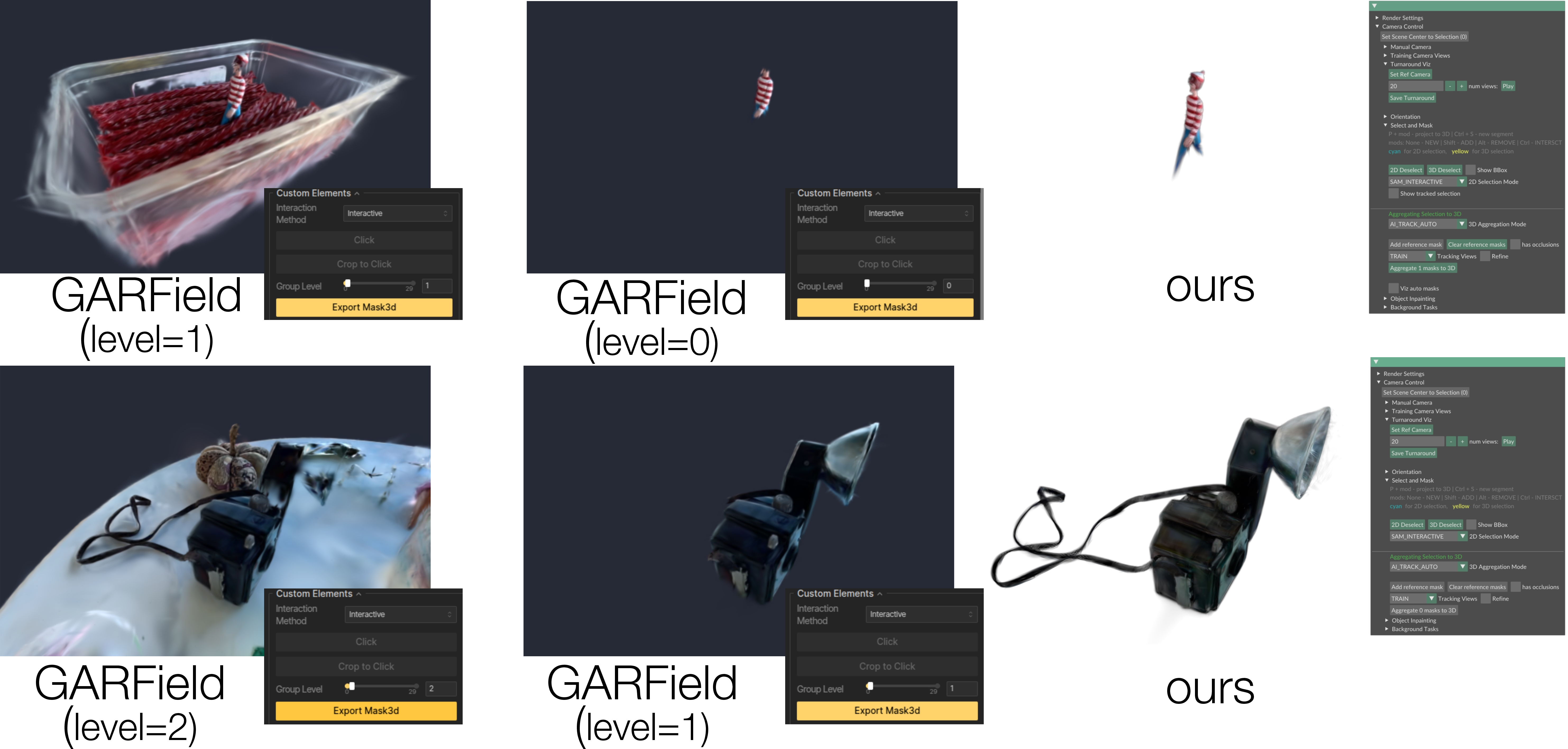

Evaluation was conducted on the established NVOS and LERF-Mask datasets, as well as on hand-annotated, challenging LERF figurines. ArtisanGS achieves competitive mask IoU and pixel accuracy (NVOS: mIoU up to 94.1%, Acc up to 98.8%) and outperforms several baselines, particularly in robustness to input initialization. Qualitative comparison with prior methods (e.g., GaussianEditor, GARField) indicates that ArtisanGS allows more flexible, controllable, and rapid segment extraction, especially for dense objects with fine structures or ambiguous boundaries. Ablations show that increasing the number of dense views improves accuracy up to a plateau, and that tracking and aggregation run in 1.5–2.5 s per segmentation edit, which is fast enough for interactive use.

Figure 5: Application of ArtisanGS segmentation toolkit across various objects and comparison with prior art.
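For reference, the two reported metrics can be computed per image as below. This is a generic sketch of mask IoU and pixel accuracy on binary masks, not the evaluation script used in the paper; mIoU would then be the mean of `mask_iou` over the evaluated views or scenes.

```python
import numpy as np

def mask_iou(pred: np.ndarray, gt: np.ndarray) -> float:
    """Intersection over union of two boolean masks (1.0 if both are empty)."""
    intersection = np.logical_and(pred, gt).sum()
    union = np.logical_or(pred, gt).sum()
    return float(intersection / union) if union > 0 else 1.0

def pixel_accuracy(pred: np.ndarray, gt: np.ndarray) -> float:
    """Fraction of pixels on which prediction and ground truth agree."""
    return float((pred == gt).mean())

pred = np.array([[True, True, False, False]])
gt = np.array([[True, False, False, False]])

iou = mask_iou(pred, gt)          # 1 shared pixel / 2 pixels in the union
acc = pixel_accuracy(pred, gt)    # 3 of 4 pixels agree
```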

Downstream Applications: Orientation, Editing, Simulation

ArtisanGS selection pipelines unlock advanced applications:

- Automatic Orientation: Principal axes of variation in selected Gaussians can be aligned to world axes, improving camera manipulation and physics realism. PCA-based computation ensures scene-level consistency with minimal user input.

Figure 6: User-guided orientation of segmented objects, aligning axes via principal component analysis.
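A PCA-based alignment of this kind can be sketched as follows, assuming only the Gaussian means of the selected segment are used. The function names and the convention of mapping the largest-variance axis to world x are illustrative assumptions; the toolkit may resolve axis order and sign differently, for example with user guidance.

```python
import numpy as np

def pca_orientation(means: np.ndarray):
    """Return the centroid and a right-handed rotation whose rows are the
    principal axes of the selected Gaussian means, largest variance first."""
    centroid = means.mean(axis=0)
    centered = means - centroid
    cov = centered.T @ centered / len(means)
    _, eigvecs = np.linalg.eigh(cov)         # eigenvalues in ascending order
    axes = eigvecs[:, ::-1].T.copy()         # rows sorted by decreasing variance
    if np.linalg.det(axes) < 0:              # avoid a reflection
        axes[2] *= -1.0
    return centroid, axes

def align_to_world(means: np.ndarray) -> np.ndarray:
    """Express the means in the principal-axis frame, centered at the origin."""
    centroid, axes = pca_orientation(means)
    return (means - centroid) @ axes.T

# Toy segment: an elongated point cloud, rotated and shifted away from the origin.
rng = np.random.default_rng(0)
points = rng.normal(size=(500, 3)) * np.array([5.0, 2.0, 0.5])
angle = np.deg2rad(30.0)
rotation = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle),  np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
tilted = points @ rotation.T + np.array([1.0, -2.0, 3.0])

aligned = align_to_world(tilted)
variances = aligned.var(axis=0)
```

Eigenvectors are only defined up to sign, so a practical tool would still need a rule or a user hint to decide which way is up.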

Figure 7: Physics simulation and editing application using ArtisanGS-segmented objects.

Implications and Future Directions

The ArtisanGS toolkit embodies a paradigm shift from monolithic, rigid segmentation pipelines to modular, user-correctable, and application-centric workflows for 3DGS. The ability to propagate minimal 2D input to robust multi-view 3D segmentation, augmented by on-demand manual intervention, is directly extensible to new 3DGS variants and underlying rendering architectures. Practically, it enables interactive scene authoring, physical simulation, robotic manipulation, and fine-grained generative editing from in-the-wild video captures.

Theoretically, ArtisanGS suggests that segmentation and selection in spatially explicit representations benefit substantially from hybrid human-AI interfaces, and that black-box aggregations using differentiable renderers enable flexible plug-and-play deployment regardless of architectural evolution. Future directions include real-time streaming mask propagation, generalized n-object selection, multimodal editing interfaces, and deeper integration of semantic reasoning with geometric selection.

Conclusion

ArtisanGS delivers a computationally efficient, flexible, and user-guided selection and segmentation toolkit for 3D Gaussian Splatting, achieving strong accuracy and practical usability with minimal scene-specific optimization. This enables a new class of interactive applications for editing, simulation, and physics-based manipulation in 3DGS environments, and lays groundwork for future research on hybrid human-AI interfaces and object-centric workflows in explicit geometry representations.